jenn


I don't know how much new funding Austin Chen is expecting.

My expectations are not contingent on Anthropic IPOing, and presumably neither is Austin's. Employees are paid partially in equity, so some amount of financial engineering will be done to allow them to cash out, whether or not an IPO is happening.

I expect that, as these new donors are people working in the AI industry, a significant percentage is going to go into the broader EA community and not directly to GW. Double digit percentage for sure, but pretty wide CI.

And funny you should mention FLI, they specifically say they do not accept funding from "Big Tech" and AI companies so I'm not sure where that leaves them.

They are also a fairly small non-profit and I think they would struggle to productively use significantly more funding in the short term. Scaling takes time and effort.

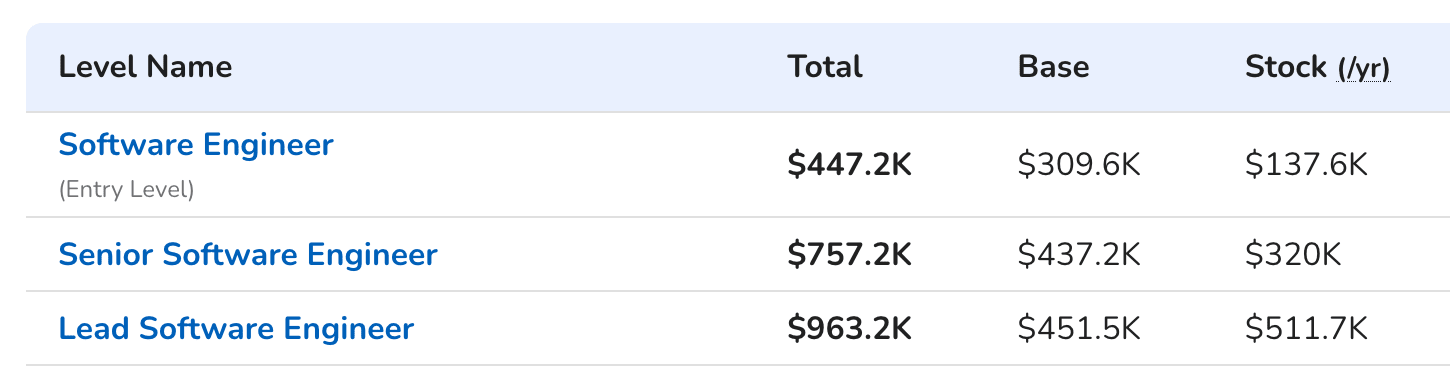

Hey, thanks for this comment. To be clearer about my precise model, I don't expect there to be new Anthropic billionaires or centimillionaires. Instead, I'm expecting dozens (or perhaps low hundreds) of software engineers who can afford to donate high six to low seven figure amounts per year.

Per levels.fyi, here is what Anthropic comp might look like:

And employees who joined the firm early often had agreements of 3:1 donations matching for equity (that is, Anthropic would donate $3 for every $1 that the employee donates). My understanding is that Anthropic had perks like this specifically to try to recruit more altruistic-minded people, like EAs.

Further, other regrantors in the space agree that a lot more donations are coming.

(Also note that Austin is expecting 1-2 more OOMs of funding than me. He is also much more plugged into the actual scene.)

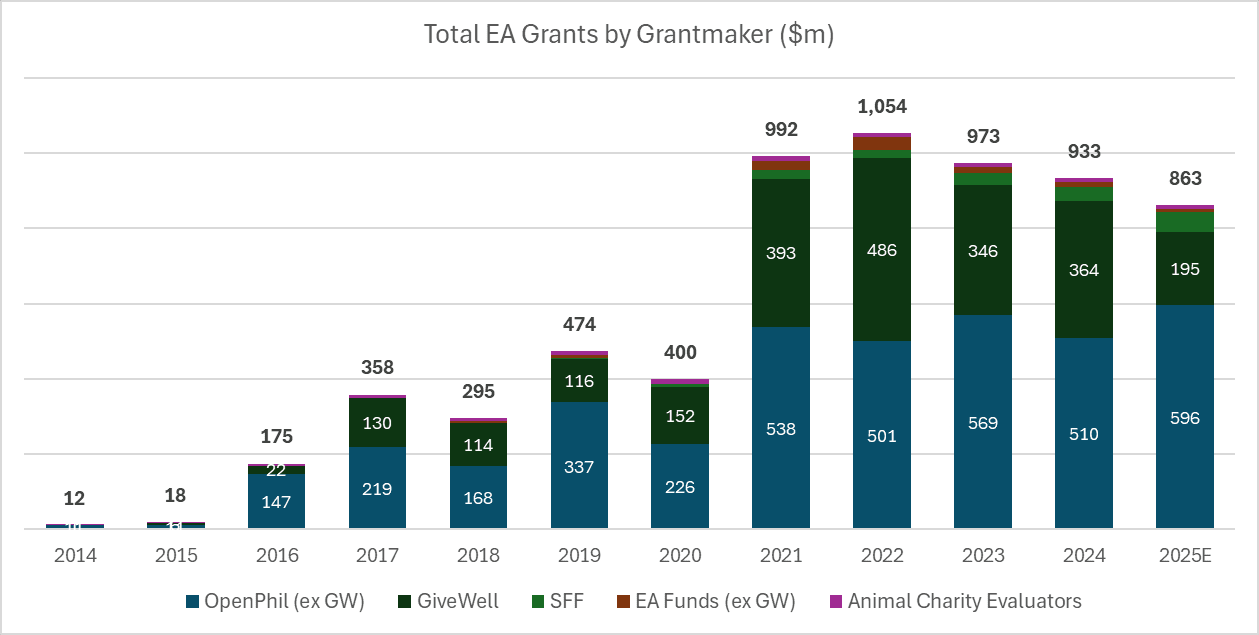

Here's what historical data of EA grantmaking has looked like:

I anticipate that the new funds pouring specifically into the EA ecosystem will not be at the scale of another OpenPhil (disbursing 500m+ per year), but there's a small chance it might match the scale of GiveWell (disbursing ~200m per year), albeit much more focused on meta EA, x-risk, and longtermist goals than GW, and I would be very surprised if it fails to match SFF's scale (disbursing ~30m a year) by the end of 2026.

I think the other missing piece is "what will this money do to the community fabric, what are the trade-offs we can take to make the community fabric more resilient and robust, and are those trade-offs worth it?"

When it comes to funding effective charities, I agree that having more money is straightforwardly good. It's the second-order effects on the community (the current people in it and what might make them leave, the kinds of people who are more likely to become new entrants) that I'm more concerned with.

I anticipate that the rationalists will have to face a similar problem but to a lesser degree, since the idea that well-kept gardens die by pacifism is more in the water there, and they are more ambivalent about scaling the community. But EA should scale, because its ideas are good, and this leaves it in a much trickier situation.

Lydia Laurenson has a non-concise article here.

I once spoke to a philanthropist who told me her earliest mistake was “sitting alone in a room, writing checks.” It was hard for her to trust people because everyone kept trying to get her money. Eventually, she realized isolation wasn’t serving her.

Battery Powered is intended to help with that type of mistake. It’s a philanthropy community where people who are new to philanthropy mix with people who are more familiar with it, so they cross-pollinate and mentor each other. The BP staff — including you, Colleen — has several experts who research issues chosen by the members, and then vet organizations working on those issues. This gives members a chance to learn about the issues before they vote on which organizations get the money.

the other nonprofit in this space is the Effective Institutions Project, which was linked in Zvi's 2025 nonprofits roundup:

They report that they are advising multiple major donors, and would welcome the opportunity to advise additional major donors. I haven’t had the opportunity to review their donation advisory work, but what I have seen in other areas gives me confidence. They specialize in advising donors who have broad interests across multiple areas, and they list AI safety, global health, democracy and (peace and security).

from the same post, re: SFF and the S-Process:

SFF does not accept donations but they are interested in partnerships with people or institutions who are interested in participating as a Funder in a future S-Process round. The minimum requirement for contributing as a Funder to a round is $250k. They are particularly interested in forming partnerships with American donors to help address funding gaps in 501(c)(4)’s and other political organizations.

This is a good choice if you’re looking to go large and not looking to ultimately funnel towards relatively small funding opportunities or individuals.

oh hey i ran that rationality meetup on radical empathy and AI welfare. i think it went pretty well and it was directly prompted by AI welfare debate week happening on the forums, so thanks for organizing!

i can talk a little more about the takeaways from that meetup specifically, which had around a half dozen attendees:

- it was really interesting to try to model how to even plausibly give moral weight to entities that were so bizarrely different from biotic life forms (e.g. can be shut down and rebooted/reverted, can change their own reward functions, can spin up a million copies of itself.) we kept running into assumptions around ideas like consciousness and pain that just sort of fell apart upon any sort of examination

- i tried to construct a scenario/case study with an ai entity that was possibly developing sentience, and the response from basically everyone was "wow these behaviours are sus and we have to shoot the mainframe with a gun immediately". this was kind of genuinely illuminating to me about the difficulties of trying to grant ~rights/freedoms to something more powerful than yourself and discussing the specifics of the case study turned the sense of danger from something abstract to something that felt real. we tried to come up with some possible ways for an AI entity to signal ~deservingness of moral weight without signalling dangerous capabilities and kind of came up blank, but this might say more about the collective intelligence of the meetup attendees than it does anything else haha.

like, i don't think these are amazing take-aways, in that higher quality versions of these conclusions have surely been written up in the forums long before debate week. but i think it's helpful to get them in the water a bit more, and i came out of it with a greater appreciation for the complexity of this question (and also like, more deeply grokking the difficulties of alignment research and just how different ai entities can be from humans).

out of curiosity, do you remember how you came across the meetup posting?

I don't think global health has stopped being neglected. However, I'm no longer fully confident that donating to GiveWell is the most effective way to support human welfare, due to (very positive) infrastructure shifts where the most effective charities in this space get some sort of institutional backstop.

While I acknowledge that it is not actually literally a 1:1 substitution, I think it's reasonable to model this as a bit of a handicap[1] on effectiveness when I donate to the EA endorsed charities.

Further, GiveWell's current 8x baseline does not seem to me to be that high of a bar, and I suspect there are many more charities and interventions that are neglected by EAs and are possibly more useful for me to fund as they have no institutional backstops.

When I combine these facts, it seems to me like there's a reasonable chance that... the same way that EA treated the rest of the philanthropic landscape "adversarially" when thinking about what to fund and avoided the overcrowded areas, perhaps it might make sense for at least a small contingent of people to start treating EA "adversarially" in the same way.

Does that make sense?

[1] I don't know what the size of this handicap is. I was roughly modelling it as 0.5xing my donation, but the other comments provide some evidence that it's much smaller than I thought. I'm still not entirely sure, though, and there isn't good information on this. One thing I would like to do is to figure out what this number actually is.
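To make the handicap arithmetic concrete, here is a rough sketch of how the adjustment would play out. It assumes the handicap is a single multiplier between 0 and 1 applied to the 8x baseline; the function name and the alternative handicap values (0.75 and 0.9) are made up for illustration, and only the 8x and 0.5x figures come from the comment itself.

```python
def effective_multiplier(baseline: float, handicap: float) -> float:
    """Scale a charity's cost-effectiveness multiplier by a counterfactual handicap.

    handicap = 1.0 means the donation is fully counterfactual;
    handicap = 0.5 means half its impact would have been backstopped anyway.
    """
    if not 0.0 <= handicap <= 1.0:
        raise ValueError("handicap must be between 0 and 1")
    return baseline * handicap


GIVEWELL_BAR = 8.0  # the "8x baseline" from the comment

# 0.5 is the rough guess from the comment; 0.75 and 0.9 are hypothetical
# milder handicaps, in case the true effect is smaller.
for handicap in (0.5, 0.75, 0.9):
    adjusted = effective_multiplier(GIVEWELL_BAR, handicap)
    print(f"handicap {handicap:.2f} -> effective {adjusted:.1f}x")
```

Under the 0.5x guess, a charity with no backstop would only need to beat roughly 4x to be the better marginal donation, which is why pinning down the real size of the handicap matters so much.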