Harjas123


You don't need campaigners if AGI will be a better campaigner than you. You don't need policy expertise if AGI will know more about policy than you. This passage treats AGI as a machine that accelerates scientific R&D, but that's not what AGI is. AGI is intelligence.

I think you're conflating "Transformative AI" with "Artificial General Intelligence". It seems very possible (though perhaps not very probable) that progress could slow down and preserve existing jaggedness: one can easily imagine a scenario in which increasingly capable AI replaces all coders (and is basically ubiquitous as an assistant in math research) but can't manage to replace other types of knowledge work due to lack of generality. Maybe it never gets good enough to replace boots-on-the-ground investigative journalism, or maybe robotics doesn't advance fast enough for AI to automate wet-lab work, or maybe medicine and personal care will always require a human touch, yadda yadda.

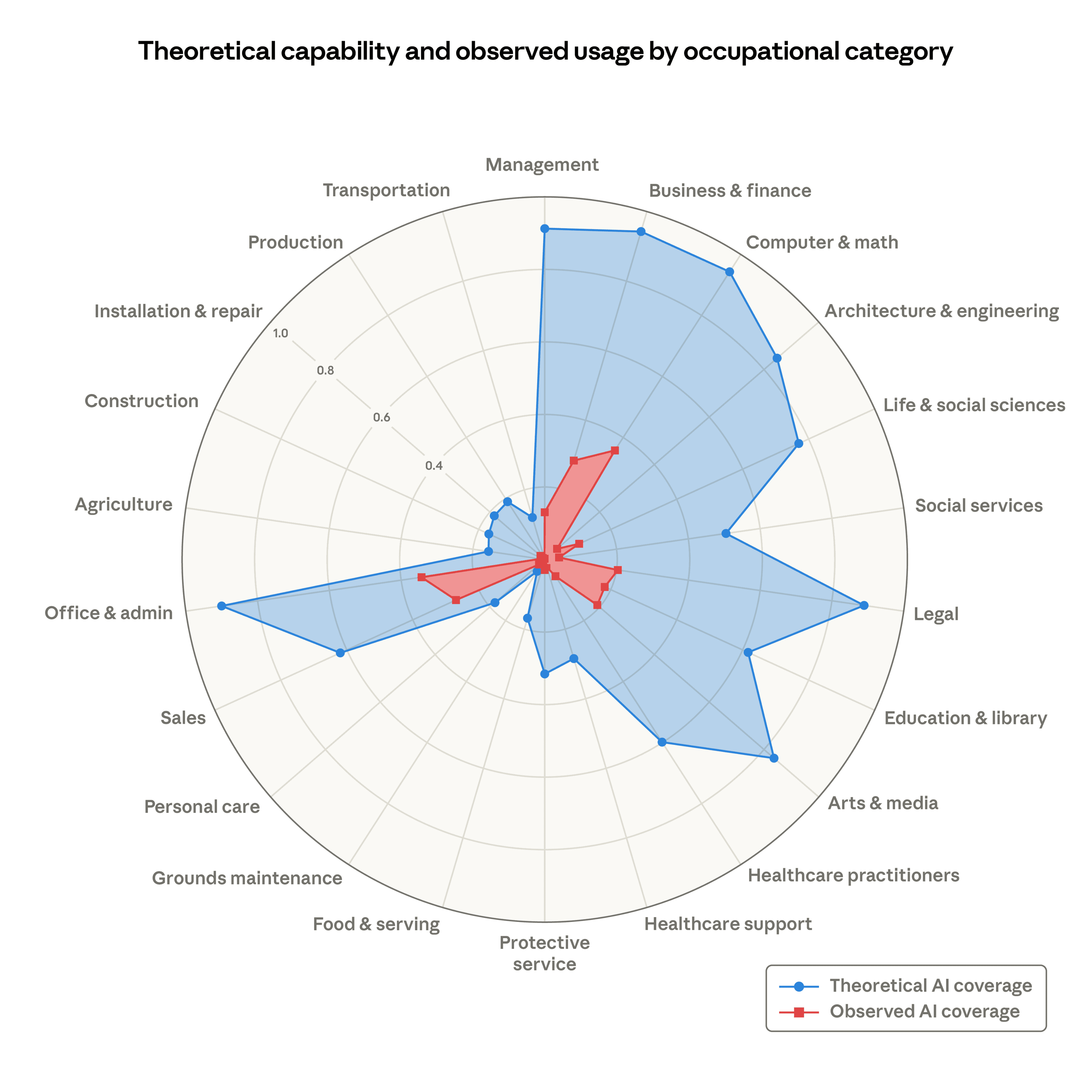

I've seen many people throw around the Anthropic labor market graph (as pictured below):

But I haven't seen many people grapple with the world of difference between the theoretical and observed AI coverage markers, not to mention the fact that usage doesn't mean replacement. It's possible that this relationship between theoretical and observed coverage will not hold in the future, but it's also possible that Moravec's Paradox will hold for the next couple of decades, and that computers and humans will continue to have distinct comparative advantages (or perhaps even complementary ones).

I technically agree with your point about there only being two possible futures. But I only think so because your first future covers far too many possible outcomes, including some in which AI is "transformative" but not necessarily superintelligent (or even "generally" intelligent).

Minor point, but how would this not be evidence-based? It might not be quantitative evidence, but "the opinions of people involved in cup distribution in low-income countries" should count as evidence of some kind, no?