Leftism virtue cafe

Maybe people are overoptimistic about independent/ grant-funded work as an option or something?

EA seems unusually big on funding people independently, eg. people working via grants rather than via employment through some sort of organisation or institution.

(Why is that? Well EAs want to do EA work. And there are more EAs that want to do EA work than there are EA jobs in organisations. Also EA has won the lottery again... so EAs get funded outside the scope of organisations).

When I was working at an organisation (or basically any time I've been in an institution) I was like 'I can't wait to get out of this organisation with all its meetings and Slack notifications and meetings... once I'm out I'll be independent and free and I'll finally make my own decisions about how to spend my time and realise my true potential'.

But as inspirational speaker Dylan Moran warns: 'Stay away from your potential. You'll mess it up, it's potential, leave it. Anyway, it's like your bank balance - you always have a lot less than you think.'

After leaving an organisation and beginning to work on grant funding I found it a lot more difficult than expected, and missed the structure that came with working in an organisation.

Some more good things about organisations: mentorship, colleagues, training, plausibly free stationery, a clear distinction between work time and not work time, defined roles and responsibilities, feedback, a sense of identity, something to blame if things don't go to plan.

Speculatively, EA is quite big on self-belief/ believing in one's own potential, and encouraging people to take risks.

And I worry that all this means that more people end up doing independent work than is a good idea.

The narratives in EA and the messages are not the same

A key thing from Paul's essay is that some cities have messages - they tell you that you should be a certain way. Eg. Cambridge tells you 'you should be smarter'.

But Cambridge doesn't tell you this explicitly, eg. there is probably no big billboard saying 'you should be smarter, yours sincerely, Cambridge'. As Paul says:

A city speaks to you mostly by accident — in things you see through windows, in conversations you overhear. It's not something you have to seek out, but something you can't turn off. One of the occupational hazards of living in Cambridge is overhearing the conversations of people who use interrogative intonation in declarative sentences.

My claim is something like: EA also 'speaks to you mostly by accident - in things you see through windows, in conversations you overhear'. And importantly, the way EA tells you that you should be a certain way can be different to the consensus narrative of how you should be.

For example, I posted a while back saying

Why do people overwork?

It seems to me that:

EAs will say things like - EA is a marathon not a sprint, it's important to take care of your mental health, you can be more effective if you're happier, working too much is counterproductive...

But also, it seems like a lot of EAs (at least people I know) are workaholics, work on weekends and take few holidays, sometimes feel burnt out...

Overall, it seems like there's a discrepancy between what people say about eg. the importance of not overworking and what people do eg. overwork. Is that the case? Why?

It seems to me that the consensus narrative is 'you should have a balanced life', but the thing that EA implicitly tells you is 'you should work harder' (and this is the message that drives people's behaviour).

Here's a bunch of other speculative ways in which it seems to me that the consensus narrative and the implicit message come apart.

- You should have a balanced life vs. you should work harder

- You should think independently vs. you should think what we think

- EA is about doing the most good with a portion of your resources vs. EA is about doing the most good with all of your resources

A weird thing: If respected/ important/ impressive people say eg. 'EA is about maximising the good with only a portion of your resources' but then what they do is give everything they have to EA, including their soul and weekends, then the thing that other people end up doing is not maximising the good with only a portion of their resources. What they end up doing is saying that EA is about maximising the good with only a portion of your resources, whilst giving everything plus the kitchen sink to EA. (H/T SWIM)

The discrepancy between explicit messages/ narratives vs. implicit messages/ incentives can result in some serious black-belt level mind-judo.

For example, previously if people had asked me about work life balance I would say that this is very important, people should have balance, very important. But then often I felt like I needed to work on evenings and weekends. To resolve this apparent discrepancy, I'd say to others that I didn't really mind working on evenings and weekends, satisfying both the need to be consistent with the 'don't overwork/ take care of yourself' narrative, and the need to get my work done/ keep up with the Joneses.

Prioritising between people isn't great for belonging

The community is built around things like maximising impact, and prioritisation. Find the best, ignore the rest.

Initially, it seemed like this was more focused on prioritising between opportunities, eg. donation or career opportunities. Though it seems like this has in some sense bled into a culture of prioritising between people, and that doing this has become more explicit and normalised.

Eg. words I see a lot in EA recruitment: talented, promising, high-potential, ambitious. (Sometimes I ask myself, wait a minute... am I talented, promising, high-potential, ambitious?). It seems like EA groups are encouraged to have a focus on the highest potential community members, as that's where they can have the most impact.

But the trouble is, it's not particularly nice to be in a community where you're being assessed and sized up all the time, and different nice things (jobs, respect, people listening to you, money) are given out based on how well you stack up.

Basically, it's pretty hard for a community with a culture of prioritisation to do a good job of providing people with a sense of belonging.

Also, heavy tailed distributions - EAs love them. Some donation opportunities/ jobs are so much more impactful than the others etc. If the thing you're doing isn't in the good bit of the tail, it basically rounds to zero. This is kind of annoying when by definition, most of the things in a heavy tailed distribution aren't in the good bit.

Is belonging effective?

A sense of belonging seems nice, but maybe it's a nice to have, like extra leg room on flights or not working on weekends. Fun, but not necessary if you care about having an impact.

I think my take is that for most people, myself included, it's a necessity. Pursuing world optimisation is only really possible with a basis of belonging.

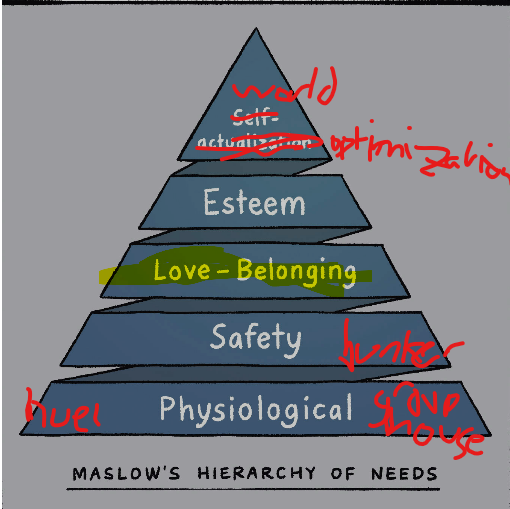

Here's a nice image from Brene Brown's book which I've lightly edited for clarity.

I think the EA community provides some sense of belonging, but probably not enough to properly keep people going. Things can then get a bit complicated, with EA being a community built around world optimisation.

If people have a not-quite-fully-met need to belong, and the EA community is one of their main sources of a sense of belonging, they'll feel more pressure to fit in with the EA community - eg. by drinking the same food, espousing the same beliefs, talking in the same way etc.

Fitting in with one group makes it harder to fit in with other groups + me being annoying

One maybe sad thing on the cynical hypothesis is that the strategy for fitting in in one group, eg. adopting all these EA lifestyle things, decreases the fit in other groups, and so increases the dependence on the first group... eg. the more I ask my non-EA friends what their inside views on AI timelines are, the more they're like 'this guy has lost the plot' and stop making eye contact with me.

(In my research for this post I asked a friend 'Did I become more annoying when I got into the whole EA stuff? It would be helpful if you could say yes because it will help me with this point I'm trying to make'. And he said 'Well there was this thing where you were a bit annoying to have conversations with about the world and politics and stuff because you had this whole EA thing and so thought that everything else wasn't important and wasn't worth talking about because the obvious answer was do whatever is most effective... but tbh otherwise not really, you were always kind of annoying'.)

Belonging, fitting in, and why do EAs look the same?

There's this thing where after people have been in EA for a while, they start looking the same. They drink the same Huel, use the same words, have the same partners, read the same econ blogs... so what's up with that?

Let's take Brene Brown's insightful eighth graders as a starting point:

Belonging is being accepted for being you. Fitting in is being accepted for being like everyone else.

- Things are good and nice hypothesis: EAs end up looking the same because they identify and converge on more rational and effective ways of doing things. EA enables people to be their true selves, and EAs' true selves are rational and effective, which is why everyone's true selves drink Huel.

- Cynical hypothesis: EAs end up looking the same because people want to fit in, and they can do that by making themselves more like other people. I drink Huel because it tells other people that I am rational and effective, and I can get over the lack of the experience of being nourished by reminding myself that Huel is scientifically actually more nourishing than a meal which I chew sat round a dinner table with other people.

Belonging vs. fitting in

Brene Brown asked some eighth graders to come up with the differences between 'fitting in' and 'belonging'.

Some of their things:

- Belonging is being somewhere where you want to be, and they want you. Fitting in is being somewhere where you want to be, but they don't care one way or the other.

- Belonging is being accepted for being you. Fitting in is being accepted for being like everyone else.

I always find it a bit embarrassing when eighth graders have more emotional insightfulness than I do, which, alongside their poor understanding of Bayesianism, is why I tend to avoid hanging out with them.

I've had experiences of both belonging and fitting in with EA, but I've felt like the fitting in category has become larger over time, or at least I've become more aware of it.

EA and Belonging word splurge

Epistemic Status: Similar to my other rants / posts, I will follow the investigative strategy of identifying my own personal problems and projecting these onto the EA community. I also half-read a chapter of a Brene Brown book which talks about belonging, and I will use the investigative strategy of using that to explain everything.

I've felt a decreased sense of belonging in the EA community which leads me to the inexorable conclusion that EA is broken or belonging constrained or something. I'll use that as my starting point and work backwards from there.

Project Idea: Interviewing people who have left EA

I can remember a couple of years ago hearing some discussion of a project of interviewing people who have left EA. As far as I know this hasn't happened (though I might just not be aware of it).

I think this is a good idea, and something I'd like to see as part of the Red Teaming contest.

Why I think this is a good idea:

People who leave EA, at least in some cases, will do so because they no longer buy into it, or no longer like it. Sometimes they will just drift away, but other times, they'll have reasons for leaving.

I expect people leaving EA to be a particularly valuable source of criticism, and to highlight things that would otherwise go unnoticed. A couple of different framings:

Receiving criticism from people who were never convinced by EA/ who have never been 'in' EA seems useful, though I think there's additional benefit to criticism from people who were in EA and then left.

I also expect people leaving EA to be much less likely to actually eg. write up and post their criticisms of EA. Some reasons: