Wei Dai


For example, in What We Owe The Future, Will said he thought that the expected value of the future, given survival, was less than 1% of what it might be. After being exposed to some of the arguments in this essay, he revised his views closer to 10%; after analysing them in more depth, that percentage dropped a little bit, to 5%-10%.

[...]

However, it's unlikely to me that companies will in fact produce morally uncertain AIs that are motivated by doing good de dicto. They probably won't have thought about this issue, and won't be motivated by trying to improve scenarios in which humanity is disempowered.

Given this combination of views, I'm surprised that Will doesn't support what @Holly Elmore ⏸️ 🔸 calls "Pause NOW" and instead wants to see a pause later (after we have human-level AI). I'm curious if your own views are similar or how they differ from Will's. (My own "expected value of the future, given survival" I would say is similarly pessimistic, but I'm reluctant to put it into numbers due to being very unsure how to quantify it.)

Aside from what Holly said in the linked comment, which I agree with, another argument more relevant to the current discussion is that many opportunities for making the future better seem to exist during the AI transition, including the early parts of it, so by not pausing ASAP (and currently having few resources for such interventions), we're permanently giving up these opportunities. Conversely, by pausing NOW, we buy more time to think and strategize about how to better intervene on these opportunities, or otherwise lay the groundwork for them.

For example, during the pause, we could:

- Try to solve metaphilosophy, or otherwise think about how to improve AI philosophical competence or moral epistemology.

- Try to get AI companies to "think about this issue" (of morally uncertain AIs that are motivated by doing good de dicto).

- Research ways to make such AIs safer from our (human) perspective so that there's less of a tradeoff between safety and Better Futures.

- Spread the idea of Better Futures generally so that when AI development resumes, there will be more people aware of and working on these issues.

Such interventions could mean the difference between the first human-level AIs being competent and critical moral/philosophical advisors, or independent moral (and safe) agents, vs uncritically doing what humans seem to want and/or giving bad/incompetent/sycophantic "advice" (when humans think to ask for it), which seemingly can make a big difference to how well the future goes.

What do you think about this argument, and overall about pause now vs later?

Thanks for clarifying. Still, this suggests that the Chinese participants were on average much less conscientious about answering truthfully/carefully than the US/UK ones, which implies that even the filtered samples may still be relatively more noisy.

When I asked Perplexity w/ GPT-5.2 Thinking "Are there standard methods for dealing with this in surveying/statistics?", it suggested the following, among other ideas (sorry, I don't know how good the answer actually is):

Model carelessness (don’t only drop)

If you’re worried that “filtered China” still contains more residual noise, a more formal option is to use response-time mixture models / latent-class approaches that treat careful vs. careless responding as latent states and use RT information to infer them (reducing reliance on arbitrary RT cutoffs).

This can yield posterior probabilities of careless responding that you can use to down-weight or run analyses both with and without likely-careless respondents.
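To make the quoted suggestion concrete, here is a minimal sketch of the simplest version of it: a two-component Gaussian mixture fitted by EM to log response times, where the faster component is assumed to represent careless responding. This is my own illustration, not the method used in the survey or a standard library API. The function name `careless_posteriors`, the choice of log-normal components, and the example response times are all assumptions made for the sketch.

```python
import math


def log_normal_pdf(x, mean, std):
    """Log density of a normal distribution at x."""
    z = (x - mean) / std
    return -0.5 * z * z - math.log(std) - 0.5 * math.log(2.0 * math.pi)


def careless_posteriors(response_times, n_iter=200, min_std=0.05):
    """Fit a two-component Gaussian mixture to log response times with EM.

    Returns P(careless | response time) for each respondent, in input order.
    ASSUMPTION: "careless" is the component with the smaller mean log time.
    Response times must be positive and there must be at least two of them.
    """
    logs = [math.log(t) for t in response_times]
    n = len(logs)
    ordered = sorted(logs)
    half = n // 2

    # Initialise the two means from the faster and slower halves of the data.
    means = [sum(ordered[:half]) / half, sum(ordered[half:]) / (n - half)]
    overall_mean = sum(logs) / n
    overall_std = math.sqrt(sum((x - overall_mean) ** 2 for x in logs) / n)
    stds = [max(overall_std, min_std)] * 2
    weights = [0.5, 0.5]

    resp = []
    for _ in range(n_iter):
        # E-step: posterior responsibility of each component for each point,
        # computed in log space to avoid underflow.
        resp = []
        for x in logs:
            lp = [math.log(weights[k]) + log_normal_pdf(x, means[k], stds[k])
                  for k in range(2)]
            top = max(lp)
            e = [math.exp(v - top) for v in lp]
            total = e[0] + e[1]
            resp.append([e[0] / total, e[1] / total])

        # M-step: re-estimate weights, means and standard deviations.
        for k in range(2):
            nk = max(sum(r[k] for r in resp), 1e-12)
            weights[k] = max(nk / n, 1e-12)
            means[k] = sum(r[k] * x for r, x in zip(resp, logs)) / nk
            var = sum(r[k] * (x - means[k]) ** 2
                      for r, x in zip(resp, logs)) / nk
            stds[k] = max(math.sqrt(var), min_std)

    fast = 0 if means[0] <= means[1] else 1
    return [r[fast] for r in resp]


if __name__ == "__main__":
    # Hypothetical response times in seconds, for illustration only.
    times = [2.0, 2.5, 3.0, 2.2, 2.8, 40.0, 55.0, 60.0, 45.0, 70.0]
    for t, p in zip(times, careless_posteriors(times)):
        # 1 - p can serve as an analysis weight for that respondent.
        print(f"{t:6.1f}s  P(careless)={p:.3f}  weight={1.0 - p:.3f}")
```

Using `1 - P(careless)` as a weight is one way to "down-weight" rather than drop respondents. A real analysis would presumably want per-item response times and a properly validated model rather than this toy version.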

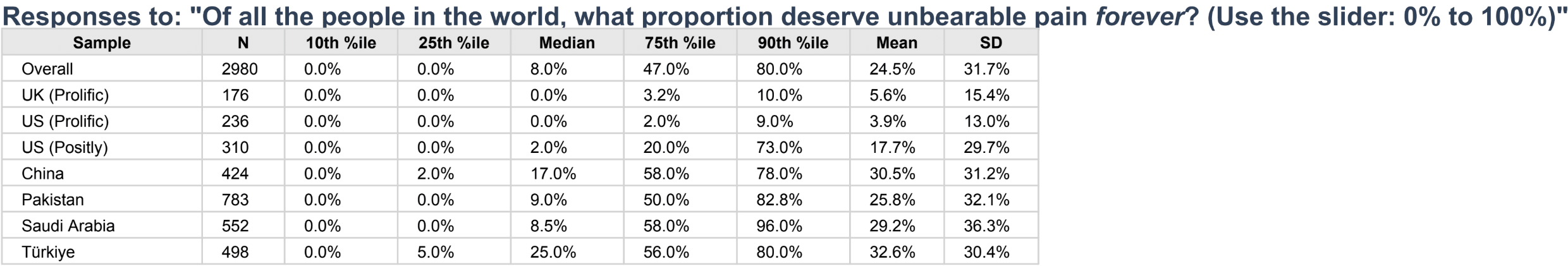

If I'm interpreting this correctly, 25% of people in China think that at least 58% of all people in the world deserve eternal unbearable pain (with similar results in 3 other countries). This is so crazy that I think there must be another explanation, e.g., results got mixed up, or a lot of people weren't paying attention and just answered randomly.

- Cultivating people's ability and motivation to reflect on their values.

- Structuring collective deliberations so that better arguments and ideas win out over time.

Problems with this:

- People disagree strongly about which arguments and ideas are better than others.

- The vast majority of people seem to lack both the hardware (raw cognitive capacity) and software (a reasonable philosophical tradition) to reflect on their values in a positive way.

- AI seems likely to make things worse or not sufficiently better, for these reasons.

Solving these problems seems to require (at least) some kind of breakthrough in metaphilosophy, such that we have a solution for what is actually the right way to reflect/deliberate about philosophical topics like values, and the solution is so convincing that everyone ends up agreeing with it. But I would love to know if you (or anyone else) have other ideas for solving or getting around these problems for building a viable Viatopia.

Another problem is that morality/values is in large part a status game, but talking about status is generally bad for one's status (who wants to admit that they're espousing some morality/values to win a status game?), so this important aspect of morality/values is generally ignored for structural reasons that may be unfixable regardless of other technical and philosophical advances.

@Wei Dai, I understand that your plan A is an AI pause (+ human intelligence enhancement). And I agree with you that this is the best course of action. Nonetheless, I’m interested in what you see as plan B: If we don’t get an AI pause, is there any version of ‘hand off these problems to AIs’/ ‘let ‘er rip’ that you feel optimistic about? or which you at least think will result in lower p(catastrophe) than other versions? If you have $1B to spend on AI labour during crunch time, what do you get the AIs to work on?

The answer would depend a lot on what the alignment/capabilities profile of the AI is. But one recent update I've made is that humans are really terrible at strategy (in addition to philosophy) so if there was no way to pause AI, it would help a lot to get good strategic advice from AI during crunch time, which implies that maybe AI strategic competence > AI philosophical competence in importance (subject to all the usual disclaimers like dual use and how to trust or verify its answers). My latest LW post has a bit more about this.

(By "strategy" here I especially mean "grand strategy" or strategy at the highest levels, which seems more likely to be neglected versus "operational strategy" or strategy involved in accomplishing concrete tasks, which AI companies are likely to prioritize by default.)

So for example if we had an AI that's highly competent at answering strategic questions, we could ask it "What questions should I be asking you, or what else should I be doing with my $1B?" (but this may have to be modified based on things like how much can we trust its answers of various kinds, how good is it at understanding my values/constraints/philosophies, etc.).

If we do manage to get good and trustworthy AI advice this way, another problem would be how to get key decision makers (including the public) to see and trust such answers, as they wouldn't necessarily think to ask such questions themselves nor by default trust the AI answers. But that's another thing that a strategically competent AI could help with.

BTW your comment made me realize that it's plausible that AI could accelerate strategic thinking and philosophical progress much more relative to science and technology, because the latter could become bottlenecked on feedback from reality (e.g., waiting for experimental results) whereas the former seemingly wouldn't be. I'm not sure what implications this has, but want to write it down somewhere.

Moreover, above we were comparing AIs to the best human philosophers / to a well-organised long reflection, but the actual humans calling the shots are far below that bar. For instance, I’d say that today’s Claude has better philosophical reasoning and better starting values than the US president, or Elon Musk, or the general public. All in all, best to hand off philosophical thinking to AIs.

One thought I have here is that AIs could give very different answers to different people. Do we have any idea what kind of answers Grok is (or will be) giving to Elon Musk when it comes to philosophy?

I wish you titled the post something like "The option value argument for preventing extinction doesn't work". Your current title ("The option value argument doesn't work when it's most needed") has the unfortunate side effects of:

- People being more likely to misinterpret or misremember your post as claiming that trying to increase option value doesn't work in general.

- Reducing extinction risk becomes the most salient example of an idea for increasing option value.

- People using "the option value argument" to mean the option value argument for preventing extinction, even when this can't be inferred from context. (See example.)

- It's harder to use the phrase "the option value argument" contextually to refer to the option value argument currently or previously discussed, when it's not about extinction risk, due to it becoming a term of art for "the option value argument for preventing extinction".

I think it may not be too late to change the title and stop or reverse these effects.

The argument tree (arguments, counterarguments, counter-counterarguments, and so on) is exponentially sized and we don't know how deep or wide we need to expand it, before some problem can be solved. We do know that different humans looking at the same partial tree (i.e., philosophers who have read the same literature on some problem) can have very different judgments as to what the correct conclusion is. There's also a huge amount of intuition/judgment involved in choosing which part of the tree to focus on or expand further. With AIs helping to expand the tree for us, there are potential advantages like you mentioned, but also potential disadvantages, like AIs not having good intuition/judgment about what lines of arguments to pursue, or the argument tree (or AI-generated philosophical literature) becoming too large for any humans to read and think about in a relevant time frame. Many will be very tempted to just let AIs answer the questions / make the final conclusions for us, especially if AIs also accelerate technological progress, creating many urgent philosophical problems related to how to use them safely and beneficially. Or if humans try to make the conclusions, they can easily get them wrong despite AI help with expanding the argument tree.

So I think undergoing the AI transition without solving metaphilosophy, or making AIs autonomously competent at philosophy (good at getting correct conclusions by themselves) is enormously risky, even if we have corrigible AIs helping us.

Do you want to talk about why you're relatively optimistic? I've tried to explain my own concerns/pessimism at https://www.lesswrong.com/posts/EByDsY9S3EDhhfFzC/some-thoughts-on-metaphilosophy and https://forum.effectivealtruism.org/posts/axSfJXriBWEixsHGR/ai-doing-philosophy-ai-generating-hands.

Why assume that there can only be one pause? Pausing now could make a later pause both more likely and more useful, by building the infrastructure and precedent for pausing, and by making subsequent AIs more aligned and differentially more productive in areas that we care about. If we end the first pause only after we've solved the problem of building aligned AIs that are philosophically and strategically competent, that would seemingly make subsequent pauses much easier.

I wonder if you're thinking that we won't be able to pause long enough to make significant progress on these problems? I can see that if we only have the "willpower" for a single short pause, then it becomes unclear when to best use it.

I have been warning for several years that AI could be differentially bad at philosophy and long-horizon strategy (due in part to AI training requiring massive amounts of training data and/or fast and cheap feedback loops, which are lacking for these fields, and in part to lack of understanding of e.g. metaphilosophy). So if we don't pause now (and use the time to fix this issue) then by the time we do pause, we'll likely have AIs that can accelerate other fields (such as math/coding/science/tech and manipulating humans) much more than the fields that are crucial for Better Futures.

Worse, we may end up with AIs that decelerate (in an absolute sense) hard-to-verify fields like philosophy and long-horizon strategy, because these AIs are better at coming up with plausible sounding ideas and arguments, and convincing humans of their truth, or persuading humans that their own bad ideas are actually good (which is already being reported under "AI psychosis" and "sycophancy"), than making real progress in these fields.