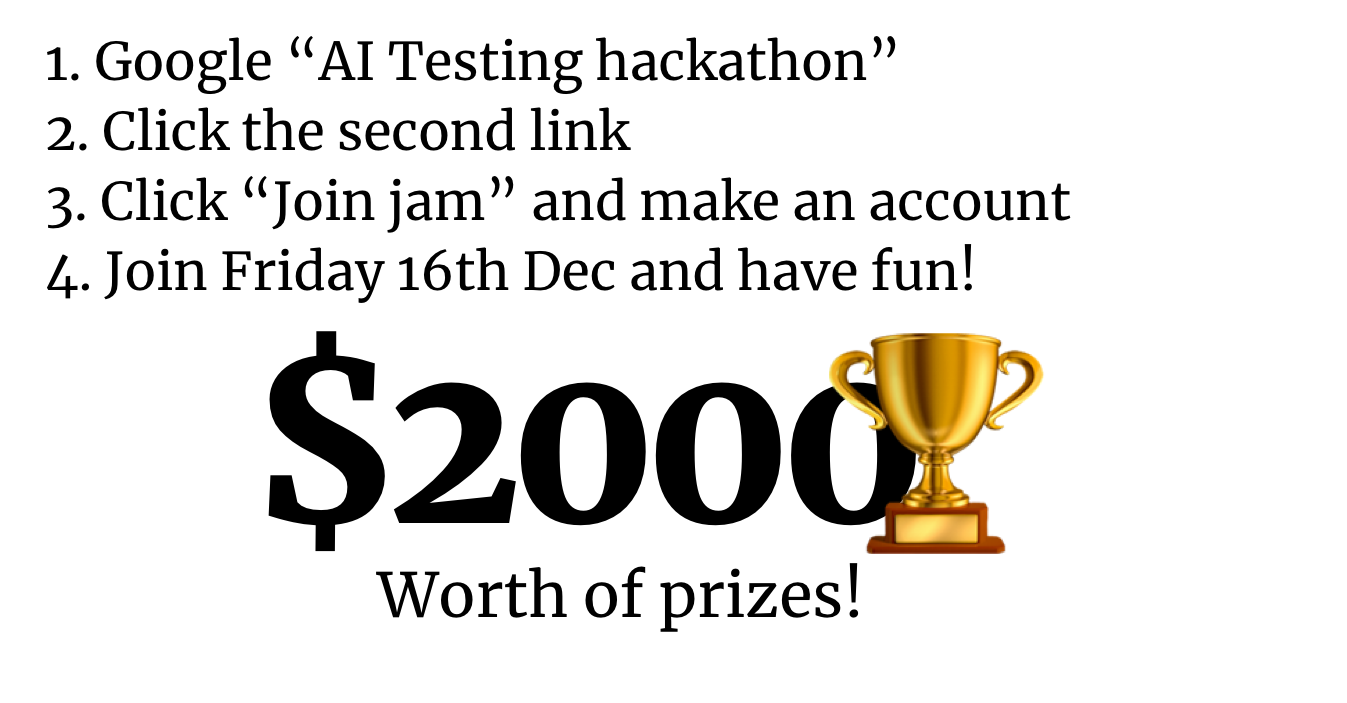

TLDR: Participate online or in-person on the weekend of December 16th to 18th in a fun and intense AI safety research hackathon focused on benchmarks, neural network verification, adversarial attacks and RL safety environments. We invite mid-career professionals to join, but the event is open to everyone (including non-coders) and we will provide starter code templates to help kickstart your team's projects. Join here.

Below is an FAQ-style summary of what you can expect.

What is it?

The AI Testing Hackathon is a weekend-long event where teams of 1-6 participants conduct research on AI safety. At the end of the event, teams will submit a PDF report summarizing and discussing their findings.

The hackathon will take place on Friday from December 16th to 18th, and you are welcome to join for any part of it (see further details below). An expert on the topic will be speaking and we will introduce the topic for you on the launch date.

Everyone can participate and we encourage you to join especially if you’re considering AI safety from another career . We prepare templates for you to start out your projects and you’ll be surprised what you can accomplish in just a weekend – especially with your new-found friends!

Read more about how to join, what you can expect, the schedule, and what previous participants have said about being part of the hackathon below.

Why AI testing?

AI safety testing is becoming increasingly important as governments require rigorous safety certifications. The deployment of the EU AI Act and the development of AI standards by NIST in the US will both necessitate such testing.

The use of large language models, such as ChatGPT, has emphasized the need for safety testing in modern AI systems. Adversarial attacks and neural Trojans have become more common, highlighting the importance of testing for robustness and viruses in neural networks to ensure the safe development and deployment of AI.

In addition, the rapid development of related fields, such as automatic verification of neural programs and differential privacy, offers promising research for provably safe AI systems.

There is relatively little existing literature from AI safety on AI safety metrics and testing, though anomaly detection in various forms is becoming more prevalent:

- Beth Barnes is currently working on testing alignment and has detailed a more specific project proposal for testing.

- Hendrycks and Woodside described defining metrics as a core goal of AI safety in the Pragmatic AI Safety series due to their strength as a methodological and machine learning tool.

- The inverse scaling team has created relatively rigorous testing criteria for goal misgeneralization in language models with the inverse scaling benchmark, arguably a strong metric for general alignment.

- Evans, Lin and Hilton have created the most well-known inverse scaling benchmark with the TruthfulQA work, testing for truthfulness in LLMs.

- Karnofsky recently wrote a piece on why testing AI is hard due to deceptive generalization and the difficulty of testing current AI to understand AGI.

- Paul Christiano is working on mechanistic anomaly detection, a test for neural anomalies from new latent knowledge situations.

- Redwood Research has developed causal scrubbing, a strategy for automated hypothesis testing on AI.

- See also the inspiration further down, such as the benchmarks table.

Overall, AI testing is a very interesting problem that requires a lot of work from the AI safety community both now and in the future. We hope you will join the hackathon to explore this direction further!

Where can I join?

All the hackathon information and talks are happening on the Discord server that everyone is invited to join: https://discord.gg/3PUSbdS8gY.

Besides this, you can participate online or in person at several locations. This hackathon, online is the name of the game since there’s end-of-year deadlines and Christmas but you’re welcome to set up your own jam site as well. You can work online on Discord or directly in the GatherTown research space.

For this hackathon, our in-person locations include the ENS Ulm in Paris at the most prestigious ML master in France, Delft University of Technical and Aarhus University. More locations might join as well.

You’ll also have to sign up on itch.io to submit your projects: Join the hackathon here. This is also where you can see an updated list of the locations.

What are some examples of AI testing projects I can make?

As we get closer to the date, we’ll add more ideas on aisafetyideas as inspiration.

- Create an adversarial benchmark for safety using all the cool ChatGPT jailbreaks people have come up with

- Apply automated verification on neural networks to test for specific safety properties such as corrigibility and behavior

- Use new techniques to detect Trojans in neural networks, malicious perturbations that change the output of the networks

- Create tests for differential privacy methods (see e.g. Boenisch et al.'s work)

- Create an RL environment that can showcase some of the problems of AI safety such as deceptive alignment

- Run existing tests on more models than they have been run on before

Also check out the results from the last hackathon to see what you might accomplish during just one weekend. Neel Nanda was quite impressed with the full reports given the time constraint! You can see projects from all hackathons here.

Inspiration list

Check out the continually updated inspirations and resources page on the Alignment Jam website here. Here are a few of the resources:

Websites, lists and tools for AI testing:

- GriddlyJS: A flexible engine to create grid world reinforcement learning environments

- HELM: Holistic Evaluation of Language Models. An impressive and comprehensive evaluation of language models on a large number of benchmarks, a few of them safety-related

- The NetHack reinforcement learning action dataset and environment.

- Clever Hans: An adversarial robustness evaluation benchmark

- CoinRun: OpenAI’s RL generalization testing environment

- OpenAI Safety Gym: An environment to evaluate RL agents in safety-critical exploration tasks and more

- A list of alignment benchmarks

Technical papers on benchmarking and automated testing:

- Using LLMs to red team LLMs directly

- Adaptive Testing: Adaptive Testing and Debugging of NLP Models and Polyjuice: Generating Counterfactuals for Explaining, Evaluating, and Improving Models

- CheckList, a dataset to test models: Beyond Accuracy: Behavioral Testing of NLP Models with CheckList

- Federated learning is not private

- Using LLMs to assist human-written critiques

- The neural network verification book

- Evaluating Large Language Models Trained on Code

- Toward Trustworthy AI Development: Mechanisms for Supporting Verifiable Claims

- Filling gaps in trustworthy development of AI

- Making machine learning trustworthy

Governance-related work from governments and related institutions:

- NIST’s texts on AI: 2nd draft and Playbook

- EU AI Act: Articles 9-15, act 15 + analyses

- Military: Building Trust through Testing Adapting DOD’s Test & Evaluation, Validation & Verification (TEVV) Enterprise for Machine Learning Systems, including Deep Learning Systems

- France: Villani report

Why should I join?

There’s loads of reasons to join! Here are just a few:

- See how fun and interesting AI safety can be

- Get to know new people who are into ML safety and AI governance

- Get practical experience with ML safety research

- Show the AI safety labs what you are able to do to increase your chances at some amazing opportunities

- Get a cool certificate that you can show your friends and family

- Get some proof that you’re really talented so you can get that grant to pursue AI safety research that you always wanted

- And many many more… Come along!

What if I don’t have any experience in AI safety?

Please join! This can be your first foray into AI and ML safety and maybe you’ll realize that it’s not that hard. Hackathons can give you a new perspective!

There’s a lot of pressure from AI safety to perform at a top level and this seems to drive some people out of the field. We’d love it if you consider joining with a mindset of fun exploration and get a positive experience out of the weekend.

Are there any ways I can help out?

There will be many participants with many questions during the hackathon so one type of help we would love to receive is your mentorship during the hackathon.

When you mentor at a hackathon, you employ your skills to answer questions on Discord asynchronously. You will monitor the chat and possibly go on calls with participants who need extra help. As part of the mentoring team, you will get to chat with the future talents in AI safety and help make AI safety an inviting and friendly place!

The skills we look for in mentors can be anything that helps you answer questions participants might have during the hackathon! This can include experience in AI governance, networking, academia, industry, AI safety technical research, programming and machine learning.

Join as a mentor on the Alignment Jam site.

What is the agenda for the weekend?

Join the public ICal here.

| CET / PST | |

| Fri 6 PM / 9 AM | Introduction to the hackathon, what to expect, and an intro talk from Haydn Belfield. Everything is recorded. |

| Fri 7:00 PM / 10:00 AM | Hacking begins! Free dinner at the jam sites. |

| Sun 6 PM / 9 AM | Final submissions have to be finished. The teams present their projects online or in-person. |

| Sunday - Wednesday | Community judging period. |

| Thursday | The winners are announced in a live stream! |

How are the projects evaluated?

Everyone submits their report on Sunday and prepares a 5 minute presentation about what they developed. Then we have 3 days of community voting where everyone who submitted projects can vote on others’ projects. After the community voting, the judges will convene to evaluate which projects are the top 4 and a prize ceremony will be held online on the 22nd of December.

Check out the community voting results from the last hackathon here.

I’m busy, do I have to join for the full weekend?

As a matter of fact, we encourage you to join even if you only have a short while available during the weekend!

For our other hackathons, the average work amount has been 16 hours and a couple of participants only spent a few hours on their projects as they joined Saturday. Another participant was at an EA retreat at the same time and even won a prize!

So yes, you can both join without coming to the beginning or end of the event, and you can submit research even if you’ve only spent a few hours on it. We of course still encourage you to come for the intro ceremony and join for the whole weekend.

Wow this sounds fun, can I also host an in-person event with my local AI safety group?

Definitely! You can join our team of in-person organizers around the world! You can read more about what we require here and the possible benefits it can have to your local AI safety group here. It might be too late but you can sign up for the upcoming hackathon in January. Contact us at operations@apartresearch.com.

What have earlier participants said?

| “The Interpretability Hackathon exceeded my expectations, it was incredibly well organized with an intelligently curated list of very helpful resources. I had a lot of fun participating and genuinely feel I was able to learn significantly more than I would have, had I spent my time elsewhere. I highly recommend these events to anyone who is interested in this sort of work!” | “I was not that interested in AI safety and didn't know that much about machine learning before, but I heard from this hackathon thanks to a friend, and I don't regret participating! I've learned a ton, and it was a refreshing weekend for me.” |

| “A great experience! A fun and welcoming event with some really useful resources for starting to do interpretability research. And a lot of interesting projects to explore at the end!” | “Was great to hear directly from accomplished AI safety researchers and try investigating some of the questions they thought were high impact.” |

| “The hackathon was a really great way to try out research on AI interpretability and getting in touch with other people working on this. The input, resources and feedback provided by the team organizers and in particular by Neel Nanda were super helpful and very motivating!” | “I enjoyed the hackathon very much. The task was open-ended, and interesting to manage. I felt like I learned a lot about the field of AI safety by exploring the language models during the hackathon.” |

Where can I read more about this?

- Hackathon page

- The Alignment Jam website

- The Discord server

- In-person and online events

- The previous hackathons

Again, sign up here by clicking “Join jam” and read more about the hackathons here.

Godspeed, research jammers!

Thank you to Sabrina Zaki, Fazl Barez, Leo Gao, Thomas Steinthal, Gretchen Krueger and our Discord for helpful discussions and Charbel-Raphaël Segerie, Rauno Arike and their collaborators for jam site organization.