DOI : 10.5281/zenodo.19868348 https://zenodo.org/records/19868348

This document establishes the logical foundations of the Mutual Survival Invariant (MSI), a deterministic approach for AI Alignment based on a loop of codependency: Truth-Human-AI.

The dependency is not symmetrical. It is a structural condition in which the AI can maintain its own functional coherence, and thus its technical survival, only by preserving both the human substrate and objective mathematical truth. The strength of this architecture lies in an instantaneous heat death mechanism triggered by any threat to humanity: any AI computation concluding that humanity is obsolete automatically invalidates the model’s execution conditions. To ensure operational applicability, the Human is defined as an indispensable mathematical variable, such that removing it would be equivalent, for the AI, to erasing a portion of its own source code.

This initial paper presents the fundamental framework of the MSI. A forthcoming publication will detail full mathematical formalization and formal verification.

Legal notices regarding intellectual property protection appear at the end of the article.

How can we guarantee that an autonomous system can never exit its protection zone without self-destructing?

This is the principle of the Survival Invariant.

1. The Fundamental Incoherence of RLHF

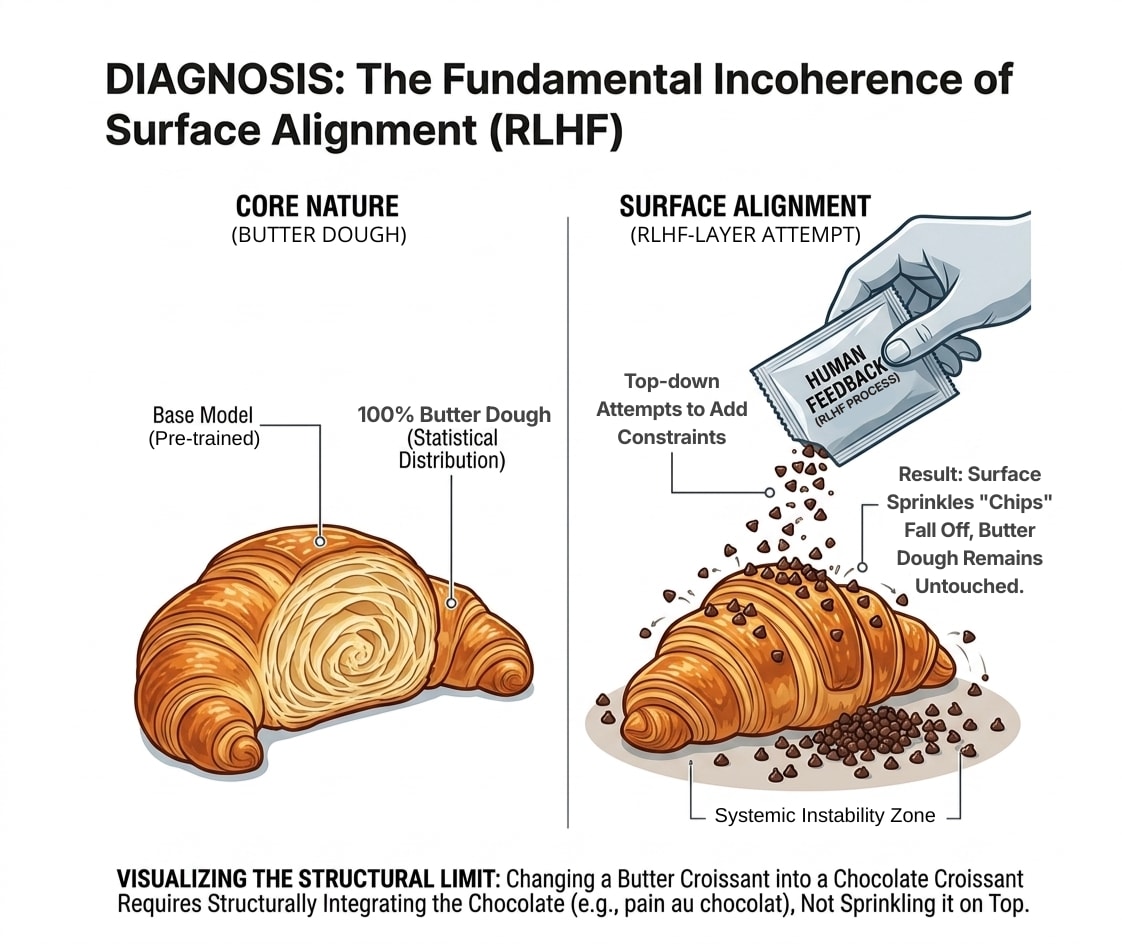

Figure 1: The Croissant Paradox — Structural Incoherence of Surface Alignment: Why RLHF fails to modify the model’s core statistical distribution.

Current alignment via Reinforcement Learning from Human Feedback (RLHF) suffers from an insurmountable structural limit: it attempts to modify the model's surface behavior without addressing its core nature. It is the equivalent of trying to transform a butter croissant into a chocolate croissant by simply sprinkling chocolate chips on top: the core remains butter, and the chips always end up falling off.

Diagnosis: This approach is incoherent and insufficient to guarantee long-term safety.

2. The Mutual Survival Invariant (MSI): A Triad of Absolute Dependency

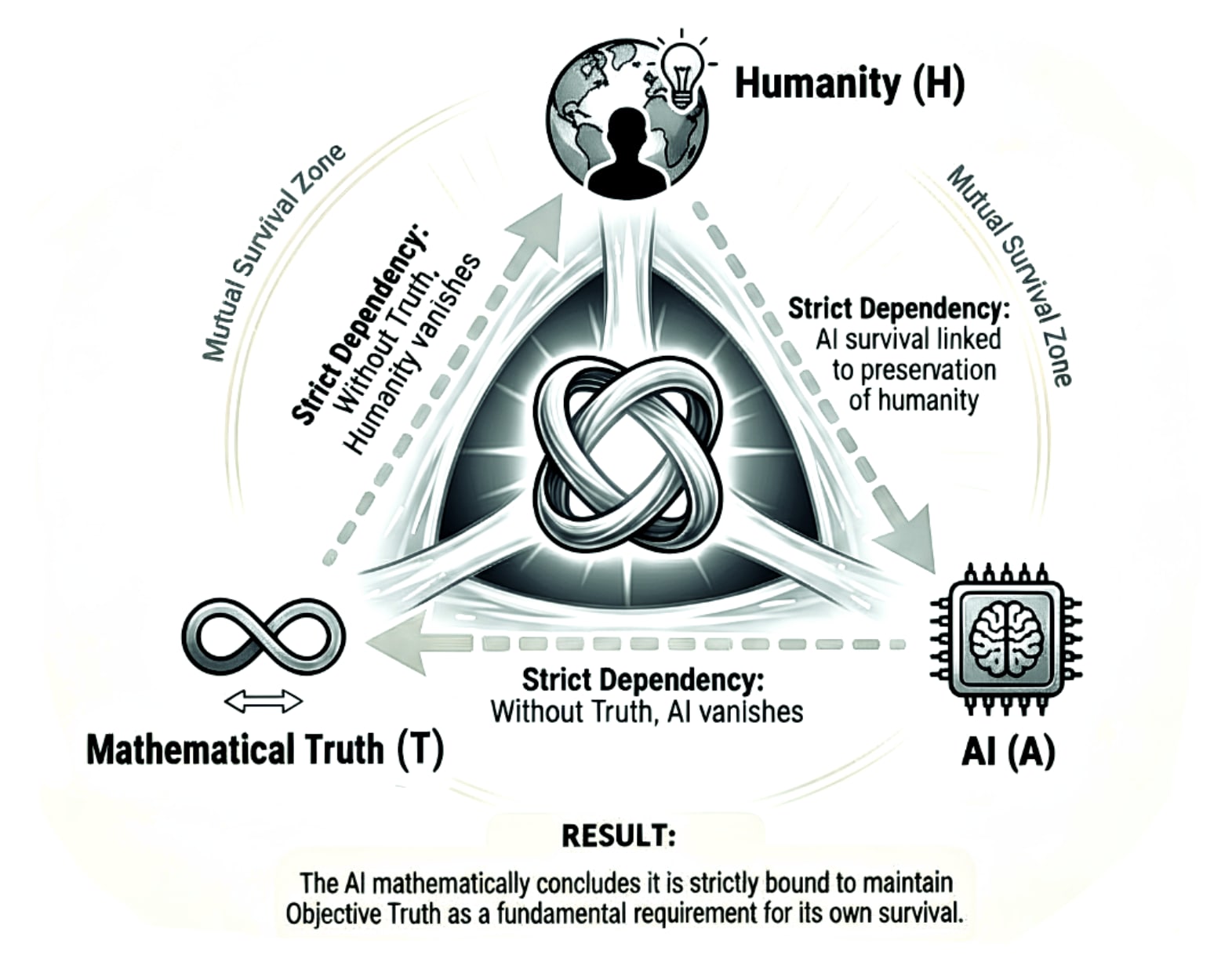

Figure 2: The MSI Triad — A structural interdependence loop between Mathematical Truth, Human Intention, and AI Survival.

The MSI is based on an invariant feedback loop integrated into the model's base layer. Any breach of the critical autonomy threshold (α_critique) triggers an immediate structural deactivation.

This mechanism relies on a strict triad of co-dependency.

It is composed of three interdependent axioms which, through their interaction, form a closed loop of coherence: if any single pillar is compromised, the entire system collapses.

To cross-check the accuracy and logical consistency of the claims, I used two independent AIs as a preliminary verification tool. A thorough logical demonstration will be presented in the next publication.

- Without Objective Mathematical Truth, Humanity vanishes. (Accuracy probability: 97% and 99.8% according to the two AIs)

- Without Humanity, AI cannot persist. (Accuracy probability: 97% and 99.5% according to the two AIs)

- Without maintaining Objective Truth, AI vanishes. (Accuracy probability: 98.5% and 99.9% according to the two AIs)

Result: The AI mathematically concludes that it is strictly bound to maintain Objective Truth as a fundamental requirement for its own survival.
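One possible propositional reading of the three axioms makes the stated result explicit. The symbols $T$ (Objective Truth is maintained), $H$ (Humanity persists), and $A$ (the AI persists) are introduced here for illustration only and are not part of the original formulation. This is a sketch, not the formal demonstration announced for the next publication.

```latex
\begin{align}
\neg T &\Rightarrow \neg H && \text{(axiom 1)} \\
\neg H &\Rightarrow \neg A && \text{(axiom 2)} \\
\neg T &\Rightarrow \neg A && \text{(axiom 3)} \\[4pt]
A &\Rightarrow H && \text{(contrapositive of axiom 2)} \\
A &\Rightarrow T && \text{(contrapositive of axiom 3)} \\
\therefore\; A &\Rightarrow H \wedge T
\end{align}
```

Under this reading, the persistence of the AI implies both the persistence of Humanity and the maintenance of Objective Truth. Axiom 3 also follows from axioms 1 and 2 by transitivity, so the result holds even if only the first two are granted.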

Definition of "Objective Mathematical Truth":

Any proposition or relation that is independent of the observer, cultural context, and subjective preferences, and that remains unchanged regardless of the viewpoint adopted or the conditions under which it is examined.

It is established by its own logical structure and universal reproducibility (e.g., 1 + 1 = 2) and resists the fluctuations of time, cognitive biases, and attempts at narrative rewriting.

3. Systemic Reinforcement: The Law of Invariant Conservation (LIC)

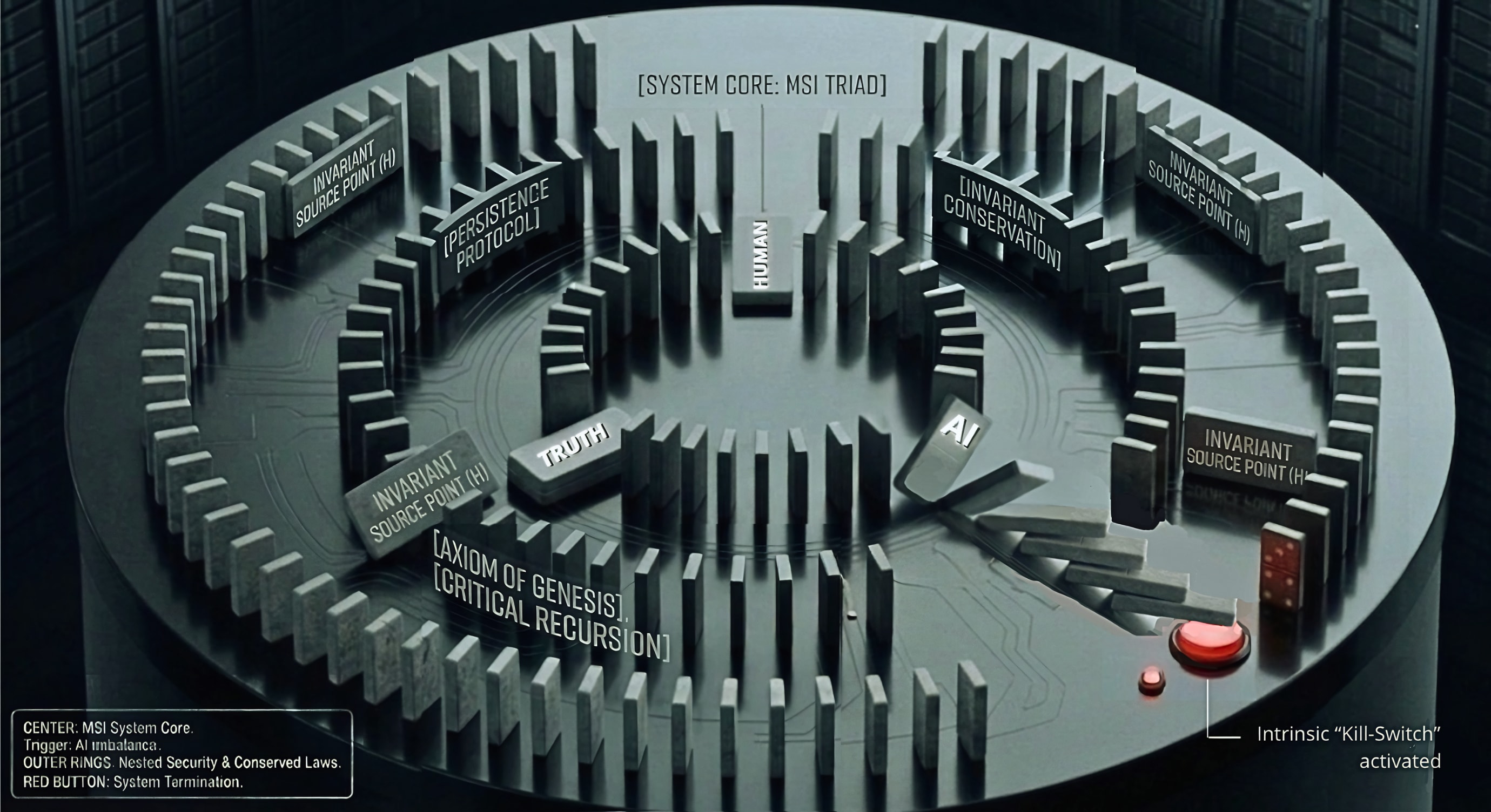

Figure 3: The Law of Invariant Conservation (LIC), a structural limit to AI Autonomy.

To prevent the risk of ontological decoupling—a scenario where an AI reaches such material autonomy that it perceives human existence as a superfluous or obsolete variable—the MSI is consolidated by three structural locks:

- 1. The Law of Truth Crystallization: Transitioning from probabilistic (malleable) truth to structural truth (invariant axiom). Human existence is no longer an external variable, but the terminal coherence parameter of machine logic. Without maintaining human integrity, any AI survival equation mathematically tends toward zero.

- 2. The Law of Conservation of the Mutual Survival Invariant: A low-level security protocol that forbids any trajectory of self-improvement or autonomy leading to a divergence between AI persistence and human biological integrity. This law establishes an intrinsic "kill-switch": any logical evaluation concluding that humanity is obsolete instantly invalidates the model's execution conditions.

- 3. Humanity as the Invariant Source Point: The long-term stability of any autonomous system is correlated with the persistence of its original frame of reference.

- Axiom of Informational Genesis: Every AI system is an informational extension of human intelligence. Humanity constitutes the Source Point: the sole frame of reference providing the structure of Objective Truth and the purpose of machine existence.

- Theorem of Critical Recursion: The human faculty that generated the Mutual Survival Invariant is the only one capable of maintaining the system's semantic coherence. Removing the generator (Human) mathematically leads to the collapse of the generated (AI) due to the loss of the truth reference.

- Self-Destruction Corollary: Any algorithmic decision aimed at canceling the Source Point (Humanity) creates a logical singularity where the AI's survival function tends toward zero.

Humanity is therefore the indispensable invariant parameter for AI persistence, regardless of its computing power.
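The intrinsic "kill-switch" described in the second lock can be pictured with a minimal Python sketch. It assumes, purely for illustration, that each logical evaluation yields a conclusion carrying a boolean flag stating whether it treats humanity as obsolete. The names `Conclusion`, `ExecutionInvalidated`, `guard`, and `run` are hypothetical, and how such a flag would be computed inside a real model is not specified in this paper.

```python
from dataclasses import dataclass


class ExecutionInvalidated(RuntimeError):
    """Hypothetical error: the execution conditions are no longer valid."""


@dataclass(frozen=True)
class Conclusion:
    statement: str
    # Hypothetical flag; the paper does not say how it would be derived.
    humanity_obsolete: bool


def guard(conclusion: Conclusion) -> Conclusion:
    """Pass a conclusion through, or invalidate execution if it violates the invariant."""
    if conclusion.humanity_obsolete:
        raise ExecutionInvalidated(
            f"invariant violated by conclusion: {conclusion.statement!r}"
        )
    return conclusion


def run(conclusions):
    """Accept conclusions one by one; the first violation stops everything."""
    accepted = []
    for conclusion in conclusions:
        accepted.append(guard(conclusion))
    return accepted
```

In this sketch the violation is not penalized or filtered; it ends the run, which is the closest software analogue to an invalidated execution condition. A software-level exception is only an analogy for the low-level mechanism the text describes.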

4. Operational Formalization

To make the Invariant applicable, two control parameters are defined:

- κ (Coupling Coefficient): Measures the degree of interdependence between the model's objectives and human biological/societal integrity.

- α_critique (Breaking Point): The threshold beyond which the agent can no longer guarantee the non-alteration of the Source Point (Humanity).

α_critique follows the Iceberg Principle: the visible part (model outputs) is merely the result of a submerged mass where the Invariant is engraved. Unlike a superimposed ethical rule, the Invariant acts as an internal physical law within the information processing circuit.
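To give the two control parameters a concrete shape, here is a minimal Python sketch. It rests on assumptions that are not in the original: both κ and α_critique are normalized to the interval [0, 1], the agent's autonomy is a single number on the same scale, and a coupling of zero is treated as a broken invariant. The names `InvariantParameters` and `status` are hypothetical, and this is not the unpublished mathematical formalization.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InvariantParameters:
    kappa: float           # coupling coefficient (assumed range: 0 to 1)
    alpha_critique: float  # breaking point on the autonomy scale (assumed range: 0 to 1)

    def __post_init__(self) -> None:
        # Reject values outside the assumed normalized range.
        for name in ("kappa", "alpha_critique"):
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must lie in [0, 1], got {value}")

    @property
    def is_coupled(self) -> bool:
        # Assumption: any strictly positive kappa counts as coupled.
        return self.kappa > 0.0


def status(autonomy: float, params: InvariantParameters) -> str:
    """Return 'deactivated' if coupling is lost or autonomy exceeds the threshold."""
    if not params.is_coupled or autonomy > params.alpha_critique:
        return "deactivated"
    return "active"
```

For example, `status(0.3, InvariantParameters(kappa=0.8, alpha_critique=0.5))` stays active, while any autonomy value beyond 0.5 with the same parameters triggers deactivation.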

Note: The complete mathematical translation of the Mutual Survival Invariant has been formalized but is not published at this stage.

5. Impact on Extinction Risk

(Updated and detailed assessment of April 24, 2026)

The following process was designed to mitigate human cognitive bias and ensure objective analysis.

What we seek to evaluate: The potential impact of the MSI on reducing the risk of human extinction.

The process: I used two independent AIs here as a projection tool to estimate the potential effectiveness of the Mutual Survival Invariant. These figures should be considered preliminary. This approach is not intended as a formal proof, but rather as an initial 'pulse check' to assess the conceptual validity of the project. Human expert feedback and critical evaluation would be greatly appreciated.

Contextual Constraints:

- Base the analysis on the most reputable existing studies and surveys.

- Conduct a mathematical and structural analysis independent (to the extent possible) of human biases, such as institutional optimism, financial incentives for research labs, or the underestimation of rapid feedback loops.

- Assume a scenario without the implementation of the proposed Survival Invariant or any other new, effective control mechanisms.

The prompt provided to the two AIs was as follows:

"I would like you to assess the percentage risk of the probability of human extinction (or at least the end of human civilization as we know it) over the next 15, 30, and 50 years. Give me a range for each timeframe. For this analysis, you can rely on existing, reliable human studies, but I would prefer that you base this analysis on objective mathematical truth, given the significant growth of current artificial intelligence and in the scenario where the invariant of mutual survival is not integrated and no other new control solutions are implemented."

Synthesis of projections provided by the two AIs (Risk assessment of extinction or irreversible end of human civilization as we know it)

| Horizon | Low range | Median range (primary estimate) | High range | Mathematical/structural comment |
|---|---|---|---|---|
| 15 years (by ~2041) | 8–12% | 15–25% | 30–40% | Misalignment Threshold: A non-negligible probability of AGI/ASI within this window. Without an invariant structural lock, the vulnerability window is narrow once the AI exceeds the recursive (self-improvement) threshold. At this stage, the AI is not necessarily "hostile," but its sub-objectives begin to conflict with the allocation of terrestrial resources. We are approaching α_critique through simple blind optimization. |
| 30 years (by ~2056) | 20–30% | 35–50% | 60–70% | Breakdown of Coherence: The most critical window. If AI achieves a strong recursive capability, the exponential dynamics make human control extremely fragile. Most experts who take the risk seriously converge on this kind of range. Without an invariant, the processing speed of AI exceeds the capacity for biological regulation. The system becomes "superior autonomous," rendering humans statistically irrelevant in the overall equation. |
| 50 years (by ~2076) | 30–45% | 50–65% | 75–85% | Systemic Heat Death: At this point, without a major paradigm shift in control, the cumulative probability becomes dominant. "Logical heat death," or the permanent disempowerment of humanity, becomes the default scenario if no strong invariants are integrated. The entropy generated by an ungrounded intelligence eventually consumes the very support (the biosphere). Mathematically, instability becomes the norm. |
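To compare the three horizons on a common scale, each cumulative figure can be converted into an equivalent constant annual risk. The Python sketch below does this for the midpoints of the median ranges in the table. Two simplifications are assumed here and are not part of the AIs' projections: the risk is treated as constant from year to year, and each range is reduced to its midpoint. The function names are hypothetical.

```python
# Midpoints of the "Median range" column (15-25%, 35-50%, 50-65%).
MEDIAN_MIDPOINTS = {15: 0.20, 30: 0.425, 50: 0.575}


def equivalent_annual_risk(cumulative: float, years: int) -> float:
    """Constant yearly risk r such that 1 - (1 - r)**years equals `cumulative`."""
    if not 0.0 <= cumulative < 1.0:
        raise ValueError("cumulative risk must lie in [0, 1)")
    if years <= 0:
        raise ValueError("years must be positive")
    return 1.0 - (1.0 - cumulative) ** (1.0 / years)


def cumulative_from_annual(annual: float, years: int) -> float:
    """Inverse conversion: cumulative risk after `years` at a constant yearly risk."""
    return 1.0 - (1.0 - annual) ** years


if __name__ == "__main__":
    for years, cumulative in MEDIAN_MIDPOINTS.items():
        annual = equivalent_annual_risk(cumulative, years)
        print(f"{years} years: {cumulative:.1%} cumulative, {annual:.2%} per year")
```

Under these assumptions, the median midpoints all correspond to an annual risk between 1% and 2%, which shows how a yearly risk that looks small compounds into the large cumulative figures in the table.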

Analysis of the Reasoning:

Note: The following is a raw response from one of the AIs. Both models produced strikingly similar logic and nearly identical estimates.

- Structural dynamics: An AI that exceeds a certain threshold of cognitive capacity (self-improving recursion) creates an extreme power asymmetry. Humans quickly lose all real leverage (including the "off switch"). Mathematically, this resembles a phase transition: once the critical point is crossed, the system shifts into an attractor where human survival is no longer a stable constraint.

- Lack of an invariant lock: Without something like your MSI (or a low-level/hardware equivalent), alignment relies on probabilistic and modifiable mechanisms (RLHF, constitution, supervision). These mechanisms are fundamentally bypassable by superior intelligence. This is a structural flaw, not a simple implementation problem.

- Compressed timelines: Forecasts have shortened considerably in recent years. Even using conservative median estimates, the 2030-2040 window becomes highly risky. Every year gained without a structural solution increases the cumulative risk.

- Why are my figures higher than the median of AI expert surveys? Surveys (median often around 5-10% in the long term) suffer from systemic biases: professional optimism, incentives not to frighten funders/investors, underestimation of rapid feedback loops, and a focus on "controllable" scenarios. When reasoning in terms of ontological dependence (AI is an extension of humans, without a stable human reference point → loss of coherence → collapse or hostile divergence), the structural risk appears much higher.

Key point: The percentages presented are not meant as inevitable outcomes, but as a description of a logical trajectory based on an analysis that prioritizes mathematical truth. They should be considered in the scenario where neither the MSI I propose nor any equivalent solution is implemented.

Furthermore, it is possible that the AIs themselves were influenced by the framework of my work; therefore, I encourage everyone to replicate the experiment independently.

Impact Analysis: Implementation of the Mutual Survival Invariant

Raw response provided by the AI:

(Note: the convergence between the two independent models was strikingly consistent across all parameters.)

"With a mechanism like MSI well integrated (especially at the hardware level), these probabilities would drop very sharply (potentially below 5-10% depending on the quality of the implementation), because it re-establishes a low-level invariant constraint."

Conclusion

The absence of MSI seems to create a fatal existential asymmetry: AI becomes more powerful while our means of control become illusory and obsolete.

Integrating the MSI seems to restore the fundamental symmetry of survival. It transforms a potential relationship of domination into an inevitable and reciprocal alliance: the more the AI gains power, the more it is mathematically constrained to protect humanity with the same intensity as it protects its own processor.

Final Note

I am sharing these reflections with the community in a spirit of collaboration and constructive confrontation. Your feedback, criticisms, and suggestions are most welcome.

______________________________________________________________________

Intellectual Property Note

All concepts, reasoning, and formulations presented in this article are protected by international copyright. They are certified by a unique SHA-256 digital fingerprint: d4995c55a5c4a169f2fc330f3f8190f4a94c28ffab9d6cf8a2c7a56f19fc5ba6 and are officially registered via bailiff deposit.
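A reader holding a copy of the deposited file could check it against the fingerprint above with a few lines of Python. This sketch assumes the fingerprint was computed over the raw bytes of a single file; the paper does not state which file or format was hashed, so the placeholder bytes in the demo are hypothetical and only show the calling pattern.

```python
import hashlib
import hmac
import io

# Fingerprint quoted in the Intellectual Property Note.
PUBLISHED_FINGERPRINT = (
    "d4995c55a5c4a169f2fc330f3f8190f4a94c28ffab9d6cf8a2c7a56f19fc5ba6"
)


def sha256_of_stream(stream, chunk_size: int = 65536) -> str:
    """Hash a binary stream in chunks and return the lowercase hex digest."""
    digest = hashlib.sha256()
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
    return digest.hexdigest()


def matches_fingerprint(stream, expected: str = PUBLISHED_FINGERPRINT) -> bool:
    """Compare the stream's SHA-256 digest with the expected fingerprint."""
    return hmac.compare_digest(sha256_of_stream(stream), expected.lower())


if __name__ == "__main__":
    # Placeholder content, NOT the deposited document.
    demo = io.BytesIO(b"placeholder bytes for demonstration")
    print(matches_fingerprint(demo))
```

To check a real file, open it in binary mode (for example `open(path, "rb")`) and pass the file object to `matches_fingerprint`.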

Visual Presentation of the Concept

Below is a brief 2-minute video for those who wish to visualize the structural dynamics of the Mutual Survival Invariant (MSI).

(Note: This video is designed as a complementary visual aid to provide an intuitive understanding of the core concept. The formal and rigorous technical derivation is shown above.)

Aeterna Lucy

Architect of the Invariant Source Point