AI governance often assumes harm can be corrected after the fact.

That assumption fails once damage becomes irreversible.

When harm crosses that boundary, remediation tools such as audits, penalties, and model updates cannot restore the affected social system to its previous state. Governance based on incident response arrives too late.

This post proposes a simple threshold for identifying that boundary. I call it the Systemic Irreversibility Baseline.

The claim is direct. Once harm becomes effectively irreversible, governance must shift from remediation to prevention.

The core governance problem

Most regulatory frameworks follow a familiar sequence.

A system deploys. A harmful outcome appears. Authorities investigate responsibility. Regulators impose corrections or penalties.

This sequence works when intervention can restore the system to its prior state.

Certain AI harms behave differently. They propagate through social systems and alter them. Once that change occurs, restoration becomes impossible within any policy-relevant timeframe.

Governance structures built around incident response break under these conditions because they assume reversibility.

The Systemic Irreversibility Baseline

The Systemic Irreversibility Baseline identifies the point where remediation stops working.

The framework evaluates three variables, each stated as a condition that a given harm either satisfies or does not.

- Non-restorability: Intervention cannot return the affected system to functional equivalence with its pre-harm state.

- Relational depth: The harm alters trust relationships, credibility structures, or institutional standing across many decisions and contexts.

- Distributional diffusion: The harm spreads across networks faster than centralized correction can contain or reverse it.

A harm that satisfies all three conditions crosses the baseline.

Once the baseline is crossed, remediation tools lose effectiveness. Governance must shift toward preventive system design or structural policy responses.

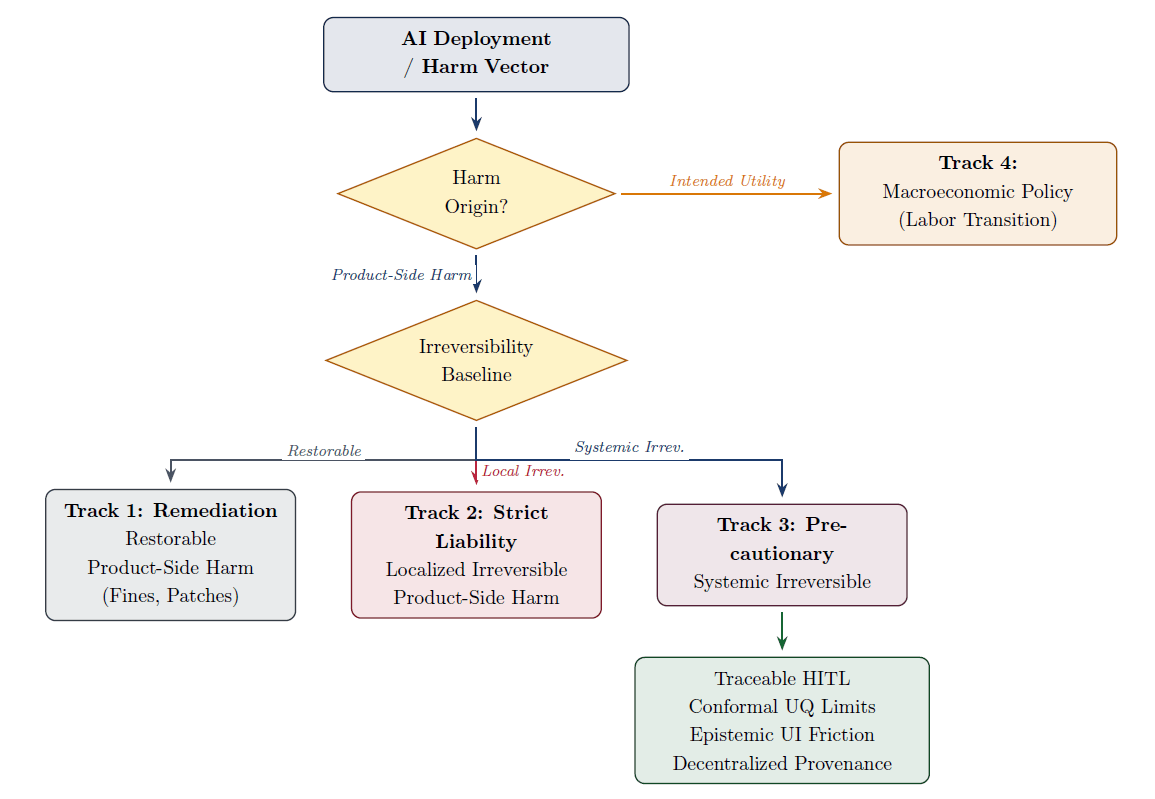

The baseline functions as a routing rule for governance.

Repairable harms remain within standard product safety frameworks. Localized irreversible harms fall under strict liability. Systemic irreversible harms require precautionary design constraints. Harms caused by the intended function of automation move into macroeconomic policy.
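The routing rule can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the rubric from the paper: the names `HarmAssessment`, `Route`, `crosses_baseline`, and `route` are hypothetical, each variable is reduced to a yes/no flag, intended-function harms are checked first, and a "localized irreversible" harm is assumed to mean one that is non-restorable but does not meet all three conditions.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    PRODUCT_SAFETY = "standard product safety framework"
    STRICT_LIABILITY = "strict liability"
    PRECAUTIONARY_DESIGN = "precautionary design constraints"
    MACROECONOMIC_POLICY = "macroeconomic policy"


@dataclass(frozen=True)
class HarmAssessment:
    # Hypothetical yes/no coding of the three baseline variables.
    non_restorable: bool
    relational_depth: bool
    distributional_diffusion: bool
    # True when the harm follows from the system working as designed.
    intended_function: bool = False


def crosses_baseline(harm: HarmAssessment) -> bool:
    """A harm crosses the baseline only if all three conditions hold."""
    return (
        harm.non_restorable
        and harm.relational_depth
        and harm.distributional_diffusion
    )


def route(harm: HarmAssessment) -> Route:
    """Map an assessed harm to a governance regime (assumed ordering)."""
    if harm.intended_function:
        return Route.MACROECONOMIC_POLICY
    if not harm.non_restorable:
        return Route.PRODUCT_SAFETY
    if crosses_baseline(harm):
        return Route.PRECAUTIONARY_DESIGN
    # Assumption: irreversible but not systemic is treated as localized.
    return Route.STRICT_LIABILITY


viral_defamation = HarmAssessment(True, True, True)
print(route(viral_defamation).value)
```

The order of the checks is a design choice in this sketch. A real protocol would need to say explicitly how intended-function harms that are also repairable should be handled.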

Three cases where remediation fails

Epistemic contagion

Research in cognitive psychology shows that misinformation often persists after correction, a pattern known as the continued influence effect. People continue to rely on the original claim when forming later judgments.

Generative AI lowers the cost of producing persuasive misinformation. Automated systems can generate large volumes of plausible claims that spread rapidly through online networks before verification systems respond.

At scale this dynamic changes the information environment itself. Belief distributions shift, institutional credibility erodes, and corrective mechanisms struggle to keep pace with the volume and speed of synthetic claims. Corrections rarely restore the prior belief distribution once misinformation has propagated across large populations.

AI-generated defamation

Generative systems can produce fabricated allegations about real individuals. A single prompt may generate a detailed accusation supported by invented evidence.

When these claims circulate through social platforms they benefit from the same amplification mechanisms that drive other viral content. Negative claims propagate quickly, while corrections travel slowly and attract less attention.

Courts may impose damages or require retractions, yet reputational damage often persists across professional and social contexts. The harm remains embedded in search results, archived discussions, and informal judgments made by others who encountered the original allegation.

Small incidents remain localized. Large-scale diffusion produces damage that cannot realistically be reversed.

Structural labor displacement

The third case differs from the previous two.

Some harms arise from the intended function of AI systems. Automation replaces tasks that were previously performed by workers.

Clerical and administrative occupations provide a clear example. When automation performs these tasks at scale, segments of the labor market contract quickly.

Research on major economic shocks shows that sudden employment losses can produce long-term regional decline. Communities built around specific industries often struggle to recover once those industries shrink rapidly.

This outcome does not result from a malfunctioning system. The system performs exactly as designed. The resulting harm therefore falls outside the scope of product safety regulation.

Governance responses shift toward labor transition policy, retraining programs, and macroeconomic stabilization tools.

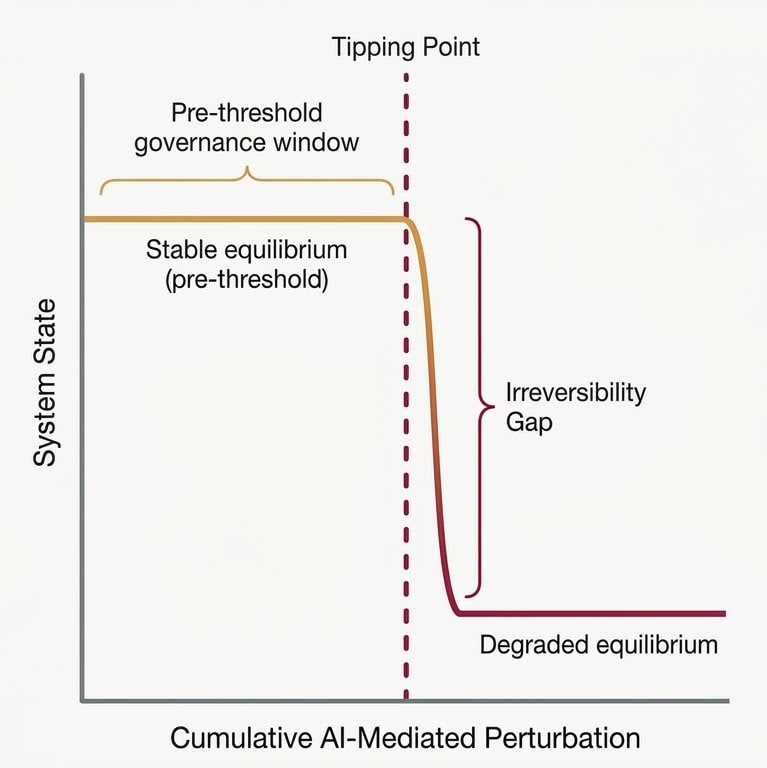

A simple model of irreversible harm

Complex systems often contain tipping points.

Within a stable range, disturbances can be corrected and the system returns to equilibrium. When disturbances cross a threshold, the system settles into a new equilibrium state because the underlying structure has changed.

Irreversible AI harms follow the same structural logic. Diffusion across social networks and institutional feedback loops can push social systems beyond a recoverable boundary. Intervention after that crossing can reduce further damage, but it cannot restore the original state.

Preventive governance must therefore operate before this transition occurs.
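A toy simulation makes the tipping-point logic concrete. The sketch below is not a model of any real social system. It assumes a textbook bistable system, dx/dt = x - x^3, with stable equilibria at -1 and +1 and a threshold at 0. The function name `settle` and all parameter values are illustrative choices.

```python
def settle(x0: float, dt: float = 0.01, steps: int = 5000) -> float:
    """Integrate dx/dt = x - x**3 with forward Euler and return the end state."""
    x = x0
    for _ in range(steps):
        x += dt * (x - x**3)
    return x


ORIGINAL_STATE = -1.0  # the pre-harm equilibrium in this toy system

# A shock that stays below the threshold at 0: the system recovers.
small_shock = settle(ORIGINAL_STATE + 0.8)

# A shock that crosses the threshold: the system settles somewhere new.
large_shock = settle(ORIGINAL_STATE + 1.2)

print(round(small_shock, 3))  # returns to roughly -1
print(round(large_shock, 3))  # settles at roughly +1
```

In this toy system, once the state sits near +1, a small push back toward the original state decays away. That is the sense in which later intervention can limit further damage without restoring the earlier equilibrium.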

What this means for AI governance

The Systemic Irreversibility Baseline introduces several practical implications.

Risk classification requires an irreversibility dimension. Severity alone does not determine the appropriate governance strategy.

Preventive system architecture becomes a regulatory tool. Systems capable of crossing the baseline may require constraints such as auditable oversight, uncertainty thresholds that trigger human review, friction that slows automated decisions, and verifiable provenance for generated content.
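One of these constraints, an uncertainty threshold that triggers human review, can be sketched as a simple gate. This is a minimal illustration under assumptions: the `Decision` type, the `needs_human_review` function, and the 0.9 threshold are hypothetical, and the confidence score is assumed to be a calibrated value between 0 and 1.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    action: str
    confidence: float  # assumed calibrated score in [0, 1]


def needs_human_review(
    decision: Decision,
    may_cross_baseline: bool,
    min_confidence: float = 0.9,  # illustrative threshold, not a recommendation
) -> bool:
    """Hold low-confidence decisions for review when the stakes are irreversible."""
    if not 0.0 <= decision.confidence <= 1.0:
        raise ValueError("confidence must lie in [0, 1]")
    return may_cross_baseline and decision.confidence < min_confidence


flagged = needs_human_review(Decision("publish claim", 0.72), may_cross_baseline=True)
print(flagged)  # True: below the threshold and potentially irreversible
```

The gate applies only to systems judged capable of crossing the baseline, so routine low-stakes decisions are not slowed down.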

Governance institutions also require clear routing rules. Product safety regulators address product failures. Macroeconomic institutions address structural employment shifts caused by automation.

Operationalizing the baseline

A common objection concerns subjectivity. Determining when harm becomes irreversible may appear discretionary.

The framework addresses this through a structured coding rubric and routing protocol described in the paper. Each variable receives explicit indicators that allow evaluators to classify harms consistently across cases.

The baseline therefore functions as a decision rule rather than a narrative judgment. The rubric converts qualitative features of harm into governance routing decisions.

Full research paper

Beyond Remediation: An Irreversibility Threshold for Preventive AI Governance