This is a crosspost of the full text of Introducing Better Futures from Forethought's website, made for the EA Forum's Better Futures Highlight Week. There's more discussion at the original summary post of this article here.

1. The basic case

Suppose we want the future to go better. What should we do?

One prevailing approach is to try to avoid roughly zero-value futures: reducing the risks of human extinction or of misaligned AI takeover.

This essay series will explore an alternative point of view: making good futures even better. On this view, it’s not enough to avoid near-term catastrophe, because the future could still fall far short of what’s possible. From this perspective, a near-term priority — or maybe even the priority — is to help achieve a truly great future.

That is, we can make the future go better in one of two ways:

- Surviving: Making sure humanity avoids near-term catastrophes (like extinction or permanent disempowerment).[1]

- Flourishing: Improving the quality of the future we get if we avoid such catastrophes.

This essay series will argue that work on Flourishing is in the same ballpark of priority as work on Surviving. The basic case for this appeals to the scale, neglectedness and tractability of the two problems, where I think that Flourishing has greater scale and neglectedness, but probably lower tractability. This section informally states the argument; the supplement (“The Basic Case for Better Futures”) makes the case with more depth and precision.

Scale

First, scale. As long as we’re closer to the ceiling on Surviving than we are on Flourishing (that is, as long as there is more room for improvement on the latter), Flourishing has the greater scale.
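One way to state this condition precisely, assuming that a future in which we don’t survive has roughly zero value: let $S$ be the probability of Surviving and $F$ the value of the future given survival, as a fraction of the best feasible future, so that the expected value of the future is $V = S F$. Fully solving each problem would then gain

$$\Delta V_{\text{Surviving}} = (1 - S)\,F, \qquad \Delta V_{\text{Flourishing}} = S\,(1 - F),$$

and Flourishing has the greater scale exactly when

$$S(1 - F) > (1 - S)F \iff S - SF > F - SF \iff S > F,$$

that is, when the gap to the ceiling on Surviving, $1 - S$, is smaller than the gap to the ceiling on Flourishing, $1 - F$.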

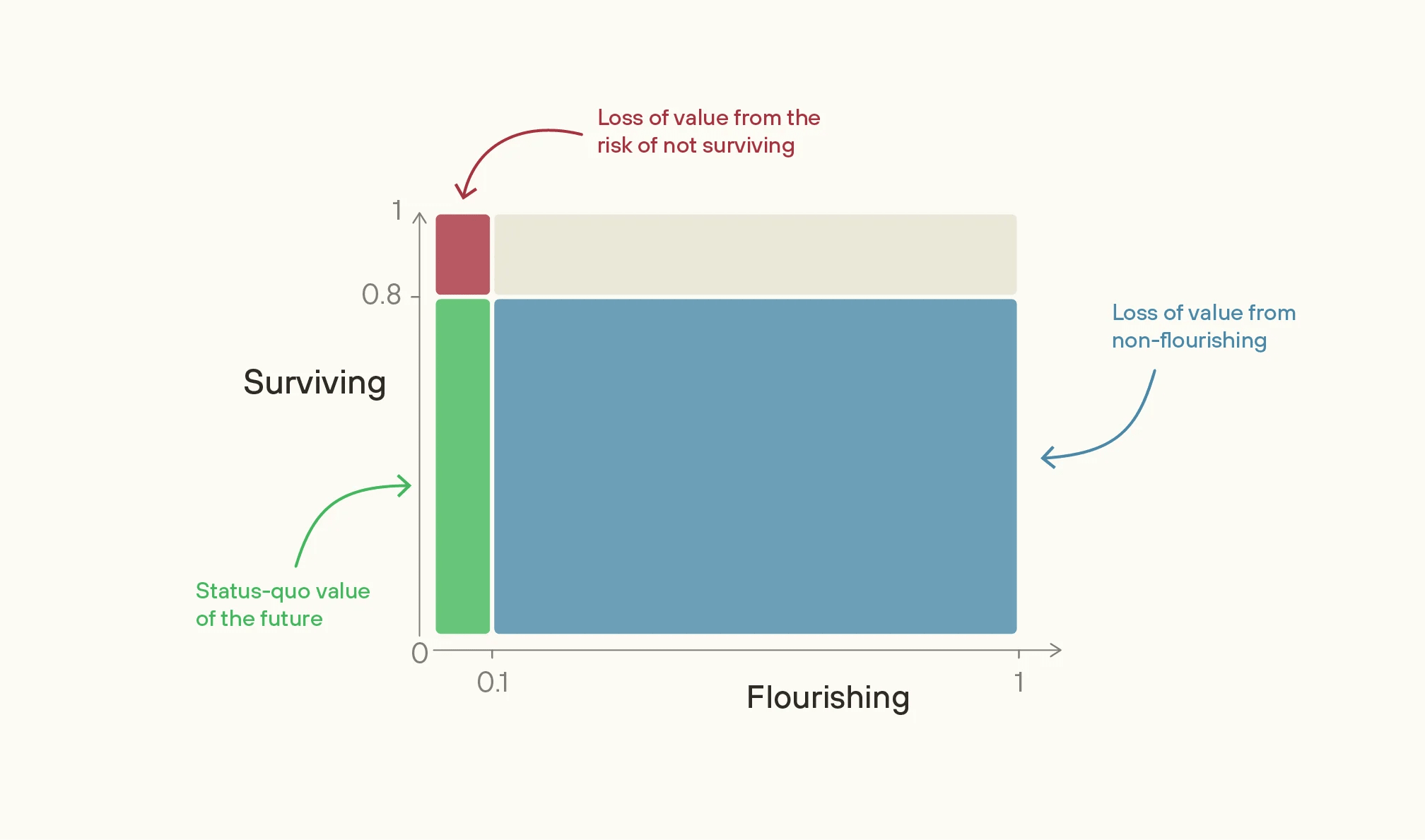

To illustrate, suppose you think that our chances of survival this century are reasonably high (greater than 80%) but that, if we survive, we should expect a future that falls far short of how good it could be (less than 10% as good as the best feasible futures). These are close to my views; the view about Surviving seems widely held,[2] and Fin Moorhouse and I will argue in essays 2 and 3 for something like that view on Flourishing. If so, there’s more room to improve the future by working on Flourishing than by working on Surviving.


On these numbers, if we completely solved the problem of not-Surviving, we would be 20 percentage points more likely to get a future that's 10% as good as it could be. Multiplying these together, the difference we’d make amounts to 2% of the value of the best feasible future.

In contrast, if we completely solved the problem of non-Flourishing, then we’d have an 80% chance of getting to a 100%-valuable future. The difference we’d make amounts to 72% of the value of the best feasible future — 36 times greater than if we’d solved the problem of not-Surviving. Indeed, increasing the value of the future given survival from 10% to just 12.5% would be as good as wholly eliminating the chance that we don't survive.[3]
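As a worked check, assuming the expected value of the future is Surviving times Flourishing ($V = S F$) with the illustrative values $S = 0.8$ and $F = 0.1$, the figures above come out as

$$\Delta V_{\text{Surviving}} = (1 - 0.8) \times 0.1 = 0.02, \qquad \Delta V_{\text{Flourishing}} = 0.8 \times (1 - 0.1) = 0.72, \qquad \frac{0.72}{0.02} = 36.$$

The 12.5% figure follows from asking which value $F'$, with $S$ unchanged, matches the value of eliminating the risk of not Surviving, which would give $V = 1 \times 0.1 = 0.1$:

$$0.8\,F' = 0.1 \implies F' = 0.125.$$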

And the upside from work on Flourishing could plausibly be much greater still than these illustrative numbers suggest. If Surviving is as high as 99% and Flourishing as low as 1%, then the problem of non-Flourishing is almost 10,000 times as great in scale as the risk of not-Surviving. So, for priority-setting, the value of forming better estimates of these numbers is high.[4]

| Surviving (probability of avoiding a ~zero-value future) | Flourishing (value of the future if we avoid a ~zero-value future, as a fraction of the best feasible future) | Relative scale of non-Flourishing to not-Surviving |
|---|---|---|
| 0.8 | 0.1 | 36 |
| 0.95 | 0.05 | 361 |
| 0.99 | 0.01 | 9801 |

Comparing the value of fully solving non-Flourishing with fully solving not-Surviving, given different default estimates of Surviving and Flourishing.
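The ratios in the table can be reproduced with a short Python sketch. It assumes the same simple model as the worked numbers above: the expected value of the future is Surviving times Flourishing, and not Surviving yields a ~zero-value future. The function name `relative_scale` is hypothetical, and the equal-credence comparison at the end is a made-up illustration of the point in footnote 4, not an estimate.

```python
import statistics


def relative_scale(surviving: float, flourishing: float) -> float:
    """Ratio of the gain from fully solving non-Flourishing to the gain
    from fully solving not-Surviving, assuming expected value = S * F."""
    gain_flourishing = surviving * (1 - flourishing)  # S(1 - F)
    gain_surviving = (1 - surviving) * flourishing    # (1 - S)F
    return gain_flourishing / gain_surviving


# The (Surviving, Flourishing) pairs from the table above.
ROWS = [(0.8, 0.1), (0.95, 0.05), (0.99, 0.01)]

if __name__ == "__main__":
    ratios = [relative_scale(s, f) for s, f in ROWS]
    for (s, f), r in zip(ROWS, ratios):
        print(f"Surviving={s}, Flourishing={f}: ratio ~ {r:,.0f}")
    # Hypothetical: equal credence in each row. Because the ratio is
    # so skewed, its mean lies far above its median.
    print(f"median ~ {statistics.median(ratios):,.0f}")
    print(f"mean ~ {statistics.mean(ratios):,.0f}")
```

With equal credence in the three rows, the median ratio is 361 while the mean is roughly 3,400, which is why putting some probability on the extreme rows can raise the expected relative scale well above a median estimate.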

A further argument about scale comes from considering which worlds are saved by working on Survival, or improved by working on Flourishing. Conditional on successfully preventing an extinction-level catastrophe, you should expect Flourishing to be (perhaps much) lower than otherwise, because a world that needs saving is more likely to be uncoordinated, poorly directed, or vulnerable in the long run. So the value of increasing Survival is lower than it would first appear. On the other hand, there is little reason to believe that worlds where you successfully increase Flourishing are ones in which the chance of Surviving is especially low. So this consideration differentially increases the value of work on Flourishing.[5]
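A toy model can make this selection effect concrete. It is my own simplified illustration, not Trammell’s formalization: the two world types and all of their numbers are hypothetical, and it assumes that survival work removes the same fraction of risk in every world, so the worlds it saves are weighted by how much risk they face.

```python
from dataclasses import dataclass


@dataclass
class WorldType:
    prior: float        # probability that we are in this kind of world
    risk: float         # chance of a ~zero-value catastrophe this century
    flourishing: float  # value of the future given survival (fraction of best feasible)


# Hypothetical numbers, chosen only for illustration.
WORLDS = [
    WorldType(prior=0.5, risk=0.35, flourishing=0.05),  # fragile, poorly coordinated
    WorldType(prior=0.5, risk=0.05, flourishing=0.15),  # robust, well coordinated
]


def survival_probability(worlds):
    """Overall probability of avoiding catastrophe."""
    return 1 - sum(w.prior * w.risk for w in worlds)


def flourishing_given_survival(worlds):
    """Expected Flourishing conditional on avoiding catastrophe."""
    survive = [w.prior * (1 - w.risk) for w in worlds]
    return sum(p * w.flourishing for p, w in zip(survive, worlds)) / sum(survive)


def flourishing_in_saved_worlds(worlds, risk_cut=0.1):
    """Expected Flourishing in the worlds that survival work actually saves.

    Assumes the work removes the same fraction `risk_cut` of each world's
    risk, so the chance of being saved is proportional to prior * risk.
    The result does not depend on the value of `risk_cut`.
    """
    saved = [w.prior * w.risk * risk_cut for w in worlds]
    return sum(p * w.flourishing for p, w in zip(saved, worlds)) / sum(saved)


if __name__ == "__main__":
    print(f"P(survive) = {survival_probability(WORLDS):.2f}")
    print(f"Flourishing given survival = {flourishing_given_survival(WORLDS):.4f}")
    print(f"Flourishing in saved worlds = {flourishing_in_saved_worlds(WORLDS):.4f}")
```

With these made-up numbers, overall survival is 80% and Flourishing given survival is about 0.11, but Flourishing in the worlds the work actually saves is 0.0625, so valuing the risk reduction at the unconditional figure would overstate it by roughly 75%.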

Neglectedness

Second, neglectedness. Most people in the world today, on both their self-interest and their moral views, care much more about avoiding near-term catastrophe (including risks to their own lives and those of their families) than they do about long-term flourishing. So we should expect at least some aspects of Flourishing to be much more neglected by the wider world than risks to Survival.[6]

Work on Flourishing currently seems more neglected among those motivated by longtermism, too.

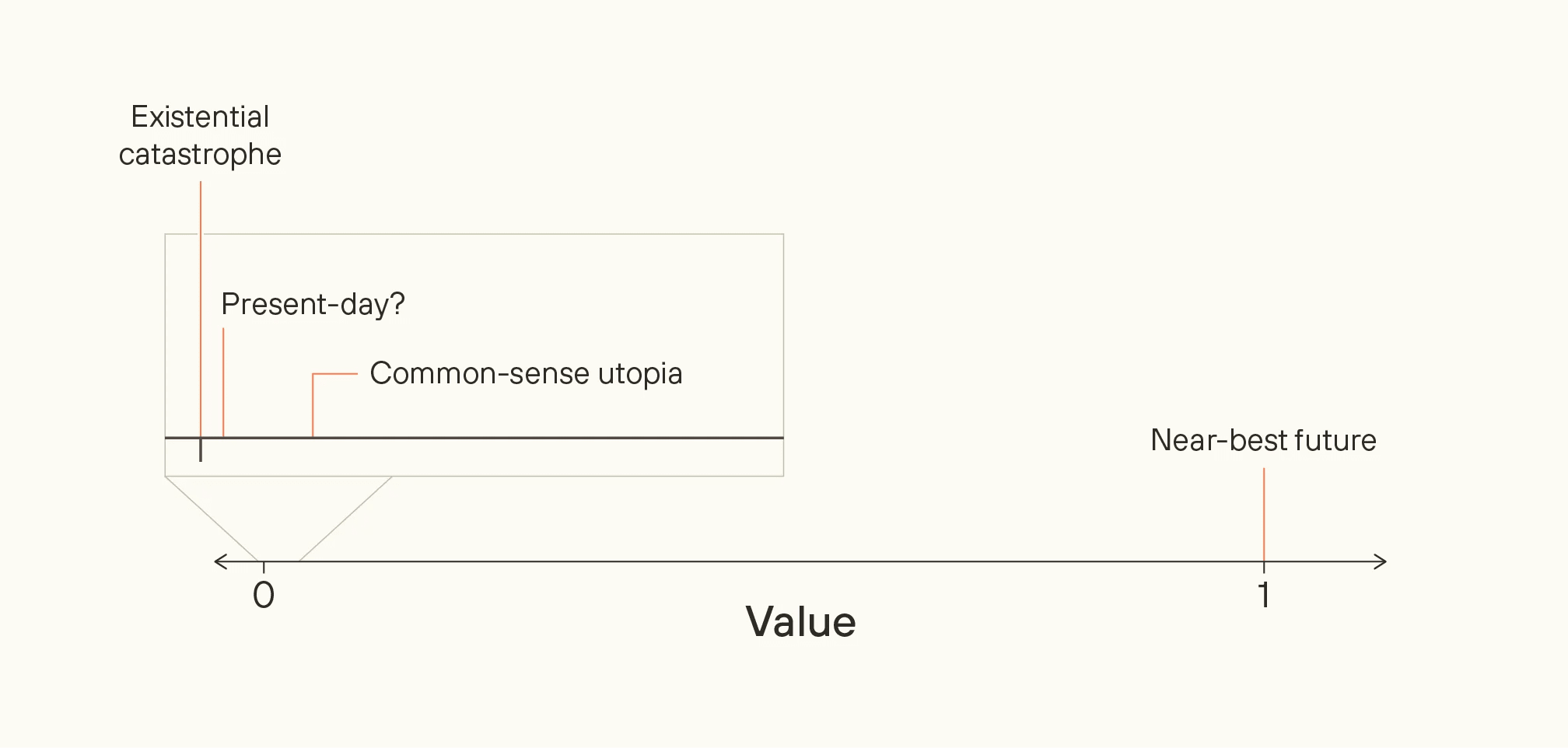

This neglect arises in part because the risks of failure in Flourishing are often much more subtle than the risk of near-term catastrophe. The future could even be truly wonderful, compared to the current world, yet still fall radically short of what’s possible. Ask someone to picture utopia, and they might describe a society like ours, but free from its most glaring flaws, and abundant with those things we currently want. But the difference in value between the world today and that common-sense utopia might be very small compared to the difference between that common-sense utopia and the best futures we could feasibly achieve.


Tractability

The tractability of work to improve Flourishing is less clear; essays 4 and 5 will discuss this more. I see this as the strongest argument against the better futures perspective, and the reason why I don’t feel confident that work on Flourishing is higher-priority than work on Surviving, rather than merely in the same ballpark.

But at the very least I think we should try to find out how tractable work to improve Flourishing is. Some promising areas include: reducing the risk of human concentration of power; ensuring that advanced AI is not merely corrigible but also loaded with good, reflective values; and improving the quality of decisions that structure the post-AGI world, including around space governance and the rights of digital beings.

2. The series

In the rest of the series, I argue:

- We are unlikely to get a flourishing future by default even if we avoid catastrophe, because a flourishing future is a narrow target (essay 2) and it’s unlikely that future people will home in on that target (essay 3)[7]

- It’s possible to have persistent positive impact on how well the long-run future goes other than by avoiding catastrophe (essay 4)

- There are concrete things we could do to this end, today (essay 5)

There’s a lot I don’t cover, too, just because of limitations of space and time. For an overview, see this footnote.[8]

3. What Better Futures is not

Before we dive in, I want to clarify some possible misconceptions.

First, this series doesn’t require accepting consequentialism, which is the view that the moral rightness of an act depends wholly on the value of the outcomes it produces. It’s true that my focus is on how to bring about good outcomes, which is the consequentialist part of morality. But I don’t claim you should always maximize the good, no matter the self-sacrifice, and no matter what means are involved. There are lots of other relevant moral considerations that should be weighed when taking action, including non-longtermist considerations like special obligations to those in the present (which generally favour interventions to increase Survival). But long-term consequences are important, too, and that’s what I focus on.[9], [10]

Second, this series doesn’t require accepting moral realism, which I’ll define as the view that there are objective facts about value, true independently of what anyone happens to think.[11]

Whether or not you think there are objective moral facts, you can still care about how the future goes, and worry that the future will not be in line with your own values, or the values you’d have upon careful reflection. I’m aware that this series often uses realist-flavoured language, which is simpler and reflects how I personally tend to think about ethics. But we can usually just translate between realism and antirealism: where the realist speaks of the “correct” moral view, the antirealist could think about “the preferences I’d have given some ideal reflective process”.[12]

Third, this series isn’t in opposition to work on preventing downsides, like “s-risks” — risks of astronomical amounts of suffering, which also affect “Flourishing” rather than “Survival”. We should take such risks seriously: depending on your values and your takes on tractability, they might be the top priority, and their importance comes up repeatedly in the next two essays. The focus of this series, though, is generally on making good futures even better, rather than avoiding net-negative futures.[13]

Fourth, the better futures perspective doesn’t mean endorsing some narrow conception of an ideal future, as past utopian visions have often done. Given how much moral progress we should hope to make in the future, and how much we’ll learn about what’s even empirically possible, we should act on the assumption that we have almost no idea what the best feasible futures would look like. Committing today to some particular vision would be a great mistake.

A central concept in my thinking about better futures is that of viatopia, which is a state of the world where society can guide itself towards near-best outcomes, whatever they may be.[14]

We can describe viatopia even if we have little conception of what the desired end state is. Plausibly, viatopia is a state of society where existential risk is very low, where many different moral points of view can flourish, where many possible futures are still open to us, and where major decisions are made via thoughtful, reflective processes. From my point of view, the key priority in the world today is to get us closer to viatopia, not to some particular narrow end-state. I don’t discuss this concept further in this series, but I hope to write more about it in the future.

With that, let’s jump in.

[1]

Surviving represents the probability of avoiding a near-total loss of value this century (an “existential catastrophe”), while Flourishing represents the expected value of the future conditional on our survival.

[2]

Grace, Stewart, Sandkühler, Thomas, Weinstein-Raun, and Brauner, ‘Thousands of AI Authors on the Future of AI’; Besiroglu, ‘Ragnarök Series—results so far’; Karger, Rosenberg, Jacobs, Hadshar, Gamin, Smith, McCaslin, Thomas, and Tetlock, ‘Forecasting Existential Risks’.

[3]

The same is true if we think about absolute changes, too. Suppose we could increase by one percentage point either the chance of survival or the value of the future given survival. Given the numbers we’re using, increasing the value of the future given survival would be 8 times more valuable.
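The arithmetic, assuming the same simple model (expected value $V = S F$, with $S = 0.8$ and $F = 0.1$): since $V$ is linear in each factor, a one-percentage-point increase gains exactly

$$\Delta V_S = 0.01 \times F = 0.001, \qquad \Delta V_F = 0.01 \times S = 0.008, \qquad \frac{\Delta V_F}{\Delta V_S} = \frac{S}{F} = 8.$$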

[4]

And it suggests that the expected relative scale of Flourishing might be larger than your median estimate, if you put some meaningful probability on the more extreme ratios.

[5]

This point is from Trammell, ‘Which World Gets Saved’. It is discussed in more depth in ‘The Basic Case for Better Futures’.

[6]

Of course, many near-term-focused altruistic efforts will likely have some positive knock-on effects for the long-term future. But there are likely to be some ways of improving the future that seem important from a long-term perspective and are neglected by society at large.

[7]

Both essays are co-authored with Fin Moorhouse.

[8]

First, I don’t discuss how high our chance of Survival is. For a small sample of the extant discussion, see Ord, ‘The Precipice: Existential Risk and the Future of Humanity’; Carlsmith, ‘Is Power-Seeking AI an Existential Risk?’; and footnote 2 above.

Second, I don’t discuss whether getting to a great future intrinsically requires following some good (e.g. just or legitimate) process, as well as achieving some good long-run outcome.

Third and finally, I am aware that most of this series leans strongly toward the abstract and philosophical. You might reasonably worry about being led astray by this kind of argumentation; I do too. Most of this series tries to follow the abstract arguments where they lead. But I’m not arguing, all things considered, that we should abandon common sense, especially if the abstract arguments make recommendations which seem common-sensically wrong or harmful.

[9]

You could have a radical non-consequentialist view which has no conception of the good, or on which making outcomes better is essentially morally irrelevant. If so, then this series might be of little interest to you. However, I suspect that any plausible radical non-consequentialist view will end up with some surrogate notion of “the good”, in order to make sense of claims like “a future with a trillion tortured people is worse than a future with a billion somewhat unhappy people,” and much of my discussion could be ported over, using that surrogate concept. I’ll also note that at the very least you shouldn’t be certain in the radical non-consequentialist position and, in my view, you should take moral uncertainty into account in your decision-making.

[10]

“Long-term” here need not mean “trillions of years”. Even if one restricts one’s attention to much shorter timescales, the better futures perspective is still relevant and important.

[11]

Moral philosophers sometimes drop the adjective “objective” from the definition of moral realism, in which case subjectivism counts as a form of “non-robust” moral realism.

[12]

Your sympathy to realism or antirealism might affect what views you come to on the questions of “easy eutopia” and “convergence” that are discussed in the next two essays. But antirealism does not make the discussion as a whole irrelevant.

[13]

Moreover, in draft work, I estimate that, under moral uncertainty, bads like suffering should get somewhat more weight than goods like happiness, but not vastly more; my personal estimate ends up around 4x–10x. Given this, given that I expect the creation of bads to be less common than forgone opportunities to produce goods, and given the difficulty of tractably reducing s-risks (which has led many s-risk-oriented folk to conclude that they are “clueless”; see Cook and Taylor, ‘Leadership change at the Center on Long-Term Risk’), I currently suspect that work to capture upside is generally higher-priority than work on s-risks, although I’m far from certain.

[14]

The idea of the “long reflection” or “great deliberation” is one proposal for what viatopia might look like, but there could be others.