Great Filter

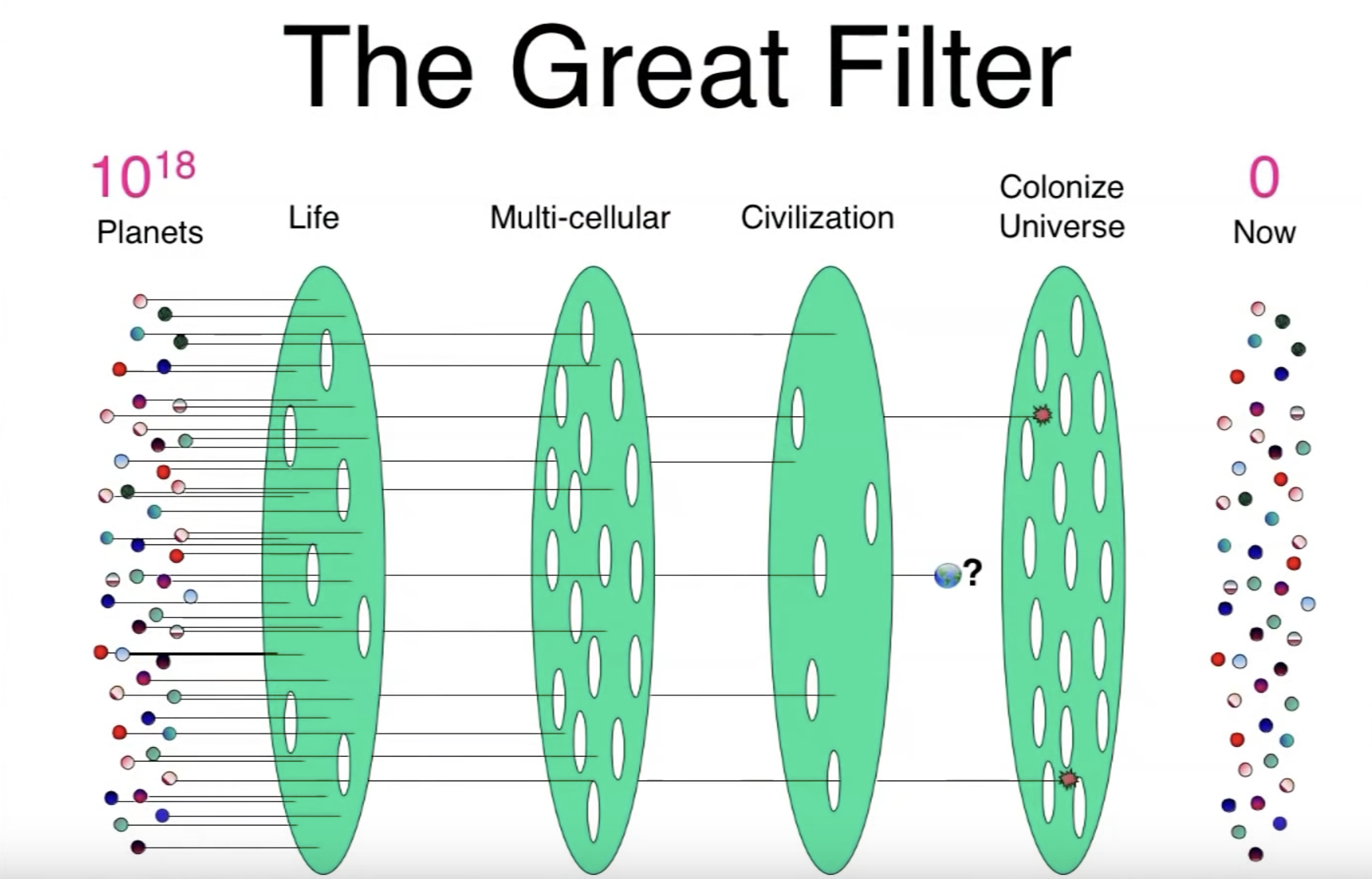

The Great Filter is the hypothesized mechanism that would explain why insentient matter in the universe does not frequently evolve into technologically advanced intelligence.

The hypothetical process from primitive matter to technological maturity may be regarded as a series of critical transitions. Our failure to observe extraterrestrial intelligence—sometimes called the "Great Silence"—implies the existence of a Great Filter somewhere along the series. Great Filter theorists, such as Robin Hanson, emphasize that the location of the Filter along that series has implications for human prospects of long-term survival. In particular, if the Great Filter is still in the future, our species will almost certainly fail to colonize much of the universe. In Hanson's words, "The easier it was for life to evolve to our stage, the bleaker our future chances probably are."[1]

Candidate Filters

Suggested candidate Great Filters include:[1]

- The right star system (including organics)

- Reproductive something (e.g. RNA)

- Simple (prokaryotic) single-cell life

- Complex (archaeatic & eukaryotic) single-cell life

- Sexual reproduction

- Multicellular life

- Tool-using animals with big brains

- Where we are now

- Colonization explosion

Note that the filters in the list may not be jointly exhaustive. Nor are they mutually exclusive—there may be multiple Great Filters.
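The trade-off in Hanson's remark that an easier past implies a bleaker future can be made concrete with a toy model. The sketch below assumes, purely for illustration, that the transitions are independent, that there are `n_sites` candidate starting points, and that the Great Silence means at most about one colonizing civilization is expected in total. The function name, the step probabilities, and `N_SITES` are hypothetical and do not come from the sources cited here.

```python
import math

def max_future_pass_probability(past_step_probs, n_sites):
    """Upper bound on the chance of passing the remaining (future) filter.

    Toy assumption: the expected number of colonizing civilizations,
    n_sites * p_past * p_future, is at most about 1 (the Great Silence).
    """
    p_past = math.prod(past_step_probs)  # chance of reaching our stage
    if p_past == 0:
        return 1.0  # no site reaches our stage, so the bound is vacuous
    return min(1.0, 1.0 / (n_sites * p_past))

N_SITES = 10**12  # hypothetical number of candidate star systems

# Hypothetical "hard" past: two steps that each succeed once in a million tries.
hard_past = max_future_pass_probability([1e-6, 1e-6], N_SITES)

# Hypothetical "easy" past: the same two steps succeed one time in ten.
easy_past = max_future_pass_probability([0.1, 0.1], N_SITES)

print(f"hard past: future pass probability <= {hard_past:.3g}")
print(f"easy past: future pass probability <= {easy_past:.3g}")
```

Under these assumptions, the easier the past steps are, the smaller the bound on the future step becomes, since the total filter is fixed by what we observe.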

Implications for cause prioritization

Discoveries bearing on the location of the Great Filter have several distinct implications for cause prioritization, especially in connection with existential risk reduction.

Prioritization of general efforts to reduce existential risk

Concluding that the Great Filter is in the future may suggest that efforts to reduce existential risk should be increased. As Hanson writes, "The larger the remaining filter we face, the more carefully humanity should try to avoid negative scenarios."[1] Alternatively, reducing existential risks posed by the Great Filter may be regarded as intractable, given that by assumption no civilization has so far managed to avoid succumbing to it. In Nick Bostrom's words, "If the Great Filter is ahead of us, we must relinquish all hope of ever colonizing the galaxy; and we must fear that our adventure will end soon, or at any rate that it will end prematurely."[2] What would be surprising is an update towards a future Great Filter (and a commensurate increase in the probability assigned to an existential catastrophe) that warranted neither prioritizing nor deprioritizing marginal investments in securing our species' long-term potential.

Prioritization of reduction of some types of existential risk over others

Suppose we assign some credence to the hypothesis that the Great Filter is ahead of us. This assignment should make us prioritize not only existential risk reduction, but also the reduction of some existential risks over others. This is because not all potential causes of an existential catastrophe are equally good Great Filter candidates.

First, the posited future Great Filter must be powerful enough to destroy not only humanity, but also every other civilization that reaches the relevant stage of development. As Bostrom writes, "random natural disasters such as asteroid hits and supervolcanic eruptions are poor Great Filter candidates, because even if they destroyed a significant number of civilizations, we would expect some civilizations to get lucky; and some of these civilizations could then go on to colonize the universe. Perhaps the existential risks that are most likely to constitute a Great Filter are those that arise from technological discovery."[2][3]
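Bostrom's point about luck can be illustrated with a little arithmetic. As a minimal sketch, assume each of `n_civilizations` independently survives a given hazard with probability `survival_prob`. Both numbers below are hypothetical, chosen only to show the shape of the argument.

```python
import math

def prob_at_least_one_survivor(n_civilizations, survival_prob):
    """P(at least one civilization survives) = 1 - (1 - s)^N, assuming independence."""
    if not 0.0 <= survival_prob <= 1.0:
        raise ValueError("survival_prob must be between 0 and 1")
    if n_civilizations <= 0:
        return 0.0  # nobody is around to survive
    if survival_prob == 1.0:
        return 1.0
    # log1p/expm1 keep precision when survival_prob is tiny.
    return -math.expm1(n_civilizations * math.log1p(-survival_prob))

N_CIVILIZATIONS = 1_000_000  # hypothetical count of civilizations at our stage

# A hazard that destroys 99.9% of civilizations still lets someone through.
natural_disaster = prob_at_least_one_survivor(N_CIVILIZATIONS, 1e-3)

# A filter has to be far more reliable: here only one in a billion survives.
strong_filter = prob_at_least_one_survivor(N_CIVILIZATIONS, 1e-9)

print(f"random disaster: {natural_disaster:.6f}")
print(f"strong filter:   {strong_filter:.6f}")
```

In this toy model a hazard only works as a Great Filter if the survival probability is well below one over the number of civilizations, which is why near-universal failure modes are better candidates than chance events.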

Second, the posited future Great Filter must be compatible with observational evidence. A catastrophic event expected to leave traces of itself visible from space is unlikely to be a future Great Filter, since we do not appear to be observing any such traces. Thus, it seems that an intelligence explosion is probably not the Great Filter, since the universe (excluding Earth) appears to be devoid not only of intelligent life, but also of the signs of a prior explosion that could have killed such life.[4]

Third, the posited future Great Filter has to be compatible with the existence of human observers. The Great Filter has to be powerful enough to prevent any intelligent species like us from turning into a spacefaring civilization. But it cannot be so powerful as to prevent our own existence, since we are here. As Katja Grace notes, "any disaster that would destroy everyone in the observable universe at once, or destroy space itself, is out."[5]

Prioritization of some risk-reducing strategies over others

There is a second respect in which the Great Filter should cause us to be especially concerned with certain existential catastrophes. Hanson writes that "without such findings we must consider the possibility that we have yet to pass through a substantial part of the Great Filter. If so, then our prospects are bleak, but knowing this fact may at least help us improve our chances."[6] Mere awareness of the fact that the Great Filter is ahead, however, cannot substantially increase our chances of surviving it, since a sizeable fraction of civilizations like ours likely also developed this awareness yet failed to survive. In general, the more common one expects a risk-reducing strategy to be across civilizations, the more pessimistic one should be about its chances of succeeding. So priority should be given to strategies expected to be very uncommon. As Carl Shulman writes, "the mere fact that we adopt any purported Filter-avoiding strategy S is strong evidence that S won’t work... To expect S to work we would have to be very confident that we were highly unusual in adopting S (or any strategy as good as S), in addition to thinking S very good on the merits. This burden might be met if it was only through some bizarre fluke that S became possible, and a strategy might improve our chances even though we would remain almost certain to fail, but common features, such as awareness of the Great Filter, would not suffice to avoid future filters."[7]
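Shulman's argument can be written as a rough inequality. As a sketch under assumed notation (none of these symbols appear in the sources): let $N$ be the number of past civilizations that reached our stage, $c$ the fraction of them that adopted strategy $S$, and $q$ the probability that $S$ lets an adopter pass the Filter, with civilizations treated as independent and $cq$ small.

```latex
\Pr(\text{no civilization passed via } S) = (1 - cq)^{N} \approx e^{-Ncq}
\quad\Longrightarrow\quad
q \lesssim \frac{1}{Nc} \ \text{ for the Great Silence to be unsurprising.}
```

For a hypothetical $N = 10^{6}$, a strategy adopted by half of all civilizations ($c = 0.5$) would need $q \lesssim 2 \times 10^{-6}$, whereas one adopted by only one civilization in a million ($c = 10^{-6}$) is left essentially unconstrained by the silence.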

Prioritization of research that could help us better locate the Great Filter

A final implication of the Great Filter for cause prioritization concerns the choice among different types of research. In particular, this consideration seems to favor research with the potential to better locate the Great Filter, given the high information value of such research. Two obvious types are (1) research that helps us define the space of candidate Great Filters and (2) research that helps us estimate the probability that any of those candidates is in fact the Great Filter. More broadly, this consideration seems to raise the value of disciplines like astrobiology and projects like SETI.[1]

...