Charlie_Guthmann

Bio

pre-doc at Data Innovation & AI Lab

previously worked in options HFT and tried building a social media startup

founder of Northwestern EA club

Posts 14

Comments 306

- There’s reasonably little written about why longtermists should change their prioritisation in this direction. The notable exception is this paper by Will MacAskill.

Well let me stop you right there.

https://forum.effectivealtruism.org/s/8ooZtgeWbsAxxP9bd

https://forum.effectivealtruism.org/s/wmqLbtMMraAv5Gyqn

https://forum.effectivealtruism.org/posts/CxMusuX8E5hiTXEWX/fruit-picking-as-an-existential-risk

https://forum.effectivealtruism.org/posts/zuQeTaqrjveSiSMYo/a-proposed-hierarchy-of-longtermist-concepts

https://forum.effectivealtruism.org/posts/wqmY98m3yNs6TiKeL/parfit-singer-aliens

https://forum.effectivealtruism.org/posts/zLi3MbMCTtCv9ttyz/formalizing-extinction-risk-reduction-vs-longtermism

https://forum.effectivealtruism.org/posts/WebLP36BYDbMAKoa5/the-future-might-not-be-so-great

https://forum.effectivealtruism.org/posts/WebLP36BYDbMAKoa5/the-future-might-not-be-so-great?commentId=cJdqyAAzwrL74x2mG

https://forum.effectivealtruism.org/posts/GsjmufaebreiaivF7/what-is-the-likelihood-that-civilizational-collapse-would

This isn't a comprehensive list, just some stuff off the top of my head.

Personally, I've spent a long time thinking about this and, as far as I can tell, it's incredibly uncertain whether (e)x(tinction)-risk reduction is positive EV (x-risk reduction is almost tautologically positive, to the point of uselessness). In general I think the community got weirdly one-shotted by astronomical-waste-like arguments, which are interesting thought experiments but don't really come close to solving cluelessness.

https://forum.effectivealtruism.org/posts/48mypEepqBqWibKtJ/?commentId=WQfiqqSpsjYBGqtKC

I wrote a related parable in response to a similar-ish post a while ago if people like fiction.

I was just watching the Dwarkesh/David Reich podcast, fascinating stuff. Looking back at how I was taught taxonomy and anthropological history, I find it frustrating. Note that I don't know much about (evolutionary) biology, genetics, or the frontier of genetic-history research, so this is my layman's attempt to explain why the way this was explained to me, by people who probably didn't understand it either, has generally puzzled me. I'm not trying to propose that I understand something David Reich doesn't.

My main gripe is that we are taught evolutionary history mostly through the lens of evolutionary trees. But evolutionary history probably looks like a graph/stochastic process/Markov chain, and it is well modeled by a tree only at very specific underlying parameters or levels of abstraction. We use trees because they are in some ways the most sensible simple abstraction if you are asking "how did we get here?". But they're not a great way to think about "what happened/was happening". I had ChatGPT try to illustrate the difference below (don't read too much into the details, it did some hallucinating; just take the general vibe).

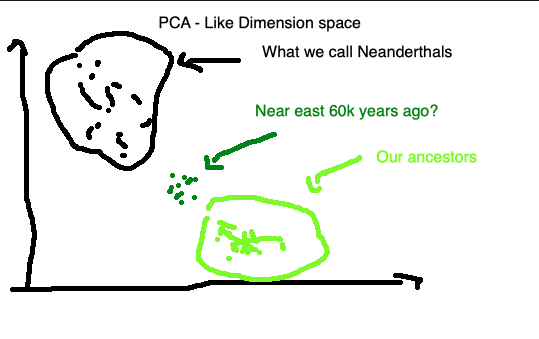

To me, plausible parameters here would mean that mixture isn't especially likely over short time spans (because of distance, etc.) but is quite likely, almost certain, over hundreds or thousands of years. So what did the Near East look like genetically 60k years ago? It could easily look like below.

It seems totally possible that for long periods of hominid history genetics were well modeled by pretty smooth stochastic graphs, with correspondingly smooth genetics across geography (obviously with tons of exceptions, and less true when you zoom in, e.g. the Bell Beaker/Corded Ware cultures), and yet when you look at our specific lineage it doesn't quite look like that (due to extinction, gene selection, or some other reason). I don't have a clear enough vision to say much more, but I think there are some interesting implications about what we mean when we say that this group or that group went extinct.
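To make the "little mixing over short spans, near-certain mixing over long ones" intuition concrete, here is a toy sketch. It is not from the podcast or from any genetics paper: it assumes a line of demes where each generation a fraction `m` of every deme's allele frequency is exchanged with its immediate neighbors (a deterministic, expected-value simplification of a stepping-stone-style model). The deme count, `m`, and the generation counts are all made-up parameters.

```python
def step(freqs, m):
    """One generation: each deme keeps (1 - m) of its own allele frequency
    and takes m/2 from each neighbor. Edge demes reflect onto themselves."""
    n = len(freqs)
    new = []
    for i in range(n):
        left = freqs[i - 1] if i > 0 else freqs[i]
        right = freqs[i + 1] if i < n - 1 else freqs[i]
        new.append((1 - m) * freqs[i] + (m / 2) * (left + right))
    return new


def simulate(freqs, m, generations):
    """Apply `step` repeatedly and return the final frequencies."""
    for _ in range(generations):
        freqs = step(freqs, m)
    return freqs


# Hypothetical setup: two initially distinct groups along a line of 10 demes.
start = [1.0] * 5 + [0.0] * 5
short_run = simulate(start, m=0.01, generations=10)
long_run = simulate(start, m=0.01, generations=5000)

print("after 10 generations:  ", [round(f, 3) for f in short_run])
print("after 5000 generations:", [round(f, 3) for f in long_run])
```

Under these assumed numbers the short run still looks like two nearly separate groups, while the long run is a shallow, smooth gradient across geography. A tree drawn from the starting state would miss that second picture entirely.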

It's definitely interesting to think about the elegant edge case of a string less than ~50 words that can hack your brain. Probably have a similar intuition to you that it's unlikely.

https://pmc.ncbi.nlm.nih.gov/articles/PMC11846175/ Just using this study as a baseline: 22-year-olds were averaging ~4.5 hours on screens per day. Let's just guess half of that (~135 minutes) is on social media/entertainment stuff. Assuming 170 WPM on the videos (the real conversion is probably higher because videos are more info-dense, but w/e), 170 WPM * 135 minutes = ~23,000 words per day.

In effect a social media company has ~2.5 orders of magnitude more string length to hack your brain per day and ~3.5 per week. I can already tell you there are multiple normal (not super normal, but not schizo or drug addicts) 20-30 year old males in my life who have been brain-hacked by the internet and gone full hikikomori. To be fair, it's probably a lot easier to hack people into shutting down or becoming vegetative than into being your slave, but depending on who you are and what your plan is, just making 30% of the population vegetative might be quite useful.
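For anyone who wants to check the back-of-envelope numbers, here is the same arithmetic as a short script. The inputs are the ones used above (4.5 hours, a guessed 50% share, 170 WPM, a ~50-word string); the variable names are just mine.

```python
import math

screen_hours_per_day = 4.5   # figure quoted above from the linked study
share_on_feeds = 0.5         # the guess above, not a measured value
words_per_minute = 170       # assumed conversion rate for video content
short_string_words = 50      # the "~50 words" edge case

minutes_per_day = screen_hours_per_day * share_on_feeds * 60
words_per_day = minutes_per_day * words_per_minute
words_per_week = words_per_day * 7

# Orders of magnitude = log10 of the ratio to the 50-word string.
oom_day = math.log10(words_per_day / short_string_words)
oom_week = math.log10(words_per_week / short_string_words)

print(f"{minutes_per_day:.0f} min/day -> {words_per_day:,.0f} words/day")
print(f"orders of magnitude vs. 50 words: day {oom_day:.2f}, week {oom_week:.2f}")
```

With these inputs the daily ratio comes out a bit under 3 orders of magnitude and the weekly ratio at about 3.5, so the rough figures hold up.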

How does social media work, approximately (as far as I can tell)?

- Uses input data (eyes, likes, time to scroll, etc.) to embed you into entertainment space. Has some dynamic vector that describes what would entertain you the most.

- Then finds the nearest neighboring content.
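The two steps above can be sketched in a few lines. This is a toy version of the idea, not how any real platform works: the 3-dimensional "entertainment space", the catalog items, the update rule, and the `rate` parameter are all hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def update_user_vector(user, item, engagement, rate=0.2):
    """Nudge the user vector toward an item in proportion to engagement.
    `engagement` stands in for signals like likes or watch time."""
    return [u + rate * engagement * (i - u) for u, i in zip(user, item)]


def recommend(user, catalog):
    """Return the name of the catalog item nearest to the user vector."""
    return max(catalog, key=lambda name: cosine_similarity(user, catalog[name]))


# Hypothetical axes: (sports, cooking, politics)
catalog = {
    "highlight_reel": [0.9, 0.1, 0.0],
    "recipe_short": [0.0, 1.0, 0.1],
    "debate_clip": [0.1, 0.0, 0.9],
}

user = [0.5, 0.4, 0.1]
first = recommend(user, catalog)

# The user lingers on a cooking video, so the vector drifts toward it.
user = update_user_vector(user, catalog["recipe_short"], engagement=1.0)
second = recommend(user, catalog)

print(first, "->", second)
```

In this framing, a "hollow" space is one where even the best match in `catalog` sits far from `user`, which is where generated content could plausibly close the gap.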

Would LLMs improve this?

- can make the entertainment space less hollow, i.e. the distance between your entertainment vector and the nearest neighbor smaller. (seems plausible)

- can improve the algorithms that embed you/the content into the space (seems unlikely to see a significant boost here; it doesn't seem to be that hard of a problem, and we have already been working on it for a decade).

- can widen the dimensionality of the entertainment space (AI girlfriends).

Hmm, well, isn't this basically the core project of EA aha? Anyway, thanks for sharing. I chatted my two cents into ChatGPT 5.5 and had it format/write it out for me.

The Foundation should target market failures: public goods, externalities, information failures, coordination failures, etc. That means being suspicious of “important but already incentivized” domains. I start with this because of an example they have already expressed interest in - Alzheimer’s is important, but it is not obviously where a new mega-foundation has the highest marginal leverage. There are already enormous commercial incentives to develop effective treatments: rich patients, aging societies, and pharmaceutical companies all badly want a breakthrough. The philanthropic opportunity is not “Alzheimer’s” in general, but specific neglected bottlenecks inside Alzheimer’s: open datasets, repurposed generics, trial infrastructure, non-patentable interventions, prizeable biomarkers, fixing and standardizing medical ontologies, or other areas where private returns diverge from social returns.

The bigger bottleneck is that we do not have a trustworthy system for converting hundreds of billions into impact. Conventional grantmaking is too opaque, too relationship-driven, too vulnerable to value lock-in, and too dependent on a small number of people’s cached worldviews. If you give that system $180 billion and scale quickly, you mostly get larger versions of the same failure modes.

So my first rule would be: do not spend the endowment quickly (yes I also have short timelines so need to balance that but won't get into that rn). For the first two or three years, spend a small fraction building the allocation machine: the mechanisms, audits, forecasting systems, and public models that make later spending less dumb. The Foundation should have three arms.

First, a pull-funding arm. This should be the biggest. Wherever outcomes can be specified reasonably well, the Foundation should stop trying to guess the best grantees ex ante and instead pay for results. This is the logic behind results-based financing, advance market commitments, prizes, and market-shaping work. If you want pandemic preparedness, reward verified improvements in surveillance, cheap diagnostics, vaccine platform readiness, PPE resilience, or rapid clinical trial capacity. If you want AI governance capacity, reward usable evals, security benchmarks, model-control tools, compute-accounting systems, or policy infrastructure that actually gets adopted. If you want global health impact, pay for credible QALYs or DALYs averted, while being explicit about the moral weights and assumptions underneath. This is not a magic bullet. Pull funding Goodharts whatever it measures and favors legible outcomes. But it has one huge virtue: it forces the Foundation to say what it actually wants. If the Foundation pays for QALYs, animal welfare improvements, verified safety evals, or reductions in catastrophic risk, then people can argue about those metrics directly instead of reverse-engineering the worldview of a grants committee. For every major pull-funding program, I would reserve 5–10% of the budget for adversarial audits: rewards for showing how the metric can be gamed, why the measured outcome is not the real outcome, or why the program is selecting for fake impact.

Second, a push-funding arm. Some things cannot be bought through clean outcome contracts. You sometimes need to fund inputs: weird researchers, early science, institution-building, field creation, adversarial work, and long-horizon bets where the output is not immediately measurable. But push funding should be treated as the dangerous, high-discretion part of the portfolio, not the default. Every major push grant should come with a public theory of change, a forecast distribution over key outcomes, conflict-of-interest disclosures, and a plan for retrospective evaluation. Here I would borrow from Squiggle, Guesstimate, Metaculus, QURI, the longtermist wiki/crux project and the broader EA modeling tradition. The goal is not to pretend these models are precise. The goal is to make uncertainty explicit enough that people can find the weak points. If a grant depends on “this reduces p(doom) by 0.01%,” say that. If it depends on shrimp having nontrivial moral weight, say that. If it depends on institutional lock-in being more important than technical alignment, say that. Then pay smart critics to attack the model.
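As a rough illustration of what "a forecast distribution over key outcomes" could look like for a push grant, here is a minimal Monte Carlo sketch. It uses plain Python rather than Squiggle or Guesstimate, and every distribution and parameter in it is a hypothetical placeholder, not an estimate for any real grant.

```python
import random
import statistics


def simulate_grant(n_samples=10_000, seed=0):
    """Sample the grant's value as P(success) times effect-if-success.
    Both input distributions are hypothetical placeholders."""
    rng = random.Random(seed)
    samples = []
    for _ in range(n_samples):
        # Hypothetical: roughly a 20% average chance the project works.
        p_success = rng.betavariate(2, 8)
        # Hypothetical: heavy-tailed effect size, in arbitrary units.
        effect_if_success = rng.lognormvariate(0, 1)
        samples.append(p_success * effect_if_success)
    return samples


samples = simulate_grant()
deciles = statistics.quantiles(samples, n=10)  # 9 cut points: p10 .. p90

summary = {
    "p10": deciles[0],
    "median": statistics.median(samples),
    "p90": deciles[8],
    "mean": statistics.fmean(samples),
}

for name, value in summary.items():
    print(f"{name}: {value:.3f}")
```

Publishing something like `summary` together with the input distributions is the point: a critic can attack `betavariate(2, 8)` directly instead of guessing what the committee believed.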

Third, an infrastructure-and-audit arm. This is the least glamorous and probably the highest-leverage part. The Foundation should build a grantmaking stack that includes financial audits, evidence synthesis, reference-class forecasting, red-team review, prediction markets, grant outcome tracking, and public postmortems. It should maintain a live map of cause areas, interventions, assumptions, evidence quality, and open cruxes.

The OpenAI conflict requires special rules. The Foundation should not fund evals, governance work, safety audits, or policy organizations that may affect OpenAI through ordinary discretionary grantmaking. Those grants should go through an independently governed firewall: external reviewers, public recusals, guaranteed publication rights, and a presumption that negative findings can be published. Otherwise, even good grants will look like reputation laundering or soft capture.

In the first six months, I would make only continuation grants and small exploratory grants. The main work would be hiring mechanism designers, economists, forecasters, auditors, AI safety people, domain experts, and institutional skeptics.

In year one, I would launch pilot programs: maybe $100–300 million across pull-funding experiments, $50 million for model-based push grants, and $300–500 million for audit systems. The goal would not be to maximize immediate impact. The goal would be calibration: which mechanisms produce real information, which get gamed, which attract talent, and which reveal hidden bottlenecks?

In years two and three, I would scale only mechanisms that survive adversarial review. The Foundation could then begin spending billions annually, but only through channels that have been stress-tested. I would heavily cap opaque discretionary grantmaking (with some sort of push-through mechanism requiring a supermajority of disagreeable people) and require retrospective public evaluation for large grants.

The Foundation’s comparative advantage is not just money. It has the capability to be the most tech-savvy/automated/inference-dense grantmaker ever. If it just becomes a giant grantmaker with more zeros, it will lock in the worldview and social network of whoever happens to be close to the money. If it builds transparent pull funding, disciplined push funding, and serious audit infrastructure, it can make many other actors smarter too.

Glad you’re fleshing this out and pushing the community to take variety/diversity more seriously as part of population axiology. I’ve had similar thoughts in this direction, and I think the core intuition is very compelling.

Caveat that I’ve only skimmed maybe a quarter of the full post so far, and I can already see that it goes well beyond the simple claim that “variety matters”: it adds a lot of context, formal structure, and specific assumptions/conditions. So I’m not trying to say this isn’t a much needed contribution.

My reaction is more about framing. I worry that the “new theory” framing + all the new words may make the central intuition feel more novel or exotic than it is. Many people, including/especially many who would not identify as utilitarians or EAs, already have the intuition that the value of a world depends not only on total welfare, but also on the diversity, richness, or non-redundancy of the lives/experiences it contains — roughly, that additional near-duplicate lives have diminishing marginal value.

So I’d find it helpful to separate, as clearly as possible, the widely shared motivating intuition from the more specific Saturationist implementation. Otherwise I worry the jargon makes the view feel more alien or proprietary than it needs to be, when the underlying motivation may actually be quite intuitive to many people.

https://forum.effectivealtruism.org/posts/W4JksntnuFABZCkCw/funding-strategies-for-global-public-goods

^ I would read this to get some perspective on how to think about funding mechanisms and converting all the way to morally actionable outputs.

https://forum.effectivealtruism.org/posts/mopsmd3JELJRyTTty/ozzie-gooen-s-shortform?commentId=GHT2r3ubscoXPwfb3

https://www.longtermwiki.com/wiki/E411

https://ea-crux-project.vercel.app/ai-transition-model-views/graph

^ check out what Ozzie is doing here (EA quick take + longtermism wiki + EA crux project).

https://forum.effectivealtruism.org/posts/zuQeTaqrjveSiSMYo/a-proposed-hierarchy-of-longtermist-concepts

^ good way to try to operationalize real-world quantities into morally relevant longtermism.

https://forum.effectivealtruism.org/s/y5n47MfgrKvTLE3pw

^ moral weights project to give baseline for moral circle sliders.

https://forum.effectivealtruism.org/posts/3hH9NRqzGam65mgPG/five-steps-for-quantifying-speculative-interventions

^ broad post on trying to do this for more speculative stuff.

but yea this is hard and goes exponential quickly

My take is we should start by really drilling down on a db of charity financials and charity outputs (or intervention outputs, with charities composed of interventions), then build pipes for converting outputs into outcomes, with fungible philosophy pipes that take outcomes and pipe them into rankings.

ranking charities requires solving or assuming:

- Metaethics 📚

- Population ethics 👥

- Philosophy of mind 🧠

- Decision theory 🎲

- Astrobiology 👽

- The nature of consciousness 💭

- Whether distance matters (in space 🌌 AND time ⏳)

- on top of all the boilerplate auditing.
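A minimal sketch of the pipeline described above (financials and outputs, then outputs to outcomes, then a swappable philosophy step that produces rankings). Everything here is hypothetical: the charity names, the budgets, the conversion rates, and the moral weights are invented purely to show the plumbing.

```python
from dataclasses import dataclass


@dataclass
class Charity:
    name: str
    budget_usd: float
    outputs: dict  # output name -> units delivered


# Hypothetical conversion pipes: output -> (outcome, outcome units per output unit)
OUTPUT_TO_OUTCOME = {
    "bednets_distributed": ("human_dalys_averted", 0.02),
    "hens_moved_cage_free": ("hen_years_improved", 1.5),
}


def outcomes(charity):
    """Convert a charity's raw outputs into outcome totals."""
    totals = {}
    for output, units in charity.outputs.items():
        outcome, rate = OUTPUT_TO_OUTCOME[output]
        totals[outcome] = totals.get(outcome, 0.0) + units * rate
    return totals


def rank(charities, moral_weights):
    """The 'philosophy pipe': weight outcomes, divide by cost, sort."""
    def value_per_dollar(charity):
        total = sum(
            moral_weights.get(outcome, 0.0) * amount
            for outcome, amount in outcomes(charity).items()
        )
        return total / charity.budget_usd

    ordered = sorted(charities, key=value_per_dollar, reverse=True)
    return [c.name for c in ordered]


# Fictional charities with made-up numbers.
charities = [
    Charity("NetsOrg", 1_000_000, {"bednets_distributed": 200_000}),
    Charity("HenOrg", 1_000_000, {"hens_moved_cage_free": 500_000}),
]

# Two swappable worldviews (hypothetical moral weights).
humans_only = {"human_dalys_averted": 1.0, "hen_years_improved": 0.0}
hens_count = {"human_dalys_averted": 1.0, "hen_years_improved": 0.01}

ranking_a = rank(charities, humans_only)
ranking_b = rank(charities, hens_count)
print(ranking_a, ranking_b)
```

The data and conversion pipes stay fixed while the ranking flips with the moral weights, which is the sense in which the philosophy step is fungible. Each item in the list above would be another slider of this kind.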

As a (not super confident) non-believer in real-money prediction markets, curious if you have a best steelman link/post you like or if you want to tell me why you disagree with me (basically the exact 3 reasons you listed plus some more context)?

Seems to me we could get a huge chunk of the benefits with play-money markets + the existing binary event contracts the CME already allowed.

Well, I guess first I'd just say I only ever thought much about this stuff in the context of Chicago, and even then just in my little slice of the world. I'm sure different places have different textures.

Your second paragraph doesn't make that much sense to me. Do you think if Meetup was free there would be way more events in your town? Seems unlikely to me. Don't get me wrong, network effects are totally in play in these markets and can create natural monopolies which reduce supply (and then market quantity) from the social optimum, but I think that if it was $0 instead of $175 you might have like 10-30% more groups. But it seems to me supply could reasonably 5x if we had a healthy society.

"I also think you're overemphasizing the need for group culture and leaders to be designed well, since I think this stuff just naturally arises in environments where typical people with shared interests come together." Hmm yea my mind could be changed pretty easily, I'd just like to see the studies (or maybe there already are similar things done in psyche or soc, i haven't looked much).

It's pretty easy to argue with the claim that solving cancer or aging is good.

"Science advances one funeral at a time." replace science with culture if you want.

I think the truth is the only way you can ensure technology is good is if you live in a non-chaotic world, like a total dictatorship. We live in a chaotic world. Without a well-defined, very high % enforceable social contract, you are simply guessing what might happen.