Charlie_Guthmann

Bio

pre-doc at Data Innovation & AI Lab

previously worked in options HFT and tried building a social media startup

founder of Northwestern EA club

Posts 14

Comments 312

I'm in the "killing/eating animals is (mostly) fine but torturing them/giving them intensely negative lives is not" camp. To me, giving farm animals reasonable lives would basically be total victory (within this small slice of total animal suffering).

I think "ending meat eating" without PBM is nearly impossible in the medium term (10-30 years), and maybe impossible even with PBM. But forcing the government to regulate farms (adding as much utility as possible, with an allowed +30-50% price increase, effectively a tax on suffering) seems totally possible to me. Maybe not quite yet, because we are too poor, but at some point pretty soon (potentially when the price elasticity of demand for meat starts approaching 0). I think the parsimonious answer is that people do in fact have compassion for farm animals, just not as much as for dogs, and they gain more from farm animals' suffering. I'd expect that if we double US GDP in the next ~15 years, even without a lot of other cultural changes (ignoring for a second that AI will rule the world), it will be much more politically palatable to ban (or tax) animal torture, at least for all mammals.

I'm sure ACE and others have thought much more about this and have actual psychological evidence; for my part, I guess I just feel a little optimistic that humanity can fix this soonish.
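A minimal sketch of what "elasticity approaching 0" means in practice, assuming a constant-elasticity demand curve Q = A·P^ε (an assumption made here for illustration; the comment does not specify a demand model). Under that assumption, the fraction of consumption kept after a price increase Δ is (1 + Δ)^ε, so the +30-50% range above can be checked against a few hypothetical elasticities. As ε goes to 0, the regulation-driven price rise leaves consumption essentially unchanged.

```python
def consumption_ratio(price_increase: float, elasticity: float) -> float:
    """Ratio of new to old quantity demanded under constant-elasticity demand.

    Assumes Q = A * P**elasticity, so Q_new / Q_old = (1 + price_increase) ** elasticity.
    The scale constant A cancels out, so it never needs to be specified.
    """
    if price_increase <= -1:
        raise ValueError("price cannot fall to zero or below")
    return (1.0 + price_increase) ** elasticity


if __name__ == "__main__":
    # Hypothetical elasticities, from fairly elastic to perfectly inelastic.
    for elasticity in (-1.0, -0.5, -0.2, 0.0):
        for increase in (0.30, 0.50):  # the +30-50% range from the comment
            drop = 1.0 - consumption_ratio(increase, elasticity)
            print(f"elasticity={elasticity:+.1f}, price +{increase:.0%}: "
                  f"consumption falls {drop:.1%}")
```

The elasticity values in the loop are placeholders, not empirical estimates for meat.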

Broadly in agreement, but I don't think the examples are actually the pure version of what he is saying. It sounds like he is classifying goods roughly by the ratio of private benefit to social effect, so yes, things with a very high private benefit and plausibly low externalities are definitely good, but I feel less confident than he does that we could say that about refrigerators, for instance.

OpenAI is claiming a legitimate breakthrough on a "prominent open question". I don't know exactly how to phrase this, but I'm well past the point of being interested in arguing about whether we are on the precipice of some insane stuff. Yes, there are still reasonable disputes about exact timelines, foom, economic impacts, diffusion curves, etc. I'm not saying the world is gonna end in 2029. But if you are legitimately arguing anything approximating "stochastic parrots", or that this is just a hype train, I'm going to immediately assume you have motivated reasoning or need to think harder.

It's pretty easy to argue with the claim that solving cancer or aging is good.

"Science advances one funeral at a time." Replace "science" with "culture" if you want.

I think the truth is that the only way you can ensure technology is good is if you live in a non-chaotic world, like a total dictatorship. We live in a chaotic world. Without a well-defined social contract that is enforceable a very high percentage of the time, you are simply guessing what might happen.

- There’s reasonably little written about why longtermists should change their prioritisation in this direction. The notable exception is this paper by Will MacAskill.

Well let me stop you right there.

https://forum.effectivealtruism.org/s/8ooZtgeWbsAxxP9bd

https://forum.effectivealtruism.org/s/wmqLbtMMraAv5Gyqn

https://forum.effectivealtruism.org/posts/CxMusuX8E5hiTXEWX/fruit-picking-as-an-existential-risk

https://forum.effectivealtruism.org/posts/zuQeTaqrjveSiSMYo/a-proposed-hierarchy-of-longtermist-concepts

https://forum.effectivealtruism.org/posts/wqmY98m3yNs6TiKeL/parfit-singer-aliens

https://forum.effectivealtruism.org/posts/zLi3MbMCTtCv9ttyz/formalizing-extinction-risk-reduction-vs-longtermism

https://forum.effectivealtruism.org/posts/WebLP36BYDbMAKoa5/the-future-might-not-be-so-great

https://forum.effectivealtruism.org/posts/WebLP36BYDbMAKoa5/the-future-might-not-be-so-great?commentId=cJdqyAAzwrL74x2mG

https://forum.effectivealtruism.org/posts/GsjmufaebreiaivF7/what-is-the-likelihood-that-civilizational-collapse-would

This isn't a comprehensive list, just some stuff off the top of my head.

Personally, I've spent a long time thinking about this, and as far as I can tell it's incredibly uncertain whether (e)x(tinction)-risk reduction is positive EV (x-risk reduction is almost tautologically positive, to the point of uselessness). In general, I think the community got weirdly one-shotted by astronomical-waste-style arguments, which are interesting thought experiments but don't come close to solving cluelessness.

https://forum.effectivealtruism.org/posts/48mypEepqBqWibKtJ/?commentId=WQfiqqSpsjYBGqtKC

I wrote a related parable in response to a similar-ish post a while ago if people like fiction.

I was just watching the Dwarkesh/David Reich podcast; fascinating stuff. Looking back at how I was taught taxonomy and anthropological history, I find it frustrating. Note that I don't know much about (evolutionary) biology, genetics, or the frontier of genetic-history research, so this is my layman's attempt to explain why the way this has been explained to me (by other people who probably don't understand it either) has generally been puzzling. I'm not trying to suggest that I understand something David Reich doesn't.

My main gripe is that we are taught evolutionary history mostly through the lens of evolutionary trees. But evolutionary history probably looks more like a graph/stochastic process/Markov chain, and it is well modeled by a tree only at very specific underlying parameters or levels of abstraction. We use trees because, in some ways, they are the most sensible simple abstraction if you are asking "how did we get here?". But they are not a great way to think about "what happened/was happening". I had ChatGPT try to illustrate the difference below (don't look too closely at the details, since it did some hallucinating; it's just for the general vibe).

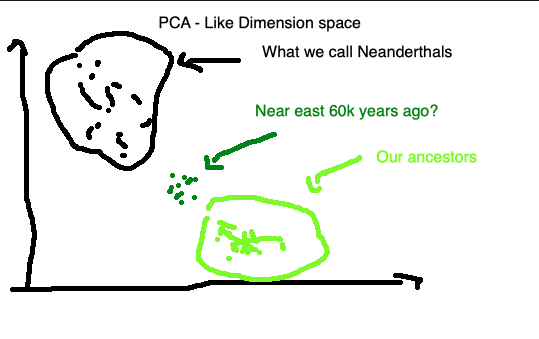
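To make the tree-versus-graph distinction concrete, here is a minimal sketch in which every population lists its parent populations with ancestry weights. In a tree, each population has exactly one parent; in a graph, a population can have several weighted parents (admixture), so its ancestry traced back to the root populations is a mixture. The population names and weights are hypothetical placeholders, not estimates from Reich's work or anyone else's.

```python
# Hypothetical admixture graph: each population maps to (parent, weight) pairs.
# Root populations have no parents; each population's weights sum to 1.
PARENTS: dict[str, list[tuple[str, float]]] = {
    "root_A": [],
    "root_B": [],
    "X": [("root_A", 1.0)],                   # tree-like: a single parent
    "Y": [("root_A", 0.6), ("root_B", 0.4)],  # admixed: two parents
    "Z": [("X", 0.5), ("Y", 0.5)],            # admixed again, one level down
}


def is_tree(parents: dict[str, list[tuple[str, float]]]) -> bool:
    """The genealogy is a tree only if no population has more than one parent."""
    return all(len(ps) <= 1 for ps in parents.values())


def root_ancestry(pop: str, parents: dict[str, list[tuple[str, float]]]) -> dict[str, float]:
    """Fraction of pop's ancestry that traces back to each root population."""
    if not parents[pop]:
        return {pop: 1.0}
    totals: dict[str, float] = {}
    for parent, weight in parents[pop]:
        # Recursively push each parent's ancestry down, scaled by its weight.
        for root, frac in root_ancestry(parent, parents).items():
            totals[root] = totals.get(root, 0.0) + weight * frac
    return totals


if __name__ == "__main__":
    print(is_tree(PARENTS))  # False, because Y and Z each have two parents
    print(root_ancestry("Z", PARENTS))
```

Restricting every entry to a single parent turns the same structure back into a tree, which is the special case the comment argues we over-rely on.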

To me, plausible parameters here would imply that mixture isn't extremely likely over short time spans (because of distance, etc.) but is quite likely, and almost certain, over hundreds or thousands of years. So what did the Near East look like genetically 60k years ago? It could easily have looked like the picture below.

It seems totally possible that for long periods of hominid history, genetics were well modeled by fairly smooth stochastic graphs, with correspondingly smooth genetic variation across geography (obviously with tons of exceptions, and less true when you zoom in, e.g. Bell Beaker/Corded Ware culture), and yet when you look at our specific lineage it doesn't quite look like that (due to extinction, selection, or some other reason). I don't have a clear enough vision to say much more, but I think there are some interesting implications for what we mean when we say this group or that group went extinct.
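A toy stepping-stone model (a standard population-genetics idealization, swapped in here for illustration; it is not something taken from the podcast) shows both halves of the argument: a small per-generation chance of mixing compounds to near-certainty over many generations, and exchange only between neighboring groups still produces a smooth gradient across geography. The migration rate, number of demes, and generation counts are made-up illustrative values, and the model is deterministic (no drift or selection).

```python
def prob_any_mixing(p_per_generation: float, generations: int) -> float:
    """Probability of at least one mixing event, assuming independent generations."""
    return 1.0 - (1.0 - p_per_generation) ** generations


def stepping_stone(n_demes: int, m: float, generations: int) -> list[float]:
    """Allele frequencies along a 1-D chain of demes after `generations` steps.

    Each generation, a deme receives a fraction m of its gene pool from each
    neighbor, keeping the rest: interior demes keep 1 - 2m, end demes keep 1 - m.
    The allele starts at frequency 1 in deme 0 and 0 everywhere else.
    """
    if not 0.0 <= m <= 0.5:
        raise ValueError("m must be in [0, 0.5] so frequencies stay valid")
    freqs = [1.0] + [0.0] * (n_demes - 1)
    for _ in range(generations):
        new = []
        for i, f in enumerate(freqs):
            neighbors = [freqs[j] for j in (i - 1, i + 1) if 0 <= j < n_demes]
            new.append((1.0 - m * len(neighbors)) * f + m * sum(neighbors))
        freqs = new
    return freqs


if __name__ == "__main__":
    # Hypothetical: 0.1% chance per generation that two neighboring groups mix.
    for gens in (10, 100, 1000, 10000):
        print(gens, round(prob_any_mixing(0.001, gens), 3))
    # A smooth cline emerges from purely local exchange.
    print([round(f, 3) for f in stepping_stone(n_demes=10, m=0.05, generations=200)])
```

Setting m to 0 freezes the chain (an isolated, tree-like picture), while any positive m eventually spreads the allele across every deme.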

Relating to the Bernie news today: I don't think capitalism is compatible with believing in the singularity plus some combination of humanism/utilitarianism. FWIW, I had been a neolib for a long time before now. If you ran the zoo, would you give the biggest banana to the sexiest gorilla?