eukaryote

Bio

Georgia Ray. Interested in biodefense, existential risk reduction, effective altruism, animal and other minds, and how to be the best we can be.

Posts 12

Comments 9

I think this is a great point, and I think it's alright here.

The part of the series that I'm about to go into (as of today) contains a panoply of possible explanations for the apparent paradox, none of which are "it wouldn't actually be that bad" or "it just hasn't occurred to anyone."

And this series is pretty mild relative to the large body of "are we prepared against X type of bioweapon attack" literature and analyses out there (for which the answer is usually "no, we are not prepared").

The series is also more theoretical than concrete, which seems like it should reduce the risk factor.

Does this hit on your concern? If you still have your concerns or have different ones, I'm interested to know.

It seems quite possible. Japan aside (and those largely weren't "accidents"), the anthrax accidentally released at Sverdlovsk by the USSR program is a major one. I think Alibek describes more in his book Biohazard, though I'm not certain, and none on the scale of that incident. It seems possible that there have been more accidental releases to civilian populations than just these.

I had an interview with them under the same circumstances and also had the belief reporting trial. (I forget if I had the Peter Singer question.) I can confirm that it was supremely disconcerting.

At the very least, it's insensitive - they were asking for a huge amount of vulnerability and trust in a situation where we both knew I was trying to impress them in a professional context. I sort of understand why that exercise might have seemed like a good idea, but I really hope nobody does this in interviews anymore.

Georgia here - The direct context, "Research also shows that diverse teams are more creative, more innovative, better at problem-solving, and better at decision-making," is true based on what I found.

What I found also seemed pretty clear that diversity doesn't, overall, have a positive or negative effect on performance. Discussing that seems important if you're trying to argue that it'll yield better results, unless you have reason to think that EA is an exception.

(E.g., it seems possible that business teams aren't a good comparison for local groups or nonprofits, or that most teams in an EA context do more research/creative/problem-solving type work than business teams, so the implication "diversity is likely to help your EA team" might be valid - but whatever premise that's based on would need to be justified.)

That said, obviously there are reasons to want diversity other than its effect on team performance, and I generally quite liked this article.

One year later:

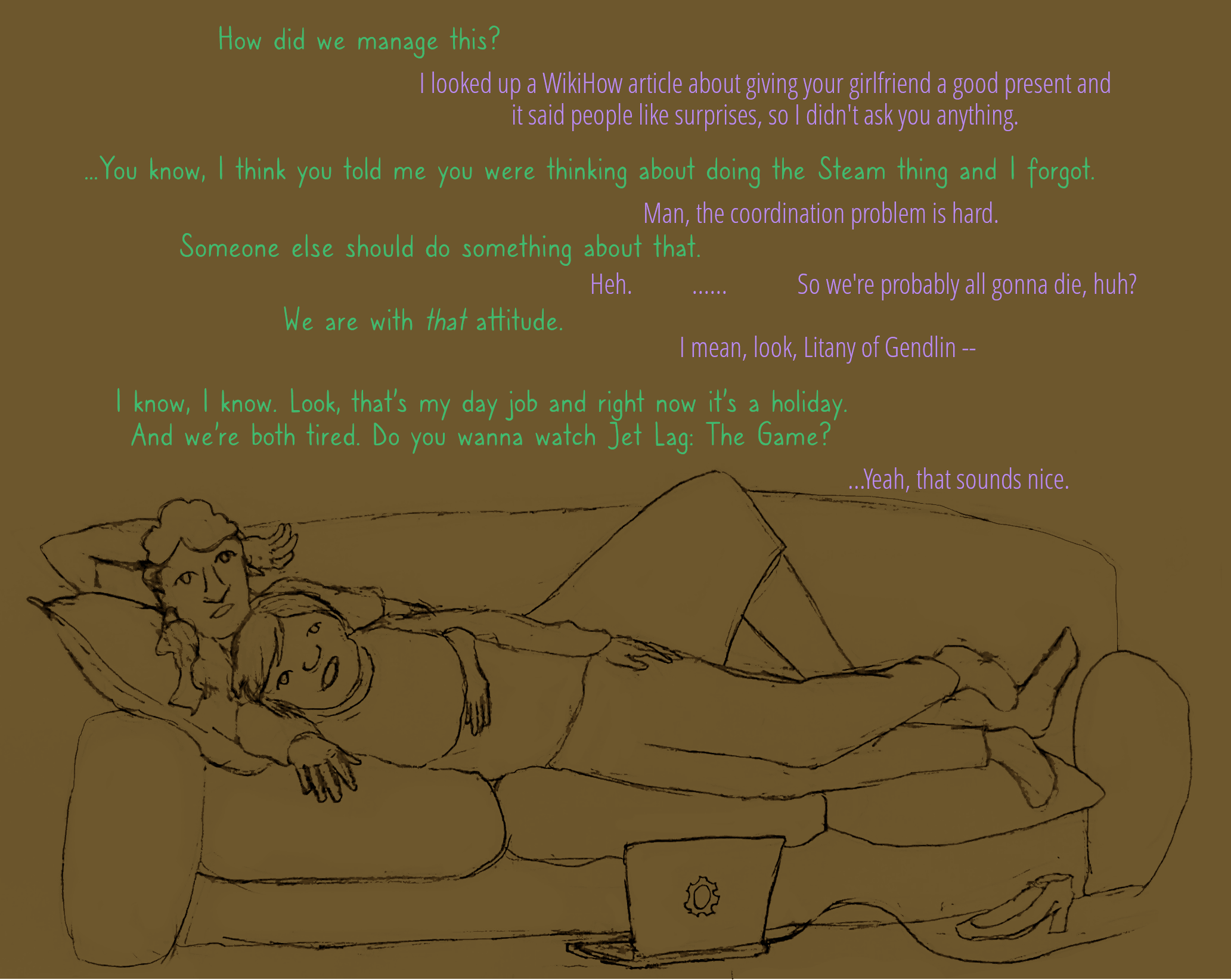

Yes, tandem is my alt account, I made the original. Really pleased this resonated with people. I just went to the Bay Secular Solstice and was thinking about these two again, and the work of making the world and ourselves the best we can be. Have one more bonus:

(Jet Lag: The Game is really good, if you like competitive game shows about train logistics. I recommend starting with the first season of Tag Across Europe.)