Jeff Kaufman 🔸

Bio

Boston-based, Director of Detection at SecureBio, GWWC board member, parent, musician. Switched from earning to give to direct work in pandemic mitigation. Married to Julia Wise. Speaking for myself unless I say otherwise. Full list of EA posts: jefftk.com/news/ea

Fortunately, however, DIY solutions that use abundant materials (e.g., fans, filters, tape, blankets, vacuum cleaners) have a good shot at working. Slapdash preliminary tests by colleagues, using just tape and towels, have already attained a ~30x reduction in the hardest-to-filter particle sizes.

This sounds like so much fun to work on, non-seriously tempted.

Existing particle counters typically cost thousands of dollars, generally aren't designed for stockpiling or in-respirator wear, and have no manufacturing plan suited to a crisis ramp-up.

The cheapest ready-to-go option for DIY work today is probably the Temtop P600, which I see for $70. While I haven't tried it, it's a stripped-down version of the Temtop M2000, which is what I bought several years ago to use for DIY experiments.

Professional grade ones are better in various ways, but the big one is that they are calibrated. The cool thing is, for many kinds of experiments you don't actually need that! You just need some number that is (at least within a known concentration range) linearly proportional to PM2.5, which an uncalibrated meter can give you. For example, if you're trying to see how quickly something can clear smoke from a room you don't need to generate a target amount of smoke or know exactly how much smoke you've generated: you can just measure the half-life. This gives you relative efficacy directly, or CADR if you have a sealed room of known volume.
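To make the half-life idea concrete, here's a minimal sketch of how you might turn a series of uncalibrated readings into a half-life and a CADR estimate. The function names, the synthetic readings, the natural decay rate, and the room volume are all made up for illustration, and it assumes the readings are well above background and that decay is exponential:

```python
import math

def decay_rate_per_hour(times_min, readings):
    """Least-squares slope of ln(reading) vs time, as a positive rate per hour."""
    logs = [math.log(r) for r in readings]
    n = len(times_min)
    t_mean = sum(times_min) / n
    y_mean = sum(logs) / n
    num = sum((t - t_mean) * (y - y_mean) for t, y in zip(times_min, logs))
    den = sum((t - t_mean) ** 2 for t in times_min)
    return -(num / den) * 60

def half_life_min(rate_per_hour):
    """Minutes for the reading to fall by half at the given decay rate."""
    return math.log(2) / rate_per_hour * 60

def cadr_cfm(rate_on, rate_off, room_volume_ft3):
    """CADR from decay with the device on, minus natural decay with it off."""
    return (rate_on - rate_off) * room_volume_ft3 / 60

# Synthetic example: readings that halve every 10 minutes (illustrative only).
times = [0, 2, 4, 6, 8, 10, 12]
raw = [1000 * 0.5 ** (t / 10) for t in times]
rate = decay_rate_per_hour(times, raw)

# An unknown calibration factor scales every reading, but not the decay rate.
scaled_rate = decay_rate_per_hour(times, [3.7 * r for r in raw])

# Hypothetical natural decay of 0.3/hour in a sealed 1000 cubic foot room.
estimate = cadr_cfm(rate, 0.3, 1000)
```

Because the fit is on the log of the readings, a constant multiplicative error only shifts the intercept, which is why the meter doesn't need to be calibrated for this.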

If you want to make something cheaper, you can get a PMS5003, which I see for $21, and connect it to a cheap SoC (~$10) or to an Android phone (adapters in the $15 range). At scale I think you could get this down below $15: a PMS5003 or clone at high volume would be ~$7, the phone adapter would be under $1 at this scale, then a box, assembly, and some QC.
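On the software side, reading the sensor is mostly a matter of parsing a fixed-size frame off the serial line. Here's a rough sketch of a parser, based on my understanding of the PMS5003 datasheet (32-byte frames starting with 0x42 0x4D, big-endian 16-bit words, and a checksum that is the sum of the first 30 bytes); treat the layout as an assumption and check it against your sensor's documentation. The function names and the test-frame helper are hypothetical, and actually opening the serial port is left out:

```python
import struct

FRAME_LEN = 32
HEADER = b"\x42\x4d"

def parse_pms5003_frame(frame: bytes) -> dict:
    """Parse one 32-byte frame (layout assumed from the datasheet)."""
    if len(frame) != FRAME_LEN or frame[:2] != HEADER:
        raise ValueError("not a 32-byte frame with the expected header")
    # After the header: length word, 13 data words, checksum word.
    words = struct.unpack(">15H", frame[2:])
    length, data, checksum = words[0], words[1:14], words[14]
    if length != 28:
        raise ValueError("unexpected frame length field")
    if checksum != sum(frame[:30]) & 0xFFFF:
        raise ValueError("checksum mismatch")
    return {
        "pm1_0_atm": data[3],    # ug/m3, atmospheric environment
        "pm2_5_atm": data[4],
        "pm10_atm": data[5],
        "count_0_3um": data[6],  # particles > 0.3 um per 0.1 L of air
    }

def build_test_frame(data_words):
    """Hypothetical helper: build a valid frame for testing without hardware."""
    body = HEADER + struct.pack(">14H", 28, *data_words)
    return body + struct.pack(">H", sum(body) & 0xFFFF)

frame = build_test_frame([1, 2, 3, 4, 5, 6, 700, 8, 9, 10, 11, 12, 0])
reading = parse_pms5003_frame(frame)
```

For decay experiments the raw particle counts are often more useful than the mass estimates, since all you need is a number proportional to concentration.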

But all this is only good enough for measuring rooms. Measuring non-valved respirators is way harder, because you need to get the sensor inside the mask. State of the art for quantitative fit testing involves poking a hole in a mask, which means you can't do it on an ongoing basis. I don't know if wireless is practical with current tech: getting a particle counter sufficiently miniaturized seems super hard. Building respirators with a test port could also work? (For a valved respirator you can measure how clean the air coming out of the valve is.)

I'm not very familiar with the situation in Nigeria, but my understanding is there's a lot of dust in the air much of the year from the Sahara, plus in Lagos and other cities there's a lot of pollution, is that right? In that case I wouldn't recommend UVC at all (since it inactivates pathogens but doesn't touch dust or pollution). Instead, something filter-based would have much broader benefits: dust and pollution in addition to pathogen control.

In the US the cheapest filter option is generally a Corsi-Rosenthal box (a box fan plus HVAC filters, both commodity items here). In Nigeria, something commercial would probably be cheaper since those aren't everyday items. Looking online a bit, maybe the Acerpure Pro P2 at ~₦120,000 for 191 CFM CADR is best? While that's a lot cheaper than the Aerolamp, though, that's still out of reach for someone at ₦70,000 / month.
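Spelling out the arithmetic behind that, using only the approximate price, CADR, and income figures above (the variable names are just for illustration):

```python
price_ngn = 120_000            # approximate Acerpure Pro P2 price
cadr_cfm = 191                 # listed CADR
monthly_income_ngn = 70_000

cost_per_cfm = price_ngn / cadr_cfm                # naira per CFM of clean air
months_of_income = price_ngn / monthly_income_ngn

print(f"~₦{cost_per_cfm:.0f} per CFM, ~{months_of_income:.1f} months of income")
```

That works out to roughly ₦630 per CFM, and a bit under two months of income for the purchase alone.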

(Minor: the Aerolamp also uses Care222)

My external post probably would have been better with some explicit comparisons, but my claim is that in-duct UVC (a) isn't widely applicable, and so the overall potential benefit of pushing for it is low and (b) isn't cost effective even where it's applicable.

I think (b) is the more important one and where we most disagree. I've now added the cost-effectiveness calculation to the end of https://www.jefftk.com/p/against-in-duct-uv and it looks to me like even in the best case in-duct is much more expensive per CADR than filters or far-UVC.

vastly more effective, cost-efficient, and problem-free method of UV in ductwork (Near UV) gets pretty much zero attention

The big problem is that ducts are relatively rare, something like 10% globally. While ducts are common in the US, Canada, and Australia, they're rare elsewhere including Europe and Asia. [1]

You also need to tune your HVAC to recirculate a lot of air even when the system isn't calling for heat or cooling, which people usually don't.

And then if you do have ducts and are moving a lot of air you don't need UV: if you're running MERV-13 (typically the most the blower is able to handle) that's removing worst case 50% of particles, and you can generally put out enough air to hit targets with the existing system. And then consider that in-duct UV systems fail invisibly and fail open.
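As a rough sketch of why filtration alone can be enough: clean air delivered by an in-duct filter is approximately airflow times single-pass efficiency, and dividing by the volume served gives equivalent air changes per hour. The 50% is the worst-case figure above; the airflow and building volume are hypothetical numbers chosen for illustration, and this assumes well-mixed air:

```python
def filtered_clean_air_cfm(airflow_cfm, single_pass_efficiency):
    """Clean air delivered by a filter that removes a fraction of particles per pass."""
    return airflow_cfm * single_pass_efficiency

def equivalent_ach(clean_air_cfm, volume_ft3):
    """Equivalent air changes per hour for a well-mixed space."""
    return clean_air_cfm * 60 / volume_ft3

airflow_cfm = 1200     # hypothetical blower airflow while recirculating
efficiency = 0.5       # worst-case MERV-13 removal, per the text above
volume_ft3 = 16_000    # hypothetical: 2,000 sq ft with 8 ft ceilings

clean_air = filtered_clean_air_cfm(airflow_cfm, efficiency)
each = equivalent_ach(clean_air, volume_ft3)
```

With these made-up inputs you'd get 600 CFM of clean air, or 2.25 equivalent air changes per hour, before adding any UV.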

[1] Around here the old houses are mostly radiators, new ones are mostly mini-splits, and only ones built or renovated in between have ducts. Older commercial buildings are also generally radiators, though that's becoming less common. I asked Claude Opus 4.7, ChatGPT 5.5 Thinking, and Gemini 3.1 Pro "Approximately what fraction of indoor hours spent by humans around the world are in spaces with a ducted HVAC system? What's your 50% confidence interval?" and got 9-13%, 10-20%, and 6-11%. The big factor here is that while ducts are common in the US, Canada, and Australia, they're rare elsewhere including Europe and Asia.

Thanks for updating the post! Some minor comments:

the $500 row reflects the cheapest current Care222-based fixture, not the price of a productized, FCC- and UL-8802-certified consumer product.

Good point! This is definitely an issue I've run into in talking to people about whether installing Aerolamps makes sense, and I was excited to learn they're working on a new version that should both cost less and be certified.

Jeff Kaufman's post reaches ~$53 per eACH for the Aerolamp using aerosol-k coronavirus susceptibility (Welch et al. (2022))

Not exactly: I used the median eACH value I got from Illuminate:

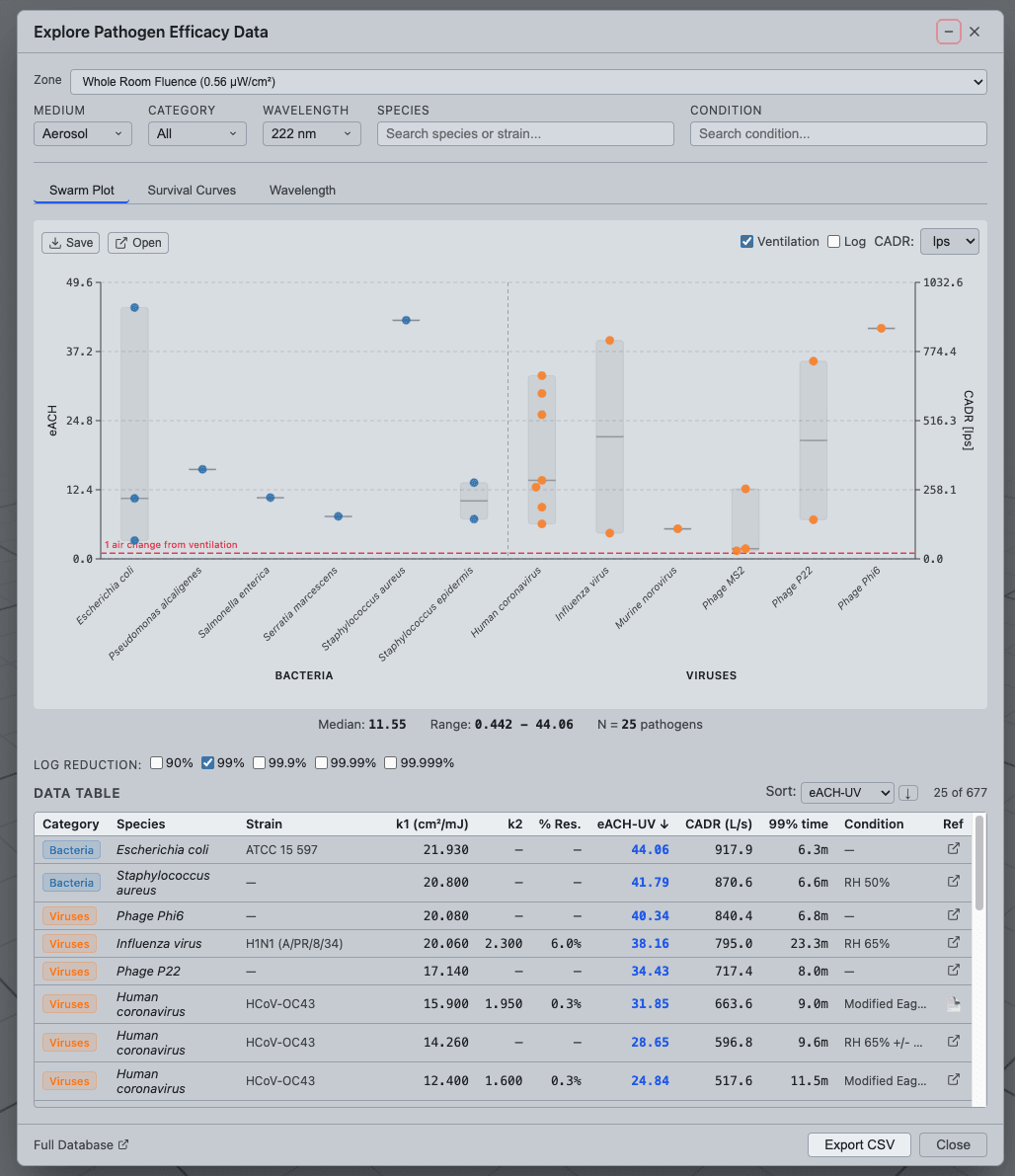

This gave me a median of 11.55 eACH, across 25 bacteria and viruses with a range from 0.442 to 44.06.

I also assume no replacement for the Aerolamp but use bench k

Why use bench-measured k? Isn't that less realistic for real-world use? This isn't something I know much about, though, and I'm just going with Illuminate's defaults.

I think you may also somewhat overestimate the CADR decline for these devices when not operating at full power

Certainly possible, and I'd be happy to yield to lab testing on this, but in my DIY testing turning an AP-1512 from "high" to "medium" dropped CADR by 50%, and this AirFanta review found going from 56 dB to 45 dB dropped CADR by 40%.

That seems much too strong to me: it's very important that AI companies have accurate views on how dangerous their models are. When AISI evaluated Mythos and confirmed its high level of cybersecurity ability, this (from the outside) looks critical to Anthropic deciding not to release it publicly yet. This likely reduced near term risk, set some precedent, and also slowed the race slightly.

(Disclosure: the other side of SecureBio does AI evals; speaking for myself)

Of these, (1), (5), (6), (7), and (8) have the form "X is important, figure out how to get countries to have X in an emergency". This is good, but I think for each of these you should also strongly consider figuring out how to get your own household to have X in an emergency. Since you likely care about your own welfare several times more than that of strangers, these are typically worth doing even at current prices (and each person who sees to their own household makes the world marginally more prepared):

(1) PPE stockpile: You Should Get a Reusable Mask. You have the advantage of not needing to organize a distribution system.

(5) Cleanroom bedrooms: you have the advantage of being able to use non-improvised materials, like air purifiers and far-UVC.

(6) DIY Respirators: you don't need these if you Get a Reusable Mask.

(7) Particle monitoring: you can get one for ~$70

(8) Food stockpiles: Store Food

Also, if anyone in your household seems likely to create mirror life, probably good to address that.