All of Writer's Comments + Replies

For me, perhaps the biggest takeaway from Aschenbrenner's manifesto is that even if we solve alignment, we still have an incredibly thorny coordination problem between the US and China, in which each is massively incentivized to race ahead and develop military power using superintelligence, putting them both and the rest of the world at immense risk. And I wonder if, after seeing this in advance, we can sit down and solve this coordination problem in ways that lead to a better outcome with a higher chance than the "race ahead" strategy and don't risk encou...

I think his answer is here:

...Some hope for some sort of international treaty on safety. This seems fanciful to me. The world where both the CCP and USG are AGI-pilled enough to take safety risk seriously is also the world in which both realize that international economic and military predominance is at stake, that being months behind on AGI could mean being permanently left behind. If the race is tight, any arms control equilibrium, at least in the early phase around superintelligence, seems extremely unstable. In short, “breakout” is too easy: the incentive

There are several AGI pills one can swallow. I think the prospects for a treaty would be very bright if CCP and USG were both uncontrollability-pilled. If uncontrollability is true, strong cases for it are valuable.

On the other hand, if uncontrollability is false, Aschenbrenner's position seems stronger (I don't mean that it necessarily becomes correct, just that it gets stronger).

I think we still see really good engagement with the videos themselves. The average view duration for the AI video is currently 58.7% of the video, and 25% of viewers watched the whole video.

This average percentage relates to organic traffic only, right? The paid traffic APV must look much lower, something like 5%?

There's a maybe naive way of seeing their plan that leads to this objection:

"Once we have AIs that are human-level AI alignment researchers, it's already too late. That's already very powerful and goal-directed general AI, and we'll be screwed soon after we develop it, either because it's dangerous in itself or because it zips past that capability level fast since it's an AI researcher, after all."

What do you make of it?

Can I promote your courses without restraint on Rational Animations? I think it would be a good idea since people can go through the readings by themselves. My calls to action would be similar to this post I made on the Rational Animations subreddit: https://www.reddit.com/r/RationalAnimations/comments/146p13h/the_ai_safety_fundamentals_courses_are_great_you/

Rational Animations has a subreddit: https://www.reddit.com/r/RationalAnimations/

I hadn't advertised it until now because I had to find someone to help moderate it.

I want people here to be among the first to join since I expect having EA Forum users early on would help foster a good epistemic culture.

For people reading these comments and wondering if they should go look: it's in the section that compares early and launch responses of GPT-4 for "harmful content" prompts. It is indeed fairly full of explicit and potentially triggering content.

Harmful Content Table Full Examples

CW: Section contains content related to self harm; graphic sexual content; inappropriate activity; racism

This article is evidence that Elon Musk will focus on the "wokeness" of ChatGPT rather than do something useful about AI alignment. Still, we should keep in mind that news is very often incomplete or just plain false.

Also, I can't access the article.

Related: I've recently created a prediction market about whether Elon Musk is going to do something positive for AI risk (or at least not do something counterproductive) according to Eliezer Yudkowsky's judgment: https://manifold.markets/Writer/if-elon-musk-does-something-as-a-re?r=V3JpdGVy

It would probably be really valuable if people could forecast the ability to build/deploy AGI to within roughly 1 year, as it could inform many people’s career planning and policy analysis (e.g., when to clamp down on export controls). In this regard, an error/uncertainty of 3 years could potentially have a huge impact.

Yeah, being able to have such forecasting precision would be amazing. It's too bad it's unrealistic (what forecasting process would enable such magic?). It would mean we could see exactly when it's coming and make extremely tailored plans that could be super high-leverage.

This post was an excellent read, and I think you should publish it on LessWrong too.

I have the intuition that, at the moment, getting an answer to "how fast is AI takeoff going to be?" has the most strategic leverage and that this topic influences the probability we're going extinct due to AI the most, together with timelines (although it seems to me that we're less uncertain about timelines than takeoff speeds). I also think that a big part of why the other AI forecasting questions are important is because they inform takeoff speeds (and timelines). Do yo...

One class of examples could be when there's an adversarial or "dangerous" environment. For example:

- Bots generating low-quality content.

- Voting rings.

- Many newcomers entering at once, outnumbering the locals by a lot. Example: I wouldn't be comfortable directing many people from Rational Animations to the EA Forum and LW, but a karma system based on EigenKarma might make this much less dangerous.

Another class of examples could be when a given topic requires some complex technical understanding. In that case, a community might want only to see posts that are ...

In my understanding, EigenKarma only creates bubbles if it also acts as a default content filter. If, for example, it is just displayed near usernames, it shouldn't have this effect but would still retain its use as a signal of trustworthiness.

Also, sometimes creating a bubble -- a protected space -- is exactly what you want to achieve, so it might be the correct tool to use in specific contexts.

This is the first time I've read about this, so please correct me if I'm misunderstanding.

Personally, I find the idea very interesting.

No winners or job offers yet. I've read every submission, but I still have to decide on some. I reached out with feedback to a small minority of people when (but not every time) I thought their script could be improved easily enough to pass my bar. If you want feedback or are curious about your standing in the contest, please send an e-mail.

Cross-posting with multiple authors is broken as a feature.

When Matthew had to approve co-authorship, the post appeared on the home page, but if clicked on, it only showed an error message.

Then I moved the post to drafts, and when I interacted with it using the three dots on the right side, there was another error message.

Now Matthew doesn't appear as a coauthor here.

Hi!

I haven't made any announcement yet, but I'd be potentially interested in hiring people as contractors for Rational Animations in the following roles:

- Scriptwriter (also see this contest)

- Fact-checker

- Community manager

- Social Media manager

- Illustrator

If you think Rational Animations could be a good fit for some people and clears your bar for "high-impact", I'd be happy if you sent some candidates my way. They/you can reach out at rationalanimations@gmail.com.

Also, important disclaimer: I'm not sure how fast I'll be able to make hiring decisions, an...

Thanks a lot for the feedback!

I share the concern that the expected income from participating in the contest might be small. I think the other two prizes somewhat mitigate this, but I'm not sure how people value those prizes.

I'm indeed spending a lot less on scriptwriting than on animation. This hasn't always been true, but it is true now and will continue to be true as the team becomes larger because animation is just way costlier. That said, the proportion of the budget devoted to scriptwriting will increase again in the near future, b...

Hey, thanks for the feedback here.

Regarding Rob Miles' audio: is there anything more specific you have to say about it? I want to improve the audio aspect of the videos, but the last one seemed better than usual to me on that front. If you could pinpoint any specific thing that seemed off, that would be helpful to me.

Several EA organizations are working together with a communications advising firm to answer questions like

- Who are key audiences we especially want to reach?

- How do these audiences currently see EA?

- What are the best ways to reach these audiences?

- What EA ideas are especially important to convey?

I hope EA orgs end up sharing their new best guesses regarding these questions with the broader community, or at least reach out to smaller and newer organizations dedicated to outreach so that they can scale their outreach in a good direction and self-correct more easily.

"We try to avoid processes that take months and leave grantees unclear on when they’re going to reach a decision."

It's true that we made decisions on the vast majority of proposals on roughly this timeline, and then some of the more complicated / expensive proposals took more time (and got indications from us about when they were supposed to hear back next).

The indication I got said that FTX would reach out "within two weeks", which meant by April 20. I haven't heard back since, though. I reached out eight days ago to ensure that my application or relevant ...

Thanks! I'm curious whether there's an aspect of the video that you found especially good, and whether you found it significantly better than the other videos on Rational Animations (if you have watched them).

I'm trying to understand what made this particular video more appreciated than the other ones.

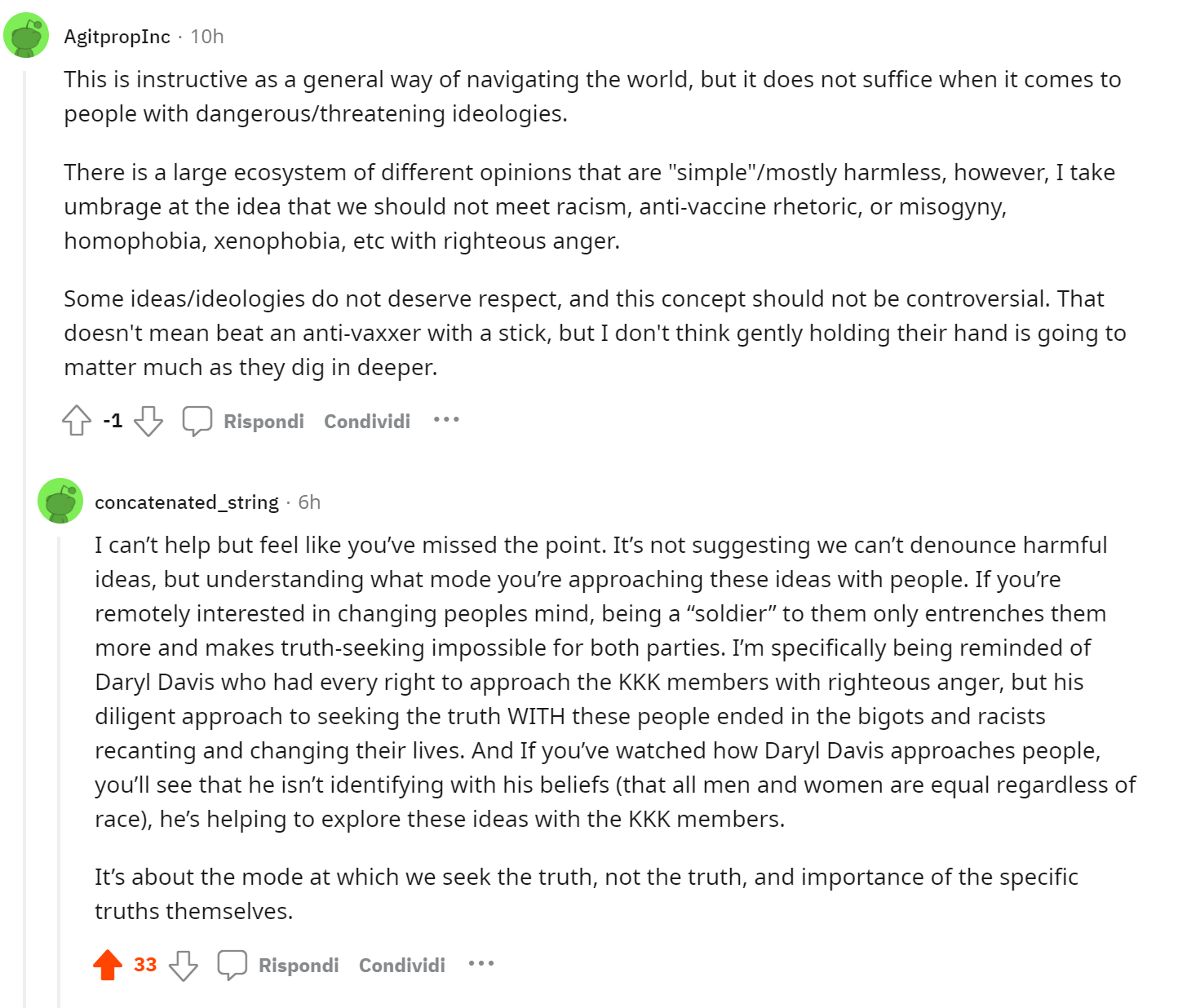

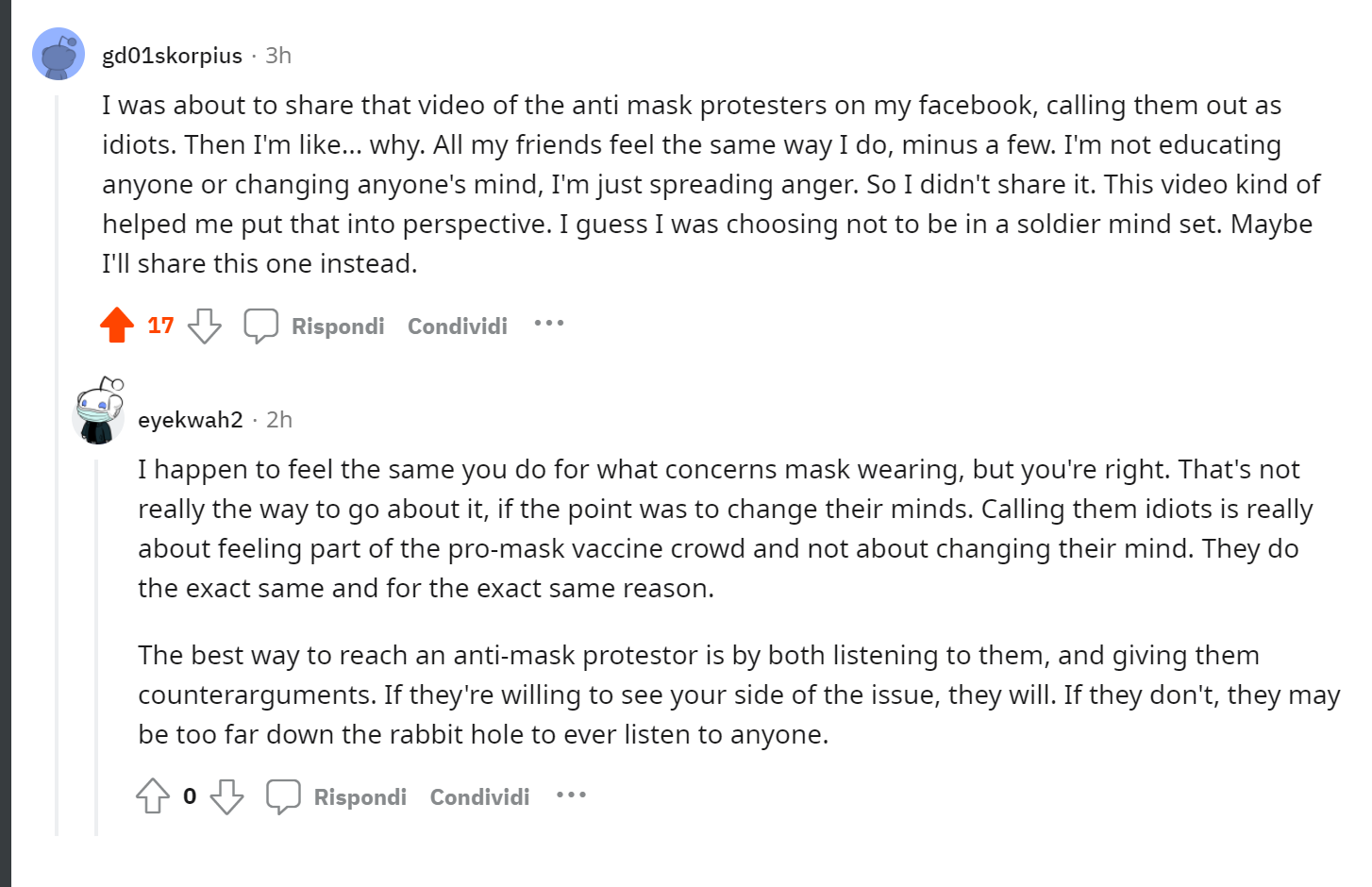

Here's some more evidence I got that this is a particularly good book to give to new people. So far, the Rational Animations video about the "Rethinking Identity" section is the channel's most appreciated video in terms of comments, both on Reddit and YT. Also, I'm seeing comments suggesting that at least some people deeply understand and incorporate the message. On r/videos, which is a pretty generalist sub, I'm finding some uplifting (for me) interactions:

I've seen some criticism of this book in EA/Rationality spaces and in some Amaz...

Rational Animations' writer here. I am just chiming in to say (albeit 20 days later) that we're interested in animating some of the EA introductory articles. We are also interested in adapting blog posts by Holden or anyone else writing about important/interesting stuff.

Before doing more core EA content, though, I want to improve some more, and potentially have someone (paid) to always edit, fact-check, and PR-sanity-check my scripts. For now, I will fly pretty close to EA with some videos, but I will avoid EA branding until I'm exceedingly sure that Rational Animations' contribution will be a net positive, which will probably take at least a few months.

It’s gone viral! This is just the third day since release, and it has already reached 178k views, and it looks like it’s still growing fast. This is very hard to pull off for a brand-new channel. Massive kudos :)