Forecasts based on benchmarks and time horizons have failed to produce a consensus timeline for the arrival of AGI. We supplement these with several alternative methods.

1: MATS Applications

AGI - artificial intelligence capable of performing every task - is by definition cognitively capable of putting all humans out of work. However, there are certain edge cases where human employment might persist for other reasons. As the majority of jobs get automated, we expect newly unemployed workers to migrate to these edge case industries.

The clearest case for an industry which cannot be completely automated after AGI is AI safety research. The advent of AGI will likely be followed shortly by superintelligence, and this superintelligence will require alignment work beyond that which produced AGI. Although AGI will be intellectually capable of the technical aspects of this work, we may be uncertain whether it fully understands human values, and we may not trust it to do the work alone. Therefore, as every other industry is automated, AI safety will become one of the few remaining fields that absorbs human labor. Conservatively, we predict that within three years of AGI, 10% of the human population - about 800 million people - will be doing AI safety work. The overwhelming majority of these people will have no previous training in AI safety, and will have to go through MATS.

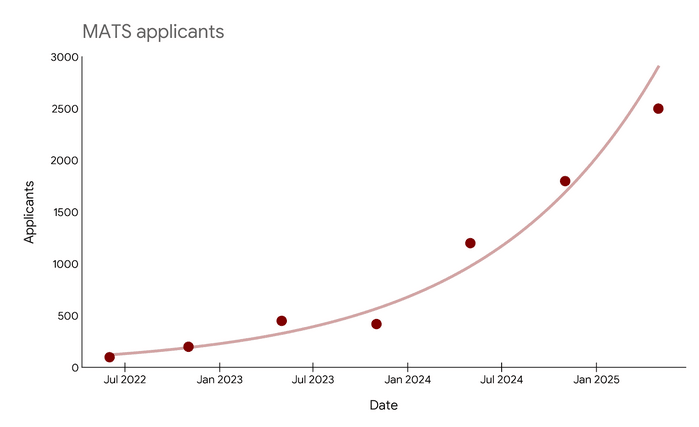

From this graph (source here), we observe there were 3000 MATS applicants in early 2025, and that this number doubles approximately every twelve months. At this rate, we expect that 800 million people will have applied to MATS in 2042. If our previous prediction that we will reach this level three years after AGI is correct, this places the advent of AGI in 2039.
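The arithmetic behind this extrapolation can be sketched in a few lines. This is a rough check, not the fit used for the graph: it assumes an exact twelve-month doubling time and the starting figure of 3000 applicants quoted above, and the helper name `years_to_reach` is ours. Under those assumptions the target is about 18 doublings away, which lands around the start of 2043. A doubling time slightly under twelve months, which the "approximately" allows, would bring it into 2042.

```python
import math


def years_to_reach(start: float, target: float, doubling_years: float = 1.0) -> float:
    """Years for a quantity to grow from start to target at a fixed doubling time."""
    # Number of doublings needed is log2(target / start); each takes doubling_years.
    return math.log2(target / start) * doubling_years


# Figures from the text: 3000 applicants in early 2025, target of 800 million.
# The exact twelve-month doubling time is an assumption.
years = years_to_reach(3_000, 800_000_000)
print(f"{years:.2f} years after early 2025")
```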

2: Model Release Cadence

Kurzweil defines the Singularity as the point of maximum technological change. Major discoveries took millennia during the Stone Age and centuries during the Bronze Age, but come almost yearly during our own time. During the Singularity, AI will recursively self-improve at timescales that seem impossible to humans, advancing centuries in objective weeks.

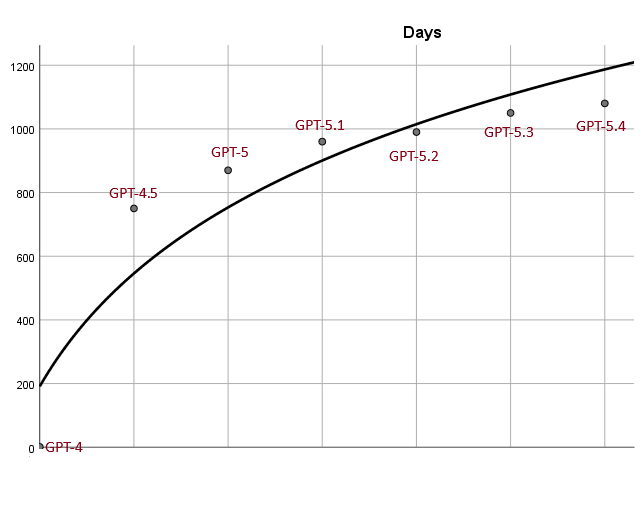

We can formalize this idea of maximum change by examining the time between AI model releases. For example, here are all new numbered GPTs after GPT-4, taken from this table, plotted on a graph whose vertical axis is the number of days between GPT-4's release date and their own. GPT-4.1 came out after GPT-4.5, and I didn't know what to do with this, so I've excluded it from the analysis.

We see that releases are getting closer together, and that the overall pattern approximates a logarithmic curve. If we extrapolate out, we find that OpenAI will be releasing a new model every day by September 2032, and a new model every second by 2048. Presumably the Singularity is somewhere in between those two dates.
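For readers who want to redo the extrapolation, here is a minimal sketch of the logic, assuming the curve has the form D(n) = a·ln(n) + b, where n is the release index and D is days since GPT-4. The function name and the parameters `a` and `b` are hypothetical; they would have to come from a fit to the release table, which is not reproduced here. Under this form the gap between consecutive releases is roughly a/n days, so we solve for the n at which the gap hits the target and read off the date.

```python
import math


def days_until_gap(a: float, b: float, gap_days: float) -> float:
    """Days after GPT-4 at which consecutive releases are gap_days apart.

    Assumes days-since-GPT-4 follows D(n) = a * ln(n) + b for release index n,
    with a > 0. Both a and b are hypothetical fit parameters.
    """
    # The gap between releases is approximately dD/dn = a / n,
    # so the gap equals gap_days when n = a / gap_days.
    n = a / gap_days
    return a * math.log(n) + b


# Illustrative only: a and b below are placeholders, not fitted values.
a, b = 500.0, 400.0
daily = days_until_gap(a, b, gap_days=1.0)
per_second = days_until_gap(a, b, gap_days=1.0 / 86_400)
print(daily, per_second)
```

One consequence of the logarithmic form is that the wait between the one-per-day point and the one-per-second point is always a·ln(86400) days, whatever the value of b.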

3: Google Ngram Mentions Of "Apocalypse"

Plausibly, AGI will cause the apocalypse. When the apocalypse is happening, it will plausibly be the main topic of conversation, most likely by an overwhelming margin.

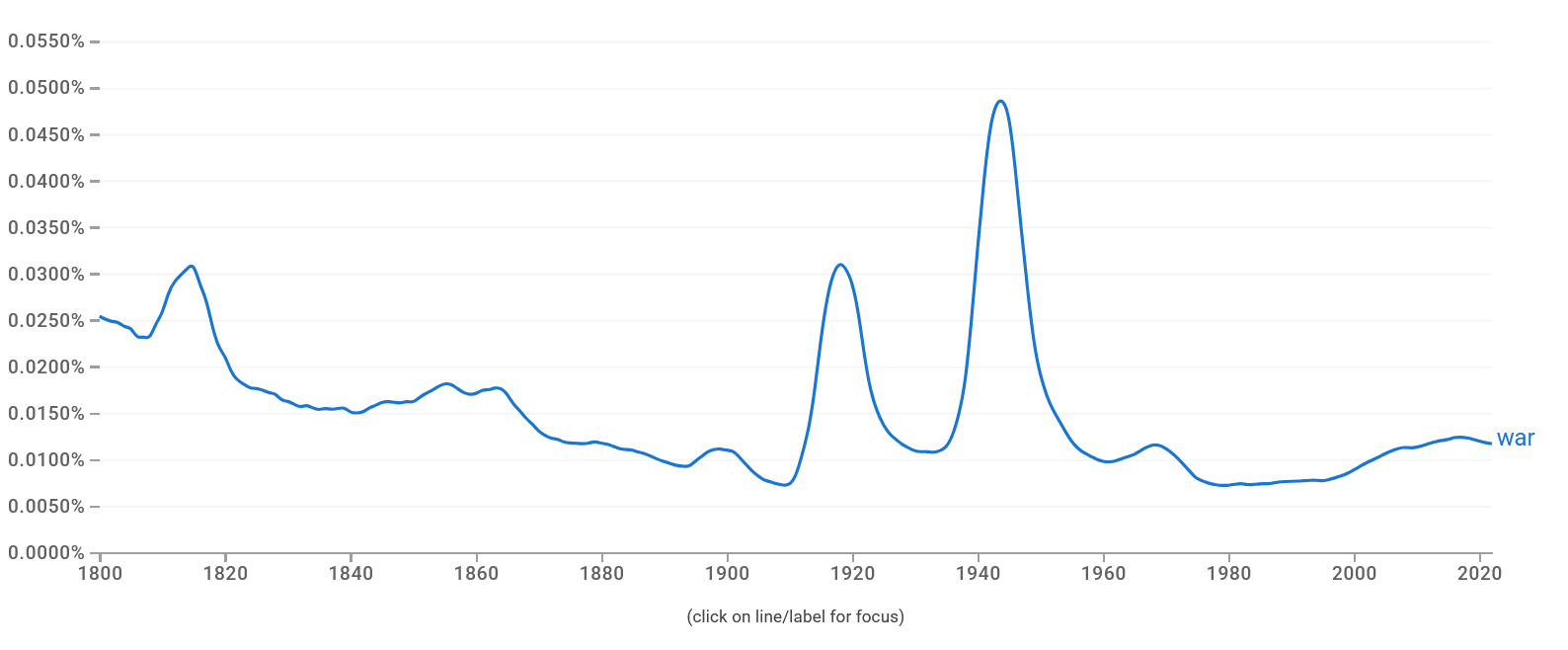

Even in this scenario, we shouldn't expect 100% of words to be the word "apocalypse"; even the sentence "the apocalypse is happening now" only reaches 20% on this metric. To set a lower bound for the frequency of the word "apocalypse" during the apocalypse, we take the frequency of the word "war" during World War II, another period when world events provided an overwhelmingly salient discussion topic. We find that this peaked in 1943 at 0.048% of all English words (source).

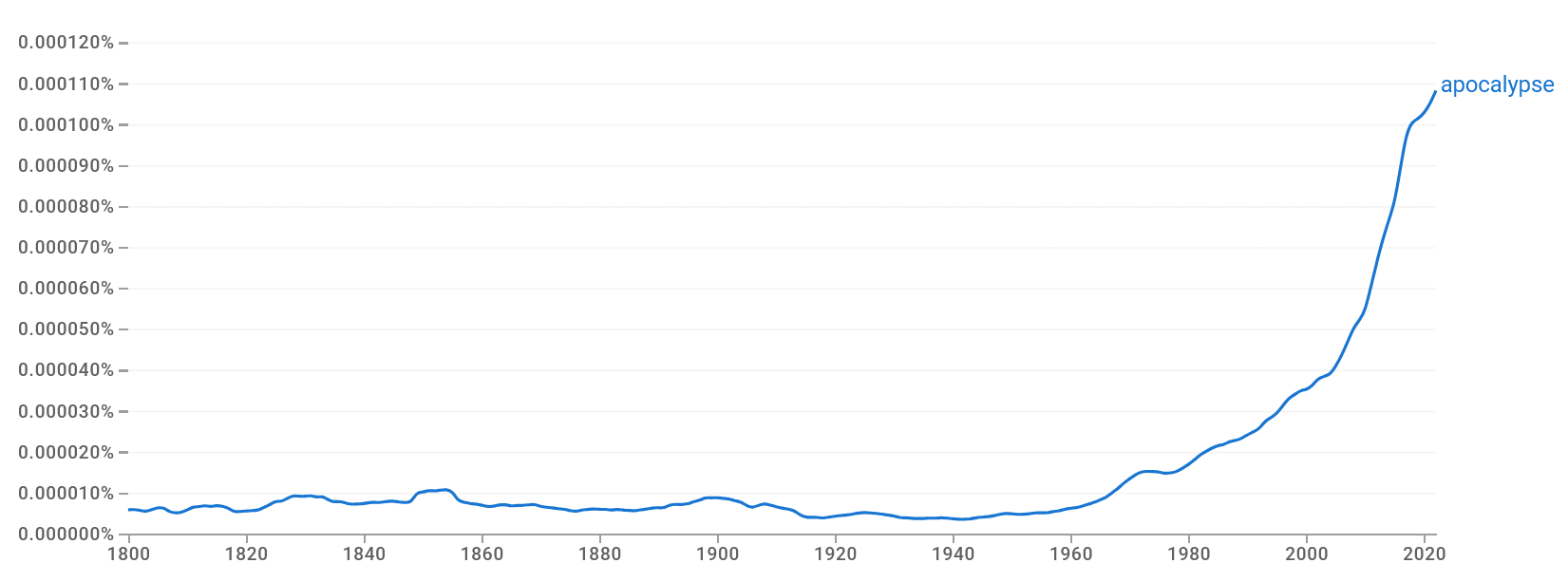

Here is the corresponding graph for "apocalypse" (source):

We calculate that this reaches the peak level of "war" in the year 2139.

This disagrees substantially with our previous estimates, and with the median estimate of groups like AI Futures Project and Epoch. One possibility is that the apocalypse may come very quickly, with less than one year's warning. Since Google bins results by year, this could artificially suppress apparent yearly mentions of the word. Therefore, this doubles as an argument for fast takeoffs.

Interesting and thoughtful post. However, I believe your Google Ngram result is potentially confounded by the imminent rise of the Antichrist and ensuing Tribulation of convulsive war and death that will rip like wildfire across all the nations of Earth. This event, which is spuriously boosting mentions of the "apocalypse", is projected to occur sometime in the early 2040s; cf. Angela Cotra's "Forecasting Transformative AC with Eschatological Anchors" (though more recent estimates like "AC 2027" have argued for shorter timelines).

Once you correct for this confounding variable (i.e., that each and every one of us is about to be plunged into a world of ceaseless conflict and violence, mercilessly hunted down by the Four Horsemen, moon turned to blood, etc.), you'll see that eschatologically-adjusted mentions of "apocalypse" are actually no higher than the historical base rate, proving that there's nothing to worry about and everything will be fine.