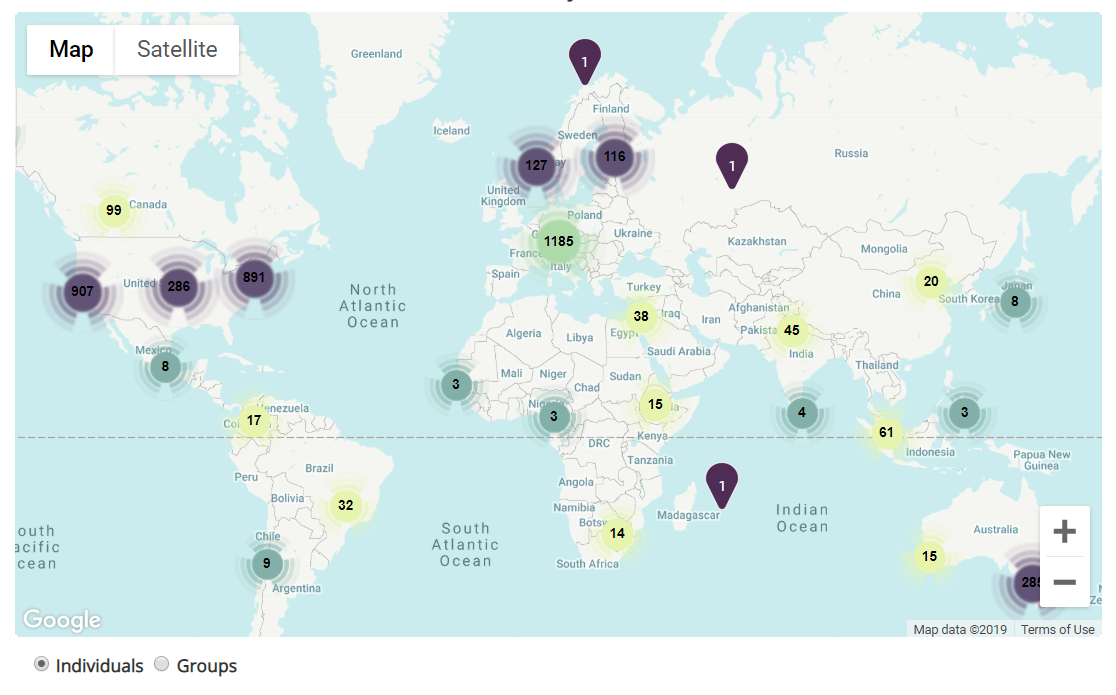

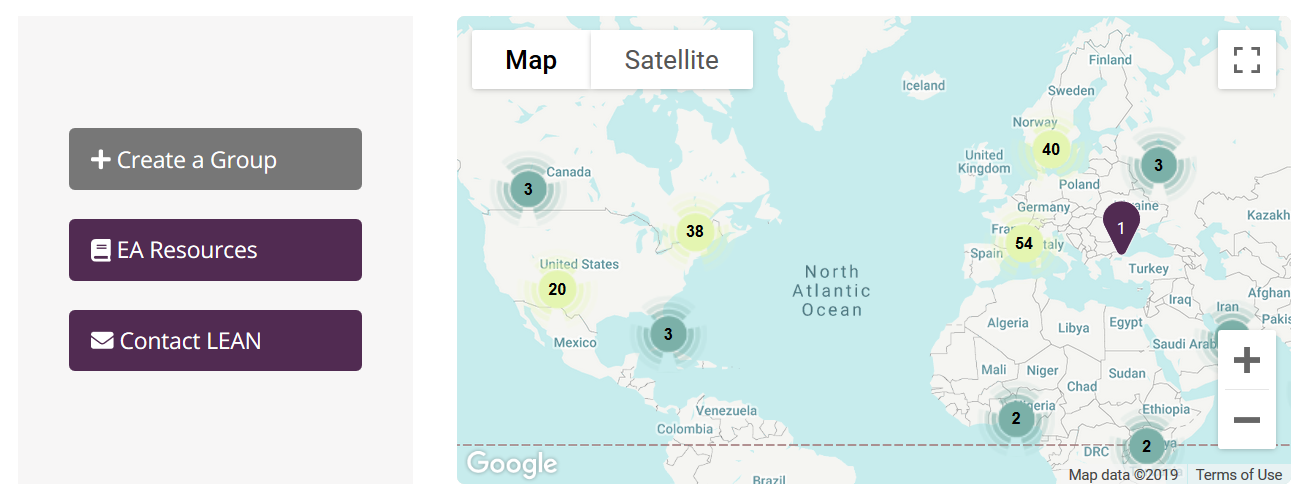

The EA Hub was relaunched in April and is now home to over 700 profiles of Effective Altruists and over 200 local groups in more than 50 countries. Around 2,000 of our former users have yet to reactivate their accounts by changing their password (you can do this here), and we welcome new users to create a profile here.

Difficulty connecting ideas with talent, resources, and support is one of the biggest bottlenecks for high-potential individuals and a reason why promising ideas fail to reach fruition.

The vision for the Hub is to enable and inspire collaboration between EAs by making it easier for people to learn, network, and work together on promising initiatives. By synchronising projects, individuals, and groups, initiatives can build traction more effectively. The EA Hub also links to other resources and platforms in the EA space, including the Effective Altruism Forum, job and volunteering opportunities, Donation Swap, and Effective Thesis.

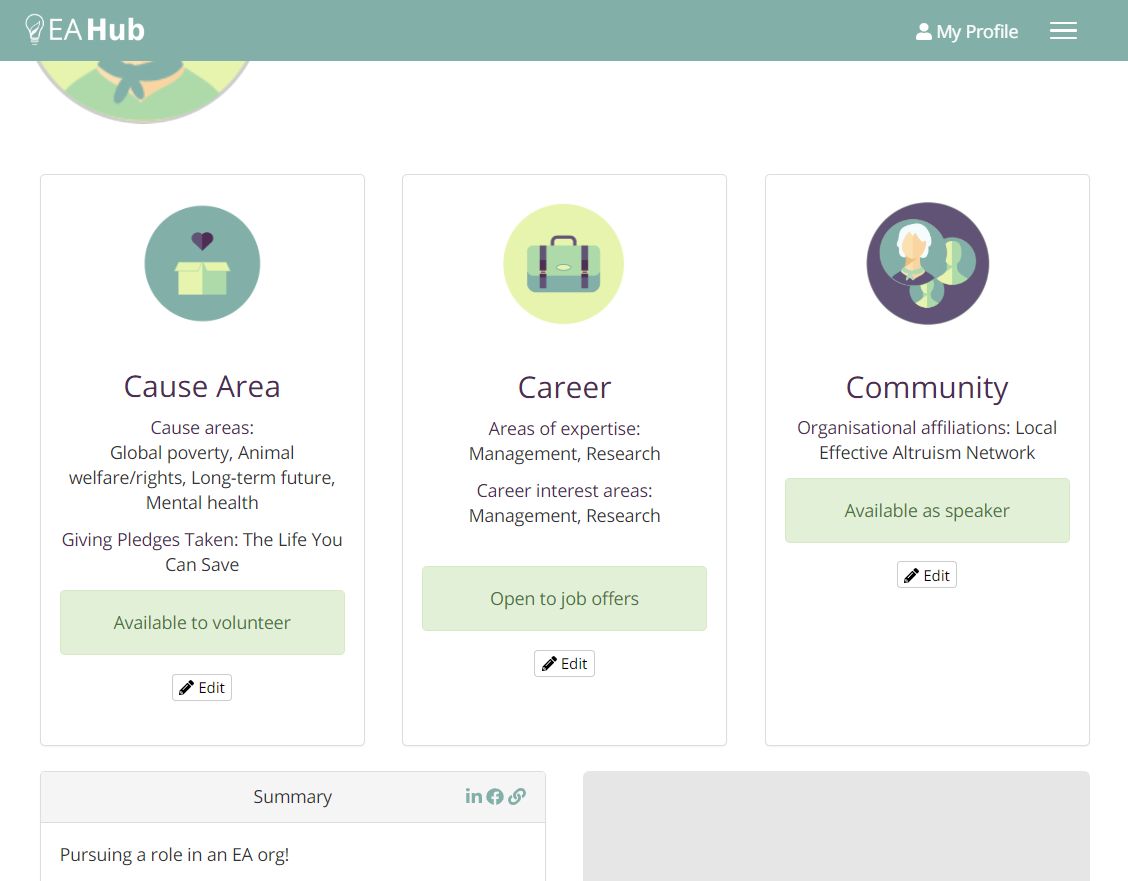

We’ve recently added new features, including listings of job candidates, volunteers, and people willing to give presentations. At the time of writing, we list 159 job candidates, 171 volunteers, and 117 speakers ready to engage with new altruistic initiatives.

We’ve also implemented features allowing you to link your social media profiles and a personal website.

We manually approve each new account to make sure that no spam gets through and that you see only useful, accurate information posted by fellow EAs and EA-friendly people.

The resources section is an extensive and up-to-date collection of written guides and resources that meets the needs of local group leaders and regular EAs alike. Look out for an upcoming Forum post about the resources.

The Hub team continues to work with the Centre for Effective Altruism (CEA) on curating http://eahub.org/groups, the golden source of information on local chapters of Effective Altruism around the world. We recently updated our listing with the results of the 2019 Local Group Organizers Survey.

Currently, our team of staff and volunteers (which we invite you to join!) is developing the resources section, cleaning up the codebase, and implementing fixes and minor improvements.

In 2020, we aim to deliver on our promise to keep the platform useful, stable, secure and growing.

We want to hear your feedback. Email contact@eahub.org and post your ideas at https://feedback.eahub.org/.

See you on the EA Hub!

I have a little bit of hesitation about doing much on EA Hub because I lost all my data (including donation history) from my last profile. It was deleted without warning during the switch, or at least I missed the heads-up. That aside, the updates sound exciting.

Hi, Aaron! Thanks for raising your concern! Your profile is still here: https://eahub.org/profile/aaron-hamlin/, but it hasn't been activated yet (it will become publicly accessible only after you've reset your password). As for donation data, we decided to no longer keep that on the Hub, but we would be happy to try to restore your data and send it to you if that's what you'd like.

Sending my old data would be awesome. Thanks! It took a while to track everything down. myfullname@gmail.

Choosing how much of the previous data to keep and use, and which parts, was a challenging decision that the team took very seriously. GDPR changed things quite a lot, and we have to factor in our responsibility to keep data private and secure. If people don't come back and reclaim old accounts, some on the team feel leery of holding onto data indefinitely because that might not be the most responsible thing to do. Additionally, we made functional and structural improvements to the site when we rebuilt it, which means it does not perfectly follow on from what was there before, and we needed to prioritise.

Any new updates on sending me the old information? I pester others about giving publicly and want to be sure that I model it well personally. I'm thinking of adding a section to my personal website about my current, past, and planned giving for accountability.

Hi Aaron, I've just sent the data again. I used the email address associated with your eahub.org account. Please write to us at contact@eahub.org if you have not received it by now.