This post details our experience attending the AI Impact Summit and its associated side events in Delhi in February 2026. We are both unfamiliar with the policy and governance domain. This is just our honest reaction to attending these events; there may be second-order effects we are not privy to, and we welcome feedback.

Two events ran in parallel: the main AI Impact Summit and a separate, more curated side event called AI Safety Connect (AISC), co-hosted with the International Association for Safe and Ethical AI (IASEAI). We attended primarily governance and policy tracks at the main summit. On the second day, we also organized an independent AI safety mixer. This post covers what we observed across all three events: what worked, what didn't, and where we hope things will go in the future.

The Main Summit

What Worked

The summit's admission policy was open and inclusive. Late applications were accepted. Entry was free. Nobody was turned away. For an AI event of this scale, this really matters: the conversation should not be restricted to a small group, and India has an opportunity to lead on broad, inclusive participation.

The summit was also useful as a reading of the room. By attending governance and policy sessions, we got insight into how different stakeholders (government officials, nonprofits, educators) are orienting towards AI. We could hear what is salient to them: concerns about automation and job displacement, bias in AI systems, ownership of compute. Getting this kind of signal on world models and priorities across sectors was valuable.

I enjoyed the “Whose Language, Whose Model? Public-Interest Multilingual LLMs” session by Aliya Bhatia (Center for Democracy & Technology) and Dhanaraj Thakur (Multiracial Democracy Project) because it used a workshop format: the room was broken up into rows of two and we had discussions among ourselves. This format was much better than the panel format, where we just got to listen and there was limited time allotted for Q&A.

The prompt we picked was “What incentives could drive private sector AI development to be inclusive and participatory?” and we talked about the following:

1) Civil society outcry - accessibility, to ensure that all affected stakeholders are able to hold companies responsible for quality.

2) Regulation - how governments need to ensure compliance. It helps to have benchmarks and design specs that set expectations for industry.

3) Research on how serving rare languages and niche cultures can be a great test of out-of-distribution generalization. So if you want “AGI”, you can be motivated to be inclusive for instrumental reasons.

4) We can trace where companies are raising money from. Is it ESG funds, or donations from nonprofits? There is value in ensuring transparency of such investments.

5) The org culture itself would be a big factor, so decisions around hiring and representation of all stakeholders affected by the technology matter.

I am sharing this concrete thread to showcase the kind of discussions I had at the event.

The event “Cognitive Infrastructure for Sustainable and Resilient Futures” featured Bertrand Badré, Dr. Saurabh Mishra (Taiyo.AI), and G. Sayeed. Interesting parallels were drawn with how reliable infrastructure projects have to be: just as with the internet revolution, people will need reliable AI infrastructure so that others can build on top of it. Safety concepts are thus being borrowed from construction-like domains.

The “Safe AI Solutions in Education - A Practitioner-oriented Dialogue for the Global South Perspective” session, with Anil Ananthaswamy (IIT Madras), Krishnan Narayanan (itihaasa Research & Digital), and Shaveta Sharma, was very well attended. The audience interacted through a Slido-like app. It was energizing to see folks participate at scale.

The “Small AI for Big Impact” session had Alpan Raval (Wadhwani AI) talking about deploying smaller, distilled local models at the edge, which reduces dependence on larger centralized providers. This is especially impactful in privacy-sensitive domains like healthcare.

What Was Missing

The summit's own website was surprisingly poor for an AI-focused tech event. With hundreds of sessions, there was no effective way to filter, search, or plan attendance. There was no audience segmentation, no parallel tracks for newcomers versus experienced professionals versus academics versus school students versus entrepreneurs. Attendees were thrown into a large, complex venue with no clear signposting for context level. Day one was extremely crowded, with bottlenecked entry points: multiple queues feeding into only one or two scanners. The result was confusion and wasted time.

There were no dedicated social or networking spaces, and no structured tools like Swapcard for attendee discovery (maybe EAG has spoiled us). Sessions were packed back to back with no breathing room. In practice, the most productive conversations happened in queues, at random desks, or while sitting down to eat, not because those were good contexts for networking, but because there was nowhere else. The probability of meeting relevant people by chance was low, and the sessions themselves, held in jam-packed rooms, left little room for interaction.

On the topic of substance, the sessions we attended stayed at a high level. Panelists often used language that was vague and didn't convey much. Questions during Q&A segments were frequently not addressed directly. There was no discussion of gradual disempowerment, economic concentration of power, or structural risk from AI; the conversation stayed within a narrow band of surface-level concern. In private, some panelists were far more candid about uncertainty and genuine risk, but on stage the tone was managed, perhaps for optics. More technical AI safety people on panels would have sharpened the discourse considerably. Sessions on concrete contribution pathways, both technical and policy, were absent but would have served the audience well.

A recurring theme across summit sessions was a refusal to update ontologies. In an event on AI for teaching, for instance, panelists were still operating within a framework where the teacher is the fixed authority and AI is a supplementary tool to be rationed. Some of the questions being asked (for example, should a principal decide how much time a child spends with AI?) revealed a failure to reckon with how deeply this technology disrupts existing institutional structures. Even setting aside catastrophic risk scenarios, the best-case trajectory of AI capabilities demands a fundamental rethinking of what education prepares people for.

If entire job categories are heading toward automation, the response cannot be "teach students to use AI tools." It requires working backwards from which skills will remain in demand or resist automation, which in turn requires staying current with capability evaluations and model bottlenecks. The people in high decision-making positions we observed did not appear to be doing this.

Whether this stems from a top-down narrative mandate to frame AI positively, or from genuine ignorance of the pace of progress, the effect is the same: policymakers and educators are not prepared for the disruption that is already underway, even under optimistic assumptions.

AI Safety Connect (Side Event)

What Worked

The AI Safety Connect event on February 18–19 at The Imperial was a marked improvement over the main summit in organization, curation, and substance. Approximately 250 policymakers, researchers, and industry leaders attended. The venue was opulent, the group was smaller and more intimate, and the conversations were noticeably more focused. Panelists were approachable; you could walk up to Stuart Russell or researchers from FAR AI, Oxford's Martin School, or Stanford after their sessions and have substantive one-on-one exchanges about their published work, ongoing research, or collaboration opportunities. The high-bandwidth access to influential researchers and policymakers was one of the strongest features.

Several sessions stood out. The ACM TechBrief launch on whether governments should buy or build their own LLMs offered a useful framework for thinking about data localization and the trust implications of API dependence. Hearing Singapore as a case study really grounded the discussion in the concrete. SaferAI presented quantitative modeling of cybersecurity risks from AI misuse, with concrete metrics aimed at policymakers, a welcome departure from the vague language that dominated the main summit.

The Demonstration Fair featured Humane Intelligence showcasing interfaces for running evaluations, alongside demos from Apart Research, AgentiCorp, UNESCO India, and Accenture covering GenAI evaluation, AI manipulation defense, and child safety. There were more common spaces for people to meet and talk, and while things ran behind schedule, the flexibility meant people had genuine time to ask questions rather than being rushed through.

We had really amazing one-on-one conversations in which we went into threat models and got some pushback on our own models within the Indian context, where there is significant inertia towards adoption. It would really help if we could work with the government to ensure they are ready for AI cyberattacks. More work like Anthropic’s Economic Index would help.

On the second day, the "Shared Responsibility: Industry and Future of AI Safety" session surfaced important geopolitical tensions: the contradiction between advocating multilateral cooperation with China while simultaneously pushing for export controls, the difficulty of framing AI governance without the zero-sum dynamics that plague nuclear nonproliferation discourse, and the recognition that AI's massive positive upside makes aggressive stances more diplomatically costly than with weapons. Hearing how DC policymakers approach lobbying and coalition-building was informative. It helped to hear how staffers are informed about developments in the AI space and how that informs policy directly.

Stuart Russell gave one of the talks, moderated by Adam Gleave from FAR AI, and they discussed implementation details of inverse reinforcement learning and specific control protocols. That level of technical engagement was a welcome change.

What Was Missing

Despite being safety-focused, the Connect event still had significant gaps. The conversation was oriented heavily toward policymakers rather than technical people, making it difficult to network with the right counterparts if your background was technical. The red lines workshop, formally titled "Defining and Governing Unacceptable AI Risks" and building on the Global Call for AI Red Lines signed by over 100 leaders and Nobel laureates, covered ground that has been said many times before.

The room was largely in agreement, which raised the question of whether this consensus is actually moving the needle when the main summit doesn't prioritize safety at all. The bar for red lines was also troublingly low: "we cannot let people die" and "we cannot allow CSAM" are floors, not ceilings, and there is an enormous space of harmful outcomes above those floors that went unaddressed. There was no clear articulation of enforcement mechanisms or how to actually implement any of it.

Concentration of power was either not discussed or discussed without any clear path forward. The discourse had a quality of performed assurance: people acting as though they understood the situation more than they did. When pressed on specifics, many policymakers and even some newer researchers could not point to concrete evidence for the claims they were making about risk, or identify specific capability evaluations or reports that informed their views. It felt like memetic capture: "safety is important" repeated as a mantra without anyone pausing to ask what safety means operationally, what the evidence base is, what the timelines are, or what capabilities they expect. One session attempted to define what safety means, but even there, only a narrow perspective was explored. A closed-door session allowed for more intense discussion, but was limited in scope and attendance.

Both the main summit and the Connect event shared a deeper failure: neither seemed to fully grasp the speed at which capabilities are advancing. The discourse felt disconnected from the pace visible on Twitter and in San Francisco, where new model releases and capability jumps are happening continuously. People at both events were still treating AI alignment like other fields where they can defer to experts blindly. It would be better if they could acknowledge that it is a pre-paradigmatic field without established consensus.

One note on epistemics and diplomacy: some members of our community have written about the summit in ways that may not be sensitive to how the Indian government receives criticism. In policy spaces, coalition-building, relationships, and framing matter in ways that are unfamiliar and sometimes uncomfortable for people trained in EA and rationality norms. The tension between epistemic honesty and diplomatic pragmatism was something we kept thinking about even as we wrote this reflection post, and we do not have easy answers. The summit's dates coinciding with Chinese Lunar New Year, which resulted in China not sending top officials, is one example of the kind of strategic maneuvering that makes cooperation harder.

The AI Safety Mixer

On Feb 17, we organized an independent AI safety mixer. Around 80 people registered, and roughly thirty to forty attended. Safety was not a formal track at the summit, so this was an informal, self-organized event, funded entirely out of pocket.

The mixer opened with introductions, which initially became chaotic. We restructured into themed tables: one on risks and threat models, one on expected capabilities, one on whether AI development should be paused, and others on topics like the post-AGI economy, optimistic futures, and timelines. This structure helped manage the energy in the room and led to substantive, open discussions. Participants were not judgmental or defensive; the discourse was genuine and exploratory.

The community turned out to be larger than we expected. But it was also clear that many silos remain. People working on AI safety in India often don't know about each other and have no coordination point or Schelling point to converge on. This article is partly an attempt to address that. If you are working in this space or want to get involved, reach out to either of us (Aditya or Bhishma). We can connect you to others in your city, invite you to communities and groups, or support you in attending future events, retreats, etc.

One concern that surfaced was gender representation. The summit itself had reasonably balanced attendance, but the safety mixer skewed noticeably. We don't yet have clear answers on why this gap exists or how to address it, but we are looking into the bottlenecks. (Please comment if you have ideas.)

On logistics, we had to place fifteen to twenty people on a waiting list because the venue couldn't accommodate everyone. With proper funding and a larger venue, future events could serve the full demand. Many thanks to Ankur, Aman, and Kunal for organizing this <3

Recommendations

The main summit would benefit from threat-model-based and opportunity-based track design, with clear pathways organized by audience: policy professionals, technical researchers, entrepreneurs, students, newcomers.

Dedicated networking zones with structured matchmaking would dramatically improve the quality of connections made. Panels need more technical participants and a norm of honesty about uncertainty; the gap between private candor and public messaging is a problem worth naming explicitly. Decision-makers in education and governance need to engage with current capability evaluations and think forward rather than retrofitting AI into existing institutional assumptions.

The Connect event should push beyond consensus-building toward discussions of concrete implementation and enforcement mechanisms. There should be space and time for examining core assumptions without loaded terminology or performative agreement.

For the AI safety community in India specifically, there is clear demand for more frequent, well-organized spaces for discussion and taking action. The mixer demonstrated that people want to engage seriously with risk, governance, and contribution pathways. What's missing is infrastructure: coordination tools, funding, visibility, and a Schelling point.

Misc

It was heartening to see the AI safety community self-organize so effectively. A coordination WhatsApp group of around 180 members formed (thanks to Aman), and community members built tools to help sort through the event schedule, which was necessary because the official infrastructure fell short on that front.

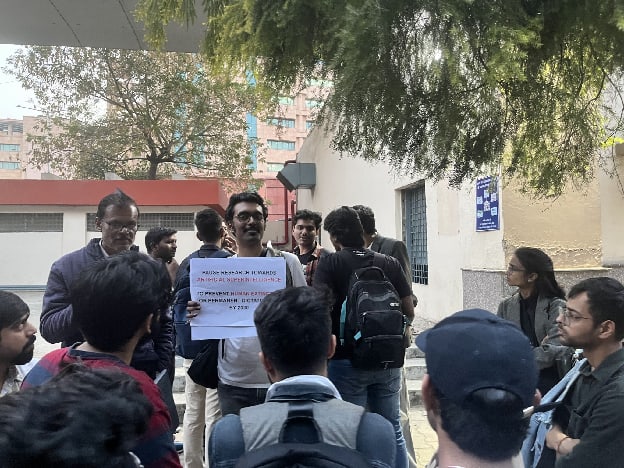

Our friend Samuel was protesting outside the summit, calling for a pause on development towards ASI. Himanshu also advocated a pause, out of concern about risks of power concentration and surveillance by nation states. It would be encouraging to see more civil society oversight of developments in this domain.

We have reading groups in Bangalore, coworking spaces offered by Electric Sheep, and more regular events. I recently conducted an event in Hyderabad so that we can have more frequent meetups to collectively sensemake.

Many thanks to Saksham for hosting us in the city, and thanks to Opus 4.6 for helping us draft this piece.

Conclusion

The summit and its side events signal real and growing institutional interest in AI safety and governance across India and internationally. That is a net positive. But the event design at the main summit didn't match the seriousness of the subject matter, and the public discourse at panels lagged well behind what participants were willing to discuss privately. The Connect event was better but still constrained by a narrow framing of safety and an orientation toward policy over technical depth. We need more events that reckon with the actual pace of capability development.

I made a conference app, if you're interested. Feel free to message me or take a look at the demo conference (currently at aconference.app)

Let me know if you have feedback; you know better than me what would make such a conference great.