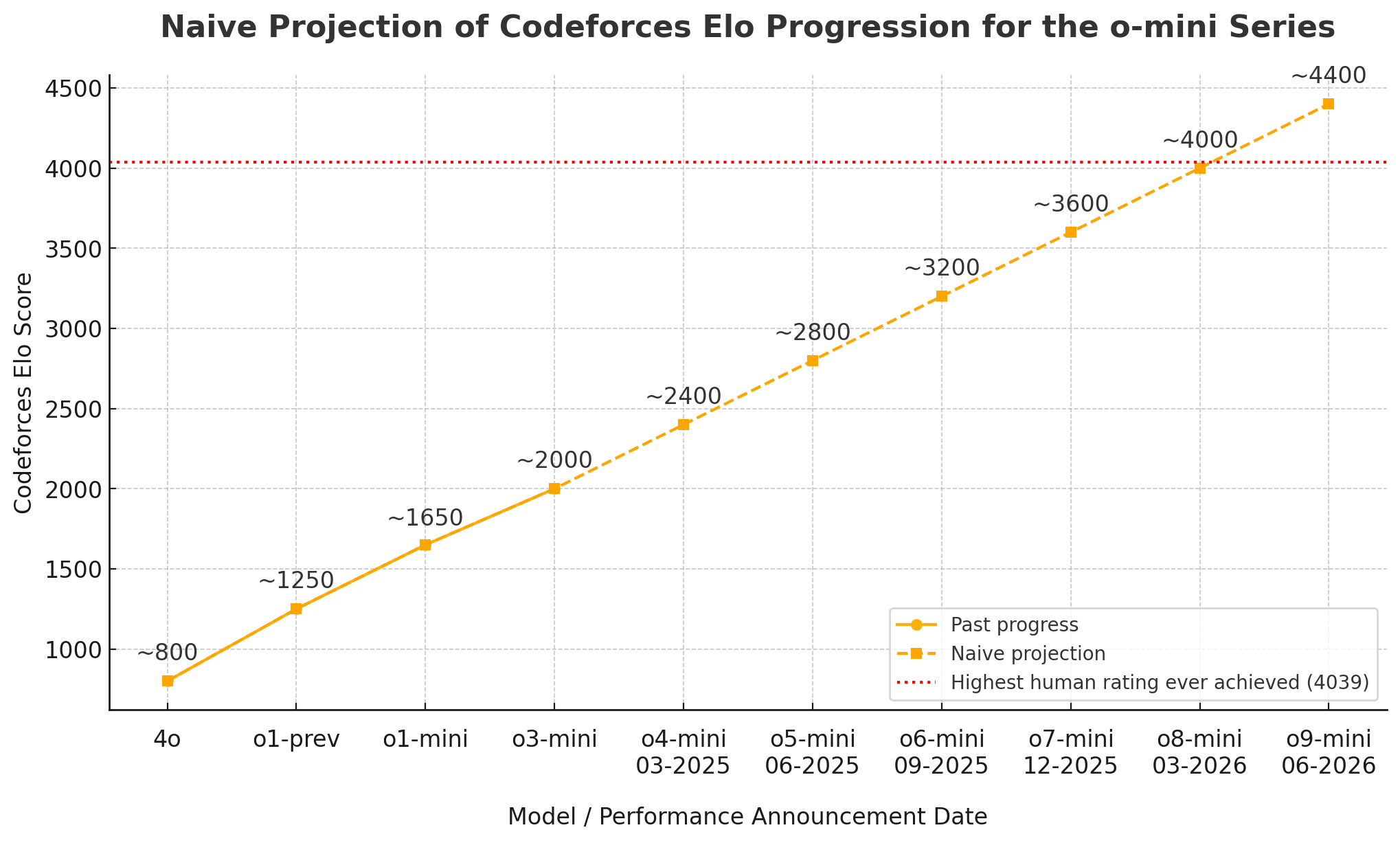

Naive projection about o4 and beyond

The Codeforces Elo progression from o1-mini to o3-mini was around 400 points (with compute costs held constant). Similarly, the Elo jumps from 4o (~800) to o1-preview (~1250) to o1-mini (~1650) were also each around 400 points (the compute costs of 4o appear similar to those of o1-mini, while they're higher for o1-preview).

People from OpenAI report that o4 is now being trained and that training runs take around three months in the current "reasoning paradigm". So if we were to engage in naive projection, we might project a continued ~400 point Codeforces progression every three months.

Below is such a naive projection for the o1-mini cost range, with the dates referring to when model scores are announced (not when the models are released).

- March 2025 (March 14th?): o4 ~2400

- June 2025: o5 ~2800

- September 2025: o6 ~3200

- December 2025: o7 ~3600

- If high compute adds around 700 Elo points for full o7 (as it does for o3), this would give full o7 a superhuman score of ~4300

- March 2026: o8 ~4000 (a score only ever achieved by two people)

- June 2026: o9 ~4400 (superhuman level for cheap)
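
The arithmetic behind the list above can be sketched in a few lines of Python. This is only a minimal illustration of the naive extrapolation: the starting point (o4 at ~2400 in March 2025), the ~400-point step, and the three-month cadence come from the text, while the function name `project_elo` and its parameters are made up for this sketch.

```python
def project_elo(start_elo, start_year, start_month, n_models,
                step_elo=400, step_months=3, first_index=4):
    """Naive linear projection: add step_elo points every step_months months.

    All names here are illustrative; the numbers are the ones used in the list above.
    """
    rows = []
    for i in range(n_models):
        months = (start_month - 1) + i * step_months  # months since January of start_year
        year = start_year + months // 12
        month = months % 12 + 1
        rows.append((f"o{first_index + i}", year, month, start_elo + i * step_elo))
    return rows


# Starting point taken from the list: o4 at ~2400 in March 2025.
projection = project_elo(2400, 2025, 3, 6)
for name, year, month, elo in projection:
    print(f"{year}-{month:02d}: {name} ~{elo}")

# Assumed high-compute bonus of ~700 points, as mentioned for full o7.
HIGH_COMPUTE_BONUS = 700
full_o7 = projection[3][3] + HIGH_COMPUTE_BONUS
```

Changing `step_elo` or `step_months` makes it easy to compare actual announcements against slower or faster variants of the same yardstick.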

Part of the motivation for making such a naive projection is that it can provide a salient yardstick to hold future progress up against, to notice whether progress on this benchmark is slowing down, keeping pace, or accelerating.

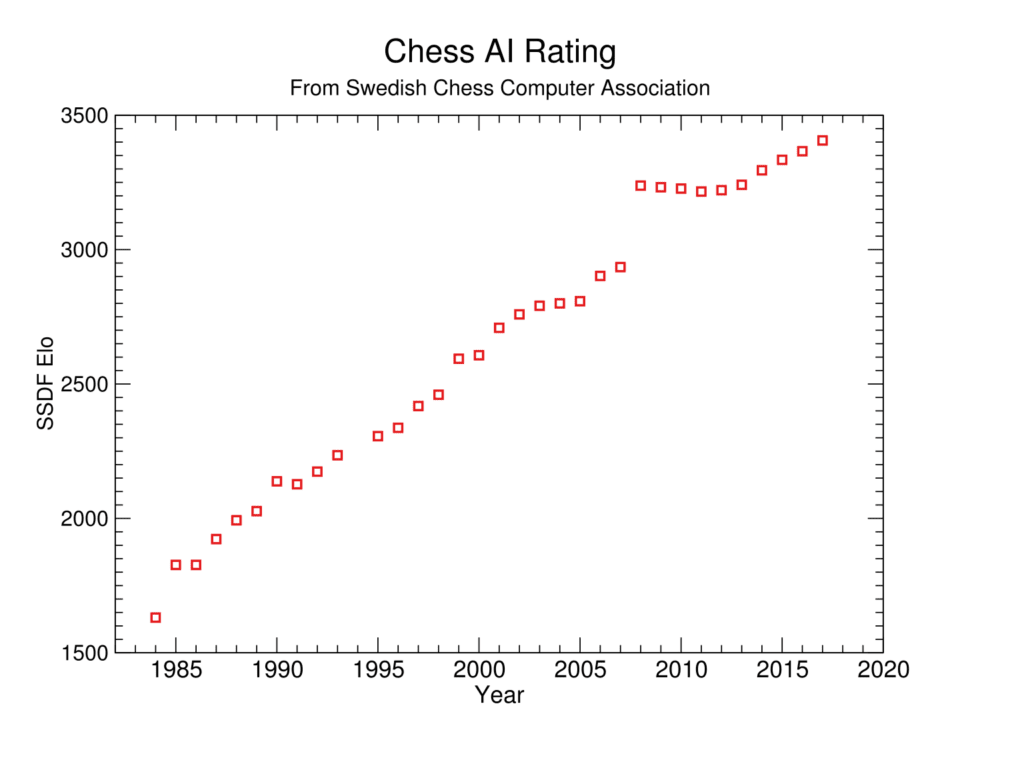

Additionally, as further motivation, one can note that there is some precedent for Elo scores improving linearly over time in other domains, e.g. in chess:

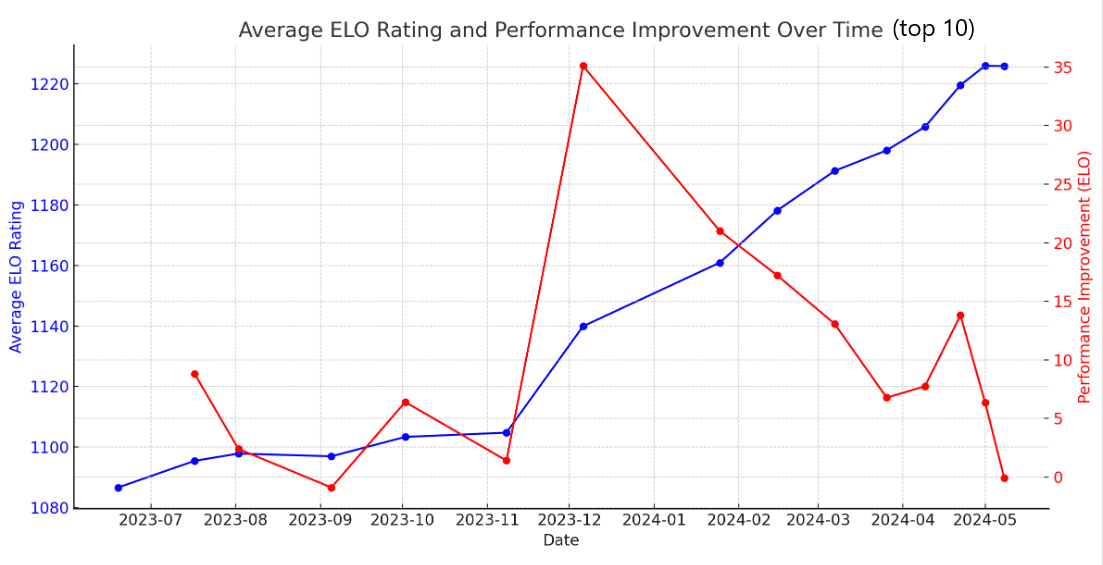

Likewise, while they're more subjective, Elo scores on the LLM leaderboard also appear to have increased fairly consistently by an average of ~20 points per month over the last year (the trend has continued beyond the graph below; the current top 10 average is at the ~1360 level one would have predicted based on a naive extrapolation of the post-2023-11 trendline below):

An argument in favor of (fanatical) short-termism?

[Warning: potentially crazy-making idea.]

Section 5 in Guth, 2007 presents an interesting, if unsettling, idea: on some inflationary models, new universes continuously emerge at an enormous rate, which in turn means (maybe?) that the grander ensemble of pocket universes consists disproportionately of young universes.

More precisely, Guth writes that, "in each second the number of pocket universes that exist is multiplied by a factor of exp{10^37}." Thus, naively, we should expect earlier points in a given pocket universe's timeline to vastly outnumber later points — by a factor of exp{10^37} per second!

(A potentially useful way to visualize the picture Guth draws is in terms of a branching tree, where for each older branch, there are many more young ones, and this keeps being true as the new, young branches grow and spawn new branches.)

If this were true, or even if there were a far weaker universe generation process to this effect (say, one that multiplied the number of pocket universes by two for each year or decade), it would seem that we should, for acausal reasons, mostly prioritize the short-term future, perhaps even the very short-term future.
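
To get a feel for the magnitudes, here is a rough sketch that works in log space (exp{10^37} is far too large to represent directly). It assumes the naive reading above, on which the weight of an earlier moment relative to a later one simply equals the growth factor of the number of pocket universes over the time between them. The function name `log10_weight_factor` is just an illustrative choice.

```python
import math


def log10_weight_factor(ln_growth_per_unit, elapsed_units):
    """Return log10 of the factor by which the number of pocket universes grows.

    ln_growth_per_unit: natural log of the growth factor per time unit
                        (e.g. ln 2 for doubling, 1e37 for exp{10^37}).
    elapsed_units:      how many time units separate the two moments.
    Assumption of this sketch: growth is a clean exponential in time.
    """
    return ln_growth_per_unit * elapsed_units / math.log(10)


# The far weaker process from the text: doubling every year, over ten years.
doubling_decade = log10_weight_factor(math.log(2), 10)

# Guth's quoted figure: a factor of exp{10^37} per second, over one second.
guth_one_second = log10_weight_factor(1e37, 1)

print(f"Doubling yearly, 10 years: factor of 10^{doubling_decade:.2f}")
print(f"Guth's rate, 1 second:     factor of 10^({guth_one_second:.3e})")
```

Even the weak doubling-per-year process gives a factor of about a thousand per decade on this reading, which is why the argument would bite well before one gets anywhere near Guth's rate.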

Guth tentatively speculates that this might be a resolution of sorts to the Fermi paradox, though he also notes that he is skeptical of the framework that motivates his discussion:

"Perhaps this argument explains why SETI has not found any signals from alien civilizations [because if there were an earlier civ at our stage, we would be far more likely to be in that civ], but I find it more plausible that it is merely a symptom that the synchronous gauge probability distribution is not the right one."

I'm not claiming that the picture Guth outlines is likely to be correct. It's highly speculative, as he himself hints, and there are potentially many ways to avoid it — for example, contra Guth's preferred model, it may be that inflation eventually stops, cf. Hawking & Hertog, 2018, and thus that each point in a pocket universe's timeline will have equal density in the end; or it might be that inflationary models are not actually right after all.

That said, one could still argue that the implication Guth explores — which is potentially a consequence of a wide variety of (eternal) inflationary models — is a weak reason, among many other reasons, to give more weight to short-term stuff (after all, in EV terms, the enormous rate of universe generation suggested by Guth would mean that even extremely small credences in something like his framework could still be significant). And perhaps it's also a weak reason to update in favor of thinking that as yet unknown unknowns will favor a short(er)-term priority to a greater extent than we had hitherto expected, cf. Brian Tomasik's discussion of how we might model unknown unknowns.

Hi Magnus, thank you for writing out this idea!

I am very encouraged (although perhaps, anthropically, I should be discouraged for not having been the first one to discover it) that I am not the only one who thought of this (also, see here).

I was thinking about running this idea by some physicists and philosophers to get further feedback on whether it is sound. It does seem like adding at least a small element of this to a moral parliament might not be a bad idea, especially considering that making it only 1% of the moral parliament would capture the vast majority of value in terms of orders of magnitude. (Indeed, if at any given moment a single person who is encountering this idea just tried to “live in the moment” or smile for a second at the moment they thought of it, and then everyone forgot about it forever, we would still capture the vast majority [again, in orders of magnitude terms] of the value of the idea; but this continues to be true in every subsequent moment.)

Anyways, thanks for posting this. I am hoping to come back to my post sometime soon to add some things to it and correct a few mistakes I think I made. Let me know if you’d like to be involved in any further investigation of this idea! By the way, here’s the version I wrote in case you are interested in checking it out.

I'm very late responding, but I have two questions about this:

1. Each new pocket universe starts "from scratch" with no humans (or planets or whatever) in it, right? It's not like the many worlds interpretation where new local branches are nearly identical to one another and to how things were seconds before, right?

2. Even if almost all of the pocket universes are young, that doesn't mean they're short-lived (or only support moral patients for a short period), right? They could still have very long futures with huge numbers of moral patients each ahead of them. In each pocket universe, couldn't targeting its far future be best (assuming risk neutral expected value-maximizing utilitarianism)? And then the same would hold across pocket universes.

    - a: If there are infinitely many pocket universes, then maybe the order of summation matters? If you first sum value across all pocket universes at each time point, and then sum over time, then maybe you get fanatical ultra-neartermism, like you suggest. If you first sum over time in each pocket universe and then across all pocket universes, then you get longtermism (or at least not ultra-neartermism).

    - b: If everything were finite, then summation order wouldn't matter, and as long as the pocket universes didn't end up disproportionately short-lived, you wouldn't get fanatical ultra-neartermism.

Thanks for your comment, Michael :)

I should reiterate that my note above is rather speculative, and I really haven't thought much about this stuff.

1: Yes, I believe that's what inflation theories generally entail.

2: I agree, it doesn't follow that they're short-lived.

"In each pocket universe, couldn't targeting its far future be best (assuming risk neutral expected value-maximizing utilitarianism)? And then the same would hold across pocket universes."

I guess it could be; I suppose it depends both on the empirical "details" and one's decision theory.

Regarding options a and b, a third option could be:

c: There is an ensemble of finitely many pocket universes wherein new pocket universes emerge in an unbounded manner for eternity, where there will always be a vast predominance of (finitely many) younger pocket universes. (Note that this need not imply that any individual pocket universe is eternal, let alone that any pocket universe can support the existence of value entities for eternity.) In this scenario, for any summation between two points in "global time" across the totality of the multiverse, earlier "pocket-universe moments" will vastly dominate. That might be an argument in favor of extreme neartermism (in that kind of scenario).
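
A toy model may make scenario c more concrete. The sketch below is purely illustrative and rests on made-up assumptions: discrete global time steps, `growth**b` new pocket universes born at step `b` (so the total roughly doubles each step when `growth=2`), and every universe surviving to the end of the window. It counts "pocket-universe moments" by age within a finite window of global time.

```python
def moments_by_age(window, growth=2):
    """Count pocket-universe moments by age over global time steps 0..window-1.

    Toy assumptions (not from any physical model): growth**b universes are
    born at step b, and none of them ends within the window.
    """
    counts = [0] * window
    for birth in range(window):
        born = growth ** birth
        # A universe born at step `birth` reaches ages 0..window-birth-1 in the window.
        for age in range(window - birth):
            counts[age] += born
    return counts


counts = moments_by_age(10)
for age, n in enumerate(counts):
    print(f"age {age}: {n} universe-moments")
```

In this toy setup each additional step of age roughly halves the count, so the youngest moments dominate any finite window even though every quantity involved is finite. Whether anything like this holds in reality is, of course, exactly what is in question.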

But, of course, we don't know whether we are in such a scenario — indeed, one could argue that we have strong anthropic evidence suggesting that we are not — and it seems that common-sense heuristics would in any case speak against giving much weight to these kinds of speculative considerations (though admittedly such heuristics also push somewhat against a strong long-term focus).

(Added a graph that visualizes the naive projection discussed above.)