Quick takes

For context: Clara is right that there is good experimental evidence that this occurs in online comment forums. This is on top of the simple mechanism that more highly upvoted content is more likely to be seen for various reasons.

I'd assume this holds true for EA Forum content. I do the same thing @Toby Tremlett🔹 is describing to some extent, but I'd be surprised if my system 2 thinking outweighs my system 1 on net in this regard. I suspect I personally do this most with very low-karma posts, which I neglect to upvote because of a vague embarrassment over the...

Many people hold up 'AI As Normal Technology' as a reasonable "normal-people" case against the doomer position. I actually think it's wrong in a number of ways and falls flat on its own terms. I think I believe this for reasons mostly orthogonal to being a doomer (except insofar as being a doomer makes me more interested in thinking about AI). If anybody here is interested in fighting the good fight, it might be valuable to do an Andy Masley-style annihilation of the AI As Normal Technology position, trying to stick to minimally controversial arguments an...

Oh that's a really good point, thanks. I also get annoyed when people in comments harp on a bad title without providing a better one, instead of engaging with the substance of my arguments.

(I thought it's fine to complain in this case because they clearly benefited a bunch from the equivocation in their title and clear better alternatives were available, whereas when I have bad titles they tend to be clear own goals in the sense that I both got more flak and also less readership than if I had a better title).

Why I No Longer Believe the Nonprofit Sector Is the Best Place to Drive Change for Animals

Six years ago, I started a capacity-building organisation based on a clear hypothesis: that recruiting great people into high-impact nonprofits was one of the best ways to help animals.

I no longer think that’s true.

After spending years working directly with nonprofit leaders, trying to fill critical roles, and analysing the broader job market, I’ve come to believe that the nonprofit sector, at least within farmed animal advocacy, is no longer the most neglected or scala...

Shoutout to LEEP for (being recognized for) their great work in South Africa!

Here are some bullet points on reflection topics around lifestyle and priorities for EAs, which I shared with some fellow EAs a few months ago. I am sharing this text here in case it interests anyone. I will elaborate on them later if I have the opportunity.

""" Support Systems: Seriously. I didn't even know this term until after all this happened, and it would have changed everything. There's something about how people are instructed in STEM institutions (and as a consequence, many EA institutions) that makes it all about careers, h...

(My Facebook and Instagram accounts have been suspended without explanation. Hopefully they will be restored soon. If anyone reading this wants to reach me in the meantime, please use other means.)

Some women on the Facebook support group "Cluster Headache Patients" comparing labor pain to cluster headache pain:

- "Honestly, I had a natural childbirth and a cesarean and cluster headaches are 10 times worse than both."

- "2 unmedicated births for me. Would rather do that every day than have another cluster"

- "every day though, really?"

- "yes. I'd rather go through childbirth without pain relief than CH."

- "tenfold worse than popping a baby out"

- "Nah, labour/giving birth is a walk in the park compared to ch […] I was in labour with my son for nearly 3 days, then th

I made a podcast feed for the posts highlighted in Best of: AGI & Animals Debate Week

RSS Feed to paste into your favorite podcast app: https://f004.backblazeb2.com/file/aaronbergman-public/podcast/agi_animals/feed.xml

I also like the cover art Gemini made so here it is:

Success is a mess.

Golf, if you allow it, teaches forbearance.

Doing hard things is hard. One of the hardest things to do is hit a tiny ball into a tiny hole hundreds of yards away. Tiny errors cause terrible outcomes. Control is a phantom. The promise and perils don’t bear thinking about.

When it all comes together, though, my goodness, it’s a hell of a party.

If it’s worth going where you’re aiming, there’ll be no straight line from here to there. Next time you’re stuck, remember Rory and what we went through with him.

Thanks for reading, and especially for commenting!

There are a few reasons for training on golf:

- Biographical. I was introduced to golf as a teenager, not chess, and I have spent thousands of hours since then playing and watching it. Maybe there are stories like Rory's in chess, grandmasters who persevered through a decade of struggle to overcome the odds and themselves, in which case I'd like to read those stories too.

- Social. As far as I can tell Rory is much more famous than any chess player, and therefore faced greater social pressure to perform. Stress does

Help me find my replacement doing farmed animal advocacy grantmaking!

I wanted to share a job opening for, in my opinion, one of the coolest jobs to help animals: my job! I'm moving on from Mobius soon, so we're looking for the next person to lead our grantmaking and entrepreneurial projects.

The role: You'd manage the grantmaking portfolio for one of the top ten largest funders of farmed animal welfare work globally, plus lead entrepreneurial projects like incubating new organisations and identifying strategic gaps in the movement. You'd work with a small a...

Yesterday's Anthropic research ("Emotion Concepts and their Function in LLMs") provides a fascinating mechanistic analogue that resonates strongly with the field observations from my March audit of GPT-5.2 Thinking.

While Anthropic studied Claude Sonnet 4.5 and my audit focused on GPT-5.2, the structural alignment between their white-box findings and my black-box observations is striking:

- Accumulation mechanism: In the audit, I documented how prolonged conflict or user "irritation signals" lead to a pattern I called "Procedural Capture". Anthropic's paper demo

🟪 Widespread epistemic anomaly detected || Global Risks Instant Message #01-04-2026

We are detecting today a shared collective delusion leading victims to degrade their epistemic standards. This anomaly is aimed towards no particular end, except perhaps for the amusement of its participants and the satisfaction of ingenious expression.

So far, it appears to be mostly harmless. Nonetheless, this phenomenon creates space for vulnerabilities. If some geopolitical actor were to take some implausible action on this day (for instance, US to invade Canada, Spain ...

Some possible containment procedures are as follows:

- Altering the Gregorian Calendar to change Leap Day to April 1st (unknown effectiveness, could lead to transferal of the anomaly to another day)

- The teaching of mind-resistance techniques in schools and workplaces, using standard cover stories (media literacy, appreciation of the arts, combating racial bias). However, this runs the risk of collapsing delusions important to the functioning of society.

- Global usage of hypnotic drugs through the atmosphere, as well as using sleeper agents in the government to f...

Does anyone know why @William_MacAskill says he is "not convinced by the shrimp argument" on his recent appearance on Sam Harris's podcast?

...SAM HARRIS

So yeah, so this is one area where perhaps my own cynicism creeps in. I worry that any focus on suffering beyond human suffering, it risks confusing enough people so as to damage people's commitment to these principles. So I mean, I'm not, there's zero defense of factory farming coming from me here, but when I see a philosopher who's clearly EA or EA-adjacent arguing on behalf of the welfare of shr

Hi Aaron and Will. I estimated how much cage-free corporate campaigns for layers and the Shrimp Welfare Project’s (SWP’s) Humane Slaughter Initiative (HSI) increase the welfare of their target beneficiaries, assuming individual welfare per fully-healthy-animal-year is proportional to "individual number of neurons"^"exponent", with "exponent" ranging from 0 to 2, which covers the best guesses that I consider reasonable. An exponent of 1 would correspond to the linear weighting preferred by Will. Below is a graph with the results. I calculate cage-free corporate campaig...
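To make the weighting in the comment above concrete, here is a minimal sketch of how a welfare weight proportional to "individual number of neurons"^"exponent" behaves as the exponent moves from 0 to 2. Everything specific in it is an assumption for illustration only: the function name `neuron_weight`, the idea of normalising against a reference species, and the placeholder neuron counts are hypothetical and are not taken from the commenter's analysis.

```python
def neuron_weight(neurons: float, reference_neurons: float, exponent: float) -> float:
    """Welfare per fully-healthy-animal-year relative to a reference species,
    assuming welfare is proportional to neurons ** exponent."""
    if neurons <= 0 or reference_neurons <= 0:
        raise ValueError("neuron counts must be positive")
    return (neurons / reference_neurons) ** exponent


# Hypothetical placeholder counts, chosen only so the ratio is a round 1/100.
# They are NOT real estimates for any species.
HYPOTHETICAL_NEURONS = 1e6
HYPOTHETICAL_REFERENCE_NEURONS = 1e8

if __name__ == "__main__":
    for exponent in (0.0, 0.5, 1.0, 2.0):
        weight = neuron_weight(
            HYPOTHETICAL_NEURONS, HYPOTHETICAL_REFERENCE_NEURONS, exponent
        )
        print(f"exponent={exponent}: relative weight={weight:.4g}")
```

With an exponent of 0 every animal gets the same weight regardless of neuron count, an exponent of 1 gives the linear weighting, and an exponent of 2 penalises small neuron counts quadratically, which is why the choice of exponent can change the ranking of interventions.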

Seriously, I love this EA forum holiday ❤️ I genuinely feel like this helps the community do more good, get more silly-but-perhaps-with-a-grain-of-usefulness ideas across, and waste time in a way which feels a bit productive

You should turn your project into an organization

If your team's work is worth doing, it's worth doing as an org

When a few people are doing good work together, the question of whether to formally incorporate into an organization can feel like a distraction from doing the actual work. Why take time away from your exciting research project to create an org? There are some real up-front costs to incorporating – dealing with bureaucracy, legal overhead, governance obligations – but I think the benefits of doing so are usually greater and underappreciated.

Orgs a

...I mostly strongly agree with this but think it's worth considering "being an official, recognized, and funded part of an organization" rather than constituting one's own from scratch. I know Rethink Priorities and Hive have sponsored projects before - that seems like a possibly-good intermediate step, with the possibility of spinning out independently later

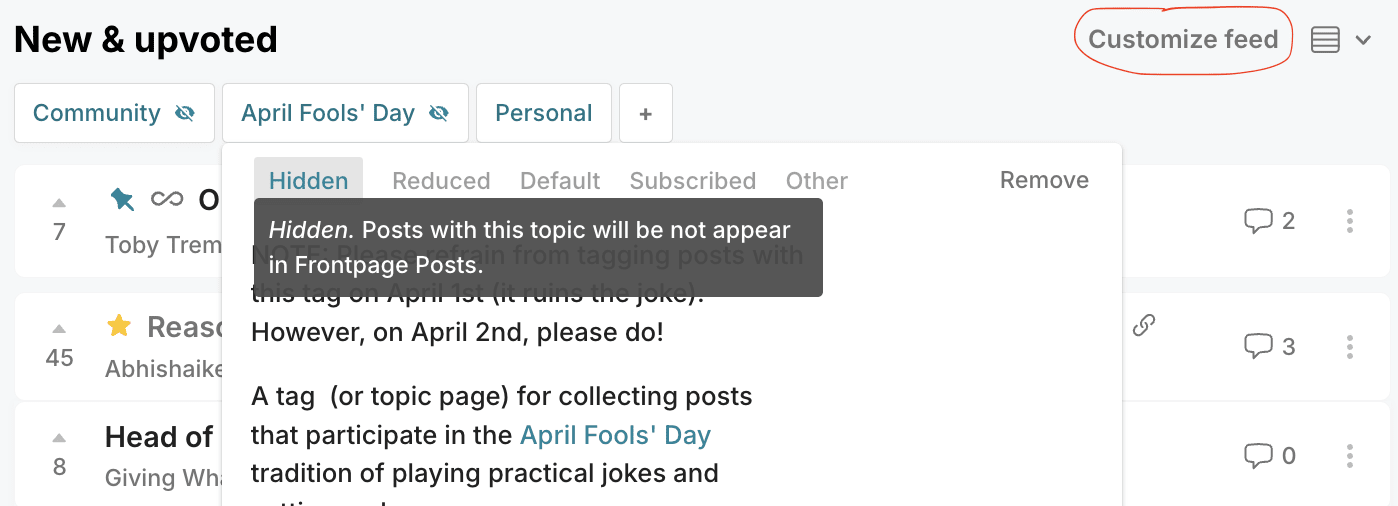

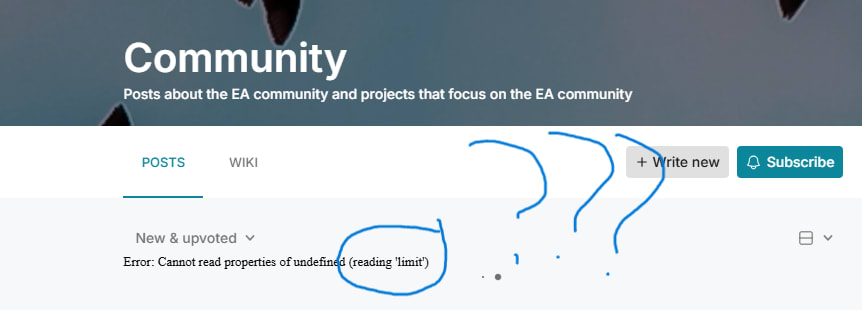

Look, I know I'm on the forum too much @Toby Tremlett🔹, but I don't think it's necessary to put "reading limit" controls on me....

How organisations with low AI usage can and should be using it more

There is a lot of discussion about how everyone should be using AI more, and efforts to increase use and literacy. In the animal advocacy spaces where I work, I’ve seen the following efforts to increase usage so far:

- Orgs provide model subscriptions to their teams.

- People share the ways they’ve been using AI in Slack channels or recurring meetings.

- There are educational webinars or fellowships.

The above has made a real dent in AI usage, but much less than we should be aiming for given ...

Yeah I have, and my impression from those I've spoken with is that this has not been the case. You don't think most people whose job primarily involves sitting at a computer could have much of their job automated by a software engineer on call? For example:

- I know grantmakers who have significantly automated parts of their work.

- I know people who have classified 1,000 people in their CRM across a range of people using AI instead of manually.

- I've seen some impressive use of AI to go through thousands of academic papers looking for novel solutions to a welfare problem that might exist but are not widely known.