NickLaing

Bio

Participation1

I'm a doctor working towards the dream that every human will have access to high quality healthcare. I'm a medic and director of OneDay Health, which has launched 53 simple but comprehensive nurse-led health centers in remote rural Ugandan villages. A huge thanks to the EA Cambridge student community in 2018 for helping me realise that I could do more good by focusing on providing healthcare in remote places.

How I can help others

Understanding the NGO industrial complex, and how aid really works (or doesn't) in Northern Uganda

Global health knowledge

Posts 29

Comments 1762

Thanks @mal_graham🔸 this is super helpful and makes more sense now. I think it would make your argument far more complete if you put something like your third and fourth paragraphs here in your main article.

And no I'm personally not worried about interventions being ecologically inert.

As a side note, it's interesting that you aren't putting much effort into making interventions happen yet - my loose advice would be to get started trying some things. I get that you're trying to build a field, but to have real-world proof of this tractability it might be better to try something sooner rather than later? Otherwise it will remain theory. I'm not too fussed about arguing whether an intervention will be difficult or not - in general I think we are likely to underestimate how difficult an intervention might be.

Show me a couple of relatively easy wins (even small-ish ones) and I'll be right on board :).

This is super cool. I especially love the invitation to creativity and blue sky thinking here. Shrimp Welfare Project and FarmKind are great examples of genuine innovation IMO so it's great to see those founders leading the charge here. Part of me wishes I...

1) Was suuuuper passionate about animal welfare

2) Wasn't 8 years into running a global health charity

;)

Thanks so much @mal_graham🔸 that's the web page, appreciate that! I understand that the WIT doesn't do all the work, but I think it was reasonable for me to assume that they were the major contributors given that they have been the team publishing the cross-cause work up to now, and were the team linked from the page.

Thanks Marcus for the reply. First, this criticism is specifically about potential preferences and bias of the researchers. With a project like this, with perhaps hundreds of junctures which require subjective decisions, I think that's a reasonable discussion to have. I don't think it's fair to ask me to shift the ground and discuss the research methodology; I'm sure there'll be plenty of great discussion about that. My point was purely about concerns over potential researcher bias.

I completely agree with your 3 points, and specifically that there are larger areas of concern than the cause area orientation of researchers - but I still think it's somewhat important.

This comment seems to twist the point I was trying to make: "More broadly, I would not consider Claude’s opinions about the priorities of our researchers to be a good method of gauging the quality of our work". I was not questioning the quality of your work; I think your work is extremely high quality. Yet even the highest quality work can go in different directions, based on the assumptions and biases of the researchers who generate it. In many imprecise fields like development, economics and political science, the highest quality researchers can argue opposite sides. Stiglitz and Friedman both produced high quality work which often ended up at almost opposite conclusions. I would put cross-cause categorisation in a similar-ish category due to the wealth of assumptions required and uncertainties to be reckoned with. So in research like this, yes, I think the make-up of the research team is important and open to scrutiny outside of objective criticism of the work itself. You might disagree with me on this one. Assuming people's backgrounds are open for discussion, I think a Claude analysis of people's previous work is reasonable, if obviously very imprecise and yes, potentially misleading.

I could be wrong here, but I feel like I may have been baited-and-switched a little on who the major contributors are here. I went to your website and just searched for the cross-cause prioritisation team, finding a page with photos and bios. You've said that the researchers I listed were not the principal researchers - then who were? That Carmen van Schoubroeck led the work isn't listed anywhere, I don't think.

I agree with the direction but not the strength of this comment: "I can never tell you with certainty no one who worked on this was not subtly unconsciously biased", and agree with your opening statement more: "I think there is not a single EA organization I would consider unbiased on this question, including ourselves". We are all biased, often more than we think. Most of us (myself included) have a cesspit of opinions and angles, despite our best efforts to the contrary. I think within EA we often overrate how objective we are, and we can only have so much objectivity based on our past experience and worldview built up over time. This is why I think for a cross-cause prioritisation exercise, it is helpful to start with a team with a wide range of prior opinions or perhaps very uncertain ones.

It's an interesting one Vasco. I prefer the current questions to uncertainty questions, as I think the current ones are more intuitive for most people. I think it's important to lean towards questions which are easier to understand, rather than the "best theoretical" questions to help differentiate. Not everyone thinks as deeply as you about these things, and I like the accessibility of the current questions.

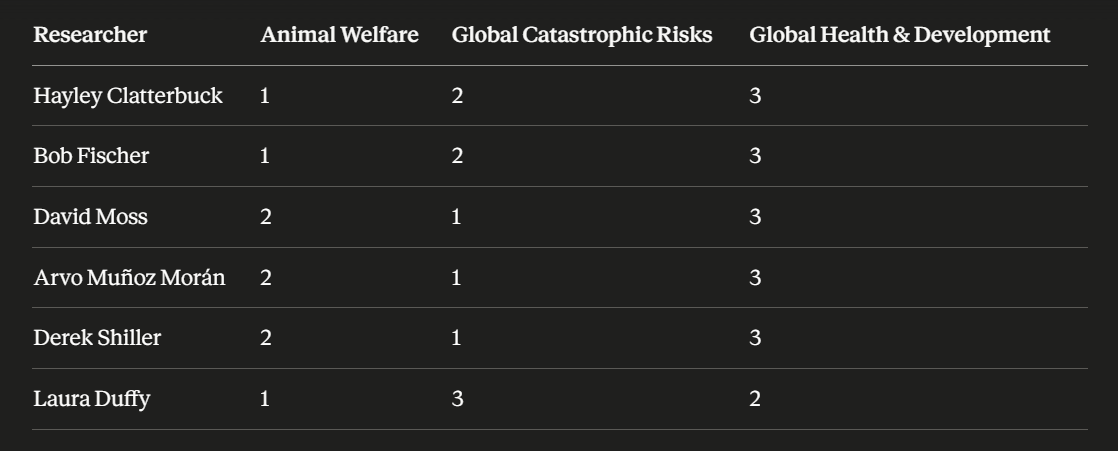

I think this is important work, but I want to flag my biggest concern about the process - the imbalance of the backgrounds of the research team and therefore potential for bias. I asked Claude to rank their areas of interest and prior work, and it shows a heavy bent towards Animal Welfare and Global Catastrophic Risk.

Half of the research team have been strong advocates for Animal Welfare work in the past, while none of the team seems to have a special interest in GHD. Two of the team were deeply involved in the Animal Moral Weights project itself.

I think the work is impressive, and it's great there is a new fund; however, I think a cross-cause research prioritisation team like this ideally would have been more balanced in makeup. Research team balance on prior opinions is especially important when the project relies on many assumptions and requires subjective assessments at many junctures. In addition, potential conflicts of interest here should probably be stated on the website and in a post like this. Now that there is donation money directly involved, I feel the stakes are higher than when the situation was purely research (although the research alone is very influential).

A couple of other less important declarations perhaps could have been made as well. Given that Lead Exposure Action Fund was the only specific GHD intervention selected, they perhaps should have mentioned that they have been commissioned to do research on lead exposure before, and also that a previous RP researcher is now a program associate at LEAF. The RP CEO was also previously a fund manager on the EA Animal Welfare Fund which allocates 13% of this fund's money.

All think tanks will have bias to some extent (political think tanks are even rated on a spectrum) and I think it's important to consider this and state where there might be potential bias.

I think it wouldn't cost much at all to put forward a pretty robust cost-effectiveness model for a CHW which rolls out a wide range of interventions. (I think Living Goods +- others might well have decent models already here?). I think you could even build this yourself? Some of the package would be easy to do because the data is there (malaria, diarrhoea, pneumonia treatment, family planning, antenatal care), while screenings and referrals are much harder to quantify and might have to be left out of the analysis pending better data.

I agree the bet argument is pretty good if the goal is government adoption. Regardless of the nuance of cost-effectiveness, CHWs will always be more cost-effective than most health things govt. could do and it would likely displace less cost-effective things. Unfortunately I don't think EA funders have seriously considered scale through govt. as something worth chasing as a bet, but I really like the idea.

Thanks for the update, and the reasons for the name change make a lot of sense.

Instinctively I don't love the new name. The word "coefficient" sounds mathsy/nerdy/complicated, while most people don't know what the word coefficient actually means. The reasoning behind the name does resonate though, and I can understand the appeal.

But my instincts are probably wrong if you've been working with an agency and the team likes it too.

All the best for the future Coefficient Giving!