Note: this post is a (minorly) edited version of a new 80,000 Hours career review.

Progress in AI — while it could be hugely beneficial — comes with significant risks. Risks that we’ve argued could be existential.

But these risks can be tackled.

With further progress in AI safety, we have an opportunity to develop AI for good: systems that are safe, ethical, and beneficial for everyone.

This article explains how you can help.

Summary

Artificial intelligence will have transformative effects on society over the coming decades, and could bring huge benefits — but we also think there’s a substantial risk. One promising way to reduce the chances of an AI-related catastrophe is to find technical solutions that could allow us to prevent AI systems from carrying out dangerous behaviour.

Pros

- Opportunity to make a significant contribution to a hugely important area of research

- Intellectually challenging and interesting work

- The area has a strong need for skilled researchers and engineers, and is highly neglected overall

Cons

- Due to a shortage of managers, it’s difficult to get jobs and might take you some time to build the required career capital and expertise

- You need a strong quantitative background

- It might be very difficult to find solutions

- There’s a real risk of doing harm

Key facts on fit

You’ll need a quantitative background and should probably enjoy programming. If you’ve never tried programming, you may be a good fit if you can break problems down into logical parts and generate and test hypotheses, are willing to try out many different solutions, and have high attention to detail.

If you already:

- Are a strong software engineer, you could apply for empirical research contributor roles right now (even if you don’t have a machine learning background, although that helps)

- Could get into a top 10 machine learning PhD, that would put you on track to become a research lead

- Have a very strong maths or theoretical computer science background, you’ll probably be a good fit for theoretical alignment research

Recommended

If you are well suited to this career, it may be the best way for you to have a social impact.

Thanks to Adam Gleave, Jacob Hilton and Rohin Shah for reviewing this article. And thanks to Charlie Rogers-Smith for his help, and his article on the topic — How to pursue a career in technical AI alignment.

Why AI safety technical research is high impact

As we’ve argued, in the next few decades, we might see the development of hugely powerful machine learning systems with the potential to transform society. This transformation could bring huge benefits — but only if we avoid the risks.

We think that the worst-case risks from AI systems arise in large part because AI systems could be misaligned — that is, they will aim to do things that we don’t want them to do. In particular, we think they could be misaligned in such a way that they develop (and execute) plans that pose risks to humanity’s ability to influence the world, even when we don’t want that influence to be lost.

We think this means that these future systems pose an existential threat to civilisation.

Even if we find a way to avoid this power-seeking behaviour, there are still substantial risks — such as misuse by governments or other actors — which could be existential threats in themselves.

There are many ways in which we could go about reducing the risks that these systems might pose. But one of the most promising may be researching technical solutions that prevent unwanted behaviour — including misaligned behaviour — from AI systems. (Finding a technical way to prevent misalignment in particular is known as the alignment problem.)

In the past few years, we’ve seen more organisations start to take these risks more seriously. Many of the leading industry labs developing AI — including Google DeepMind and OpenAI — have teams dedicated to finding these solutions, alongside academic research groups including at MIT, Oxford, Cambridge, Carnegie Mellon University, and UC Berkeley.

That said, the field is still very new. We think there are only around 300 people working on technical approaches to reducing existential risks from AI systems,[1] which makes this a highly neglected field.

Finding technical ways to reduce this risk could be quite challenging. Any practically helpful solution must retain the usefulness of the systems (remaining economically competitive with less safe systems), and continue to work as systems improve over time (that is, it needs to be ‘scalable’). As we argued in our problem profile, it seems like it might be difficult to find viable solutions, particularly for modern ML (machine learning) systems.

(If you don’t know anything about ML, we’ve written a very very short introduction to ML, and we’ll go into more detail on how to learn about ML later in this article. Alternatively, if you do have ML experience, talk to our team — they can give you personalised career advice, make introductions to others working on these issues, and possibly even help you find jobs or funding opportunities.)

Although it seems hard, there are lots of avenues for more research — and the field really is very young, so there are new promising research directions cropping up all the time. So we think it’s moderately tractable, though we’re highly uncertain.

In fact, we’re uncertain about all of this and have written extensively about reasons we might be wrong about AI risk.

But, overall, we think that — if it’s a good fit for you — going into AI safety technical research may just be the highest-impact thing you can do with your career.

What does this path involve?

AI safety technical research generally involves working as a scientist or engineer at major AI labs, in academia, or in independent nonprofits.

These roles can be very hard to get. You’ll likely need to build up career capital before you end up in a high-impact role (more on this later, in the section on how to enter). That said, you may not need to spend a long time building this career capital — we’ve seen exceptionally talented people move into AI safety from other quantitative fields, sometimes in less than a year.

Most AI safety technical research falls on a spectrum between empirical research (experimenting with current systems as a way of learning more about what will work), and theoretical research (conceptual and mathematical research looking at ways of ensuring that future AI systems are safe).

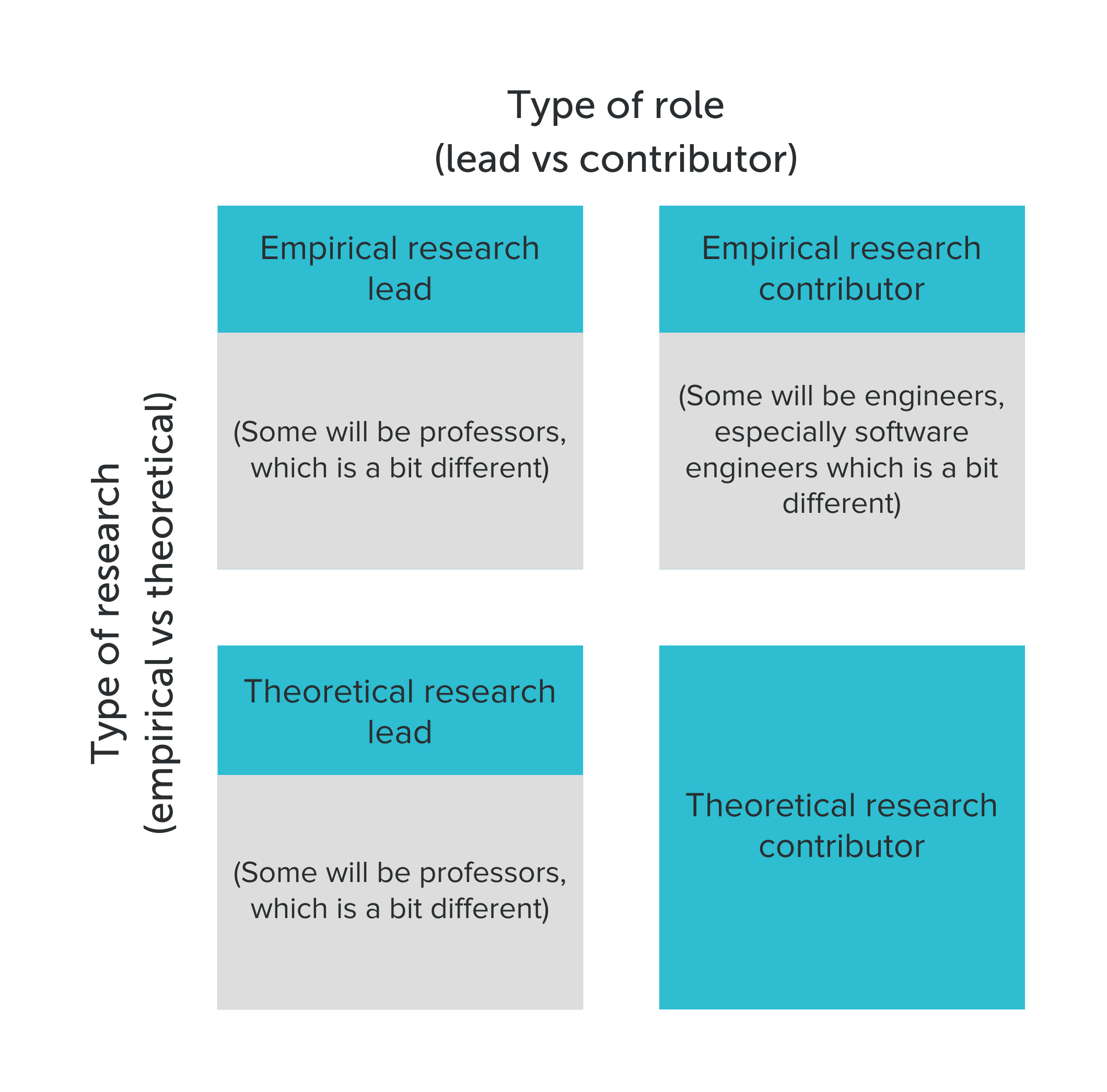

No matter where on this spectrum you end up working, your career path might look a bit different depending on whether you want to aim at becoming a research lead — proposing projects, managing a team and setting direction — or a contributor — focusing on carrying out the research.

Finally, there are two slightly different roles you might aim for:

- In academia, research is often led by professors — the key distinguishing feature of being a professor is that you’ll also teach classes and mentor grad students (and you’ll definitely need a PhD).

- Many (but not all) contributor roles in empirical research are also engineers, often software engineers. Here, we’re focusing on software roles that directly contribute to AI safety research (and which often require some ML background) — we’ve written about software engineering more generally in a separate career review.

We think that research lead roles are probably higher-impact in general. But overall, the impact you could have in any of these roles is likely primarily determined by your personal fit for the role — see the section on how to predict your fit in advance.

Next, we’ll take a look at what working in each path might involve. Later, we’ll go into how you might enter each path.

What does work in the empirical AI safety path involve?

Empirical AI safety tends to involve teams working directly with ML models to identify any risks and develop ways in which they might be mitigated.

That means the work is focused on current ML techniques and techniques that might be applied in the very near future.

Practically, working on empirical AI safety involves lots of programming and ML engineering. You might, for example, come up with ways you could test the safety of existing systems, and then carry out these empirical tests.

You can find roles in empirical AI safety in industry and academia, as well as some in AI safety-focused nonprofits.

Particularly in academia, lots of relevant work isn’t explicitly labelled as being focused on existential risk — but it can still be highly valuable. For example, work in interpretability, adversarial examples, diagnostics and backdoor learning, among other areas, could be highly relevant to reducing the chance of an AI-related catastrophe.

We’re also excited by experimental work to develop safety standards that AI companies might adhere to in the future — for example, the work being carried out by ARC Evals.

To learn more about the sorts of research taking place at labs focused on empirical AI safety, take a look at:

- OpenAI’s approach to alignment research

- Anthropic’s views on AI safety

- Redwood Research’s recent research highlights

- Publications from Google DeepMind’s safety team

While programming is central to all empirical work, generally, research lead roles will be less focused on programming; instead, they need stronger research taste and theoretical understanding. In comparison, research contributors need to be very good at programming and software engineering.

What does work in the theoretical AI safety path involve?

Theoretical AI safety is much more heavily conceptual and mathematical. Often it involves careful reasoning about the hypothetical behaviour of future systems.

Generally, the aim is to come up with properties that it would be useful for safe ML algorithms to have. Once you have some useful properties, you can try to develop algorithms with these properties (bearing in mind that to be practically useful these algorithms will have to end up being adopted by industry). Alternatively, you could develop ways of checking whether systems have these properties. These checks could, for example, help hold future AI products to high safety standards.

Many people working in theoretical AI safety will spend much of their time proving theorems or developing new mathematical frameworks. More conceptual approaches also exist, although they still tend to make heavy use of formal frameworks.

Some examples of research in theoretical AI safety include:

- Risks from learned optimisation in advanced machine learning systems by Hubinger et al.

- Eliciting latent knowledge by Christiano, Cotra and Xu.

- Formalizing the presumption of independence by Christiano, Neyman, and Xu

- Discovering agents by Kenton et al.

- Active reward learning from multiple teachers by Barnett et al.

There are generally fewer roles available in theoretical AI safety work, especially as research contributors. Theoretical research contributor roles exist at nonprofits (primarily the Alignment Research Center), as well as at some labs (for example, Anthropic’s work on conditioning predictive models and the Causal Incentives Working Group at Google DeepMind). Most contributor roles in theoretical AI safety probably exist in academia (for example, PhD students in teams working on projects relevant to theoretical AI safety).

Some exciting approaches to AI safety

There are lots of technical approaches to AI safety currently being pursued. Here are just a few of them:

- Scalably learning from human feedback. Examples include iterated amplification, AI safety via debate, building AI assistants that are uncertain about our goals and learn them by interacting with us, and other ways to get AI systems trained with stochastic gradient descent to report truthfully what they know.

- Threat modelling. An example of this work would be demonstrating the possibility of (and so allowing us to study) dangerous capabilities, like deceptive or manipulative AI systems. You can read an overview in a recent Google DeepMind paper. This work splits into work that evaluates whether a model has dangerous capabilities (like the work of ARC Evals in evaluating GPT-4), and work that evaluates whether a model would cause harm in practice (like Anthropic’s research into the behaviour of large language models and this paper on goal misgeneralisation).

- Interpretability research. This work involves studying why AI systems do what they do and trying to put it into human-understandable terms. For example, this paper examined how AlphaZero learns chess, and this paper looked into finding latent knowledge in language models without supervision. This category also includes mechanistic interpretability (for example, Zoom In: An Introduction to Circuits by Olah et al.). For more, see this survey paper, as well as Hubinger’s A transparency and interpretability tech tree and Nanda’s A Longlist of Theories of Impact for Interpretability for overviews of how interpretability research could reduce existential risk from AI.

- Other anti-misuse research to reduce the risks of catastrophe caused by misuse of systems. (We’ve written more on this in our problem profile on AI risk.) For example, this work includes training AIs so they’re hard to use for dangerous purposes. (Note there’s lots of overlap with the other work on this list.)

- Research to increase the robustness of neural networks. This work involves ensuring that the sorts of behaviour neural networks display when exposed to one set of inputs continue when they are exposed to inputs they haven’t previously seen, in order to prevent AI systems changing to unsafe behaviour. See section 2 of Unsolved Problems in ML Safety for more.

- Work to build cooperative AI. Find ways to ensure that even if individual AI systems seem safe, they don’t produce bad outcomes through interacting with other sociotechnical systems. For more, see Open Problems in Cooperative AI by Dafoe et al. or the Cooperative AI Foundation. This seems particularly relevant for the reduction of ‘s-risks.’

- More generally, there are some unified safety plans. For more, see Hubinger’s 11 possible proposals for building safe advanced AI, or Karnofsky’s How might we align transformative AI if it’s developed very soon.[2]

It’s worth noting that there are many approaches to AI safety, and people in the field strongly disagree on what will or won’t work.

This means that, once you’re working in the field, it can be worth being charitable and careful not to assume that others’ work is unhelpful just because it seemed so on a quick skim. You should probably be uncertain about your own research agenda as well.

What’s more, as we mentioned earlier, lots of relevant work across all these areas isn’t explicitly labelled ‘safety.’

So it’s important to think carefully about how or whether any particular research helps reduce the risks that AI systems might pose.

What are the downsides of this career path?

AI safety technical research is not the only way to make progress on reducing the risks that future AI systems might pose. Also, there are many other pressing problems in the world that aren’t the possibility of an AI-related catastrophe, and lots of careers that can help with them. If you’d be a better fit working on something else, you should probably do that.

Beyond personal fit, there are a few other downsides to the career path:

- It can be very competitive to enter (although once you’re in, the jobs are well paid, and there are lots of backup options).

- You need quantitative skills — and probably programming skills.

- The work is geographically concentrated in just a few places (mainly the California Bay Area and London, but there are also opportunities in places with top universities such as Oxford, New York, Pittsburgh, and Boston). That said, remote work is increasingly possible at many research labs.

- It might not be very tractable to find good technical ways of reducing the risk. Although assessments of its difficulty vary, and while making progress is almost certainly possible, it may be quite hard to do so. This reduces the impact that you could have working in the field. (That said, if you start out in technical work you might be able to transition to governance work, since that often benefits from technical training and experience with the industry, which most people do not have.)

- Relatedly, there’s lots of disagreement in the field about what could work; you’ll probably be able to find at least some people who think what you’re working on is useless, whatever you end up doing.

- Most importantly, there’s some risk of doing harm. While gaining career capital, and while working on the research itself, you’ll have to make difficult decisions and judgement calls about whether you’re working on something beneficial (see our anonymous advice about working in roles that advance AI capabilities). There’s huge disagreement on which technical approaches to AI safety might work — and sometimes this disagreement takes the form of thinking that a strategy will actively increase existential risks from AI.

Finally, we’ve written more about the best arguments against AI being pressing in our problem profile on preventing an AI-related catastrophe. If those are right, maybe you could have more impact working on a different issue.

How much do AI safety technical researchers earn?

Many technical researchers work at companies or small startups that pay wages competitive with the Bay Area and Silicon Valley tech industry, and even smaller organisations and nonprofits will pay competitive wages to attract top talent. The median compensation for a software engineer in the San Francisco Bay area was $222,000 per year in 2020.[3] (Read more about software engineering salaries).

This $222,000 median may be an underestimate, as AI roles, especially in top AI labs that are rapidly scaling up their work in AI, often pay better than other tech jobs, and the same applies to safety researchers — even those in nonprofits.

However, academia has lower salaries than industry in general, and we’d guess that AI safety research roles in academia pay less than commercial labs and nonprofits.

How to predict your fit in advance

You’ll generally need a quantitative background (although not necessarily a background in computer science or machine learning) to enter this career path.

There are two main approaches you can take to predict your fit, and it’s helpful to do both:

- Try it out: try out the first few steps in the section below on learning the basics. If you haven’t yet, try learning some Python, as well as taking courses in linear algebra, calculus, and probability. And if you’ve done that, try learning a bit about deep learning and AI safety. Finally, the best way to try this out for many people would be to actually get a job as a (non-safety) ML engineer (see more in the section on how to enter).

- Talk to people about whether it would be a good fit for you: If you want to become a technical researcher, our team probably wants to talk to you. We can give you 1-1 advice, for free. If you know anyone working in the area (or something similar), discuss this career path with them and ask for their honest opinion. You may be able to meet people through our community. Our advisors can also help make connections.

It can take some time to build expertise, and enjoyment can follow expertise, so be prepared to take some time to learn and practise before you decide to switch to something else entirely.

If you’re not sure what roles you might aim for longer term, here are a few rough ways you could make a guess about what to aim for, and whether you might be a good fit for various roles on this path:

- Testing your fit as an empirical research contributor: In a blog post about hiring for safety researchers, the Google DeepMind team said “as a rough test for the Research Engineer role, if you can reproduce a typical ML paper in a few hundred hours and your interests align with ours, we’re probably interested in interviewing you.”

- Looking specifically at software engineering, one hiring manager at Anthropic said that if you could, with a few weeks’ work, write a complex new feature or fix a very serious bug in a major ML library, they’d want to interview you straight away. (Read more.)

- Testing your fit for theoretical research: If you could have gotten into a top 10 maths or theoretical computer science PhD programme if you’d optimised your undergrad to do so, that’s a decent indication of your fit (and many researchers in fact have these PhDs). The Alignment Research Center (one of the few organisations that hires for theoretical research contributors, as of 2023) said that they were open to hiring people without any research background. They gave four tests of fit: creativity (e.g. you may have ideas for solving open problems in the field, like Eliciting Latent Knowledge); experience designing algorithms, proving theorems, or formalising concepts; broad knowledge of maths and computer science; and having thought a lot about the AI alignment problem in particular.

- Testing your fit as a research lead (or for a PhD): The vast majority of research leads have a PhD. Also, many (but definitely not all) AI safety technical research roles will require a PhD, and if they don’t, having a PhD (or being the sort of person that could get one) would definitely help show that you’re a good fit for the work. To get into a top 20 machine learning PhD programme, you’d probably need to publish something like a first-author workshop paper, as well as a third-author conference paper at a major ML conference (like NeurIPS or ICML). (Read more about whether you should do a PhD.)

Read our article on personal fit to learn more about how to assess your fit for the career paths you want to pursue.

How to enter

You might be able to apply for roles right away — especially if you meet, or are near meeting, the tests we just looked at — but it also might take you some time, possibly several years, to skill up first.

So, in this section, we’ll give you a guide to entering technical AI safety research. We’ll go through four key questions:

- How to learn the basics

- Whether you should do a PhD

- How to get a job in empirical research

- How to get a job in theoretical research

Hopefully, by the end of the section, you’ll have everything you need to get going.

Learning the basics

To get anywhere in the world of AI safety technical research, you’ll likely need a background knowledge of coding, maths, and deep learning.

You might also want to practise enough to become a decent ML engineer (although this is generally more useful for empirical research), and learn a bit about safety techniques in particular (although this is generally more useful for empirical research leads and theoretical researchers).

We’ll go through each of these in turn.

Learning to program

You’ll probably want to learn to code in Python, because it’s the most widely used language in ML engineering.

The first step is probably just trying it out. As a complete beginner, you can write a Python program in less than 20 minutes that reminds you to take a break every two hours. Don’t be discouraged if your code doesn’t work the first time — that’s what normally happens when people code!
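As a rough illustration of what such a first program could look like, here is a minimal sketch. The function name `remind` and its parameters are made up for this example, and the `sleep` and `notify` arguments exist only so the timer can be tried out without actually waiting two hours.

```python
import time


def remind(interval_seconds, repeats, sleep=time.sleep, notify=print):
    """Wait `interval_seconds`, then show a reminder; do this `repeats` times.

    `sleep` and `notify` default to the real thing (time.sleep and print),
    but can be swapped out to try the function without waiting.
    """
    for count in range(1, repeats + 1):
        sleep(interval_seconds)
        notify(f"Break {count}: stand up and stretch!")
    return repeats


TWO_HOURS = 2 * 60 * 60  # seconds

# Uncomment the next line to start a real timer with four reminders.
# remind(TWO_HOURS, repeats=4)
```

To see it working quickly, you could call `remind(2, repeats=3)`, which waits two seconds between reminders instead of two hours.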

Once you’ve done that, you have a few options:

- Teach yourself to program. Try working through a free beginner course like Automate the boring stuff with Python by Al Sweigart. There are also many great introductory computer science and programming courses online, including Udacity’s Intro to Computer Science, MIT’s Introduction to Computer Science and Programming, and Stanford’s Programming Methodology. Then, try finding something you want to build, and building it, or getting involved in an open-source project. For interview practice, try LeetCode or TopCoder, or the exercises in Cracking the Coding Interview by Gayle McDowell.

- Take a college course. If you’re in university, this is a great option because it allows you to learn programming while the opportunity cost of your time is lower. You can even consider majoring in computer science (or another subject involving lots of programming).

- Learn on the job. If you can find internships, you’ll gain practical experience and skills you otherwise wouldn’t pick up from academic degrees.

- Go to a bootcamp. Coding bootcamps are focused on taking people with little knowledge of programming to as highly paid a job as possible within a couple of months — though some claim the long-term prospects are not as good because you lack a deep understanding of computer science. Course Report is a great guide to choosing a bootcamp. Be careful to avoid low-quality bootcamps. You can also find online bootcamps — for people completely new to programming — focused on ML, like Udemy’s Python for Data Science and Machine Learning Bootcamp.

You can read more about learning to program — and how to get your first job in software engineering (if that’s the route you want to take) — in our career review on software engineering.

Learning the maths

The maths of deep learning relies heavily on calculus and linear algebra, and statistics can be useful too — although generally learning the maths is much less important than programming and basic, practical ML.

We’d generally recommend studying a quantitative degree (like maths, computer science or engineering), most of which will cover all three areas pretty well.

If you want to actually get good at maths, you have to be solving problems. So, generally, the most useful thing that textbooks and online courses provide isn’t their explanations — it’s a set of exercises to try to solve, in order, with some help if you get stuck.

If you want to self-study (especially if you don’t have a quantitative degree) here are some possible resources:

- Calculus: 3blue1brown’s video series on calculus could be a good place to start. You may also be able to follow recorded university courses: MIT’s single variable calculus (which requires only high school algebra and trigonometry) followed by MIT’s course in vector and multivariable calculus.

- Linear algebra: Again, we’d suggest 3blue1brown’s video series on linear algebra as a place to start. In his post about technical alignment careers, Rogers-Smith recommends Linear Algebra Done Right by Sheldon Axler. Finally, if you prefer lectures, try MIT’s undergraduate course in linear algebra (although note that this course assumes knowledge of multivariate calculus).

- Probability: Take a look at MIT’s undergraduate course in probability and random variables.

You might be able to find resources that cover all these areas, like Imperial College’s Mathematics for Machine Learning.

Learning basic machine learning

You’ll likely need to have a decent understanding of how AI systems are currently being developed. This will involve learning about machine learning and neural networks, before diving into any specific subfields of deep learning.

Again, there’s the option of covering this at university. If you’re currently at college, it’s worth checking if you can take an ML course even if you’re not majoring in computer science.

There’s one important caveat here: you’ll learn a huge amount on the job, and the amount you’ll need to know in advance for any role or course will vary hugely! Not even top academics know everything about their fields. It’s worth trying to find out how much you’ll need to know for the role you want to do before you invest hundreds of hours into learning about ML.

With that caveat in mind, here are some suggestions of places you might start if you want to self-study the basics:

- 3blue1brown’s series on neural networks is a really great place to start for beginners.

- When I was learning, I used Neural Networks and Deep Learning — it’s an online textbook, good if you’re familiar with the maths, with some helpful exercises as well.

- Online intro courses like fast.ai (focused on practical applications), Full Stack Deep Learning, and the various courses at deeplearning.ai.

- For more detail, see university courses like MIT’s Introduction to Machine Learning or NYU’s Deep Learning. We’d also recommend Google DeepMind’s lecture series.

PyTorch is a very common package used for implementing neural networks, and probably worth learning! When I was first learning about ML, my first neural network was a 3-layer convolutional neural network with L2 regularisation classifying characters from the MNIST database. This is a pretty common first challenge, and a good way to learn PyTorch.
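To give a sense of the scale of that first challenge, here is a minimal sketch of a small convolutional classifier in PyTorch. It is not the network described above: the names `SmallCNN` and `train_step` are invented for illustration, "3-layer" is read here as two convolutional layers plus one linear layer, and a random batch with MNIST's image shape (1×28×28, 10 classes) stands in for the real dataset so that nothing needs downloading. L2 regularisation is applied through the optimiser's `weight_decay` argument, which is one common way to do it.

```python
import torch
from torch import nn


class SmallCNN(nn.Module):
    """Three learnable layers: two convolutions and one linear classifier."""

    def __init__(self, num_classes: int = 10) -> None:
        super().__init__()
        self.features = nn.Sequential(
            nn.Conv2d(1, 16, kernel_size=3, padding=1),   # 1x28x28 -> 16x28x28
            nn.ReLU(),
            nn.MaxPool2d(2),                              # -> 16x14x14
            nn.Conv2d(16, 32, kernel_size=3, padding=1),  # -> 32x14x14
            nn.ReLU(),
            nn.MaxPool2d(2),                              # -> 32x7x7
        )
        self.classifier = nn.Linear(32 * 7 * 7, num_classes)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        x = self.features(x)
        return self.classifier(x.flatten(start_dim=1))


def train_step(model, optimizer, images, labels) -> float:
    """Run one gradient step and return the loss as a Python float."""
    model.train()
    optimizer.zero_grad()
    loss = nn.functional.cross_entropy(model(images), labels)
    loss.backward()
    optimizer.step()
    return loss.item()


torch.manual_seed(0)
model = SmallCNN()
# weight_decay adds a multiple of each parameter to its gradient,
# which acts as an L2 penalty on the parameters.
optimizer = torch.optim.SGD(model.parameters(), lr=0.01, weight_decay=1e-4)

# Random stand-in batch with MNIST's shape (assumption: no real data here).
images = torch.randn(8, 1, 28, 28)
labels = torch.randint(0, 10, (8,))
loss = train_step(model, optimizer, images, labels)
```

To train on the real digits, you would replace the random batch with a loop over a data loader for MNIST (for example, one built with the torchvision package) and call `train_step` on each batch.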

Learning about AI safety

If you’re going to work as an AI safety researcher, it usually helps to know about AI safety.

This isn’t always true — some engineering roles won’t require much knowledge of AI safety. But even then, knowing the basics will probably help land you a position, and can also help with things like making difficult judgement calls and avoiding doing harm. And if you want to be able to identify and do useful work, you’ll need to learn about the field eventually.

Because the field is still so new, there probably aren’t (yet) university courses you can take. So you’ll need to do some self-study. Here are some places you might start:

- Section 3 of our problem profile about preventing an AI-related catastrophe provides an introduction to the problems that AI safety attempts to solve (with a particular focus on alignment).

- Rob Miles’ YouTube channel is full of popular and well-explained introductory videos that don’t need much background knowledge of ML.

- AXRP – the AI X-risk Research Podcast — is full of in-depth (and enjoyable) conversations with researchers about their research.

- The courses from AGI Safety Fundamentals, in particular the AI Alignment Course, possibly followed by Alignment 201, which provide an introduction to research on the alignment problem.

- Intro to ML Safety, a course from the Center for AI Safety, focuses on withstanding hazards (“robustness”), identifying hazards (“monitoring”), and reducing systemic hazards (“systemic safety”), as well as alignment.

For more suggestions — especially when it comes to reading about the nature of the risks we might face from AI systems — take a look at the top resources to learn more from our problem profile.

Should you do a PhD?

Some technical research roles will require a PhD — but many won’t, and PhDs aren’t the best option for everyone.

The main benefit of doing a PhD is probably practising setting and carrying out your own research agenda. As a result, getting a PhD is practically the default if you want to be a research lead.

That said, you can also become a research lead without a PhD — in particular, by transitioning from a role as a research contributor. At some large labs, the boundary between being a contributor and a lead is increasingly blurry.

Many people find PhDs very difficult. They can be isolating and frustrating, and take a very long time (4–6 years). What’s more, both your quality of life and the amount you’ll learn will depend on your supervisor — and it can be really difficult to figure out in advance whether you’re making a good choice.

So, if you’re considering doing a PhD, here are some things to consider:

- Your long-term vision: If you’re aiming to be a research lead, that suggests you might want to do a PhD — the vast majority of research leads have PhDs. If you mainly want to be a contributor (e.g. an ML or software engineer), that suggests you might not. If you’re unsure, you should try doing something to test your fit for each, like trying a project or internship. You might try a pre-doctoral research assistant role — if the research you do is relevant to your future career, these can be good career capital, whether or not you do a PhD.

- The topic of your research: It’s easy to let yourself become tied down to a PhD topic you’re not confident in. If the PhD you’re considering would let you work on something that seems useful for AI safety, it’s probably — all else equal — better for your career, and the research itself might have a positive impact as well.

- Mentorship: What are the supervisors or managers like at the opportunities open to you? You might be able to find ML engineering or research roles in industry where you could learn much more than you would in a PhD — or vice versa. When picking a supervisor, try reaching out to the current or former students of a prospective supervisor and asking them some frank questions. (Also, see this article on how to choose a PhD supervisor.)

- Your fit for the work environment: Doing a PhD means working on your own with very little supervision or feedback for long periods of time. Some people thrive in these conditions! But some really don’t and find PhDs extremely difficult.

Read more in our more detailed (but less up-to-date) review of machine learning PhDs.

It’s worth remembering that most jobs don’t need a PhD. And for some jobs, especially empirical research contributor roles, even if a PhD would be helpful, there are often better ways of getting the career capital you’d need (for example, working as a software or ML engineer). We’ve interviewed two ML engineers who have had hugely successful careers without doing a PhD.

Whether you should do a PhD doesn’t depend (much) on timelines

We think it’s plausible that we will develop AI that could be hugely transformative for society by the end of the 2030s.

All else equal, that possibility could argue for trying to have an impact right away, rather than spending five (or more) years doing a PhD.

Ultimately, though, how well you, in particular, are suited to a particular PhD is probably a much more important factor than when AI will be developed.

That is to say, we think the increase in impact caused by choosing a path that’s a good fit for you is probably larger than any decrease in impact caused by delaying your work. This is in part because the spread in impact caused by the specific roles available to you, as well as your personal fit for them, is usually very large. Some roles (especially research lead roles) will just require having a PhD, and others (especially more engineering-heavy roles) won’t — and people’s fit for these paths varies quite a bit.

We’re also highly uncertain about when we might develop transformative AI. This uncertainty reduces the expected cost of any delay.

Most importantly, we think PhDs shouldn’t be thought of as a pure delay to your impact. You can do useful work in a PhD, and generally, the first couple of years in any career path will involve a lot of learning the basics and getting up to speed. So if you have a good mentor, work environment, and choice of topic, your PhD work could be as good as, or possibly better than, the work you’d do if you went to work elsewhere early in your career. And if you suddenly receive evidence that we have less time than you thought, it’s relatively easy to drop out.

There are lots of other considerations here — for a rough overview, and some discussion, see this post by 80,000 Hours advisor Alex Lawsen, as well as the comments.

Overall, we’d suggest that rather than worrying about a delay to your impact, you think about which longer-term path you want to pursue, and how the specific opportunities in front of you will get you there.

How to get into a PhD

ML PhDs can be very competitive. To get in, you’ll probably need a few publications (as we said above, something like a first-author workshop paper, as well as a third-author conference paper at a major ML conference like NeurIPS or ICML) and references, probably from ML academics. (Although publications also look good whatever path you end up going down!)

To end up at that stage, you’ll need a fair bit of luck, and you’ll also need to find ways to get some research experience.

One option is to do a master’s degree in ML, although make sure it’s a research master’s, since most ML master’s degrees primarily focus on preparation for industry.

Even better, try getting an internship in an ML research group. Opportunities include RISS at Carnegie Mellon University, UROP at Imperial College London, the Aalto Science Institute international summer research programme, the Data Science Summer Institute, the Toyota Technological Institute intern programme and MILA. You can also try doing an internship specifically in AI safety, for example at CHAI, although there are disadvantages to this approach: it may be harder to publish, and mentorship might be more limited.

Another way of getting research experience is by asking whether you can work with researchers. If you’re already at a top university, it can be easiest to reach out to people working at the university you’re studying at.

PhD students or post-docs can be more responsive than professors, but eventually, you’ll want a few professors you’ve worked with to provide references, so you’ll need to get in touch. Professors tend to get lots of cold emails, so try to get their attention! You can try:

- Getting an introduction, for example from a professor who’s taught you

- Mentioning things you’ve done (your grades, relevant courses you’ve taken, your GitHub, any ML research papers you’ve attempted to replicate as practice)

- Reading some of their papers and the main papers in the field, and mentioning them in the email

- Applying for funding that’s available to students who want to work in AI safety, and letting people know you’ve got funding to work with them

Ideally, you’ll find someone who supervises you well and has time to work with you (that doesn’t necessarily mean the most famous professor — although it helps a lot if they’re regularly publishing at top conferences). That way, they’ll get to know you, you can impress them, and they’ll provide an amazing reference when you apply for PhDs.

It’s very possible that, to get the publications and references you’ll need to get into a PhD, you’ll need to spend a year or two working as a research assistant, although these positions can also be quite competitive.

This guide by Adam Gleave also goes into more detail on how to get a PhD, including where to apply and tips on the application process itself. We discuss ML PhDs in more detail in our career review on ML PhDs (though it’s outdated compared to this career review).

Getting a job in empirical AI safety research

Ultimately, the best way of learning to do empirical research — especially in contributor and engineering-focused roles — is to work somewhere that does both high-quality engineering and cutting-edge research.

The top three labs are probably Google DeepMind (who offer internships to students), OpenAI (who have a 6-month residency programme) and Anthropic. (Working at a leading AI lab carries with it some risk of doing harm, so it’s important to think carefully about your options. We’ve written a separate article going through the major relevant considerations.)

To end up working in an empirical research role, you’ll probably need to build some career capital.

Whether you want to be a research lead or a contributor, it’s going to help to become a really good software engineer. The best ways of doing this usually involve getting a job as a software engineer at a big tech company or at a promising startup. (We’ve written an entire article about becoming a software engineer.)

Many roles will require you to be a good ML engineer, which means going further than just the basics we looked at above. The best way to become a good ML engineer is to get a job doing ML engineering — and the best places for that are probably leading AI labs.

For roles as a research lead, you’ll need relatively more research experience. You’ll either want to become a research contributor first, or enter through academia (for example by doing a PhD).

All that said, it’s important to remember that you don’t need to know everything to start applying, as you’ll inevitably learn loads on the job — so do try to find out what you’ll need to learn to land the specific roles you’re considering.

How much experience do you need to get a job? It’s worth reiterating the tests we looked at above for contributor roles:

- In a blog post about hiring for safety researchers, the DeepMind team said “as a rough test for the Research Engineer role, if you can reproduce a typical ML paper in a few hundred hours and your interests align with ours, we’re probably interested in interviewing you.”

- Looking specifically at software engineering, one hiring manager at Anthropic said that if you could, with a few weeks’ work, write a new feature or fix a serious bug in a major ML library, they’d want to interview you straight away. (Read more.)

In the process of getting this experience, you might end up working in roles that advance AI capabilities. There are a variety of views on whether this might be harmful — so we’d suggest reading our article about working at leading AI labs and our article containing anonymous advice from experts about working in roles that advance capabilities. It’s also worth talking to our team about any specific opportunities you have.

If you’re doing another job, or a degree, or think you need to learn some more before trying to change careers, there are a few good ways of getting more experience doing ML engineering that go beyond the basics we’ve already covered:

- Getting some experience in software / ML engineering. For example, if you’re doing a degree, you might try an internship as a software engineer during the summer. DeepMind offer internships for students with at least two years of study in a technical subject.

- Replicating papers. One great way of getting experience doing ML engineering is to replicate some papers in whatever sub-field you might want to work in. Richard Ngo, an AI governance researcher at OpenAI, has written some advice on replicating papers. But bear in mind that replicating papers can be quite hard: take a look at Amid Fish’s blog on what he learned replicating a deep RL paper. Finally, Rogers-Smith has some suggestions on papers to replicate. If you do spend some time replicating papers, remember that when you get to applying for roles, it will be really useful to be able to prove you’ve done the work. So try uploading your work to GitHub, or writing a blog on your progress. And if you’re thinking about spending a long time on this (say, over 100 hours), try to get some feedback on the papers you might replicate before you start. You could even reach out to a lab you want to work for.

- Taking or following a more in-depth course in empirical AI safety research. Redwood Research ran the MLAB bootcamp, and you can apply for access to their curriculum here. You could also take a look at this Deep Learning Curriculum by Jacob Hilton, a researcher at the Alignment Research Center, although it’s probably very challenging without mentorship. [4] The Alignment Research Engineer Accelerator is a programme that uses this curriculum. Some mentors on the SERI ML Alignment Theory Scholars Program focus on empirical research.

- Learning about a sub-field of deep learning. In particular, we’d suggest natural language processing (especially transformers; see this lecture as a starting point) and reinforcement learning (take a look at Pong from Pixels by Andrej Karpathy, and OpenAI’s Spinning Up in Deep RL). Try to get to the point where you know about the most important recent advances.

Getting a job in theoretical AI safety research

There are fewer jobs available in theoretical AI safety research, so it’s harder to give concrete advice. Having a maths or theoretical computer science PhD isn’t always necessary, but is fairly common among researchers in industry, and is pretty much required to be an academic.

If you do a PhD, ideally it’d be in an area at least somewhat related to theoretical AI safety research. For example, it could be in probability theory as applied to AI, or in theoretical CS (look for researchers who publish in COLT or FOCS).

Alternatively, one path is to become an empirical research lead before moving into theoretical research.

Compared to empirical research, you’ll need to know relatively less about engineering, and relatively more about AI safety as a field.

Once you’ve done the basics, one possible next step you could try is reading papers from a particular researcher, or on a particular topic, and summarising what you’ve found.

You could also try spending some time (maybe 10–100 hours) reading about a topic and then some more time (maybe another 10–100 hours) trying to come up with some new ideas on that topic. For example, you could try coming up with proposals to solve the problem of eliciting latent knowledge. Alternatively, if you wanted to focus on the more mathematical side, you could try having a go at the assignment at the end of this lecture by Michael Cohen, a grad student at the University of Oxford.

If you want to enter academia, reading a ton of papers seems particularly important. Maybe try writing a survey paper on a certain topic in your spare time. It’s a great way to master a topic, spark new ideas, spot gaps, and come up with research ideas. When applying to grad school or jobs, your paper is a fantastic way to show you love research so much you do it for fun.

There are some research programmes aimed at people new to the field, such as the SERI ML Alignment Theory Scholars Program, to which you could apply.

Other ways to get more concrete experience include doing research internships, working as a research assistant, or doing a PhD, all of which we’ve written about above, in the section on whether and how you can get into a PhD programme.

One note is that a lot of people we talk to try to learn independently. This can be a great idea for some people, but is fairly tough for many, because there’s substantially less structure and mentorship.

Recommended organisations

AI labs in industry that have empirical technical safety teams, or are focused entirely on safety:

- Anthropic is an AI safety company working on building interpretable and safe AI systems. They focus on empirical AI safety research. Anthropic cofounders Daniela and Dario Amodei gave an interview about the lab on the Future of Life Institute podcast. On our podcast, we spoke to Chris Olah, who leads Anthropic’s research into interpretability, and Nova DasSarma, who works on systems infrastructure at Anthropic.

- ARC Evals works on assessing whether cutting-edge AI systems could pose catastrophic risks to civilization, including early-stage, experimental work to develop techniques, and evaluating systems produced by Anthropic and OpenAI.

- The Center for AI Safety is a nonprofit that does technical research and promotion of safety in the wider machine learning community.

- FAR AI is a research nonprofit that incubates and accelerates research agendas that are too resource-intensive for academia but not yet ready for commercialisation by industry, including research in adversarial robustness, interpretability and preference learning.

- Google DeepMind is probably the largest and most well-known research group developing artificial general intelligence, and is famous for its work creating AlphaGo, AlphaZero, and AlphaFold. It is not principally focused on safety, but has two teams focused on AI safety: the Scalable Alignment Team, which focuses on aligning existing state-of-the-art systems, and the Alignment Team, which focuses on research bets for aligning future systems.

- OpenAI, founded in 2015, is a lab that is trying to build artificial general intelligence that is safe and benefits all of humanity. OpenAI is well known for its language models like GPT-4. Like DeepMind, it is not principally focused on safety, but has a safety team and a governance team. Jan Leike (co-lead of the superalignment team) has some blog posts on how he thinks about AI alignment.

- Ought is a machine learning lab building Elicit, an AI research assistant. Their aim is to align open-ended reasoning by learning human reasoning steps, and to direct AI progress towards helping with evaluating evidence and arguments.

- Redwood Research is an AI safety research organisation, whose first big project attempted to make sure language models (like GPT-3) produce output following certain rules with very high probability, in order to address failure modes too rare to show up in standard training.

Theoretical / conceptual AI safety labs:

- The Alignment Research Center (ARC) is attempting to produce alignment strategies that could be adopted in industry today while also being able to scale to future systems. They focus on conceptual work, developing strategies that could work for alignment and which may be promising directions for empirical work, rather than doing empirical AI work themselves. Their first project was releasing a report on Eliciting Latent Knowledge, the problem of getting advanced AI systems to honestly tell you what they believe (or ‘believe’) about the world. On our podcast, we interviewed ARC founder Paul Christiano about his research (before he founded ARC).

- The Center on Long-Term Risk works to address worst-case risks from advanced AI. They focus on conflict between AI systems.

- The Machine Intelligence Research Institute was one of the first groups to become concerned about the risks from machine intelligence in the early 2000s, and its team has published a number of papers on safety issues and how to resolve them.

- Some teams in commercial labs also do some more theoretical and conceptual work on alignment, such as Anthropic’s work on conditioning predictive models and the Causal Incentives Working Group at Google DeepMind.

AI safety in academia (a very non-comprehensive list; while the number of academics explicitly and publicly focused on AI safety is small, it’s possible to do relevant work at a much wider set of places):

- The Algorithmic Alignment Group in the Computer Science and Artificial Intelligence Laboratory at MIT, led by Dylan Hadfield-Menell

- The Center for Human-Compatible AI at UC Berkeley, led by Stuart Russell, focuses on academic research to ensure AI is safe and beneficial to humans. (Our podcast with Stuart Russell examines his approach to provably beneficial AI.)

- Jacob Steinhardt’s research group in the Department of Statistics at UC Berkeley

- The NYU Alignment Research Group, led by Sam Bowman

- David Krueger’s research group at the Computational and Biological Learning Laboratory at the University of Cambridge

- The Foundations of Cooperative AI Lab at Carnegie Mellon University

- The Future of Humanity Institute at the University of Oxford has an AI safety research group

- The Alignment of Complex Systems research group at Charles University, Prague

Learn more about AI safety technical research

Here are some suggestions about where you could learn more:

- To help you get oriented in the field, we recommend the AI safety starter pack.

- Charlie Rogers-Smith’s step-by-step guide to AI safety careers (which this article is in large part based on) provides some helpful concrete advice, including ways you might get some funding to help you move into an AI safety technical research career.

- Careers in Beneficial AI Research by Adam Gleave, CEO of FAR AI

- Our problem profile on AI risk

- This curriculum on AI safety (or, for something shorter, this sequence of posts by Richard Ngo)

- Our career review of machine learning PhDs

- Our career review of software engineering

- Our career review of working at a leading AI lab

If you prefer podcasts, there are some relevant episodes of the 80,000 Hours podcast you might find helpful:

- Dr Paul Christiano on how OpenAI is developing real solutions to the ‘AI alignment problem,’ and his vision of how humanity will progressively hand over decision-making to AI systems

- Machine learning engineering for AI safety and robustness: a Google Brain engineer’s guide to entering the field

- The world needs AI researchers. Here’s how to become one

- Chris Olah on working at top AI labs without an undergrad degree and What the hell is going on inside neural networks

- A machine learning alignment researcher on how to become a machine learning alignment researcher

- Richard Ngo on large language models, OpenAI, and striving to make the future go well

Notes and references

[1] Estimating this number is very difficult. Ideally we want to estimate the number of FTE (“full-time equivalent”) working on the problem of reducing existential risks from AI using technical methods. After making a number of assumptions, I estimated that there were 76 to 536 FTE working on technical AI safety (90% confidence). To learn more, read the section on neglectedness in our problem profile on AI, alongside footnote 3.

[2] Holden Karnofsky is the co-founder of Open Philanthropy, 80,000 Hours’ largest funder.

[3] Data from Levels.fyi (visited Jan 27, 2022).

[4] Jacob is my brother.