The potential Anthropic IPO could lead to hundreds of millions of dollars flowing into AI safety in the coming years. Without funding mechanisms that can scale, this capital will likely either go to the same few organizations already established in AI safety circles or sit frozen in donor-advised funds for lack of urgency or structural clarity. Either outcome leaves the field without a funnel for attracting new talent (and new solutions).

Even at current funding levels, we already see signs of failure:

Several AI safety funding groups have faced criticism for struggling to deploy capital at the pace their available resources would allow. Concerns include a strong dependence on networks centered in the Bay Area and London, grant processes that can take over four months, and challenges in identifying and supporting organizations beyond their existing circles. This funding is also largely concentrated in a handful of grant-making organizations such as Coefficient Giving and Future of Life Institute.

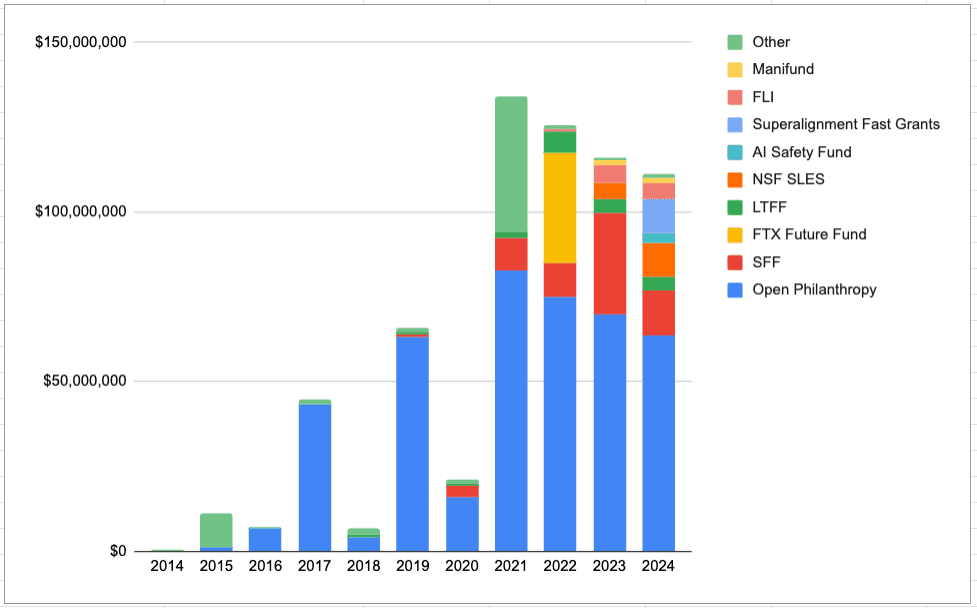

Here’s what the current funding landscape looks like:

Source: An Overview of the AI Safety Funding Situation

Sophie Kim and Ady Mehta have written the canonical version of the argument: roughly 30 to 60 people in the world do serious AI safety grantmaking, and the capital is about to scale by orders of magnitude while their numbers have not kept pace.

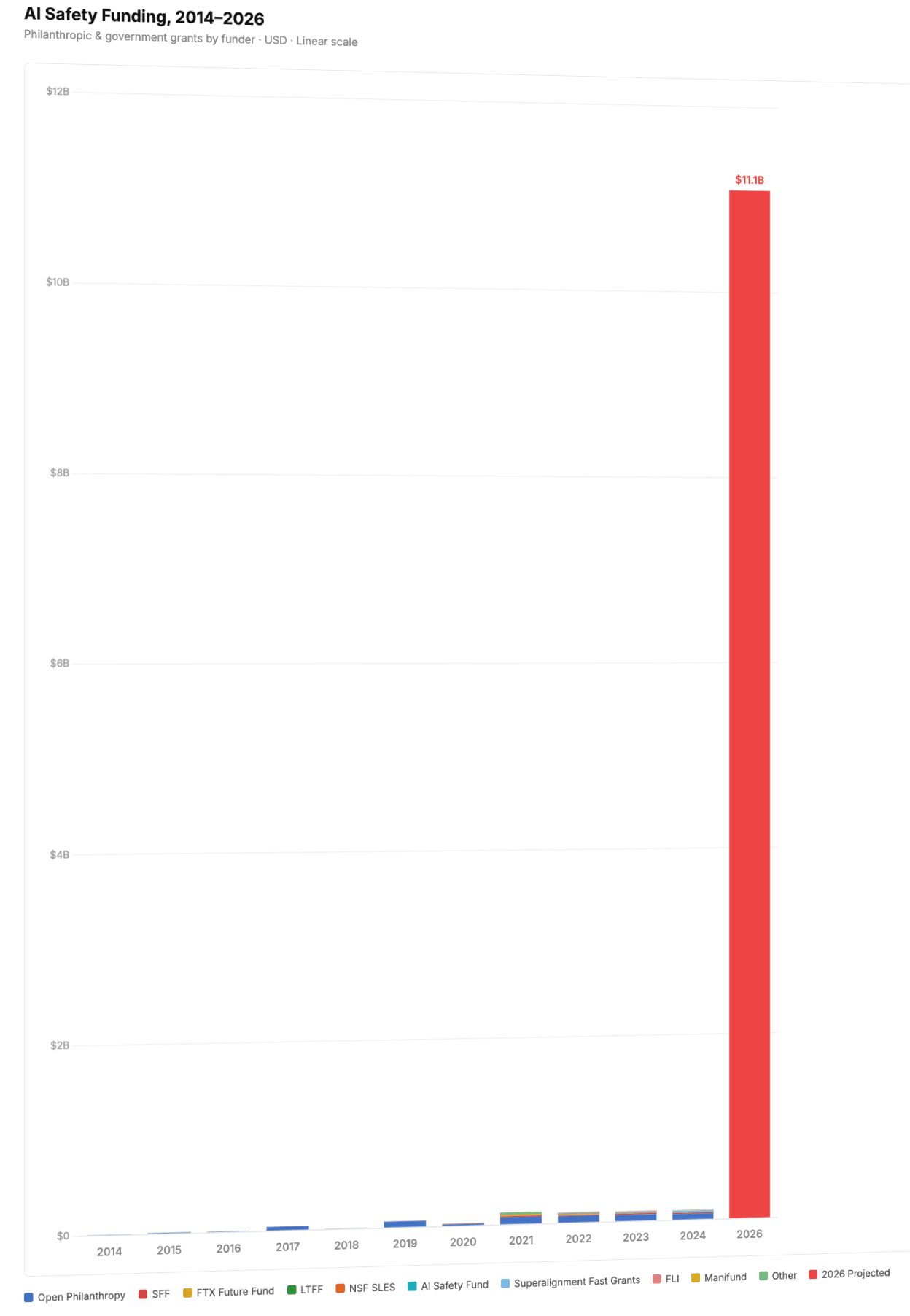

As Transformer recently reported, “All seven of its co-founders…have pledged to donate 80% of their wealth…each co-founder’s pledge would be worth roughly $5.4b, or $37.8b combined. For scale: that’s nearly ten times what Coefficient Giving.”

I’m not sure how the allocation would be decided, but even if only 30% of that were allocated to AI safety, here’s what it would look like compared to the current landscape:

Source: Claude Code generated graph

Obviously this is hypothetical, and even if this amount of funding were to flow, it would be deployed over a range of years (not in a single year), but the argument I’m trying to make is that current AI safety funding mechanisms aren’t built for the scale of capital that we might see.
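To make the hypothetical concrete, here is a rough back-of-the-envelope sketch in Python. The pledge figures are the ones quoted from Transformer above; the 30% share is my own assumption from this post, and the deployment horizons (5, 10, and 20 years) are purely illustrative, not anything announced.

```python
# Figures quoted from the Transformer report cited above.
PLEDGE_PER_COFOUNDER_B = 5.4  # USD billions per co-founder
NUM_COFOUNDERS = 7

# Assumptions for illustration only: the 30% share is this post's
# hypothetical, and the deployment horizons are made up.
AI_SAFETY_SHARE = 0.30
HORIZONS_YEARS = (5, 10, 20)


def annual_flow_b(total_b: float, share: float, years: int) -> float:
    """Average yearly flow (USD billions) if `share` of `total_b` is spread evenly over `years`."""
    if years <= 0:
        raise ValueError("years must be positive")
    return total_b * share / years


combined_b = PLEDGE_PER_COFOUNDER_B * NUM_COFOUNDERS
safety_b = combined_b * AI_SAFETY_SHARE

print(f"Combined pledges: ${combined_b:.1f}b")
print(f"Hypothetical AI safety share: ${safety_b:.2f}b")
for years in HORIZONS_YEARS:
    flow = annual_flow_b(combined_b, AI_SAFETY_SHARE, years)
    print(f"  spread evenly over {years} years: ${flow:.2f}b per year")
```

Under these assumptions the AI safety share comes to about $11.3b, and even spread evenly over twenty years that is still more than half a billion dollars a year.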

I want to explore the bigger problem.

The wrong problem statement

If we somehow tripled the number of AI safety grantmakers tomorrow, we would still be deploying capital through a mechanism that, by its nature, scales with labor instead of with money.

The bottleneck isn’t only that we need more grantmakers. The bottleneck is that grantmaking, as a mechanism, is fundamentally push-driven: a small number of people with discretion deciding ex ante where capital should go. Push mechanisms work brilliantly for high-trust, small-N decisions. They work poorly when you suddenly need to deploy ten billion dollars and the talent pool you want to fund is mostly people you don’t yet know exist.

The interesting question isn’t “how do we hire faster?”

It’s: what does pull-driven AI safety funding look like, and why don’t we have any of it at scale?

Push vs. pull

A pull mechanism doesn’t ask “who should we fund?” It asks “what outcome do we want, and how much will we pay for it?” Then it lets anyone in the world qualify. The labor cost per dollar deployed collapses. The talent pool widens by orders of magnitude. And, crucially, the result becomes legible in a way that pre-allocated grants almost never are.

Three case studies make the point.

CASE STUDY 1: XPRIZE: $10M prize, $100M invested, an entire industry

Peter Diamandis launched the Ansari XPRIZE in 1996: $10 million to the first private team to fly a three-passenger vehicle to space twice within two weeks. By 2004, 26 teams from 7 countries had spent more than $100 million chasing a $10 million prize. SpaceShipOne won. The technology was licensed by Richard Branson to start Virgin Galactic. Modern commercial spaceflight was significantly catalyzed by the Ansari XPRIZE.

That’s a 10x leverage ratio on the prize purse, and it ignores the long-tail value of an entire industry being legibly born.

Two decades later, XPRIZE ran the same play at higher stakes with the Musk Foundation’s $100M Carbon Removal prize. The numbers are extraordinary. Over four years, the competition pulled in 1,300 teams from 88 countries. Participating companies raised $3.3 billion in capital across the prize period, 33 times the prize purse. The 136 distinguished teams collectively removed 243,552 tonnes of CO2 and sold 9.4 million tonnes of carbon credits. Mati Carbon, the grand prize winner, deploys enhanced rock weathering on smallholder farms in India, turning a fringe geochemistry idea into a credible, investable industry.

The actual product was a category-defining event that pulled venture capital, public attention, scientific talent, and policy interest into a field that had previously been niche. The prize money was the smallest part of what got deployed.

This is what pull-driven funding looks like at scale. You set a goal that’s hard but legible. You commit money for hitting it. The world brings you talent you’ve never heard of.
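The leverage figures above are simple ratios of capital mobilized to prize purse. A minimal sketch of that arithmetic, using only the numbers quoted in this section (the function name `leverage` is my own, and the Ansari figure is a lower bound since teams spent "more than" $100 million):

```python
def leverage(capital_mobilized_usd: float, prize_purse_usd: float) -> float:
    """Dollars of outside capital mobilized per dollar of prize purse."""
    if prize_purse_usd <= 0:
        raise ValueError("prize purse must be positive")
    return capital_mobilized_usd / prize_purse_usd


# (capital mobilized, prize purse) in USD, as quoted in this post.
cases = {
    "Ansari XPRIZE": (100e6, 10e6),          # team spending, lower bound
    "XPRIZE Carbon Removal": (3.3e9, 100e6),  # capital raised by participants
}

for name, (mobilized, purse) in cases.items():
    print(f"{name}: {leverage(mobilized, purse):.0f}x the purse")
```

Note that the two ratios measure slightly different things (team spending in one case, capital raised in the other), so they are comparable only as rough orders of magnitude.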

CASE STUDY 2: DARPA Grand Challenge: how a $2M prize created Waymo

In 2004, DARPA offered $1 million to whoever could build a self-driving car capable of completing a 142-mile desert course. Every single entrant crashed, broke down, or caught fire. The prize went unclaimed. A day later, DARPA announced a second challenge with the purse doubled to $2 million. In 2005, Stanford’s “Stanley” finished that year’s 132-mile course in under seven hours. Five vehicles completed it.

The competition became a proving ground for early self-driving talent: Stanford team lead Sebastian Thrun and Carnegie Mellon’s Chris Urmson later founded Google’s self-driving car project, which evolved into Waymo. While not the sole cause, the DARPA Grand Challenge is widely credited with catalyzing the modern autonomous vehicle industry. It birthed an industry now valued in the hundreds of billions.

The point isn’t that prizes always work. The Google Lunar XPRIZE expired with no winner. The XPRIZE Feed the Next Billion judges declined to award a grand prize because no team met the bar. The point is that even the failed prizes generate community, talent migration, and investment that vastly exceeds the purse. Teams in the Lunar XPRIZE invested over $420 million collectively. Some of those teams went on to land actual spacecraft on the moon for actual NASA contracts a decade later.

CASE STUDY 3: Advance Market Commitments: paying for outcomes, not effort

In 2007, five governments and the Gates Foundation committed $1.5 billion to an Advance Market Commitment for pneumococcal vaccines. The program guaranteed that if manufacturers supplied vaccines meeting predefined safety and efficacy standards for low-income countries, donors would subsidize the initial doses by topping up the price.

By 2020, three vaccines qualified, more than 150 million children had been immunized, and the pilot is estimated to have saved roughly 700,000 lives. By 2024, the program had immunized over 225 million children. The “tail price” for low-income countries fell to $2 to $3 per dose, against $50 to $100 in high-income markets.

AMCs are a different shape of pull mechanism than prizes. Prizes pay for the first solution to qualify. AMCs commit to buy at scale. The shared logic is that funders specify the outcome and let providers compete to deliver it, instead of pre-selecting providers and hoping they figure it out.
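The difference in payout shape can be sketched in a few lines of Python. This is a toy model under my own assumptions, not how any real AMC or prize is administered: the class and function names are hypothetical, and the fund size, top-up amount, and deliveries are made-up numbers.

```python
from dataclasses import dataclass


@dataclass
class AdvanceMarketCommitment:
    """Toy AMC: donors top up the price of every qualifying unit until the fund runs out."""
    fund: float             # total donor money committed to top-ups
    top_up_per_unit: float  # subsidy paid on top of what buyers pay
    paid_out: float = 0.0

    def settle(self, units: int, meets_spec: bool) -> float:
        """Return the subsidy paid for one delivery; non-qualifying product earns nothing."""
        if not meets_spec or units <= 0:
            return 0.0
        remaining = self.fund - self.paid_out
        subsidy = min(units * self.top_up_per_unit, remaining)
        self.paid_out += subsidy
        return subsidy


def prize_payout(purse: float, entrants: list[tuple[str, bool]]) -> dict[str, float]:
    """Toy prize: the whole purse goes to the first entrant (in finishing order) that qualifies."""
    for name, meets_spec in entrants:
        if meets_spec:
            return {name: purse}
    return {}  # nobody met the bar, so the purse stays unclaimed


# Hypothetical numbers throughout.
amc = AdvanceMarketCommitment(fund=1000.0, top_up_per_unit=3.5)
payments = [
    amc.settle(units=100, meets_spec=True),   # qualifies, fully subsidized
    amc.settle(units=50, meets_spec=False),   # fails the spec, earns nothing
    amc.settle(units=400, meets_spec=True),   # qualifies, capped by the remaining fund
]
winner = prize_payout(10.0, [("team_a", False), ("team_b", True), ("team_c", True)])
```

In the toy prize only `team_b` is paid, even though `team_c` also qualifies. In the toy AMC every qualifying delivery is paid until the committed fund is exhausted, which is what lets multiple suppliers plan around the same commitment.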

The model has already crossed over from health to climate. In 2022, Stripe’s Nan Ransohoff launched Frontier Climate, an AMC committing to buy $925 million of permanent carbon removal between 2022 and 2030.

The basic insight, that you can manufacture demand for technologies that don’t yet exist by promising to pay if they do, applies in any field where the bottleneck is risk-adjusted incentive to build, not raw research.

Additional case: Y Combinator: programmatic, not bespoke

The other relevant case study isn’t a prize at all. It’s the YC model. Since 2005, Y Combinator has funded more than 5,600 companies. Each batch is now 250 to 300 startups. The acceptance rate is below 1%. Each company gets a standard $500K deal. Combined portfolio valuation is over $600 billion.

What’s interesting is the operational structure. YC has a relatively small core of partners. They process tens of thousands of applications per batch through a programmatic, repeatable evaluation pipeline. The deal terms are standardized. The diligence is light by venture standards. The selection signal comes from a combination of structured interviews, founder track record, and intrinsic project quality, evaluated quickly and at scale.

Compare this to AI safety. Coefficient Giving’s technical AI safety team had three grant investigators evaluating $140M+ in grants in 2025. The unit economics here are: roughly $47 million in grants per investigator, with bespoke evaluation and long turnaround (relative to a model like YC). YC’s economics are: roughly $500K per company across thousands of companies, with a fixed turnaround of weeks.

I’m not arguing AI safety should adopt YC’s investment thesis. These are fundamentally different spaces, with one being for-profit and the other largely non-profit. I’m arguing it should adopt, or at least learn from, YC’s deployment architecture: programmatic terms, batch processing, fast standardized decisions, and most importantly, a permanent ongoing operation that the field knows exists and applies to without negotiating from scratch every time.

There are some early-stage versions of this in safety: Seldon Lab and Catalyze Impact are two popular ones that come to mind. They are tiny relative to the wave of capital coming.
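For anyone who wants to check the unit economics, here is the arithmetic as a small Python sketch. It uses only the figures quoted above and treats "$140M+" as a lower bound; the variable names are my own, and the comparison is deliberately crude because a grant dollar and an investment dollar are not the same thing.

```python
# Figures as quoted in this post; "$140M+" is treated as a lower bound.
CG_GRANTS_USD = 140e6   # technical AI safety grants evaluated in 2025
CG_INVESTIGATORS = 3    # grant investigators on the team
YC_DEAL_USD = 500e3     # standard YC deal per company


def dollars_per_evaluator(total_usd: float, evaluators: int) -> float:
    """Capital that each evaluator is responsible for deploying."""
    if evaluators <= 0:
        raise ValueError("evaluators must be positive")
    return total_usd / evaluators


per_investigator = dollars_per_evaluator(CG_GRANTS_USD, CG_INVESTIGATORS)
yc_deals_equivalent = per_investigator / YC_DEAL_USD

print(f"Grants per investigator: ${per_investigator / 1e6:.1f}M")
print(f"Equivalent number of standard YC deals: {yc_deals_equivalent:.0f}")
```

Put differently, each investigator carried a volume equal to roughly 93 standard YC deals, but evaluated through a bespoke process instead of a standardized one.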

The gap

Stack the case studies up against AI safety today and the gap is glaring.

Increasingly, the scarce thing in AI safety isn’t money. It isn’t even grantmakers, though more would help. It’s deployment surface area. There aren’t enough places where capital can land productively without going through a person.

What to actually build

If I had a real share of the Anthropic IPO wave to direct, I would not give all of it to existing grantmakers, however good they are. I would put a significant fraction into pull mechanisms that don’t exist yet. Here are some concrete pathways:

A $100M+ AI Safety XPRIZE equivalent. Pick a hard, legible target. This is the kind of thing that would put safety on the front page in the way the Ansari prize put commercial spaceflight on the front page.

An AMC for AI safety infrastructure. There are several products that current funding mechanisms struggle to incentivize because they’re awkwardly between research and product: scalable interpretability tooling (I know Goodfire is already on this), third-party audit pipelines, secure compute clusters with verified safety properties. An AMC commits something like, “if a vendor builds a tool meeting this spec, donors will top up the price for the first $X of usage by qualifying labs.”

A YC-scale safety accelerator. Pair it with a global hackathon series, run in 20+ cities, designed to surface builder talent that would never apply to a US grant program. Talent trumps everything, and the best talent is always being competed for. AI safety is structurally bad at this and shouldn’t be.

A bounty stack for specific technical results. Smaller than a prize, more frequent. $50K to $500K bounties on a rolling basis for specific deliverables: a verified circuit, a reproduction of a paper’s central claim, a working benchmark.

These mechanisms don’t replace grantmakers. They sit alongside grantmakers and absorb the kinds of capital that grantmakers can’t deploy fast enough.

The marketing problem solves itself

There’s a structural critique of AI safety I want to flag, briefly, because pull mechanisms partially solve it.

The field operates in silos. Funding moves through trust networks, which are illegible by design, which means the field’s actual achievements are illegible too. This is a marketing problem, but it’s also an epistemics problem. You cannot run a healthy field on private knowledge alone.

Prizes fix this almost as a side effect. The Ansari XPRIZE was a media event before it was a technology achievement. When you build a pull mechanism, you build a story arc, with stakes, characters, and a finish line. AI safety lacks a public story arc. Funding mechanisms that double as cultural infrastructure are unusually valuable here.

Closing

The argument is simple. The capital wave coming to AI safety will exceed the field’s grantmaking capacity. The standard response is “hire more grantmakers.” This is correct and insufficient. The deeper move is to build mechanisms that deploy capital without proportionally requiring grantmaker time, by paying for outcomes instead of paying for people.

Building that surface area is, plausibly, the highest-leverage thing anyone in this field can do over the next eighteen months.

If you have a vision for one of these mechanisms and you’ve been waiting for permission, this is it. Go build it.

NOTE: This post was submitted to Manifund’s “What systems might manage the coming torrent of funding?” essay competition.

Also note that I am not an expert in fund management, and I have limited exposure to XPRIZE and some of the other sources mentioned. The goal of this blog is to introduce a funding methodology that I found interesting, and to back it with promising case studies that show its success.

AI was used in researching and structuring parts of the blog, but all sources were double-checked, and the AI-suggested structure was taken as inspiration as I wrote this blog.

Roughly 10-15% of the blog was AI-generated, but it was edited thoroughly to ensure accuracy and clear communication.