Note: This post was crossposted from Planned Obsolescence by the Forum team, with the author's permission. The author may not see or respond to comments on this post.

Most new technologies don’t accelerate the pace of economic growth. But advanced AI might do this by massively increasing the research effort going into developing new technologies.

What will happen to economic growth once AI has made us all obsolete? Economists are often skeptical of big effects.

One reason they give is that new technologies typically don’t accelerate economic growth. Instead, they typically cause a one-time gain in economic output, and then growth continues at its normal rate.

For example, Bryan Caplan, an econ prof at GMU, recently tweeted:

> Tech moved 10x faster than I expected in the last year…
>
> Economic effects will be modest & gradual. Even electricity took decades to make a huge difference…
>
> What I doubt is that any one new tech will raise growth by even 1 percentage-point per year.

This dynamic has played out over the past 50 years. We developed computers and the internet, but economic growth didn’t speed up, and if anything it got slower.

I think this is the right way to understand the economic impact of current AI. GPT-4 will raise productivity in many sectors as it is gradually adopted across the economy, but it won’t permanently accelerate economic growth by itself.

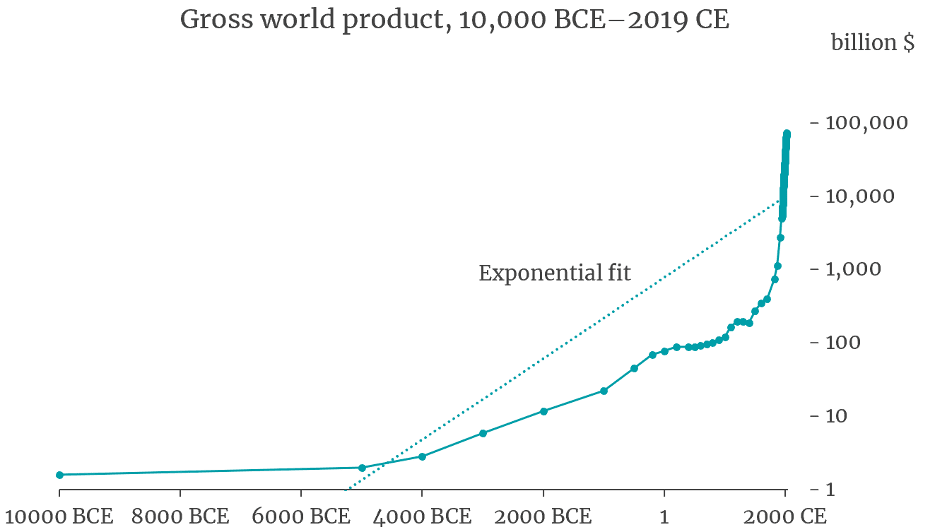

But this doesn’t mean it’s impossible to ever accelerate economic growth. In fact, economic growth has become much faster over the past 2000 years. Over the last few decades, the global economy has grown at about 3% per year. Around 1800 it grew more slowly, at about 1% per year. And earlier still growth was slower again, below 0.1% per year if you go back far enough.
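To make the gap between these growth rates concrete, here is a minimal sketch that converts each rate quoted above into a doubling time. The function name and the labels are illustrative, and it assumes constant compound growth, which is a simplification.

```python
import math

def doubling_time_years(annual_growth_rate: float) -> float:
    """Years for output to double at a constant compound annual growth rate."""
    return math.log(2) / math.log(1 + annual_growth_rate)

# Growth rates quoted in the paragraph above. The 0.1% figure is an upper
# bound for the distant past, so its doubling time is a lower bound.
rates = [
    ("recent decades", 0.03),
    ("around 1800", 0.01),
    ("distant past (at most)", 0.001),
]

for label, rate in rates:
    years = doubling_time_years(rate)
    print(f"{label}: {rate:.1%} per year, doubling in about {years:.0f} years")
```

At 3% per year the economy doubles roughly every 23 years; at 0.1% per year a single doubling takes nearly seven centuries.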

So, if new technologies don’t ever really accelerate economic growth, why is growth so much higher today than it was 2000 years ago? The consensus view in economics is that modern growth is so fast because we put continual effort into innovation[1]. We deliberately invest in R&D to invent new technologies, we put effort into making our manufacturing processes more efficient, we design supply chains to efficiently distribute new technologies quickly across the economy, and so on.

The world is collectively putting more effort into innovation now compared to 2000 years ago for two main reasons: we have more people overall, and a larger fraction of those people are working on developing new technologies.

- On the first point: The global population is about thirty times larger than it was 2000 years ago, so there are more people who can potentially come up with ideas for new technologies, and more people who can work to make them a reality.

- On the second point: a larger fraction of the population today specializes in R&D for new technologies.

  - One big reason for this is better education: 2000 years ago almost nobody received an academic education; today, mass education means that a larger fraction of the population has the background skills needed to contribute to technology R&D.[2]

  - Another major reason is better institutions for encouraging and enabling innovation. For example, in the past you had to be independently wealthy to be an inventor, because you had to fund your own research. But today, investment markets and government grants will often finance promising ideas, so you can try to invent new technologies even if you couldn’t fund all the research yourself.

Today about 20 million people work in R&D worldwide.[3] Two thousand years ago the effort going into research was much smaller, I’d guess by a factor of 1000.[4] Economic growth is much faster today than it was 2000 years ago not because of any single new technology, but because we are putting so much more effort into generating a steady stream of new innovations.

I think future AI might massively increase the world’s innovation efforts again, and thereby accelerate economic growth. Rather than being “just one more technology”, it might massively increase the pace at which humanity develops new technologies.

How much might future AI increase the world’s innovation efforts?

As I discussed in a previous post, once we develop AI that is “expert human level” at AI research, it might not be long before we have AI that is way beyond human experts in all domains. That is, AI that is way better than the best humans at thinking of new ideas, designing experiments to test those ideas, building new technologies, running organizations, and navigating bureaucracies.[5]

What’s more, because it takes so many more computer chips to train powerful AI than to run it, once we’ve trained these superhuman AIs we would potentially have enough computation to run them on billions of tasks in parallel.[6] There could be massive research organizations where AIs manage other AIs to conduct millions of research projects in parallel. And these AIs could innovate tirelessly day and night.[7]

As well as having superhuman intelligence, these AIs could think much more quickly than humans. ChatGPT Turbo can already write ~800 words per minute, whereas humans typically write about 40 words per minute. So AI can already write ~20X faster than humans. In just one day, each AI could potentially think as many thoughts as a human thinks in a month.[8]

My best guess is that AI this powerful would increase the world’s innovative efforts by more than 100X. Perhaps, like before, this would significantly accelerate economic growth.

In standard neoclassical models, growth is ultimately driven by better technology, which those models assume improves exponentially. Semi-endogenous models go further in modeling technological progress as resulting from targeted R&D efforts. Other models take a different approach and represent technological progress as driven by “learning by doing”. In all these models, growth is ultimately driven by innovation.

There are some growth models where growth is ultimately driven by capital accumulation rather than technological progress. But these aren’t particularly popular and they must deny that there are diminishing returns to capital on the current margin. Interpreted at face value, they imply that developed countries became richer over the past 100 years solely by producing more of the goods that already existed 100 years ago, rather than by developing higher quality goods and new technologies.

I do think standard growth models miss part of the picture, which is that growth in GDP/capita has been in part driven by one-time changes like increased workforce participation by women. ↩︎

Better education also means that the people doing R&D are more skilled on average. ↩︎

OECD data from 2015 estimates that the global workforce in science and engineering is 7 million, though this omits India and Brazil. A recent paper estimates ~20 million full-time equivalents do R&D worldwide. ↩︎

Why a factor of 1000? Population was ~30X lower, and various data sources suggest research concentration in 1800 was 30X lower than today (see the subsection “Data on the research concentration in 1800” in this report). Combining those factors, the number of researchers 2000 years ago was lower than today by a factor of 30*30 = ~1000X.

In fact, the fraction of people doing research 2000 years ago was likely lower than in 1800, suggesting an even bigger difference than 1000X. On the other hand, research effort 2000 years ago may have come more from many people making small innovations in their personal workflows than from full time researchers. ↩︎

There are some innovative activities that disembodied AI couldn’t automate, because they require interacting with the physical world. This could significantly limit AI’s effect on economic growth. On the other hand, AI might design robots that can do all the physical tasks that humans can do. Then AIs could control these robots remotely and perform all the tasks involved in innovation. ↩︎

Of course, we don’t know how much compute superhuman AI would take to train or to run. To guess at this, I estimated how many tasks GPT-8 could perform in parallel using only the computer chips needed to train it.

In a previous post I estimated that GPT-4 could perform 300,000 tasks in parallel with the compute used to train it. Compared to GPT-4, I assumed that GPT-8 would have 10,000 times as many parameters and need 100 million times as much computing power to train (in line with the Chinchilla scaling law). This implies that GPT-8 could perform 10,000X (= 100 million/10,000) as many tasks in parallel. I.e. 3 billion (= 300,000 * 10,000) tasks. See calc. ↩︎

What’s more, the size of this AI workforce could grow rapidly over time. AI could work to increase the number of AIs and how smart they are by designing better AI algorithms, designing better AI chips, and investing more money to build more AI chips. Already AI algorithms are becoming about twice as efficient each year, AI chips are becoming twice as efficient every ~2-3 years, and investments in AI are growing quickly. If this pace of improvement keeps up, the size of the AI workforce would more than double every year! This fast-growing workforce could innovate quickly despite ideas becoming harder to find. ↩︎
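The arithmetic in the two footnotes above can be sketched as follows. This is a rough illustration, not the author's linked calculation: the names are hypothetical, the training-compute multiplier is taken to be the square of the parameter multiplier (one reading of the Chinchilla scaling law, under which training tokens scale in proportion to parameters), and efficiency gains are assumed to multiply together and translate one-for-one into more AI copies. Investment growth is left out.

```python
# Figures quoted in the footnotes above; names are illustrative.
GPT4_PARALLEL_TASKS = 300_000
PARAM_MULTIPLIER = 10_000

# Assumption: compute-optimal training compute scales with params * tokens,
# and tokens scale with params, so compute scales with params squared.
TRAIN_COMPUTE_MULTIPLIER = PARAM_MULTIPLIER ** 2  # 100 million

def parallel_tasks(base_tasks: int, compute_mult: int, param_mult: int) -> int:
    """Tasks runnable on the training chips.

    Training compute grows by compute_mult, while the cost of running one
    copy grows with its parameter count, so copies scale by the ratio.
    """
    return base_tasks * compute_mult // param_mult

def annual_growth_factor(algo_doubling_years: float, chip_doubling_years: float) -> float:
    """Yearly multiplier on workforce size from efficiency gains alone."""
    return 2 ** (1 / algo_doubling_years) * 2 ** (1 / chip_doubling_years)

gpt8_tasks = parallel_tasks(GPT4_PARALLEL_TASKS, TRAIN_COMPUTE_MULTIPLIER, PARAM_MULTIPLIER)
print(f"GPT-8 parallel tasks: {gpt8_tasks:,}")

for chip_years in (2, 3):
    factor = annual_growth_factor(1, chip_years)
    print(f"chips double every {chip_years} years: workforce x{factor:.2f} per year")
```

Under these assumptions the workforce grows by roughly 2.5X to 2.8X per year before counting any extra investment, which is consistent with "more than double every year".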

A previous footnote argued that if we took the computer chips that were used to train superhuman AI and used them to run copies of the superhuman AI, we could run 3 billion copies in parallel. They could work tirelessly day and night, rather than the ~8 hours/day from human workers, which increases the size of the effective AI workforce to 9 billion.

If 2 billion out of these 9 billion AIs work on innovation, that is already a 100X increase on the current size of the R&D workforce (~20 million – see previous footnote). But there are two reasons the increase in innovative effort will be bigger than this. Firstly, each superhuman AI is much smarter and more productive than the best R&D workers today. This is a massive effect, bigger than turning every scientist alive today into a top performer in their field. Secondly, there’s a large gain in productivity from the AIs being able to think faster. Rather than having 2 billion AIs thinking at human speed, we could have 100 million AIs thinking at 20X human speed. ↩︎
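The chain of estimates in this footnote can be checked with a short sketch. All inputs are the figures quoted in the footnotes; the variable and function names are illustrative, and treating one 20X-speed AI as equivalent to 20 human-speed AIs is a simplifying assumption.

```python
# Figures quoted in the footnotes above; names are illustrative.
PARALLEL_COPIES = 3_000_000_000   # copies runnable on the training chips
HUMAN_HOURS_PER_DAY = 8           # typical human working day
HUMAN_RD_WORKFORCE = 20_000_000   # current worldwide R&D workforce
AI_SPEEDUP = 20                   # AI thinking speed relative to a human

# Running 24 hours a day instead of ~8 triples the effective headcount.
effective_workforce = PARALLEL_COPIES * 24 // HUMAN_HOURS_PER_DAY

def human_speed_ais_for(multiple: int) -> int:
    """Human-speed AI workers needed to multiply today's R&D workforce."""
    return HUMAN_RD_WORKFORCE * multiple

needed = human_speed_ais_for(100)
share_of_workforce = needed / effective_workforce

# Assumption: one AI at 20X speed does the work of 20 human-speed AIs.
fast_copies = needed // AI_SPEEDUP

print(f"effective AI workforce: {effective_workforce:,}")
print(f"AIs needed for a 100X increase: {needed:,} ({share_of_workforce:.0%} of the workforce)")
print(f"equivalent copies at {AI_SPEEDUP}X speed: {fast_copies:,}")
```

So a 100X increase on about 20 million researchers corresponds to 2 billion human-speed AI workers, a bit under a quarter of the 9 billion effective workforce, or 100 million copies running at 20X speed.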