As an 80,000 Hours advisor, I’ve spoken with hundreds of people who want to use their careers to mitigate AI risks but don’t know where to start.

While the most efficient route to enter depends on your background, there’s some foundational advice for breaking into AI safety that I find myself repeating on almost every advising call.

These steps are hard, but they’re not particularly complicated. Almost everyone I’ve advised who successfully found work in AI safety followed them, and most experts or hiring managers that I’ve spoken to have endorsed similar ideas for how to get started. If they're efficient and driven, I think people from many backgrounds can pivot within three months.

So, consider this post a crash course for breaking into AI safety. It briefly covers how to build foundational knowledge, identify your comparative advantages, test your fit, develop side projects, tighten your feedback loops, network, and start applying.

1. Build your foundational knowledge and AI safety ‘context’

There are a couple dozen hours of foundational reading/listening that I think everyone trying to enter the AI safety field should do — but it might be more approachable than you realize. If you consistently take ~30 minutes a day to read while drinking your morning coffee, or listen to podcasts and article narrations during your commute, you can build your foundation quickly. Or get some friends together and form a reading group. When my advisees have enthusiastically jumped into the AI safety rabbit hole, I’ve seen them get a better sense of where to focus and how to improve their applications.

What should you read or listen to? 80,000 Hours’ AI risk essential reading list and problem profiles are great places to start. Then, you might consider BlueDot course curricula (or better, enrolling in a course directly!) and foundational papers like “The Alignment Problem from a Deep Learning Perspective.” Once you have a sense of what you want to specialize in (see the next section of this post), you can review more specific resources, like our lists for technical work, policy, etc.

Your goal should be to reach the point where you could have a 30-minute conversation at an AI safety conference where the other person comes away thinking that you understand the core problems and a few potential solutions. Research-heavy roles will require more specialized domain expertise, but most jobs will not require you to implement the transformer architecture — it will often suffice to know what the transformer is and why it’s significant.

Specifically, you should have an answer for how fast you think AI will progress over the next few years, which problems you think are most pressing given that, and a few ideas about which organizations’ “theories of change” make the most sense to you. Your views can evolve over time, but to keep your job search efficient, you should know that organizations like MIRI and CSET might expect to hear different answers from competitive applicants.

You might think: “Who am I to form opinions about this? I’m not an expert.” I’d respond that you don’t have to be confident, but you should have something to say. It’s fine to keep your views loosely held and defer to experts well (deferral is a skill, after all!), but you should take ownership over forming your own takes. Hiring managers will also want to hear your thoughts and reasoning.

Some people refer to this as developing your AI safety ‘context.’ The post “Why experienced professionals fail to land high-impact roles” explains why some otherwise promising candidates can get rejected from hiring rounds for not communicating that they understand AI safety issues and the organization’s approach to solving them — in other words, they haven’t developed sufficient context about the field.

In other industries, this isn’t as necessary. But in most jobs tackling catastrophic risk from AI, including non-research jobs, organizations want candidates who share their mission and are willing to think rigorously about problems in a scope-sensitive way. These organizations have ambitious goals to solve big problems that others haven’t necessarily grasped yet, so it’s important that you’re genuinely obsessed with thinking about similar issues. That said, you don’t have to agree with everything a hiring manager thinks; thoughtful pushback can show that you’ve developed your own views.

2. Identify the in-demand skills you have or could build

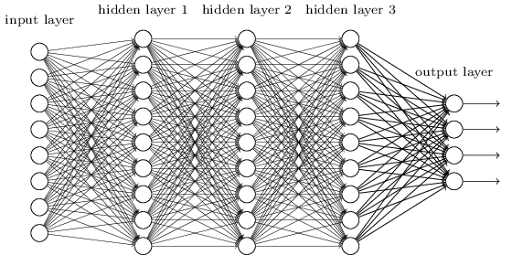

To help solve these problems, you’ll need to identify and build relevant skills… but which ones? It’s not always obvious from the outside which skills are in demand in AI safety, especially because there are so many options for how to contribute. Some of the broad categories include:

- technical safety research

- AI policy

- strategic operations and scaling organizations

- fieldbuilding

- communicating ideas

- … and various other options like AI biorisk, macrostrategy, politics, etc.

You should reflect on which skills and experience you’ve built so far that could fit one of these approaches. Then look at job postings, even for roles you aren’t ready to apply for yet, to get a sense of which skills and experience organizations are looking for. If there are experts you admire, you could read about their career paths or listen to interviews they’ve given. Similarly, one of the fastest ways to learn about a field is to speak directly with people working in it. A later section of this article covers strategies for how to do this, or you can see our networking guide.

Ultimately, what you’d be good at and could quickly learn is an empirical question. Self-reflection and reading are a good start, but soon you’ll want to run experiments. At first, these should be short, time-bounded experiments that you can do over a weekend or a few evenings. The goal is to figure out efficiently where you should be applying.

You can “test your fit” with small projects to see what you enjoy and can get better at. This could involve taking a free technical AI safety course, writing a blog post about a think tank report, building a data dashboard that someone might find useful, hosting your own event part time, etc. If you enjoy your experiment, that’s useful data. But if it puts you to sleep or makes you feel miserable, that’s also important to know!

Most people should start by expanding the number of options they’re considering, then narrow them down through experiments and feedback. I’d also encourage you to apply for advising to get more tailored advice.

3. Apply for fellowships and jobs

Compared to other industries, AI safety has an unusually high number of incubators, fellowship programs, and resources to help you find jobs — including our job board! The AI safety field has grown about 3000% in the last eight years, driven by the urgency of these problems and its flourishing talent development ecosystem.

80,000 Hours has a list of technical AI safety fellowships here, and AISafety.com lists many resources as well. In AI policy, we have a list of fellowships here, and EmergingTechPolicy.org has a broader fellowships guide and database. Fellowships can be a quick way to get feedback, work on a concrete AI safety project, and get referrals for roles.

There are fewer fellowships for generalists who can get things done, manage teams or projects, and unblock bottlenecks for organizations. If this describes you, you should try to learn from experience as soon as possible by applying for full-time operations jobs in AI safety, and/or developing side projects.

Of course, you should apply for a few jobs directly, even if you’re unsure how competitive you are. I’ve seen many people underrate themselves; applications can provide useful information about your skills and can teach you new ones through interview prep and work tests. You can set up alerts on the 80,000 Hours job board so that particular kinds of roles are emailed to you proactively: some positions are only open for a couple of weeks, and I’ve seen competitive candidates accidentally miss a deadline. Also consider submitting an expression of interest to a top organization if you have relevant experience.

Since you only have so many hours in a week, you might divide your applications into a few categories: maybe 25% for ‘dream job’ applications where you aim high and want to learn from the process, 50% for your core focus, and, depending on your situation, the rest for safer applications to career-capital-building jobs outside AI safety that will let you develop specific skills for a year.

4. ‘Networking’ is cliche, but seriously, go talk to people

People often hate ‘networking.’ It can feel transactional, inauthentic, or like the only way to get a job is to already know somebody. I empathize with this, but I think a better approach is to treat your networking like a research project and to remember that talent markets are shockingly inefficient. It’s extremely time-consuming for an organization to assess thousands of resumes, and even after an interview or work test, it’s not always clear whether a candidate is a good fit.

A referral from a trusted source can save an organization a lot of time. Even “we had a good chat at an event” can provide a useful signal because it can show you’re thoughtful and have context (see above). However, this shouldn’t be seen as a necessary condition for finding impactful work — lots of people are hired from cold applications, and many organizations in AI safety use multi-stage processes with paid work tests, graded anonymously, to find great candidates who weren’t on their radar.

Speaking with experts is also one of the fastest ways to learn – you might get more tacit knowledge and direction from a 30-minute coffee chat with someone in the field than you could get in a month of self-study. It can also help you quickly test your own fit. Could you see yourself doing the kind of work that they do? What would it take to get there? 80,000 Hours tries to distill this expert advice into our career reviews and podcasts but, when possible, you should go to the source.

How can you start speaking with AI safety professionals? This is easier said than done, but our networking guide has some tips: you could attend a local AI safety, rationality, or EA meetup; go to talks; start participating in online subcommunities; get coffee with friends-of-friends; or try well-designed cold emails.

I also recommend taking a trip to a hub city like San Francisco, London, or DC, or moving to one if you can. There’s no substitute for being surrounded by the tacit knowledge and expertise of a specific community. Attending conferences like EA Global or events run by FAR.AI can also give you some of this.

5. If you aren’t breaking through to jobs or fellowships yet, do deliberate practice and develop a portfolio of side projects

Movies skip over training sequences with a montage because it’s a grind to get good at anything, but in real life it takes dedicated time. When working through AI safety courses or projects, you should do ‘deliberate practice’, meaning that you set specific goals, work at the edge of your comfort zone, and build in concrete feedback loops. This habit can take a while to cultivate, but it pays off. It helps to set aside specific time for this each week, even if you only have a couple of hours.

This can take the form of a structured course where feedback loops from the curriculum or an instructor are built in, but it might also mean developing your own projects. AI safety is a relatively new and fast-moving field, so it’s often better to learn by doing. AI models are also increasingly good at giving you feedback and helping you design your learning.

Which AI safety project you choose will depend a lot on your background and what topics interest you, but there’s no shortage of options. Our technical and policy resource lists include projects, and this “what are some projects I can try?” page includes many others. It’s more important that you’re working away at something to build skills than that you have a novel contribution early on.

Still, side projects are not a silver bullet. If you don’t have much direct career experience relevant to the roles you’re targeting, you might need to first spend a year or so in an intermediate job that builds those skills, such as a role outside AI safety in software engineering, congressional staffing, or communications.

6. ‘Work in public’ and tighten your feedback loops

In our survey of almost 40 AI safety organizations, we consistently heard that applicants should publish their outputs:

Respondents strongly emphasised the importance of being public with your work and personal journey: show projects; write blogs, posts, and comments; make videos; build and share cool, useful things; build things using AI and show them off. Why? Employers need to know that you can do the work, and that you’re proactive, energetic, and reasonable. Doing work in public is one of the best ways to give them that information.

Hiring managers can’t see inside your head. They sometimes only have a few minutes to review your application at the first stage. You can differentiate yourself with job experience, but you can also showcase your thinking and personal agency by having concrete projects. “Oh, she wrote a response to one of our papers” probably won’t guarantee you a job, but it will stand out as evidence that you’re aware of the organization’s work and are proactively learning.

‘Working in public’ is also important because it helps you tighten your feedback loops. You might save months of effort by getting feedback on a project early. People working in AI safety spend a lot of time online. They might respond to your questions on LessWrong / the EA Forum / Twitter / Substack if you engage with their work in good faith or (briefly) post your own.

It can sting if you get negative feedback on your public work, but this is also useful data. Either you haven’t built the requisite skills yet (which constructive feedback might help with), or you don’t know how to frame your outputs well yet. This is scary, but you’ll want to know this information so you can learn more, change how you communicate, or pivot to something else that you’re better at.

Don’t wait for permission to do the kinds of work you want to be doing.

AI safety has been building the plane as we fly it. While the field is more mature today, with established organizations and research agendas, it’s still moving fast and has many urgent gaps to fill. If you find a way to help solve some problem, you might get noticed. It won’t guarantee you a full-time job, but I’ve seen relatively new researchers attract attention by publishing interesting writing online or by having a thoughtful Twitter presence.

Even at 80,000 Hours, we recently hired someone whom we discovered through his AI Safety Talent Network project. Luca noticed a clear gap within AI safety, then built a career platform with a single profile, automated opportunity matching, support for hiring managers, etc. When 80k started building tools to improve our own job board, someone in our network recommended that we speak with Luca. We were excited by his experience and invited him to a multi-stage assessment, then made him an offer to join our team full time.

Not all hiring works this way, but as a general principle, I think it’s valuable to start doing the kinds of work you want to be doing (even part time on your own) before you get a full-time offer. You’ll learn a lot and it’ll be easier for people to notice your skills.

Caveats: imposter syndrome, burnout, and acknowledging how hard this is

Many people considering an AI safety career are held back by imposter syndrome. I’ve had advisees tell me they have nothing to contribute to this field, then land AI safety jobs a few months later. When you’re comparing yourself to others, make sure that your comparisons are:

- Time-sensitive: Are you sad you’re not as successful as someone five years older than you? Or who has been at this for 3x as long as you? Why?

- In the right reference class: Are you comparing yourself only to highly visible top performers? The right reference class for “could do great work at the margin” is much wider than that.

- Empirical: Have you actually tested if you can get incredibly good at this skill? For how many hours? With how many approaches? How much feedback have you gotten?

Similarly, burnout is real and it’s terrible. AI safety has many urgent problems to solve now, but I want your work to be sustainable — 30 minutes a day sustained over a few months will take you much farther than a couple frenzied weekends of work that you give up on.

Lastly, I want to acknowledge how stressful job searching can be, particularly in a new and competitive field like AI safety. When you deeply care about a problem, then put hours into learning and applying, rejections can sting. I hope you’ll be kind and patient with yourself.

But if you’ve made it this far, kudos to you for trying to keep people safe and make AI go well. If we’re right about the risks AI might pose, this could be one of the most important moments in human history for thoughtful people to shape decisions about the technology. You should feel proud that you’re paying attention to these problems and looking for ways to contribute. From here, you need to get good and be known.