Summary

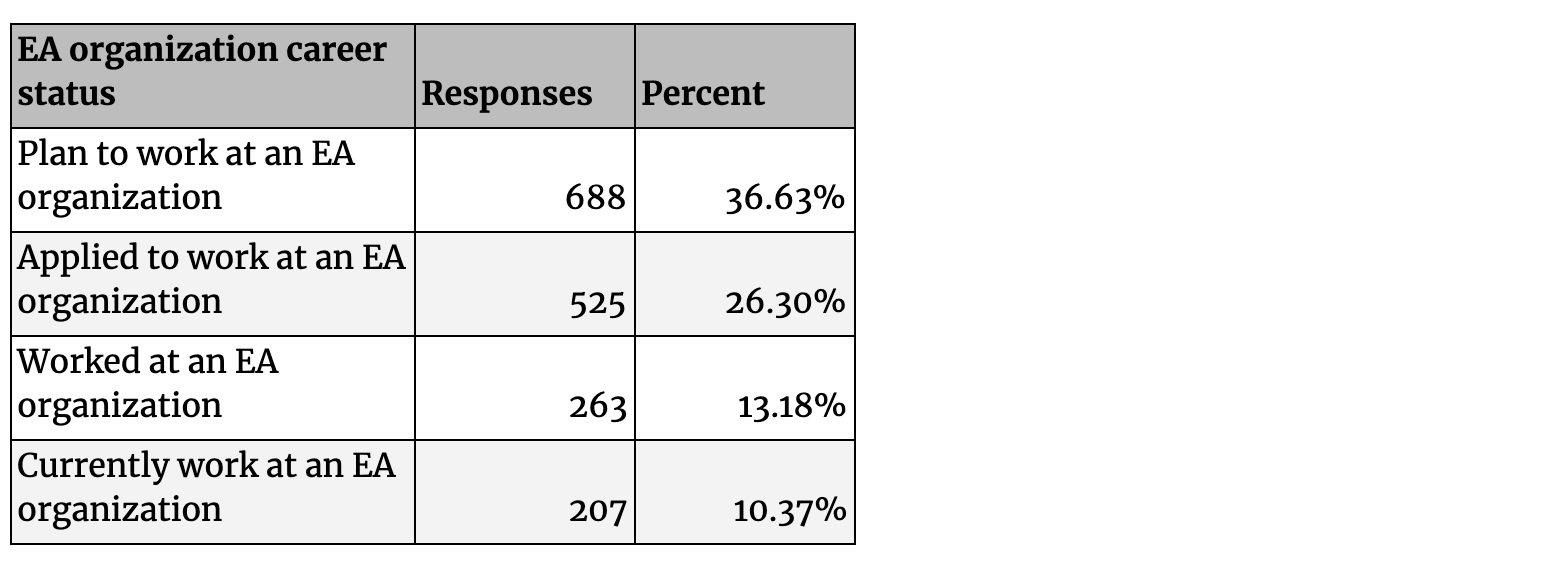

- The most popular career paths that effective altruists in the survey (EAs) plan to follow are in earning to give roles (38%) and working at EA organizations (37%).

- 50% of EAs have only one planned broad career path.

- Two of the top four significant barriers to becoming more involved in EA were not enough job opportunities that seemed like a good fit for me (29%) and too hard to get an EA job (23%).

- 462 (38%) EAs have at least 3 years of work or graduate experience in the most popular skills highlighted as important talent needs for EA in a recent 80,000 Hours/CEA survey of EA leaders.

- 607 (30%) EAs have applied to work at an EA organization or plan on working in an EA organization, but don’t yet have experience in one.

- 296 (15%) EAs in the survey already work(ed) at an EA organization.

- 1,014 (58%) EAs want to become more involved in the community by pursuing a career in an EA-aligned cause area.

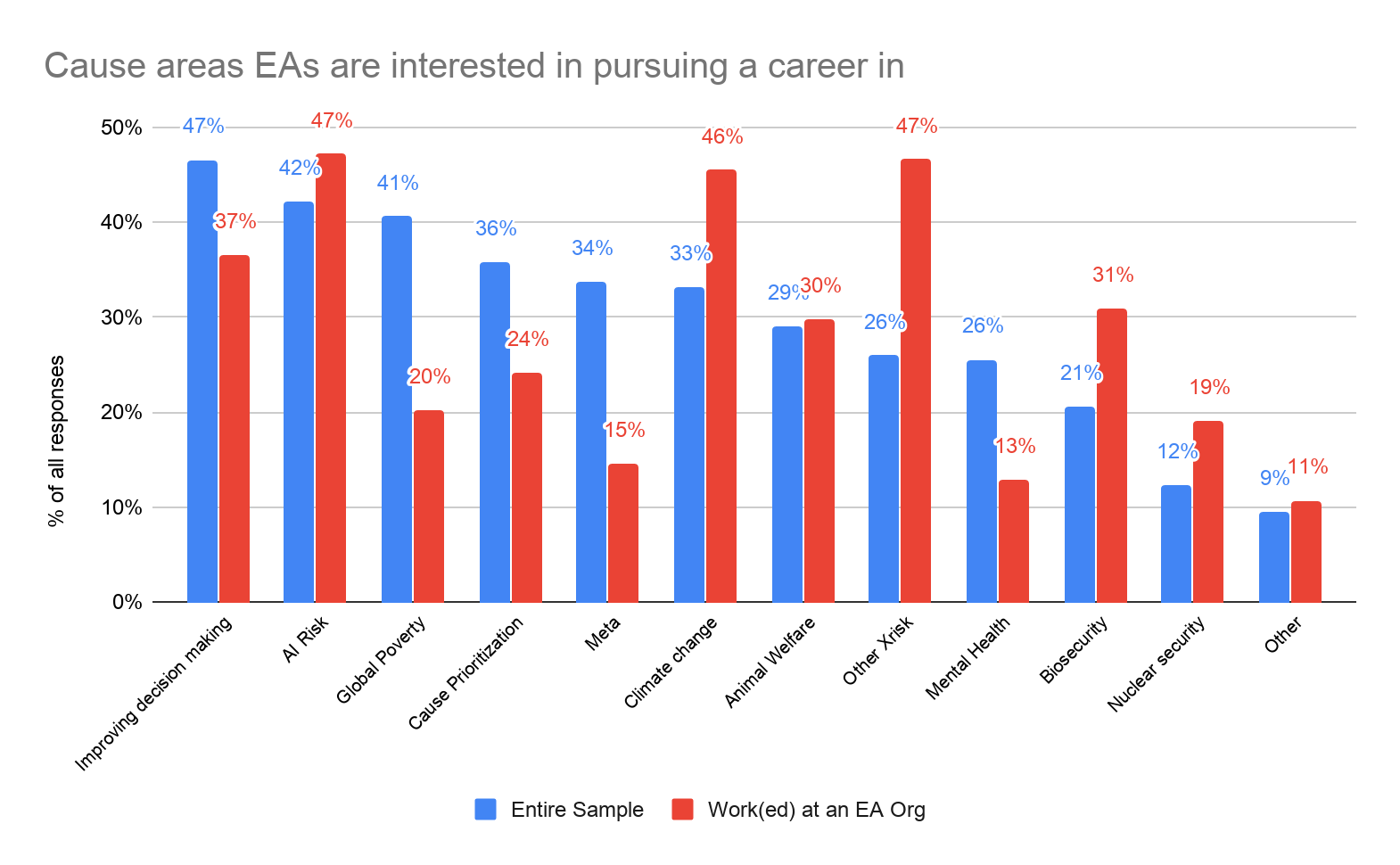

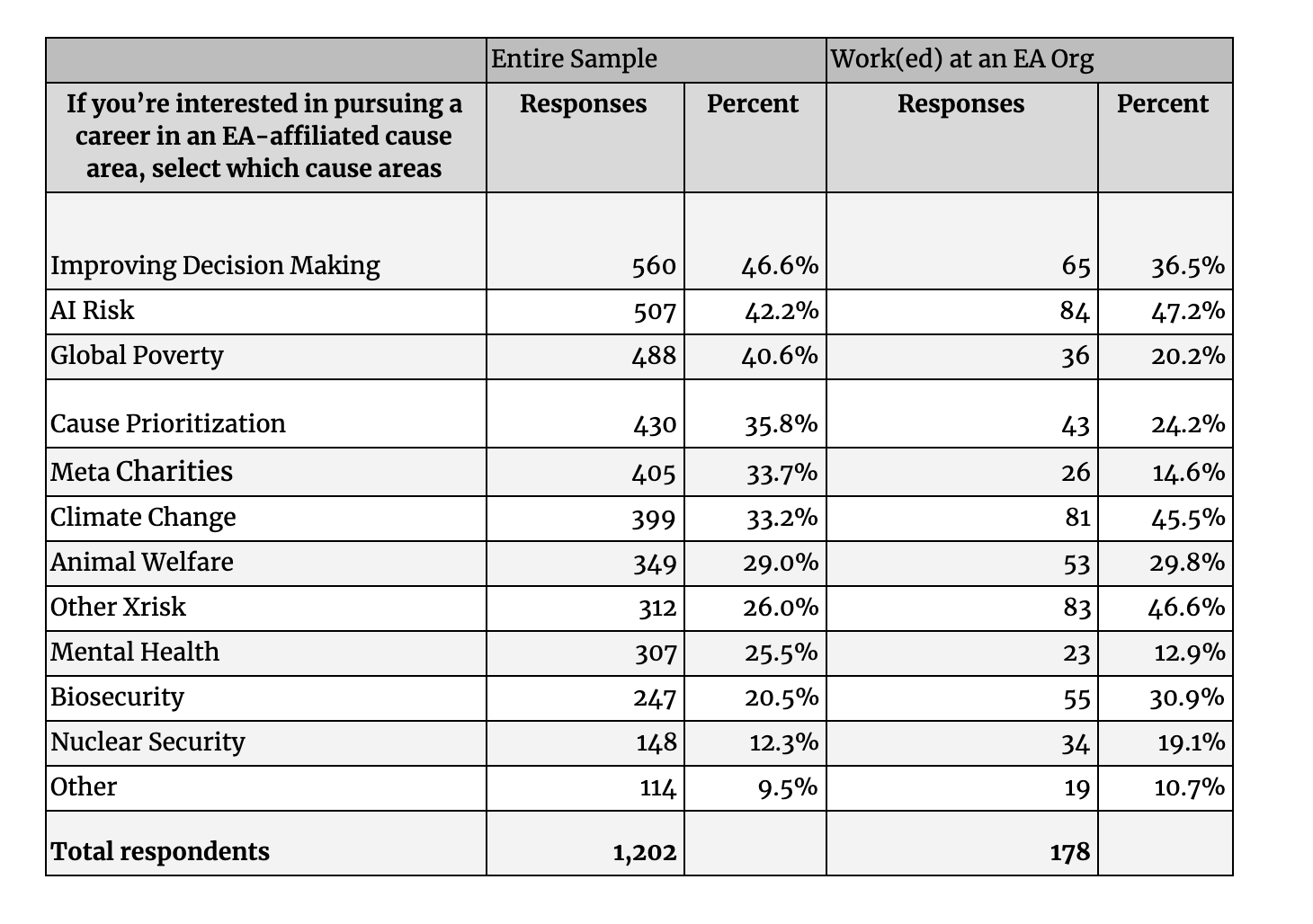

- AI Risk, Other X-Risk and Climate Change are the most common cause areas EAs are interested in pursuing a career in.

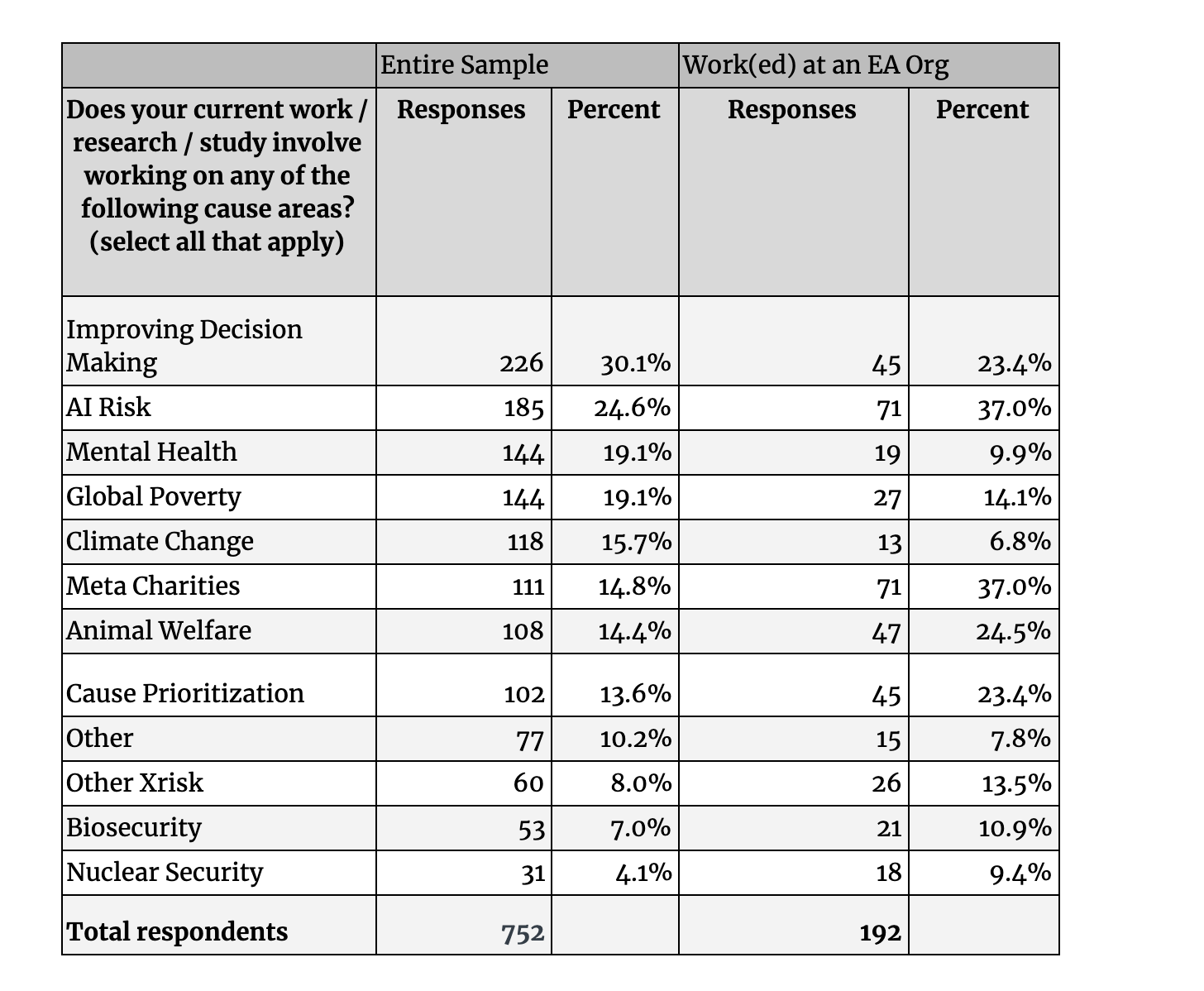

- AI Risk and Meta work are the cause areas EAs are most often currently researching/studying/working on.

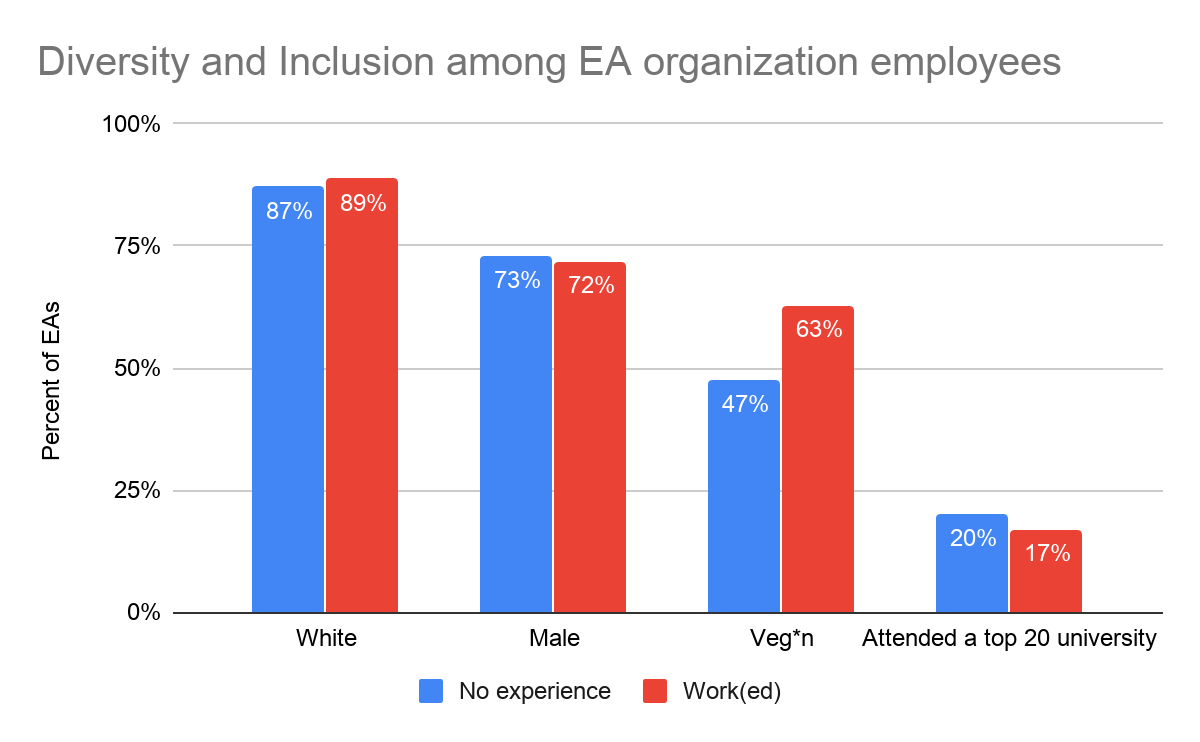

- Those who work(ed) at an EA organization are demographically similar to other EAs.

This is a supplementary post to Rethink Charity's main series on the EA Survey 2019, which provides an annual snapshot of the EA community. In this report, we explore what career paths EAs are planning to follow, their academic and skills background, and specifically highlight issues around working at an EA organization. In the first post we explored the characteristics and tendencies of EAs captured in the survey. In the second post we explored cause preferences of EAs. In future posts, we will explore how these EAs first heard of and got involved in effective altruism, their donation patterns and geographic distribution, among others.

Introduction

Using your career to achieve impact is clearly a major interest of EA, with an entire organization, 80,000 Hours, dedicated to this pursuit. In this year’s EA Survey we asked a number of questions about EAs’ career paths and skills. We think this will be of special interest to the EA community given the discussion on pressures to work at EA organizations, how hard it is to get these types of jobs, and the impetus to put more focus on “non-standard” EA careers (much more discussion here, here, and here). We can provide some data on whether bottlenecks lie in skills available in the talent pool, the number of opportunities available, or other areas such as cause prioritization misalignment. This may help decide where EA needs to develop the talent that already exists in the movement, where EA needs to grow new talent, and how much of the bottleneck lies in mismatched skills versus missing skills.

Career plans

When asked about the top ways EAs are interested in becoming more involved in the community, the most common response was Pursue a career in an EA-aligned cause area (58%, 1,014 EAs)[1] and a majority changed their career plans based on EA principles (51%, 1,025). However, when asked about significant barriers to greater involvement in EA, two of the top four reasons among the 1,756 who answered this question were that there are not enough job opportunities that seemed like a good fit for me (29%, 514) and it is too hard to get an EA job (23%, 410).

We asked Which broad career path(s) are you planning to follow? and presented a list of options from which respondents could choose as many as they liked. 1,877 EAs responded with at least one of the career path options.[2] However, 50% (943) of these only reported one career path. That so many EAs don’t appear to have alternative career plans may be one contributing factor to the sense of disillusionment surrounding EA-aligned job applications and rejections.

A plurality of responses were for a planned career path in For-profit (earning to give) (37.8%, 709). This is interesting given the discussion in recent years about whether earning to give should be the default strategy for most EAs or if only a small proportion of people should earn to give long term (also see discussions here and here). A similar share (36.6%, 688) of responses were for Work at a non-profit (EA organization) which is generally seen as a promising career path for impact. There was overlap between these two groups, with 219 EAs selecting both. 21% (147) of those planning to work at an EA organization and 40% (283) of those planning an earning to give role did not report any other planned career path. The most common second career path of those aiming to work at an EA organization was Think tanks / lobbying / advocacy (242), while it was Work at a non-profit (EA organization) (219) for those planning on earning to give.
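Because respondents could select several career paths, these percentages are shares of respondents rather than shares of selections, so they need not sum to 100%. As a rough illustration, the sketch below recomputes the quoted shares from the reported counts. The variable names and the `pct` helper are ours, and the results match the text only to within rounding.

```python
# Counts quoted in the text above; variable names are illustrative only.
respondents = 1877       # selected at least one career path
single_path = 943        # selected exactly one path
earning_to_give = 709    # selected For-profit (earning to give)
ea_org = 688             # selected Work at a non-profit (EA organization)
both = 219               # selected both of the above
etg_only = 283           # earning to give and nothing else
ea_org_only = 147        # EA organization and nothing else


def pct(count, base):
    """Return count as a percentage of base."""
    return 100 * count / base


shares = {
    "one path only": pct(single_path, respondents),
    "earning to give": pct(earning_to_give, respondents),
    "EA organization": pct(ea_org, respondents),
    "earning to give with no other path": pct(etg_only, earning_to_give),
    "EA organization with no other path": pct(ea_org_only, ea_org),
}

# Multi-select shares need not sum to 100%: respondents choosing both
# paths are counted once in each share, so use inclusion-exclusion
# to count those who chose at least one of the two.
either = earning_to_give + ea_org - both

for label, value in shares.items():
    print(f"{label}: {value:.1f}%")
print(f"either path: {either} respondents ({pct(either, respondents):.1f}%)")
```

Note that the last two shares use the subgroup as the base (for example, 283 of the 709 planning to earn to give), not the full 1,877.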

EA organization career status

Although it is just one of many promising career paths, the goal of working at an EA organization has attracted a lot of comment. In the data below we can see significant numbers of EAs are considering this option, far more than the number of opportunities there appear to be. We draw on data from two questions in the survey that allowed respondents to select multiple activities they have engaged in or career paths they plan to follow.[3] There are 207 EAs in the survey who claim to currently work at an EA organization and far more who have applied to work at, or plan to work at an EA organization. However, these raw descriptive statistics are not very informative without accounting for overlap between these categories.

We grouped responses for Worked at an EA organization and Currently work at an EA organization together into a single category of “work(ed) at an EA organization”. We clearly see in the Venn diagram below that the number of people who have applied to work at or expect to follow a career path working at an EA organization is huge compared to the apparent current number of EA organization jobs (indicated by the current numbers working in EA). This may help explain why EA jobs are so competitive. 280 (32%) of the 887 EAs who have applied for an EA job or plan to follow this career path also work(ed) at an EA organization.[4] 607 (30%) reported they have applied for a job at an EA organization and/or are planning to follow a career path working at an EA organization, but neither currently work nor previously worked at an EA organization. There are 54 EAs who work(ed) at an EA organization but didn’t report having applied for such a job. This might reflect that some people who work at EA organizations are co-founders or are headhunted without an application.

We can imagine numerous ways of segmenting our data. One straightforward approach is to divide EAs between those with experience in EA organizations (“work(ed) at an EA organization”), those working towards getting such experience (“candidates”), and those not interested or involved in EA organization work (“not interested”).

- Candidates: 607 who reported they have applied to work at, or plan to work at an EA organization but haven’t yet worked at one.

- Work(ed) at an EA organization: 296 EAs in the survey have worked or currently work at an EA organization.

- Not interested: 1,610 have neither applied to nor plan to work at an EA organization, and have no experience working at one.
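The three segments above are mutually exclusive, with experience at an EA organization taking precedence over having applied or planning to apply. A minimal sketch of that assignment rule follows. The `segment` function, its argument names, and the toy records are hypothetical and are not drawn from the survey data. Only the final consistency check uses reported totals.

```python
from collections import Counter


def segment(applied, plans_ea_org, worked, currently_works):
    """Assign one respondent to a segment, following the definitions above."""
    if worked or currently_works:
        return "work(ed) at an EA organization"
    if applied or plans_ea_org:
        return "candidate"
    return "not interested"


# Hypothetical respondents as (applied, plans, worked, currently works).
# These rows are invented for illustration and are not survey data.
toy = [
    (True, False, False, False),   # applied only
    (False, True, False, False),   # plans only
    (True, True, True, False),     # applied, plans, and previously worked
    (False, False, False, True),   # currently works, never applied
    (False, False, False, False),  # no involvement
]
counts = Counter(segment(*row) for row in toy)
print(counts)

# Consistency check on the totals reported in the text:
# 887 applied or plan to work at an EA organization, 280 of whom work(ed) at one.
in_pipeline = 887
also_worked = 280
candidates = in_pipeline - also_worked
print(candidates, f"{100 * also_worked / in_pipeline:.0f}%")
```

Checking experience first is what keeps someone who both applied and work(ed) at an EA organization out of the “candidates” group.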

Talent & Skills

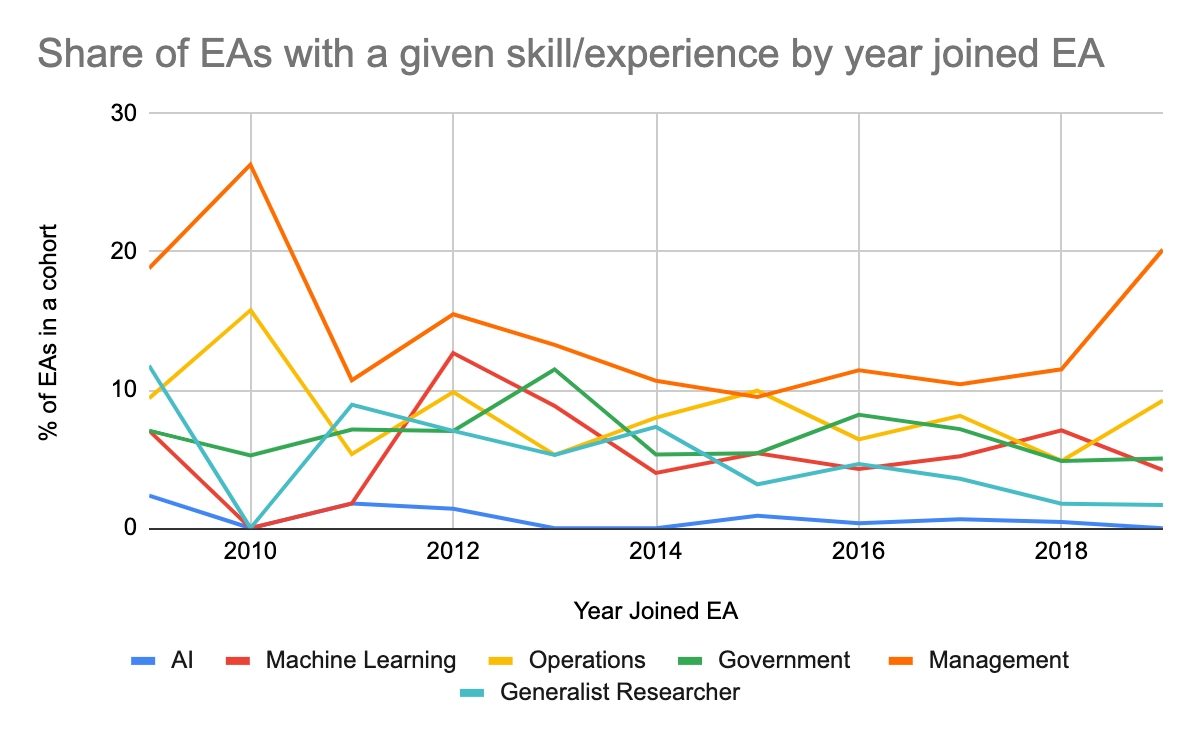

A recent CEA and 80,000 Hours survey of EA leaders[5] asked about the talents EA as a whole or their organization would need more of over the next 5 years. The most common responses in that survey for EA as a whole were government and policy (12.9% of all responses) and management (12.3%), while generalist researchers (9.4%), operations (8.9%), and management (7.8%) were the main needs for respondents’ own organizations.[6] “Machine learning / AI technical expertise” attracted ~7% for each. The percentages and the figure below use data from that survey and highlight only the most popular responses that have a comparable category in the EA survey.

In the 2019 EA Survey, we asked respondents about areas in which they had at least 3 years of work experience or graduate study. Respondents could select multiple options. The top three responses among the 1,206 who answered were software engineering (27.2% of all responses), management (17.7%), and maths and statistics (16.7%), with the first being clearly the most common response. Only one of these (management) appeared among the top talent needs in the EA leaders forum survey.

There were 462 EAs (38%) who selected at least one of the most common areas cited in the leaders forum survey. 17.7% (214) reported having experience in management, 10.9% (131) in operations, 9.8% (118) in government and policy, 6.3% (76) as generalist researchers, 8.5% (103) in Machine Learning, and 1% (13) in AI technical safety. One cannot make a direct comparison between the two surveys because the leaders forum survey reflects the percent of leaders who think skill/experience X is important, but not what percent of EAs need to have this skill/experience. To the extent that the share of EAs with experience in X reflects the importance they place on these skills, one can compare the ranking of skills held by EAs to the relative ranking of the skills needed by EA as a whole according to EA leaders. In that case, EAs in the survey appear to underemphasize experience in government/policy, generalist research and AI technical work, and perhaps overemphasize Machine Learning.


Certain subgroups in the EA survey have these skills more so than the sample as a whole. Due to the large number of categories, many with zero responses among a subgroup, significance testing the differences would not offer very reliable estimates here. Instead, we highlight some descriptive differences.

42% of those planning to follow an earning to give career path had a background in software engineering (versus 27% of the sample as a whole) and 20% selected web development (versus 13%). This was a “select all” question so there was overlap between these two categories. This makes sense given that these are typically high-earning jobs. These respondents were most interested in becoming more involved in EA by giving more (69%) and pursuing a career in an EA-aligned cause area (57%).

As one would expect given the historical focus of the site, members of LessWrong have more experience in Machine Learning (14% versus 9%) and technical AI safety (4% versus 1%) than the typical EA. EAs with self-reported high levels of engagement in EA most often reported experience in management (25% versus 18%) and operations (22% versus 11%), and did so more often than the sample as a whole. That more highly engaged EAs tend to have the skills most valued by EA leaders compared to other EAs is not surprising given that a suggested criterion for choosing high engagement was working at an EA organization.

There is a clear gender divide in terms of skills and experience. The most common response among women was management while the most common response among men was software engineering. The top three skills/experiences that female EAs reported were management (19% versus 18% for men), government/policy (15% versus 8% for men), and/or operations (14% versus 10% for men), while male EAs most often reported software engineering (33% versus 10% for women), maths (19% versus 11% for women), and/or management (% as above).

EA organization employees

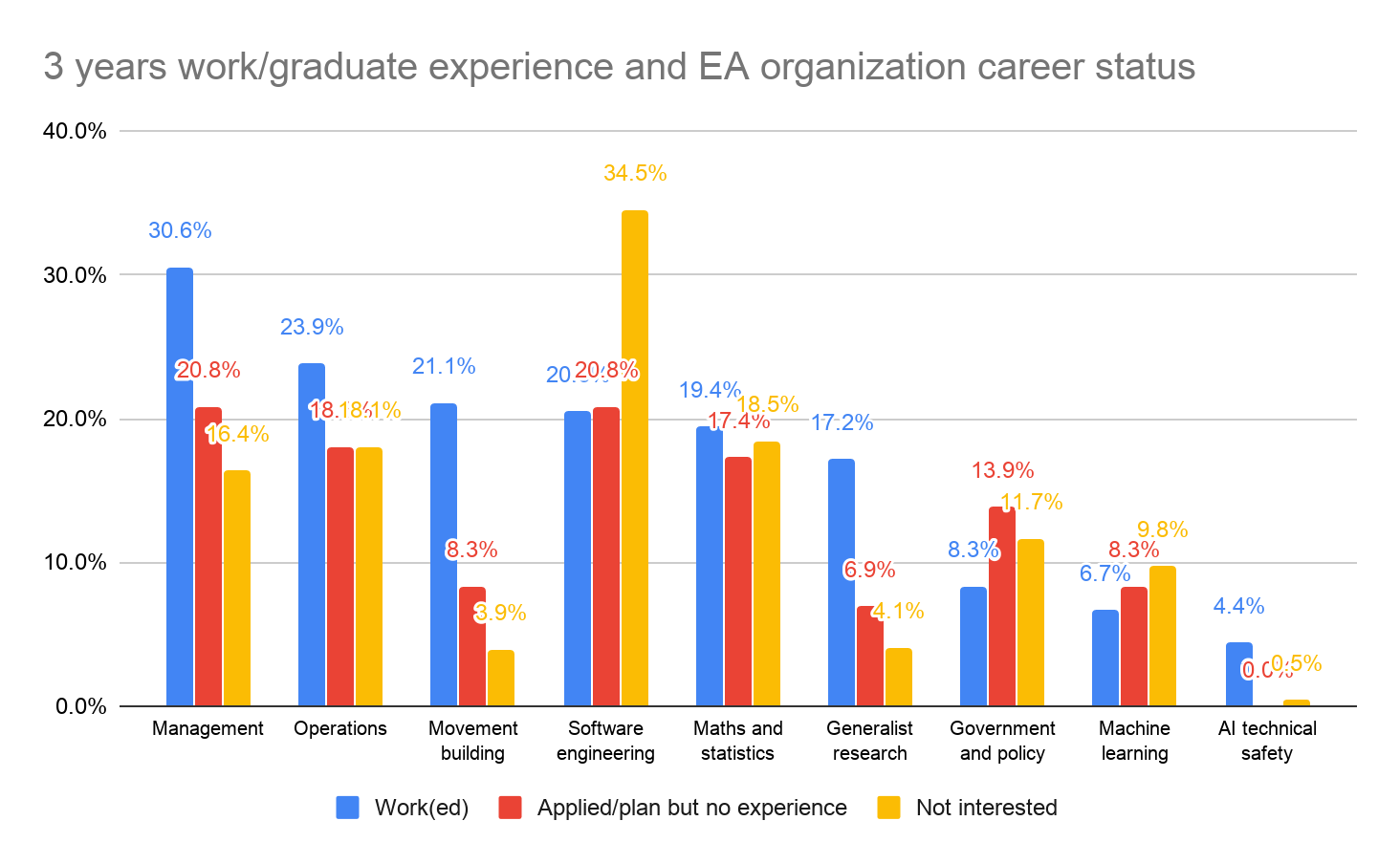

As another proxy for the demand and supply of talent, we can explore the experience and skills of those who work(ed) at EA organizations compared to other EAs. Those who work(ed) in EA organizations are most likely to have 3 years of work/graduate experience in management (31% versus 18% for the sample as a whole) and operations (24% versus 11%). For “candidates” the most common responses were management (21% versus 18%) and software engineering (21% versus 27%). Those not interested in EA organization careers most often had experience in software engineering (35%) and/or maths and statistics (19% versus 17%). The figure below highlights only the most common responses and those highlighted in the EA leaders forum survey and includes the group of EAs not pursuing or involved in jobs at EA organizations.

Those who work(ed) at an EA organization appear to have experience in AI technical safety and generalist research more so than “candidates”, who themselves appear to more often have government and policy experience than those who work(ed) at an EA organization. Similar shares of each group appear to have experience in software engineering and maths and statistics. If one were to assume the share of those who work(ed) at an EA organization reflects the need for a certain skill, this might suggest that those currently in the EA organization “job pool” are underskilled in the areas of management, operations, movement building, and generalist research, while there appears to be an oversupply of candidates with government and policy experience and machine learning.

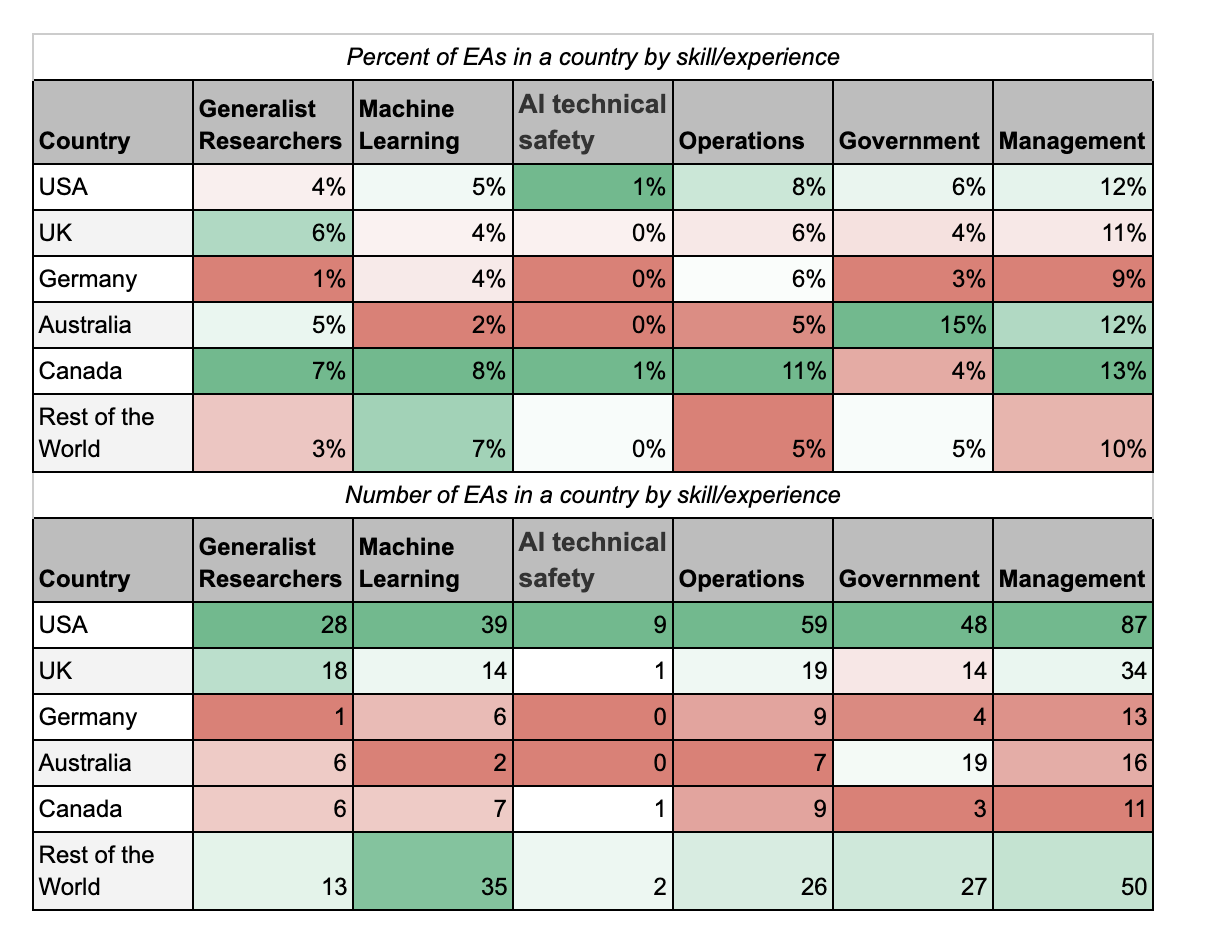

Geographic distribution of skills

We will explore the geographic distribution of EAs in detail in a forthcoming post, but here we can look at the skills/experience distribution among the countries most EAs live in (USA, UK, Germany, Australia, Canada, Rest of the World). Given that a plurality of EAs live in the USA, it is not surprising that in raw numbers the USA tops the list in each skill category here, although the gaps might not be as large as some expect. It is also interesting to explore what percent of EAs in a country have each skill/experience. The tables below highlight in dark green which country had the largest percent/number of its EAs with a given experience, and the lowest in dark red. For example, 7% of EAs living in Canada had generalist researcher experience, higher than the share in the other countries listed. However, in absolute numbers there were more EAs with generalist researcher experience in the USA than Canada (28 versus 6).

A larger share of EAs living in Canada (7.1%), the UK (5.8%), and Australia (4.6%) have generalist researcher experience than among those in the USA (3.7%). EAs based in Canada appear disproportionately more experienced in Machine Learning (8.3%) and operations (10.7%) than EAs based in other countries. AI technical experience is markedly absent or low among most EAs, regardless of country of residence. Only 1.2% of the USA- and Canada-based EAs have such experience. Australia-based EAs appear to be more experienced in government and policy (14.5%) than EAs based elsewhere. ~9 to 13% of EAs in the top countries and “Rest of the World” have experience in management.

Time in EA

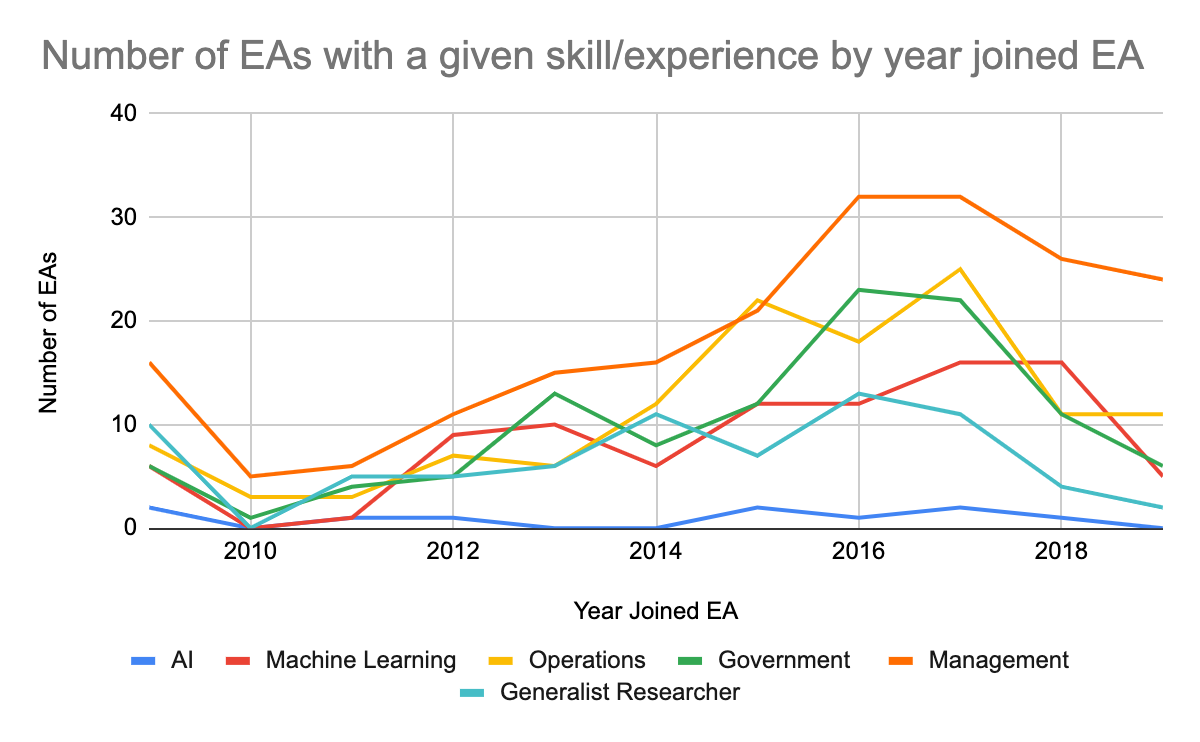

Given the growth in the EA movement, we might expect that veteran EAs would be experienced in the skills emphasized in previous years, while newer EAs would be experienced in the skills being emphasized today such as operations and management. This of course assumes that veteran EAs have not been gaining new experiences in line with the changing needs of the movement, which may not be true. It is also worth bearing in mind that those who joined EA in 2019 were younger on average than the sample as a whole but joined EA at an older age than previous cohorts. It clearly is more difficult for younger people to have as much experience as older people. To the extent that newer EAs also tend to be younger we might expect newer EAs to have less experience in those areas which are higher up the ladder of experience (it is likely easier to get generalist researcher experience when you are younger than a position in government or policy). The figures below chart the number of EAs and their experience by year they joined EA, and the share of that year’s EAs and their experience, excluding anyone under the age of 23.

The share of EAs with experience in government and policy is relatively steady across the cohorts (with the exception of those who joined in 2011 topping the list at 11%), but the largest number of EAs with such experience are those who joined EA 2-3 years ago. This then could reflect that the newest EAs have yet to build up such experience and that individuals who already have government/policy experience have not been joining the movement in recent years. Similarly, while the share of EAs with operations experience is higher among veteran EAs, the largest number of EAs with such experience are those who joined EA 2-4 years ago. A greater share of veteran EAs have experience in Machine Learning than newer EAs, although in absolute numbers newer EAs make up the bulk of those with Machine Learning experience. There doesn’t appear to be a trend of newer EAs being more experienced in AI technical safety than veteran EAs, with very few EAs having this experience at all. The number of EAs with management experience decreases with time in EA while the share increases, although there appears to be a plateau and drop-off in absolute numbers among EAs who joined after 2016. A greater share of veteran EAs have experience as generalist researchers than new EAs, but the trend in the absolute number has been irregular and has also started dropping off among EAs who joined after 2016.

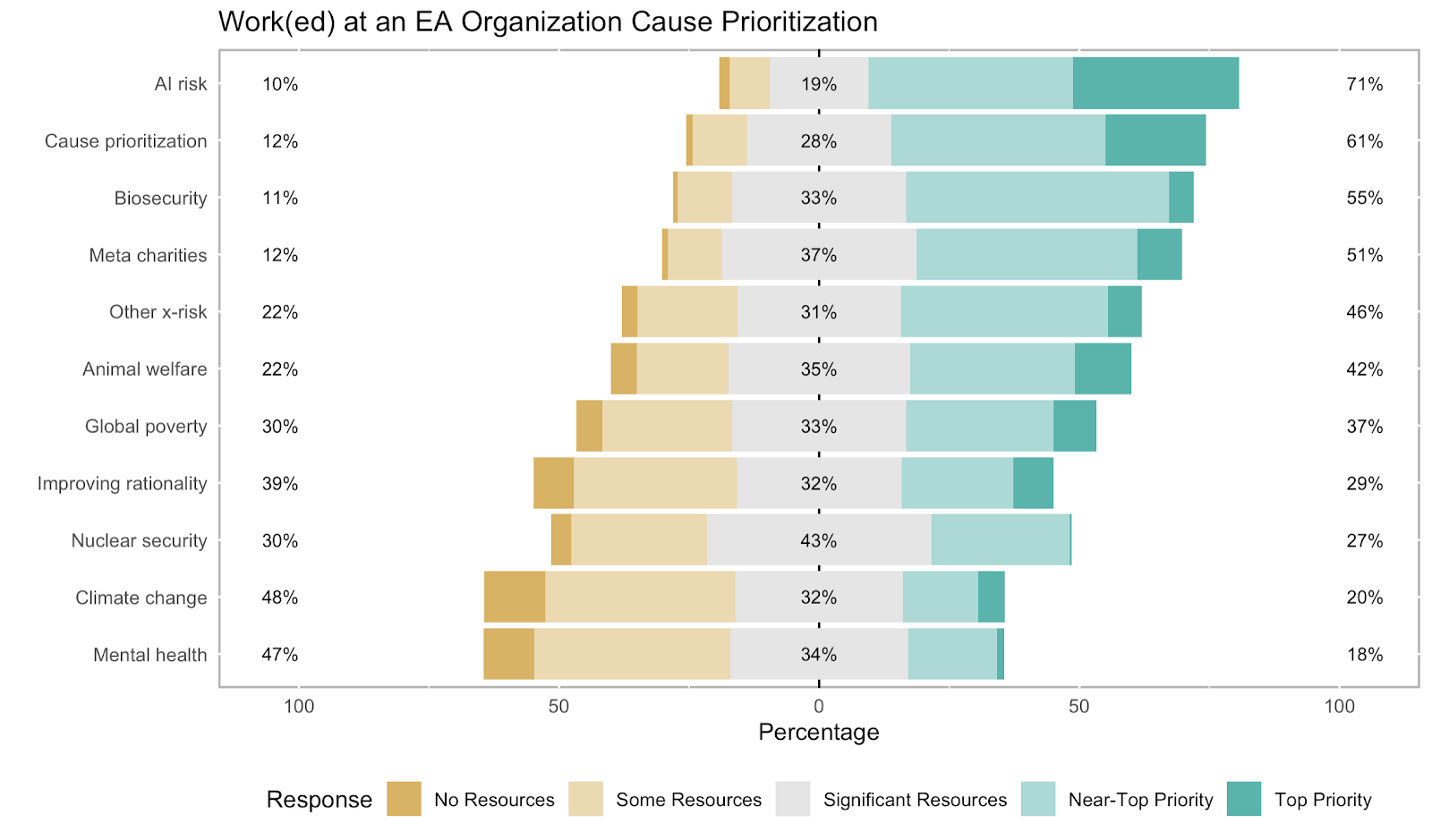

Do EA organization employees prioritize different cause areas to EAs in general?

As mentioned above, the most common top way EAs in the survey are interested in becoming more involved in the community was Pursue a career in an EA-aligned cause area. Therefore, it seems worthwhile to examine how the cause prioritization of EAs differs by career status and path.

The table below shows the mean rating for each cause,[7] with the causes receiving the highest rating per group highlighted in dark green, and the cause a group gave the lowest rating to highlighted in dark red. Both those who work(ed) at an EA organization and “candidates” tend to prioritize AI Risk and Cause Prioritization the most. Candidates appear to prioritize Climate Change and Global Poverty more than those who work(ed) at an EA organization.[8] More striking is the difference in average cause prioritization between those who work(ed) at an EA organization and the rest of the sample. Those who work(ed) at an EA organization tend to place a higher priority on X-Risk, Meta, AI Risk, Cause Prioritization, Animal Welfare, and Biosecurity than other EAs, who in turn place a higher priority on Global Poverty, Climate Change and Mental Health.[9] It is unclear here whether EA organization career paths are more attractive to those interested in these causes, or EAs interested in these career paths alter their cause preferences to align with a perceived cause preference. It could also be that there is a correlation between engagement in EA, working in an EA organization, and cause prioritization. As one comparison, AI Risk (both short and long timelines) and Global Health were among the cause areas EA leaders thought the highest percentages of resources should be devoted to over the next five years.
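To make the construction of such a table concrete, here is a minimal sketch of averaging five-point ratings per group and picking out each group's highest- and lowest-rated cause. The ratings below are invented for illustration and are not survey data. The `summarize` helper and the grouping are our assumptions about how such a table could be produced, not a description of the authors' code.

```python
from statistics import mean

# Hypothetical ratings on the 1-5 scale, keyed by group then cause.
# These numbers are invented for illustration and are not survey data.
ratings = {
    "work(ed) at an EA organization": {
        "AI Risk": [5, 4, 4],
        "Global Poverty": [3, 4, 3],
        "Climate Change": [2, 3, 2],
    },
    "other EAs": {
        "AI Risk": [3, 3, 3],
        "Global Poverty": [5, 4, 4],
        "Climate Change": [3, 4, 4],
    },
}


def summarize(by_cause):
    """Mean rating per cause, plus the highest- and lowest-rated cause."""
    means = {cause: mean(values) for cause, values in by_cause.items()}
    top = max(means, key=means.get)
    bottom = min(means, key=means.get)
    return means, top, bottom


summary = {group: summarize(by_cause) for group, by_cause in ratings.items()}
for group, (means, top, bottom) in summary.items():
    print(group, "| highest:", top, "| lowest:", bottom)
```

Treating the scale points as equally spaced numbers is itself an assumption, which is the caveat about Likert means raised in footnote [7].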

When asked what cause area EAs in general are currently working on, Improving Rationality/Decision Making/Science is the most popular response among all EAs, followed by AI Risk, Mental Health, and Global Poverty. Among those with work experience in EA organizations, the most popular causes are Meta, AI Risk, and Animal Welfare.

When asked what cause area they are interested in pursuing a career in, Improving Rationality/Decision Making/Science is the most popular response among all EAs, followed by AI Risk and Global Poverty. Among those with work experience in EA organizations, the most popular causes are AI Risk, Other X-risk, and Climate Change.

Diversity among EA organization employees

Overall, EAs in the survey continue to be most often male, white, well-educated, and between the ages of 25-34, and many EA organizations are aiming to increase the diversity and inclusion of their staff. Those who work(ed) at an EA organization appear to be on average ~2 years younger than other EAs (29 versus 31).[10] 17% of those who work(ed) at an EA organization have attended a top 20 university, compared to 20% of other EAs. Based on our data, there were no significant associations between working at an EA organization or not and gender or race (the split was heavily and similarly skewed towards males and those identifying as white in both groups).[11] Those who work(ed) at an EA organization are more likely to be veg*n (vegetarian or vegan) than other EAs; however, this is likely because more engaged EAs are both more likely to be veg*n and more likely to work at an EA organization.
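For a sense of scale, the chi-squared statistics reported for gender and race in footnote [11] can be converted to Cohen's w, the effect size that footnote uses as its benchmark, via the standard formula w = sqrt(chi2 / N). The sketch below applies that formula to the reported values. Whether the authors computed effect sizes this way is not stated, so treat this as an illustration.

```python
import math


def cohens_w(chi2, n):
    """Cohen's w for a chi-squared test of association: sqrt(chi2 / n)."""
    return math.sqrt(chi2 / n)


# Statistics and sample sizes as reported in footnote [11].
w_gender = cohens_w(0.1332, 1745)
w_race = cohens_w(0.5489, 1760)

SMALL_EFFECT = 0.1  # Cohen's conventional threshold for a "small" effect

for name, w in [("gender", w_gender), ("race (white)", w_race)]:
    print(f"{name}: w = {w:.4f}, below small threshold: {w < SMALL_EFFECT}")
```

Both observed values are well under 0.1, which is consistent with the text's statement that no significant association was found.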

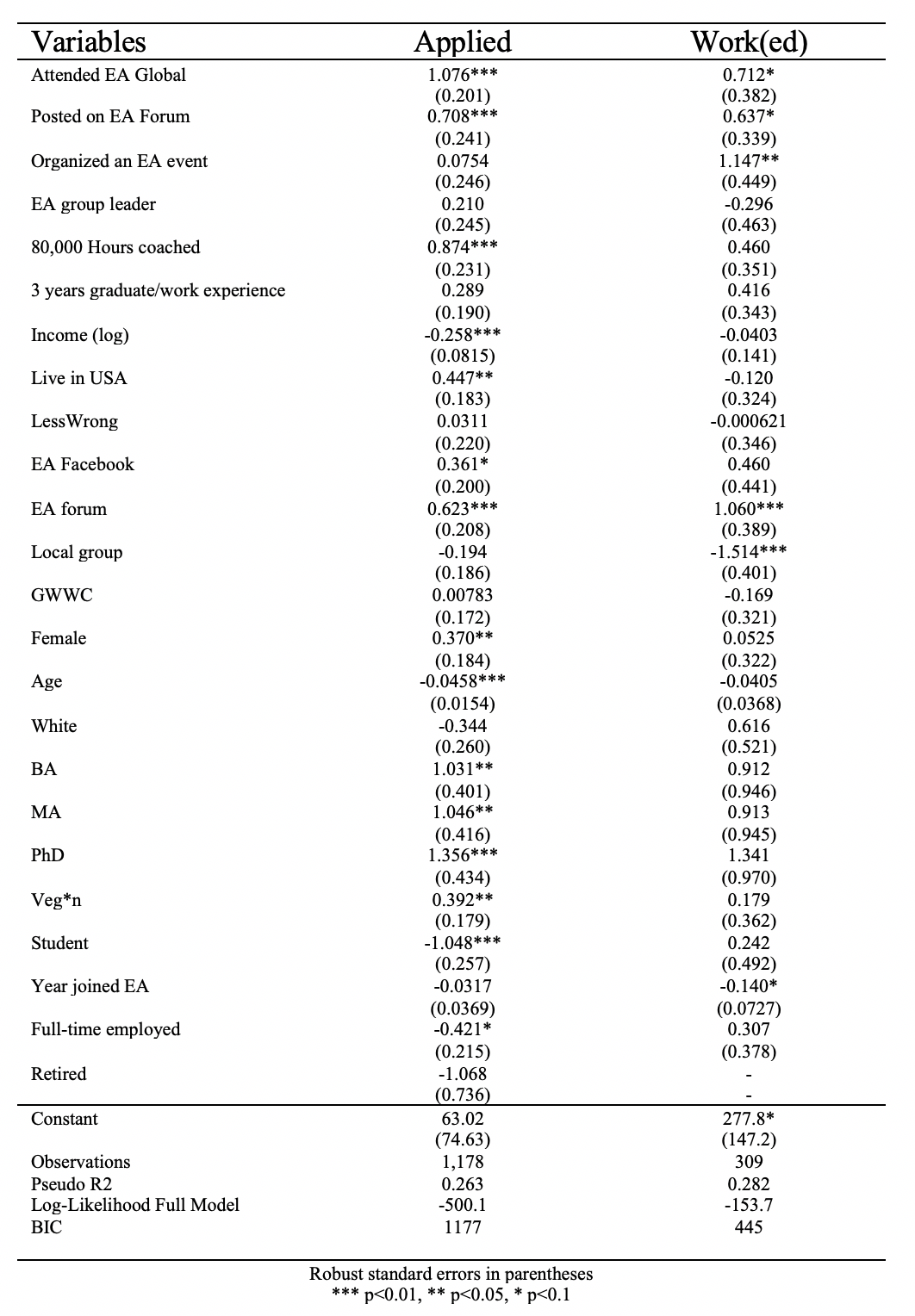

Predictors of EA organization careers

What are the characteristics associated with being more likely to apply for a job in an EA organization? What are the characteristics associated with being more likely to work at an EA organization, conditional upon having applied?

A complete model of who applies for jobs at EA organizations is doubtless beyond the scope of the data available in the EA survey; however, we can look at some of the indicators that are likely to play a role. In their write-up of this career path, 80,000 Hours note that these organizations generally look for people who are already engaged with the community in some way, such as attending an EA Global, and who can demonstrate their ability to do the work well, such as by helping to run a local EA group, publishing content on the EA Forum, and/or having work or graduate experience in the relevant field. It has been noted that spending resources on applications is easier for those with a financial runway and a support network. There is also an impression that recruitment configurations may favor elite (& highly privileged) applicants. Some EA/EA-aligned organizations, like the Open Philanthropy Project, have discussed that in some cases the applicant pool was not as diverse as desired, and that needing visas (especially for the USA where many EA organizations are based) is a difficulty.

We therefore explored a logistic regression model of applying for an EA organization job against data in the survey that corresponded to these factors as best we could, and included some further controls such as years in EA and current employment status. The model essentially asks: if we assumed there was no real relationship between these factors and the probability of applying for a job, how surprising would the data we have be? This can be used to update one’s priors about any hypothesized relationships. This model explained ~26% of variance.[12]

The figure above plots the estimated odds ratios and their 95% confidence intervals for factors that had p-values <0.05, suggesting this data would be very surprising to see in a world where there was no association between the variables. These are more straightforward to interpret than regression coefficients. For example, someone who has attended an EA Global has ~2.9 times the odds of having applied compared with someone who has not attended.
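As a rough illustration of how odds ratios relate to the underlying regression coefficients: an odds ratio is the exponential of a logit coefficient, and an approximate 95% interval comes from exponentiating the coefficient plus or minus 1.96 standard errors. The sketch below also shows that an odds ratio multiplies odds, not probabilities. The standard error and the 30% baseline are hypothetical values chosen for illustration, and the function names are ours.

```python
import math


def odds_ratio_with_ci(beta, se, z=1.96):
    """Odds ratio and approximate 95% Wald interval from a logit coefficient."""
    return math.exp(beta), math.exp(beta - z * se), math.exp(beta + z * se)


def apply_odds_ratio(baseline_prob, odds_ratio):
    """Probability after multiplying the baseline odds by odds_ratio."""
    odds = baseline_prob / (1 - baseline_prob) * odds_ratio
    return odds / (1 + odds)


# Hypothetical coefficient chosen so the odds ratio is 2.9; the standard
# error is invented for illustration and is not taken from the model.
beta, se = math.log(2.9), 0.2
or_, lower, upper = odds_ratio_with_ci(beta, se)

# Hypothetical baseline: 30% of non-attendees have applied.
p0 = 0.30
p1 = apply_odds_ratio(p0, or_)

print(f"OR = {or_:.2f} (95% CI {lower:.2f} to {upper:.2f})")
print(f"probability {p0:.2f} -> {p1:.2f}, ratio of probabilities {p1 / p0:.2f}")
```

Under these assumed numbers the probability of having applied rises by less than a factor of 2.9, because the gap between odds and probabilities widens as the baseline probability grows.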

The model results suggest that in our sample an EA was more likely to have applied to an EA organization job if they had completed or were in the process of completing a PhD, had attended EA Global, had a Master’s or Bachelor’s, received 80,000 Hours coaching, posted on the EA Forum, are an EA Forum member, currently live in the USA, are veg*n, and are a woman. An EA in the survey was less likely to have applied for an EA organization job if they were a student, had a high income (keeping in mind that some EAs in the survey are extremely high earners and presumably in lucrative earning to give roles), and were older (for example, a 40 year old was ~15% less likely to have applied compared to a 20 year old). We did not find results that would be surprising given the null hypothesis above for race, years in EA, having 3 years experience in any field, being an EA local group leader, having organized an EA event, being a member of LessWrong, EA Facebook, an EA local group, GWWC, full time employed, or being retired.
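The age example can be unpacked with a little arithmetic. Assuming age enters the model linearly in years and that “~15% less likely” corresponds to an odds ratio of about 0.85 over a 20-year gap (both are our assumptions, since the text does not report the coefficient), the implied per-year odds ratio is the 20th root of 0.85:

```python
import math

# Assumption: age enters the model linearly in years, so each extra year
# multiplies the odds of having applied by the same factor.
or_20_years = 0.85                       # "~15% less likely" over 20 years
or_per_year = or_20_years ** (1 / 20)    # implied one-year odds ratio
beta_per_year = math.log(or_per_year)    # implied logit coefficient


def odds_ratio_for_gap(years):
    """Odds ratio implied for an age gap of `years` under the assumption."""
    return math.exp(beta_per_year * years)


for gap in (1, 10, 20):
    print(f"{gap:>2} years older: odds ratio {odds_ratio_for_gap(gap):.3f}")
```

A per-year effect this close to 1 is why the text describes the age association over a 20-year gap rather than a single year.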

It is important to keep in mind that we do not have chronological data on when someone applied. For example, while there is an association with currently living in the USA, we don’t know how many were living in the USA when they applied. We can only suggest that those who applied are more likely to currently live in the USA than elsewhere, which is the case for a plurality of EAs in the sample.

How does this model perform when we look at whether or not a respondent work(ed) at an EA organization, conditional upon them having applied?

The same model explains ~28% of variance in having work(ed) at an EA organization, conditional upon having applied; however, far fewer factors appear to play a significant role. In the figure above we again plot odds ratios, but also include factors which were not statistically surprising (those where 95% confidence interval bars cross the dashed line) as a comparison to the model before. The model results suggest that in our sample an EA was more likely to have work(ed) at an EA organization if they had also organized an EA event and were an EA Forum member. An EA in the survey was less likely to have work(ed) at an EA organization if they were a member of a local group, presumably because they were not able to keep up membership in a local group in addition to their EA job responsibilities. For the other factors in the model there were no statistically significant differences.[13]

Credits

The annual EA Survey is a project of Rethink Charity with analysis and commentary from researchers at Rethink Priorities.

This essay was written by Neil Dullaghan with contributions from David Moss. Thanks to Derek Foster, Saulius Simcikas, and Peter Hurford for comments.

If you like our work, please consider subscribing to our newsletter. You can see all our work to date here.

Other articles in the EA Survey 2019 Series can be found here.

[1] 67 additional respondents selected the option “NA”, as distinct from simply not answering the question. These responses were excluded from this analysis.

[2] Of course not the same as working for an EA organization.

[3] 1,991 EAs responded to the question Which of the following activities have you ever done? from which the “applied to/worked at/currently work at” responses are drawn. 1,792 EAs responded to the question If you had to guess, which broad career path(s) are you planning to follow? from which the “plan to work” responses are drawn.

[4] One should not interpret this as 32% of those in the EA job pipeline are internal. We do not have chronological data to distinguish between those who are currently applying/expecting an EA organization career path and those who already applied or expect to continue on the career path they already have in an EA organization.

[5] Note that there are some concerns expressed in the comments section of that post that this survey did not contain a representative sample of EA leaders.

[6] Respondents could select up to 6 options. There were other skills listed that do not have a directly comparable datapoint in the EA Survey. For example, “The ability to really figure out what matters most and set the right priorities”.

[7] Converting each of these options into a numerical point on a five point scale (ranging from (1) ‘I do not think any resources should be devoted to this cause’, to (5) ‘This cause should be the top priority’). We recognise that the mean of a Likert scale as a measure of central tendency has limited meaning in interpretation. Nevertheless, it's unclear that reporting the means is a worse solution than other options the team discussed.

[8] Simple bivariate ordered logistic regressions suggest those in EA jobs gave higher responses for X-risk, Meta, AI Risk, Cause Prioritization, Animal welfare, and Biosecurity, and lower responses for Global Poverty, Mental Health, and Climate Change (where p<0.05 and odds ratios range from 1.5 to 2.7).

[9] Simple bivariate ordered logistic regressions suggest those who work(ed) at an EA organization are 1.4 to 2.7 times more likely to prioritize the former group of causes than other EAs, and other EAs are 1.2 to 3.3 times more likely to prioritize the latter group of causes than those who work(ed) at an EA organization (where p<0.05).

[10] Welch’s t-test suggests a statistically surprising difference in means (p<0.00001), a difference of 1.9 years (Cohen’s d 0.19), assuming a null hypothesis of no difference in means and an alpha of 0.05, with 80% power to detect an effect of 0.186 or greater.

[11] For gender a chi-squared test of association found Pearson chi2 (1) = 0.1332 Pr = 0.715, with power of 95% to detect a small effect size. For race_white, a chi-squared test of association found Pearson chi2 (1) = 0.5489 Pr = 0.459, with power of 95% to detect a small effect size. Based on sample sizes of 1,745 and 1,760 respectively and Cohen’s suggested “small” w value of 0.1.

[12] We do not think these models are the final word on who is more likely to apply for or have an EA organization job, but for the sake of space and simplicity not all research avenues could be explored here. There are some issues anyone seeking to build on this work should keep in mind. The effects discussed are “uncorrected” in the sense that an adjusted alpha level for multiple comparisons was not used. We used a variable for having 3 or more years work or graduate experience in any listed category rather than dummy variables for each experience. This was primarily for two reasons: 1) without knowing what job a respondent was applying to we do not know which experiences are relevant, and 2) logistic regressions tend to identify those features that are heavily biased as significant, which may be the case for some categories with only a few responses. Similar concerns surround including any information about cause prioritization data and subjects studied. The log of income appeared statistically significant in the model of applied to work at an EA organization, but descriptive statistics suggested the association may be curvilinear, such that the lowest and highest earning EAs are less likely to apply than middle-range earners.

[13] Given our sample size of 309 in this model, our alpha level of 0.05, and a power of 80%, only odds ratios of 0.69 or more extreme could be statistically significant, and our observed odds ratios for all other factors in the model were smaller than this, so we could not reject the null hypothesis for them.