By Chengheng Li Chen (AI Safety Barcelona / EPFL) and Kyuhee Kim (MATS / EPFL)

The original work was done as a part of the Apart Research Technical AI Governance Challenge, and this blog post was written as part of the Apart Lab studio.

TL;DR: We built a tool that can secretly flag texts made by large language models, without access to the model itself. It works by limiting the vocabulary using a pattern based on cryptographic hashes. In our experiments, we detected all flagged texts quickly and with no false positives, using just one hash function. This tool could help meet the EU AI Act requirements starting in August 2026. However, we haven't yet tested how well it holds up against smart paraphrasing attacks.

Background

As the volume of AI-generated content explodes, the European Commission has recognized the need for systems that let people easily verify it, as required by Article 50 of the EU AI Act. In December 2025, a draft Code of Practice set out the detailed requirements, covering metadata, watermarks, and detection tools. It notes that any solution needs to be reliable (low error rates), accessible (verifiable by third parties without the original model), robust (survives text edits), and consistent across topics and writing styles.

Measured against these standards, existing detectors such as DetectGPT and GPTZero, which analyze statistical patterns, cannot provide mathematical guarantees. Their error rates depend on writing style, topic, and length. Watermarking approaches like the green/red list method are more reliable, but their robustness against editing hasn't been proven.

Our objective was to develop something more rigorous that can meet the standards: a watermark that can't be forged without a secret key, provides explicit error guarantees, enables verification without model access, and degrades predictably under text modifications. This led to the Markov Chain Lock watermark (MCL).

How MCL works

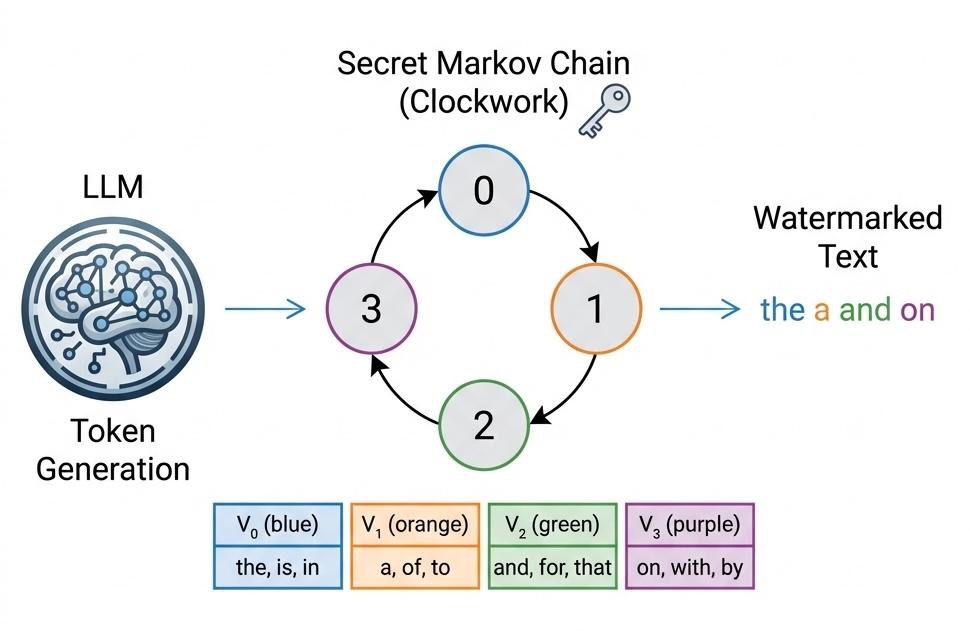

Most text AIs use autoregressive models, which generate a small piece of text (token) at a time. MCL uses this to embed a watermark. Figure 1 shows a simple toy example of a model with a vocabulary of 12 words.

Step 1. Split the vocabulary into secret groups.

Using a secret key and a cryptographic hash (SHA-256), each word is put into one of several groups. Here, the words are split into four groups: blue {the, is, in}, orange {a, of, to}, green {and, for, that}, purple {on, with, by}. Two properties matter: (1) without the secret key, the group assignments look random, and (2) changing the key changes the assignments.
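To make Step 1 concrete, here is a minimal sketch of a keyed group assignment. The post only says that a secret key and SHA-256 are used, so the HMAC construction, the function name `token_group`, and the example keys below are assumptions for illustration, not necessarily what our code does.

```python
import hashlib
import hmac

def token_group(token: str, key: bytes, num_groups: int) -> int:
    """Map a token to a group index in [0, num_groups) with keyed SHA-256.

    Assumption: HMAC-SHA256 over the token string. The post does not
    specify how the key and the hash are combined.
    """
    digest = hmac.new(key, token.encode("utf-8"), hashlib.sha256).digest()
    # Interpret the first 8 bytes as an integer and reduce modulo the group count.
    return int.from_bytes(digest[:8], "big") % num_groups

vocab = ["the", "is", "in", "a", "of", "to",
         "and", "for", "that", "on", "with", "by"]

# Two hypothetical keys produce two unrelated-looking partitions of the vocabulary.
groups_a = {tok: token_group(tok, b"secret-key-A", 4) for tok in vocab}
groups_b = {tok: token_group(tok, b"secret-key-B", 4) for tok in vocab}
print(groups_a)
print(groups_b)
```

A hash-based assignment like this will not reproduce the hand-picked, evenly sized groups of Figure 1. With a vocabulary of 12 words the groups come out uneven, while with a realistic vocabulary they are close to balanced.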

Step 2. Add a hidden cycle during text generation.

Suppose that there’s a rule that allows each group to move only to certain other groups. The simplest pattern, called the "clockwork" pattern, makes the model cycle through the groups in order: 0, 1, 2, 3, 0, and so on.

In Figure 1, the model starts in state 0 and picks “the” from group 0 (blue). Then the cycle moves to state 1, and it must pick from group 1 (orange); here, it chooses “a”. In state 2, it chooses “and” from group 2 (green), and in state 3, “on” from group 3 (purple). Finally, the cycle moves back to state 0.

We can loosen this rule in practice. For example, instead of always picking the next group in the cycle, the model can sometimes skip ahead by one group. So from group 0, it could choose from group 1 or group 2. We call this the “soft cycle.” This gives the model more flexibility but still leaves a clear pattern for us to detect later.
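The clockwork and soft-cycle rules can be sketched as a filter on the next-token candidates. This is a toy illustration using the Figure 1 groups. The helper names, the made-up probabilities, and the greedy pick are assumptions (a real decoder would mask the logits and sample rather than take the maximum).

```python
# Toy group table from Figure 1 (0 = blue, 1 = orange, 2 = green, 3 = purple).
GROUP_OF = {
    "the": 0, "is": 0, "in": 0,
    "a": 1, "of": 1, "to": 1,
    "and": 2, "for": 2, "that": 2,
    "on": 3, "with": 3, "by": 3,
}

def allowed_groups(prev_group: int, num_groups: int, k: int) -> list:
    """Groups the next token may come from: the next k groups in cyclic order.

    k=1 is the clockwork pattern, k=2 is the soft cycle.
    """
    return [(prev_group + step) % num_groups for step in range(1, k + 1)]

def constrained_pick(probs: dict, prev_group: int, num_groups: int, k: int) -> str:
    """Pick the most likely token among those in an allowed group (greedy, for illustration)."""
    allowed = set(allowed_groups(prev_group, num_groups, k))
    candidates = {tok: p for tok, p in probs.items() if GROUP_OF[tok] in allowed}
    return max(candidates, key=candidates.get)

# Hypothetical next-token probabilities from the model.
probs = {"is": 0.5, "of": 0.3, "and": 0.15, "on": 0.05}

# Clockwork after a group-0 token: only group 1 is allowed, so "is" is ruled out.
clockwork_choice = constrained_pick(probs, prev_group=0, num_groups=4, k=1)
# Soft cycle after a group-1 token: groups 2 and 3 are allowed.
soft_choice = constrained_pick(probs, prev_group=1, num_groups=4, k=2)
print(clockwork_choice, soft_choice)
```

Note that with only two groups and k=2, `allowed_groups` returns every group, so the constraint no longer restricts anything.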

Step 3. Calculate fingerprint score.

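Now, you need to count how many pairs of consecutive words follow the expected cycle. We call this the fingerprint score ($\phi$), and it is defined as:

$$\phi(x) = \frac{1}{n-1}\sum_{i=1}^{n-1} \mathbf{1}\left[\, g(x_i) \to g(x_{i+1}) \text{ is an allowed transition} \,\right]$$

(where $x = (x_1, \dots, x_n)$ is the list of words, $n$ is the number of words, and $g(x_i)$ is the group of word $x_i$)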

So, it is the fraction of word pairs that match the expected pattern. In the case of "the a and on", the words fall in groups 0, 1, 2, and 3 respectively, so all three transitions match and the score is 3 out of 3, or 1.0.
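The score computation fits in a few lines. The sketch below uses the toy groups from Figure 1. The function name and the modular check are illustrative choices rather than a copy of our implementation.

```python
# Toy group table from Figure 1.
GROUP_OF = {
    "the": 0, "is": 0, "in": 0,
    "a": 1, "of": 1, "to": 1,
    "and": 2, "for": 2, "that": 2,
    "on": 3, "with": 3, "by": 3,
}

def fingerprint_score(groups: list, num_groups: int, k: int = 1) -> float:
    """Fraction of consecutive pairs whose group transition is allowed.

    A transition a -> b is allowed when b is one of the next k groups after a
    in cyclic order, i.e. (b - a - 1) mod num_groups < k.
    """
    if len(groups) < 2:
        raise ValueError("need at least two tokens to form a pair")
    matches = sum(
        (b - a - 1) % num_groups < k
        for a, b in zip(groups, groups[1:])
    )
    return matches / (len(groups) - 1)

watermarked = [GROUP_OF[w] for w in "the a and on".split()]
unordered = [GROUP_OF[w] for w in "the is a of".split()]

print(fingerprint_score(watermarked, num_groups=4, k=1))
print(fingerprint_score(unordered, num_groups=4, k=1))
```

The first call scores the example from the text, where all three transitions follow the clockwork cycle. In the second, only the transition from "is" to "a" matches, so one pair out of three counts.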

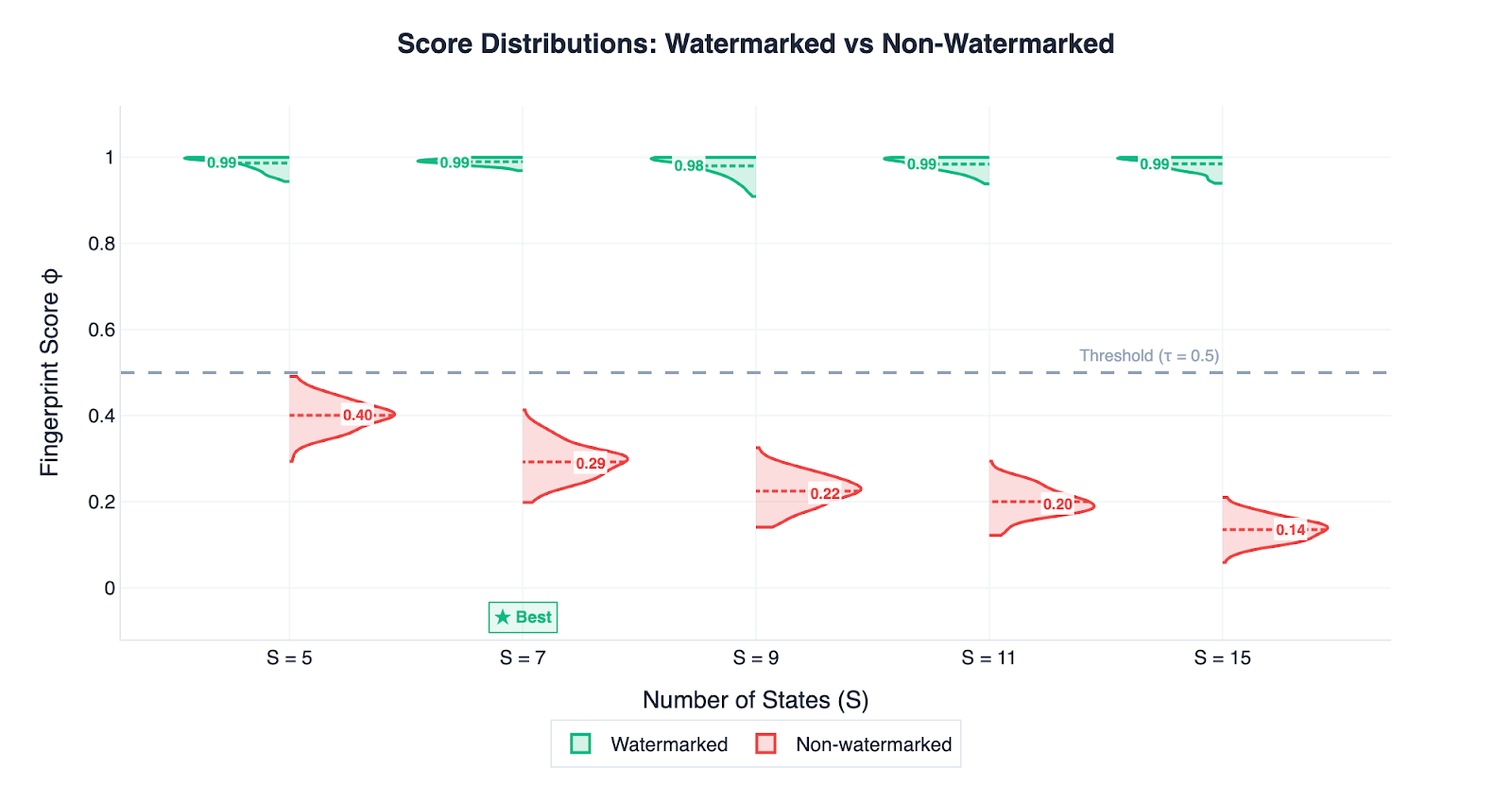

When you use this on text without a watermark, the score averages around k/S, where S is the total number of groups and k is the number of groups allowed at each step. Words in unwatermarked text fall into effectively random groups, so only about a fraction k/S of pairs follow the cycle by chance. In our best setting (S=7, soft cycle with k=2), this baseline is about 2/7, while watermarked text scores close to 1.0. This gap is large enough for perfect detection, as our experiments show.
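A quick simulation illustrates the k/S baseline. It draws group indices uniformly at random as a stand-in for unwatermarked text. That is an idealization (real tokens are not uniform over groups), and the seed and sequence length are arbitrary.

```python
import random

def fingerprint_score(groups: list, num_groups: int, k: int) -> float:
    """Fraction of consecutive pairs whose transition lands in the next k groups."""
    matches = sum(
        (b - a - 1) % num_groups < k
        for a, b in zip(groups, groups[1:])
    )
    return matches / (len(groups) - 1)

S, k, n = 7, 2, 20_000
rng = random.Random(0)

# Stand-in for unwatermarked text: every token lands in a uniformly random group.
random_groups = [rng.randrange(S) for _ in range(n)]
baseline = fingerprint_score(random_groups, S, k)

# Stand-in for watermarked text: every step advances by 1 or 2 groups.
marked_groups = [0]
for _ in range(n - 1):
    marked_groups.append((marked_groups[-1] + rng.randint(1, k)) % S)
marked = fingerprint_score(marked_groups, S, k)

print(f"random: {baseline:.3f} (k/S = {k / S:.3f}), watermarked: {marked:.3f}")
```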

The best part is that this method doesn’t require access to the original model. You only need the secret key and a simple hash function. A regulator could check thousands of documents on an ordinary laptop.

What we can prove

As a watermarking method, MCL has four advantages over other methods.

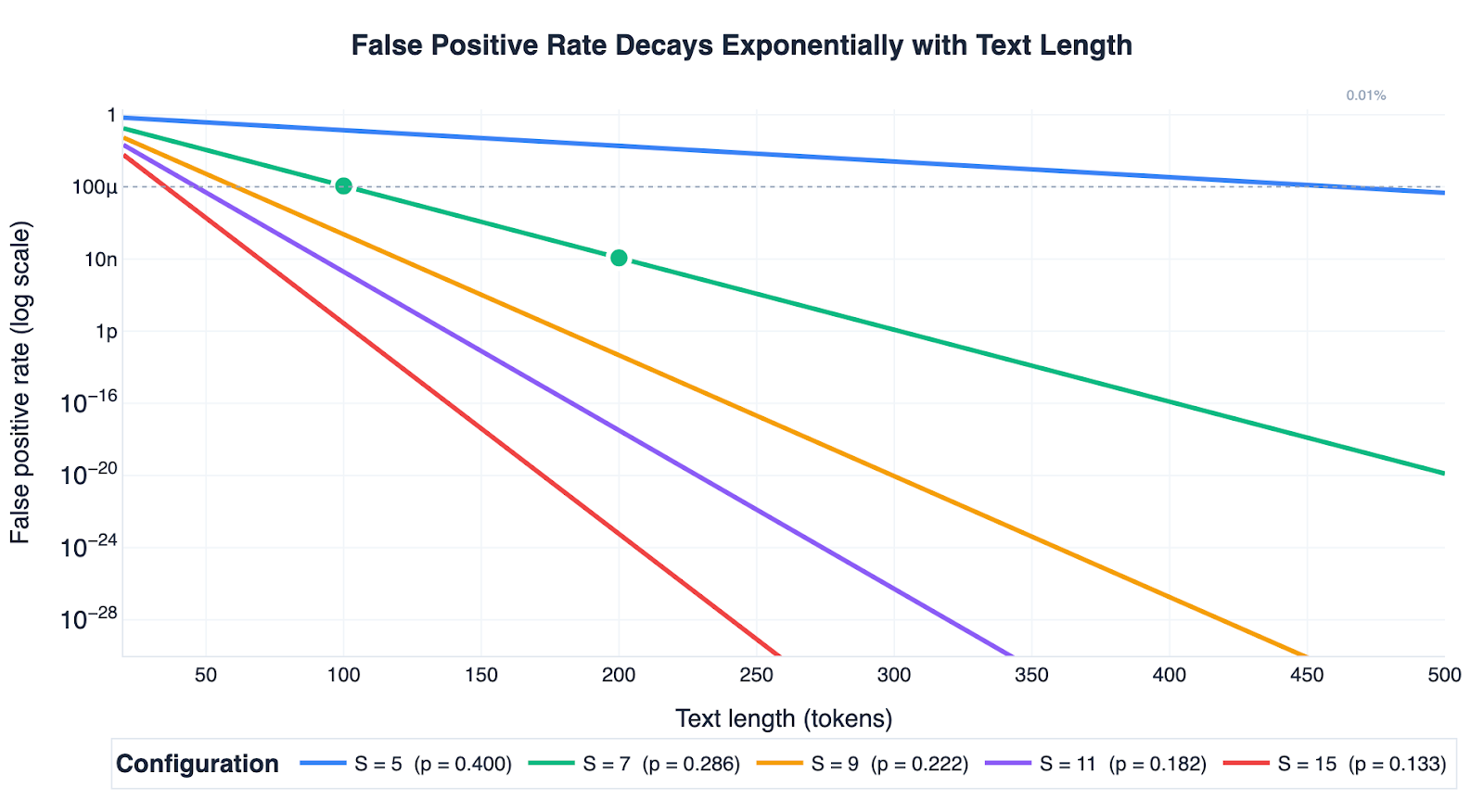

First, false alarms become basically impossible for longer texts. The probability of accidentally flagging a normal human-written text as AI-generated drops fast as the text gets longer. It can be proven using a standard statistical concentration bound (Hoeffding). The False Positive Rate (FPR) shrinks exponentially with text length as follows:

$$\mathrm{FPR} \le \exp\left(-2n\left(\tau - \frac{k}{S}\right)^2\right)$$

(where $n$ is the number of tokens in the text and $\tau$ is the threshold we use to decide “watermarked or not” (we set it at 0.5). A random unwatermarked text would score the ratio $k/S$ on average, where $k$ is how many groups are allowed at each step, and $S$ is the total number of groups.)

At 100 words, this gives less than a 0.011% chance of a false alarm; at 150 words, less than 0.00012%. For anything longer than a short paragraph, false positives are almost impossible (Figure 2).
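The bound is easy to evaluate directly. The sketch below assumes the standard one-sided Hoeffding form, exp(-2n(τ - k/S)²), with the threshold 0.5 and the S=7, k=2 setting from the text. The function names are illustrative.

```python
import math

def fpr_bound(n_tokens: int, threshold: float = 0.5,
              k: int = 2, num_groups: int = 7) -> float:
    """Hoeffding upper bound on the false positive rate for a text of n_tokens."""
    gap = threshold - k / num_groups
    if gap <= 0:
        raise ValueError("threshold must exceed the random baseline k/S")
    return math.exp(-2 * n_tokens * gap ** 2)

def min_tokens_for_fpr(target: float, threshold: float = 0.5,
                       k: int = 2, num_groups: int = 7) -> int:
    """Smallest text length for which the bound drops to the target rate."""
    gap = threshold - k / num_groups
    return math.ceil(math.log(1 / target) / (2 * gap ** 2))

for n in (50, 100, 150, 200):
    print(f"n = {n:3d}: FPR bound = {fpr_bound(n):.3e}")

print("tokens needed for a one-in-a-million bound:", min_tokens_for_fpr(1e-6))
```

Because the gap between the threshold and the baseline is squared in the exponent, a setting with a baseline closer to 0.5 needs much longer texts for the same guarantee.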

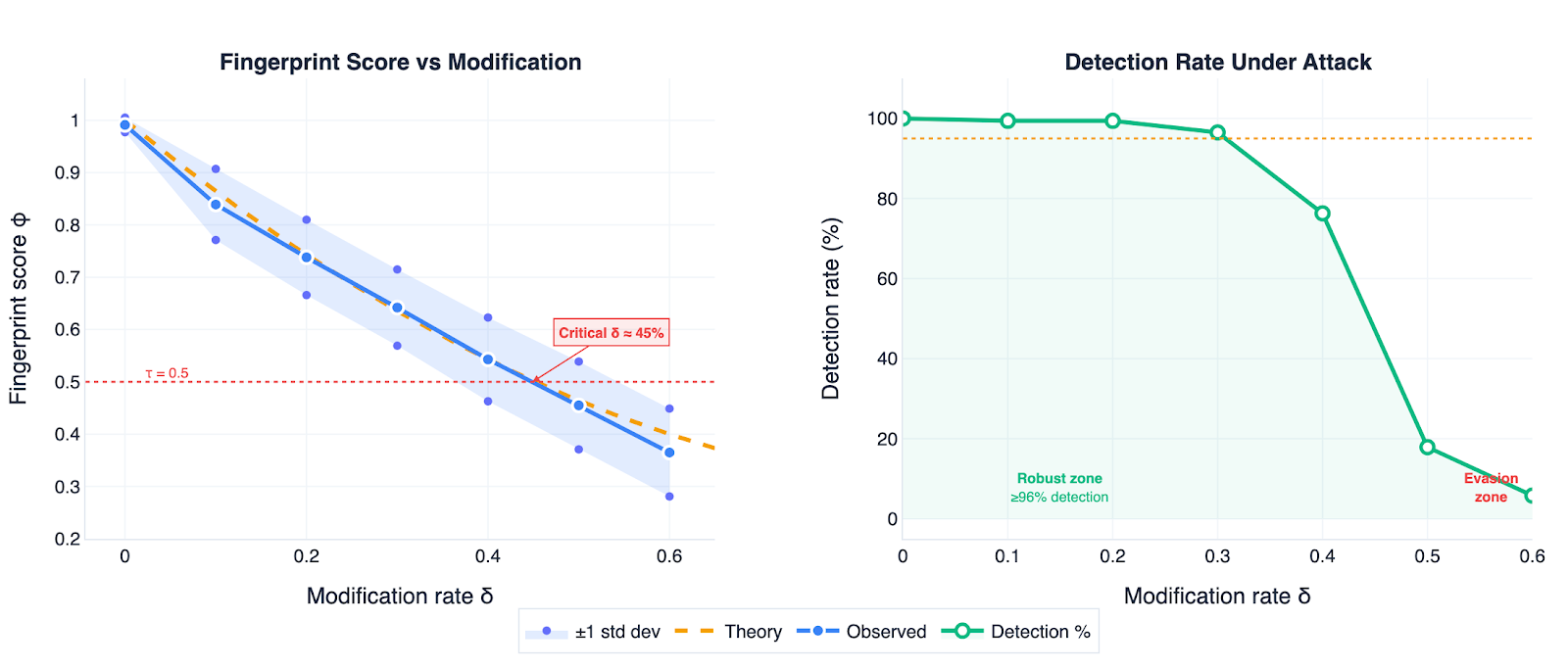

Second, we know exactly how much editing the watermark survives. In the case of text revision, the fingerprint score can be calculated as follows:

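$$\mathbb{E}[\phi] = (1-\epsilon)^2 + \left[1-(1-\epsilon)^2\right]\frac{k}{S}$$

(where $\mathbb{E}[\phi]$ is the expected fingerprint score after editing, $\epsilon$ is the fraction of words changed (0 means no edits, 1 means every word was replaced), $k$ is the number of groups allowed at each step, and $S$ is the total number of groups.)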

The first part of the formula captures the word pairs that survived unchanged; the second part accounts for the fact that some of the newly generated random words might accidentally follow the cycle by chance.

Even if someone randomly swaps words, they would need to change at least 45% of them before the expected score falls below the 0.5 threshold when S = 7. In practice, this means rewriting nearly half the text. The bigger challenge is modern AI paraphrasing tools, which can rewrite sentences smoothly without obvious edits. These might break the watermark with fewer changes. We discuss this further in the Limitations section.
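The 45% figure can be checked numerically. The sketch assumes the expected score after random edits is (1 - ε)² + [1 - (1 - ε)²]·k/S, where ε is the fraction of words changed: a pair keeps its match only if both words survive, and otherwise matches by chance at rate k/S. Setting this equal to the threshold and solving for ε gives the break-even point. Function names are illustrative.

```python
import math

def expected_score(edit_fraction: float, k: int = 2, num_groups: int = 7) -> float:
    """Expected fingerprint score after replacing a fraction of tokens at random."""
    both_survive = (1 - edit_fraction) ** 2
    return both_survive + (1 - both_survive) * k / num_groups

def critical_edit_fraction(threshold: float = 0.5,
                           k: int = 2, num_groups: int = 7) -> float:
    """Edit fraction at which the expected score equals the detection threshold."""
    baseline = k / num_groups
    # Solve q + (1 - q) * baseline = threshold for q = (1 - edit_fraction)^2.
    both_survive = (threshold - baseline) / (1 - baseline)
    return 1 - math.sqrt(both_survive)

for eps in (0.0, 0.1, 0.3, 0.5):
    print(f"edit fraction {eps:.1f}: expected score {expected_score(eps):.3f}")

print(f"break-even edit fraction: {critical_edit_fraction():.3f}")
```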

Third, the watermark can't be forged or removed without the key. The secret key is the only way to know which group each token belongs to. Without it, the group assignments look completely random, so an attacker cannot reconstruct the pattern or produce a valid watermark.

Last, text quality takes a small, bounded hit. Restricting the vocabulary obviously affects text quality, but we can predict the cost, which grows only proportionally to log(S). On the reader's side, this is noticeable but not dramatic, and adding more groups makes it worse only slightly. In our experiments, perplexity increased from about 3.8 for unconstrained text to 4.2 with S=7.

Experimental Setup

We used Llama-3.2-3B-Instruct (vocabulary of ~128,000 tokens) with the soft cycle (k=2) on 173 Wikipedia prompts spanning science, history, tech, and culture, generating up to 200 tokens (~150 words) per prompt.

We varied the number of vocabulary groups (S ∈ {2, 4, 5, 7, 9, 11, 15}) and the percentage of tokens shared across different vocabulary groups (overlap level: 0%, 5%, 10%, 15%). At 0% overlap, each token belongs to exactly one group, strictly enforcing the cryptographic constraint, while higher overlap gives the model greater flexibility.

Across 28 configurations, we measured fingerprint scores, detection rate, false alarm rate, and perplexity. For results on Mistral-7B and GPT-2-XL, see Appendix B.

Results

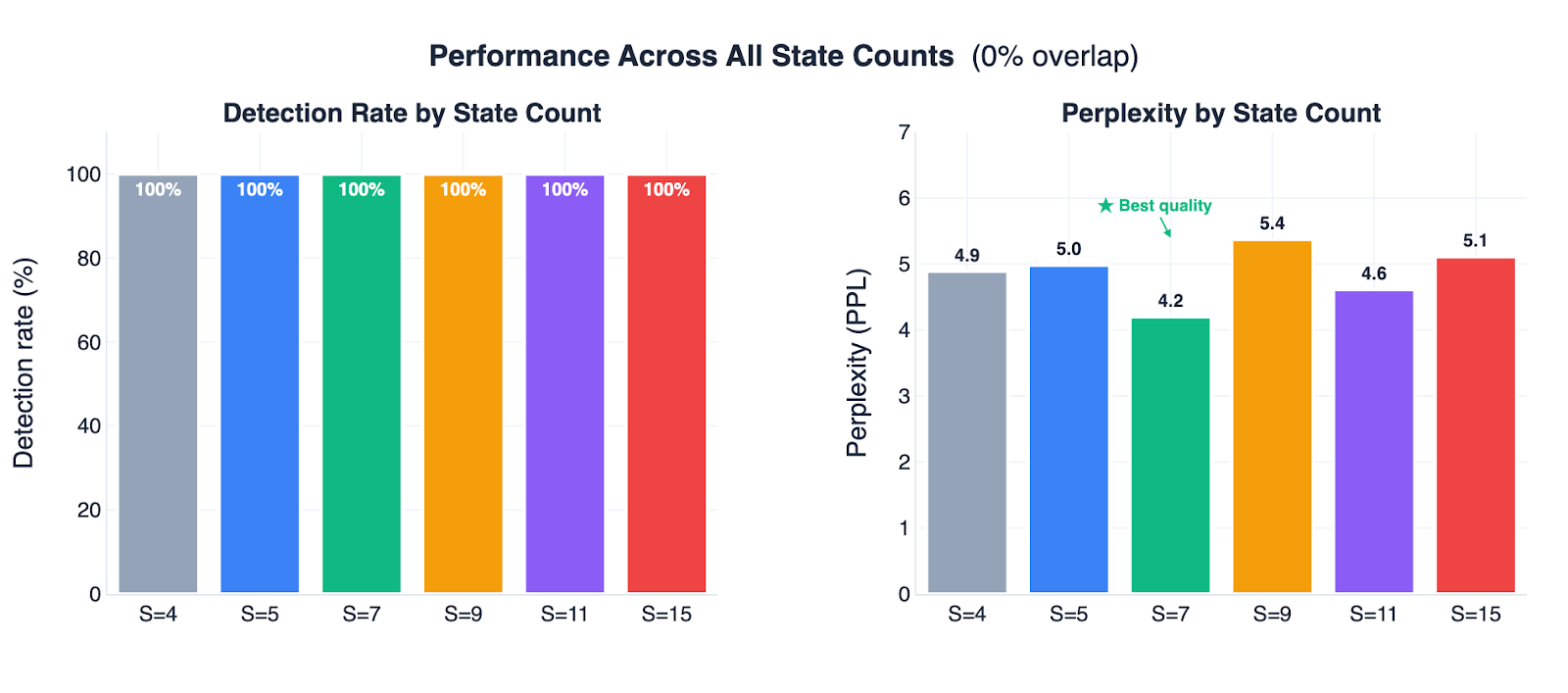

With S ≥ 5 and 0% overlap, every watermarked text scored higher than every non-watermarked text across all 173 prompts. A single threshold at 0.5 produced zero false positives and zero missed detections (Figure 3). Among all configurations that achieve perfect detection, S=7 produces the most natural-sounding text (perplexity 4.2), making it the best overall setting (Figure 4).

Figure 5 shows that the robustness formulas also held up empirically. Randomly replacing words weakens the watermark, but importantly, it follows the prediction from our formulas. Even when 30% of tokens were replaced, detection remained above 96%, matching theoretical predictions within 1–6 percentage points. To evade detection, attackers would need to corrupt nearly half the text.
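The random-replacement experiment can be mimicked on group indices alone, without running a language model. This Monte Carlo sketch is an idealization, not our experimental pipeline: it assumes replaced tokens land in a uniformly random group, and the seed, trial count, and helper names are arbitrary.

```python
import random

def fingerprint_score(groups: list, num_groups: int, k: int) -> float:
    """Fraction of consecutive pairs whose transition lands in the next k groups."""
    matches = sum(
        (b - a - 1) % num_groups < k
        for a, b in zip(groups, groups[1:])
    )
    return matches / (len(groups) - 1)

def soft_cycle_groups(n: int, num_groups: int, k: int, rng: random.Random) -> list:
    """Group sequence of an idealized watermarked text: each step advances 1..k groups."""
    groups = [0]
    for _ in range(n - 1):
        groups.append((groups[-1] + rng.randint(1, k)) % num_groups)
    return groups

def corrupt(groups: list, edit_fraction: float, num_groups: int, rng: random.Random) -> list:
    """Replace each position, with probability edit_fraction, by a random group."""
    return [
        rng.randrange(num_groups) if rng.random() < edit_fraction else g
        for g in groups
    ]

def mean_score_after_edits(edit_fraction: float, n: int = 200, num_groups: int = 7,
                           k: int = 2, trials: int = 300, seed: int = 0) -> float:
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        clean = soft_cycle_groups(n, num_groups, k, rng)
        total += fingerprint_score(corrupt(clean, edit_fraction, num_groups, rng),
                                   num_groups, k)
    return total / trials

eps = 0.3
predicted = (1 - eps) ** 2 + (1 - (1 - eps) ** 2) * 2 / 7
simulated = mean_score_after_edits(eps)
print(f"predicted {predicted:.3f}, simulated {simulated:.3f}")
```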

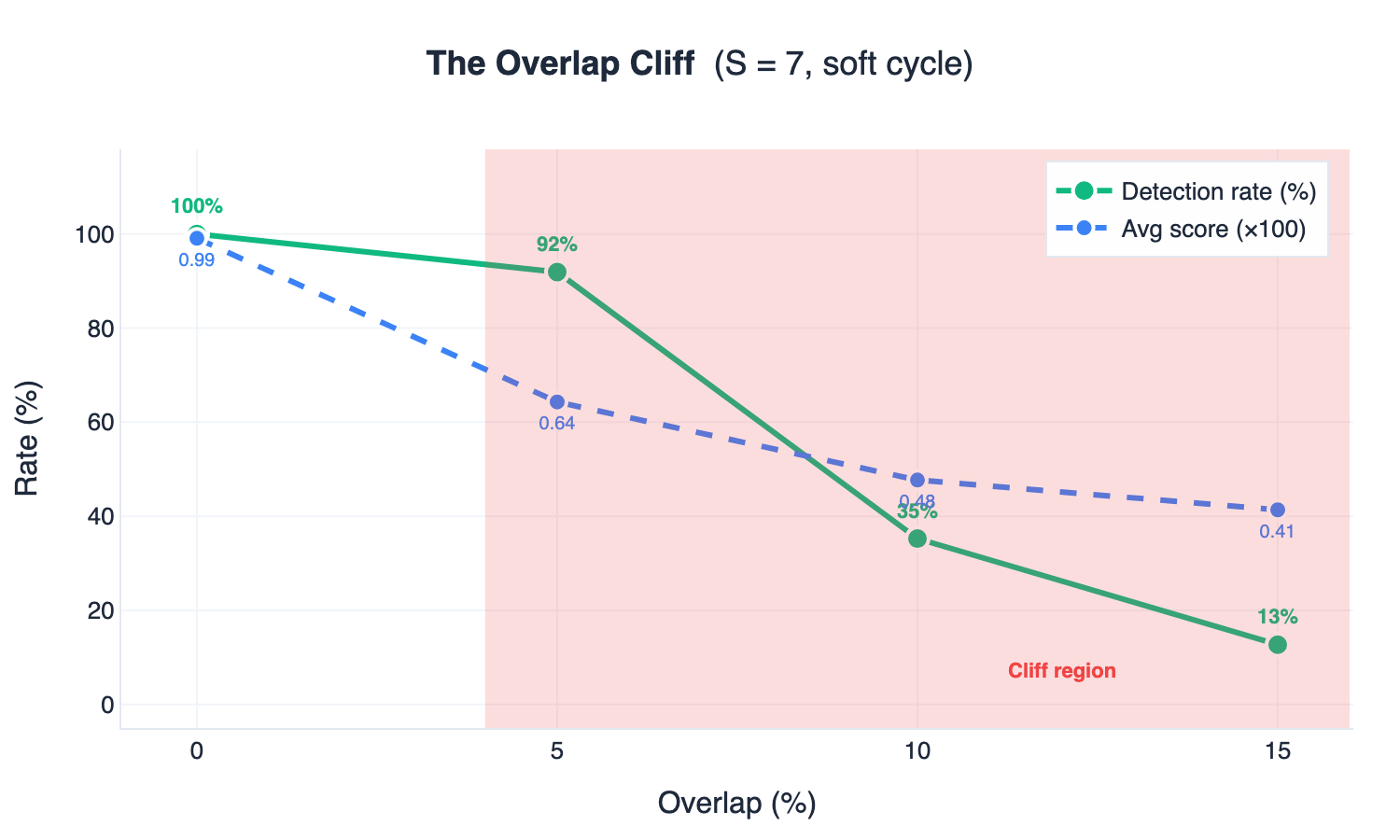

Beyond these findings, two failing configurations are worth flagging. First, S=2 with the soft cycle produces no signal, since every transition is allowed. Second, any overlap between groups sharply degrades performance. As shown in Figure 6, even 5% overlap at S=7 drops detection from 100% to 92%. Once tokens can belong to multiple groups, the cryptographic constraint no longer holds.

The results imply that MCL must be run at 0% overlap, but for Llama-3.2, the model still has roughly 18,000 tokens available at each step. So output quality is generally fine, though edge cases like code generation can be affected.

Limitations and open questions

Three issues deserve upfront acknowledgment.

Tokenizer coordination. MCL operates at the token level, and different models tokenize text differently. To verify a watermark, you need to know which tokenizer was used. The technical fix is straightforward: include the tokenizer information in the metadata (using C2PA or something similar). But getting all AI companies to agree on a metadata format before August 2026? That’s a coordination problem, not a technical one, and those are often harder to solve.

Untested against intelligent attacks. Our robustness tests only swapped out random words. A determined attacker would likely use another AI to paraphrase the text, which is easy to do and works well against most token-level watermarks. Our math covers any kind of token change, so the formulas still hold no matter how the text is edited. But we haven’t actually tested against AI-powered paraphrasing yet. The 30% random-replacement survival rate is promising, but it doesn’t show what happens if another LLM rewrites the text. That's the most important open question, and we plan to address it in follow-up work (including testing against GPT-5 and Claude).

The overlap cliff. The sudden drop in detection between 0% and 5% overlap (from 100% to 92% at S=7) needs more study. It may be possible to relax the cryptographic constraint into something softer that handles overlap more gracefully.

One promising direction is sparse watermarking. Instead of applying the rule to every word, we’d use it only every few words. This lets the AI write more freely and makes the text sound more natural, but the watermark would still remain.

Conclusion

Our method achieves 100% detection and 0% false alarms with a perplexity of 4.20 (S=7). It offers exponentially low false positive rates, exact robustness formulas, cryptographic unforgeability, and bounded quality costs. Finally, MCL has a practical advantage: verification does not require access to the original AI model. We hope it will help with compliance as the August 2026 deadline approaches.

Thanks to Apart Research for hosting the Technical AI Governance Challenge.

You can check our code here: github.com/ChenghengLi/MCLW

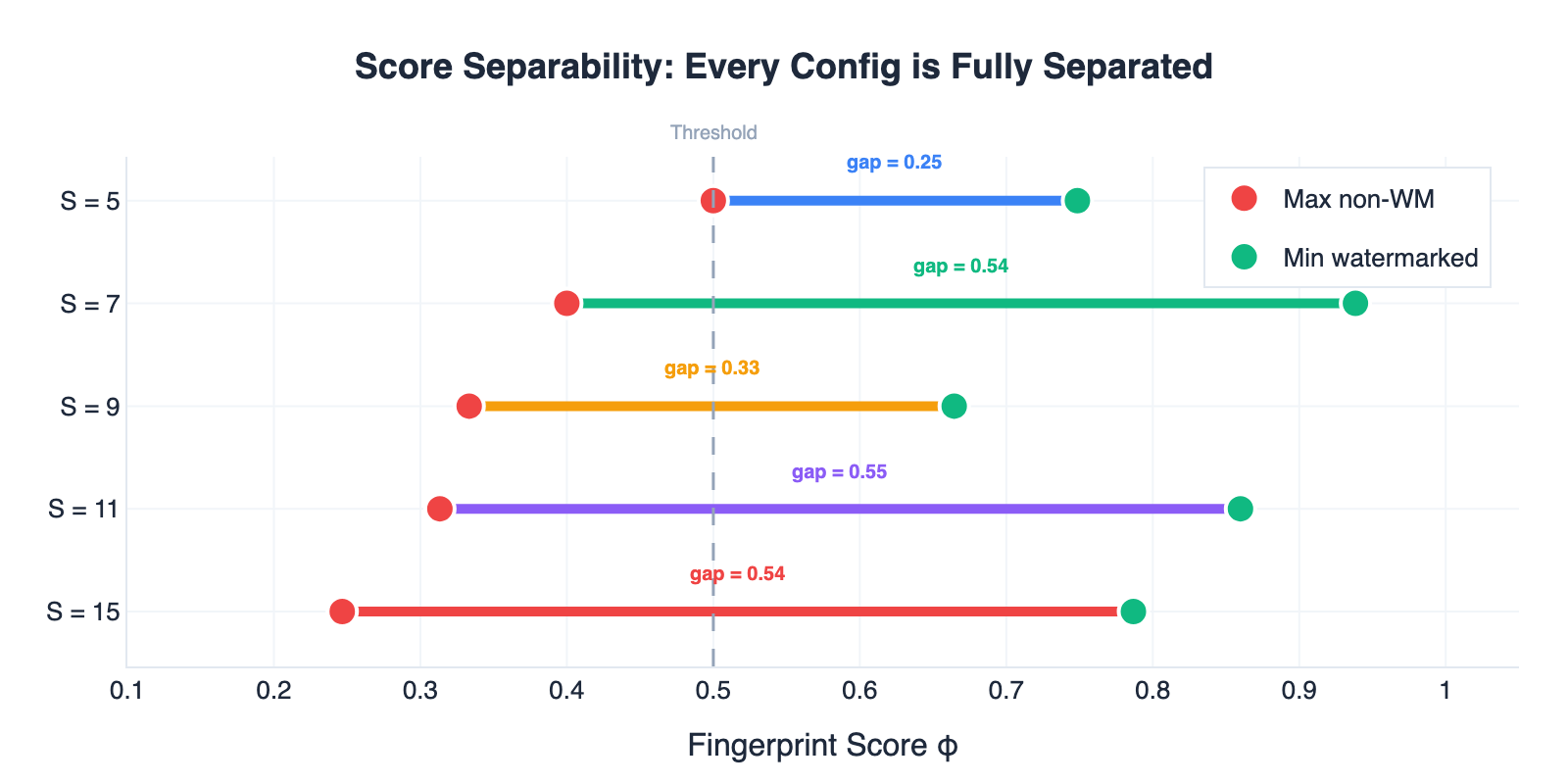

Appendix A: Score separability

To see whether watermarked and non-watermarked texts could be confused, we compared the lowest score of the watermarked samples against the highest score of the non-watermarked samples in every setting with S ≥ 5 at 0% overlap. In every case, the two sets of scores are completely separated (Figure 7).

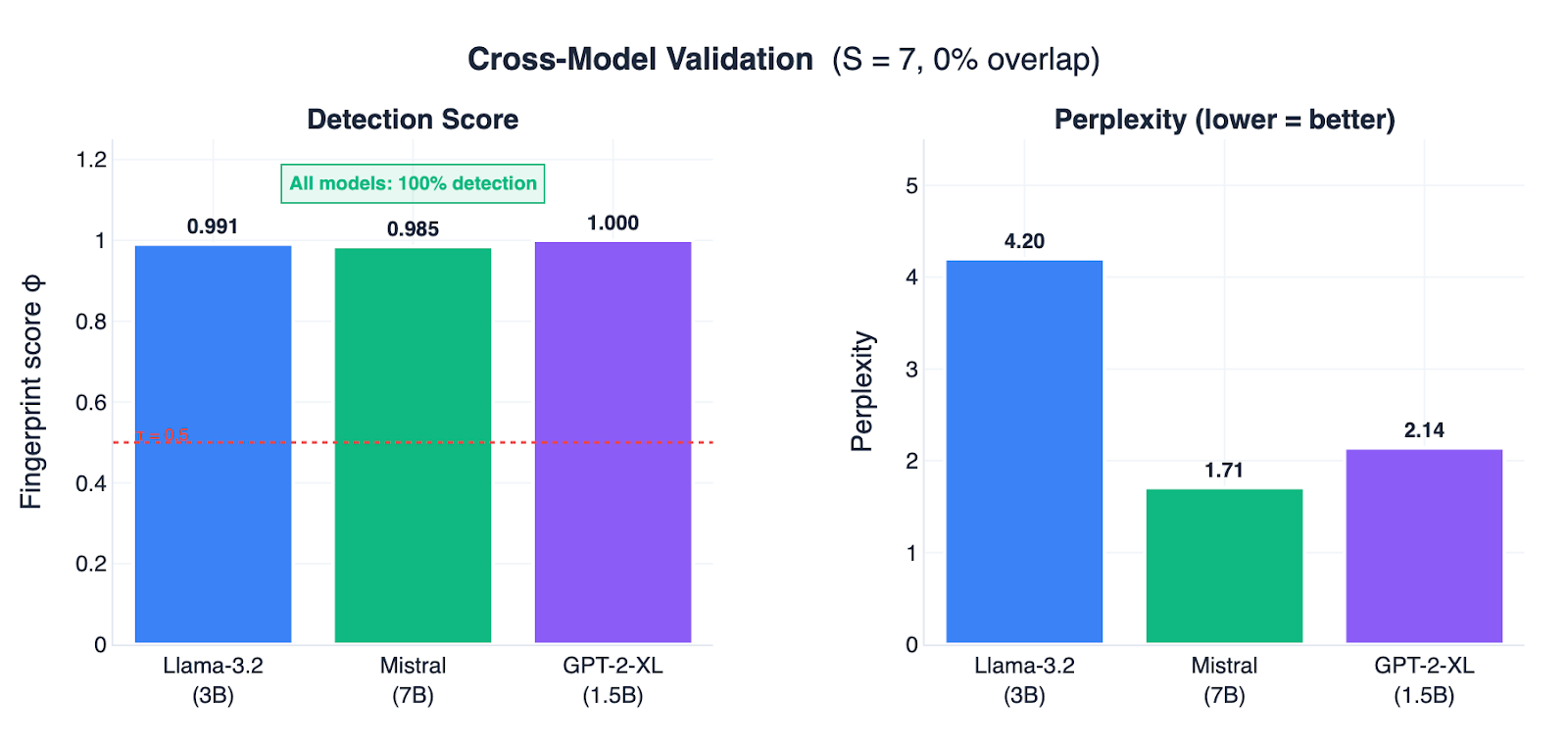

Appendix B: Cross-model validation

To check MCL's generalization, we ran the same 173 prompts on Mistral-7B-v0.3 and GPT-2-XL. All three models hit 100% detection at S=7 (Figure 8). The larger model (Mistral-7B) handled the vocabulary restriction much better, achieving a perplexity of just 1.71 compared to 4.20 for Llama.