John Salter

Bio

Founder of Overcome, an EA-aligned mental health charity

1. Get a pilot up and running NOW, even if it's extremely small.

You will cringe at this suggestion, and think that it's impossible to test your vision without a budget. Everyone does this at first, before realizing that it's extremely difficult to stand out from the crowd without a pilot. For you, maybe the pilot is a single class delivered in a communal area. Thirty students attending regularly, demonstrating a good rate of progress, is a really compelling piece of evidence that you can run a school.

- Do you have the resilience and organisation skills it takes to independently run a project?

- Will people actually use it?

- Can you keep your staff?

- Can you cost-effectively produce results?

A pilot can compellingly answer the questions above, whilst having a ton of other benefits.

2. YOU need to be talking to funders NOW

Don't fall into the trap of trying to read their minds. Get conversations with them. Get their take on your idea. Ask what their biggest concerns would be. Go address them. Repeat. Build relationships with them and get feedback on your grant proposals before submitting them.

As the founder, it's YOUR job to raise money. Don't delegate it. It'll take forever to get anyone else to understand your organisation well enough, they won't be as motivated to perform, and you won't learn. This is going to be a long-term battle that you face every year. You need to build the network, skills & knowledge to do it well.

3. Be lean AF

The best way to have money is not to spend it. Both you and your charity may go without funding for months or years. Spend what little money you have, as a person and as a charity, very slowly. The longer you've been actively serving users, the easier fundraising gets. It's about surviving until that point.

4. Funders will stalk your website, LinkedIn, and social media if they can

As much as possible, make sure that they all tell the same story as your grant application - especially the facts and figures.

5. When writing your proposals, focus on clarity and concreteness above all else

Bear the curse-of-knowledge in mind when writing. Never submit anything without first verifying other people can understand it clearly. Write as though you're trying to inform, not persuade.

- Avoid abstractions

- State exact values ("few" -> "four", "lots" -> "nine", "soon" -> "by the 15th March 2024")

- Avoid adjectives and qualifiers. Nobody cares about your opinions.

- Use language that paints a clear, unambiguous image in the reader's mind

OLD: "Mean student satisfaction ratings have greatly increased since programs began and we believe it's quite reasonable to extrapolate due to our other student-engagement enhancements underway and thus forecast an even greater increase by the end of the year"

NEW: When students were asked to rate their lessons out of 10, the average response was 5. Now, just three months later, the average is 7/10. Our goal is to hit 9/10 by 2025 by [X,Y,Z].

Good luck!

I think schlep blindness is everywhere in EA. I think the work activities of the average EA suspiciously align with activities nerds enjoy and very few roles strike me as antithetical. This makes me suspicious that a lot of EA activity is justified by motivated reasoning, as EAs are massive nerds.

It'd be very kind of an otherwise callous universe to make the most impactful activities things that we'd naturally enjoy doing.

Strongly upvoted because:

1. I think the Shamiri model is worth replicating in other places

2. Recent regulatory changes are fantastic times to start new orgs

That being said, your presentation of the idea here and in your longer pdf is weak.

1. There are lots of typos / formatting mistakes.

2. The information density is too low - I can't quite bring myself to read it for more than a few minutes.

3. Your call to action is a 50-page PDF. That's too large a first commitment. The type of people who'd fund this or want to collaborate don't have that type of time.

We don't have a public page for it; people sign up via word of mouth and invites via incubators. We handpick and train mental health coaches for EA founders from the people who got the best results for regular EAs. The thesis was that people who are founding or scaling an EA charity face a ton of mental health challenges that can be resolved quickly, and that resolving them helps both them and their charity succeed.

I figured getting the results would be the hard part, or convincing founders you could, but no. Within ~2 years, over half of AIM-incubated charities have had one or more founders successfully resolve one mental health problem with us. ~90% of people who do the first session complete the programme and ~50% decide to keep going after it ends to work on their next most pressing problem. This is waaaaaaaay better than our stats for regular EAs and regular people. Founders underinvest in themselves so hard, and are so focussed on making their organisation succeed, that tons of low-hanging fruit remain.

The problem is getting someone to fund it long-term:

- Early stage founders are broke, irrationally self-sacrificial, and time-poor

- Mental health funders, for good reason, care mostly about LMICs

- Meta funders, for good reason, don't want to choose for others what service would work best for them / their incubatees.

So, while finding seed funding to demonstrate POC was really easy, getting something durable isn't. Donors think incubators should fund it. Incubators think donors should; after all, it's an ecosystem-wide service.

It only costs ~$80k a year to run. I'll figure out a way to do it; the question is whether I can do that in time to avoid losing talent I can't replace. I have one coach with a ~90% success rate, who only costs ~$33k a year, considering quitting because she doesn't believe the job will exist in 2 years. The founders she supports collectively have a budget in the tens of millions and several are widely used as examples of EA's most successful ever charities. We can't replace her: she's dramatically better than anyone else we employ, miles better than me, and neither she nor I understand how she does it.

I don't really want a grant. I want some mechanism whereby we can be paid by results or just compete in an open-market that isn't so distorted by the expectation that donors will cover everything.

We're sadly no longer accepting sign-ups for our founders' programme. We've had an influx of demand and we're now fully at capacity for the foreseeable future. Its funding situation is precarious and I've sadly got to focus on that now. Results are nuts, but mental health funders are focussed on LMICs and meta funders don't like mental health interventions, so it's a challenging category to even survive in.

For now, I've got to focus on doing a good job for our existing clients. I'm sorry!

Hi there, Angel here, John's co-founder who ran the study.

Intervention

The four-week, one-to-one coaching programme combined motivational interviewing and cognitive behavioural therapy techniques, delivered via weekly 60-minute video calls by trained volunteer coaches following a manualised guide.

Session 1 focused on identifying procrastination triggers and building personalised action plans. Sessions 2-3 reviewed progress and addressed unhelpful thoughts and emotions through cognitive restructuring, behavioural activation, and self-monitoring. Session 4 consolidated learning and built a long-term maintenance plan.

Coaches

Outside of this RCT, Overcome runs a three-month internship programme for aspiring mental health professionals to get training and hands-on experience delivering coaching. The first month of this internship is a full-time training programme on techniques from MI, CBT, and acceptance and commitment therapy, including workshops, readings, role-plays, and assessments. In the latter two months of the internship, the interns coach adults across the globe to build healthy habits or manage low mood/worries.

For this RCT, five volunteer coaches from Overcome were trained and delivered the procrastination coaching programme. The selected coaches performed strongly in their initial training and had promising client outcomes.

To prepare, the selected coaches received two hours of study-specific training, including a workshop on the intervention protocol and a role-play session. They also attended weekly one-hour group supervision with a counselling psychologist to discuss and troubleshoot client cases.

Hi there, Angel here, John's co-founder who ran the study.

1) Question on "20 multiply imputed datasets"

a) What does this mean? What are you imputing and how are you imputing it?

Excluding 3 participants who later withdrew, we had 114 participants in the trial. However, only 94 participants had complete data (pre & post for control; pre, post & follow-up for intervention). That is, around 82% of participants were complete cases. One way of handling missing data is multiple imputation, which makes plausible guesses about missing values using the information you do have. I used multiple imputation (in R) to create 20 complete datasets of all 114 participants, with guesses based on each participant's demographics (age, gender, group) and outcome scores (pre, post, and follow-up scores; follow-up for the intervention group only).
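For readers who haven't seen multiple imputation before, here is a minimal Python sketch of the general idea. It is not the study's R code. It assumes a single predictor (the pre score), fills each missing post score with a regression guess plus random noise, repeats this 20 times, and then combines the 20 results with Rubin's rules, which is a common pooling approach but is an assumption here. All function names and the toy scores are hypothetical, and a fuller implementation would also draw the regression coefficients afresh for each dataset.

```python
import random
import statistics


def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x; returns (a, b, residual_sd)."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    a = my - b * mx
    residuals = [y - (a + b * x) for x, y in zip(xs, ys)]
    sd = (sum(r * r for r in residuals) / (len(xs) - 2)) ** 0.5
    return a, b, sd


def impute_once(pre, post, rng):
    """Copy `post`, replacing each None with a noisy regression guess from `pre`."""
    observed = [(x, y) for x, y in zip(pre, post) if y is not None]
    a, b, sd = fit_line([x for x, _ in observed], [y for _, y in observed])
    return [y if y is not None else a + b * x + rng.gauss(0, sd)
            for x, y in zip(pre, post)]


def multiply_impute(pre, post, m=20, seed=0):
    """Create m completed versions of `post`, each with different random guesses."""
    rng = random.Random(seed)
    return [impute_once(pre, post, rng) for _ in range(m)]


def pool_means(datasets):
    """Rubin's rules for a mean: pooled estimate and its total variance."""
    m = len(datasets)
    estimates = [statistics.fmean(d) for d in datasets]
    within = statistics.fmean([statistics.variance(d) / len(d) for d in datasets])
    between = statistics.variance(estimates)
    return statistics.fmean(estimates), within + (1 + 1 / m) * between


# Hypothetical toy scores: two participants are missing their post score.
pre_scores = [10, 12, 14, 16, 18, 20]
post_scores = [8, 9, None, 13, None, 15]
completed = multiply_impute(pre_scores, post_scores, m=20, seed=1)
pooled_mean, pooled_variance = pool_means(completed)
```

The point of keeping 20 datasets rather than one is that the spread between them feeds into the pooled variance, so the uncertainty from guessing the missing values is not hidden.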

b) What are the results if you don't do any imputation?

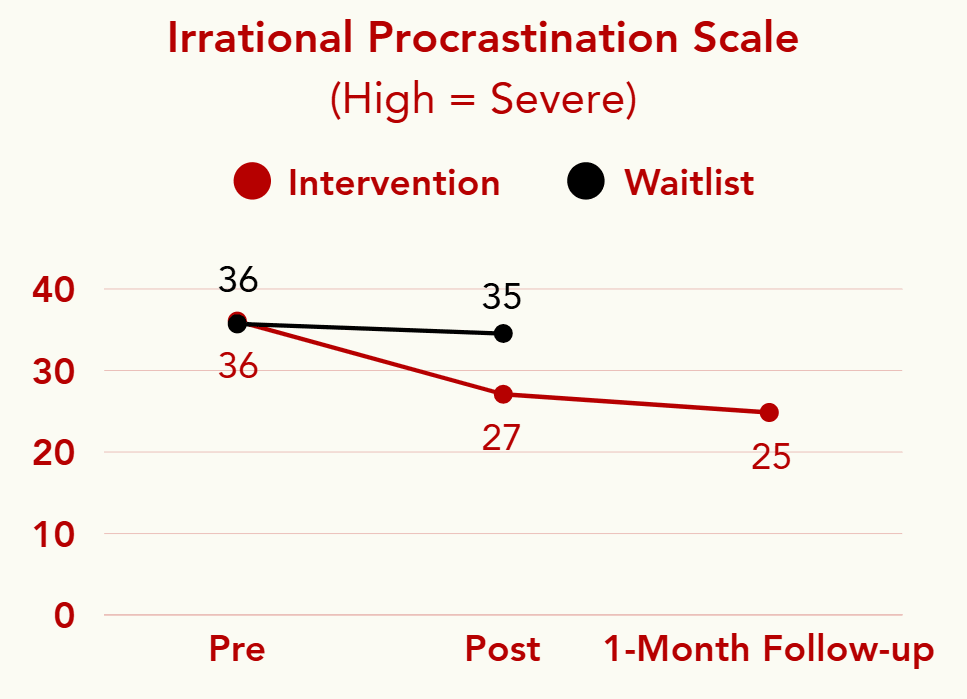

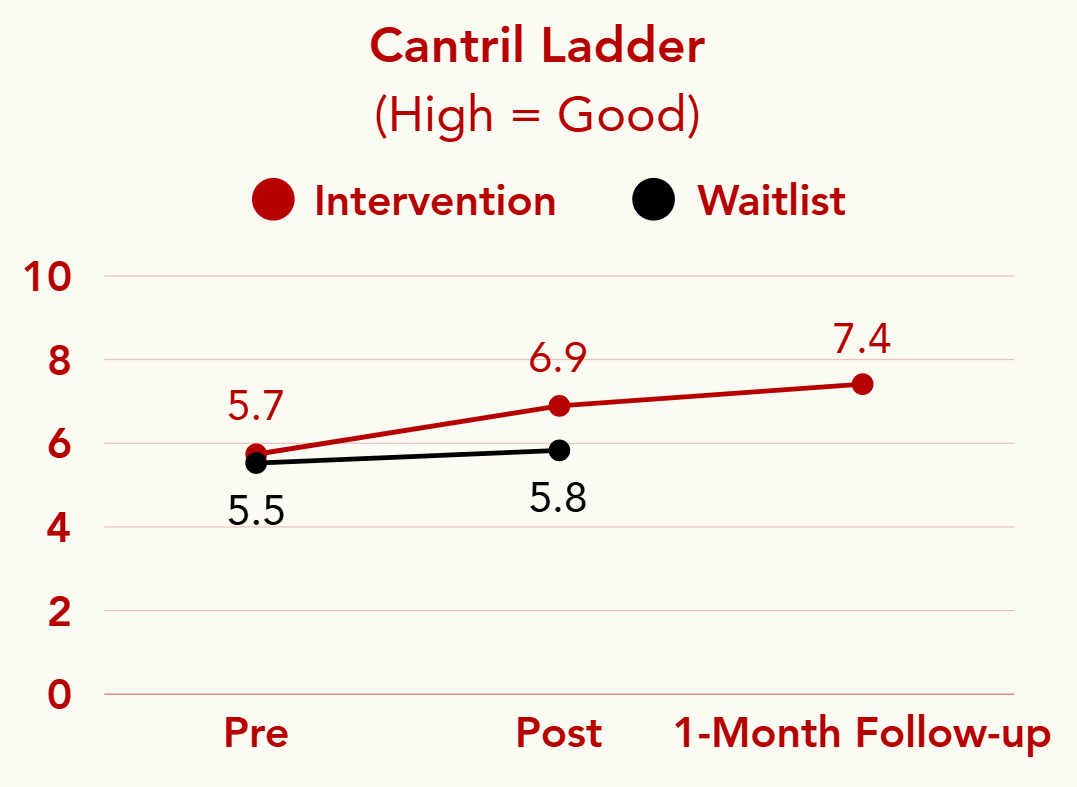

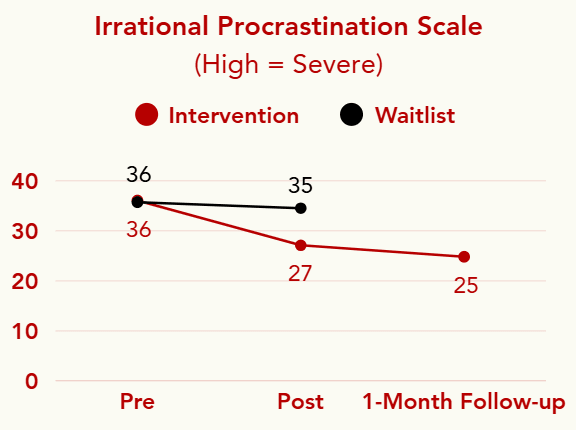

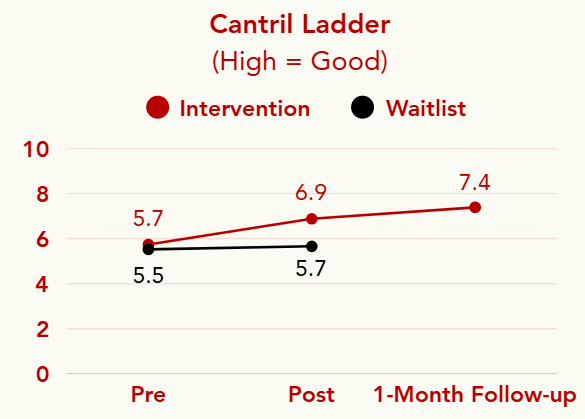

See the attached graphs for the results of the complete-case analysis of 94 participants. The results are fairly similar:

Procrastination: The four-week intervention led to a statistically significant reduction in procrastination compared to the waitlist (p < .001, Cohen’s d = 1.56). Effect size is comparable to 10-week internet CBT guided by professional therapists (Rozental et al., 2017).

Life satisfaction: The four-week intervention led to a statistically significant increase in life satisfaction compared to the waitlist (p < .001, Cohen’s d = 0.95).

2) Question on 1-month follow-up effects

You raise a fair point. The 1-month follow-up was only collected for the intervention group. The waitlist control group began the intervention immediately after the waitlist period ended, and time constraints meant we couldn't collect follow-up data from them.

Because of this, we didn't compare intervention and control groups at follow-up. Instead, the claim that effects were maintained is based on within-group comparisons for the intervention group only (post-intervention vs. 1-month follow-up), using Hedges' g rather than Cohen's d to reflect this. We should have been clearer about that in the post.
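For anyone unfamiliar with the two effect sizes, the sketch below shows how they relate: Hedges' g is Cohen's d multiplied by a small-sample correction factor. It is a minimal Python illustration, not the study's analysis code. It assumes the standard two-independent-groups formula with a pooled standard deviation; the exact variant used for the within-group comparison is not stated here, and the function names are hypothetical.

```python
import statistics


def cohens_d(group_a, group_b):
    """Standardised mean difference using the pooled sample standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    var_a = statistics.variance(group_a)
    var_b = statistics.variance(group_b)
    pooled_sd = (((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2)) ** 0.5
    return (statistics.fmean(group_a) - statistics.fmean(group_b)) / pooled_sd


def hedges_g(group_a, group_b):
    """Cohen's d shrunk by the usual approximate small-sample correction."""
    df = len(group_a) + len(group_b) - 2
    correction = 1 - 3 / (4 * df - 1)
    return cohens_d(group_a, group_b) * correction
```

With large samples the correction factor is close to 1, so d and g barely differ; with small samples g is noticeably smaller than d.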

You're right that without a control group follow-up, we can't rule out that scores would have continued to improve naturally over time. We're running a larger RCT with a longer follow-up period in the future and plan to collect follow-up data from the control group too, which will let us make stronger claims about longer-term effects.

Quick answers

The control group was a four-week waitlist. The 1-month follow-up was only collected for the intervention group, because the waitlist group started the intervention as soon as the waitlist ended and the project had time constraints.

When analysing the results with 20 multiply imputed datasets:

1. Procrastination: Statistically significant pre-post reduction in intervention compared to waitlist (p < .001, Cohen’s d = 1.52). Within intervention group, further small reduction at one-month follow-up compared to post-intervention (n = 47, p < .001, Hedges’ g = 0.36).

2. Life satisfaction: Statistically significant pre-post increase in intervention compared to waitlist (p < .001, Cohen’s d = 0.93). Within intervention group, further small increase at one-month follow-up compared to post-intervention (n = 47, p < .001, Hedges’ g = 0.42).

Detailed version: https://www.canva.com/design/DAGqbQzPKJY/8NkRiubgsgDUpDPIbIkwqg/edit?utm_content=DAGqbQzPKJY&utm_campaign=designshare&utm_medium=link2&utm_source=sharebutton

How Fran goes out of her way to acknowledge the good, even after a genuinely awful experience, is a testament to her truth-seeking.

- She calls the CEO's final apology genuine and says she appreciated it.

- She's enthusiastic about the new HR hire.

- She praises her line manager who otherwise might have faced a ton of undue scrutiny.

Perhaps largely due to this, the comment section has remained unusually civil and constructive for something so scandalous. As bad as it would be to punish CEA for allowing this post to happen, it'd be even worse if despite it nothing changes. I really hope this works out for both parties!

I think there's a ton of obvious things that people neglect because they're not glamorous enough:

1. Unofficially beta-test new EA stuff e.g. if someone announces something new, use it and give helpful feedback regularly

2. Volunteer to do boring stuff for impactful organisations e.g. admin

3. Deeply fact-check popular EA forum posts

4. Be a good friend to people doing things you think are awesome

5. Investigate EA aligned charities on the ground, check that they are being honest in their reporting

6. Openly criticise grifters who people fear to speak out against for fear of reprisal

7. Stay up-to-date on the needs of different people and orgs, and connect people who need connecting

In general, looking for the most anxiety-provoking, boring, and lowest social status work is a good way of finding impactful opportunities.