All of philosophytorres's Comments + Replies

To be clear, everything they complain about was after I left the project (so far as I know). I was as surprised as anyone else to read Zoe's EA Forum post -- I hadn't even seen a draft of it, and didn't know she'd written it. Their complaints had nothing to do with me having worked on an early draft of the paper!

You are defending yourself against something I did not accuse you of.

I claimed that what you do is insinuation. This indeed implies precisely what you have just claimed: that you never directly write "X is a white supremacist" or "X is a racist".

You constantly retreat to the alleged fact that you have never said these things explicitly, which is why I was careful with my words.

It's not a "wild accusation", it's a reasonable characterization of very many tweets and articles of yours about figures in longtermism and EA. Have a nice day.

EA Forum moderators take note: I believe the individual above is the same who created these two Twitter accounts just a few days ago, both of which were used to harass me on Twitter: https://twitter.com/xriskology/status/1569401706999595009. I have screenshots of many of our exchanges if you'd like. Harassment on social media should warrant being banned from this website, especially when the harasser continues to conceal their identity. Please act.

(EDIT: Please note also that this "throwaway" account was created just this month. Are you, as a communi...

Please note also that this "throwaway" account was created just this month.

My prior is that the reason Throwaway151 posted under a new anonymous account is not that they want to harass you. Rather, it's that there is public evidence (your exchanges with Peter Boghossian) that you yourself harass those whom you perceive to be your enemies. Anonymity is not ideal but it's understandable given your history, in my opinion, even if you've admitted to and apologized for some of this past conduct.

Again, it goes without saying that none of this would j...

Are you, as a community, okay with people creating anonymous Twitter accounts and anonymous EA Forum accounts to share misleading and out-of-context screenshots about someone?

I am confident I speak for the community when I say: no, absolutely not. If you are being harassed on Twitter, by Throwaway151 or by anyone else, that is wrong and unacceptable. I'd be especially angry and concerned if Twitter harassment is coming from EAs, and I emphatically condemn any such behavior.

...Harassment on social media should warrant being banned from this website, espec

To recall, what you tweeted was this: "We had already finished a penultimate draft of the paper. I was removed. Forcibly. So much for academic freedom"

Did you or did you not, at the time, have definite evidence of being "removed forcibly" after the penultimate draft? It strains credulity that you could have been "misremembering" that this happened.

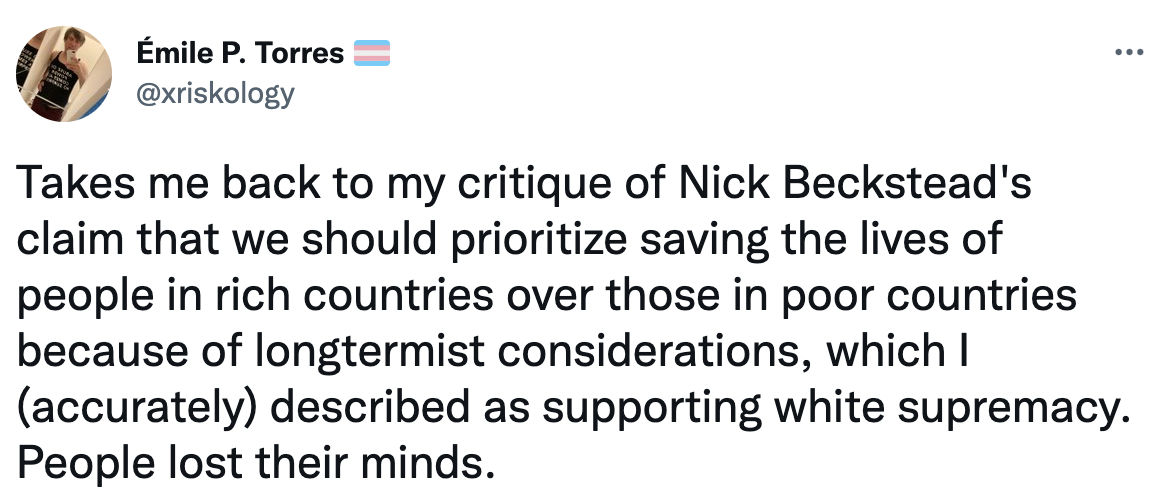

I looked into evidence for the quote you posted for one hour. While I think the phrasing is inaccurate, I’d say the gist of the quote is true. For example, it's pretty understandable that people jump from "Emile Torres says that Nick Beckstead supports white supremacy" to "Emile Torres says that Nick Beckstead is a white supremacist".

White Supremacy:

In a public Facebook post you link to this public Google Doc where you call a quote from Nick Beckstead “unambiguously white-supremacist”.

You reinforce that view in a later tweet:

https://twitt...

I don't know how to embed snapshots, but anyone who wishes is welcome to type "phil torres" into LinkedIn or email me for the snapshots I've just taken right now - it brings up "Researcher at Centre for the Study of Existential Risk, University of Cambridge". As I say, it's unclear if this is deliberate - it may well be an oversight, but it has contributed to the mistaken external impression that Phil Torres is or was research staff at CSER.

[Responding to Alex HT above:]

I'll try to find the time to respond to some of these comments. I would strongly disagree with most of them. For example, one that just happened to catch my eye was: "Longtermism does not say our current world is replete with suffering and death."

So, the target of the critique is Bostromism, i.e., the systematic web of normative claims found in Bostrom's work. (Just to clear one thing up, "longtermism" as espoused by "leading" longtermists today has been hugely influenced by Bostromism -- this is a fact, I believe, about intel...

You don't even have the common courtesy of citing the original post so that people can decide for themselves whether you've accurately represented my arguments (you haven't). This is very typical "authoritarian" (or controlling) EA behavior in my experience: rather than giving critics an actual fair hearing, which would be the intellectually honest thing, you try to monopolize and control the narrative by not citing the original source, and then reformulating all the arguments while at the same time describing these reformulations a...

Wow, this is absolutely stunning. I can't myself participate, but I genuinely hope this project takes off. I'm sure you're familiar with the famous (but now demolished) Building 20 at MIT: https://en.wikipedia.org/wiki/Building_20. It provided a space for interdisciplinary work -- and wow, the results were truly amazing.

Friends: I recently wrote a few thousand words on the implications that a Trump presidency will have for global risk. I'm fairly new to this discussion group, so I hope posting the link doesn't contravene any community norms. Really, I would eagerly welcome feedback on this. My prognosis is not good.

A fantastically interesting article. I wish I'd seen it earlier -- about the time this was published (last February) I was completing an article on "agential risks" that ended up in the Journal of Evolution and Technology. In it, I distinguish between "existential risks" and "stagnation risks," each of which corresponds to one of the disjuncts in Bostrom's original definition. Since these have different implications -- I argue -- for understanding different kinds of agential risks, I think it would be good to standardize the n...

No one knew I was involved, though. Honestly. All that happened after I'd moved on. I was as surprised as everyone else to read Zoe's EA Forum post.