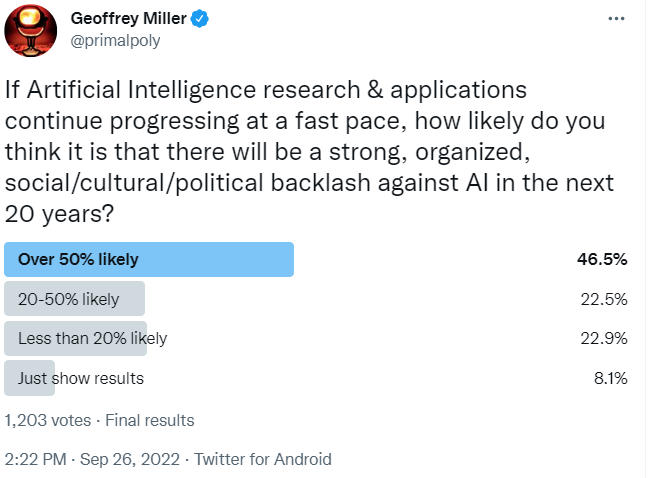

I ran a little Twitter poll yesterday asking about the likelihood of a strong, organized backlash against Artificial Intelligence (AI) in the next 20 years. The results from 1,203 votes are below; I'm curious about your reactions to these results.

Caveats about poll sampling bias: Only about 1% of my 124k followers voted (which is pretty typical for polls). As far as I can tell, most of my followers seem to be based in the US, with some in the UK, Europe, etc.; most are politically centrist, conservative, libertarian, or slightly Lefty; most are male, college-educated, and somewhat ornery. These results should not be taken seriously as a globally, demographically, or politically representative poll; more research is needed.

Nonetheless: out of the 1,106 people who voted for one of the likelihood options, about 69% expected more than a 20% likelihood of a strong anti-AI backlash. That's much higher than I expected, given that most people arguably carry around dozens of narrow AI systems every day in the form of apps on their smartphones.

We've seen timelines predicting when AGI will be developed. Has anyone developed any timelines about if/when an anti-AI backlash might develop, and/or considered how such a backlash might delay (or stop) AI research and development?

I'm familiar with arguments that there are irresistible geopolitical and corporate incentives to develop AI as fast as possible, and that formal government regulation of AI would be unlikely to slow that pace. However, the arguments I've seen so far don't seem to take seriously the many ways that informal shifts in social values could stigmatize AI research, analogous to the ways that the BLM movement, MeToo movement, anti-GMO movement, anti-vax movement, etc. have stigmatized some previously accepted behaviors and values -- even those that had strong government and corporate support.

PS I ran two other related polls that might be of interest: one on general attitudes towards AI researchers (N=731 votes; slightly positive attitudes overall, but mixed), and one on whether people would believe AI experts who claim to have developed theorems proving some reassuring AI safety and alignment results (N=972 votes; overwhelming 'no' votes, indicating high skepticism about formal alignment claims).

I think the fact that these results are surprising indicates that EAs underestimate how likely this is. AI has many bad effects: social media, bias and discrimination, unemployment, deepfakes, etc. Plus, I think sufficiently competent AI will seem scary to people; a lot of people aren't really aware of recent developments, but I think they would be freaked out if they were. I think we should position ourselves to utilize this backlash if it happens.

Yes, I think that once AI systems start communicating with ordinary people through ordinary language, simulated facial expressions, and robot bodies, there will be a lot of 'uncanny valley' effects, spookiness, unease, and moral disgust in response.

And once technological unemployment from AI really starts to bite into blue-collar and white-collar jobs, people will not just say 'Oh well! Life is meaningless now, and I have no status or self-respect, and my wife/husband thinks I'm a loser, but universal basic income makes everything OK!'

I agree, but with a caveat: EA should be willing to ditch any group that makes it a partisan issue, rather than a bipartisan consensus. Because I can easily see a version of this where it gets politicized, and AI safety starts to be viewed as a curse word similar to words like globalist, anti-racist, etc.

Tricky thing is, everything we can imagine tends to become a partisan, polarized issue, if it's even slightly associated with any existing partisan, polarized positions, and if any political groups can gain any benefit from polarizing it.

I have trouble imagining a future in which AI and AI safety issues don't become partisanized and polarized. The political incentives for doing so -- in one direction or another -- would just be too strong.

I think if there is major labor displacement by AI, there will be a backlash, just based on historical precedent. It would be much weirder if people were happy or even neutral about being replaced. The idea that large numbers of people will be able to be retrained, especially if they are middle-aged, is unrealistic, particularly since most of the jobs prone to replacement are low- or middle-skill, and retraining for a higher-skill job requires quite a bit of effort. The other side of the coin is that the demographic shift that will happen in the coming decades will keep the labor market tight enough to prevent automation from having such a huge impact. The increased automation may just make up for the lower labor force participation.

Good points. Collapsing birth rates and changing demographics might slightly soften the technological unemployment problem for younger people. But older people who have been doing the same job for 20-30 years will not be keen to 'retrain', start their careers over, and make an entry-level income in a job that, in turn, might be automated out of existence within another few years.

What do you imagine an "anti-AI backlash" would look like? Comparing it to BLM also seems a bit odd to me, especially since you say that BLM "stigmatized some previously accepted behaviors and values". What behaviours and values were stigmatized by BLM? And what behaviours and values do you see as having the potential to be stigmatized with regard to AI?

Behaviours like police traffic stops, disputing people of colour's lived experiences, or calling the cops in response to disturbances or crimes, and values like support for the police or fear of crime.

Lauren - I was thinking of how BLM stigmatized certain policing methods such as chokeholds, rough restraint tactics, etc. (that had been previously accepted).

The analogy would be, an anti-AI movement could stigmatize previously accepted behavior such as doing AI research without any significant public buy-in or oversight, which would be re-framed as 'summoning the demon', 'recklessly endangering all of humanity', 'soulless necromancy', 'playing Dr. Frankenstein', etc.

Thanks, that’s helpful.

More importantly, BLM backfired on its goals.

I sort of want to say that your movement examples are interesting mostly for their failure modes, although the anti-vax movement probably came closest to success. However, AGI is a new problem, so a sustained backlash could plausibly slow or stop AGI as long as it's bipartisan.

Yes; it might also happen that AGI attitudes get politically polarized and become a highly partisan issue, just as crypto almost did (with Republicans generally pro-crypto, and Democrats generally anti-crypto). Hard to predict which direction this could go -- Leftist economic populists like AOC might be anti-AI because of the unemployment and inequality effects; religious conservatives might be anti-AI based more on moral disgust at simulated souls.