Summary

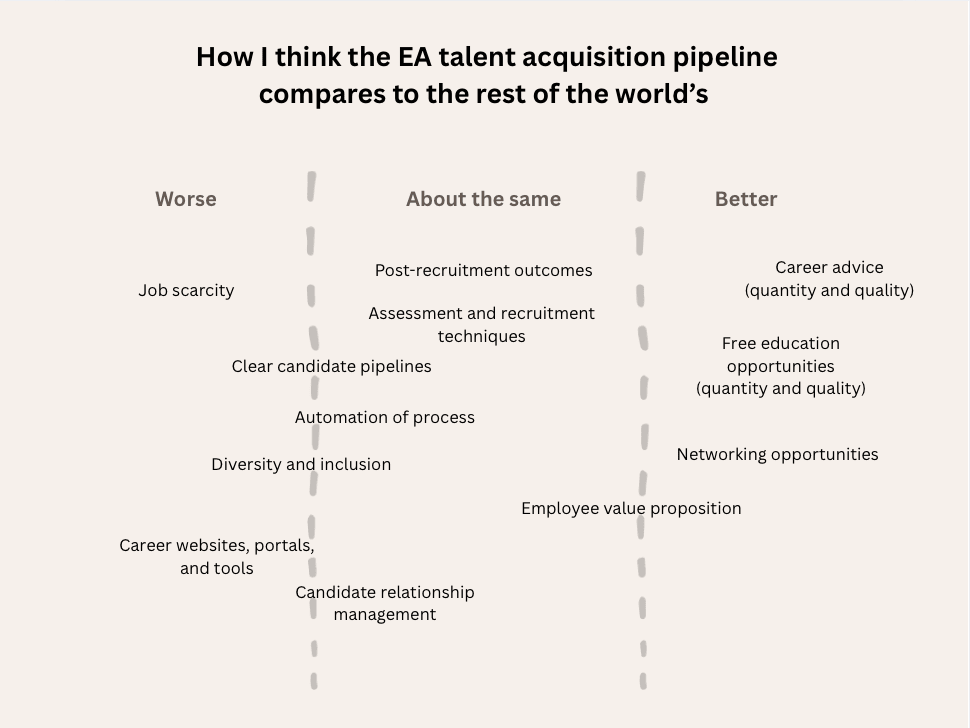

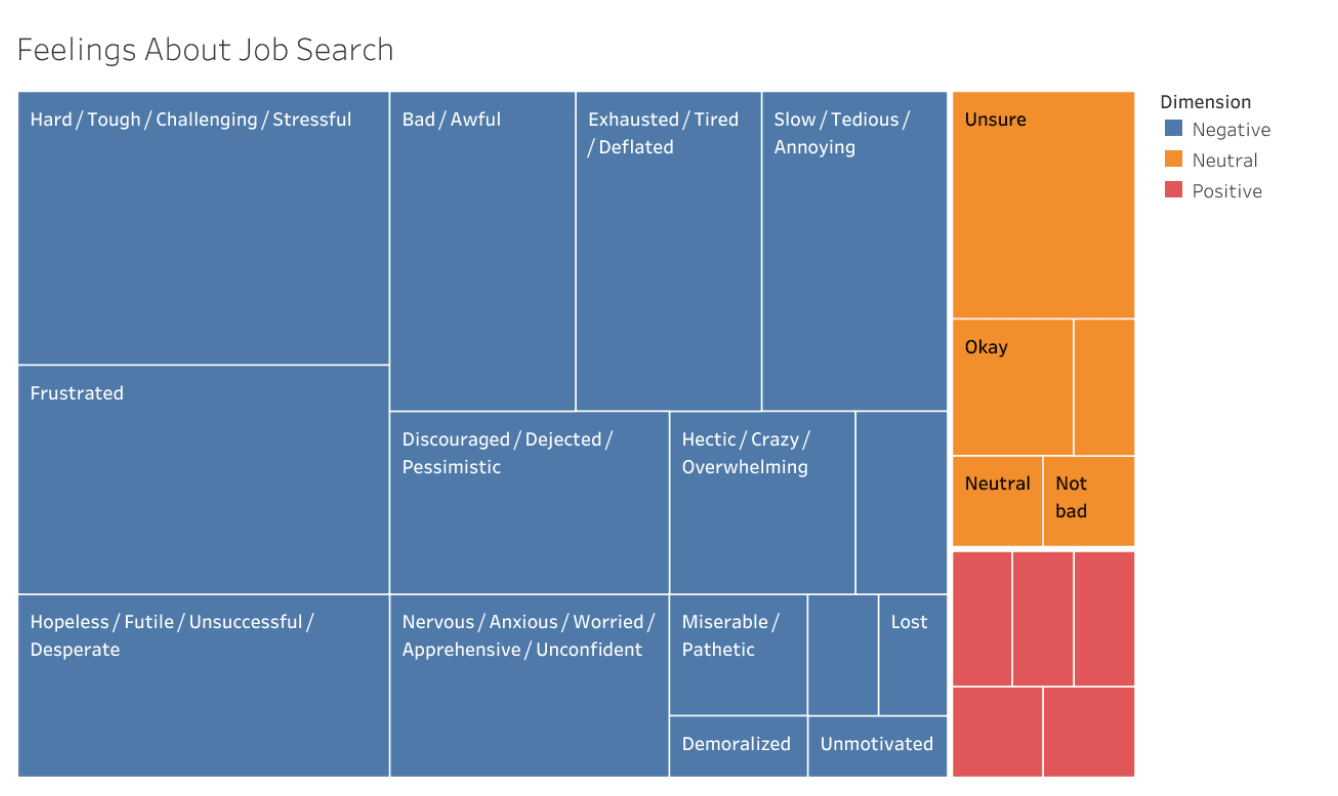

- The process of finding a job within EA is widely described as enormously inefficient, draining time and resources from applicants and organisations alike.[1] Although the difficulties around talent acquisition are largely representative of today's broader reality outside of EA, I argue that the particularities of the EA ecosystem offer unique opportunities to improve on this system. Many of the solutions proposed here have been proposed in whole or in part by different EA stakeholders, from applicants to organisations, through to EA members concerned about the negative impact that this has on the movement as a whole. See below for a list of relevant forum posts.

- To improve on the current situation, I make three concrete proposals:

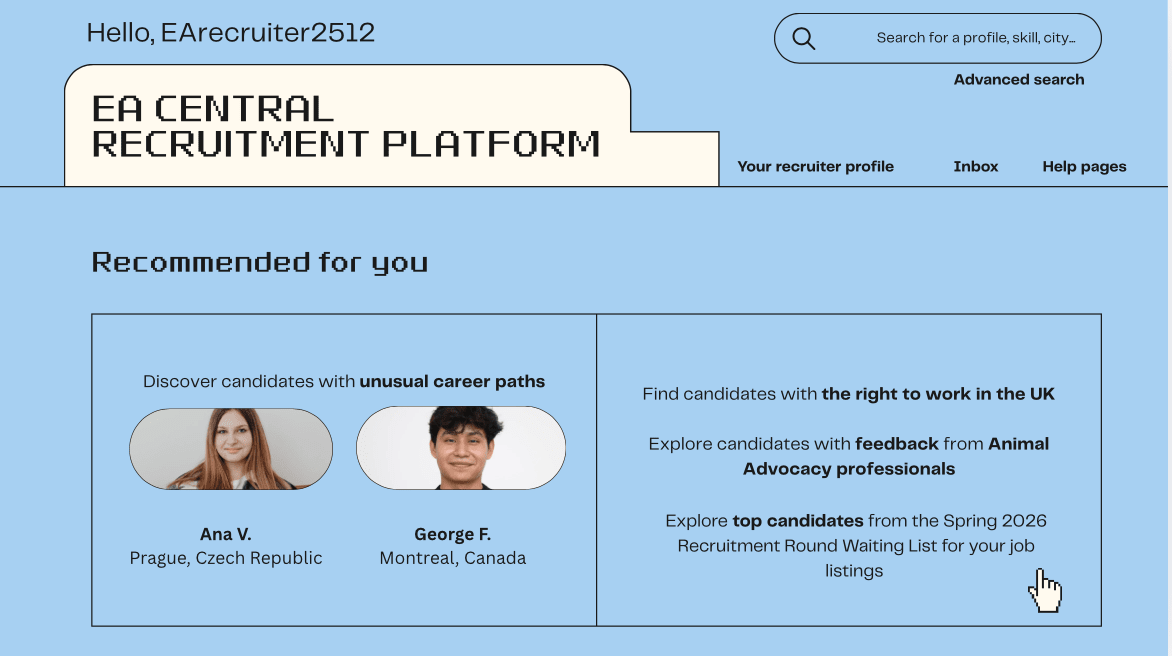

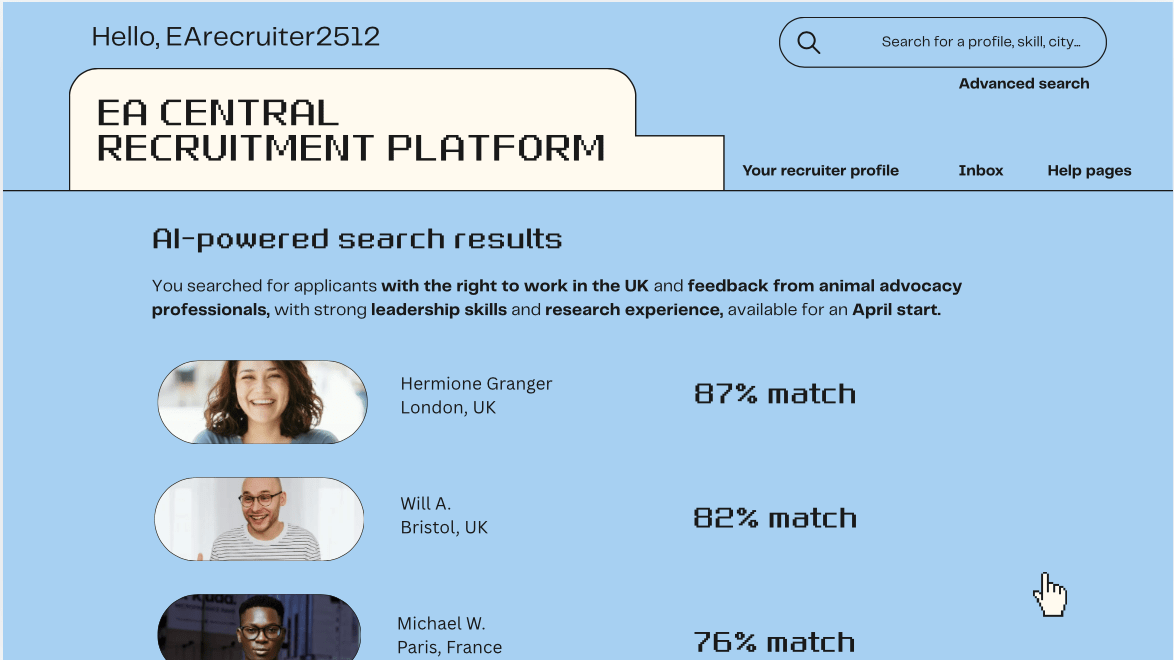

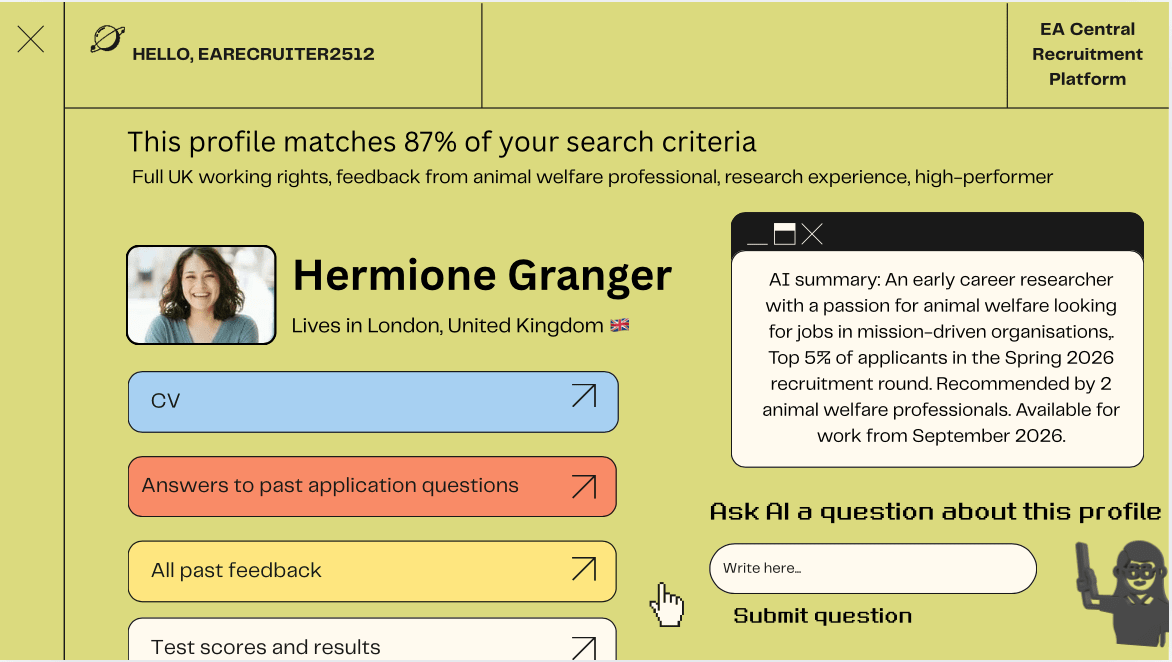

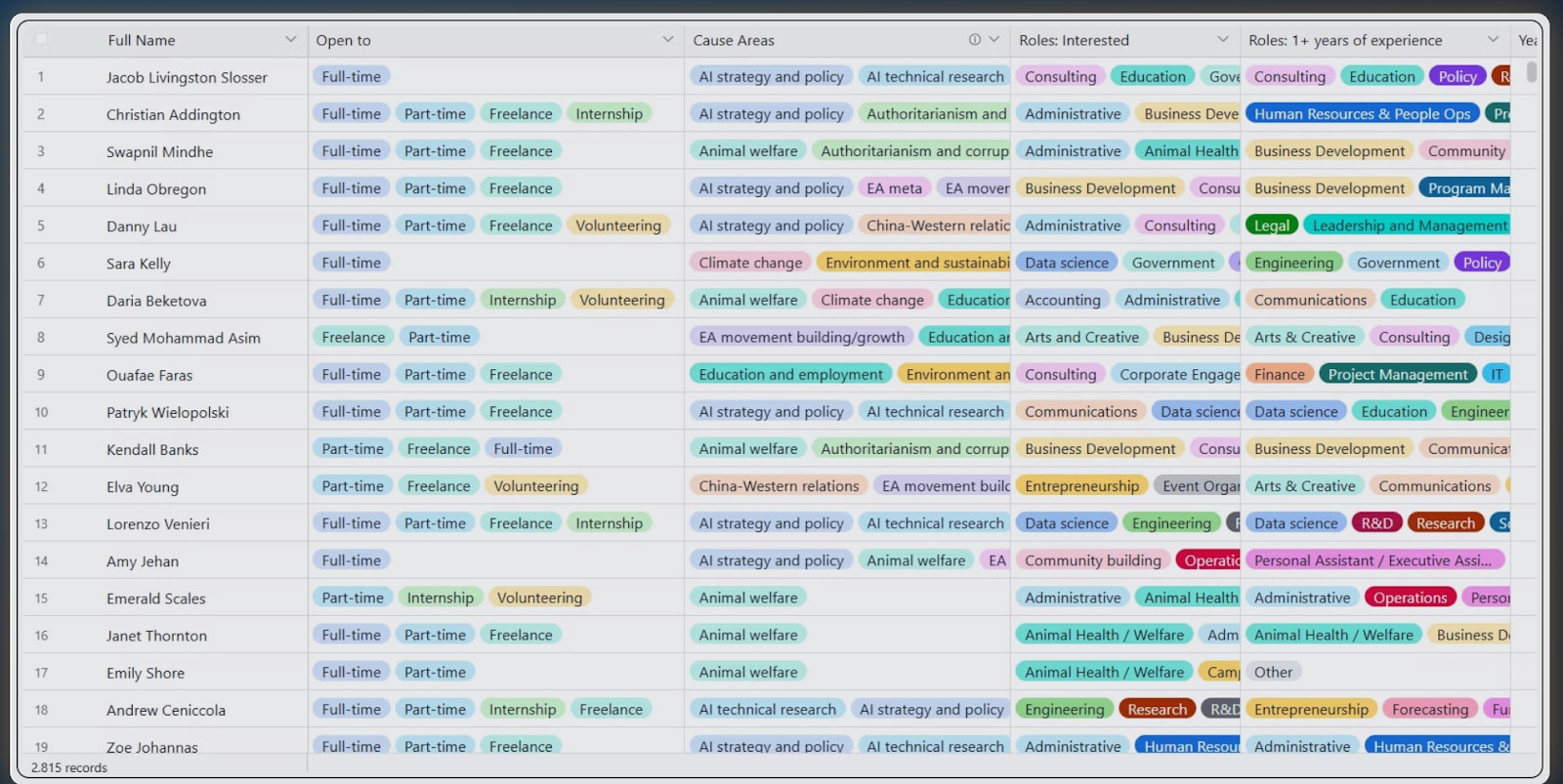

Proposal one: Decrease data-input repetition and the waste of high-quality data on candidates (e.g. careers advising session notes) by creating a purpose-built, centralised platform allowing candidates to consolidate all their career-related data-points in a single profile, including both candidate-inputted information and reviews of candidates by past career advisers/recruiters. A sort of “face book” where recruiters can window-shop for potential hires. A bit like LinkedIn, but this product would really be optimised for the needs of both EA candidates and recruiters (less flashy, but much more data-heavy, and with more options for advanced searches for candidate profiles than current products allow for).

Proposal two: Empower a wider sample of the job-hunting EA population by increasing the provision of specialised tests and excellence schemes that allow currently underrepresented and underutilised population segments within EA (e.g. people with a Humanities background, mid-career professionals, people from the Global Majority, differently-abled people) to prove their skills.

Proposal three: Increase the efficiency of the talent-acquisition process further by facilitating a shared recruitment process across several EA organisations, consisting of recruitment rounds utilising a single, ‘generalist’ application. This highly competitive process would test and rank candidates and only admit as many candidates to the job allocation stage as there are available job openings. Successful candidates would be automatically considered for all the available jobs within a round and allocated one based on best fit. This would enable a situation where all selected candidates get a job. Unsuccessful candidates would still benefit somewhat since their test results would be saved on the platform (proposal one).

- Aside from vast savings of time and resources, the reforms proposed above could also deliver collateral benefits such as increased diversity within EA, both from an EDI point of view and from a skillset point of view, and help make the EA talent acquisition process more aligned with core EA values such as mindful resource allocation (in this case, human resources, and time resources), caution against maximisation, and cooperation rather than competition.[2]

- Examples from other sectors (UK Civil Service Fast Stream, Oxbridge admissions, UCAS, UCAS Conservatoires, PwC...) and from within EA (Ambitious Impact Incubator Programme) show these suggestions working in practice.

- Out of the three proposals, No. 3 is the most ‘controversial’, because there appear to be conflicting views on whether a pooled recruitment process would be popular within EA organisations. I have found that evidence in both directions is scant and anecdotal. Either way, I strongly believe that examples from other sectors where this is implemented show that this system can work excellently. Separately, I also strongly believe it’s possible to convince a non-trivial number of organisations to use this method for at least some of their hires.

As a neglected, important, and tractable issue, the problem of improving job allocation within EA deserves our serious consideration.

Introduction: We all hoped EA would be better at talent acquisition

On 16th December 2024, @ElliotTep posted There is No EA Sorting Hat.[2] Here is a quote from it:

Question: How do you figure out how to do the most good with your career?

Answer: Find an EA sorting hat. Place it on your head. Let it read your mind. Listen carefully as it assigns you your chosen career track, or better yet, a specific role at a specific organisation. Well done. Work hard at your designated job and watch as the impact rolls in.

Wrinkle: There is no EA sorting hat. There is no omniscient individual or entity that will hear about your degree, your skills, your preferences, and your context, and spit out an ideal career.

By Author: DALL·E (OpenAI); prompts by Mαrti - Own work, Public Domain, https://commons.wikimedia.org/w/index.php?curid=171543578

What ElliotTep describes here is an expectation that I, too, had as a new EA: since EA as a movement puts so much emphasis on the idea of an impactful career, it must also be really good at diminishing inefficiencies in the job searching/talent acquisition process, compared to the baseline of the broader job-searching landscape. Clearly, I wasn’t alone in having this expectation; it must have been quite widespread amongst new EAs for ElliotTep to write an article about it. I was aware of how awfully soul-crushing it is to try and find a job nowadays, but, upon reading 80,000 Hours’ frankly stunning research on the art of deciding which career path is best for you, I thought, maybe, just maybe, such an enlightened group of people as Effective Altruists would have figured out a way to allocate talent to jobs that is better than the general public’s. But after exploring the EA talent acquisition ecosystem, this is what I found:

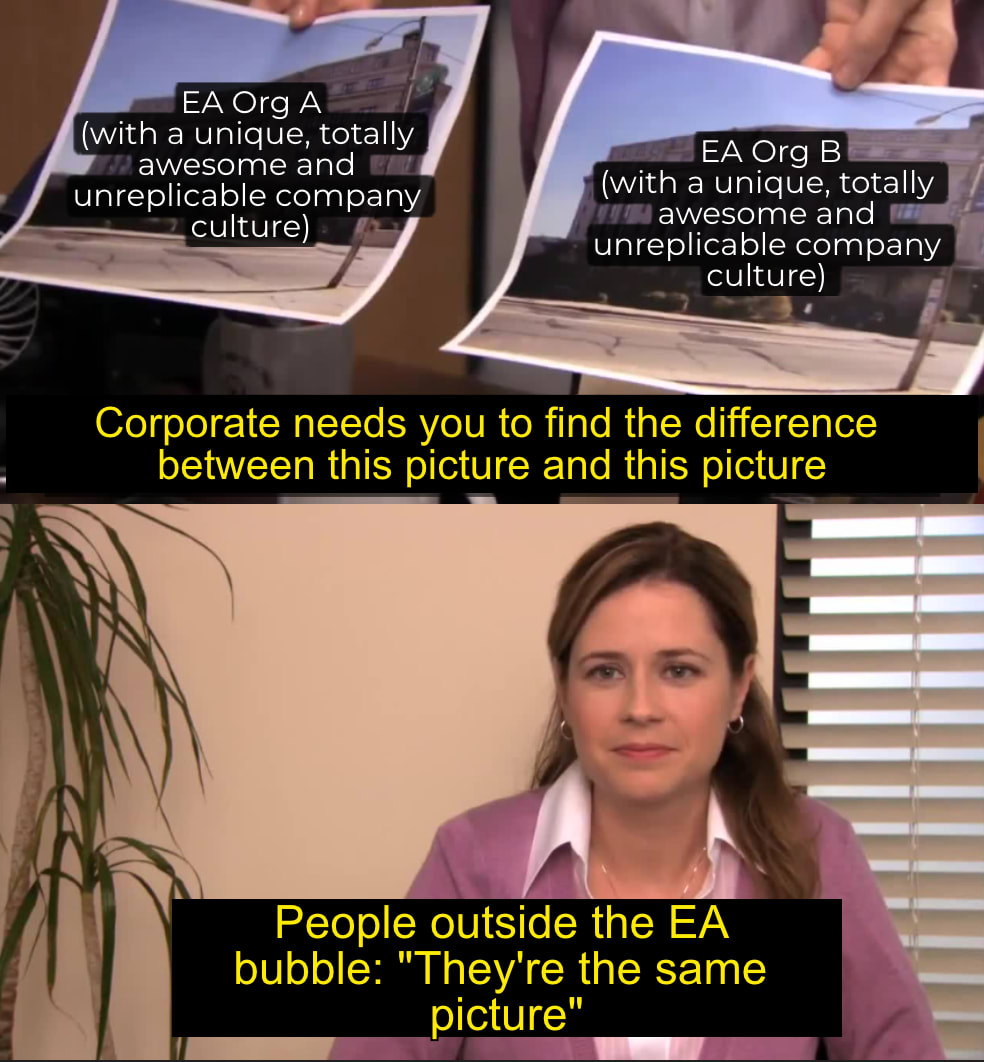

(Thank You For Your Time: Understanding the Experiences of Job Seekers in Effective Altruism)

In other words: although the EA movement really values and believes in the power of leveraging careers for impact, the processes of talent acquisition within it are not much better than the rest of the world’s.

This post makes three concrete proposals to improve the talent acquisition process within EA:

Proposal one: Create a purpose-built, centralised platform allowing candidates to consolidate all their career-related data-points in a single profile, including candidate-inputted information as well as reviews of candidates by past career advisers/recruiters, and more (e.g. test results).

Proposal two: Increase the provision of specialised tests and excellence schemes allowing currently underrepresented and underutilised population segments within EA (e.g. people with a Humanities background, mid-career professionals, people from the Global Majority, differently-abled people) to prove their skills.

Proposal three: Facilitate the creation of a shared recruitment process across several EA organisations, consisting of competitive recruitment rounds utilising a single, ‘generalist’ application. This competitive process would only admit as many candidates as there are job openings. Successful candidates would be automatically considered for all the available jobs within a round and allocated one based on best fit. This would enable a situation where all selected candidates get a job. Unsuccessful candidates would still benefit somewhat since their test results would be saved on the platform (proposal one).

Most of these proposals have been outlined in whole or in part by other posts, which I recommend reading to gain some additional context:

- What Happens After the Career Advice: The Case for a Shared EA Operations Hiring Platform by @Anaeli V. 🔹 (19 Feb 2026)

- 500k mid-career professionals want to do more good with their careers. Can we help them? by @Dom Jackman (11 Feb 2026)

- Recruitment is extremely important and impactful by Abraham Rowe (3 Nov 2025)

- There is No EA Sorting Hat by @ElliotTep (16 Dec 2024)

- Thank You For Your Time: Understanding the Experiences of Job Seekers in Effective Altruism by @Julia Michaels 🔸 (10 Jul 2024)

- Gaps and opportunities in the EA talent & recruiting landscape by @Anya Hunt and @KatieGlass (26 Aug 2022)

- Brief Presentation and Considerations for an EA Common Application by @che (4 May 2022)

- After one year of applying for EA jobs: It is really, really hard to get hired by an EA organisation by @EA applicant (26 Feb 2019)

When developing these proposals, as well as building on the research from other EA members on the topic of talent acquisition reform, I also drew on my personal experience as a concert pianist in the top 5% worldwide, newly converted to EA and trying to switch careers at age 27 to one optimising for impact, and on my current experience as a recruiter receiving far more excellent applications than I have available slots for.

N.B.: Please note that although the abovementioned proposals would in my opinion do wonders to improve the efficiency and quality of the talent acquisition within EA, they do not solve the problem of EA job scarcity relative to the number of people who want a job in EA-related fields. Although this is a very important problem, it is separate, and solving it would require a different set of strategies which we won’t be discussing here!

Acknowledgements: I sincerely thank @Kevin Xia 🔸, @SofiaBalderson, and @Anaeli V. 🔹 for providing me with invaluable feedback on an early draft of this post. I’d also like to thank all the authors of the articles I’ve based my research on, as well as @abrahamrowe from Good Structures, @Nina Friedrich🔸 from High Impact Professionals, and Gergő Gáspár @gergo from Effective Altruism UK who provided some useful context. All mistakes are mine.

Proposal One: A Centralised Recruitment Platform

Let me describe a couple of properties of this new product I’m proposing:

Property 1: It would avoid information duplication across several platforms

The above articles (especially this one and this one) often complain about the lack of a systematic, centralised CRM-style database of candidates, shared across multiple orgs.[3] This lack wastes a great deal of time for both applicants and recruiters, who keep having to either request (in the case of recruiters) or provide (in the case of applicants) the same information that other recruiters have already asked for in a different setting.

Property 2: It would allow candidates to input information about themselves in a non-restrictive way, to enable potential recruiters to gain a more holistic view about them

In such a platform, candidates would be able to upload all sorts of relevant information about themselves, like:

- Their CV

- Their working rights and visa permissions

- Their life story, goals and aspirations

- Results of tests, competitions…

Etc.

The amount of information provided could be as large or as small as the candidate wishes, and it would all be volunteered willingly so there are no confidentiality issues.[4] Concision in the materials provided would not be a requirement here, so candidates can be as thorough as they wish: this information is largely for the benefit of the LLM, which would conduct things like ‘advanced searches’ of the candidate database using as broad a set of parameters as recruiters might wish to use. This is where this purpose-built platform is superior to LinkedIn for our purposes: on LinkedIn you could never do a search like ‘Find candidates with UK working rights and experience in animal advocacy’; but on the platform discussed here, you could.
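To make the example search above concrete, here is a minimal sketch of what it boils down to once profiles hold structured data. Everything in it is an assumption made for illustration: the `CandidateProfile` fields, the tag vocabulary, and the `search` helper are hypothetical, and a plain filter stands in for the LLM-driven search the platform would actually use.

```python
from dataclasses import dataclass, field


@dataclass
class CandidateProfile:
    # Hypothetical fields, chosen only for illustration.
    name: str
    working_rights: set[str] = field(default_factory=set)   # e.g. {"UK", "EU"}
    experience_tags: set[str] = field(default_factory=set)  # e.g. {"animal advocacy"}


def search(profiles, country, experience):
    """Return profiles with working rights in `country` and the given experience tag."""
    return [
        p for p in profiles
        if country in p.working_rights and experience in p.experience_tags
    ]


# Made-up profiles, not real candidates.
profiles = [
    CandidateProfile("Candidate A", {"UK"}, {"animal advocacy", "operations"}),
    CandidateProfile("Candidate B", {"US"}, {"animal advocacy"}),
    CandidateProfile("Candidate C", {"UK", "EU"}, {"fundraising"}),
]

# 'Find candidates with UK working rights and experience in animal advocacy'
matches = search(profiles, country="UK", experience="animal advocacy")
print([p.name for p in matches])  # ['Candidate A']
```

The point of the sketch is only that the query becomes trivial once working rights and experience live in one profile; the LLM's job would be to translate a recruiter's free-text request, and the candidate's free-text materials, into filters like these.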

Unlike in many talent directories, as a candidate, you would have the option to update this info as much as you wanted, and you would be incentivised to do so to make sure the data doesn’t become stale.[5]

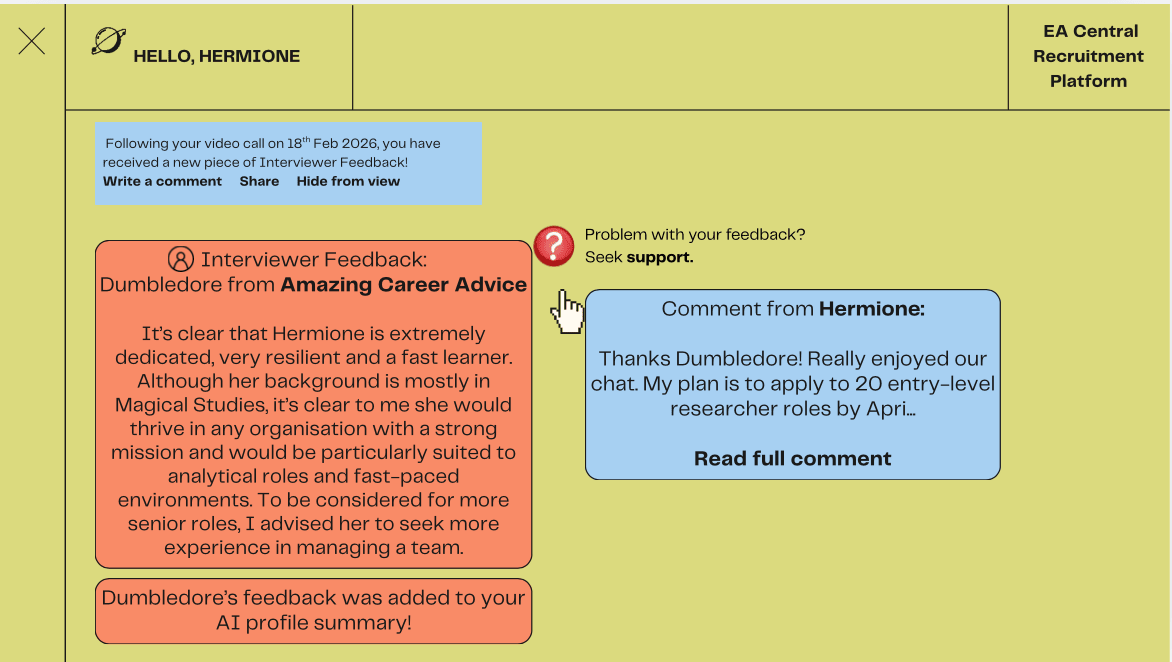

Property 3: It would include high-quality, currently underutilised data points such as notes from career-advising sessions

Several organisations (Probably Good, 80,000 Hours, local EA groups, etc.) offer high-quality career advising sessions, usually taking the form of a 45-minute call with an adviser after the candidate is asked to fill in a form explaining their story. From a call, a skilled adviser can infer and also test out many human aspects of the candidate that are often left out of CVs and interviews: the candidate's life story, their personality, their vibe, aspirations etc. There's no pressure to conform to any mould, as the adviser is looking at their profile in the abstract, not in relation to any job specification or organisation. EA-inclined career advisers are keen to advise the candidate really well because their morally-driven goals incentivise them to try to multiply their impact by helping someone else maximise their career's potential impact.

I think there is a wasted opportunity to make the insight of advisers following the call available to potential recruiters.

Career advisers in the EA community are highly trusted and usually remarkably insightful people who can provide an independent judgment on a candidate.

This added dimension of incorporating externally-provided views on a candidate would be a huge improvement on the current situation with talent directory listings, where you are only able to view unchecked, candidate-inputted information.

I know that, as a recruiter, I value the opinion of trusted people in my network a lot more than anything I could read on someone’s CV, as having this external opinion of someone who’s already interacted with a candidate is a powerful way to test assumptions I might have about said candidate.

As well as career advisers’ feedback, previous recruiters’ interview feedback could be similarly added to the platform.

With all pieces of feedback, users of the platform would be able to choose whether to display it publicly or hide it. There would also be options to comment on the feedback, for example, to shed some light on an uncertainty the feedback expressed about the candidate, or provide useful context.

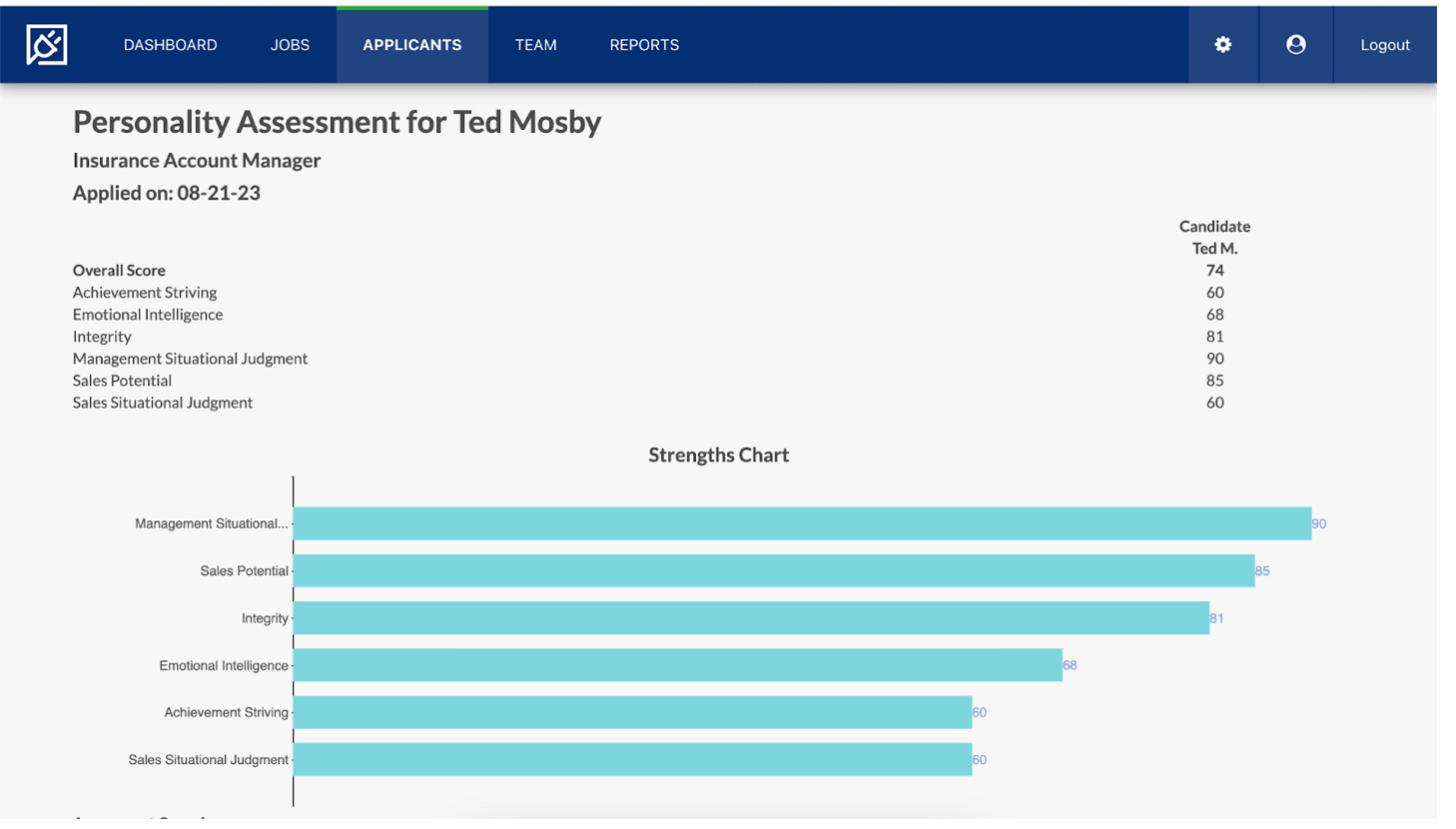

Going further… Property 4 (optional, nice to have): it could include state-of-the-art tests and competition results…

In this game, the candidate has to bank as much ‘money’ as possible by deciding to inflate a certain number of balloons. The more inflated the balloons, the more money they get, but if they inflate them too much, they may pop! Players can choose to bank a balloon at any time.

Games include exercises like decoding the five-digit lock on a safe, selecting the next number in a sequence and identifying how a person is feeling based on their facial expression.

IMO, someone's professional experience is best described using an old fashioned CV, but there are lots of other traits that recruiters might be interested in that are very hard to capture on paper, like soft skills, temperament, and relative work performance when compared to an average applicant.

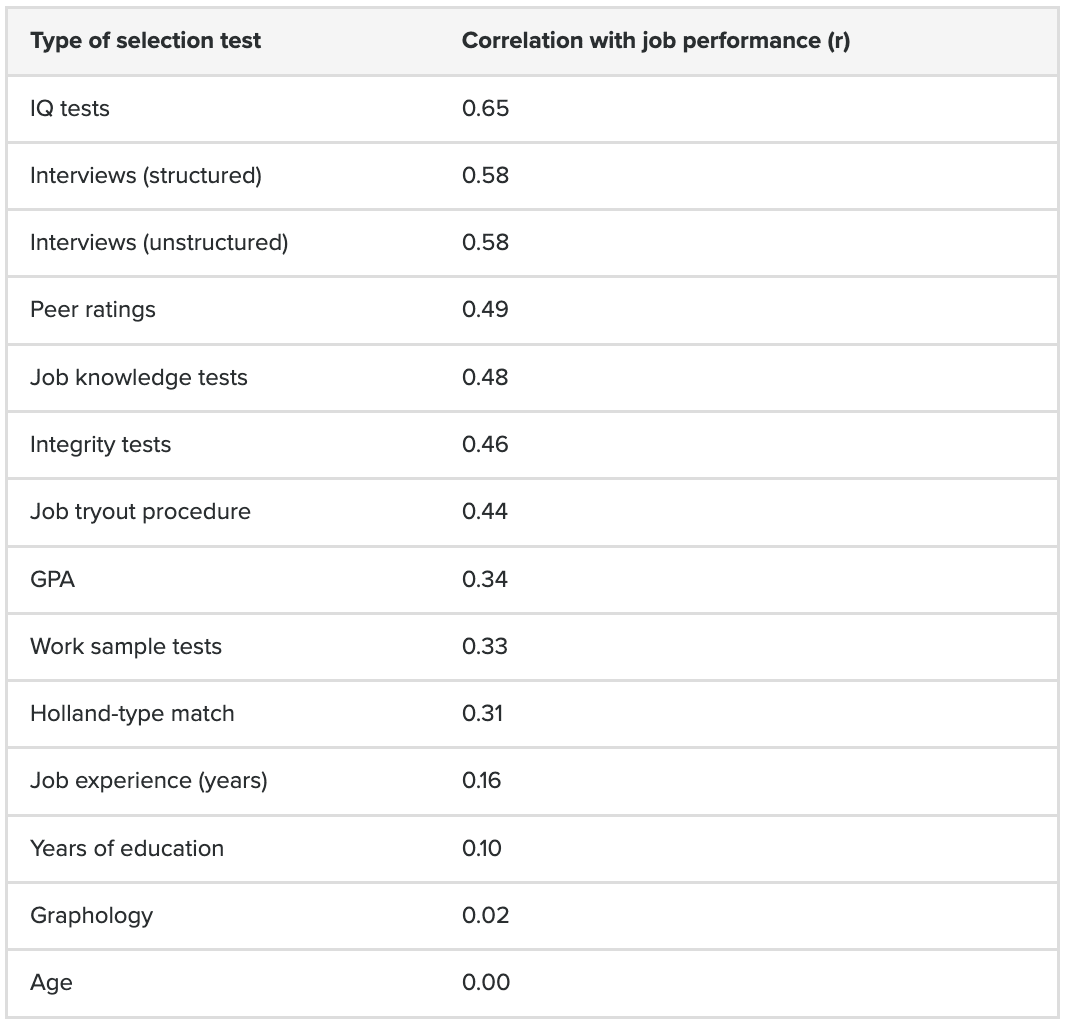

On personality tests: A 2016 research paper, quoted by 80,000 Hours in their careers advice pages, suggested that Holland-type match (a sort of test where they try to see whether your general ‘personality type’ would be fulfilled doing a specific job) is quite bad at predicting job performance.[7] But since publishing this article, based on new evidence (which they're not quoting for some reason?), 80,000 Hours have updated their views on how important they think the general idea of interest-matching is when trying to find the best job for you.

Even though it seems we are lacking a clear verdict from 80,000 Hours on whether or not personality tests are good predictors of job performance, I would surmise that most recruiters do value having insights into the applicant’s personality, not so much because they think that’s necessarily linked to their ability to do their job properly, but because knowing about someone’s personality can help answer other important questions such as ‘will I enjoy working with this person / will this person enjoy working with me?’ or ‘what kind of things should I be doing to ensure this person is able to give their best / enjoy working / feels supported?’ etc. This may in turn heavily influence the decision to hire someone over another person.

Notice, however, that interviews scored quite highly... Another point in favour of making any feedback / interview notes from organisations available to other organisations.

On the games-based assessment used by PwC: Having done it myself, it's quite fun (unlike SO much of the job hunting process, yuck!), and could provide recruiters with potentially useful added info that is specific to that individual and can't be faked. They're assessing things that are not necessarily right or wrong, like risk appetite, grit, cooperative spirit, and even reflexes...

Proposal Two: Design Specialist Tests and Excellence Schemes for Underrepresented Segments

If we want to create a platform where there are equal opportunities to succeed, and one which is conducive to an environment where a range of skillsets and backgrounds are valued, it is important we build it in a way where no single demographic or applicant profile has significantly more opportunities to prove itself than another.

If we made such a platform tomorrow, and I went on there as an excellent applicant with a STEM background, I would have no difficulty proving my competence: I could add my team’s ranking at the latest programming hackathon, or add my Maths Olympiad results from my high-school days…

Let’s imagine now that instead I am an applicant with a humanities background. It’s suddenly a lot harder to prove excellence in my domain, not because such assessments are impossible (I have found examples of policy, comms, or systems thinking hackathons) but because there are just far fewer of them, and almost none that are native to the EA ecosystem.

This undervaluation of the humanities is largely symptomatic / representative of the world we live in today, and in my opinion we are paying the price for this neglect every day.

As Rutger Bregman explains in his book Moral Ambition, to succeed in changing the world, you need collaboration with a vast number of people with a vast number of skillsets and who can contribute a range of perspectives.

It’s not just the Humanities though. Here are just some examples of demographics that I think are underutilised by EA:

- Global Majority people

- Mid-career professionals

- Retired people

- Differently-abled people

- Famous and moderately-famous people in the Arts, Entertainment and Sports sectors

Proposal Three: A Shared Recruitment Process

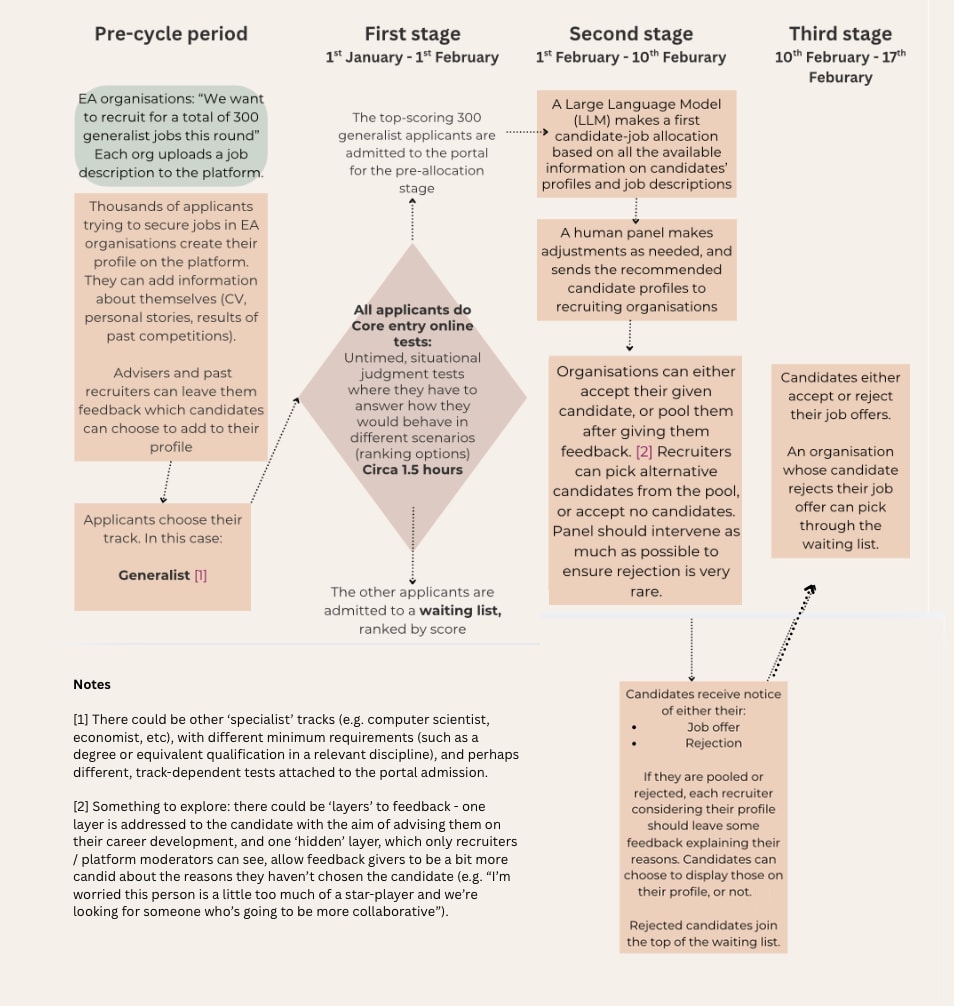

The biggest change I am proposing is the shared recruitment process across several participating organisations.

In this scenario, an LLM, moderated by a human panel, would be matching a finite number of competitively selected candidates to the same number of total available jobs.

This would be as opposed to organisations each recruiting for their own jobs from a theoretically infinite pool of candidates. This is obviously quite controversial, as it requires participating organisations to 'play the game' — i.e. they should limit their recruitment to this process only (basically not change their mind mid-process), which theoretically reduces their ability to really get the best candidates if they were to cast their net wider. They could also miss out on the best candidates in that round because the moderating panel collectively thinks the candidate would be able to make a better impact at a different organisation. Still, at the moment I think this method is superior to the status quo, as it would greatly improve efficiency and diminish friction for everyone involved.
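The allocation step described above is, at its core, an assignment problem: N admitted candidates, N jobs, and a judgement of fit for each pairing. Below is a minimal sketch under loud assumptions: the candidate names, role names, and fit scores are all made up, and a brute-force search over every possible allocation stands in for the LLM-plus-human-panel judgement the proposal actually relies on.

```python
from itertools import permutations

# Hypothetical fit scores (0-10) for each candidate-job pair. In the proposal,
# these judgements would come from the LLM and the moderating human panel.
fit = {
    "Candidate A": {"Ops role": 9, "Comms role": 4, "Research role": 6},
    "Candidate B": {"Ops role": 8, "Comms role": 7, "Research role": 5},
    "Candidate C": {"Ops role": 3, "Comms role": 6, "Research role": 8},
}


def best_allocation(fit):
    """Try every one-to-one allocation and keep the one with the highest total fit."""
    candidates = list(fit)
    jobs = list(next(iter(fit.values())))
    best, best_total = None, -1
    for ordering in permutations(jobs):
        total = sum(fit[c][j] for c, j in zip(candidates, ordering))
        if total > best_total:
            best, best_total = dict(zip(candidates, ordering)), total
    return best, best_total


allocation, total = best_allocation(fit)
print(allocation)
# {'Candidate A': 'Ops role', 'Candidate B': 'Comms role', 'Candidate C': 'Research role'}
print(total)  # 24
```

Note that Candidate B scores highest on the Ops role but is allocated the Comms role, because that gives the best result for the round as a whole. This is exactly the trade-off participating organisations would be signing up to. Brute force is only practical for very small rounds; a larger round would need a proper assignment algorithm (for example the Hungarian method).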

An early, small-scale 'trial' of this process could simply look like one organisation saying to another: 'Hey, we're a group of 3 animal advocacy non-profits doing pooled recruitment next month for generalist roles. Wanna join?'

When the process has gained enough legitimacy and popularity to the point where it reliably gets used for EA organisations' recruitment, instead of the recruitment rounds being 'ad hoc', there could be regular recruitment rounds throughout the year.

Some considerations of what this pooled recruitment process would need to work:

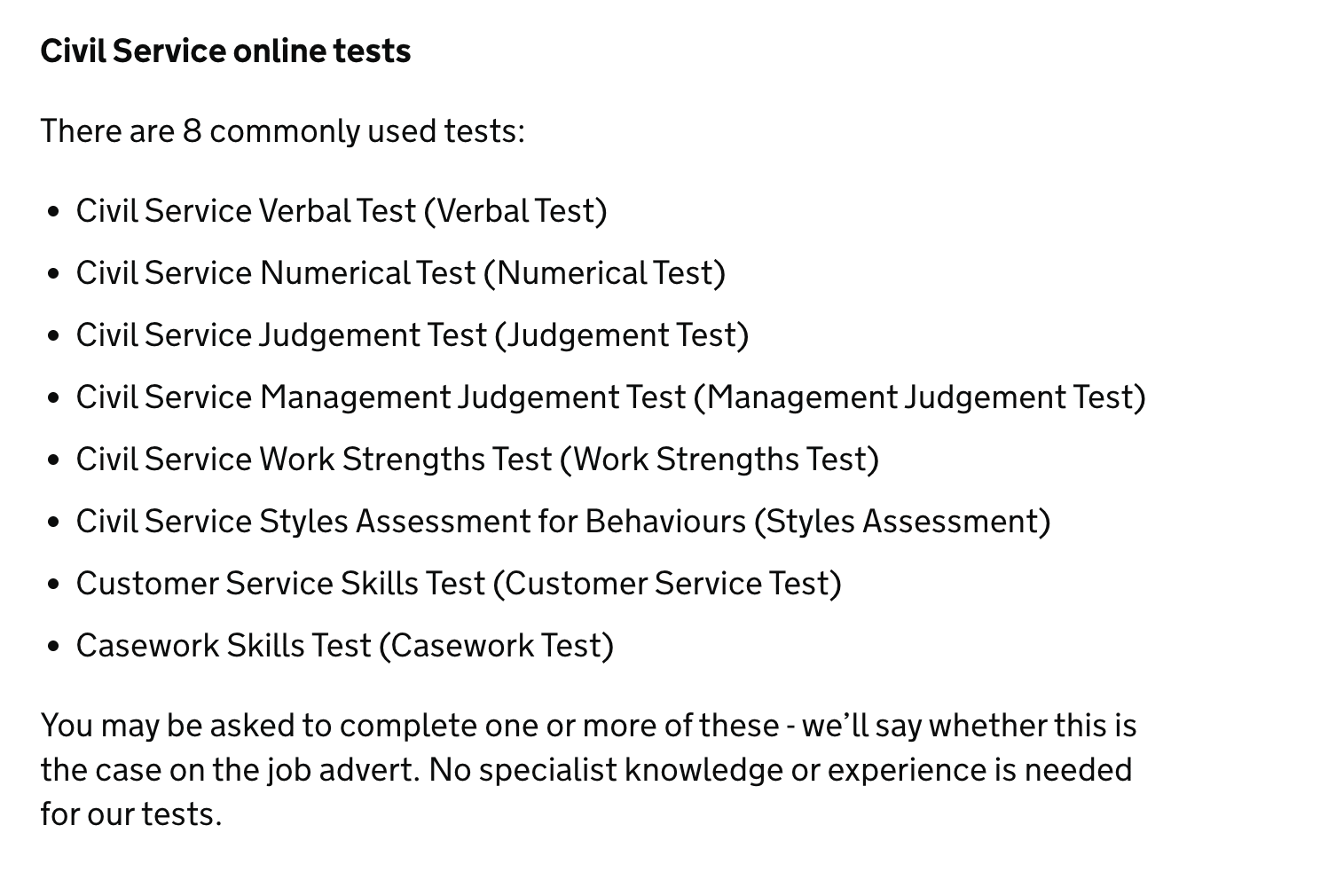

- The admissions test: It should be a test that allows for candidates to get a scored ranking, but for which the ‘right’ answer can’t be figured out using AI. It’s challenging to generate such questions, but for inspiration on the style of questions able to generate such a ranking, look at the UK civil service admissions tests or the Ambitious Impact Charity Entrepreneurship Incubator admissions tests.

- Work permissions: In order to make sure that all selected applicants are given jobs at the end of the process, one of the eligibility criteria for taking the test should be to have work permissions that are compatible with what organisations are expecting to have for their jobs. For this reason, it might be easier to carry out recruitment rounds by country working rights to ensure that selected candidates can do the job! Candidates allowed to work in several countries could apply to separate country rounds.

- A flexibility mindset from candidates, that makes them value the idea of getting a job offer more than whether this role is the absolute job of their dreams. My personal experience talking to people makes me think this is already the case for most people, but just in case, we could screen for such a quality using the admissions test, to avoid getting ‘perfect fit divas’.

Why I think a common recruitment process would be better aligned to EA values than the current situation

- EA organisations, on paper, are supposed to think about cause areas other than their own when deciding how best to allocate resources (including talent), and supposedly should care about collective benefit more than the success of their own organisation. Therefore, on paper, they should be ok with a process that cooperates with other EA orgs rather than competes with them when it comes to talent acquisition, given that they will all reliably be getting incredibly skilled talent anyway.

- A common recruitment process would make EA values more consistent across the board, which could help combat unconscious value-drift / narrow-mindedness from certain organisations.

- The huge waste of time and resources in the current recruitment processes is, to me, an example of maximisation gone wrong. We could probably still recruit excellent people using the proposed system, with 100x less time and energy wasted (100x is a figure of speech here: I didn’t actually calculate this value, and if I had, it’d probably be higher).

- An independent, centralised entity charged with recruitment on behalf of many different organisations has other benefits: you can collect more data about the staffing in the movement as a whole, and observe if any patterns emerge that we don’t want (such as inadequate diversity for example), and do something about it.

- The entity charged with the running of this platform would develop unique expertise since it would be fully dedicated to this mission. It would be far more effective than if each organisation tried to improve its own procedures independently, as a side-quest to its main mission as an organisation.

It is worth noting that programmes that do operate like this, i.e. with an extremely selective pre-recruitment process but a guaranteed position at the end, are extremely popular and tend to attract great talent, probably for the reasons above. Examples include Ambitious Impact's Charity Entrepreneurship programme (once you're in, you know you'll end up co-founding a highly-effective charity), the admissions process of collegiate universities like Cambridge or Oxford, and the UK civil service selection process. Although applicants recognise they might not get their top option, they are willing to go through a very exacting selection process once because if they get in they are guaranteed an amazing time, with learning opportunities and career progression possibilities, even if they get the 'worst' option. I would assume that employers also find it useful as they reliably get an extremely qualified pool of people to choose from, and what they lose in role-matching optimisation they gain in simplicity of process and time saved.

Possible counterarguments against the proposals

Against Proposal One: A Centralised Recruitment Platform

- You are proposing to build a platform with lots of data points about candidates. Could we not just build a super talent directory instead, just taking something like the HIP talent database and adding more data points?

Essentially yes, what I am proposing is very similar to a collaborative spreadsheet on steroids (such as the HIP talent directory), but with a nicer interface (it would look like a website rather than a spreadsheet), an integration with an LLM that would make searching for candidates using various filters and complex combinations of filters much easier, and, finally, room for an unlimited number of datapoints (whatever the candidate feels is relevant to mention, as opposed to whatever fields the spreadsheet ‘allows for’).

It would also be dynamic and allow candidates to regularly change the data that they’ve put in, which is currently possible in some databases (HIP) but not all (e.g. Expressions of Interest at most organisations).

Basically, I’m proposing building a better product for the same function.

- Building such a platform might be very expensive (or would it?)

I don’t have the necessary knowledge to estimate how much such a website would cost to make. It would need to include applicants’ profiles and integrate them all with an LLM able to carry out advanced searches using personalised filters (e.g. ‘show me candidates with the right to work in the UK whose profile has been vetted by an animal advocacy professional’). Any insights on this would be much appreciated.

There are also connected questions: What would be the business model for this platform? Would this platform be built for profit, or financed by grants? etc.

Against Proposal Two: Specialised Tests for Underrepresented Segments

- You are proposing things like hackathons for humanities students / previously neglected demographics. Is this good value for money?

This is a question I’m exploring right now. In the very near future I will make an attempt at putting some numbers on things (please feel free to contact me if you’d like to help doing this!), but right now, just based on my instinct, I am estimating that the highly counterfactual nature of improving the EA learning/training/experience-building for previously neglected segments would justify some investment in it (the question then becomes, how much?).

Some ideas of what this offer could look like, for example if it was aimed at people with humanities expertise:

Content Creation Hackathon: Teams have 48 hours to create social media content aiming to communicate important academic ideas to a large audience. Teams are graded on content quality, strategy, reach, and engagement.

Fundraising hackathon: Teams have 48 hours to carry out and present a fundraising audit for participating non-profits to a panel. Most valuable audit wins.

Beyond competitions, we could also do excellence schemes or pipelines to recruitment (selective entry courses or programmes with career opportunities at the end).

These initiatives would enable more diversity within the movement, which would make it more qualified, more resilient, and less insular.

Against Proposal Three: A Shared Recruitment Process

- Take-up from recruiting organisations might be limited as they would rather recruit their own people using their own processes to maximise fit (specific skills needed, ‘org culture fit’, etc)

According to research by Abraham Rowe (@abrahamrowe), a non-trivial number of organisations (about 20) would be willing to recruit using a pooled system for at least some of their roles. He also calculated that a pooled recruitment process could save those organisations a combined 500-1350 work hours per year. It would take a lot less time in my opinion to devise a pooled recruitment process.

However, Nina Friedrich from High Impact Professionals told me that Coefficient Giving, Good Structures, and HIP had discussed a common application process for their recruitment and ‘the consensus was that even for roles that exist in almost all orgs (e.g. Ops), what the orgs look for specifically is so broad, that doing this well would be extremely hard’. I would disagree with this assessment on the basis of having seen it work very well in other settings, and not thinking this would be very hard to replicate.

Either way, the extent of the evidence we have on this question seems quite scant and anecdotal, and I’m not even sure everyone involved in the discussion was clear on exactly what they were discussing. It’s difficult to make a decision on something that’s super ill-defined, and with this blog post I tried to define what this process could look like in quite some detail to facilitate a future, serious discussion on this which I think is definitely needed.

From what I see, recruiting orgs are experiencing frustrations when it comes to recruitment (despite being definitely in a stronger position compared to applicants), and complain about finding it very hard to recruit for talent (see here for example).

From what I can see on my LinkedIn, many organisations also sympathise with the fact the current processes are wasting so much time on the applicants' side, and there is at least some interest in making the process less painful for them. I certainly feel that way myself as a recruiter.

As per the argument that EA organisations have VASTLY different work cultures which makes it IMPOSSIBLE to recruit using the same processes… I think that’s probably not true: of course there are cultural differences from one EA org to the next, but I think they’re generally trivial enough that they don’t justify having separate recruitment processes. Yes, admittedly, by having separate processes, we might increase candidate fit to an organisation from like, 90% to 95%, but that trivial 5% extra fit factor to me doesn’t justify the astronomical loss of time that siloed recruitment processes inevitably cause.

- Take-up from recruiting organisations might be limited, not because they are very attached to doing their own recruitment, but because of other reasons (lack of visibility on the quality of recruitment coming out of this new process, mistrust of the organisation managing and operating the platform, etc.)

That’s correct, and any wannabe platform would have to think seriously about overcoming these issues, as @abrahamrowe helpfully points out.

- With such a system, you’re not allowing more people to get EA jobs, you’re just moving the selection point earlier. Just like other pooled application systems (e.g. Oxbridge, UK Civil Service, CE Incubator Programme etc), this system still rejects the majority of applicants.

This shared recruitment method isn’t about absorbing more people into EA jobs, but about increasing the efficiency of talent-to-job matching with the current number of jobs. The number of people who would be recruited with this system would always be ‘upper-bounded’ by the number of available jobs. The fact that there is a huge gap between the number of available EA jobs and the number of applicants is a separate issue, and one that in my opinion would also be worth thinking about at some point. But let’s stay focussed on our issue (system efficiency) for now.

The aim of the proposal here is simply to make the process of applying to similar EA jobs much more efficient. Let’s imagine there are 300 relatively similar job openings in the shared recruitment round. As an applicant, I can do one application, and all I need to do is to perform highly enough to be within the top 300 applicants to be guaranteed a job. This is a MASSIVE saving of time. And even if I’m not selected, because it would be done through the platform where I have a profile on which I can add data, it’s still in my interest to give it a go, as my results will be kept in the system and I might be ‘picked up’ by another organisation, like one that’s recruiting out of cycle for example.
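The admission rule in this example (rank everyone on one test, admit exactly as many people as there are openings, keep everyone's result on file) can be sketched in a few lines. The names, scores, and the `run_admission_round` helper below are hypothetical, and the tie-break by name is just a placeholder to keep the output deterministic; a real round would need a considered tie-break rule.

```python
def run_admission_round(scores, openings):
    """Split applicants into admitted (top `openings` by score) and not admitted.

    `scores` maps applicant name -> test score. Ties are broken alphabetically
    by name purely so the result is deterministic (a placeholder rule).
    """
    ranked = sorted(scores, key=lambda name: (-scores[name], name))
    return ranked[:openings], ranked[openings:]


# Made-up scores for a tiny round with 3 openings.
scores = {"Ana": 82, "Ben": 91, "Chloe": 77, "Dev": 88, "Eli": 64}
admitted, not_admitted = run_admission_round(scores, openings=3)

print(admitted)      # ['Ben', 'Dev', 'Ana']
print(not_admitted)  # ['Chloe', 'Eli']

# Results of applicants who were not admitted would stay on their platform
# profile, where an organisation recruiting out of cycle could still find them.
kept_on_profile = {name: scores[name] for name in not_admitted}
print(kept_on_profile)  # {'Chloe': 77, 'Eli': 64}
```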

- Recruitment processes guaranteeing cool outcomes to a given number of pre-selected candidates with similar profiles already exist in EA (for example Ambitious Impact Incubator programme, Kickstarting for good)

Yep, and I’d say they’re doing pretty well in terms of recruitment outcomes. Of course people still complain because they’re extremely selective, but all candidates are very appreciative of the fact that every effort is made to not waste their time unnecessarily.

- What if an organisation doesn’t like the candidate that the process matches to their job listing? What happens if an applicant doesn’t like the job offered to them and has to decline it?

See flowchart above for a visualisation of the different options at each stage that covers the questions above and more.

- How can you even design online, unsupervised tests that fairly select candidates?

Designing an unsupervised testing system that is AI-cheat-proof is certainly a challenge. Like any test, it will realistically be imperfect and accidentally screen out people who should have been accepted, and vice versa. However, I’d argue that although imperfect, such a selection process can still deliver meaningful enough results. On the point of unfairness, I would also say that part of the skill in any selection process is to accept the ‘game’, and try one’s best to identify and teach oneself the ways to succeed at the very specific task of ‘passing the test’.

Examples of Civil Service Fast Stream selection tests. You can have a go at some published practice tests online.

- Building the selection tools (tests in Stage 1) and the LLM integration matching successful applicants’ profiles with job openings according to best fit (Stage 2) would be expensive

Again, I don’t have enough expertise on this topic to make a useful guess as to how much that would cost.

Conclusion

When viewed through the filter of core EA values (effective resource allocation; caution against ineffective maximisation; valuing equal opportunities and diversity of perspectives; valuing good mental health etc.) there are strong reasons to urgently seek to reform the talent acquisition processes within EA. The good news is that there are promising solutions to do so. Given the potential gains and savings these would enable, these proposals could form a promising basis for a business or a mostly grant-funded non-profit. Interested in helping to make any of these proposals happen? Let’s talk! :)

- ^

See Thank You For Your Time: Understanding the Experiences of Job Seekers in Effective Altruism for a devastating picture.

- ^

On maximisation, see:

EA is about maximization, and maximization is perilous and Reflecting on the Last Year — Lessons for EA (opening keynote at EAG)

On the necessity to use resources wisely, see:

Four Ideas You Already Agree With

On the value of cooperating rather than competing, applied to a talent-acquisition context, see:

Comparative advantage in the talent market. Relatedly, on the best use of resources in an environment where you can share information, see Shapley values: Better than counterfactuals.

- ^

In talent acquisition lingo this is sometimes referred to as an ATS (Applicant Tracking System).

- ^

If candidates were worried about the amount of information that would be available about them online, they could easily anonymise their profile by not displaying their full name, or using a pseudonym. They could also choose to only share their data with a custom group of organisations, or not show it at all and just build a ‘private’ profile, which only the LLM can see and would only be ‘read’ in the event of them being successful in a recruitment round.

N.B.: This is only a problem if the database is made public by design, as opposed to being a closed system with only a few select organisations being allowed to view profiles. Both versions of such ATS systems exist.

- ^

I can think of a number of mechanisms to incentivise that, such as rewarding, in the search functions, profiles that have been updated more recently.