This post seeks to further encourage the development of an EA Common App. In the first part, I compare a simplified version of the current EA applications landscape to a process that is streamlined and collaborative. Then, I discuss steps to develop an MVP. Last, I ask and offer a perspective on key questions about an EA Common App. I argue that a Common App increases EA hiring, cooperation, and learning efficiencies and presents no risks that cannot be mitigated.

Current EA applications landscape

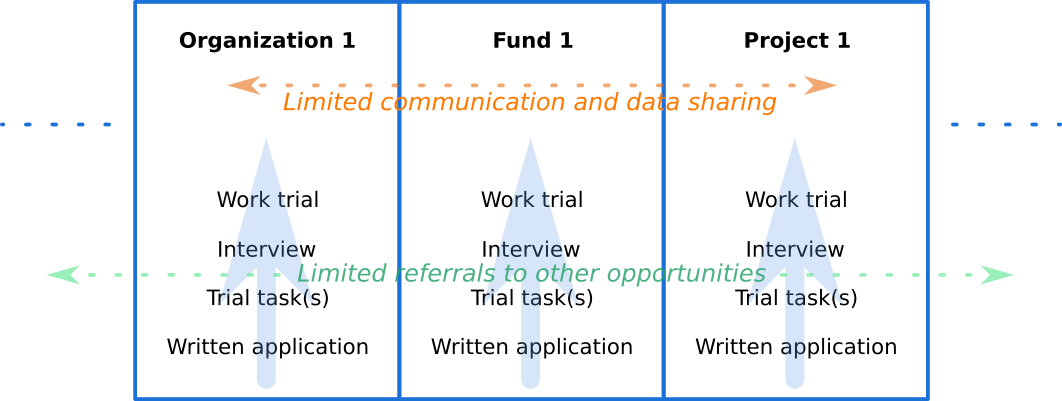

Currently, the EA hiring process looks something like this:

EA-related organizations, funds, and projects have their own separate application processes, communicate about hiring in a limited way, and share limited data about applicants’ skills. Recruiters rarely refer candidates whom they end up not hiring to suitable opportunities. Furthermore, applicants are imperfectly informed about available openings and in-demand skills. Only a few people estimate the future needs of EA-related opportunities, and they do so without coordination.

This causes inefficiencies in job search and skills development.

Streamlined Common App

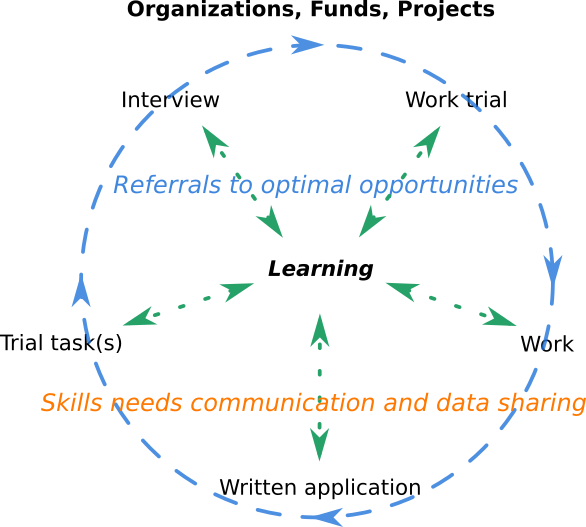

A Common App can reduce job search and hiring frictions and enable EA-related organizations to cooperate on talent development. It can look something like this:

Common App candidates apply once (or once in a while) and are referred to the steps that suit them best at every stage of the hiring process. The steps include learning, which candidates can enter from, and leave for, any stage of the application-work cycle. EA-related opportunities communicate their expected skills needs and their capacity to develop in-demand skills to their own benefit. Non-EA learning opportunities and EA-related learning materials are suggested when this is more efficient, conditional on the candidate’s enjoyment.

EA-related organization recruiters, fund managers, and project leads cooperate on referring candidates to suitable opportunities. These specialists keep informed about the changing skills and learning opportunities that are available and in demand. They always keep the applicant's preferences in mind, offering, from the available opportunities, what the applicant would enjoy most. Candidates can always choose that or something else. Feedback is gathered to optimize for an individual's enjoyment of the referrals (given the existing opportunities based on needs).

Common App development steps

For a Common App to bring high value, the following should take place:

- EA-related ventures should agree on application questions, trial task(s), interview questions and procedures, and work trial content and assessment, all of which can have different options (e.g. different trial tasks). A candidate should be able to choose which option to use. This selection can suggest an applicant's interests and skills. Skills development preferences should be explicitly asked for.

- Referrers should be selected by a transparent process. These people should be impartial, able to learn quickly about the true needs of EA-related opportunities and about candidates’ skills and preferences, keep up with non-EA skills development opportunities, and be able to refer to large amounts of data.

- Applicant feedback forms should be developed and implemented. These should optimize for the sincerity of feedback and its applicability to improving the recommendation process.

- Communication among EA opportunities and referrers should be formalized and optimized for minimizing non-recruiters’ time demands while maintaining recruiters’ informedness about the opportunities’ changing needs and openings. For example, recruiters can be automatically notified about new postings or expected needs updates.

- A trial should take place. I describe what this trial can look like in the following section.

MVP aspects overview

A minimum viable product can look like a combination of the following (all including broad referral steps based on the applicant’s responses):

- An online form with compulsory and self-selected questions

- Trial task database on an appropriate platform

- Sets of interview questions and structures (some of which can be pre-recorded)

- Set of work trials available to candidates

- EA-related learning opportunities database

- EA-unlabeled vetted learning opportunities database

Questions

I am including my perspective. Further discussion is welcome.

Should an EA Common App be developed at some point?

Yes, because as the community is growing and becoming more complex, there is an increasing benefit of reducing information frictions and streamlining skills development. This can increase the efficiency and quality of applications and hiring processes.

When should the Common App be developed?

Whenever there is sufficient interest from recruiters and job seekers. This should prevent inefficient resource spending.

Should data be stored?

Yes, data should be stored, because this is more efficient than relying on memory. Data sharing consent should always be required and GDPR rules followed.

Should data be overwritten or accumulated, by default?

Data should be overwritten, because referrals recommend next steps from wherever the candidate is at that point in time. Accumulating data can bias referrers. Alternatively, a question on the candidate's career path can be included.

Who should have access to candidates’ responses?

Applicants should always select who sees their responses. The application should not ask for any sensitive information and candidates should be cognizant of their audience.

Should the process be crowdsourced or employ specialized referrers?

Referrers should be responsible for recommending suitable opportunities but anyone can suggest what the applicant may particularly enjoy. Feedback should be gathered to optimize for the candidate’s experience and to improve the calibration of those who suggest opportunities.

When do opportunities get involved?

While opportunities may choose to wait until a candidate completes a trial task or (a pre-recorded) interview, they can suggest next steps to a candidate at any stage. These suggestions can enable referrers to better understand the opportunity’s hiring needs.

What are the risks associated with an EA-related Common App and how can they be mitigated?

1. Surveillance perception

Candidates’ perception of surveillance should be mitigated by transparency of the entire process, overwriting responses, following GDPR, inclusive (as possible) access, not asking for sensitive information, the universal norm of optimizing for the candidate’s interests, and the ability of candidates to freely reject any suggestions.

2. Niche audiences’ loss

Niche audiences' loss should be prevented by a holistic and personalized approach to referrals. Then, niche audiences can feel supported in their areas of interest.

3. Infamy from ‘using’ non-EA opportunities for skills development

Non-EA decisionmakers should benefit from candidates’ work and possibly also from the connection after they leave. Candidates should not plan to quit early; for example, they can take time-limited contracts suited to professionals interested in skills development.

4. Job loss

If the Common App is successful, some career advisers and community organizers will be freed to do other, more interesting work. Due to an efficiency increase, better jobs should be available after adjustment.

5. Failure and delay in developing a beneficial Common App later

A failure can be prevented by a cost-effectiveness analysis and resources assessment before any progress is made.

Conclusion

An EA Common App can increase hiring, collaboration, and skills development efficiency and quality. EA-related opportunities can cooperate on talent development. Risks can be minimized by centralizing a candidate's actual interests. Further analysis of the cost-effectiveness of a streamlined EA application process can inform any next steps.

As of 2022-06-04, the certificate of this article is owned by brb243 (100%).

Hey!

TL;DR: I'd split this up into:

(1) applying to many EA orgs at once, and

(2) having common interviews

Applying to many EA orgs at once

I happen to know that many EAs are stuck at the "apply" stage and I think that solving just this would be useful.

Also, it could be really easy!

Some MVP ideas:

Sharing the interview process

I'm basically against this.

The "knock down argument" for me is that this is hard, orgs won't agree on it easily, and we have such an easy option above ("Applying to many EA orgs at once").

I also think it has downsides, like "if I failed the interview for one EA org, is it a good thing if I automatically fail the interview to all of them?" - this is unclear to me

Thank you very much.

I should keep 1. in mind when communicating with EA org representatives. This can be relatively easy to implement, for example with a Sheets formula that displays all responses where the sharing checkbox is TRUE and leaves rows where it is FALSE blank. All non-blank rows can then be copied to a spreadsheet that combines data from different applications. Applicants could even suggest edits (e.g. delete or modify their responses) using the Gmail account associated with their application.

2. OK, that is a great idea. Writing on the Forum can give a very detailed picture about the person's actual interests. I suggested it via the EA Forum feature suggestion thread.

3. OK, let me take a bit of time to develop this. This can be added to the responses from the other applications. I argue for adding these in one row, maybe index matching by e-mail or other unique identifier. Rewriting responses that are edited can be taken care of by having the same fields from the original form always linked.
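A minimal sketch of the two steps above, written in Python rather than as a Sheets formula. The field names `share` and `email` and the sample rows are made up for illustration; a real form would have its own columns. It keeps only rows with the sharing checkbox ticked, then merges rows that share an e-mail address so that an edited answer overwrites the earlier one.

```python
from typing import Dict, Iterable, List

Row = Dict[str, object]


def shareable(rows: Iterable[Row], consent_field: str = "share") -> List[Row]:
    """Keep only rows whose sharing checkbox is ticked."""
    return [row for row in rows if row.get(consent_field) is True]


def merge_by_email(rows: Iterable[Row]) -> Dict[str, Row]:
    """Combine rows into one row per e-mail; later answers overwrite earlier ones."""
    merged: Dict[str, Row] = {}
    for row in rows:
        # Normalise the e-mail so "Ana@..." and "ana@..." match the same person.
        key = str(row["email"]).strip().lower()
        merged.setdefault(key, {}).update(row)
        merged[key]["email"] = key
    return merged


# Hypothetical responses from two separate application forms.
form_a = [
    {"email": "Ana@example.org", "share": True, "interest": "operations"},
    {"email": "ben@example.org", "share": False, "interest": "research"},
]
form_b = [
    {"email": "ana@example.org", "share": True, "interest": "research management"},
]

combined = merge_by_email(shareable(form_a) + shareable(form_b))
```

Here the unshared row never reaches the combined sheet, and the shared applicant ends up with a single row holding their latest answer. Matching on e-mail is only one possible identifier.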

That makes sense: orgs should be able to add or modify questions, if anyone is bought in. The main bottleneck, in my view, is that there are not enough jobs that are interesting enough for candidates who do not have their own projects in mind (for example, being a researcher's assistant may not be so appealing to someone who wants to advance megaprojects). This can be resolved by supporting independent projects that also develop expertise (maybe an ideal-project question can be added) and by more employee shadowing opportunities (cognizant of info hazards), including unpaid ones or ones with a small stipend. I could suggest this consideration when communicating with EA org employees.

I agree with changing the proposal from agreeing on application steps to applying to many orgs at once and simply copying the responses. It is easier for everyone.

Yes, maybe the question can be whether interview responses should be included and, if so, in what form. I think that full interview recordings can be biasing, since the responses are tailored or pertinent to a specific job. Full interview notes can also be biasing, since they can pinpoint reasons for rejection for a particular role rather than describe a general skillset. One way to 'protect' candidates while providing value can be adding a skillset description and recommendations regarding applying to similar or different roles. So, for example, if someone fails a specific PA interview but has a PA skillset, then that is specified, and maybe a recommendation is added to 'demonstrate ability to professionally answer many emails' or 'apply for a PA of a farm animal welfare researcher.'

Thank you for the useful tip on importrange.

Yes, I mean to use maybe a Google Form. Ah hah, it makes sense that all can be optional (name, sure) but even no way of contacting the candidate can be possible (maybe just writing in the form - hm here is where digital people enter haha).

Ok, what about some interview-like questions, such as

Or, questions relevant to the specific candidate's preferences

Or, something that shows the applicants' interests more broadly, such as

Axiological, moral value, and risk attitude questions can add information on the candidate's fit, such as

10. orgs multiselect: for non-EA orgs (recommended by 80k), it can be interesting to just copy general interest app fields and then (if it would not constitute a reputational loss risk for the applicant) paste the responses and see what happens. Founders Pledge orgs make sense - have not thought of these.

Maybe I can go through some applications of EA-related orgs, Funds, 80k orgs, Founders Pledge ventures, opportunities relevant to Probably Good profiles, etc to synthesize questions.

A few maybe-blind-spots:

Also, "eyes on the ball":

The top-priority bottleneck that I am trying to solve is "letting EA orgs contact candidates".

All the other questions more or less help filter down candidates.

If you help EA orgs filter candidates at the cost of "scaring away" some candidates because of a too long form - then I think you are probably making a mistake, or at least I would think very hard before doing it.

Note that I haven't heard from EA orgs that they get too many candidates and need help filtering them down. So this falls into "solving a problem that may not exist".

I do think/hope that asking what general profession the person wants to work in would not deter too many people (especially since I offer checkboxes). I do admit, though, that I am not confident that even asking for a CV/linkedin is a good idea since candidates are often nervous about it (but I decided yes to include it by default)

eyes on the ball: after speaking with people, they have to fill out the form?

There should be the option to just link a CV. After, people could answer more questions or schedule a call.

Ok, so getting people to upload a CV may be key.

Oh, well, they have to upload something. They can always update or delete it and will not be penalized for any earlier uploads as these are overwritten. Maybe asking about priorities that they think progress should be made in can provide similar information to what they want to make progress in but make people less nervous.

I talk to many EAs (especially devs) who are considering applying to orgs and I can tell you for sure that there are all sorts of other problems (including ones not in the link maybe) that could be solved by making it easier and less scary to apply.

I actually think I never talked to someone who didn't have at least one project in mind that they'd be excited to join (but perhaps I'm forgetting something. Anyway it's at least rare)

Edit: Something that does happen often is that a person thinks that no exciting EA project exists, but then I tell them about such a project that they haven't yet considered

Yes, there should be enough actually interesting opportunities (for developers) ranging from AI safety research and increasing NGO, impact sector, and public infrastructure efficiencies to developing products that apply safety principles, communicating with hardware manufacturers, informing AI strategy and policy, or upskilling in an area that they have not explored and pivoting. It should not be scary to apply; management by fear reduces thriving.

From the link/your writing, feedback of a candidate who rejected an offer can be also valuable. General support with CV writing can be valuable, as long as it highlights candidates' unique background and identities rather than standardizes the documents.

What is the percentage of people interested in something who applied for funding and who tried to find someone interested in a similar project, as an estimate?

What if this recommendation was not done as part of a discussion but written, would people who you spoke with still be enthusiastic about the recommendations?

1.

Sorry, I didn't understand the context, do you mean you'd want to offer these things too? (At the MVP?)

2.

Sorry, I didn't understand this either, could you ask in different words? (or explain why you're asking, maybe that would help me?)

3.

I publish articles after I user test them. I do have one draft article about a similar topic that has good early results but I am not yet confident in it. Here's the link to the draft, if you'd like to look/comment (though I wouldn't count it as a user test unless you're somewhat looking for a job yourself, and if you'd, before reading, tell me your default plans for the next few weeks/months (so we can see if the article changed anything)).

1. I think that feedback regarding rejected offers can be valuable and low marginal effort (e. g. adding a column). Some CV writing support could be taken care of by Career Centers (that are sometimes available also to alumni). EA community members could further assist with CV specifics if they are familiar with what different (competitive) positions are looking for that the candidate can highlight. As an MVP, comments on linked docs can be used.

2. I mean, of the people whom you spoke with and who had an idea for a personal project:

a) How many applied for EA-related funding to work on this project and how many did not?

b) What percentage tried to find someone with a similar idea in mind to work with them on the project?

I am asking to assess to what extent people with personal project ideas could be constrained by encouragement to apply for funding and by being connected with someone else. If they applied and were rejected then integrating funds can be less of a value. If they looked for collaborators but could not find any, then increasing the number of skilled people should be prioritized over recommending connections.

3. Tested. Realizing that writing can motivate engagement/action.

I'm pretty sure this is wrong. If it was so easy, orgs would already give feedback today by email or something, the problem is not the missing column.

This doesn't sound like what I'd call an MVP (unless this was the entire project), but I will stop trying to convince you about this by default

Eh, I don't have a good "feel" for this. I encourage people to apply to funding when it seems relevant. Applying for funding does seem at least somewhat scary, I know this myself too.

It is very common for people to look for a cofounder (which does not mean "someone with a similar idea")

3. Thank you!

1. Ok, maybe actually getting sincere feedback on rejected offers seems like an additional project.

2. Ok.

Hey Yonatan!

As another MVP idea, what would you think about monthly "who's hiring" and "who wants to be hired" forum threads, like they do on Hacker News?

I've heard mixed feelings about the HN version, and am curious to hear your perspective on copying it on this forum.

I think the MVP-MVP would be doing this once (before we try to do it monthly)

wdyt?

Sent a message to Lizka, let's see!

Hmm .. who is hiring is dynamically updated via 80k (opportunities for a specific audience), Impact CoLabs, the Job listing (open) tag, Fellowships and internships tag, EA Internships board, Animal Advocacy Careers, EA Work club, some posts that look for collaborators or contract work (maybe Requests (open) and Bounty (open) tags). Additional lists of organizations (that have openings on their websites) are on AI Safety Support, the EA-related organizations list, and probably elsewhere. Sometimes, people post projects that they would like to see (Take action tag?). Some EA Funding opportunities are interested in specific ventures. It could be worth aggregating the opportunities. One way is copying and pasting, or writing a bot to copy-paste, and then editing. Categorization could also be useful for filtering. (The filters can also be collated: for example, a deadline is listed on AAC but not on 80k or the Internships board. I wonder which filters would be even more informative.) This could be available in real time but also sent periodically.
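A rough sketch of what collating filters could mean in practice, assuming each board's listings have already been copied into simple records. The source names `board_a` and `board_b`, the field names, and the sample listings are placeholders, not real data from any of the boards above. Every listing gets the union of all fields, with a blank where its source does not provide one.

```python
from typing import Dict, List, Optional

Listing = Dict[str, Optional[str]]


def collate(listings: List[Listing]) -> List[Listing]:
    """Give every listing the union of all fields, with None where a source has no value."""
    fields = sorted({field for listing in listings for field in listing})
    return [{field: listing.get(field) for field in fields} for listing in listings]


def with_known(listings: List[Listing], field: str) -> List[Listing]:
    """Listings for which a given filter field is actually filled in."""
    return [listing for listing in listings if listing.get(field) is not None]


# Placeholder listings; one source has a deadline, the other a cause area.
listings = [
    {"source": "board_a", "title": "Research intern", "deadline": "2022-07-01"},
    {"source": "board_b", "title": "Operations associate", "cause_area": "animal welfare"},
]

collated = collate(listings)
has_deadline = with_known(collated, "deadline")
```

The blanks make visible which filters a given source cannot support, which may help decide which fields are worth filling in by hand.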

Who wants to be hired can be also resolved in real time, by some type of a tag, or maybe a checkbox on the spreadsheet. I would argue for adding it where there is a lot of information about the person and many people look, so the EA Forum, maybe LessWrong, AI Alignment Forum, and possibly EA events profiles (there is less written information but the person is there). A hiring/contract timeline could also be useful (e. g. if someone is looking for an internship or has a contract that expires in 3 months and is not looking to renew it). Recruiters would probably check this when they need to hire but getting regular notifications can be valuable to people who are employed and have ideas (and ideally funding) and are looking for someone with specific skills to do something that they do not have the time for.

1. Who is hiring: 80k are already running a job board which also sends updates by email. Are you considering improving their job board - in your MVP - which is supposed to also do other things except for being a job board? (I wouldn't)

2.

This sounds to me like solving a pain point that might not exist (no?)

I mean, maybe worst case a candidate gets contacted, says they're not looking anymore, and then their checkbox is updated (?)

3.

Again, seems like the MVP is having this information available at all - before adding features (that solve a problem we are not sure exists) like notifications

no?

I could even imagine notifications having negative value for some busy people like you described

1. I think that the 80k board can be best improved by a greater variety of opportunities, not only those related to EA-labeled organizations and governance in large economies but also opportunities that develop win-win solutions useful to the decisionmakers, understand fundamentals of wellbeing, share already developed solutions with networks where top-down decisionmaking possibilities are limited, motivate positive norms within institutions that can have large positive or negative impact (such as developing nations' governments), possibly develop comparative advantage in positive-externality sectors (such as crop processing vs. industrial animal farming), increase private sector efficiencies in a way that benefits large numbers of individuals (e. g. agricultural machinery leasing to smallholders, traffic coordination in cities, medical supplies distribution that considers bottlenecks, etc), implement solutions or conduct research for local prices, introduce impactful additions to existing programs (e. g. hypothetically central micronutrient fortification of food aid), offer shorter personal projects contracts, understand intended beneficiary actual preferences, etc.

This increased variety of opportunities can be conditional on a Common App bringing value by increasing the efficiency of hiring for a specific set of opportunities. Some of these additional opportunities are on the EA Forum or in the minds of community members. Since 80k could appear informal if it included these opportunities, it may be best to list them on a spreadsheet and/or refer individuals to others with ideas, or let others find collaborators or contractors.

Integration of EA funding opportunities, including less formal more counterfactual funding (one would not donate to bednets but they would give a stipend to a fellow group member to learn over the summer and produce a practice project), can be key. Risk should be considered with this approach, for example, funding should not be given to projects that relate to info hazards or could make decisionmakers enthusiastic about risky topics. This should be specified and checked in a risk-averse way by some responsible people (who also have time), such as group organizers.

One way that existing 80k resources could be added to is using the career planning resource where people write answers and then based on these answers some career considerations are recommended. Just enabling people to (edit and) post their answers online can be valuable. The added value is that others can hire them or make recommendations based on their interests. I would still add more questions, because they can paint a more comprehensive picture of the candidate without the need to interview or interact with them or ask for a reference.

I think that getting project ideas even from a well-written post of an engaged community member onto the 80k board can be a challenge due to the scope of opportunities that are considered.

2. I mean before they start needing a job, not after they get one. For example, if someone is looking in March for a 3-month internship starting June 1, they should not be getting offers that extend before June 1 or after September 1. Of course, if someone is hired they (or anyone) should update that otherwise others will be wasting time reviewing their application.

3. Yes, maybe there should be a balance between distracting busy professionals and enabling them to save time by hiring others. Ideally the community would pre-filter the applications. Bias in this process can be limited by asking people to make recommendations in a non-preferential way and include their reasoning for recommending a particular opportunity. While there should be an option to get a list of applicants filtered by some criteria specified by the professional sent periodically, a greater value can be from reviewing others' reasoning why candidates can be a great fit for a role that one posts (and providing feedback on the reasoning).
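The internship example in point 2 can be sketched as a simple date-window check. This is only an illustration: the `Window` class, the year 2022, and the sample offers are assumptions, and a real version would need to handle open-ended availability.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class Window:
    start: date
    end: date


def fits(offer: Window, availability: Window) -> bool:
    """An offer fits only if it lies entirely inside the candidate's availability."""
    return availability.start <= offer.start and offer.end <= availability.end


# Candidate looking for an internship between June 1 and September 1 (year assumed).
availability = Window(date(2022, 6, 1), date(2022, 9, 1))

offers: List[Window] = [
    Window(date(2022, 6, 15), date(2022, 8, 15)),  # inside the window
    Window(date(2022, 5, 15), date(2022, 8, 1)),   # starts too early
    Window(date(2022, 7, 1), date(2022, 10, 1)),   # ends too late
]

suitable = [offer for offer in offers if fits(offer, availability)]
```

Only the first offer is kept, so the candidate is not sent offers that extend before June 1 or after September 1.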

Yes, in practice interview questions should vary a lot between different roles, even if on paper the roles are fairly similar, so I'm not sure they could be coordinated, beyond possibly some entry level roles.

In a situation where someone is good but doesn't quite fit in a role the referral element might be useful. Often I've interviewed someone thinking 'they're great but not as good a fit for the role' even if they match on paper, and being able to refer that person on to another organisation would be a mutual benefit.

Nice

So here's my #1 user research:

Would you like to add a checkbox for that in your own application form?

Oh yes, add a checkbox! I think the wording can be:

I want my application responses to be copied to a public online spreadsheet for the purpose of connecting me to employment, contract, or grant opportunities; potential collaborators; and/or relevant resources. I agree to be contacted via e-mail for this purpose. I will be able to modify or delete my responses by editing the spreadsheet using the e-mail address used in this application.

(default unchecked)

This should be GDPR compliant. The list should be comprehensive and the terms clear. The wording may be excessive, but the shorter phrase used by EA Events, "for the purpose of connecting me to potential collaborators and/or relevant resources", could exclude cases where someone recommends a grant opportunity or seeks to fund a personal project.

I wonder if 'for the purpose of connecting me to' implies that they agree to be contacted or if an additional 'I agree to be contacted via e-mail for this purpose' should be added. I added it just in case.

I think that some questions can be used universally across seniority levels and cause areas. For example, something like 'describe an important problem that you resolved in the past few months.' Other questions can be applicable to similar types of roles (e.g. research manager) even in different fields (maybe 'a researcher has a great idea that another one disagrees with, how do you go about making a decision'). Then, some questions can be applicable to any job within a cause area ('what draws you to hen welfare?') and some particular to a type of organization ('what interests you about research').

It could be noted which role type, cause area, and/or organization type the question is pertinent to. Then, organizations could see responses of candidates who interviewed for the role/cause/organization type. Bias could be introduced by candidates tailoring their responses to a particular position. This can be mitigated either by making questions independent of position or by recruiters looking beyond the context to the actual skills (e.g. if someone resolved a disagreement in ML research, they could also resolve a disagreement in math research).

Ok, that is great. What do you think about giving some of these pieces of feedback

This alone can direct candidates to better roles and provide feedback on presentation while adding only a few minutes per candidate and, in conjunction with other application material, can inform referrers what to recommend more accurately.

I think there will be many challenges and difficulties in creating something like this, but at its core it has value. Thus, my overall perspective is quite positive, but there are also a plethora of areas that can cause this to not function well.

The issue/problem that comes to my mind most readily is that different organizations are looking for different things, and different roles require different things. Thus, while some information about an applicant may be relevant for all job applications (name, email address, most recent few job titles, what locations the applicant is and isn't willing to live in, etc.), the majority of what an organization wants to know in order to make a hiring decision will be unique/distinct to the organization or to the role.

Some of this could probably be addressed easily by having a core part of the application which is applicable to a wide variety of roles, an org-specific part of the application which is different for each organization, and a role-specific part of the application which is specific to the role that the organization is hiring for. Thus, if John Doe is applying to be a recruiter at Open Phil, and a recruiter at The Centre for Effective Altruism, and an operations associate at The Centre for Effective Altruism, then he would end up filling out and submitting:

After submitting this information the individuals in charge of hiring for each role would choose whether or not to contact him for additional information. This is roughly parallel to the Common App for college applications.
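One way to read the core / org-specific / role-specific split is sketched below. The function name `parts_to_fill` and the labels are hypothetical, and it assumes each role-specific part belongs to one organization; a scheme that shared role parts across organizations would give a shorter list.

```python
from typing import List, Tuple


def parts_to_fill(applications: List[Tuple[str, str]]) -> List[str]:
    """List the parts a candidate fills in: one core, one per org, one per (org, role)."""
    orgs: List[str] = []
    for org, _role in applications:
        if org not in orgs:  # each organization's part is filled in only once
            orgs.append(org)
    parts = ["core"]
    parts += [f"org: {org}" for org in orgs]
    parts += [f"role: {role} at {org}" for org, role in applications]
    return parts


# The three applications from the John Doe example.
john = [
    ("Open Phil", "recruiter"),
    ("The Centre for Effective Altruism", "recruiter"),
    ("The Centre for Effective Altruism", "operations associate"),
]

parts = parts_to_fill(john)
```

Under this reading the saving comes from the core part and from the organization that appears twice; the role-specific parts are still filled in once per opening.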

That is one way to look at this: organizations look at different aspects to hire the best-fit candidate. Another way is that the constraint is that hardly anyone is sincerely interested in working for that specific organization in a particular capacity. This is what I am trying to address: by filling in an application, people should better define their interests, and these, alongside their skills and background, should be readily available to organizations (who may thus start looking to hire a specific skillset), to funds that may be seeking people to advance projects, and to people looking to contract others for some tasks or to find collaborators. So, it can be argued that this can help organizations find what they are looking for.

Yes, that makes sense. That would be many organization-specific parts, but that can be done relatively easily, maybe adding a few questions per organization, and people can choose which ones to fill. Role-specific parts can be relatively more challenging as the application would have to keep changing but that is also possible.

Then, this person would be only marginally better off than if he filled in 3 applications and just copy-pasted the organization-specific part for CEA (filling in name, e-mail, etc. takes almost no time). The improvement here is if he fills in the role-specific info for recruiter only once. Of course, a recruiter at CEA is different from a recruiter at OpenPhil, but if there are just one or a few common questions about being a recruiter, then he can get to a better-fit role because he cannot tailor answers based on role descriptions etc. I actually wonder if people would then be more sincere or more biased in a different way (e.g. try to optimize for attention).