I've previously written that good ideas and conversations about AGI seem to have propagated through ML weirdly slowly.

A different weird phenomenon I observe is that the field's relative inaction about AGI seems less based on a confident set of beliefs about AGI definitely going well (or definitely being far off), and more based on an implicit sense like "the default is everything going well, and we don't need to change anything until there's overwhelming evidence to the contrary".

Some people do have confident beliefs that imply "things will go well"; I disagree there, but I expect some amount of disagreement like that.

But that doesn't seem to be the crux for most people in ML.

In a sane world, it doesn't seem like "well, maybe AI will get stuck at human-ish levels for decades" or "well, maybe superintelligence couldn't invent any wild new tech" ought to be cruxes for "Should we pause AI development?" or "Is alignment research the world's top priority?"

Note that I'm not arguing "an AGI-mediated extinction event is such a big deal that we should make it a top priority even if it's very unlikely". There are enough other powerful technologies on the horizon, and enough other risks for civilizational collapse or value lock-in, that I don't in fact think AGI x-risk should get major attention if it's very unlikely.

But the most common view within ML seems to be less "it's super unlikely for reasons X Y Z", and more of an "I haven't thought about it much" and/or "I see some reasons to be very worried, but also some reasons things might be fine, so I end up with medium-ish levels of worry".

In a mid-2022 survey, 48% of researchers who had recently published in NeurIPS or ICML gave double-digit probabilities to advanced AI's long-term effect being “extremely bad (e.g., human extinction)”. A similar number gave double-digit probabilities to "human inability to control future advanced AI systems causing human extinction or similarly permanent and severe disempowerment of the human species".

In an early 2021 survey, 91% of researchers working on "long-term AI topics" at CHAI, DeepMind, MIRI, OpenAI, Open Philanthropy, and what would become Anthropic gave double-digit probabilities to "the overall value of the future will be drastically less than it could have been, as a result of AI systems not doing/optimizing what the people deploying them wanted/intended".

The level of concern and seriousness I see from ML researchers discussing AGI on any social media platform or in any mainstream venue seems wildly out of step with "half of us think there's a 10+% chance of our work resulting in an existential catastrophe".

I think the following four factors help partly (though not completely) explain what's going on. If I'm right, then I think there's some hope that the field can explicitly talk about these things and consciously course-correct.

- "Conservative" predictions, versus conservative decision-making.

- Waiting for a fire alarm, versus intervening proactively.

- Anchoring to what's familiar, versus trying to account for potential novelties in AGI.

- Modeling existential risks in far mode, versus near mode.

1. "Conservative" predictions, versus conservative decision-making

If you're building toward a technology as novel and powerful as "automating every cognitive ability a human can do", then it may sound "conservative" to predict modest impacts. But at the decision-making level, you should be "conservative" in a very different sense, by not gambling the future on your technology being low-impact.

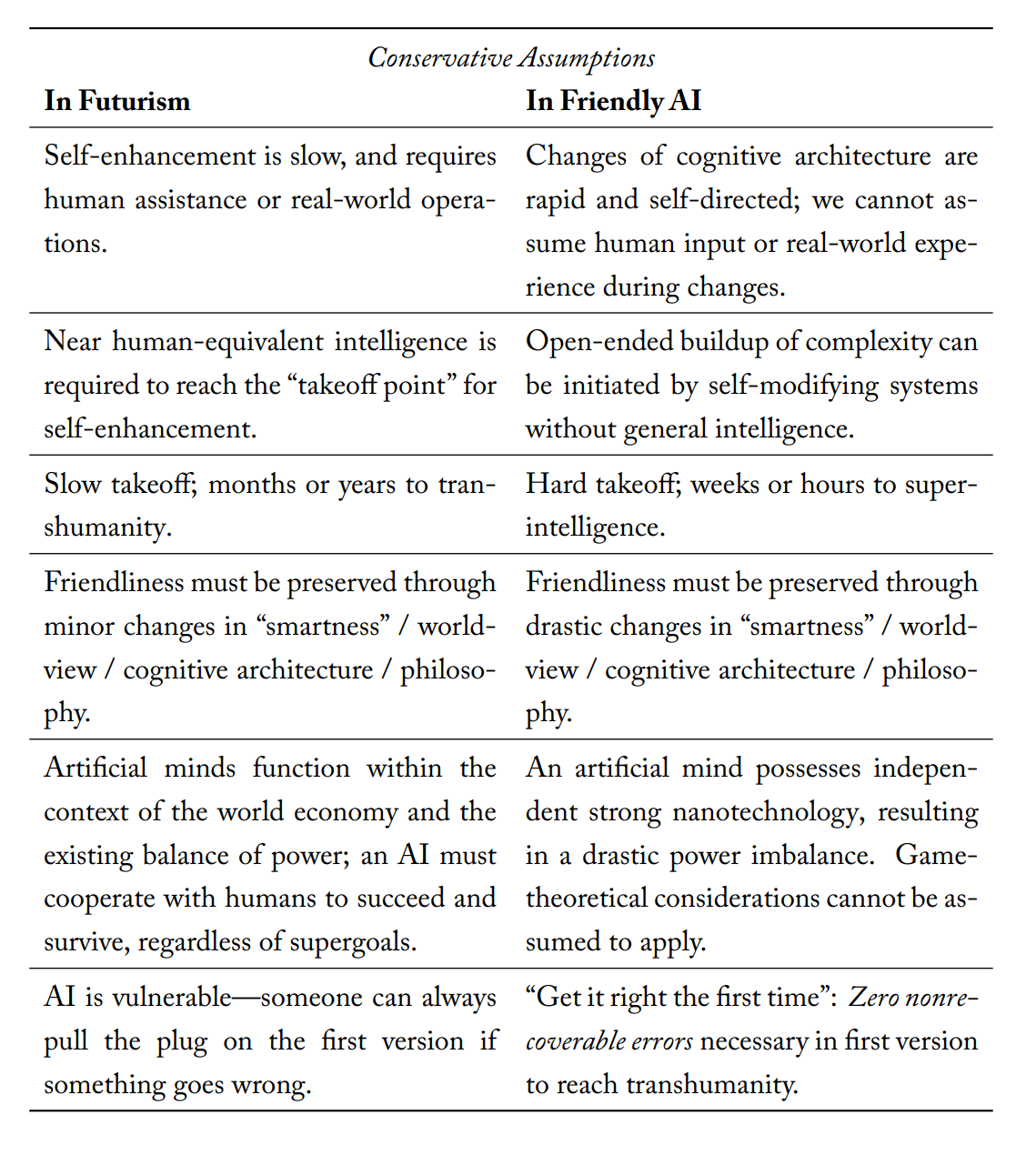

The first long-form discussion of AI alignment, Eliezer Yudkowsky's Creating Friendly AI 1.0, made this point in 2001:

The conservative assumption according to futurism is not necessarily the “conservative” assumption in Friendly AI. Often, the two are diametric opposites. When building a toll bridge, the conservative revenue assumption is that half as many people will drive through as expected. The conservative engineering assumption is that ten times as many people as expected will drive over, and that most of them will be driving fifteen-ton trucks.

Given a choice between discussing a human-dependent traffic-control AI and discussing an AI with independent strong nanotechnology, we should be biased towards assuming the more powerful and independent AI. An AI that remains Friendly when armed with strong nanotechnology is likely to be Friendly if placed in charge of traffic control, but perhaps not the other way around. (A minivan can drive over a bridge designed for armor-plated tanks, but not vice-versa.)

In addition to engineering conservatism, the nonconservative futurological scenarios are played for much higher stakes. A strong-nanotechnology AI has the power to affect billions of lives and humanity’s entire future. A traffic-control AI is being entrusted “only” with the lives of a few million drivers and pedestrians.

People who think their role is only to be a "conservative predictor", and not a "conservative decision-maker", will skew the scholarly conversation toward taking more extreme risks, because acknowledging extreme things sounds too out-there to them.

I personally wouldn't even call the predictions here "conservative", since this conflates "sounds normal" with "robust to uncertainty". All consistent object-level views about AI and technological progress have at least one "wild" implication (as noted in Holden Karnofsky's The Most Important Century), so views that sound normal here generally have to use misdirection and vagueness to obscure the wild part.

The availability heuristic and absurdity bias cause us to neglect big changes until it's too late.

My own view is that extreme disaster scenarios are very likely, not just a tail risk to hedge against. I actually expect AGI systems to achieve Drexler-style nanotechnology within anywhere from a few months to a few years of reaching human-level-or-better ability to do science and engineering work. At this point, I'm looking for any hope of us surviving at all, not holding out hope for a "conservative" scheme (sane as that would be).

But the point stands that if you have more "medium-sized" probabilities on those capabilities being available (as opposed to very high or very low ones), then a sane response to AGI should explicitly grapple with that, not pretend the probability is negligible because it's scary.

I do think debates between the "risk is extremely high" camp and the "risk is medium-sized" camp are important. But the importance mostly stems from "this suggests we have different background models, and should try to draw those out so they can be discussed explicitly", not "we should only take action about extreme risks once we're 95+% sure of them".

2. Waiting for a fire alarm, versus intervening proactively

There's No Fire Alarm for Artificial General Intelligence (written in 2017) makes a few different claims:

- Predicting when future technologies will be invented is usually very hard, and may be flatly impossible for humans in the typical case, at least when the technology isn't imminent. AGI is likely no exception.

- There isn't any "fire alarm" for AGI, i.e., there's no event that field leaders all know is going to happen well before AGI, such that everyone can coordinate around that event as "here's the trigger for us to start thinking seriously about AGI risk and alignment".

- Many people aren't working on AGI alignment today (in 2017) because they're waiting for some clear future social signal that you won't look weird or panicky for thinking about something as science-fictiony as AGI. But in addition to there being no known event like that we can safely wait around for, there probably aren't any unknown events like that either. It will probably still be socially risky to loudly worry about AGI even on the eve of AGI's invention. If you're waiting for social permission to get involved, you'll likely never get involved at all.

Quoting Yudkowsky:

When I observe that there’s no fire alarm for AGI, I’m not saying that there’s no possible equivalent of smoke appearing from under a door.

What I’m saying rather is that the smoke under the door is always going to be arguable; it is not going to be a clear and undeniable and absolute sign of fire; and so there is never going to be a fire alarm producing common knowledge that action is now due and socially acceptable.

The first two claims still seem correct to me. We can hope that the third is maybe false, and that we're now seeing a shift in the field toward taking AGI seriously, even if this wasn't foreseeable in 2017 and doesn't come with a lot of clarity about timelines.

For now, however, it still seems to me that the basic dynamics described in the Fire Alarm post are inhibiting action. Things are murky now, and I think there's a common implicit expectation that they'll be less murky later, and that we can safely put off thinking about the problem until some unspecified future date.

The bystander effect still seems powerful here. People don't want to be the first in a given social context to express alarm, so they default to looking vaguely calm while waiting for someone else to speak up or spring into action first. But everyone else is doing the same thing, so no one ends up acting at all.

This is a case where unilaterally acting at all (in sane and actually-helpful ways), speaking up, blurting your actual thoughts, etc. can be particularly powerful and important.

In some cases it may only take one person shattering the Overton window in order to open the floodgates for other people who were quietly worried. And even where that's not true, I expect better results from people hashing out their disagreements in argument than from people timidly waiting for the right moment.

3. Anchoring to what's familiar, versus trying to account for potential novelties in AGI

The level and nature of the risk from AGI turns on the physical properties of AGI. "AlphaGo wasn't dangerous" is evidence for "AGI won't be dangerous" only insofar as you think AGI is similar to AlphaGo in the relevant ways.

But for some reason, a lot of people who wouldn't go out on a limb and claim that AlphaGo and AGI are actually particularly similar in the ways that matter nonetheless treat AGI like "just a normal ML system". Their policy suggests confidence that AGI is in the same reference class as systems like AlphaGo or DALL-E in all the ways that matter, even though they wouldn't ever actually state that as a belief.

The whole conversation is baked through with a tacit assumption that the difficulty, danger, and importance of AGI alignment need to be "just more of the same", even though AGI itself is a very new sort of beast.

But "get a smarter-than-human AI system to produce good outcomes" is not in fact similar to a problem we've faced before! I think it's a solvable problem in principle, but the difficulty level does not need to be calibrated to business-as-usual efforts.

Quoting Beyond the Reach of God:

Once upon a time, I believed that the extinction of humanity was not allowed. And others who call themselves rationalists, may yet have things they trust. They might be called "positive-sum games", or "democracy", or "technology", but they are sacred. The mark of this sacredness is that the trustworthy thing can't lead to anything really bad; or they can't be permanently defaced, at least not without a compensatory silver lining. In that sense they can be trusted, even if a few bad things happen here and there.

The unfolding history of Earth can't ever turn from its positive-sum trend to a negative-sum trend; that is not allowed. Democracies—modern liberal democracies, anyway—won't ever legalize torture. Technology has done so much good up until now, that there can't possibly be a Black Swan technology that breaks the trend and does more harm than all the good up until this point.

There are all sorts of clever arguments why such things can't possibly happen. But the source of these arguments is a much deeper belief that such things are not allowed. Yet who prohibits? Who prevents it from happening? If you can't visualize at least one lawful universe where physics say that such dreadful things happen—and so they do happen, there being nowhere to appeal the verdict—then you aren't yet ready to argue probabilities.

[...] If there is a fair(er) universe, we have to get there starting from this world—the neutral world, the world of hard concrete with no padding, the world where challenges are not calibrated to your skills.

4. Modeling existential risks in far mode, versus near mode

Quoting Yudkowsky again, in "Cognitive Biases Potentially Affecting Judgment of Global Risks":

In addition to standard biases, I have personally observed what look like harmful modes of thinking specific to existential risks. The Spanish flu of 1918 killed 25-50 million people. World War II killed 60 million people. 10^7 is the order of the largest catastrophes in humanity’s written history. Substantially larger numbers, such as 500 million deaths, and especially qualitatively different scenarios such as the extinction of the entire human species, seem to trigger a different mode of thinking—enter into a “separate magisterium.” People who would never dream of hurting a child hear of an existential risk, and say, “Well, maybe the human species doesn’t really deserve to survive.”

There is a saying in heuristics and biases that people do not evaluate events, but descriptions of events—what is called non-extensional reasoning. The extension of humanity’s extinction includes the death of yourself, of your friends, of your family, of your loved ones, of your city, of your country, of your political fellows. Yet people who would take great offense at a proposal to wipe the country of Britain from the map, to kill every member of the Democratic Party in the U.S., to turn the city of Paris to glass—who would feel still greater horror on hearing the doctor say that their child had cancer—these people will discuss the extinction of humanity with perfect calm. “Extinction of humanity,” as words on paper, appears in fictional novels, or is discussed in philosophy books—it belongs to a different context than the Spanish flu. We evaluate descriptions of events, not extensions of events. The cliché phrase end of the world invokes the magisterium of myth and dream, of prophecy and apocalypse, of novels and movies. The challenge of existential risks to rationality is that, the catastrophes being so huge, people snap into a different mode of thinking.

In terms of construal level theory, personal tragedies are "near", while human extinction is "far". We think of far-mode things in more abstract and detached terms, more like morality tales or symbols than like messy, concrete, mechanistic processes.

Rationally, we ought to take larger disasters proportionally more seriously than equally probable small-scale risks. In practice, we don't seem to do that at all.
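
One toy way to spell out "proportionally", assuming we score a risk by its expected loss (probability times magnitude): the sketch below compares two equally probable disasters of different sizes. The probability and death tolls are made-up illustrative numbers, not estimates from this post, and the function name is my own.

```python
def expected_loss(probability: float, deaths: float) -> float:
    """Expected number of deaths: probability times magnitude."""
    return probability * deaths


# Hypothetical numbers, chosen only for illustration.
p = 0.10                  # same probability for both disasters
small_disaster = 1e6      # a catastrophe killing one million people
large_disaster = 8e9      # a catastrophe killing roughly everyone

small = expected_loss(p, small_disaster)
large = expected_loss(p, large_disaster)

# With equal probabilities, the ratio of expected losses is just the
# ratio of magnitudes: the larger disaster deserves ~8000x the weight.
ratio = large / small
print(f"expected loss ratio (large / small): {ratio:.0f}")
```

This only counts immediate deaths; it leaves out anything about lost future generations, which would push the ratio higher still.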

"Well, maybe we aren't all going to die; it's not a sure thing!" is a lot weaker than the bar we usually require for doing anything.

The above is my attempt at a partial explanation of what's going on:

- People are taking the risks unseriously because they feel weird and abstract.

- When they do think about the risks, they anchor to what's familiar and known, dismissing other considerations because they feel "unconservative" from a forecasting perspective.

- Meanwhile, social mimesis and the bystander effect make the field sluggish at pivoting in response to new arguments and smoke under the door.

What do you think of this picture? Do you have a different model of what's going on? And if this is what's going on, what should we do about it?

Nice post!

I think I’d want to revise your first taxonomy a bit. To me, one (perhaps the primary) disagreement among ML researchers regarding AI risk consists of differing attitudes to epistemological conservatism, which I think extends beyond making conservative predictions. Here’s why I prefer my framing:

I also think that the language of conservative epistemology helps counteract (what I see as) a mistaken frame motivating this post. (I’ll try to motivate my claim, but I’ll note that I remain a little fuzzy on exactly what I’m trying to gesture at.)

The mistaken frame I see is something like “modeling conservative epistemologists as if they were making poor strategic choices within a non-conservative world-model”. You state:

I have concerns about you inferring this claim from the survey data provided,[1] but perhaps more pertinently for my point: I think you’re implicitly interpreting the reported probabilities as something like all-things-considered credences in the proposition researchers were queried about. I’m much more tempted to interpret the probabilities offered by researchers as meaning very little. Sure, they’ll provide a number on a survey, but this doesn’t represent ‘their’ probability of an AI-induced existential catastrophe.

I don’t think that most ML researchers have, as a matter of psychological fact, any kind of mental state that’s well-represented by a subjective probability about the chance of an AI-induced existential catastrophe. They’re more likely to operate with a conservative epistemology, in a way that isn’t neatly translated into probabilistic predictions over an outcome space that includes the outcomes you are most worried about. I think many people are likely to filter out the hypothesis given the perceived lack of evidential support for the outcome.

I actually do think the distinction between 'conservative predictions' and 'conservative decision-making' is helpful, though I'm skeptical about its relevance for analyzing different attitudes to AI risk.

If my analysis is right, then a first pass at the practical conclusions might consist in being more willing to center arguments about alignment that come from a more empirically grounded perspective (e.g. here), or more directly attempting to have conversations about the costs and benefits of more conservative epistemological approaches.

[1] First, there are obviously selection effects present in surveying OpenAI and DeepMind researchers working on long-term AI. Citing this result without caveat feels similar to using (e.g.) PhilPapers survey results revealing that most specialists in philosophy of religion are theists to support the claim that most philosophers are theists. I can also imagine similar selection effects being present (though to lesser degrees) in the AI Impacts Survey. Given selection effects, and given that response rates from the AI Impacts survey were ~17%, I think your claim is misleading.

Nice post!

In other words, a failure, but not of prediction: