After seven years of operations, the Charity Elections programme is closing down indefinitely. The goal of this post is to review the project, its evaluation, and our takeaways from its 45 events run across schools in 7 countries.

TL;DR

- Review of the programme's goals: The Charity Elections programme was designed to cultivate critical thinking and compassion, introduce high school students to principles of effective giving, and inspire young people to make a positive impact in the world. Student and teacher participants have regularly described experiencing those benefits, as well as others, including fostering civic participation and promoting a positive school climate.[1]

- Conceptualisation of programme evaluation: Though logistical and legal obstacles to data collection were significant, the preliminary analysis suggests that the programme achieved at least some of its objectives. Dr David Reinstein analysed data from the 2022–23 charity elections through the EA Market Testing (EAMT) team and may publish a follow-up post on this analysis. Despite the difficulty of making conclusive, quantitative determinations about the programme's success, we speculate that the benefits of the programme were sufficient to justify its costs during its years of operation, given (1) the relatively low cumulative actual operational cost per participating student vote ($8.39 USD), (2) our interpretation of the quantitative and qualitative evidence, and (3) the development of a field-tested curriculum that, now made public, enables schools to continue running charity elections on their own.

- Reasons for closing down indefinitely: Now that Giving What We Can (GWWC) has discontinued the programme, our primary reasons for not continuing operations independently are: (1) we did not identify a mechanism that could reliably enrol new schools, suggesting the project might stagnate after the initial wave of expansion with GWWC; (2) we believe the remaining project funds would be better absorbed by GWWC than spent transitioning the programme to a new fiscal sponsor; and (3) a school that can fundraise $2 per voting student can now run a charity election independently, with zero operational cost from an external entity (i.e., the Charity Elections programme), generating entirely counterfactual donations to highly effective charities.

- Takeaways moving forward: We discuss the key challenges that came up and how they were addressed, and we propose that charity elections should continue to fit into the effective giving ecosystem as a free school programme—especially for teachers involved with EA who are interested in sharing their aspirations with their school community.

Historical review

Before reading further, we highly recommend scanning some of the following resources. For conciseness, the post itself will not repeat essential background on the programme and its history; for completeness, this section is dense with hyperlinks to more information. The term 'Charity Elections programme' refers to the project being discontinued, while 'charity elections' refers to the school events, which schools can continue to run.

Suggested resources: programme overview and materials

For a high-level conceptualisation of the programme

| Resource | Recommended scan time | Specific takeaways |
| --- | --- | --- |
| Introduction to how a charity election works | 2–3 minutes | Structure of programme; role of student leaders; curriculum designed to be simple and efficient |
| Further details on the programme (project brief) | 3–5 minutes | Variety of programme goals; theory of change; relation to other areas such as civic participation, student-centred education, social and emotional learning, and youth empowerment |
| Examples of successful school events | 1–2 minutes | Samples of qualitative data; perspectives from teachers who have run the programme |

For a sample of the curriculum provided to schools

| Resource | Recommended scan time | Specific takeaways |

| 1–2 minutes | A key element of a charity election that focuses on cost-effectiveness and evidence; students conduct their own research and discuss it with peers; examples of highly effective charities on the ballot | |

| 1–2 minutes | Guidelines for student discussion; relevant student competencies based on philosophy education | |

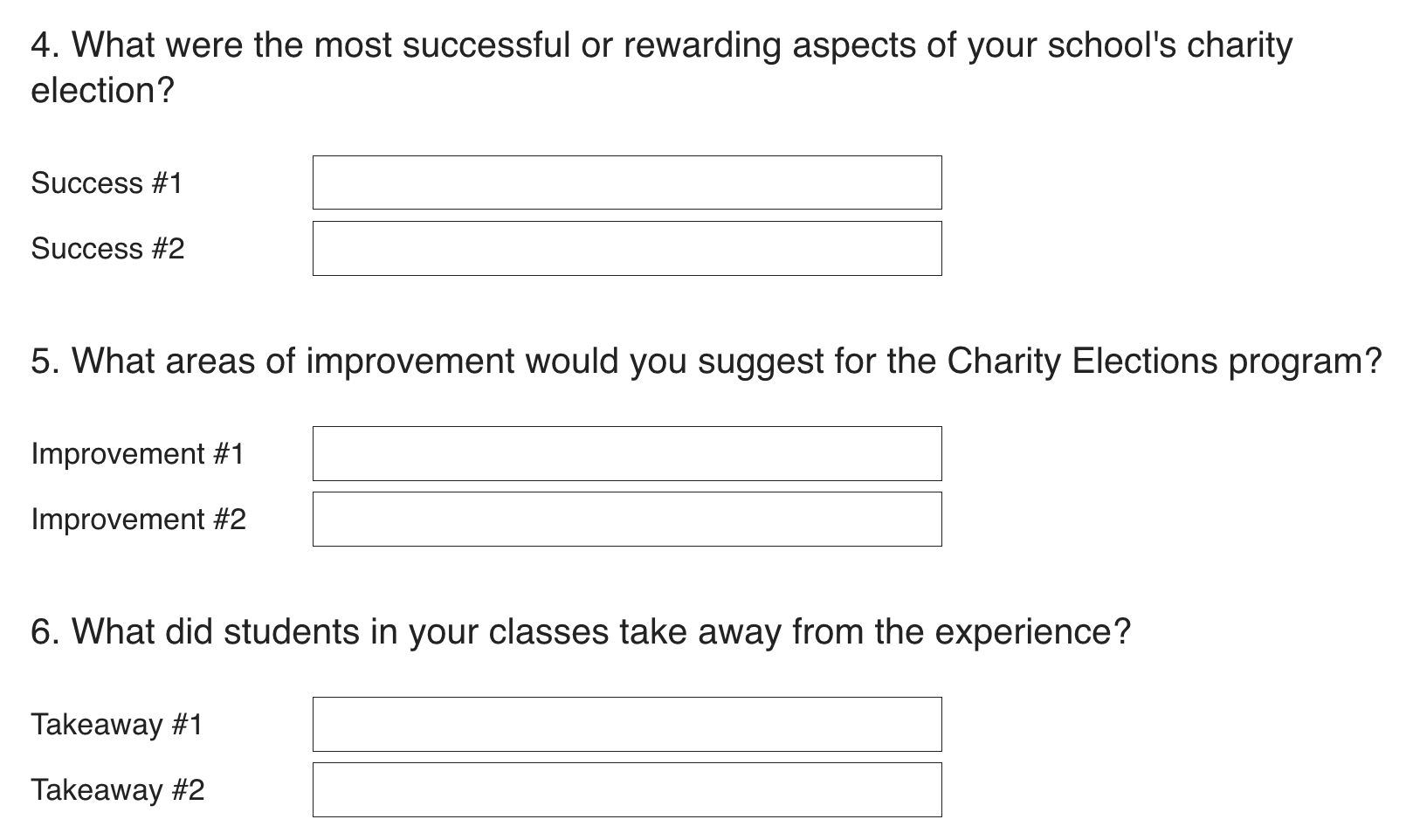

Digital Voter Registration Form | 1–2 minutes | Non-voting-related questions included both as part of experiential learning process and also as part of programme evaluation; Voter Registration Form roughly functions as pre-event measurement and Voting Ballot roughly functions as post-event measurement |

| 3–5 minutes | Key resource to run a charity election with complete teacher instructions in presenter notes; distributed by lead staff member (i.e., election coordinator) to participating teachers; divided into multiple parts to encourage stronger implementation fidelity and improve information value of data analysis |

For details around the programme's discontinuation and status moving forward

| Resource | Recommended scan time | Specific takeaways |
| --- | --- | --- |
| Announcement of programme discontinuation (post from Giving What We Can on the discontinuation of Charity Elections and other initiatives) | 1–2 minutes | Programme was discontinued in 2025 after incubation and fiscal sponsorship from Giving What We Can (GWWC); programme was first implemented in 2018 with support from The Life You Can Save (TLYCS); schools can still run charity elections on their own |
| How schools can still run charity elections and send money to highly effective charities, even after the programme has been discontinued | 1–2 minutes | Guide for schools to run charity elections on their own; some schools have already independently fundraised ($6.5k USD reported from 2018–25); potential for teachers to independently run charity elections without operational support or overhead of any kind |

For other resources that may be useful but less essential to this post, with huge thanks 🙏 to these and other community members who have volunteered over the years:[2]

| Resource | Specific takeaways |
| --- | --- |
| Video presentation from a maths teacher on the results of his school's charity election | Tangible explanation of how a charity election works; discussion of the student experience; relevance to civic participation and effective altruism. 🙏 🙏 to Dan Roeder for preparing this presentation and otherwise supporting programme outreach. |
| 🇮🇹 Video presentation about running charity elections in Italian (Charity Elections: l'impatto nella scuola secondaria, i.e., "Charity Elections: the impact in secondary school") | Tangible explanation of how a charity election works, in Italian. 🙏 🙏 to Fabio Sandrecchi for translating the curriculum, as well as Stefania Delprete and the Altruismo Efficace Italia community for their support. |
| Article from Northfield High School | Reflections from two student leaders and an election coordinator; relevance to civic participation and positive school climate |
| Article from Highgate School | Discussion of systems change in school fundraising; example of how teachers can enhance the programme with supplemental curriculum. 🙏 🙏 to Jenny Chapman for getting charity elections started at Highgate School, and otherwise improving and expanding the programme. |
| Article from Istituto Salesiano Madonna degli Angeli | Discussion of the peer and teacher interview component of the student leader role; discussion of the research process |
| | Detailed guide for both student leaders and the election coordinator; includes training requirements and instructions for student interviews; references links to research the charities on the ballot and a poster for student leaders to promote them around the school |
| | Visualisation of how voting week progresses from voter registration, to research and discussion, and to voting |
| Instructional video | Short video introducing students to the research process; included in part 2 of the event slideshow |
| New landing page for Charity Elections | List of several other relevant resources, including student and teacher testimonials, a sample certificate awarded to student leaders, and a digital plant-based cookbook resulting from a post-event student club |

If you wish to know more about something referenced in the resources above or in the body of the post below, we would be happy to share further information.

Additional topics

Other notable aspects of the programme not discussed in the resources:

Interdisciplinary focus

The programme was initially designed for and within a particular school, with no broad agenda for the project's development beyond introducing effective giving to students. It was developed through an interdisciplinary process involving collaboration with a teacher of Civics and AP Government and former Minnesota State Senator at a public high school; regular implementation of Giving Games sponsored by The Life You Can Save in philosophy classes at an international summer learning programme; and curriculum design incorporating research in school psychology. After it was implemented in its first school in 2018, it was developed for application to more schools and we began to refine and expand its objectives. A Communications and Outreach Lead was brought on in 2022 to allow the programme to scale up further.

Accessibility and adaptability of curriculum

Over the years, most teachers running the programme did not have a background in effective altruism. Accordingly, the curriculum emphasised the principles of effective giving but, for the most part, did not reference EA. The programme was designed to be adaptable, so that teachers could adjust it to meet a variety of needs, such as limited class time, integration with projects such as school-wide presentations and essays, and simplified vocabulary for pilots with students as young as 5th grade. It also allowed slight modifications to suit particular classes, such as IB Philosophy (e.g., incorporating a discussion of Peter Singer) and maths (e.g., adding an emphasis on cost-effectiveness).

Thoughtful design for an empowering experience

To our knowledge, the programme received no pushback from students, teachers, or administrators. The video content for each charity/fund on the ballot was selected thoughtfully to promote capacities such as counterfactual thinking, moral circle expansion, and compassion at an appropriate developmental level, while depicting tractable cause areas straightforwardly and with a respectful, collaborative, and uplifting tone. The charities on the ballot rotated each academic year. They typically included a charity with a particularly tangible impact in global health (e.g., Malaria Consortium SMC Programme); a charity/fund focused on non-human animal well-being and/or climate change (e.g., The Humane League Corporate Animal Welfare Campaigns); and a fund in global health (or a charity in global health, if the second organisation was a fund rather than a charity) that might add complexity to students' thinking and prompt nuanced discussions of risk and opportunity cost (e.g., GiveWell All Grants Fund).

Amounts and sources of funding

In 2022, the Effective Altruism Infrastructure Fund (EAIF) awarded a grant to the Charity Elections programme, which was used for operational costs and to cover the sponsored funds directed to highly effective charities based on student votes. Across the EAIF grant ($110.1k USD), funding from The Life You Can Save, and donations from the Giving What We Can community, a total of $122.7k was spent on legal, salary, marketing, and other operational costs, and $32.1k was directed to the charities on the ballot over a seven-year period from 2018–25.[4]

Operational cost per participating student

The total operational cost can be considered in light of the programme generating 14,627 student votes across 45 school events, with 138 registered student leaders and an additional, counterfactual $6.5k known to have been fundraised by schools and directed to highly effective charities beyond the amount provided by Giving What We Can. The 45 school events were run across 26 unique schools in the United Kingdom, the United States, Italy, Australia, Spain, Israel, and Hong Kong, with several schools running charity elections in consecutive years. Schools varied significantly in the number of student votes per charity election: 8 events were classroom-level charity elections with fewer than 50 student votes, and 14 events at large schools were whole-school charity elections with 500–1,000 student votes. Based on the number of student votes and on which schools ran a charity election in consecutive years, we estimate that approximately 10,000–11,000 unique students participated. Accordingly, the estimated cumulative operational cost per unique participating student was approximately $11.15–12.27 USD ($122.7k divided by 11,000 and by 10,000 estimated unique students, respectively), and the cumulative operational cost per participating student vote was $8.39 ($122.7k / 14,627 student votes).[5]
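The cost-per-participant arithmetic above can be reproduced in a few lines. This is a minimal Python sketch using only the totals reported in this section; the constant names are ours, and the unique-student range is the post's own estimate rather than a measured count, so the per-student figure comes out as a range.

```python
# Reproduces the cost-per-participant arithmetic from the reported totals.
# Constant names are illustrative; the values are the figures stated in the post.

TOTAL_OPERATIONAL_COST_USD = 122_700  # legal, salary, marketing, other costs, 2018-25
STUDENT_VOTES = 14_627                # total votes across 45 school events
UNIQUE_STUDENTS_LOW = 10_000          # lower bound of the estimated unique students
UNIQUE_STUDENTS_HIGH = 11_000         # upper bound of the estimated unique students


def cost_per(count: int, total: float = TOTAL_OPERATIONAL_COST_USD) -> float:
    """Cumulative operational cost divided by a participant count, in USD."""
    return total / count


per_vote = cost_per(STUDENT_VOTES)
# More unique students means a lower cost per student, so the high count gives the low bound.
per_student_low = cost_per(UNIQUE_STUDENTS_HIGH)
per_student_high = cost_per(UNIQUE_STUDENTS_LOW)

print(f"Cost per student vote: ${per_vote:.2f}")
print(f"Cost per unique student: ${per_student_low:.2f}-{per_student_high:.2f}")
```

Because the unique-student count is an estimate, carrying both bounds through the calculation keeps that uncertainty visible instead of reporting a single point value.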

Behind-the-scenes support

Most of the programme's successes would not have been possible without the support of Luke Freeman, who continually provided crucial guidance of many kinds, and the active involvement of Zou Xinyi during the programme's years with Giving What We Can. We are also grateful to Kathryn Mecrow-Flynn and Jon Behar, who were immensely supportive in getting the programme started.

Data analysis

Data analysis of the Charity Elections programme was conducted by Dr David Reinstein through his work with the EA Market Testing (EAMT) team. The current form of the data analysis includes more than fully anonymised school-wide results, which prevents it from being published immediately, given the need to protect student data under the programme's agreements with schools. If it seems valuable, David may publish a follow-up post with more detail later on. The analysis covered charity elections from 12 schools in the 2022–23 academic year, with Unlimit Health (formerly the SCI Foundation), the Clean Air Task Force, and GiveDirectly as the charities on the ballot.[6]

Context of data analysis

Before reviewing any analysis that may be posted later, we recommend reading the following context on data collection:

- Various obstacles limiting data collection

- Timing and purposes of data collection within the structure of the programme

- Approach to impact assessment

- List of the prioritised outcomes

- List of the non-prioritised outcomes

- Adjustments to data collection

- Proposed conceptualisation of programme evaluation

The following sections address each of these topics, focusing on the aspects of the data collection process that we (the Charity Elections programme) believe are most necessary to understand in order to interpret the data analysis accurately and in context, along with our point of view on how to conceptualise programme evaluation. We want to emphasise that we defer to David's expertise and neutral position in evaluating the programme, and that any views expressed in this post do not necessarily represent his.

Various obstacles limiting data collection

In an ideal world, the most informative research design for the programme would likely be a randomised controlled trial, in which a large number of schools would participate in the Charity Elections programme while a similar cohort would either not participate or would participate in an alternative programme that schools might be likely to implement if they did not run a charity election. Schools could be assigned to either condition by simple random assignment or by stratified random assignment across variables such as median household income. It would also be ideal to follow up with participants over time to collect a wide range of longitudinal behavioural data, including subsequent donation behaviour, involvement in altruistic activities such as EA Groups if they attend university, civic participation, and career choice. Even though the programme consists of only a few hours of curriculum, thousands of students have participated per year, so, given the programme's low operational cost per participating student, differences in these outcomes for just a handful of students might be sufficient to suggest that the programme is cost-effective.
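To make the hypothetical trial design more concrete, the sketch below shows stratified random assignment: schools are grouped into strata by a covariate (here, median household income bands), then shuffled and split between conditions within each stratum, so both arms end up with a similar income mix. The school names, income figures, band cut-offs, and condition labels are all invented for illustration; no such trial was run.

```python
import random
from collections import defaultdict


def income_band(median_income: int) -> str:
    """Place a school in a coarse income stratum (cut-offs are illustrative only)."""
    if median_income < 50_000:
        return "low"
    if median_income < 90_000:
        return "middle"
    return "high"


def stratified_assignment(schools: dict[str, int], seed: int = 0) -> dict[str, str]:
    """Randomly assign schools to 'charity_election' or 'control' within income strata.

    Within each stratum, schools are shuffled and then alternated between arms,
    so the two arms differ by at most one school per stratum.
    """
    rng = random.Random(seed)  # seeded so an assignment can be reproduced and audited
    strata: dict[str, list[str]] = defaultdict(list)
    for name, income in schools.items():
        strata[income_band(income)].append(name)

    assignment: dict[str, str] = {}
    for band in sorted(strata):          # fixed stratum order for reproducibility
        members = sorted(strata[band])   # fixed starting order before shuffling
        rng.shuffle(members)
        for i, name in enumerate(members):
            assignment[name] = "charity_election" if i % 2 == 0 else "control"
    return assignment


# Hypothetical schools mapped to hypothetical median household incomes (USD).
schools = {
    "School A": 42_000, "School B": 47_000,
    "School C": 65_000, "School D": 80_000,
    "School E": 120_000, "School F": 150_000,
}
print(stratified_assignment(schools))
```

Stratifying before randomising guards against the chance outcome of, say, most higher-income schools landing in one arm, which would confound any comparison between conditions.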

Practically speaking, however, there are significant legal, ethical, financial, and logistical obstacles to coordinating a randomised controlled trial, collecting longitudinal data, collecting certain forms of behavioural data, and clearly defining and measuring constructs in behavioural terms, especially when they can appear in many different observable ways. These obstacles include barriers to collecting or retaining certain forms of data from minors, differences in legal procedures across the countries in which schools have run the programme, incentives that would be needed for schools to participate in the control condition, the nature of the programme as customisable in order for it to be compatible with pre-existing school curriculum, the budget required to run a study of this sort, and conditions for data collection within the voting process that challenge precise pre-post comparison between schools.

As a result, the data that were collected cannot provide evidence of the Charity Elections programme's effectiveness relative to a control group, nor can they demonstrate that the programme produces long-term behavioural outcomes for students. Given this, the report of programmatic efficacy is probably best understood as a tentative estimate of the programme's impact and cost-effectiveness amid uncertainty, rather than a thorough and confident determination of its value. Before describing the framework proposed to evaluate the programme's impact and cost-effectiveness, the following sections outline the types of data that were collected and ultimately weighed against the programme's operational costs.

Timing and purposes of data collection

The two forms of data collected were (1) approximate pre-event and post-event survey data, which were collected as part of the Voter Registration Form toward the beginning of the event and the Voting Ballot toward the end of the event, and (2) a variety of mostly qualitative outcomes that resulted from running each charity election event.

Approximate pre-event and post-event survey data

Although schools can customise the event to fit their individual schedules and needs, charity elections were designed to be run across a week of research and voting, with three class sessions of 20–45 minutes each (e.g., on the Monday, Wednesday, and Friday of a single week). Given differences in class-time availability among schools (e.g., incorporation into a particular department's curriculum vs. one or more school-wide assemblies), several participating schools would not have been able to run a charity election if strict adherence to a single standard model had been required. It is unreasonable to expect teachers facing a variety of demands and unique contexts to implement the curriculum with a high level of consistency. Therefore, while the standard model was strongly encouraged, schools were offered flexibility, so that more of them could take part, as long as the research and discussion process remained central to the proposed adaptation. As a result, schools varied notably in where the Voter Registration Form and Voting Ballot fit into the overall process.

This is relevant to data analysis because the Voter Registration Form functioned as the pre-event survey and the Voting Ballot functioned as the post-event survey. The primary purposes of the forms were to facilitate voting and to promote reflection and learning, via questions designed to stimulate thinking about major world issues and what young people can do to help solve them. Accordingly, if teachers modified the sequence of the curriculum for an understandable, approved reason, or if implementation adherence was low, the Voter Registration Form could end up further from the true pre-event point in time and the Voting Ballot further from the true post-event point in time.

These situations differed from the standard process, in which students filled out the Voter Registration Form and Voting Ballot toward the beginning and end, respectively, of their school's charity election, and the two forms received largely comparable numbers of responses.

Even so, a variety of factors complicated pre-post comparisons. Some inevitable issues included:

- Students submitting the Voter Registration Form and Voting Ballot on the same day due to extracurriculars, sickness, or other attendance-related reasons (e.g., if a student missed class on the first day of voting week, the teacher might instruct them to fill out the Voter Registration Form immediately before submitting the Voting Ballot)

  - Effect: For some students, the pre-event and post-event measures were submitted at roughly the same time, potentially decreasing overall effects

- Student leaders campaigning or fundraising for the charities on the ballot prior to voting week (e.g., via the spotlight poster), which the event handbook encourages as part of the programme

  - Effect: Students were exposed to aspects of the programme before the pre-event measure, potentially raising baseline outcomes artificially

- Student leaders and/or teachers announcing the election results after voting week (e.g., via this format), which is an essential component of the programme

  - Effect: Students were not exposed to these aspects of the programme until after the post-event measure, so it might capture only part of the programme's impact

- Students not submitting their names, or any other personally identifying information, in the Voter Registration Form and Voting Ballot

  - Effect: Responses cannot be matched across the two forms, so the data cannot appropriately be analysed with paired statistical tests, though unpaired tests can still be used

- Students registering to vote, and thus submitting the approximate pre-event survey, twice due to the school disabling certain permissions[7]

  - Effect: If a large school has browser limitations disabled (e.g., because there are not enough devices for all participating students to vote when browser limitations are turned on), a very small number of students might plausibly register to vote and/or vote twice[8]
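Because anonymous responses cannot be linked student by student, a pre-post comparison has to treat the Voter Registration Form and Voting Ballot responses as two independent samples. The sketch below computes Welch's unpaired t statistic, which assumes neither equal variances nor equal group sizes, from hypothetical 1–5 agreement ratings. It only illustrates what an unpaired comparison looks like; it is not the preregistered analysis, and the ratings are made up.

```python
import math
from statistics import mean, variance


def welch_t(pre: list[float], post: list[float]) -> tuple[float, float]:
    """Return Welch's t statistic and Welch-Satterthwaite degrees of freedom.

    A positive t means the post-event mean is higher than the pre-event mean.
    The samples are treated as independent because responses are anonymous.
    """
    n1, n2 = len(pre), len(post)
    v1, v2 = variance(pre), variance(post)  # sample variances (n - 1 denominator)
    se1, se2 = v1 / n1, v2 / n2             # squared standard errors of each mean
    t = (mean(post) - mean(pre)) / math.sqrt(se1 + se2)
    df = (se1 + se2) ** 2 / (se1 ** 2 / (n1 - 1) + se2 ** 2 / (n2 - 1))
    return t, df


# Hypothetical 1-5 agreement ratings; group sizes differ, as they can in practice.
pre_ratings = [3, 4, 3, 2, 4, 3, 3, 4]
post_ratings = [4, 4, 5, 3, 4, 4, 5]

t_stat, dof = welch_t(pre_ratings, post_ratings)
print(f"t = {t_stat:.2f}, df = {dof:.1f}")
```

Welch's version is a reasonable default here because the two forms can receive different numbers of responses (for example, when some teachers skipped the Voter Registration Form), and nothing guarantees equal variance across the two time points.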

A few examples of school-specific issues that arose during the 2022–23 academic year:

- At one school, some of the teachers mistakenly instructed ~10% of participating students to submit the Voter Registration Form twice.

- At another school, the teacher mistakenly did not present the Voter Registration Form until after students had begun the research and discussion process.

- At a third school, some of the teachers did not present the Voter Registration Form to students at all, likely due to not following the instructions passed on from the lead teacher or a miscommunication between the lead teacher and other participating teachers.

Given the demanding and sometimes unpredictable school environment, issues such as these have been par for the course across the programme's seven years of operation, even at the most motivated schools and with teachers in the effective altruism community. Stricter conditions on implementation adherence (e.g., withholding sponsorship from schools with implementation issues) would not only detract from the spirit of the event but would also render the programme infeasible for some schools and penalise others for unexpected implementation challenges (e.g., technology issues or fire drills) that cannot reasonably be prevented. For most teachers, it is already difficult to commit two hours of class time to the programme within their curriculum expectations.

In order to improve implementation adherence as much as possible, a few changes were made over the 2022–23 academic year:

- The event slideshow was divided into three different parts, with different days of voting week designated for each. This was intended to lead to a more standardised presentation among schools.

- The voting week timeline was adopted to further clarify the sequence of the curriculum.

- The election coordinators were provided reminders of upcoming steps in the programme and received particularly timely email replies during their school's voting week.

Ultimately, the data analysis identified the schools with the most implementation difficulties and accounted for them, such as by removing those schools from some of the analyses.

Other outcomes from running a charity election

Because these mostly qualitative outcomes do not lend themselves to falsifiable hypotheses, they were less relevant to programme evaluation and are discussed in less detail here.

Examples from the 2022–23 academic year included:

- Students participating in a charity election through a 5th-grade pilot event wrote essays on this article from GWWC. The Charity Elections programme did not prompt this activity; we learned of it only when the election coordinator informed us after the fact.

- Two student leaders and a teacher travelled to give a presentation at the National Council for the Social Studies annual conference, inspiring one of the teachers in attendance to run a charity election the following year.

We saw a variety of such extensions taken on proactively by schools over the course of the Charity Elections programme, illustrating schools’ creativity in using the programme for expanded learning and revealing for us, albeit outside our effectiveness measurements, the potential for further engagement.

Approach to impact assessment

As background for the selection of relevant outcomes, GWWC's mission includes the aim to "make giving effectively and significantly a cultural norm." The mission of the Charity Elections programme was to “inspire young people to make a positive impact in the world, and in doing so cultivate a culture of giving and giving effectively.” By starting applied conversations about universal principles of effective altruism (e.g., cost-effectiveness and moral circle expansion) among large populations of young people, the programme aimed to produce a variety of outcomes. The programme's multi-tracked theory of change sets out its conceptualisation of the most essential of those outcomes, how they relate to the programme's design, and how they might be operationalised within the necessary legal parameters.

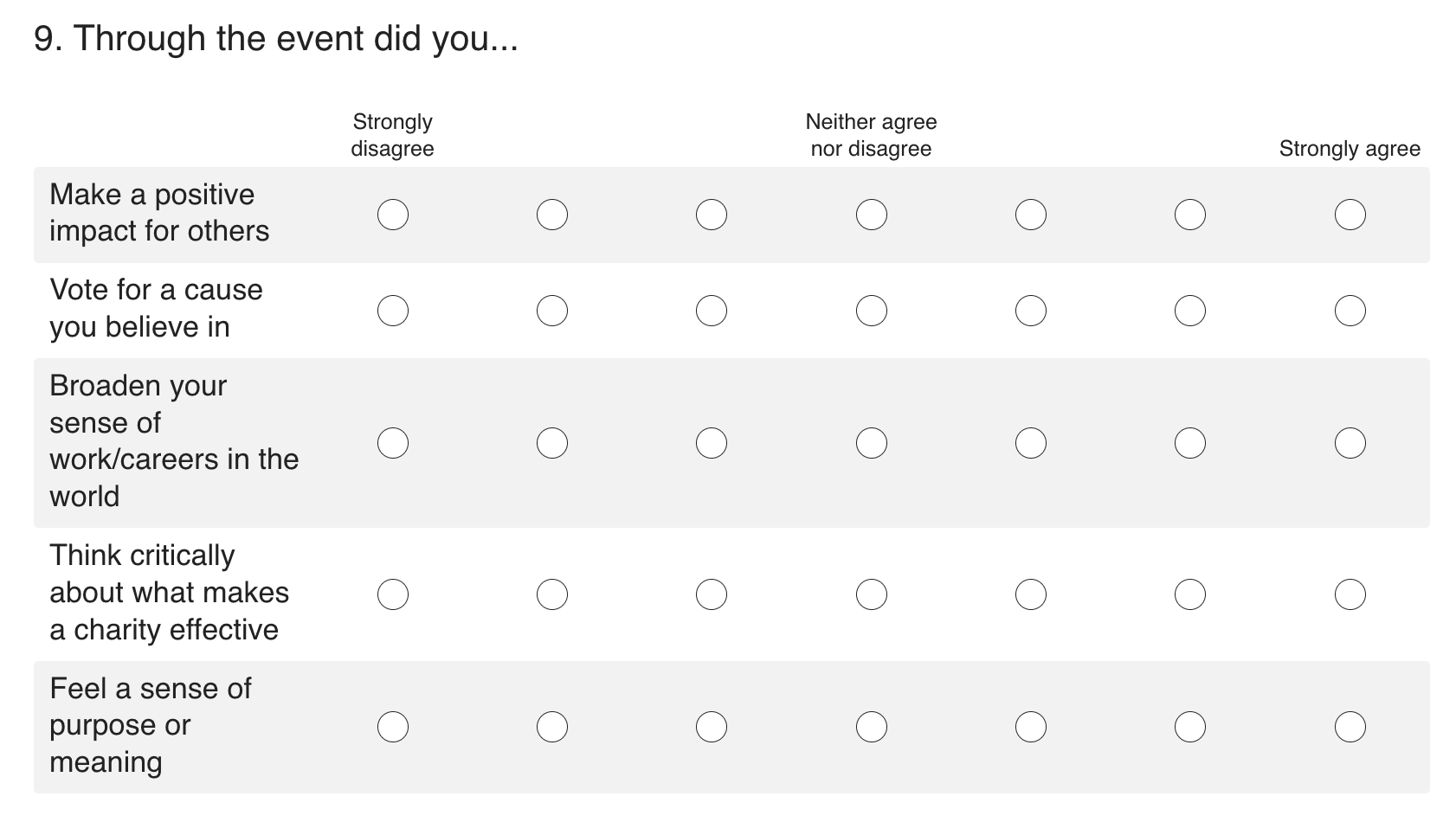

Before its data analysis with the EAMT team, the programme in its first years of operation collected data that were not grounded in a particular theory of change and generally not collected for the sake of impact assessment. For example, after voting, students could indicate, through binary yes/no questions, whether they had made a difference for people in need and whether they had voted for a cause they believed in. The vast majority of students regularly reported that they had, which held value for schools and seemed to suggest potential, but the evidence was not very meaningful from the perspective of impact measurement. At the time, the programme was considering close involvement with a variety of communities alongside effective altruism (particularly philosophy education, student-centred education, civic participation, youth development, experiential learning, and school psychology), and it gave strong priority to directly involving students, teachers, school counsellors, and other school-based stakeholders in a process that was more exploratory than evaluative.

Accordingly, although the programme initially collected data to demonstrate value to schools more than to assess impact, as it gained traction it began to approach impact assessment from the standpoint of Comprehensive Mixed-Methods Participatory Evaluation (CMMPE). The six basic assumptions of that framework are as follows: "Programme success is dynamic and multi-dimensional; definitions and perspectives of programme success are likely to vary among stakeholders; programme evaluation has multiple purposes; comprehensive programme evaluation requires mixed qualitative-quantitative methods; comprehensive programme evaluation requires participation of stakeholders; and comprehensive programme evaluation requires advanced planning and is integral to service delivery" (Nastasi & Hitchcock, 2008).[9]

This framework is useful in that it recognises several fundamental qualities of the programme, especially its multi-dimensionality and relevance to a variety of stakeholders, and it allowed the programme to incorporate falsifiable hypotheses while maintaining a broad understanding of impact. However, to remove ambiguity and allow a more rigorous form of evaluation, it was ultimately replaced by a preregistered analysis led by an expert, neutral party.[10] Under this approach to impact assessment, particular outcomes within the already more targeted theory of change were identified as most essential, with falsifiable hypotheses and ways of accounting for biases.

List of the prioritised outcomes

The following outcomes were preregistered prior to data collection and regarded as most relevant to impact assessment. For reference, most of the quantitative outcomes, as well as some of the qualitative outcomes, can be viewed in the context of the Voting Ballot. The outcomes are defined only briefly here; a more complete level of detail, if deemed valuable, is reserved for a possible future follow-up post from David Reinstein.

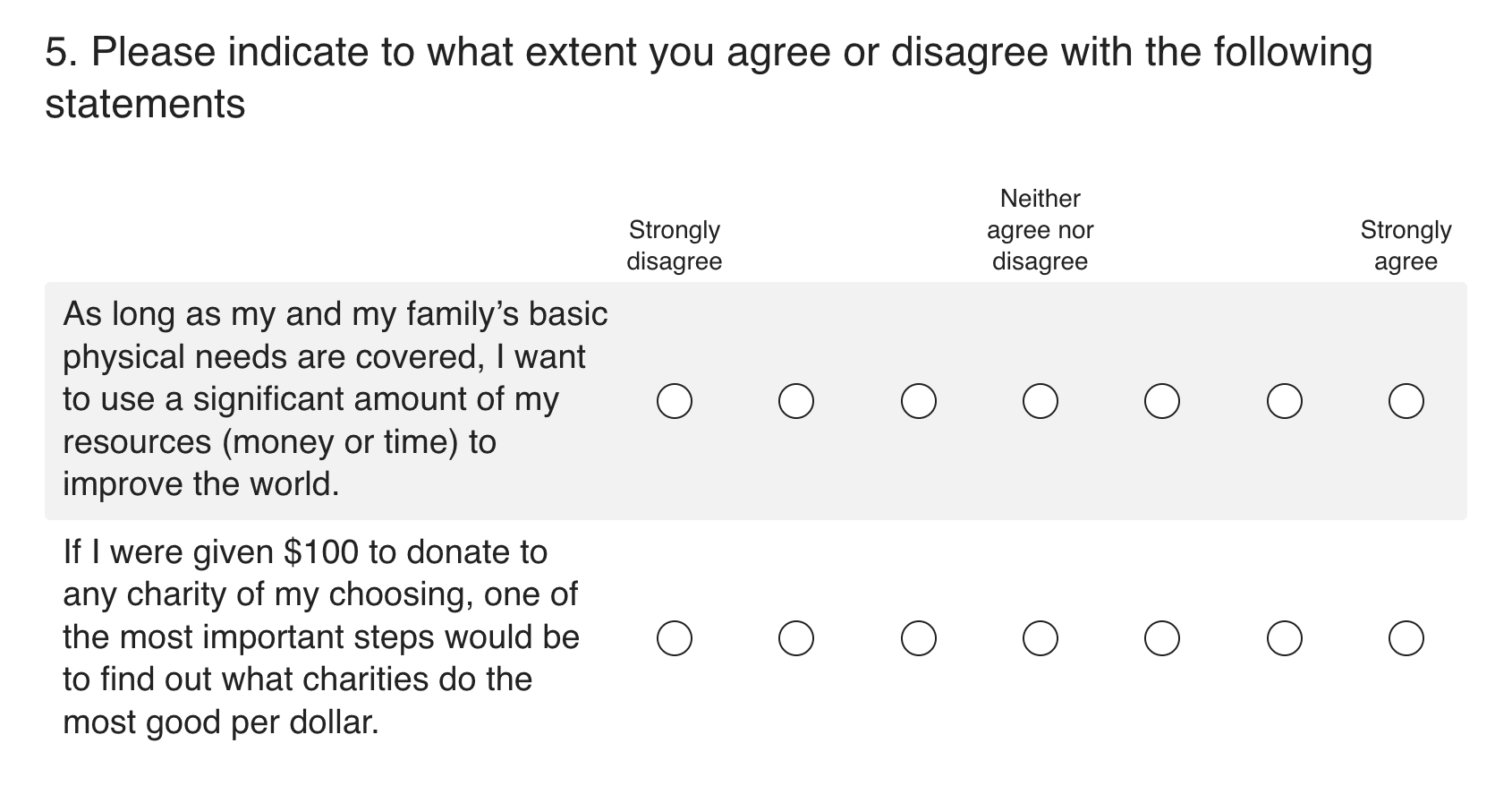

Aspects of the GWWC mission, derived from the proto-EA subscales

One item from each of two subscales in Dr Lucius Caviola et al.'s research (the expansive altruism subscale and the effectiveness-focus subscale) was revised to be more accessible to high school students and to capture, independently, the 'giving significantly' and 'giving effectively' aspects of the GWWC mission. Students cannot register to vote or vote without submitting a response to each of these items. This outcome was regarded as essential to the programme's mission and as offering a falsifiable hypothesis, but also as subject to agreeability bias: students might respond with more agreement to these items in the Voting Ballot, anticipating that the programme is looking to foster attitudes of giving and giving effectively.

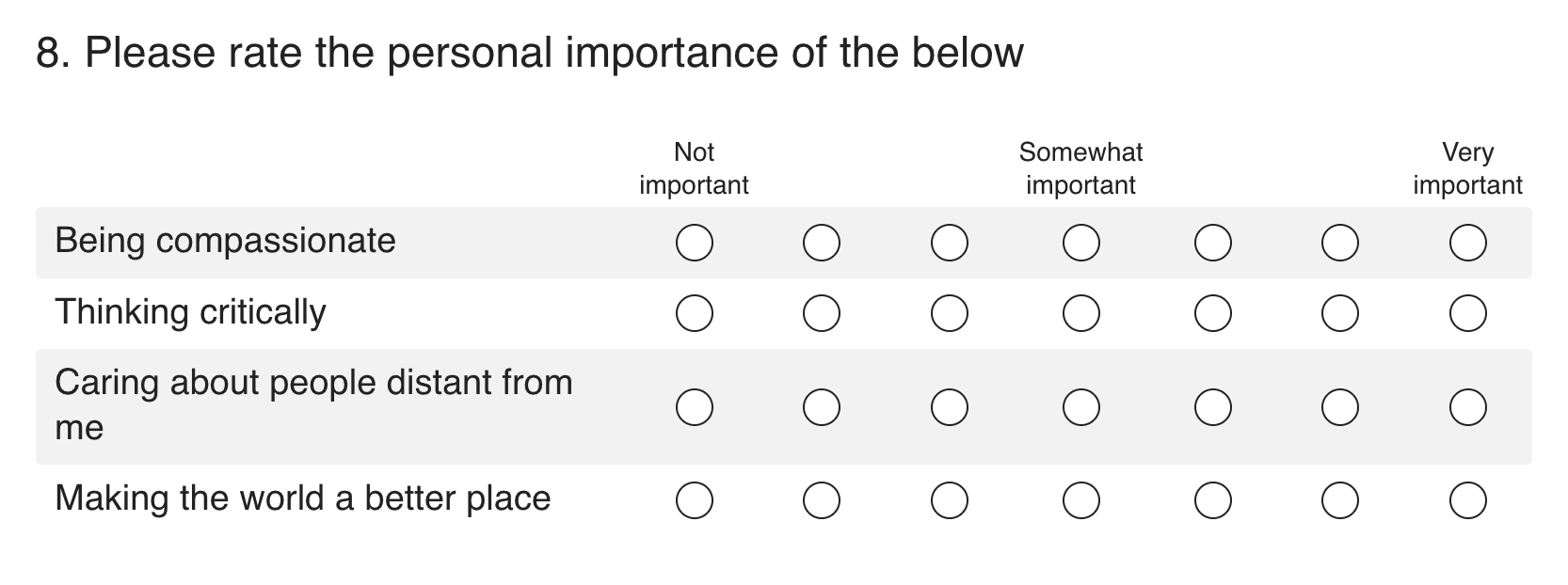

Promoting positive values globally

The GWWC strategy highlights 'promoting positive values globally' as an indirect impact of promoting effective giving. Students cannot register to vote or vote without submitting a response to each of these items. This outcome was regarded as essential to the programme's mission, offering a falsifiable hypothesis, and similarly subject to agreeability bias.

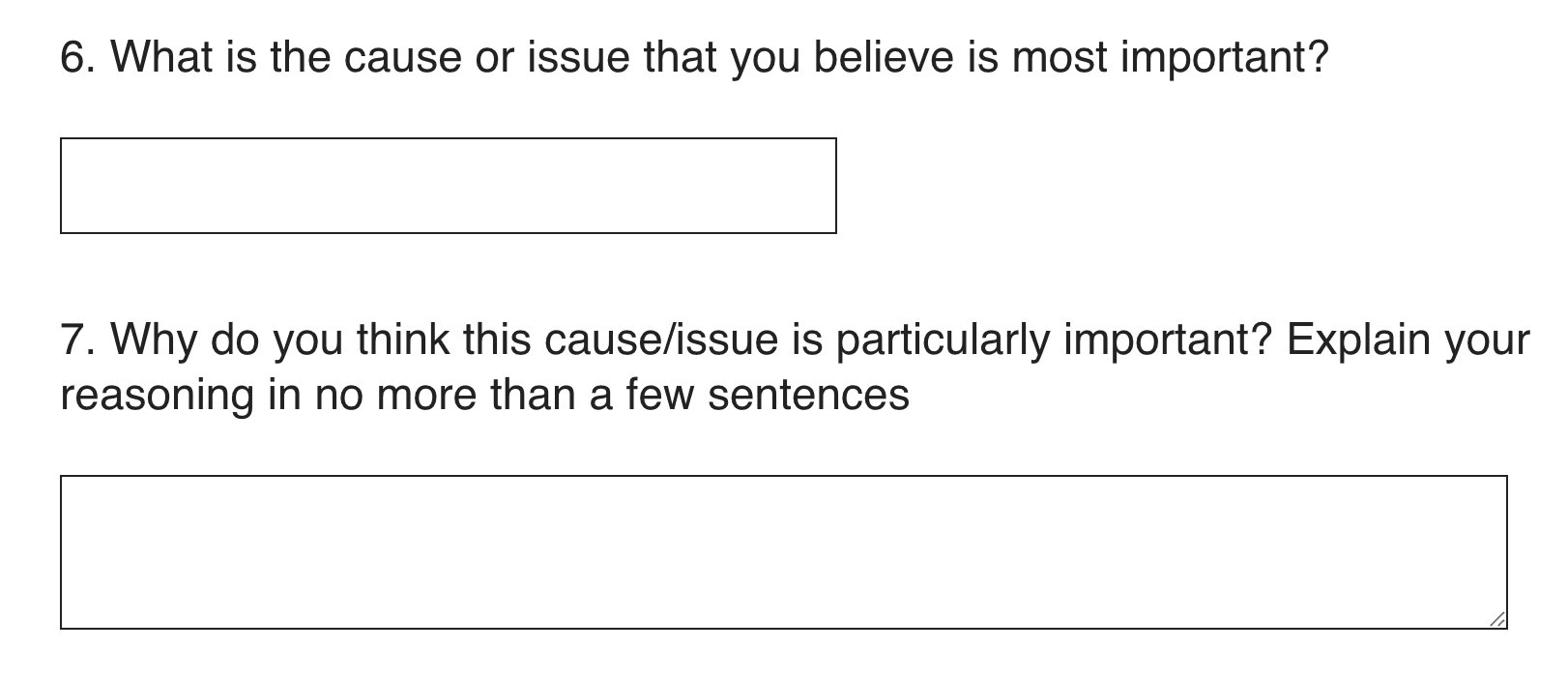

Priority causes/issues

Although this item was originally included in the post-event survey to promote reflection and learning, it was ultimately moved to the Voter Registration Form and Voting Ballot to be incorporated into impact assessment. It seems less subject to agreeability bias than the previous two outcomes, and coding procedures seem to make it possible to examine falsifiably. Note that the Charity Elections programme does not intend to shift which issues students consider most important in any particular direction, but it does intend to enhance their ability to think critically about world issues with expanded moral circles, as reflected in the following codings:

- 'Mainly international or out-group' over 'mainly domestic or in-group'

- 'Poorer country' over 'richer country'

- 'Animal-centred' over 'human-centred'

- 'Farm or wild' animals over 'human companion' animals

- 'Medium- or long-term' over 'nearer-term'

In the preregistration, these coded shifts were regarded as more important than the aspects of the GWWC mission derived from the proto-EA subscales and promoting positive values globally, because they do not seem particularly subject to agreeability bias. However, after some of the schools included in the data analysis had already run charity elections, it was noted that this outcome does not address the core objectives of the programme, which were preregistered as "the extent to which participating students, in general, indicate attitudes consistent with the giving significantly and giving effectively aspects of the GWWC mission." Two concerns were raised: if agreeability bias is not sufficiently accounted for, the data will have limited interpretability; and if the core objectives of the programme are not prioritised, the intended impact of the programme is given a secondary role in impact assessment.[11]
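The codings above could be tallied in several ways. As a minimal Python sketch, assuming each response has already been hand-coded on every dimension as True (the wider-circle option), False (the narrower option) or None (not applicable), the share of wider-circle codings can be compared between the Voter Registration Form and the Voting Ballot. The dimension keys, the aggregate (unpaired) comparison and the two example responses are all hypothetical; this is not the programme's actual coding procedure or data.

```python
# Hypothetical keys for the five codings listed above.
DIMENSIONS = [
    "international_or_out_group",
    "poorer_country",
    "animal_centred",
    "farm_or_wild_animals",
    "medium_or_long_term",
]


def wider_share(responses, dimension):
    """Share of applicable responses coded True (wider moral circle) on one dimension.

    Returns None if no response is applicable to the dimension.
    """
    applicable = [r[dimension] for r in responses if r.get(dimension) is not None]
    if not applicable:
        return None
    return sum(applicable) / len(applicable)


def coded_shifts(registration, ballot):
    """Change in wider-circle share from registration to ballot, per dimension."""
    shifts = {}
    for dim in DIMENSIONS:
        before = wider_share(registration, dim)
        after = wider_share(ballot, dim)
        shifts[dim] = None if before is None or after is None else after - before
    return shifts


# Two made-up students' coded answers, before and after the election.
registration = [
    {"international_or_out_group": False, "poorer_country": None, "animal_centred": False,
     "farm_or_wild_animals": None, "medium_or_long_term": False},
    {"international_or_out_group": True, "poorer_country": True, "animal_centred": False,
     "farm_or_wild_animals": None, "medium_or_long_term": False},
]
ballot = [
    {"international_or_out_group": True, "poorer_country": True, "animal_centred": False,
     "farm_or_wild_animals": None, "medium_or_long_term": False},
    {"international_or_out_group": True, "poorer_country": True, "animal_centred": True,
     "farm_or_wild_animals": True, "medium_or_long_term": True},
]

for dim, shift in coded_shifts(registration, ballot).items():
    print(dim, "n/a" if shift is None else f"{shift:+.2f}")
```

Pairing each student's registration and ballot responses would be a stricter design; the aggregate version simply keeps the sketch short.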

List of the non-prioritised outcomes

The following outcomes were discussed prior to data collection but were not preregistered as particularly significant for the purposes of this approach to impact assessment.

Quantitative outcomes in the post-event survey

The post-event survey refers to the second page of the Voting Ballot, which students are not required to submit and is displayed after students receive a confirmation that they have voted. These items were left out of the required first page of the Voting Ballot to keep the form concise, as teachers had commonly reported that the forms were too long for their students. The Voter Registration Form does not have a second page.

Other quantitative outcomes of interest

Other quantitative outcomes might include the number of students and teachers trained to apply basic EA frameworks (e.g., the research worksheet) and the number of student leaders running the programme, receiving introductory training in effective giving, and earning service leadership certificates.[12] These outcomes were not regarded as particularly significant to this approach to impact assessment, but they might be regarded as reflecting the scale of impact, while the other outcomes reflect the magnitude of effects on the average participating student. This is analogous to giving significantly and giving effectively: the programme seeks to (a) involve significant numbers of students and (b) make the largest possible positive difference for those students.

Impact on thinking about doing good

As part of the post-event survey, students are asked an open-ended question about 'doing good for others.' Some form of thematic analysis could identify the broad takeaways students report, possibly quantifying the percentage of responses consistent with the goals of the programme relative to the percentage indicating that the programme was ineffective.[13]

Other outcomes relevant to impact assessment

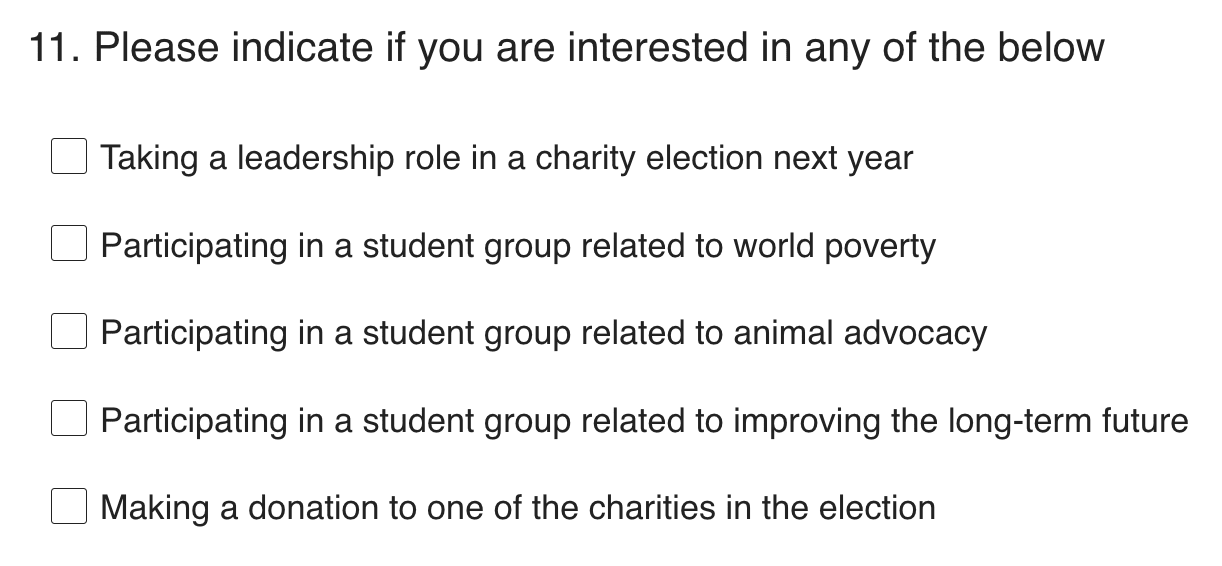

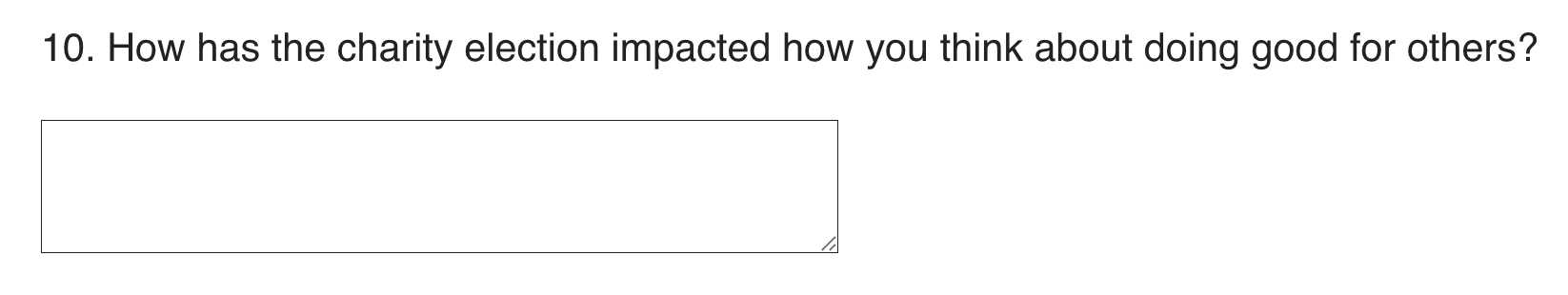

Other outcomes discussed prior to data collection included work produced by student leaders (e.g., outcomes from peer interviews) and results from teacher feedback surveys (e.g., responses to the questions shown above).

Adjustments to data collection

As the initial data were collected and impact assessment was discussed, several adjustments were made to improve the meaningfulness of the data, including:

- The item, 'What do you believe are the most important issues in the world? Please enter three issues at most, in order of importance,' was refined to 'What are the causes and issues that you believe are most important? Please enter three issues at most, in order of importance,' and later to 'What is the cause or issue that you believe is most important?'

  - Purpose: Avoid prompting wide moral circles through the word 'world,' and make the data collected more specific

- The item, 'Caring about people distant from me,' replaced the item, 'Thinking creatively,' which was originally included as an outcome that may be seen as valuable to teachers

  - Purpose: Collect further data on moral circle expansion

- The item, 'Broaden your sense of work/careers in the world,' replaced the item, 'Reflect on important world issues'

  - Purpose: Experiment with including reflections on work/career in the process

- The item, 'How confident do you feel about your ability to change the world for the better?,' was added to the Voter Registration Form and Voting Ballot

  - Purpose: Evaluate whether the programme increases students' confidence to improve the world, which may be relevant data for non-EA stakeholders[14]

- The item, 'Have you ever participated in a charity election before?,' was added to the Voter Registration Form

  - Purpose: Account for effects resulting from schools running charity elections in consecutive years

Proposed conceptualisation of programme evaluation

Put simply, programme evaluation might be conceptualised as weighing the estimated impact of the programme on an individual student against the projected operational cost per participating student. Sponsorship to the highly effective charities on the ballot might be left out of the operational cost because the sponsored funds are already assumed to be given effectively, so there is no better alternative use of those funds against which to count them as a cost.

In a bit more detail, the steps needed to evaluate the programme might include:

- Based on the data analysis and any other relevant information, determine an average set of expectations for how a charity election will affect a school with XYZ participating students

  - List all potential effects worthy of consideration next to the probability of achieving them in a given charity election (e.g., XYZ participating students experiencing a slight increase in attitudes consistent with the 'giving significantly' portion of the GWWC mission, 85%; student leaders starting a post-event student club related to effective giving, 5%; etc.)

- Referencing criteria from the applicable stakeholder, assess the monetary value of each component of that average set of expectations being achieved in a school of XYZ participating students

  - Assign a monetary value to each individual effect (e.g., XYZ participating students experiencing a slight increase in attitudes consistent with the 'giving significantly' portion of the GWWC mission = $5,000 USD of value; student leaders starting a post-event student club related to effective giving = $7,500 USD of value)

- Determine the expected value of running a charity election

  - Multiply the assigned monetary value of each effect by its probability, adding those products together to generate the expected value of a charity election as a whole (e.g., {$5,000 of value * .85} + {$7,500 of value * .05} = $4,250 + $375 = $4,625 of value)

- Determine the expected value of collecting an individual student vote in a charity election

  - Divide the expected value of a charity election as a whole by XYZ, yielding $N.ML (e.g., if XYZ = 300 students, $4,625 of value / 300 student votes = $15.42 of value per student vote)

- Based on the programme's history, determine the cumulative operational cost of generating a student vote in a charity election

  - Divide the cumulative operational cost by the total number of student votes (e.g., $122.7k / 14,627 student votes = $8.39 per student vote)

- Based on projections of the number of schools that can be enrolled in the programme for a set amount of operational budget, estimate the projected operational cost of generating a student vote

  - Adjust the operational cost per student vote based on the relative potential, or lack of potential, of future school enrolment efforts (e.g., if school enrolment efforts are estimated to be half as effective the following year, $8.39 * 2 = $16.78 per student vote)

- Evaluate the programme by comparing the expected value of collecting an individual student vote to the projected operational cost of generating a student vote

  - Consider funding the programme if the expected value per student vote (e.g., $15.42) is greater than the projected operational cost per student vote (e.g., $16.78; so based on this oversimplified example, do not fund the programme)
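The steps above can be sketched in Python using the post's own illustrative figures. The `Effect` structure, the function names and the `enrolment_efficiency` parameter are hypothetical; a school of 300 voting students is assumed because that is the cohort size at which $4,625 of value works out to about $15.42 per vote. The probabilities and dollar values are the oversimplified examples from the list, not estimates.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Effect:
    """One possible effect of a single charity election (illustrative values)."""
    name: str
    value_usd: float    # assigned monetary value if the effect occurs
    probability: float  # chance the effect occurs in one election


def expected_value(effects: list[Effect]) -> float:
    """Expected value of one election: sum of value * probability over all effects."""
    return sum(e.value_usd * e.probability for e in effects)


def evaluate(effects: list[Effect], votes_per_school: int, total_cost_usd: float,
             total_votes: int, enrolment_efficiency: float = 1.0) -> tuple[float, float, bool]:
    """Return (EV per vote, projected cost per vote, whether EV exceeds cost)."""
    ev_per_vote = expected_value(effects) / votes_per_school
    historical_cost_per_vote = total_cost_usd / total_votes
    # Enrolment half as effective (0.5) doubles the cost of each vote.
    projected_cost_per_vote = historical_cost_per_vote / enrolment_efficiency
    return ev_per_vote, projected_cost_per_vote, ev_per_vote > projected_cost_per_vote


effects = [
    Effect("slight increase in 'giving significantly' attitudes", 5_000, 0.85),
    Effect("student leaders start an effective giving club", 7_500, 0.05),
]
ev_vote, cost_vote, fund = evaluate(effects, votes_per_school=300, total_cost_usd=122_700,
                                    total_votes=14_627, enrolment_efficiency=0.5)
print(f"Expected value per election: ${expected_value(effects):,.2f}")
print(f"Expected value per vote: ${ev_vote:.2f}")
print(f"Projected cost per vote: ${cost_vote:.2f}")
print("Consider funding" if fund else "Do not fund")
```

Dividing by `enrolment_efficiency` mirrors the "$8.39 * 2" adjustment; the sketch keeps the historical cost unrounded (about $8.3886), which still gives $16.78 when doubled and rounded.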

Given the limitations to data collection and the consequent difficulty making conclusions about long-term behavioural impact, it is possible that this approach to programme evaluation would involve too many assumptions to be practically meaningful. In any case, it may provide a useful starting point for making decisions about the programme's value in the most objective way feasible.

Reporting programmatic efficacy

Since it is extremely unlikely that the Charity Elections programme will seek funding or resume formal operations in the future, we recognise that the value of anonymising and publishing the original data analysis is limited, while other projects are actionable and could benefit directly from research. If there are other reasons that publishing a fully anonymised, school-wide version of the data analysis would be valuable, we would defer to David on whether to prioritise compiling and communicating that version, along with his views on programme evaluation. By then the programme will have fully closed down, and since its team members are not in a fully objective position, it would likely be better for them not to contribute to writing such a report anyway.

That said, as discussed previously, the data analysis cannot be published immediately because its current form includes more than fully anonymised school-wide results. If it is published later, it will be as a report of programmatic efficacy rather than as research for another purpose. The approved uses of school-wide anonymised data include grant reporting, other reporting of programmatic efficacy, and programme marketing; the data cannot be used for research outside of programme evaluation.[15]

Other reflections

Reasons for closing down indefinitely

As stated at the top of the post, the primary reasons we have decided not to continue operations independently of Giving What We Can (GWWC) after its discontinuation of the programme are: (1) we did not establish a mechanism that reliably enrolled new schools, suggesting the project might stagnate after the end of the initial wave of expansion with GWWC; (2) we believe the remaining project funds would be better off absorbed by GWWC than used to transition the programme to a new fiscal sponsor; and (3) if a school can fundraise $2 per voting student, it now has the potential to run a charity election with zero operational cost and with counterfactual donations to highly effective charities.

Difficulties motivating school enrolment

In some ways, it would be reasonable to consider the programme successful at enrolling schools: relative to the programme's total budget, the cumulative operational cost per student vote was $8.39 USD. From another perspective, though, despite trying a variety of approaches, we did not find a mechanism for reliably motivating additional schools to enrol.

The methods attempted, a suggestion of the amount of resources dedicated to them, and the number of schools known to have enrolled through each are listed below.

| Method | Description of method | Amount of resources (time and/or money) | Number of school events |
| --- | --- | --- | --- |
| Programme outreach | Within EA: / To schools: | Within EA: / To schools: | 15 |
| Misc. referral within EA community | EA groups: / Personal referrals: / Other: | None, though general community involvement and positive association with GWWC contributed to referrals | 8 |
| Run by Charity Elections staff | Schools: | Very high | 3 |
| Unknown | The lead teacher learned of the programme from a student | NA | 1 |
| Consecutive enrolment | Events that were run during a school's second, third, etc. year of participation | Medium-low | 18 |

Resources: Outreach and promotions

For more information on some of the programme's extensive outreach history and activities, feel free to review the links below.

| Resource | Specific takeaways |
| --- | --- |
|  | Did not have strong follow-through from volunteers in calling schools, potentially because calling schools was not a preferred volunteer task; calling schools was relatively effective compared to other methods of outreach per cost and staff hour; encountered logistical limitations, such as the international nature of the team |
|  | Sample promotional language incorporated into fundraisers |
|  | Various posts made to promote the programme within the EA community |
|  | Sample of video ad for student leaders and teachers; small budget; regardless of budget, low evidence of success |
| Promo video | Produced by high school and university student team members and volunteers |

Other initiatives included conference presentations at the Educational Collaborative for International Schools (ECIS) in London, EAGx Berlin, and EAGx Prague; running a sample charity election with the American Library Association; making posts in groups for teachers, school psychologists, and other school staff on Facebook; mass email campaigns using purchased mailing lists of secondary teachers; and outreach to university students in the effective altruism community, including through EA Group Facebook pages, who we hoped would be interested in motivating their former high schools to enrol or in volunteering with us.

Programme status and cost

As an unincorporated nonprofit association, the Charity Elections programme would need to transition from GWWC to a third fiscal sponsor, or become its own nonprofit, to continue operations.[16] We have decided to close down programme operations given that:

- both of these possibilities would require notable work to transition and use funds that could be better absorbed by GWWC for direct impact;

- there seemed to be recent stagnation in enrolling new schools;

- GWWC provides the most natural context for the programme; and

- the curriculum had achieved a point of development at which it had been sufficiently field-tested for schools to run charity elections independently.

Now, if a teacher or other school staff member is interested in running a charity election, they can access the 'how to run a charity election' document, which walks them through the steps to run an event at the individual classroom or whole-school level. Supplemental lesson plans have also been developed to augment the learning in the charity election, and it is possible that Adam will further develop and promote these documents as an independent project. This might be valuable in itself and potentially also motivate teachers to run whole-school charity elections.

Reflections on key programme obstacles

We learned a great deal from our experiences along the way, and several key challenges stood out to us as potentially useful areas of reflection for those interested in similar projects. If you would like to discuss any of these areas with us, feel free to reach out.

Enrolling new schools in the programme

Even though we received guidance from multiple marketing experts, we struggled to translate their advice into successful outcomes, especially given the practical limitations schools face when deciding to take on any new programme. In retrospect, there might have been value in retaining a marketing specialist with deep knowledge of how to reach the education sector, either to lead our marketing efforts fully or at least to play a more central role. Relatedly, though with less certainty, reaching out to influencers who might be interested in promoting the programme at scale might have had a high expected value, but we did not prioritise this approach.

Addressing limitations in teacher and school capacity

The ability, and therefore the willingness, of schools to fit new activities into their curriculum was a primary obstacle to wider uptake of the programme. As discussed elsewhere, teachers and administrators are painfully aware of the tight timetables they work under to meet objectives at the school, district, and even federal levels; it was simply very difficult to persuade schools to open up space for this programme, no matter how universally valuable its objectives.

In an effort to reduce barriers to implementation, the programme was made as simple as possible while preserving the basic core of the curriculum. Additionally, teachers were granted flexibility when they needed to adapt the curriculum or otherwise deviate from the standard model of implementation. There are both benefits and drawbacks to this approach; but regardless of the simplicity and adaptability of the programme, schools were often unable to run it simply because of limitations in teacher and school capacity.

Deciding how to address legal obstacles to data collection

Over two years of legal advice, we learned that collecting data from students can be extremely challenging, especially across multiple countries. This had significant implications for programme evaluation, as we did not find a way to collect longitudinal behavioural data aligned with the core objectives of the programme. As discussed above, the short duration and format of the event were problematic for pre- and post-experience surveys, and we were unable to measure changes in students' attitudes, giving behaviours, and so on in the months and years following the charity election experience.

It is possible that piloting the programme at schools with slightly older students would have enabled us to collect more forms of data. Unfortunately, we were not able to take advantage of one such opportunity (running charity elections in folk schools in the Netherlands, where students tend to be age 18 and up) because of a focus on data protection issues and legal considerations around expanding to new countries. Even if this or another potential project were to fully address legal obstacles, however, it would still be necessary to navigate several notable logistical and financial obstacles, especially if pursuing a randomised controlled trial.

Scaling to additional countries

With considerable effort, we ensured that each iteration of the event obtained appropriate legal permissions in the seven countries where the programme was run. Expansion to other countries was limited by factors including:

- the legal expense to check programme viability

- the perception of legal constraints, especially around differences in child protection regimes

- the different needs of educational institutions in different countries

- limited success in school outreach

Except when we had pro bono legal counsel, the per-country cost of this process was high, and to continue expanding we eventually developed a low-risk adaptation that did not include the student leader portion of the programme. The most noteworthy success in scaling was undoubtedly localising charity elections to Italy, translating the entire programme with the support of the Altruismo Efficace Italia community.

Navigating the EA funding landscape

Through the grant application process, we learned that some potential funders would be interested in a programme like this one serving as a funnel for students, especially those who have been particularly academically successful, to get involved with activities in effective altruism. As a programme goal, this was ultimately determined to be peripheral to our core objectives; the programme seemed better suited to reaching a larger number of students (whole schools, when possible) with concepts around effective giving, to extend moral circles and cultivate a broader culture of giving in a non-exclusive manner. Although charity elections sometimes created opportunities for students to get involved with effective altruism, this was difficult to measure and not a core feature of the programme.

Retracting the student mentorship programme

After receiving EAIF funding, one of our first major projects was developing a programme through which student mentors—university students at Georgetown EA—would train and support student leaders running charity elections at high schools throughout the process. While this project seemed to demonstrate high potential in theory, it ultimately was not feasible in practice due to a combination of legal and logistical complications.

Motivating school outreach volunteers

We made concerted and repeated efforts to bring in volunteers from the EA community, with the greatest need being help contacting schools. After we developed a comprehensive process for volunteer training and onboarding, through which volunteers would phone schools to recommend charity elections, several volunteers received training and were prepared to contact schools. Regrettably, the trained volunteers did not ultimately make calls, an activity that may have an extremely high expected value for personable individuals inspired by effective giving. While this was disappointing, volunteers supported the programme's growth and development in many other areas.

Balancing individualised teacher support with standardised workflow

Instead of individually coaching teachers through the process of running a charity election, or on the other hand, relying on teachers to review the written resources without dedicated email support, it probably would have been useful to further streamline the process through a series of video recordings. This might have allowed some teachers who were wary of the time commitment involved in running the Charity Elections programme to participate. The most active teachers running charity elections often found unique ways to involve their school communities, and their successes could be incorporated into the process through a revamped teacher training module. Some possibilities for school community impact were limited by legal obstacles (e.g., collecting student emails) and teacher capacity, but we believe that other opportunities would open up when teachers were motivated to involve their community beyond the core curriculum.

Potential role within the effective giving ecosystem

As one of a small number of programmes in the EA community serving the general high school population, the pre-existing Charity Elections curriculum is already well-positioned to multiply the efforts of teachers involved with effective giving; provide a field-tested experience that students involved with EA can share with teachers and run as student leaders; and direct counterfactual donations to highly effective charities, all while inspiring young people to apply the principles of effective giving in a community-building school event. It is now compatible with supplementary lesson plans that support and extend beyond the baseline curriculum, which teachers can implement at any time, whether for an individual classroom activity or a whole-school event.

If you know (or are) a teacher and end up running a charity election, feel free to drop us a line at greg.charityelections@gmail.com, adam.charityelections@gmail.com, or as a comment on this post.

Click here to get started

- ^

Note that there are plausible arguments for incorporating these potential benefits into programme evaluation. As one example, in Doing Good Better, Dr Will MacAskill uses expected value to suggest that voting is "like donating thousands of dollars to charity." That said, this post will not address potential benefits less traditionally relevant to effective altruism, on the premise that they do not hold sufficient weight to be regarded as significant by stakeholders.

- ^

Shout-out to some of the programme's other most active contributors and volunteers:

🙏 🙏to Alicia Pollard for her contributions to motivating school enrolment and programme development.

🙏 🙏to Hamidah Oderinwale for her work leading early programme promotion efforts (e.g., promo video) and gathering further opportunities for high school students.

🙏 🙏to Kearney Capuano for her contributions to curriculum development, organising university students around the programme, and her TED Talk inspiring student leaders.

🙏 🙏to Jeffrey Mueller for his contributions to curriculum development and input to improving programme efficiency and design.

🙏 🙏to Michael Aird for running the first charity election with the standardised curriculum and providing input on the initial programme model.

🙏 🙏to Ignasi Llobera Trias for implementing several charity elections and sharing ideas for new school resources.

🙏 🙏to Emma Kenzie for implementing several charity elections and providing input on the curriculum.

🙏 🙏to Kevin Dahle for running the first charity election, providing input on the curriculum, and otherwise supporting programme development.

- ^

The curriculum took on different forms over the years, and some versions included reference to frameworks and resources more commonly associated with effective altruism (e.g., discussion around the TED Talk, What are the most important moral problems of our time?).

- ^

The total amount of sponsored funds directed to the highly effective charities on the ballot is not exactly double the number of student votes because the first couple of years of charity elections experimented with different voting methods (e.g., in-person voting with ballot boxes and 'I Voted' stickers) and models of ballot value (e.g., $1–5 USD depending on amount of research time).

- ^

To determine which figure is more relevant to discussions of programme evaluation, it would be useful to compare the average impact of the programme on a participating student via their first charity election experience with the average impact via their second, third, and fourth charity election experiences. This has not been examined empirically. We would imagine that, by reinforcing the frameworks from the prior charity election(s), providing new research and discussion experiences, and serving as a reminder of the event as a whole, a student's second, third, or fourth charity election would have an average impact greater than zero but less than that of a student's first charity election. Assuming this is correct, on average across the programme's years of operations, $8.39 directed to a student's first charity election would have produced more value than $8.39 directed to a student's second, third, or fourth charity election. In other words, since we would expect a student participating in their first charity election (roughly 10,000–11,000 student votes) to derive more value on average than a student participating in their second, third, or fourth charity election (roughly 3,500–4,500 student votes), and since the $8.39 figure was calculated across both groups, we would also expect perhaps $7–8 toward the average student's first charity election to yield the same amount of value as perhaps $10–11 toward the average student's second, third, or fourth charity election.

Furthermore, the approximate range of $11.00–12.50—the estimated total operational cost per unique participating student—would be the appropriate figure for discussions of programme evaluation if there were no benefit at all experienced by students participating in their second, third, and fourth charity elections. This is because that approximate range was calculated by fully excluding students not participating in their first charity election. The more value that students are believed to experience participating in their second, third, and fourth charity election (while assuming it will not exceed the value for students participating in their first charity election), the further below the approximate range of $11.00–12.50, and the closer to $8.39, would be the appropriate figure for comparison.

That said, no data were collected that would allow a meaningful estimate of the differences in value experienced by students across years of participation. Regardless of the actual values across years of participation, the data included in the 2022–23 impact assessment are very similar in composition (3,616 student votes from roughly 2,500–2,900 unique students) to data representing the full population (14,627 student votes from roughly 10,000–11,000 unique students), and the impact assessment considered data without regard to whether a student had already participated in a charity election. Therefore, it seems more appropriate to refer to $8.39, the cumulative operational cost per student vote, in discussions of programme evaluation.
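As a rough arithmetic check on this footnote, the Python sketch below derives the figures from numbers quoted in the post: the $122.7k cumulative operational cost, 14,627 total votes, 3,616 votes in 2022–23, and the rough unique-student ranges. Variable names are illustrative. With those inputs, the cost per unique student comes to roughly $11.15–12.27, inside the approximate $11.00–12.50 range, and votes per unique student overlap (about 1.25–1.45 in the 2022–23 sample versus about 1.33–1.46 overall), which is one way to read "very similar in composition".

```python
# Figures quoted in the post; unique-student counts are its rough ranges.
TOTAL_COST_USD = 122_700
TOTAL_VOTES = 14_627
UNIQUE_STUDENTS = (10_000, 11_000)  # roughly 10-11k unique students overall
SAMPLE_VOTES = 3_616                # 2022-23 impact assessment
SAMPLE_UNIQUE = (2_500, 2_900)      # roughly 2.5-2.9k unique students

cost_per_vote = TOTAL_COST_USD / TOTAL_VOTES

# More unique students means a lower cost per student, so the range ends swap.
cost_per_unique = (TOTAL_COST_USD / UNIQUE_STUDENTS[1], TOTAL_COST_USD / UNIQUE_STUDENTS[0])


def votes_per_student(votes, unique_range):
    """Range of votes cast per unique student, lowest first."""
    low_n, high_n = unique_range
    return (votes / high_n, votes / low_n)


full_ratio = votes_per_student(TOTAL_VOTES, UNIQUE_STUDENTS)
sample_ratio = votes_per_student(SAMPLE_VOTES, SAMPLE_UNIQUE)

print(f"Cost per vote: ${cost_per_vote:.2f}")
print(f"Cost per unique student: ${cost_per_unique[0]:.2f}-{cost_per_unique[1]:.2f}")
print(f"Votes per unique student, full population: {full_ratio[0]:.2f}-{full_ratio[1]:.2f}")
print(f"Votes per unique student, 2022-23 sample: {sample_ratio[0]:.2f}-{sample_ratio[1]:.2f}")
```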

- ^

During each of the other academic years that the Charity Elections programme was incubated by GWWC (2021–22, 2023–24, and 2024–25), the charities on the ballot included at least one charity focused on animal well-being and one fund. The reason an animal well-being charity was not included over the 2022–23 academic year was because as the programme was expanding to new countries, there was a need to ensure that the charities on the ballot were appropriate across countries from a legal perspective; after this was confirmed, the Animal Charity Evaluators (ACE) Recommended Charities Fund was featured on the ballot over the 2023–24 academic year. The reason a fund was not included on the ballot over the 2022–23 academic year was to experiment with a different sort of learning exercise. While comparing the impact of a particularly tangible charity in global health to a fund can promote discussion around risk and opportunity cost, comparison of the former to GiveDirectly seemed to lead to another meaningful form of reflection around the value of a targeted intervention with a predefined use of funds relative to the value of an individual's own sense of what is best for them.

- ^

Students can only submit the Voter Registration Form and Voting Ballot once per browser, which is set up by default to prevent purposeful or unintentional duplicate votes, unless the election coordinator requests that browser limitations are disabled.

- ^

Duplicate voting on the digital version of the Voting Ballot was noticed on a few occasions in very small numbers of students, and it was not possible to determine whether this was purposeful or unintentional. When working in schools and running charity elections with in-person voting in 2018 and 2019, Greg Gianopoulos, the Project Lead of the Charity Elections programme, noticed only one occasion on which the same student submitted multiple ballots. This was presumably because he cared about the cause, but regardless of the motivation it was a behaviour that was misguided and not in the spirit of the event. Ultimately, his teacher initiated a kind and corrective conversation with him about it.

- ^

Nastasi, B. K., & Hitchcock, J. H. (2008). Evaluating quality and effectiveness of population-based services. In B. Doll & J. A. Cummings (Eds.), Transforming school mental health services (pp. 245–276). Bethesda, MD: National Association of School Psychologists.

- ^

We are aware of two conflicts of interest that warrant disclosure:

(1) From the funding that the Charity Elections programme received through its EAIF grant, the first payment of $1,000 USD for David's initial work on the analysis was made in May 2023 to the EAMT team, a fiscally sponsored entity for effective giving research. Given that the EAMT team no longer had organisational status in July 2025, the second (delayed) payment of $1,000 for the remainder of David's work on the analysis was made to his registered nonprofit, The Unjournal. Alternative funding sources were not successfully identified, and the potential conflict of interest was mitigated by sending payments to the entities at which David conducted research rather than to David directly. These issues were discussed with relevant stakeholders, particularly Luke Freeman, who was head of Giving What We Can at the time and had originally recommended working with David, and Peter Hurford, who was working with the EAIF to determine whether the Charity Elections programme would receive a second grant. Ultimately, though Peter had made an effort to make the grant happen, a second grant was not made due to issues with access to funding at the EAIF.

(2) After the initial evaluation had been completed and the lack of continued funding from the EAIF had been confirmed, in June 2023 David and Adam Steinberg, the Communications and Outreach Lead of the Charity Elections programme, discussed working together on The Unjournal, as David continued data analysis for the Charity Elections programme. David had approached Adam to assist on a freelance basis with occasional communications tasks for The Unjournal, and for transparency, this potential conflict of interest was discussed with both Luke and Peter.

Specifically, it was noted that an outside observer might raise the following concerns:

- Adam might favour providing David with work on the Charity Elections programme as a quid pro quo for David providing Adam with continued work on The Unjournal.

- David might interpret data from charity elections in a positive light to secure Adam's support for, or strengthen his commitment to, the project.

We were not able to identify any specific conflicts arising from the Survival and Flourishing Fund's support for both The Unjournal and Effective Ventures, which was the fiscal sponsor of the Charity Elections programme before Giving What We Can became an independent nonprofit.

Ultimately, since the final call on David's involvement in evaluating the Charity Elections programme was Greg Gianopoulos's to make in consultation with the relevant stakeholders, the first consideration was addressed through a commitment to openness about the situation and by keeping Adam fully peripheral to contracting decisions. The second consideration was addressed through a commitment to integrity in keeping work on the two projects fully separate, with transparency throughout the process.

That said, discussions about Adam working with David did not begin until after the preliminary evaluation, so these considerations are only relevant from June 2023 onward. When the considerations were disclosed to Peter, he identified the situation as an important conflict of interest and favoured disclosing it in materials related to evaluations of the programme. He also shared that he trusted Adam and David to operate with minimal bias and did not see the conflict of interest as an obstacle to success.

- ^

Even if moral circle expansion were reasonably operationalised and used to inform analysis of the programme's core objectives rather than as a standalone measure of impact, students’ views of the most important causes/issues might be unlikely to change except to reflect the particular causes/issues encountered through the charities on the ballot. This is because the programme (a) does not present students with issues other than those addressed by the three selected charities and (b) does not emphasise certain issues as more important than others, though it does encourage critical thinking and presents students only with highly effective charities. If students truly are influenced by the causes on the ballot and their moral circles expand accordingly, those moral circles would be expected to expand specifically toward whichever morally distant groups are represented by the charities on the ballot.

- ^

Though student leaders were given training requirements in the event handbook, the student mentorship programme, in which university students from Georgetown EA would have mentored student leaders, was ultimately withdrawn due to a combination of legal and logistical issues.

- ^

Given that the programme aims to cultivate a culture of giving, and of giving effectively, overall rather than necessarily in every participating student, it might be reasonable to examine the ratio of neutral and negative responses (e.g., "Not much") to positive responses (e.g., "It makes me realise how interconnected we are and any positive impacts in one part of the world can echo globally") relative to the number of participating students. For example, if there were 300 participating students who each responded, 0–3 negative responses, and 1 positive response for every 4 neutral responses, ~60 students would have explicitly reported a positive takeaway from the event.
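The arithmetic behind this example can be sketched as follows. This is a minimal illustration, assuming every participating student submitted exactly one response and that the 1:4 positive-to-neutral ratio applies to all non-negative responses; the function name and parameters are hypothetical and not part of the programme's actual analysis.

```python
def estimate_positive_responses(total_students: int, negative: int,
                                positive_ratio: int = 1,
                                neutral_ratio: int = 4) -> float:
    """Estimate how many students reported a positive takeaway.

    Hypothetical helper. Assumes each student gave exactly one response and
    that non-negative responses split positive:neutral in the given ratio.
    """
    # Responses that are either positive or neutral
    non_negative = total_students - negative
    # Share of non-negative responses that are positive (1 of every 5 at 1:4)
    return non_negative * positive_ratio / (positive_ratio + neutral_ratio)


# Bounds of the example: 300 students with 0 to 3 negative responses
for negative in (0, 3):
    print(negative, estimate_positive_responses(300, negative))
```

With 0 negative responses the estimate is exactly 60; with 3 it is 59.4, which is why the example rounds to ~60.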

- ^

This item was not included in data collection until the 2024–25 academic year.

- ^

In 2022, the Charity Elections programme attempted to include surveys for Dr Lucius Caviola's research on psychological traits related to effective altruism in young people and Dr Matti Wilks' research on speciesism in young people. For legal reasons, the parameters around data collection became more complicated than first expected, and a different, more restrictive approach to data collection was deemed legally appropriate. Because that approach introduced ambiguity around ethics regulations (viz. considerations around researchers approaching students vs. students approaching researchers), the surveys were ultimately not included in the curriculum.

- ^

The programme's first fiscal sponsor was Effective Ventures, before GWWC became its own nonprofit and the programme's second fiscal sponsor.