[Subtitle.] And so is prioritarianism

This is a crosspost for Egalitarianism Is False by Bentham's Bulldog, which was originally published on Bentham's Newsletter on 30 March 2026.

1 Introduction

Lots of philosophers endorse a view that values something equalityish. There are two main ways this tends to go:

- Egalitarianism: on this view, equality is an intrinsic good. So a more unequal society is worse even if it has the same amount of total welfare.

- Prioritarianism: on this view, benefitting the worse off is disproportionately important. It’s better if an extra 5 units of welfare are given to someone who is badly off than to someone who is well-off.

I think these views are very likely wrong. But I realized that I have not written about my disagreements with them since I was in high school, when around 20 people read my blog, and my posts were a bizarre firehose of occasional good points and elementary confusions. So it seemed time for a repeat.

Before I explain why I reject these views, let me give a brief clarification concerning what’s at stake in this debate. Those who reject egalitarianism and prioritarianism can think that there usually are practical reasons to prioritize benefitting the worse off. This is because it is usually easier to help the worse off. You can double the yearly income of hundreds of millions of people by giving them a few hundred dollars. You can’t do that if you give it to, say, Elon Musk.

The dispute in philosophy is about whether equality and benefitting the worse off have intrinsic value, not whether they’re often instrumentally good. Similarly, the relevant kind of equality and prioritization is about well-being, which refers to how well-off someone is. So this debate isn’t about whether, say, money should be given to poor people; utilitarians like me can easily accept that it should, because the poor benefit more from money than the rich.

Okay, with that out of the way, here’s why I’m not an egalitarian or prioritarian.

2 Huemer’s argument

Michael Huemer has a nice argument against egalitarianism that proceeds from the following principles:

- If one world is better than another world for everyone who exists in either world, that world is better. E.g. our world would get better if everyone were made better off, or if everyone were made better off and a bunch of other happy people were created.

- If one world has higher average utility, total utility, and more equal or equally equal distribution of utility than another, it is better with respect to utility.

- If A is better than B and B is better than C, then A is better than C.

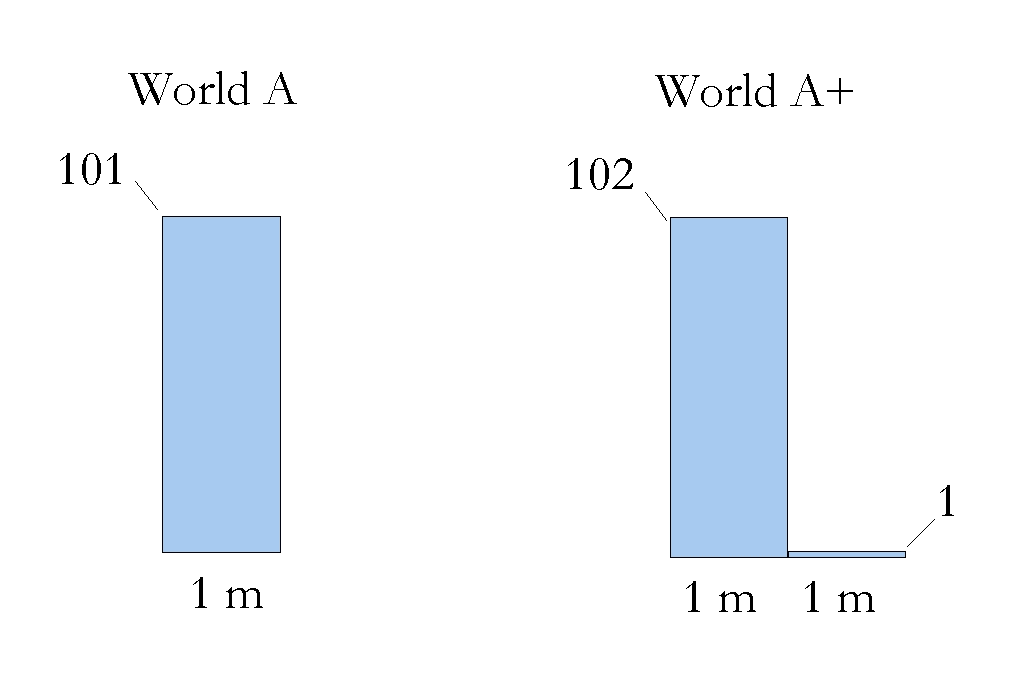

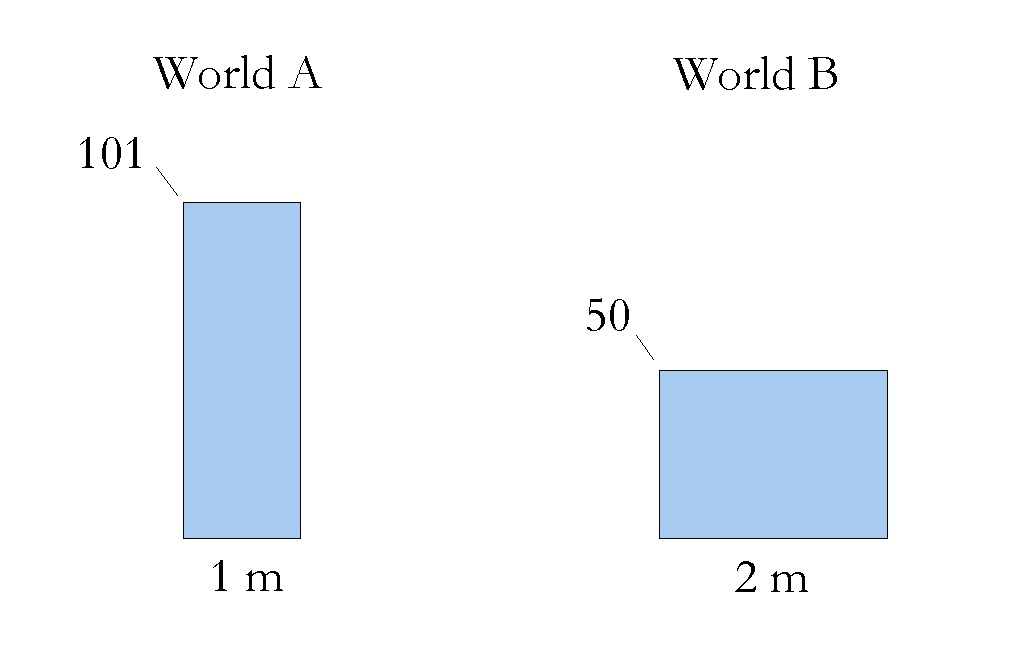

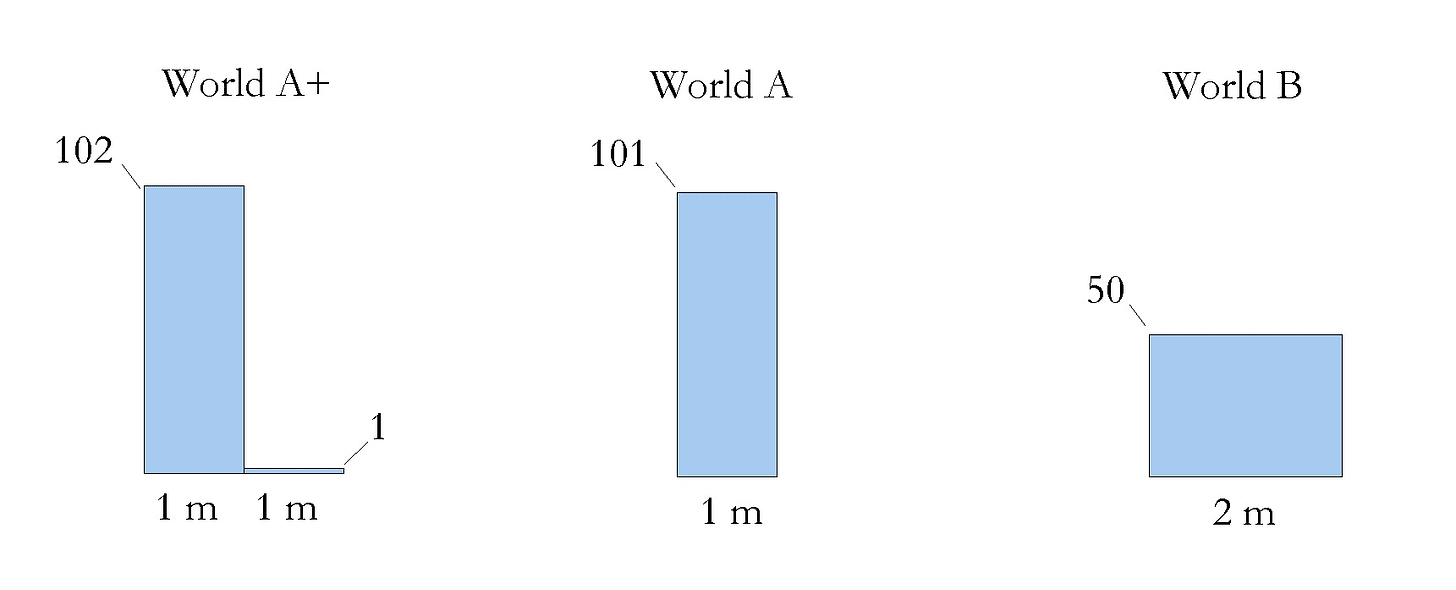

These all seem straightforward enough. But now let’s consider the following worlds (m refers to a million people).

World A+ has all the same people as world A has, plus some extra well-off people. So A+ is better. Now compare:

World A has higher average utility, total utility, and they’re equally equal. So world A is better. But now let’s compare the three worlds.

By transitivity, then, A+ is better than B. This is despite A+ having vastly more inequality and only slightly higher welfare. Thus, egalitarianism and prioritarianism are false.
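To make the structure of the argument concrete, here is a minimal Python sketch using illustrative numbers. The figures (1m people at 100 in A; the same people at 101 plus 1m new people at 1 in A+; 2m people at 49 in B) are hypothetical and not necessarily the ones in the chart, and the crude "gap" inequality measure is likewise an assumption.

```python
# Each world is a list of (welfare level, millions of people) groups.
# These figures are hypothetical, not necessarily those in the chart.
A = [(100, 1)]               # 1m people at 100
A_plus = [(101, 1), (1, 1)]  # the same 1m people at 101, plus 1m new people at 1
B = [(49, 2)]                # 2m people at 49

def total(world):
    return sum(level * count for level, count in world)

def average(world):
    return total(world) / sum(count for _, count in world)

def gap(world):
    """Crude inequality measure: best-off level minus worst-off level."""
    levels = [level for level, _ in world]
    return max(levels) - min(levels)

# Principle 1: A's people do better in A+ (101 > 100), and the people who
# exist only in A+ have positive welfare, so A+ is better than A.
a_plus_beats_a = A_plus[0][0] > A[0][0] and A_plus[1][0] > 0

# Principle 2: A has higher average and total utility than B, and is at
# least as equal, so A is better than B.
a_beats_b = (average(A) > average(B) and total(A) > total(B)
             and gap(A) <= gap(B))

# Principle 3 (transitivity): A+ is better than B.
a_plus_beats_b = a_plus_beats_a and a_beats_b

print(a_plus_beats_b)           # True
print(total(A_plus), total(B))  # 102 98
print(gap(A_plus), gap(B))      # 100 0
```

Any numbers with this shape work the same way: A+ is far less equal than B and beats it only narrowly on total welfare, yet the three principles jointly rank it higher.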

You could give up transitivity, but I don’t think that’s plausible, for reasons I’ve given elsewhere. The other two principles both seem extremely plausible. That egalitarians and prioritarians have to give one of them up seems like a big cost to their views.

Now, you might try to give up the view that world A is worse than world A+. You might think a person coming into existence is only good if their welfare is above some threshold. This view is independently motivated, though I don’t find it plausible at all.

But crucially, this doesn’t help much. In the chart above, just let 1 unit of welfare be slightly above the threshold at which a person is worth creating. The argument still goes through.

Overall, this argument strikes me as very strong.

3 Ex-ante Pareto violations

Another big problem for prioritarianism and egalitarianism is that they violate ex-ante Pareto. Ex-ante Pareto says that if some action is better for everyone in expectation, then it’s better overall. For instance, it’s better for everyone to have an 80% chance of getting some reward than a 20% chance, because they all have better expected prospects.

Here’s the basic idea: both views imply that improving your welfare is more important if you’re at a lower welfare level (for this to be true on egalitarianism, imagine that there are other people at the higher welfare level). But then you will sometimes have reason to take a guaranteed lower payout over a gamble with a higher expected payout.

For example, suppose that there are two options. Option A gives you a 50% chance of 101 utils. Option B gives you a guarantee of 50 utils. Now—and this is very important—we’re stipulating that 100 utils just is the amount of utils for which a 1/2 chance of it leaves you as well off, in expectation, as a guarantee of 50 utils. That is how utils are defined for present purposes.

In this case, the prioritarian cares more about the first 50 utils than the next 51. So they will favor option B, even though it’s worse for you in expectation (50 utils versus 50.5 for option A).
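A quick numerical check of the gamble, as a sketch. It assumes option A yields 0 utils if you lose, and it uses the square root as a stand-in prioritarian weighting function. That choice is hypothetical: prioritarians differ on the exact function, and a weighting that is only mildly concave might not reverse the ranking.

```python
import math

def priority(welfare):
    # Hypothetical prioritarian weighting: square root of welfare level.
    return math.sqrt(welfare)

# Each option is a list of (probability, welfare) outcomes.
option_a = [(0.5, 101), (0.5, 0)]  # 50% chance of 101 utils, else nothing
option_b = [(1.0, 50)]             # 50 utils for sure

def expected_welfare(option):
    return sum(p * w for p, w in option)

def expected_priority(option):
    # Ex-post prioritarian value: weight each outcome, then take the expectation.
    return sum(p * priority(w) for p, w in option)

print(expected_welfare(option_a), expected_welfare(option_b))     # 50.5 50.0
print(expected_priority(option_a) < expected_priority(option_b))  # True
```

Option A is better for you in expectation, but the weighted view ranks option B higher, which is exactly the ex-ante Pareto violation.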

There are worse problems in this vicinity. To see this, imagine that your memory has been erased, so you can’t remember whether you previously had a high welfare level. You think there’s a 55% chance you did and a 45% chance you had a low welfare level. Now you have two options:

- Get a cake if you had a high welfare level previously.

- Get a cake if you had a low welfare level previously.

In this case, provided the gap between the high and low welfare levels is large enough, prioritarianism and egalitarianism imply that you ought to take option 2, for it is better to have a cake if you previously had a low welfare level (on egalitarianism, this requires stipulating the relevant facts about other people’s welfare). But that’s nuts! I should just do the thing that gives me better odds of getting a cake. The marginal goodness of eating a cake doesn’t depend on my welfare before my memory was erased.

Thus, prioritarianism and egalitarianism violate the following constraint:

Higher odds: if there are two actions which both give me some reward at some probability, I should take the one that gives me reward at higher probability, all else equal.
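The cake case can be checked with the same kind of sketch. The welfare levels (100 for high, 1 for low), the size of the cake's benefit (1 unit), and the square-root weighting are all hypothetical assumptions chosen for illustration, with the weight applied to your welfare level including the forgotten past.

```python
import math

def priority(welfare):
    # Hypothetical prioritarian weighting: square root of welfare level.
    return math.sqrt(welfare)

HIGH, LOW = 100.0, 1.0  # hypothetical earlier welfare levels
CAKE = 1.0              # hypothetical welfare gain from eating the cake
P_HIGH = 0.55           # credence that your earlier welfare level was high

# Option 1 pays off only if you were high; option 2 only if you were low.
value_1 = P_HIGH * (priority(HIGH + CAKE) - priority(HIGH))
value_2 = (1 - P_HIGH) * (priority(LOW + CAKE) - priority(LOW))

print(P_HIGH > 1 - P_HIGH)  # True: option 1 gives better odds of cake
print(value_2 > value_1)    # True: the weighting still favors option 2
```

With these numbers the weighted view picks the option with the lower chance of cake, violating Higher odds.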

(Ex-ante prioritarianism doesn’t help with violations of ex-ante Pareto, and it also has other big problems analogous to the ones discussed here.)

There’s another concern in this vicinity: both egalitarianism and prioritarianism imply that, in the right circumstances, the value of your welfare depends on your welfare at previous points. But this doesn’t seem right. Suppose that a billion years in the past, I briefly existed and experienced some welfare. I have no memory of this. It just seems bizarre that this would affect my welfare’s value.

Similarly, they imply that how good it is for me to eat a cake depends on things like how happy I was in dreams I had when I was one year old and have since forgotten. That doesn’t seem right!

It even depends on how well-off I’ll be in the future. On prioritarianism, the value of my experiencing some amount of happiness goes down if I’ll later be well off, for then the experience adds welfare to what is, overall, a very well-off life. But that just seems insane! How good it is for me to spend 10 minutes enjoying a cake doesn’t depend on whether I’ll be happy when I’m 83!
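Here is a hedged sketch of why, assuming (hypothetically) that the prioritarian applies a square-root weighting to a person's lifetime welfare. The numbers are made up; the point is that the same cake is worth less, on this weighting, whenever extra welfare sits anywhere else in the life, whether in a forgotten past or at 83.

```python
import math

def priority(lifetime_welfare):
    # Hypothetical concave weighting applied to a whole life's welfare.
    return math.sqrt(lifetime_welfare)

def value_of_cake(past, future, cake=10.0):
    """Priority-weighted value of enjoying a cake now, given the welfare
    found elsewhere in the same life."""
    rest_of_life = past + future
    return priority(rest_of_life + cake) - priority(rest_of_life)

baseline = value_of_cake(past=20, future=0)
forgotten_past = value_of_cake(past=520, future=0)  # extra past welfare I can't recall
happy_at_83 = value_of_cake(past=20, future=500)    # extra welfare late in life

print(forgotten_past < baseline, happy_at_83 < baseline)  # True True
```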

Now, you can try to patch the view by saying the only thing that matters is your welfare at times you can remember. But that view bizarrely implies you often have reason to erase your memory of enjoyable experiences, so as to make other ones non-instrumentally better. Note: the point wouldn’t be that you could appreciate them more, but just that such experiences would have greater intrinsic value.

It also seems weird that how happy I was in dreams that I can remember would affect how good it is for me to be made better off.

4 Egalitarianism definitely isn’t right

Egalitarianism, remember, is the view that assigns intrinsic value to equality. The objections I’ve given so far apply to both egalitarianism and prioritarianism, but I think egalitarianism is much less plausible than prioritarianism. It is very unlikely to be right.

The first big objection to egalitarianism is that it implies, counterintuitively, that it is in some way good to simply make a person worse off. Suppose that Bob is very well off, and you could break his nose without benefiting anyone. That doesn’t seem good in any way. But on egalitarianism it is good in some way: it reduces inequality. It might not be good all things considered, but it’s at least good in some respect.

This is the objection everyone’s heard of (it is usually called the leveling-down objection), and it strikes me as decisive. Egalitarians generally just bite the bullet. That doesn’t seem plausible to me.
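The arithmetic behind the objection is simple. In this sketch, Alice is a hypothetical worse-off person added for contrast, the welfare numbers are made up, and inequality is measured (as an assumption) by the gap between the best-off and worst-off person.

```python
def gap(welfares):
    # Hypothetical inequality measure: best-off minus worst-off welfare.
    return max(welfares) - min(welfares)

before = {"Bob": 100, "Alice": 20}  # illustrative welfare levels
after = {"Bob": 90, "Alice": 20}    # Bob's nose is broken; nobody gains

nobody_better_off = all(after[name] <= before[name] for name in before)
inequality_falls = gap(after.values()) < gap(before.values())
print(nobody_better_off, inequality_falls)  # True True
```

Any measure that tracks the spread between people will register this as an improvement in one respect, which is what the egalitarian has to accept.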

Here’s a bigger problem: egalitarianism seems to imply that how good your welfare is depends in bizarre ways on other people. For instance, suppose that there are a bunch of nearby sapient air spirits floating about. It doesn’t seem like this should affect how good it is for me to get married or watch a nice movie, assuming they have no causal effects on me.

But egalitarianism violates this constraint. It implies that if the air spirits have high welfare, then my watching the movie is more important, because it reduces inequality. If they have low welfare, then it’s less important. But this surely cannot be correct! By the same lights, it seems to imply that the value of my being happy depends on how well off people are in Egypt, for this will affect whether my happiness boosts inequality.
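A sketch of this, assuming (hypothetically) that inequality is measured by the mean absolute difference between everyone's welfare levels, that I start at 40, and that the movie adds 5 units. The identical boost lowers inequality when the spirits are at 90 and raises it when they are at 10, even though the spirits are causally isolated from me.

```python
def mean_abs_difference(welfares):
    # Hypothetical inequality measure: average |a - b| over all ordered pairs.
    n = len(welfares)
    return sum(abs(a - b) for a in welfares for b in welfares) / (n * n)

def inequality_change(my_welfare, boost, others):
    """Change in measured inequality when my welfare rises by `boost`,
    while everyone else's welfare stays fixed."""
    before = mean_abs_difference([my_welfare] + others)
    after = mean_abs_difference([my_welfare + boost] + others)
    return after - before

happy_spirits = inequality_change(40, 5, [90, 90, 90])
unhappy_spirits = inequality_change(40, 5, [10, 10, 10])
print(happy_spirits < 0 < unhappy_spirits)  # True
```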

Now, maybe egalitarians can say that only equality within a society matters. But this has big problems:

- It just doesn’t seem to capture the egalitarian intuition. If one society was rich and prosperous, and another was desolate and poor, egalitarians would want to say that was a bad thing!

- This would seem to imply that there might be strong reasons to prevent people from immigrating. Suppose, for instance, that some people from outside our society could join it, which would boost their welfare by some small amount. Egalitarianism of this sort might imply that letting them in would be bad, because it would massively increase our society’s inequality (assuming their welfare is much higher or lower than ours). So this view implies that one should sometimes do things that are worse for some people and good for no one.
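To illustrate the immigration worry, here is a sketch with hypothetical numbers and the Gini coefficient as an assumed measure of within-society inequality: an equal society of four citizens at 50, and four outsiders at 10 who would rise to 11 by joining. Admitting them helps the outsiders and, by stipulation, leaves every citizen unaffected, yet measured inequality within the society jumps from zero.

```python
def gini(welfares):
    # Gini coefficient: mean absolute difference over all ordered pairs,
    # divided by twice the mean (assumes positive welfare levels).
    n = len(welfares)
    mean = sum(welfares) / n
    mad = sum(abs(a - b) for a in welfares for b in welfares) / (n * n)
    return mad / (2 * mean)

citizens = [50] * 4            # illustrative: a perfectly equal society
outsiders_excluded = [10] * 4  # outsiders' welfare if kept out
outsiders_admitted = [11] * 4  # a small gain if they join; citizens unaffected

outsiders_gain = all(a > e for a, e in zip(outsiders_admitted, outsiders_excluded))
print(outsiders_gain)                           # True
print(gini(citizens))                           # 0.0
print(gini(citizens + outsiders_admitted) > 0)  # True
```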

Huemer has another clever argument against egalitarianism.

5 Contrary intuitions

What about the intuitiveness of egalitarianism and prioritarianism? Most people intuitively think that a society where half the people are very well-off and half are very badly off is worse than one with lower average welfare but more equality.

To start with, I don’t actually think prioritarianism and egalitarianism are that intuitive when people are clear on what’s being talked about. We don’t really have direct intuitions about units of utility. We have the intuition that a society with less average wealth that’s more equal is better than one with more average wealth but less equality. But that follows straightforwardly from the diminishing marginal utility of money.

So for this reason, I’m a bit dubious about the alleged intuitiveness of prioritarianism and egalitarianism.

Here’s my account of why we have this intuition: nearly all goods in the world have declining marginal utility. Your first banana is very good, your second is medium, and your fiftieth has no value. Your first 100,000 dollars are very valuable, your next 100,000 are far less valuable, and the ones after that diminish even more. From this, we intuitively pick up a pattern: stuff gets less valuable at the margins if you have more of it. We thus come to falsely believe that something similar applies to utility, even though the declining marginal utility of goods already explains the phenomenon in question.
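A small sketch of the point about money, assuming (hypothetically) that welfare is the logarithm of wealth and simply summing welfare with no priority weighting. The more equal distribution has less total wealth but more total welfare, so a plain utilitarian already prefers it.

```python
import math

def welfare(wealth):
    # Hypothetical diminishing-marginal-utility curve: log of wealth.
    return math.log(wealth)

unequal = [1_000, 199_000]  # illustrative: average wealth 100,000
equal = [80_000, 80_000]    # illustrative: average wealth 80,000

total_wealth_lower = sum(equal) < sum(unequal)
total_welfare_higher = sum(map(welfare, equal)) > sum(map(welfare, unequal))
print(total_wealth_lower, total_welfare_higher)  # True True
```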

It would be a bit suspicious if the structure of the moral universe independently mirrored the value of various goods: if goods had diminishing marginal utility and, quite separately, utility itself had exactly the same diminishing marginal value structure.

Another reason to distrust egalitarian intuitions is that they are highly political. They are much more likely to be held by left-wingers than by others. You should distrust intuitions that plausibly arise from trying to justify your political commitments, especially if they are direct intuitions not supported by independent argument, and people on the other side don’t share them.

There’s an additional reason to accept a debunking story of this kind. As I’ve argued, egalitarianism is very unlikely to be right. The only plausible view in the vicinity is prioritarianism. But prioritarians who reject egalitarianism will have to tell some similar story about where our wrongheaded egalitarian intuitions come from (for egalitarianism is similarly intuitive). Whatever explanation they give of those will also probably explain our prioritarian intuitions. Thus, there’s a dilemma:

- Accept egalitarianism despite its associated problems.

- Reject egalitarianism, but then the tools for explaining away our egalitarian intuitions generalize to prioritarianism.

6 Conclusion

Egalitarianism and prioritarianism are somewhat intuitive views. I can see the motivation for them. But the intuitions favoring them aren’t very trustworthy, and they conflict with a number of more plausible principles: separability, ex-ante Pareto, higher odds, and one of Huemer’s principles. For this reason, I’m reasonably confident that egalitarianism and prioritarianism are false.