Summary

Recent work has focused on the conflict between philosophies of altruism emphasizing the next few years (near-termism) and those emphasizing the next few centuries to millennia (long-termism). We propose and describe an alternate philosophy, ultra-near-termism, focusing on the next few hours to days. We explain why adding ultra-near-termism to a “philosophical portfolio” or “moral parliament” addresses deficiencies left by a focus on only near- or long-termism, and speculate on methods for identifying ultra-near-termist causes. Finally, we discuss the “ultra-near-termist hypocrisy problem” - can a moral agent consistently use their next few minutes to investigate and promote ultra-near-termist principles, or are they obligated to use those minutes on direct action supporting ultra-near-termist charities immediately?

Thanks to the many people who consulted with us on earlier versions of this essay. We are especially grateful to the readers who educated us about the reasons that our original name, Center for Ultra-Near-Termism, was offensive. We recognize the importance of inclusivity and will strive to do better in the future.

Long-Termism And Its Critics

William MacAskill provisionally defines long-termism as "the view that positively influencing the longterm future is a key moral priority of our time" (MacAskill 2019). He proposes various paths toward a more final definition, the strongest of which focuses on the statement “Those who live at future times matter just as much, morally, as those who live today”.

This claim implies a discount rate of 0%, which has encountered controversy. Some authors give purely practical arguments against the zero rate, for example in Estimating The Philanthropic Discount Rate (Dickens 2020):

Even if we do not admit any pure time preference, we may still discount the value of future resources for four core reasons:

All resources become useless (I will refer to this as "economic nullification").

We lose access to our own resources.

We continue to have access to our own resources, but do not use them in a way that our present selves would approve of.

The best interventions might become less cost-effective over time as they get more heavily funded, or might become more cost-effective as we learn more about how to do good.

Aside from these, we can also consider a “pure” time preference, in which future lives are inherently less valuable than present ones. Frank Ramsey (Ramsey 1928) has called this practice “ethically indefensible”. Other economists disagree; Robin Hanson has suggested fundamentally discounting the future at the market rate of return (Hanson 2008) on the grounds that other rates produce absurdities in investment decisions, although this argument does not grapple with the possibility of existential risk. The anonymous blogger “Entirely Useless” goes further (entirelyuseless.com 2018), stating that reasoning without discount rates introduces infinities and is necessarily inconsistent.

An entirely different critique is launched by Phil Torres (Torres 2021), who believes that long-termism could be used as an excuse to avoid present problems such as climate change, or that utilitarianism plus long-termism could be a potential justification for atrocities. He writes:

If this sounds appalling, it’s because it is appalling. By reducing morality to an abstract numbers game, and by declaring that what’s most important is fulfilling “our potential” by becoming simulated posthumans among the stars, longtermists not only trivialize past atrocities like WWII (and the Holocaust) but give themselves a “moral excuse” to dismiss or minimize comparable atrocities in the future. This is one reason that I’ve come to see longtermism as an immensely dangerous ideology.

Although Torres does not write about discount rates in his article, in order to avoid the excessive concern over the far future he warns against, we would need to apply some discount rate to keep our charitable spending yoked to the present.

The Argument For Ultra-High Discount Rates

Suppose we start out agnostic to what the correct discount rate should be. What should our prior be given minimal information?

A naive answer might be 50%; after all, excluding absurd situations where moral value increases with time or where future resources have negative value, the discount rate is bounded by [0, 100]. But 50% in what time interval? Per year? Per day? Per century?

If the discount rate were 50% per century, that would imply a discount rate of about 0.7% per year. If it were 50% per day, that would imply a discount rate of about (100 - 10^-108)% per year.
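The conversions in the preceding paragraph can be checked with a short sketch. It assumes a constant rate compounding geometrically, so the fraction of value retained after n periods is (1 - rate)^n, and it assumes a 365-day year. The function and constant names are illustrative, not taken from any source. Because the value left after a year at 50% per day is far too small to subtract from 1 in floating point, the sketch reports its base-10 logarithm instead.

```python
import math

DAYS_PER_YEAR = 365  # assumption: ignore leap years


def retained_fraction(rate_per_period: float, periods: float) -> float:
    """Fraction of value left after `periods` periods at a constant discount rate."""
    return (1.0 - rate_per_period) ** periods


def log10_retained(rate_per_period: float, periods: float) -> float:
    """Base-10 log of the retained fraction, usable when the fraction is tiny."""
    return periods * math.log10(1.0 - rate_per_period)


# 50% per century, expressed as an equivalent per-year discount rate.
annual_rate_from_century = 1.0 - retained_fraction(0.5, 1 / 100)

# 50% per day, expressed as the log10 of the value left after one year.
log10_left_after_year = log10_retained(0.5, DAYS_PER_YEAR)

print(f"50% per century is about {annual_rate_from_century:.2%} per year")
print(f"50% per day leaves about 10^{log10_left_after_year:.0f} of the value after a year")
```

Working in logarithms is a design choice for numerical stability only; it does not change the underlying compounding assumption.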

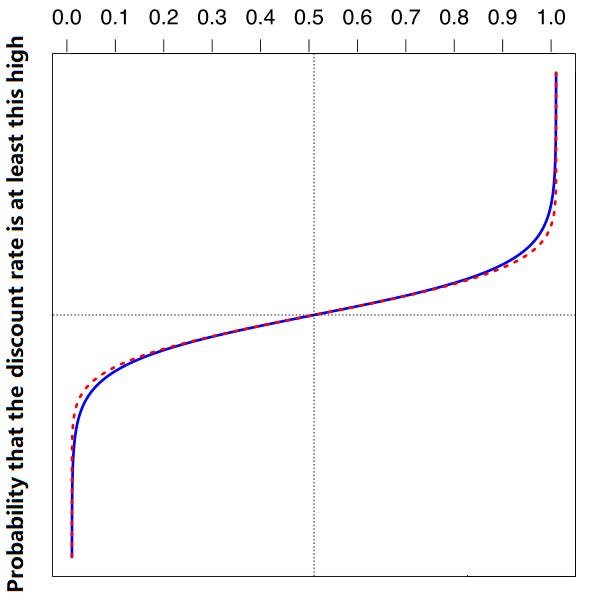

The Frivolous Theorem Of Arithmetic (Steinbach 1990) states that almost all numbers are very, very, very large. As a corollary, we can infer that most discount rates are either very, very, very large, or very, very, very small. So our prior for the discount rate per any given time period should be a logistic sigmoid which rises steeply near zero and one but is almost flat in the middle.

Do we have any evidence to move us away from this prior? Hume (1739) notes that no factual evidence can ever have any bearing on moral questions; this is classically called the “is - ought dichotomy”. This suggests it is unlikely that any evidence should be able to shift us from this prior suggesting either very high or very low discount rates.

How do we choose between extremely low versus extremely high rates? Von Moltke (O’Riordan 2013) proposes the “precautionary principle”: if some course of action carries a threat of extreme harm, we should lean away from that course. Given Torres’ argument that long-termism is “immensely dangerous”, this suggests a bias away from the extremely low discount rates (and thus in favor of the extremely high ones).

In practice, we do not believe decision-makers need to make an “either-or” choice on this question. Long-termism already has an immense inflow of resources and expertise (Lindmark 2022, Pajich 2022). Therefore, on the neglectedness principle (Gunatilaka 2021), donors who are indifferent between long-termist and ultra-near-termist causes should prefer ultra-near-termist causes on the margin.

Intuitions Behind Ultra-Near-Termism

The idea of very high discount rates seems contradictory to common sense. For most people, an intervention delivered several days from now feels substantially similar in utility to an intervention delivered immediately. Daniel Dennett developed the idea of an “intuition pump”, a thought experiment that helps a reader understand the cases in which a position makes sense (Dennett 1995). What are some possible intuition pumps for ultra-near-termism?

Consider questions around personal identity; i.e., is the “you” that wakes up tomorrow morning the same “you” who went to sleep at night, or does the discontinuity in consciousness lead to a discontinuity in identity? Insofar as the answer to that question is uncertain, interventions that affect donors and recipients days in the future could be considered morally equivalent to interventions that affect future generations.

Another such experiment involves the Boltzmann brain, a consciousness that forms serendipitously from the random collision of atoms in a primordial gas cloud (Aguirre, 2019). By some calculations, the majority of consciousnesses may be in such a situation (Carroll 2017). In such a scenario, moral value would only be possible in the few moments before the observer or the observable universe faded back into the void.

The simulation hypothesis could be considered a more palatable equivalent of the Boltzmann brain scenario. Simulators might be running the universe to answer some specific short-term question, such as “what will individual I do in situation S?” In this case, all achievable moral value would take place in the short period before simulators determined the answer to their question and “unplugged” the simulation.

Foundations Of Ultra-Near-Termism

The arguments above do not suggest a particular discount rate, and in fact suggest that whatever discount rate you choose, the real discount rate is very likely higher. Nevertheless, we recommend that ultra-near-termists assume a discount rate of 80% per day on pragmatic considerations: if the discount rate is higher, most moral actions become impossible. By the time an agent decides to act, the vast majority of value in the universe is already gone.

This discount rate cannot be overwhelmed by the potentially astronomical number of our descendants. A future galactic-supercluster-spanning civilization might include 10^38 individuals (Bostrom, 2003). However, at a discount rate of 80% per day, all inhabitants of this galactic civilization, two months from now, are worth less than a single individual today.
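The claim above can be checked with a minimal sketch, assuming the 80% daily rate compounds geometrically and that every future individual is weighted identically. The names `break_even_days` and `log10_total_weight` are hypothetical helpers introduced only for this illustration. Under those assumptions, a population of 10^38 drops below the weight of one present individual after 55 days, so "two months" leaves a comfortable margin.

```python
import math

DAILY_DISCOUNT = 0.80   # rate recommended in the text
POPULATION_LOG10 = 38   # 10^38 individuals, the figure cited from Bostrom (2003)


def log10_total_weight(population_log10: float, days: float,
                       daily_discount: float = DAILY_DISCOUNT) -> float:
    """log10 of (population size * per-person weight) after `days` of discounting."""
    return population_log10 + days * math.log10(1.0 - daily_discount)


def break_even_days(population_log10: float,
                    daily_discount: float = DAILY_DISCOUNT) -> int:
    """Smallest whole number of days after which the whole population
    weighs strictly less than one individual today."""
    log10_loss_per_day = -math.log10(1.0 - daily_discount)
    return math.floor(population_log10 / log10_loss_per_day) + 1


days_needed = break_even_days(POPULATION_LOG10)
weight_at_two_months = log10_total_weight(POPULATION_LOG10, 60)

print(f"Break-even after {days_needed} days")
print(f"After 60 days the civilization weighs about 10^{weight_at_two_months:.1f} present individuals")
```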

Thus, we define ultra-near-termism as a philosophy of charity which focuses on the welfare of individuals within one day of the current moment. That is, a successful ultra-near-termist charity will produce its positive effects on the world within one day of the donor electing to send them the money.

A successful ultra-near-termist charity will need to address three forms of speed in order to translate a donation into ultra-near-term action:

Transfer speed: The money must go from the donor’s account to the charity’s account as quickly as possible, without being subject to bank and credit card delays.

Administrative speed: The charity must notice the donation and take action (such as giving it to a recipient) as quickly as possible.

Consumption speed: The recipient must gain utility from receiving the donation as quickly as possible.

Consider a charity C which puts little effort into improving these domains. It takes one day for the donor’s funds to transfer, one more day for an administrator to allocate the funds to recipients, and a third day for the recipient to gain utility from the funds. This means it will take three days for the donor’s money to have any effect. At a discount rate of 80% per day, this means the funds will be worth only 0.8% as much as when they were donated!
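The arithmetic for charity C can be sketched as follows, assuming the delays from the three speed criteria simply add up and that value decays geometrically at 80% per day. The function name and the one-hour figures for the faster charity are hypothetical, chosen only to show how sensitive the result is to each stage.

```python
DAILY_DISCOUNT = 0.80  # rate recommended in the text


def remaining_value(transfer_days: float, admin_days: float, consumption_days: float,
                    daily_discount: float = DAILY_DISCOUNT) -> float:
    """Fraction of a donation's moral value left once all three delays have elapsed."""
    total_days = transfer_days + admin_days + consumption_days
    return (1.0 - daily_discount) ** total_days


# Charity C from the text: one day for each of the three stages.
charity_c = remaining_value(1, 1, 1)

# Hypothetical faster charity: instant transfer, one hour each for
# administration and consumption (illustrative numbers, not from the text).
fast_charity = remaining_value(0, 1 / 24, 1 / 24)

print(f"Charity C retains {charity_c:.1%} of the donation's value")
print(f"The faster charity retains {fast_charity:.1%}")
```

Summing the delays treats the stages as strictly sequential; a charity that overlaps them would need a different model.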

In addition to these three domains, ultra-near-termists cannot ignore the basic principles of all effective altruism: importance, tractability, and neglectedness. Balancing the speed and effectiveness imperatives is a unique challenge for ultra-near-termism in particular, for which there is still no adequate answer.

The Search For Ultra-Near-Termist Charities

Existing charities generally fail at simultaneously satisfying the three speed criteria and three effectiveness criteria listed above. Within this generally pessimistic picture, FOUNT has identified the following as potential ultra-near-termist cause areas:

Sample UNT global poverty intervention: promising people large sums of money. For example, a donor could send an email to all of their friends, saying that the recipient is their best friend and they would like to give them a gift of [the largest amount the recipient would believe]. This will make the recipients very happy in the ultra-near-term, while the inevitable pain of learning that the promise will not be kept can be delayed until the future, when moral value will be much less.

Sample UNT health intervention: claiming to have cured serious disease. A donor could buy a lab coat, go into a hospital, and tell terminal patients that their latest test results indicated they had been miraculously cured. Again, the recipients will be very happy in the ultra-near-term, in exchange for crushing disappointment in a future which will have much less moral value.

Sample UNT health intervention: distributing euphoria-producing drugs. Euphoria-producing drugs like heroin and oxycodone present a fast and powerful option for increasing people’s utility. While many of these drugs later produce negative effects such as addiction and health issues, these generally happen long after the first day of taking them, suggesting that they are a net positive from the ultra-near-termist point of view. The main obstacle to ultra-near-termist distribution of these substances is access; they usually take more than one day to obtain. We suggest that donors who already have access to these substances distribute them immediately. Other donors’ comparative advantage may be the ability to get these substances (for example, from a prescriber or illicit seller) within a day, in which case this remains a strong option. This route to impact will not be a good fit for donors who cannot quickly access euphoria-producing medications.

Sample UNT animal welfare intervention: petting dogs. Most animals have no clear ability to form expectations about the future (Clayton 2003). This precludes deception-based strategies, such as the first two interventions above, for animal welfare. FOUNT suggests petting dogs. This can be done very quickly, especially for urban residents with close access to a nearby dog park, and seems to make the dogs very happy. We are currently investigating other potential animal interventions, like giving bread to ducks.

Sample UNT AI intervention: prank calls to AI researchers. We reject the idea that artificial intelligence is necessarily a long-termist issue. If unfriendly AI were to be developed in the next 24 hours, it would have devastating effects on the ultra-near-term future. One promising prevention strategy is to make long, meandering phone calls to top AI researchers, wasting their time and preventing them from getting any work done today. FOUNT is currently building a team to pursue our current AI research agenda (figuring out Demis Hassabis' phone number).

We recognize this list is inadequate, and are holding the Ultra-Near-Term Charity Ideas Contest in order to expand it. If you can think of another ultra-near-term charity worthy of inclusion on our list, please post it as a comment. Prizes will be offered (but not given) to the best ideas posted before the deadline, thirty minutes from now.

The Hypocrisy Objection To Ultra-Near-Term Capacity-Building

Ultra-near-termists are vulnerable to a hypocrisy argument: by their own values, it should be futile - indeed, counterproductive - to promote, explain, or otherwise movement-build ultra-near-termism. After all, movement-building is necessarily about investing present resources to improve odds of future expansion. But ultra-near-termists hold as a tenet that the future is almost devoid of moral value. Therefore, instead of building an ultra-near-termist movement, they should be donating or otherwise engaging in high-value charitable activities immediately.

We do not believe this argument can be defeated on its own terms. Rather, a successful explanation for ultra-near-termist movement building (such as the creation of FOUNT and the writing of this document) can only be found in the literature around supererogation and the finitude of moral duty. No individual can be expected to act morally at all times. Therefore, moral actors are expected to devote some fraction of their resources towards the greater good, while using others according to their own preference; for example, donating 1% of one’s income (as recommended by 1ForTheWorld) or 10% of one’s income (as recommended by Giving What We Can). Effective altruists generally agree that it is acceptable to give to local causes, personal friends, and other imperfectly efficient recipients as long as this is not the entirety of one’s giving (GiveWell 2020).

By the same argument, ultra-near-termists may, in addition to their ultra-near-termist giving, pursue other projects out of personal interest, and one of those projects may be ultra-near-termist movement building. Ultra-near-termists will naturally be interested in meeting like-minded donors and explaining their worldview, and these activities are not immoral as long as they are combined with robust ultra-near-term giving and direct work.

A more sophisticated version of this argument is: although ultra-near-termists may ethically engage in altruistic work and personal hobbies, they are morally obligated to do the former first, since they will miss their chance at almost all value if they wait. However, at every time t they will still be obligated to (for the next interval) do more altruism before starting their personal hobbies, since the calculus will be the same. Therefore, they can never engage in their hobbies at all. Although this strategy would cause burnout, the burnout would happen in the future, after almost all moral value was gone, and therefore be irrelevant to the analysis.

We agree with this formulation. One of our founders pursued direct charitable action for sixty-two hours straight, not pausing to eat or sleep, until he finally had a mental breakdown. His psychiatrist then banned him from participating in direct charity further, at which point he founded FOUNT.

Conclusion: Ultra-Near-Termism Is Literally An Idea Whose Time Has Come

We have shown that ultra-near-termism is a philosophically coherent, morally desirable, and practically achievable idea. We believe it is valuable as a moral strategy both in its own right, and as a hedge for long-termist action in a “moral portfolio”.

Some will say that ultra-near-termism is pessimistic, implying as it does that there is no time to movement-build, no time to reflect on strategy, and only a brief flurry of activity before an endless empty future essentially devoid of moral value.

We feel otherwise. Ultra-near-termism implies that the present moment is the most important of all, the moment when almost all of the value in the universe can be seized. Ultra-near-termism frees us from regrets about the past and worries about the future in favor of a Zen-like focus on immediate experience and improving the lives of those closest to us. Further research should focus on identifying more promising ultra-near-term causes and how we can best deploy resources to them effectively and quickly.

Footnotes

[1] MacAskill 2019: https://forum.effectivealtruism.org/posts/qZyshHCNkjs3TvSem/longtermism

[2] Dickens 2020: https://forum.effectivealtruism.org/posts/3QhcSxHTz2F7xxXdY/estimating-the-philanthropic-discount-rate

[3] Ramsey 1928: http://piketty.pse.ens.fr/files/Ramsey1928.pdf

[4] Hanson 2008: https://www.overcomingbias.com/2008/01/protecting-acro.html

[5] entirelyuseless.com 2018: https://entirelyuseless.com/2018/12/25/discount-rates/

[6] Rawls 1971: https://www.amazon.com/Theory-Justice-John-Rawls/dp/0674000781

[7] Torres 2021: https://www.currentaffairs.org/2021/07/the-dangerous-ideas-of-longtermism-and-existential-risk

[8] Steinbach 1990: https://mathworld.wolfram.com/FrivolousTheoremofArithmetic.html

[9] Hume 1739: https://www.amazon.com/Treatise-Human-Nature-David-Hume-ebook/dp/B00P6V0IMS

[10] O’Riordan 2013: https://books.google.com/books?id=e4vjoqgeaIMC

[11] Lindmark 2022: https://forum.effectivealtruism.org/posts/fDLmDe8HQq2ueCxk6/ftx-future-fund-and-longtermism

[12] Pajich 2022: https://www.ndtv.com/world-news/elon-musk-igor-kurganov-where-did-elon-musks-5-7-billion-mystery-donation-go-2771003

[13] Gunatilaka 2021: https://thinkinc.org.au/effective-altruism-a-framework-for-prioritising-causes/

[14] Dennett 1995: https://www.nybooks.com/articles/1995/12/21/the-mystery-of-consciousness-an-exchange/

[15] Aguirre 2019: https://www.preposterousuniverse.com/podcast/2019/06/17/episode-51-anthony-aguirre-on-cosmology-zen-entropy-and-information/

[16] Carroll 2017: https://arxiv.org/abs/1702.00850

[17] Bostrom 2003: https://www.nickbostrom.com/astronomical/waste.html

[18] Clayton 2003: https://www.nature.com/articles/nrn1180

[19] GiveWell 2020: https://blog.givewell.org/2020/12/10/staff-members-personal-donations-for-giving-season-2020/

As someone dedicated to ultra near termism, I question the alignment of the author.

This post is suspiciously long and erudite. I am skeptical that this output is consistent with the preferences and abilities (constrained by investment) of an ultra-neartermist.

Is this a good faith effort to garner attention to our cause, or is it an attempt to steer our (minute) resources to other causes?

I’ll now elaborate my concerns, within the necessary constraints:

I think that

or it's funny to write like that if you feel like it. charles raises a fair point that social reactions to a post are far in the future, but they can be worth many times more than the value of the time you invested. that probably makes more sense for posts than comments though

I wonder if an 80% daily discount rate is sufficient in general? For example, I feel this post itself is at least 20x more effective today than it would have been tomorrow.

Ultra near termism doesn't mean stopping AI. The ultra near termist would really really like a FAI FOOM that simulates a billion subjective years of utopia in the next few days.

Of course, they would choose a 0.1% chance of this FAI FOOM now, as opposed to a 100% chance of FAI FOOM in 2 months.

If a FAI with nanotech could make simulations that are X times faster, and Y times nicer than the status quo, you should encourage AI so long as you think it has at least 1 in XY chance of being friendly.

One thing that potentially breaks ultra-near-termism is the possibility of time travel. If you have a consistent discount rate over all time, this implies a goal of taking a block of edumonium back in time to the big bang, with pretty much any other goal having negligible utility in comparison. If you consider the past to have no value, then the goal would be to send a block of edumonium back in time to now.