Our U.S. COVID-19 response was based on a bet

In April 2020, we launched Project 100, a U.S. COVID-19 response, in partnership with Propel and Stand for Children. To date, the program has delivered one-time $1,000 payments to over 178K Americans living in poverty, with the most recent round going out last week.

For the past decade, our core mission has been to reach people living in extreme poverty. While many millions in the United States are in poverty, they’re typically not facing extreme poverty as it is officially defined (living below $1.90/day).

We decided to launch the program given:

- the growing need we saw in the U.S. that could be met with cash

- the number of funders interested in getting cash to people in the U.S. who would not otherwise give internationally

- the opportunity to raise direct giving’s public profile beyond what we could do with our international programs alone

Our bet, based on the second and third points above, was that a justified U.S. cash program would also end up drawing in more funding for people living below the extreme poverty line internationally.

We kept funds & attention going to our global work

We continued to focus on driving attention to our global work and took precautions not to divert funds when setting up Project 100:

- We allocated to American recipients only those funds donated specifically to the U.S. program. No other funding went to the program.

- We tested and tracked whether donors who first gave to the U.S. would be interested in giving internationally with their future gifts

- We maximized press/social media attention on direct giving and ensured the press mentioned our international work wherever possible

Our U.S. work helped make 2021 our best fundraising year yet for international recipients

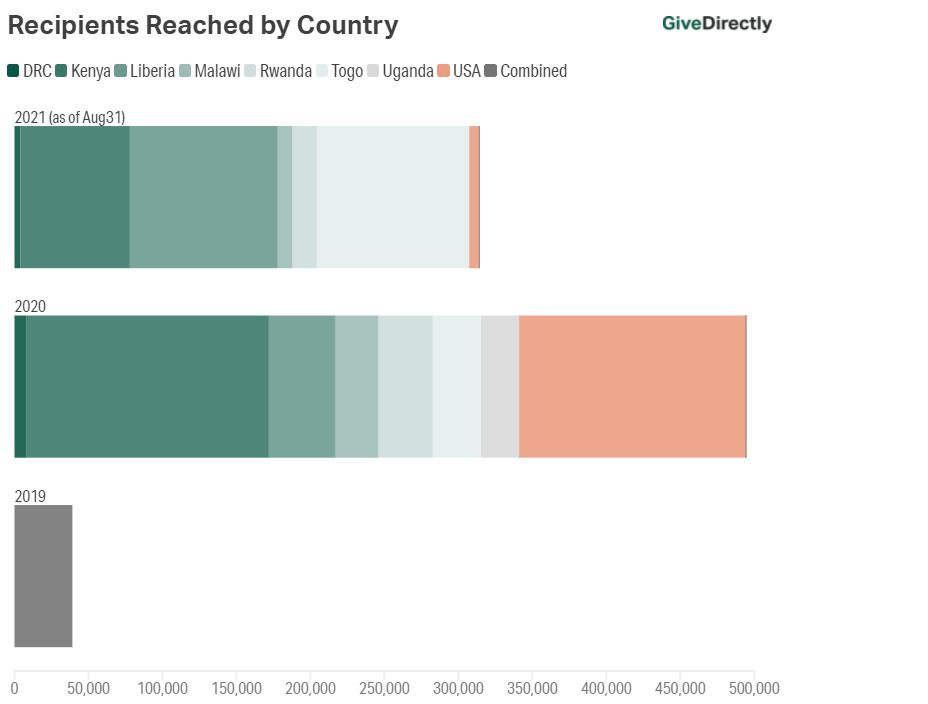

A year and a half on, the results suggest the bet has paid off. This has already been our strongest year raising funds for people living in poverty internationally: $138.8M year to date in 2021, versus totals of $121.4M in 2020 and $47.2M in 2019 for international recipients. Beyond that:

- We’ve driven over $70M to international programs from donors who initially gave to U.S. projects — more than our revenue for any year before 2020

- Our U.S. program had over 100 press mentions, helping to raise direct cash giving’s profile on the national and global stage

- We expect to reach even more international recipients by the end of this year than last.

We will continue to work in the U.S.

We expect there will continue to be a legitimate need in the U.S. that can be met with cash, be it after a catastrophic hurricane or in geographies that are chronically poor and under-resourced. We also know there will always be funders who are more focused on giving within the U.S. (perhaps some immutably, and some not). So, moving forward, we’ll launch U.S. programs that we think could both fill a real need and raise direct giving’s profile to drive more support to international recipients.

This was a bet that we’re glad paid off. We’re proud to have helped 178K Americans living in poverty.

Numbers as of Sept 13th, 2021. To hear more from recipients themselves, check out stories from Project 100 recipients, or GDLive to hear from people we’ve reached internationally.

Thanks for this post! When I learned last year that GiveDirectly was including U.S. recipients I admit my naive reaction was disappointment. It's great to hear it has worked out so well, and also a useful example where I failed to appreciate the complexity of a situation.

Really happy to see that this seems to have worked so well!

Would it be possible to clarify how MacKenzie Scott's donation of $50 million was counted in the above figures and how the numbers change if one were to not include it?

For the EA community: what other usually internationally facing organizations do you think could benefit from trying this approach?

Meh. Not from me. I quit donating to GiveDirectly based on this very change. And your analysis above doesn't control for confounding factors... At a minimum, you should compare your revenue increase with that of the other GiveWell groups where my money went instead.