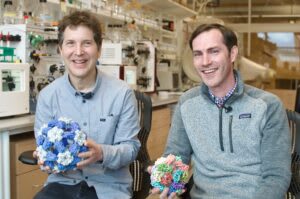

In October, our longtime grantee David Baker won the Nobel Prize in Chemistry for groundbreaking work in protein design. We wanted to take the opportunity to highlight his work and some of its practical applications, such as enabling better vaccines, that we have been supporting Baker and his colleague Neil King in developing. Baker and King have both been vocal about the impact of Open Philanthropy’s funding in enabling their work. We’re proud to have supported both the basic methods development and the potentially high-impact humanitarian applications of computational protein design.

*****

Each year, a global committee of scientists faces the daunting task of predicting which of the thousands of known strains of influenza will be the most prevalent. This challenge, complicated by the virus’s rapid mutation rate and complex circulation patterns, relies on data from 144 national influenza centers in more than 110 countries. The resulting seasonal flu vaccine — developed on the basis of the three or four strains selected by the committee — has widely varying efficacy, ranging from 19% to 60% over the past 14 years.

A “universal flu vaccine” — one that protects against all, or most, influenza strains — would revolutionize flu prevention. Such a vaccine would lower morbidity and mortality rates, streamline vaccine production by eliminating the need for annual reformulation, and help guard against unexpected pandemic flu outbreaks.

In 2016, Neil King, a structural biologist at the University of Washington School of Medicine, began to pursue this ambitious goal using computational protein design, a new method for creating proteins with specific properties and functions made possible by technology pioneered by his colleague David Baker.

Vaccines are substances that prepare the body’s immune system against a specific disease. They often use weakened or inactive parts of a pathogen (like a virus or bacterium) to stimulate the production of antibodies and other cellular changes that create long-term protection.

Traditional vaccine development methods can resemble cooking without a recipe — a process of trial and error. Scientists experiment with various methods to render pathogens harmless or create specific viral proteins, aiming for a safe formula that can stimulate a desired immune response. While often effective, this vaccine creation strategy is unpredictable, time-consuming, and resource-intensive — like throwing spaghetti at a wall and hoping something sticks.

Computational protein design, by contrast, is more like using an advanced cooking simulator. Scientists use sophisticated software programs to design the perfect “recipe”: proteins that mimic parts of the virus or bacteria, carefully crafted to interact with the immune system in specific ways. While no tool to date can simulate the full complexity of an immune system, software can be used to optimize proteins before they are physically produced, allowing for adjustments and fine-tuning prior to real-world testing. Once the design looks promising on the computer, it’s brought into the lab. The result is unprecedented efficiency and efficacy in vaccine development.

Taking calculated bets with venture philanthropy

In 2016, protein design was an emerging field with largely theoretical promise. Computational approaches to protein engineering were not yet mainstream in scientific research, and federal institutions typically favored projects with more established track records. Baker was gaining attention for his invention of Rosetta, a pioneering protein folding software package — work that would eventually earn him the 2024 Nobel Prize in Chemistry — and had secured some early and ongoing support from the Bill & Melinda Gates Foundation for vaccine development. Yet large-scale funding to directly improve Rosetta remained elusive. King, meanwhile, had spent over a year submitting flu vaccine development proposals to governmental grantmakers like the National Institutes of Health (NIH) without success. The innovative nature of their ideas seemed to work against them in the conservative world of federal funding. “What we were proposing was so new and so different that people didn’t quite know what to make of it,” King recalls.

That’s when Open Philanthropy program officers Chris Somerville and Heather Youngs learned about the project at a small gathering convened by the Science Philanthropy Alliance to discuss the need for better influenza vaccines. Somerville and Youngs were open to backing Baker and King’s potentially transformative ideas. As self-described “venture philanthropists,” they embrace taking calculated risks, a practice that aligns with Open Philanthropy’s “hits-based giving” approach. “We have enough experience in science to see that completely new things can have a much bigger impact on progress than incremental things,” Somerville explains. “A large number of incremental discoveries is worthwhile, and that’s what federal agencies typically fund. But step changes can have a much bigger effect.”

Somerville and Youngs, recognizing AI’s advancing contributions to science, especially in the realm of “rational design” — the deliberate creation of biomolecules with specific properties — made a two-part $11 million grant to advance Baker and King’s research.

The first part supported Baker’s work to improve Rosetta’s predictive power and explore new computational strategies; this helped the Baker Lab integrate deep learning into their work, unlocking significant advancements in protein modeling and design.

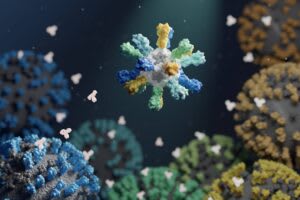

The second part supported King’s multi-seasonal flu vaccine, developed in collaboration with Barney S. Graham and Masaru Kanekiyo at the NIH Vaccine Research Center. Their approach used computational protein design to improve the immune system’s ability to recognize influenza antigens — viral fragments that the body can learn to identify and defend against. Unlike traditional vaccines that often target parts of an antigen that are prone to mutation, King’s strategy focused on stable regions of the virus that remain largely unchanged year after year. This precision was made possible by advanced molecular design techniques, with the goal of creating a vaccine that is effective against multiple strains for several years.

King’s innovative method displays antigens on the surface of specially engineered nanoparticles in a “repetitive array” — a symmetrical pattern resembling the three-dimensional structure of viruses or the two-dimensional arrangement of flower petals. This configuration makes it easier for the immune system to identify the antigens and remember the threat. Remarkably, even when using antigens identical to those found in current vaccines, this presentation method showed superior protection. This suggests that how antigens are presented to the immune system — as well as which antigens are selected — can play a critical role in vaccine efficacy.

While King has not yet created a true “universal flu vaccine” — one that protects against all of the thousands of strains of influenza — his team’s improved flu vaccine, which protects against a wider array of strains at or above the current level of care in preclinical experiments, is currently undergoing Phase I clinical trials in humans. When asked what might have happened in the absence of his grant from Open Philanthropy, King shares that it was “literally life or death for our flu program.”

Nanoparticle technology’s expanding reach

While King’s research on flu vaccines continues, his nanoparticle technology has already found applications beyond influenza.

During the COVID-19 pandemic, King’s lab collaborated with colleagues in the Veesler Lab at the University of Washington School of Medicine to produce a nanoparticle vaccine for SARS-CoV-2. This computer-generated vaccine was found to be more effective than the Oxford/AstraZeneca vaccine Covishield/Vaxzevria, eliciting roughly three times more neutralizing antibodies, even at lower doses. This vaccine, now approved in the United Kingdom and South Korea, marks the first fully approved medicine created using computational protein design.

Building on this success, King’s lab, part of the Institute for Protein Design at UW Medicine, has expanded its collaborative network to tackle other global health challenges. Their current work spans vaccine development for various diseases, with many projects originating from introductions facilitated by Somerville and Youngs. Open Philanthropy has supported these partnerships through several grants, including ongoing projects on influenza, syphilis, hepatitis C, and malaria, aiming to scale promising protein design techniques to address other high-burden diseases.

To decide which diseases to target, King’s lab carefully evaluates potential interventions against three key criteria:

- Impact. Similar to Open Philanthropy’s “importance” criterion for cause selection, King’s team focuses on widespread diseases that affect many millions of people, such as the flu, HIV, and malaria, aiming to make the largest possible difference.

- Technical fit. As King puts it, “being able to do the thing”: having the necessary knowledge and abilities to tackle the challenge at hand. In Open Philanthropy’s terms, this is “tractability”: clear ways to contribute to significant progress.

- Technology development. The lab specializes in developing new technology to address well-defined problems, which can then be shared with the wider scientific community to amplify impact.

Somerville praises King’s work: “Neil is changing the world by developing better vaccines and teaching others how to develop better vaccines. They’re better in several ways: higher efficacy, more predictable outcomes at each stage of development, potentially safer, and potentially less expensive to manufacture.”

In September 2024 — eight years after his original federal flu vaccine proposals were rejected — King received two major grants from the US government via ARPA-H and NIAID to design more new vaccines. With a combined $70 million in research support, he is now leading projects to develop herpes vaccines and pandemic preparedness technologies.

That larger-scale support is a notable change from the early days of King’s research. Reflecting on the shift in the funding landscape, King credits Somerville and Youngs for paving the way for that broader recognition: “Now the federal funders get it, and they’re piling in. But visionaries like Chris and Heather saw that beforehand.”
