Here I summarize each of the 11 parts of Holden Karnofsky's blog series "The Most Important Century" in very short paragraphs. Along the way, I often describe the central theme of a part and state why that piece is significant. I found that listening to the podcast while reading was the best way to get the most out of the series, although the original blog has plenty of other resources and side branches to the author's arguments.

The central idea

The central idea of the blog is the claim that the century we live in may very well be the deciding time for the future of humanity, driven by transformative AI. Across the series, the author forecasts more than a 10% chance that we'll see transformative AI within 15 years (by 2036), a ~50% chance within 40 years (by 2060), and a ~2/3 chance this century (by 2100).
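To make the forecast easier to read, here is a minimal sketch that splits the cumulative probabilities into the chance of arrival in each window. It only does arithmetic on the three numbers quoted above. The "more than 10%" figure is a lower bound, so treating it as exactly 0.10 is my assumption, and the function name is made up for illustration.

```python
# Cumulative forecasts quoted above: (year, P(transformative AI by that year)).
# ">10%" is a lower bound; 0.10 is an assumption used only for illustration.
CUMULATIVE = [(2036, 0.10), (2060, 0.50), (2100, 2 / 3)]


def interval_probabilities(cumulative):
    """Split cumulative probabilities into per-window probabilities."""
    windows = []
    previous_year = "now"
    previous_prob = 0.0
    for year, prob in cumulative:
        # Chance of arrival inside this window = difference of cumulative values.
        windows.append((f"{previous_year} to {year}", prob - previous_prob))
        previous_year, previous_prob = year, prob
    # Whatever probability is left covers "later than the last year, or never".
    windows.append((f"after {previous_year} or never", 1.0 - previous_prob))
    return windows


if __name__ == "__main__":
    for label, p in interval_probabilities(CUMULATIVE):
        print(f"{label}: {p:.1%}")
```

Read this way, the largest single share of probability sits in the 2036 to 2060 window, and roughly a third is left for "after 2100 or never".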

Key Takeaways

All Possible Views About Humanity's Future Are Wild

This section lays out a very rich argument about our possible galaxy-wide expansion. Like a fantasy tale narrated in a humble but stern voice, it puts one possibility in the spotlight by eliminating the less likely ones. What I loved most are the skeptical views, which may read as harsh criticism but are very true to how people present their views while in denial. Even while welcoming the conservative view, Holden makes clear that our galaxy appears to be empty (at least of intelligent life).

The Duplicator

This piece delves into the concept of digital human brains: a duplicator. With the Shadow Clones from Naruto in mind, Holden's argument materialized in my thoughts almost like VR. Sci-fi culture often tackles the concept, and the writer is aware of its themes. In contrast to that fiction, this piece centers its forecasts and skeptical analysis on the economic explosion and the productivity feedback loop that duplicators could reactivate, hopefully bringing some solace to underprivileged economies.

And sure enough, if such a feat is achieved, space expansion would be urgent.

Digital People Would Be An Even Bigger Deal

From digital minds, we get digital people.

This piece discusses in detail the raw concept of intelligent beings existing alongside humans, both as a source of advancement and as a challenge to overcome. The implications of mind uploading are the central theme here, and understandably so when considered in 2022. One part that I loved is the FAQ, especially the entry with a systematic and prolonged argument on how digital people would actually survive and get by on Earth. It covers their laws, liberty, and other aspects of being treated as sentient beings, i.e. people. Apart from productivity, one striking aspect (which I still find hard to believe) is the sheer capability of digital people to improve our (humans') personal lives, possibly by finding answers to the psychological questions humans tend to fail at, given the necessary set-up. The emergence of digital people (if and when possible) will most likely be coupled with their rapid growth, and would further lead us (and them) beyond the bounds of the planet. With energy as their main supply, space expansion becomes ever more likely for self-sufficient digital beings.

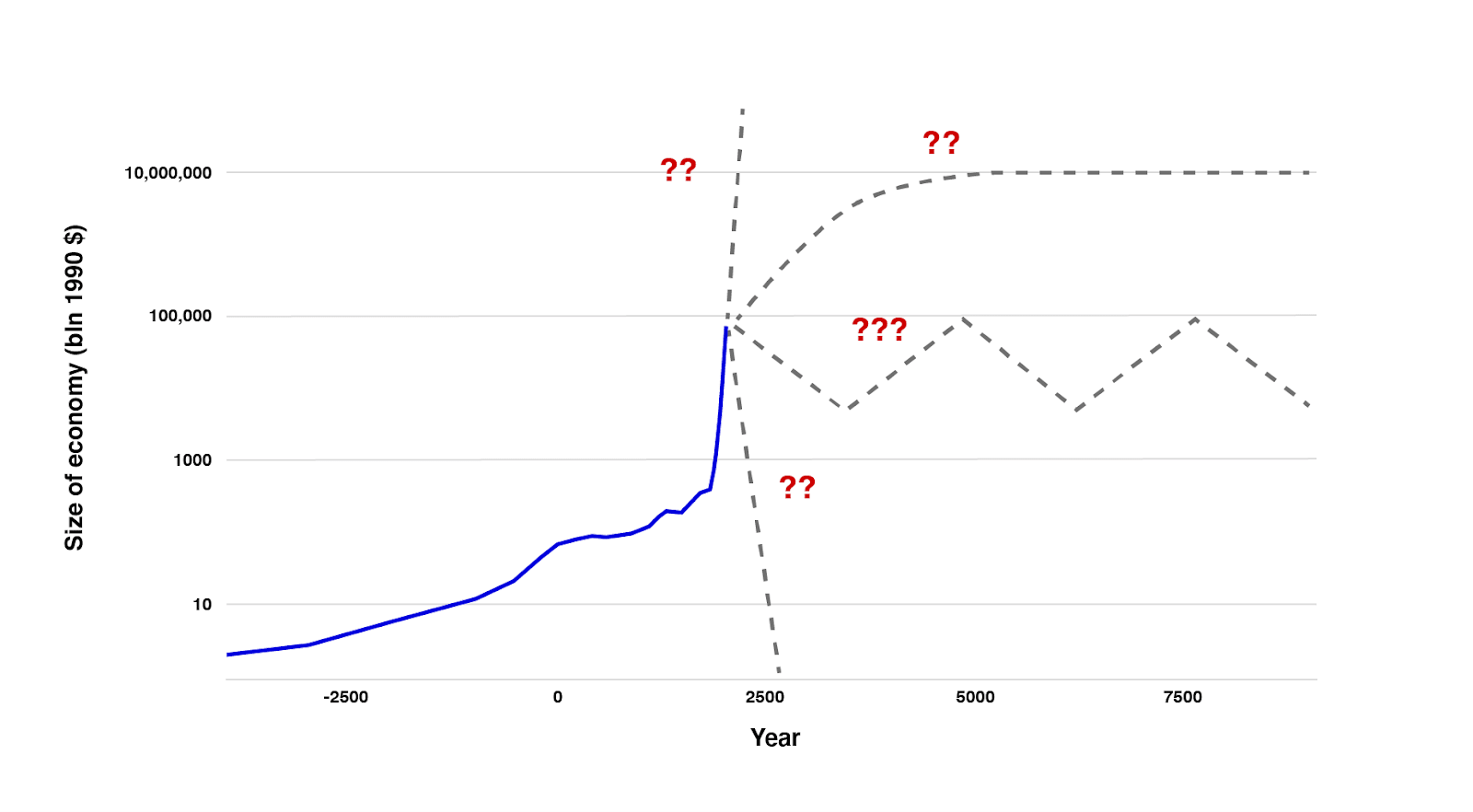

This Can't Go On

This piece focuses on the time we live in, spotlighting the extraordinary rate of economic growth in recent centuries compared with earlier ones. It analyzes three possible near-future cases: stagnation, which is least likely; explosion, which is most likely but depends heavily on future advancements; and collapse, which looks more and more realistic in current human society. The latter two cases would be direct results of scientific advancement.
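To get a feel for why a steady growth rate "can't go on" forever, here is a rough compound-growth sketch. The 2% annual rate and the time horizons are illustrative assumptions of mine, not figures from this summary, and the helper names are hypothetical.

```python
import math

# Assumed steady annual growth rate (illustrative, not taken from this summary).
ANNUAL_GROWTH = 0.02


def doubling_time_years(rate):
    """Years needed for an economy growing at `rate` per year to double."""
    return math.log(2) / math.log(1 + rate)


def log10_growth_factor(rate, years):
    """Base-10 exponent of the total growth factor after `years` of compounding."""
    return years * math.log10(1 + rate)


if __name__ == "__main__":
    print(f"Doubling time: {doubling_time_years(ANNUAL_GROWTH):.1f} years")
    # Horizons chosen only to show how quickly the exponent climbs.
    for years in (100, 1_000, 8_200):
        exponent = log10_growth_factor(ANNUAL_GROWTH, years)
        print(f"After {years} years the economy is about 10^{exponent:.1f} times larger")
```

Working with the base-10 exponent rather than the raw factor keeps the numbers readable, since the factor itself quickly grows past anything that is easy to picture.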

Forecasting Transformative AI: What Kind of AI?

Next comes a collection of 4 pieces, all focusing on transformative AI and on getting closer to finding out when a Process for Automating Scientific and Technological Advancement (PASTA) might be ready. Here, PASTA is narrower than artificial general intelligence. The first piece traces the roots of AI from basic programming to machine learning (ML), with a brief look at how artificial neural networks learn through a long chain of trial and error. PASTA would use ML as a building block, but would later surpass the likes of AlphaZero. In detail, we study the impact of such a process on our growth rate: either a rapid technological boon or a fateful misaligned AI overpowering the outnumbered humans. Such a system is predicted to grow differently with each iteration or multiplication, under varying degrees of human control.

Forecasting Transformative AI: What's The Burden Of Proof?

Next, we look at how and why the evidence behind current forecasts draws mixed (often negative) feedback. Holden walks through various angles and multiple probabilities to address scenarios based on prior studies, claims, analyses, and even unsuccessful theories. Here, we note that the current rate of development and several other trajectories are in line with the development of a PASTA-like system, and that expert opinion on AI will only grow in the coming decades to support, and likely update, this hypothesis.

Forecasting Transformative AI: Are We "Trending Toward" Transformative AI?

Next, we question the hype around AI and try to map it to current technology trends. Here Holden describes how his analogies work and compares the growth toward transformative AI with some of the best-known (and most evident) patterns, such as Covid-19 and greenhouse gas emissions. Subjective extrapolations are key to projecting AI effectiveness in the years to come. Here we correlate AI with varied human capabilities and map the forecasts to existing trends in the scientific space with respect to the cost of development and the size of AI models.

Forecasting Transformative AI: The "Biological Anchors" Method In A Nutshell

Then we explore how various experts predict their timelines for the arrival of transformative AI and what they think it depends on. Here, in line with a survey of researchers (Grace et al. 2017), the probability estimates turn out to be on the same path as Holden's. Another forecast of transformative AI is built with the help of Bio Anchors. This method compares AI processing and development directly with the human brain and estimates an upper limit on the cost and time needed to reach such a scenario. This piece also deals with various critiques of, and takes on, the biological route. Although the Bio Anchors analysis turns out to be fully consistent with the idea of AI scaled to the human brain, most of the feedback received so far shows polar opposite reactions.

AI Timelines: Where The Arguments, And The "Experts," Stand

This piece reflects on the absence of a solid consensus among experts when it comes to predicting something like PASTA. Here, Holden consolidates the other forecasts on the topic and addresses the reasons behind the skepticism while acknowledging the uncertainties. The best part of this piece (and probably of the entire study) is the acknowledgement of Cunningham's law, the idea that the best way to get the right answer is to put forward a wrong one and let others correct it. The writer highlights why such a procedure is key for AI forecasting.

How To Make The Best Of The Most Important Century?

Perhaps the most relatable piece of the series, it argues that we, the people, will decide how the near future is shaped through AI. The writer presents two frames of consideration here, Caution and Competition, with examples of the actions each one calls for.

In the cautionary frame, we discuss everything from the worst possibilities, such as misaligned AI and its adversarial maturity, to the best ones, such as a scenario for better negotiations and governance, along with the idea of reflecting on the strides we are going to make toward major AI goals.

In the competitive frame, we discuss how the scale of transformative AI may lead to an international contest to grab power. Holden also highlights how the current world, with all its faults and glory, tends to lean toward this frame out of social stigma and national insecurities.

In the last part, the writer calls for 'vigilance' rather than 'action', in an attempt to prevent hasty, impulsive moves. Vigilance gives us a head start in identifying when to act to make the most impact.