If you are interested in supporting the development of research projects and researchers in AI safety, the MATS team is currently hiring for Community Manager, Research Manager, and Operations Generalist roles and would love to hear from you. Please apply by Nov 3 to join our team for the Winter 2024-25 Program over the period of Dec 9-Apr 11. Most open roles have the possibility of long-term extension depending on performance and program needs.

Introduction

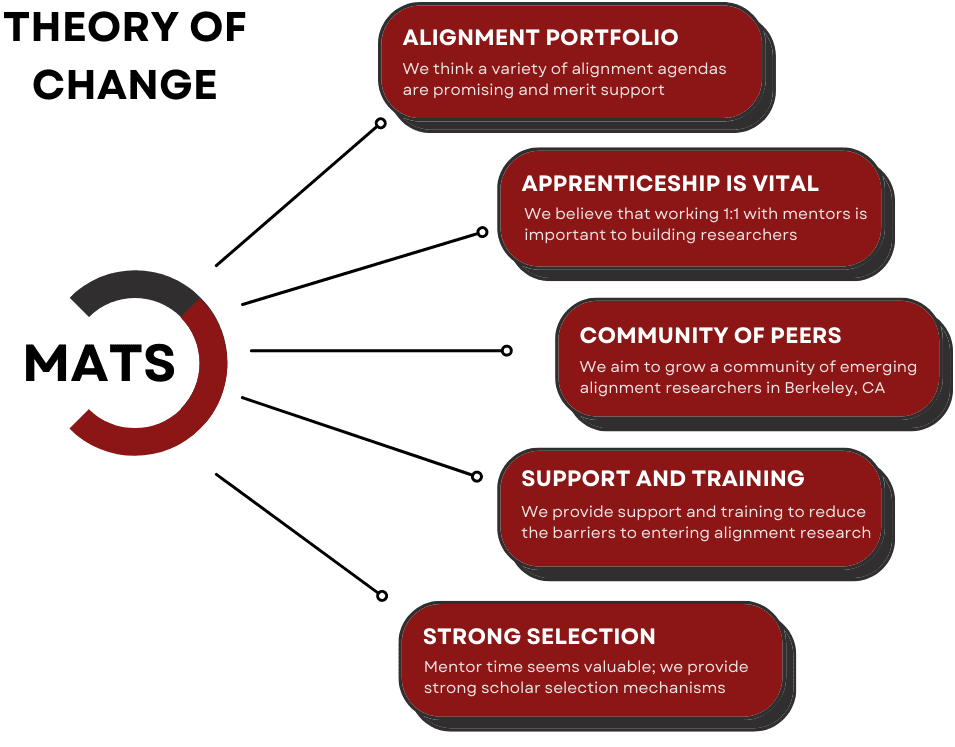

The ML Alignment & Theory Scholars (MATS) program aims to find and train talented individuals for what we see as the world’s most urgent and talent-constrained problem: reducing risks from unaligned artificial intelligence. We believe that ambitious young researchers from a variety of backgrounds have the potential to contribute to the field of alignment research. We aim to provide the mentorship, curriculum, financial support, and community necessary to aid this transition. Please see our theory of change and website for more details.

We are generally looking for candidates who:

- Are excited to work in a fast-paced environment and are comfortable switching responsibilities and projects as the needs of MATS change;

- Want to help the team with high-level strategy;

- Are self-motivated and can take on new responsibilities within MATS over time; and

- Care about what is best for the long-term future, independent of MATS’ interests.

Please apply via this form.

Community Manager

We are expanding the MATS Community Health Team and are looking for an individual with strong interpersonal skills who will work with the Community Management Lead and the rest of the MATS team to support the needs of scholars and improve the MATS community. Ideal candidates are excited about AI safety field-building and enjoy supporting others.

Responsibilities

A Community Manager needs to be proactive and responsive to MATS scholars’ needs and able to enhance scholar experience through personal conversations, mediation, event planning, and documentation of community health concerns. This role reports primarily to the Community Management Lead and to the Executive Team. Specific responsibilities will vary according to applicant experience and interests, and may change depending on program needs. However, you can expect tasks like:

- Meeting with scholars to get to know them and learn about their needs, provide emotional support, talk through scholar challenges, and receive community feedback;

- Coordinating with the Community Management Lead about scholar needs;

- Collaborating with the Research Management and Operations teams, as needed, to support scholars;

- Supporting scholar orientation and offboarding;

- Organizing and supervising socials, networking events, and workshops (for example, hosting board game nights, hikes, 1-on-1 speed networking, remote scholar events, lightning talks, and more);

- Setting up and monitoring systems (e.g., writing surveys and analyzing data) to improve the program based on scholar feedback;

- Staying aware of the cohort’s physical and mental health and connecting scholars with appropriate resources.

We are a small team running a large program, so we expect team members to enthusiastically take on tasks unrelated to their job title. For example, MATS team members have compiled a MATS Alumni Impact Analysis and conducted analyses for internal use and external publication.

Criteria

- US work authorization required;

- Located in or willing to move to Berkeley, CA.

We encourage you to apply even if you are not sure that you sufficiently meet all of the following criteria:

- Experience providing emotional support and mediating conflicts, ideally in a setting similar to MATS (e.g., as a university peer counselor);

- Strong interpersonal skills (e.g., patience);

- High cognitive empathy;

- Knowledge of emotional regulation tools;

- Autonomy and proactivity;

- Comfortable working with private and confidential information;

- Able to function in urgent and/or high-stakes situations, if necessary;

- Some data analysis skills a plus, but not required;

- Ideally, some knowledge of AI safety-specific concerns that sometimes bear on community health (e.g., "doomerism," "infohazards," etc.).

Details

Compensation will be $43-57/h, depending on experience. Must be willing to work from Berkeley, CA. Office space, catered lunches and dinners, and health, dental, and vision insurance are provided for full-time workers. Estimated 40 hours per week from December 9, 2024 to April 11, 2025 (with a one-week break Dec 23-27), potentially continuing beyond this depending on performance and program needs.

Applying

Please apply via this form.

Research Manager

We are looking for resourceful generalists with strong project management, critical thinking, and interpersonal skills to enhance the effectiveness of the MATS program. As a Research Manager, you will help coordinate and support our research scholars and mentors, playing a pivotal role in empowering AI safety researchers.

Overview

This role involves weekly meetings with scholars and their mentors to support research output and scholar development over the program. A great Research Manager is able to quickly familiarize themselves with scholars’ research at a high level and help mentors and scholars achieve program outcomes, including high-impact publications, successful grant applications, and career development. Additionally, you will dedicate up to 15 hours per week to "special projects" such as applicant selection, workshop coordination, and program impact evaluation.

Strong candidates are particularly excited about problem-solving, project coordination, and empowering AI safety researchers. Bonus qualities include: AI safety or machine learning (ML) research or engineering experience, experience and connections within the AI safety ecosystem, experience managing small teams, and pedagogical knowledge or experience. This is a four-month position with the possibility of long-term extension depending on performance and program needs.

Details

Compensation will be $44-62/h, depending on experience. Must be willing to work from Berkeley, CA. Office space, catered lunches and dinners, and health, dental, and vision insurance are provided for full-time workers. Estimated 40 hours per week from December 9, 2024 to April 11, 2025 (with a one-week break Dec 23-27), potentially continuing beyond this depending on performance and program needs. We can also accommodate team members working as few as 30 h/week.

Responsibilities

A Research Manager’s primary role is to help scholars and mentors achieve program goals and to accelerate scholar development through personalized support. This role reports primarily to the Research Management Lead and to the Executive Team for special projects. Specific responsibilities will change depending on cohort and program needs, but you can expect tasks like:

- Meeting with mentors to understand their vision and needs over the program;

- Meeting with scholars to provide 1-1 research support, mentor meeting preparation, accountability, goal planning, and career advice;

- Hosting daily or weekly standup meetings for scholars;

- Performing regular check-ins with scholars by text, phone, video call, or in person;

- Reviewing program deliverables (e.g., research plans, symposium talks, grant applications, papers, blog posts) with scholars and giving feedback;

- Assisting with various aspects of program administration (i.e., “special projects”) where your skills align (e.g., rubrics for milestone deliverables, running workshops, curriculum planning, data analysis, applicant selection, mentor selection);

- Directing scholars towards appropriate resources to help them flourish throughout the MATS program, including referring scholars to the Community Management Lead or Executive Team where appropriate;

- Relaying trends in scholar needs or stress points as well as any specific questions or concerns to the team lead and executive team when appropriate;

- Participating in regular Research Management team meetings and 1-1s with the team lead to check in and troubleshoot any issues holding scholars back.

Criteria

- US work authorization required;

- Must be willing to work from Berkeley, CA.

We encourage you to apply even if you are not sure that you sufficiently meet all of the following criteria:

- Time management and project management skills (e.g., Getting Things Done);

- Ability to solve abstract problems;

- Enjoyment from helping others (e.g., “servant leadership”);

- Familiarity with AI safety-specific concerns (e.g., “doomerism,” “dual-use” research, “infohazards,” etc.);

- Eagerness to continue learning new problem-solving methods and tools (e.g., backchaining, builder/breaker methodology, crux decomposition);

- Well-developed “theory of mind” (people-modeling skills) and cognitive empathy;

- Autonomy and proactivity, especially if/when friction points arise;

- An understanding of discretion, as well as the ability to maintain confidentiality where predefined boundaries exist;

- Bonus: a background in AI safety, interpretability, or governance.

Applying

Please apply via this form.

Operations Generalist

We’re looking for proactive and dedicated operations professionals to help the program run smoothly. As an Operations Generalist at MATS, you’ll support scholars, mentors, and team members by keeping the office running smoothly and fostering community, so that they can better focus on the content of their work.

Details

Compensation will be $36-48/h, depending on experience. Must be willing to work from Berkeley, CA. Office space, catered lunches and dinners, and health, dental, and vision insurance are provided for full-time workers. Estimated 40 hours per week from December 9, 2024 to April 11, 2025 (with a one-week break Dec 23-27), potentially continuing beyond this depending on performance and program needs.

Responsibilities

Operations Generalists at MATS perform a wide variety of duties. For this particular hire, we have in mind:

- Managing office guests

- Interfacing with external parties (like the contractors who help maintain our office space)

- Helping run social events and parties

- Addressing scholar needs, however they may arise

The core task of the role will be addressing office requests from scholars, mentors, and team members to help keep things running smoothly for them (e.g., sourcing equipment or investigating potential maintenance concerns). However, the ideal candidate would be willing to take on any challenge or special project that may appear over the course of the cohort. Depending on experience, you will be expected either to own these projects (with guidance from William, our Operations Lead) or to help William run them.

Criteria

- US work authorization required;

- Must be willing to work from Berkeley, CA.

Applying

Please apply via this form.