Epistemic status: Very uncertain about my estimates of numerical values, but pretty certain that the proposed way of calculating relative x-risk value is useful. I've been thinking about this for a while but wrote it quickly.

Summary

In short:

- We can divide x-risk by how bounded it is into three categories:

  - Human-bounded x-risk kills all humans but leaves at least some life on Earth

  - Earth-bounded x-risk kills all life on Earth but doesn’t spread to other parts of the universe

  - Unbounded x-risk spreads through the universe and sterilizes it of any potential value

- These categories could differ greatly in importance per unit of probability because:

  - Human-bounded x-risk leaves open the possibility of other Earth life becoming intelligent and optimizing the universe

  - Earth-bounded x-risk leaves open the possibility of intelligent life originating on other planets in our light cone and optimizing the universe

  - As the new intelligent life becomes less similar to humans, we lose the ability to predict how well its values would align with ours (or with “objective morality”, if there is such a thing)

- It seems pretty likely that other Earth life would eventually become as intelligent as humans

- This would imply that unbounded risks, like unbounded AI risk or grey goo, have more moral importance per unit of probability than other risks

- I propose a way of calculating the expected value of reducing various x-risks that takes these differences into account, and I estimate that preventing unbounded AI risk is roughly three times as important as preventing other x-risks per unit of probability

Introduction

I think different kinds of x-risk could have very different moral importance per unit of probability, since humans aren’t the only ones with the opportunity to maximize the value of the universe.

Other Earth-based life (like apes that could evolve human-like capabilities after a while, or completely different organisms evolving intelligence after a long while) might also go on to maximize the utility of the universe, and because this life would evolve in ways similar to humans, it might have values similar to ours.

There’s also the possibility that intelligent alien life could evolve and then maximize the value of the universe. However, the probabilities of both of these events are quite hard to estimate, and I’m very confused about the Fermi paradox.

X-risk categorized by boundedness

I propose the division of x-risk into three categories of boundedness:

- Human-bounded x-risk (erases humanity’s potential)

- Earth-bounded x-risk (erases the potential of Earth life)

- Unbounded x-risk (erases potential of all life in our light cone)

Of course, these categories aren’t perfect. A more detailed approach would be to rate each x-risk by its probability of killing all of humanity, its probability of killing different categories of Earth-based life, and its probability of spreading throughout the universe and sterilizing it of potential value.

There’s also a lot of ground between human-bounded and Earth-bounded x-risk: something that killed complex life but left very simple life might allow complex life to arise again after a while. I’ll ignore this for the sake of time.

But I think these three categories are quite useful, as there isn’t much overlap between them.

Just from this categorization, and assuming a positive expected value for a universe optimized by other lifeforms, it is clear that unbounded x-risk is the most important per unit of probability, followed by Earth-bounded x-risk, followed by human-bounded x-risk.

Expected value of a world without humans

Let’s imagine a world where all of humanity dies, maybe through an engineered pandemic.

Two variables are relevant to the way I propose to calculate x-risk importance while taking boundedness into account:

- Expected time for Earth life to become as intelligent as humans are currently

- Expected value of Earth-optimized universe per unit of time

I don’t know much about biology and evolution, but it seems pretty likely to my uneducated brain that, conditional upon other life on Earth staying around for a while, at least some of it would eventually evolve to be similarly intelligent to current-day humans. I’ll put this number at half a billion years as a rough guess.

Given that they would have evolved in a similar environment, maybe there’d be a high probability that they’d become pro-social creatures similar to humans and value happiness in others. I’ll put the expected value of an Earth-optimized universe per unit of time at 0.7 (compared to 1, the value of a human-optimized universe per unit of time, since what we’re looking for is relative importance).

Expected value of a world without Earth life

Let’s imagine a world where all of Earth’s potential is erased, maybe through a gamma-ray burst or a bounded AI catastrophe.

The variables we want to know are:

- Expected time for alien life to become as intelligent as humans are currently

- Expected value of alien-optimized universe per unit of time

The Fermi paradox and the anthropic principle confuse me a lot, but I’ll put an expected waiting time for intelligent alien life at 50 billion years. This is a wild guess.

Because aliens would be much less similar to humans on expectation than other Earth life, I feel much less confident about predicting the expected value of an alien-optimized universe per unit of time. I’ll put this number at 0.2.

Other variables

Some other variables relevant for comparing values of different x-risk categories are:

- Time it takes a civilization to go from human-level intelligence to optimizing the universe. I’ll put this at 0 to make the calculation simpler as it’s not a large quantity on a cosmic timescale.

- Time the universe will be optimizable. I’ll estimate this at 100 billion years as a wild guess.

- Upper bound of value as a function of time. It’s pretty safe (?) to assume that, as there’s less usable energy, it will be harder to optimize the universe. There’s also the fact that as optimization starts later in time, there will be less of the universe that’s reachable. I’ll ignore both of these.

Back of the napkin calculation

Using my numbers, all Earth life dying is much worse than just humanity dying.

For a steady-state universe, the expected value equals the expected value per unit of time multiplied by how long it lasts.

Let's define some terms.

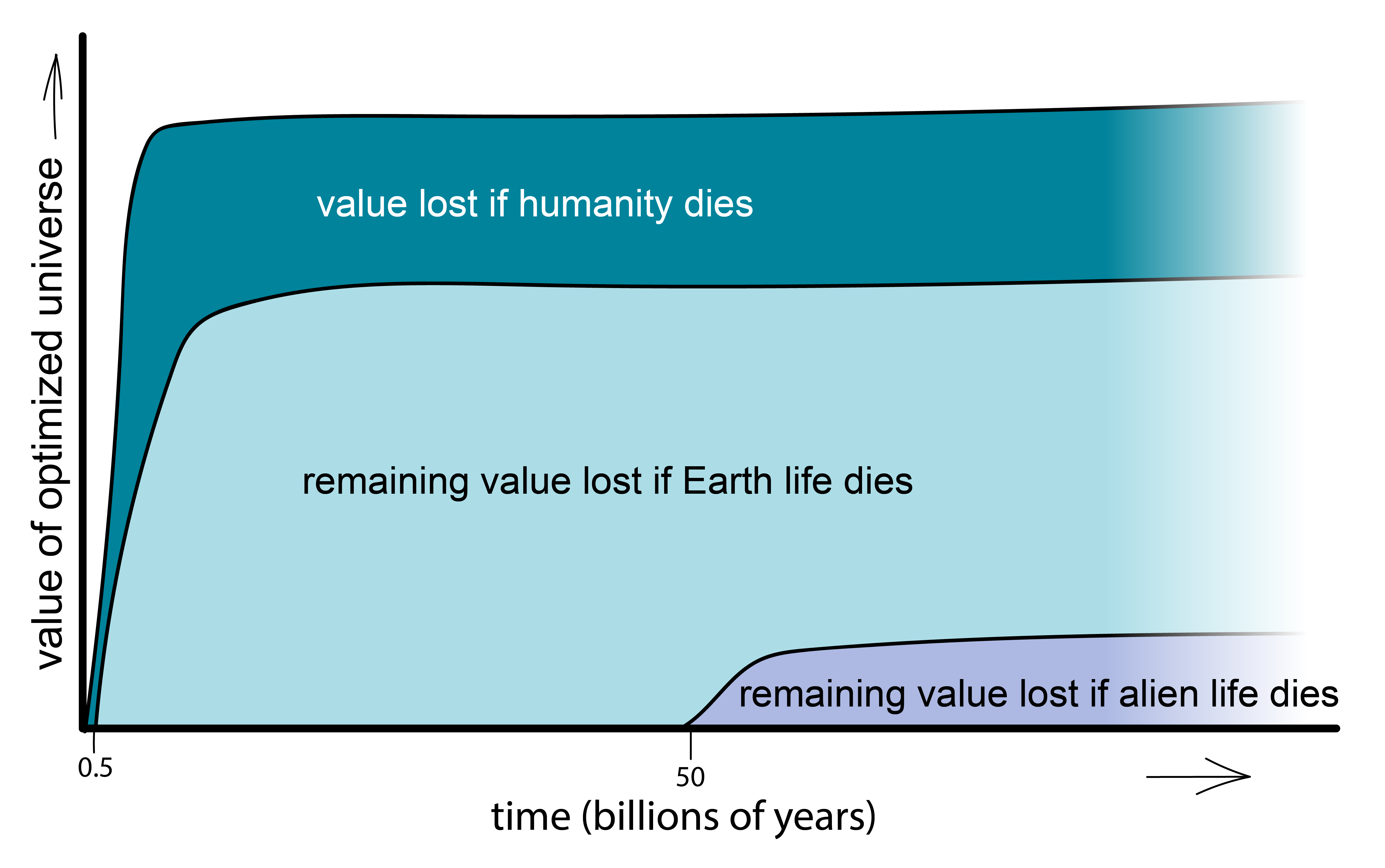

Then, for humanity surviving, this would equal 100 billion units of value.

For humanity dying but Earth life surviving, this would equal around 70 billion units of value.

For Earth life dying but alien life surviving, this would equal about 10 billion units of value.
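The term definitions are in the image above. One reconstruction that reproduces these three numbers (the notation is assumed, not necessarily the image's): v is the expected value per unit of time of a universe optimized by the surviving life (1 for humanity, 0.7 for Earth life, 0.2 for aliens), t is the expected waiting time for that life to become as intelligent as humans are currently (0, 0.5 and 50 billion years), and T = 100 billion years is how long the universe stays optimizable, with the time from human-level intelligence to optimizing the universe set to 0.

```latex
\begin{aligned}
V &= v\,(T - t) \\
V_{\mathrm{humanity}} &= 1 \times (100 - 0) = 100 \\
V_{\mathrm{Earth}} &= 0.7 \times (100 - 0.5) = 69.65 \approx 70 \\
V_{\mathrm{alien}} &= 0.2 \times (100 - 50) = 10
\end{aligned}
```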

Therefore:

- the event of humanity dying but Earth life surviving loses 30 billion units of value

- the event of humanity and other Earth life dying (terracide?) but alien life surviving loses 90 billion units of value

- the event of all potential life dying (cosmocide?) loses 100 billion units of value

So, using my numbers, the ratio of the value of preventing these three events per unit of probability is 30:90:100.

In other words, scenarios that would destroy Earth life or Earth and alien life are roughly 3 times more important than human-bounded scenarios.
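A minimal Python sketch of the same back-of-the-napkin calculation, assuming value = (value per unit of time) × (optimizable time left after the waiting time), with the transition to optimizing the universe taking no time and no decline in value over time. The names `SCENARIOS`, `expected_value` and `losses` are hypothetical, and the inputs are the rough guesses from this post; swapping in your own guesses is one way to plug in your own numbers.

```python
# Back-of-the-napkin value lost per x-risk category (a sketch, not a definitive model).
# Assumptions: value = (value per unit of time) * (optimizable time left after the
# waiting time), transition to universe optimization takes 0 time, and value does
# not decline over time. Units are billions of years / billions of units of value.

T = 100.0  # time the universe stays optimizable (rough guess from the post)

# Hypothetical scenario table: (value per unit of time relative to humanity,
# expected waiting time until current human-level intelligence).
SCENARIOS = {
    "humanity survives": (1.0, 0.0),
    "Earth life survives": (0.7, 0.5),
    "only alien life survives": (0.2, 50.0),
    "no life survives": (0.0, 0.0),
}


def expected_value(value_per_time: float, wait: float, horizon: float = T) -> float:
    """Steady-state expected value: value per unit of time times time remaining."""
    return value_per_time * max(horizon - wait, 0.0)


def losses(horizon: float = T) -> dict:
    """Value lost by each category of x-risk, relative to humanity surviving."""
    ev = {name: expected_value(v, w, horizon) for name, (v, w) in SCENARIOS.items()}
    best = ev["humanity survives"]
    return {
        "human-bounded": best - ev["Earth life survives"],
        "Earth-bounded": best - ev["only alien life survives"],
        "unbounded": best - ev["no life survives"],
    }


if __name__ == "__main__":
    for category, loss in losses().items():
        print(f"{category}: {loss:.2f} billion units of value lost")
```

With these inputs, it prints losses of about 30.35, 90.00 and 100.00 billion units of value.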

Implications for cause prioritization

This would imply that we should give more weight to preventing catastrophes that might not stop at killing humanity. For instance, if you think an AI catastrophe has a 50% chance of being Earth-bounded or unbounded (and a 50% chance of being human-bounded), then even if you place the same probability on AI risk and on a human-bounded risk like biorisk, you would find preventing AI risk roughly twice as important.

In other words, the existential risks that don't just stop at humanity (unbounded AI risk, grey goo, gamma-ray bursts?, large asteroids?, false vacuum decay) should be weighted about 3 times more heavily in cause prioritization, if you think my numbers are correct.
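The AI-versus-biorisk example can be sketched as a probability-weighted loss. This assumes losses of 30, 90 and 100 billion units of value for human-bounded, Earth-bounded and unbounded outcomes (100 − 70, 100 − 10 and 100 − 0, from the scenario values above), treats biorisk as purely human-bounded, and hypothetically splits the "Earth-bounded or unbounded" half of AI catastrophes evenly between the two. `importance` is an illustrative helper, not an established method.

```python
# Importance of preventing a risk, weighted by how bounded it is likely to be.
# Assumed losses (billions of units of value): 100 - 70, 100 - 10 and 100 - 0,
# rounded from the scenario values in the post.
LOSS = {"human-bounded": 30.0, "Earth-bounded": 90.0, "unbounded": 100.0}


def importance(p_bounded: dict) -> float:
    """Expected value lost per unit probability of the catastrophe.

    p_bounded maps each boundedness category to the probability that the
    catastrophe, given that it happens, is bounded at that level.
    """
    if abs(sum(p_bounded.values()) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return sum(LOSS[category] * p for category, p in p_bounded.items())


# Hypothetical split: half of AI catastrophes stop at humanity, and the other
# half is shared equally between Earth-bounded and unbounded outcomes.
ai = importance({"human-bounded": 0.5, "Earth-bounded": 0.25, "unbounded": 0.25})
# Biorisk is assumed here to be purely human-bounded.
bio = importance({"human-bounded": 1.0})

print(f"AI risk vs biorisk, per unit of probability: {ai / bio:.2f}x")
```

This gives about 2.08x. Any split of that half between Earth-bounded and unbounded lands between 2.0x and about 2.17x, so "roughly twice as important" holds either way.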

I encourage you to plug in your own numbers or draw your own graph and see how this affects your cause prioritization, but probably the most important effect this has is to make AI risk more important per unit probability than other risks. One crucial question is:

What is the probability that an AI catastrophe would not stop at humanity, given that an AI catastrophe happens?

I think this number is pretty close to 1, and this makes preventing AI risk roughly 3 times as important as other relevant x-risks per unit of probability.
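To see how sensitive the result is to the answer to that question, here is a sketch that sweeps the probability that an AI catastrophe doesn't stop at humanity. It assumes a loss of 30 billion units of value if the catastrophe is human-bounded and, for illustration, 95 billion if it isn't (the midpoint of the 90 and 100 billion for Earth-bounded and unbounded outcomes, i.e. 100 − 10 and 100 − 0), compared against a human-bounded risk. The constant names and `relative_importance` are hypothetical.

```python
# Sensitivity of AI risk's relative importance to P(it doesn't stop at humanity).
# Assumed losses (billions of units of value): 30 if the catastrophe is
# human-bounded, 95 otherwise (midpoint of the Earth-bounded and unbounded
# losses of 90 and 100). The comparison risk is assumed to be human-bounded.
HUMAN_BOUNDED_LOSS = 30.0
BEYOND_HUMANITY_LOSS = 95.0


def relative_importance(p_beyond: float) -> float:
    """Importance of preventing AI risk per unit of probability, relative to a
    human-bounded risk, given P(AI catastrophe doesn't stop at humanity)."""
    if not 0.0 <= p_beyond <= 1.0:
        raise ValueError("p_beyond must be a probability")
    ai_loss = (1 - p_beyond) * HUMAN_BOUNDED_LOSS + p_beyond * BEYOND_HUMANITY_LOSS
    return ai_loss / HUMAN_BOUNDED_LOSS


for p in (0.0, 0.5, 0.9, 1.0):
    print(f"P(not bounded to humanity) = {p:.1f}: {relative_importance(p):.2f}x")
```

Under these assumptions, a probability of 0.9 gives about 2.95x and a probability of 1 gives about 3.17x.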

Also relevant:

https://80000hours.org/podcast/episodes/paul-christiano-ai-alignment-solutions/ talks about intelligence re-evolving.

Global Catastrophic and Existential Risks Communication Scale (different scales of x-risk)

Surviving global risks through the preservation of humanity's data on the Moon

Interesting post!

I think if mammals were to evolve greater intelligence and were able to build and transfer knowledge like us, enough to start colonizing space (or have their descendants do so), it's quite likely they would end up with values similar to us (or within the normal range of human values), since they already have emotional empathy (emotional contagion + non-reciprocal altruism), and they would likely have to become more social and cooperative along the way.

Plausibly (although I really don't know) other animals could become intelligent, social and cooperative without developing emotional empathy, and that could be bad. They could be selfish and Machiavellian. That being said, becoming social and cooperative may coincide with the development of emotional empathy and inequity aversion, since these will promote group fitness.

I'm not sure what other animals have emotional empathy. I know chickens have emotional empathy for their young, but this might not extend to other chickens. Parenting animals probably generally have emotional empathy for their offspring. I don't know how far the emotional empathy of corvids, parrots and octopuses would extend, and these are some of the next smartest non-mammalian animals.

You might find Christian Tarsney's model useful for this. See also this EA Forum post on it and the discussion.

I agree with your post in principle: we should take currently unknown, non-human moral agents into account when calculating x-risks.

On the other hand, I personally think leaving behind an AGI (which, after all, is still an "agent" influenced by our thoughts and values that carries them on in some manner) is a preferable end game for human civilisation to a lot of other scenarios, even if the impact of an AGI catastrophe is probably going to span the entire galaxy and beyond.

Grey goo and other "AS-catastrophes" are definitely very bad.

From "worst" to "less bad", I think the scenarios would line up something like this:

1: False vacuum decay, obliterates our light-cone.

2: High velocity (relativistic) grey goo with no AGI. Potentially obliterates our entire light-cone, although advanced alien civilisations might survive.

3: Low velocity grey goo with no AGI, sterilises the Earth with ease and potentially spreads to other solar systems or the entire galaxy but probably not beyond (the intergalactic travel time would probably be too long for the goo to maintain its function). Technological alien civilisations might survive.

4: End of all life on Earth from other disasters.

5: AGI catastrophe with spill over in our light cone. I think an AGI's encounter with intelligent alien life is not guaranteed to follow the same calculus as its relationship with humans, so even if an AGI destroys humanity, it is not necessarily going to destroy (or even be hostile to) an alien civilisation it encounters.

For a world without humans, I am a bit uncertain about whether the Earth has enough "time left" (~500 million years before the sun's increasing luminosity makes the Earth significantly less habitable) for another intelligent species to emerge after a major extinction event that included humanity (say, one in which large mammals took the same hit as the dinosaurs), and about whether the Earth would have enough accessible fossil fuel for them to develop a technological civilisation.