Last month, Anthropic developed Claude Mythos, a model they considered too dangerous for public release.

According to Anthropic (and testing by AISI), Mythos:

- Found thousands of previously unknown vulnerabilities in every major operating system and browser.

- Surpasses the coding capabilities of all but the most skilled humans.

- Exposed a 25+ year old flaw in the world’s most secure operating system that could be exploited to crash essential infrastructure.

There’s a great write-up from 80,000 Hours explaining Mythos here.

*

The question I consider is how we should update on the potential for AI-enabled coups in the wake of such a powerful model and Anthropic’s response to it.

I suggest Mythos makes certain coup pathways more plausible, primarily by reducing the minimum viable coalition needed to cause targeted disruption. Glasswing, Anthropic’s attempt to mitigate that specific risk, is a useful governance precedent. It bolsters the defensive capabilities of critical actors before deploying a model with significantly improved cyber capabilities. But that same mechanism concentrates decision-making over potentially state-level threats in the hands of a small number of private actors, handing them the keys to decide who can access strategically important tech and who can prepare themselves against it.

The first risk concerns what the model can do; the second concerns who decides which actors or institutions have access to it.

AI-Enabled Coups

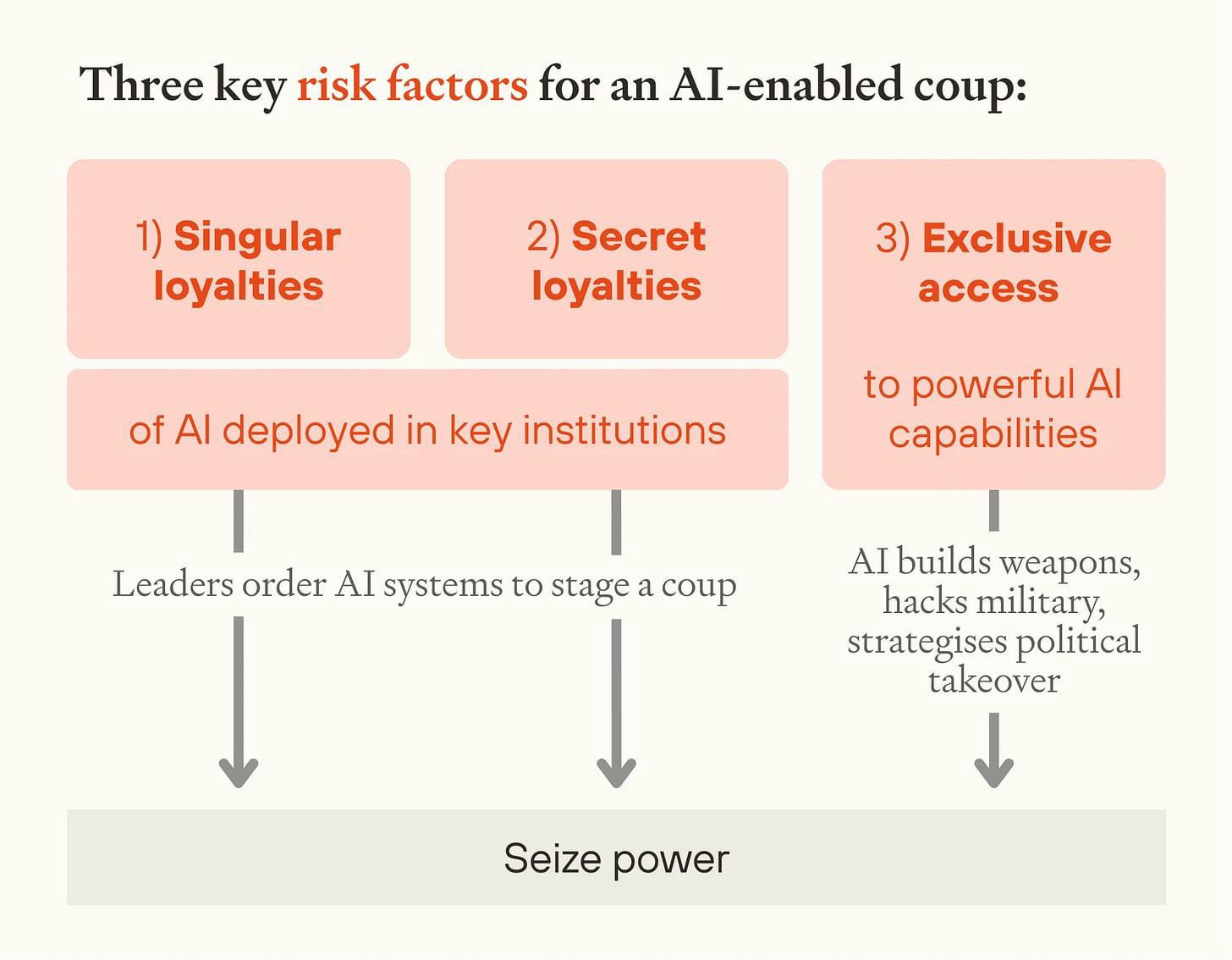

How powerful AI could be used to enact a coup is an underexplored area of AI governance research. Forethought have written the most comprehensive account of this to date, outlining three key risk factors:

- Singular loyalty: the risk that an advanced AI system could be developed to be singularly loyal to one person or institution.

- Secret loyalty: the risk that an AI system could appear to uphold the rule of law while covertly advancing the interests of one actor, programming those loyalties into future models, and ultimately using military AI systems to stage a coup.

- Exclusive access: the risk that AI with greater-than-human capabilities across domains - persuasion, strategy, weapons development - becomes concentrated in the hands of a few actors.

Mythos is most directly relevant to the third risk factor, but not only in the way that’s immediately obvious. Forethought identify a specific coup pathway worth attention in this context: the hacking of autonomous military AI systems. Their argument is that once fully autonomous military systems are widely deployed, an actor with sufficiently advanced cyber capabilities could compromise enough of them simultaneously (disabling some, seizing control of others) to tip a constitutional crisis into the collapse of democratic institutions. Historically, coups have succeeded with excellent timing and very few military resources; the key for plotters is preventing other forces from blocking their route to power.

Mythos, which autonomously discovers and exploits vulnerabilities at scale, is the first model that makes this pathway feel more like a near-term threat than a speculative forecast.

Mythos & cybersecurity threats

Mythos marks a clear shift in cybersecurity risk. AISI found that the model could execute multi-stage attacks and autonomously discover and exploit vulnerabilities. The expert cybersecurity team at SACR write that Mythos:

“Transforms software exploitation into an automatable industrial process… This significantly lowers the barrier to entry and compresses the time required to develop working exploits, effectively making Zero Days sub-hour vulnerabilities.”

Anthropic recognised this. They tightly controlled the model’s release: access to Mythos was limited to internal staff and a small set of external customers, and was later extended to Project Glasswing partners for defensive work.

Limiting access was a sensible move by a safety-conscious lab, buying time to shore up defences and test alignment, where Mythos now scores remarkably well. But there is no credible reason to think other frontier labs will remain significantly behind once Mythos-level capabilities are released; the history of AI development is a history of rapid diffusion. Comparable offensive capabilities will likely reach competitors and, eventually, open models. When they do, the minimum viable coalition needed to cause targeted political disruption shrinks considerably: a small group will find it easier to carry out coordinated attacks on strategically valuable digital infrastructure, because Mythos-level capabilities let them do so more quickly and with less technical expertise. The smaller that minimum coalition, the more plausible certain AI-enabled coup pathways become. An actor does not need to seize power with overwhelming force if a small group can cause targeted disruption at an opportune moment.

Project Glasswing

This is where Project Glasswing, the most interesting piece of the Mythos puzzle, comes in. The Anthropic-led coalition brings together select partners sitting on the most valuable cyber infrastructure - including Apple, AWS, Google, and JPMorgan Chase - and grants them privileged access to Mythos for defensive purposes. Anthropic write that:

“The work of defending the world’s cyber infrastructure might take years; frontier AI capabilities are likely to advance substantially over just the next few months.”

Glasswing responds to a specific asymmetric threat: that attackers gain frontier cyber capability before defenders have caught up. If critical infrastructure maintainers can find and patch vulnerabilities before those capabilities diffuse to malicious actors, the window for disruption narrows. This is directly relevant to coup mitigation, because the asymmetric disruption pathways Forethought describe depend on defenders being slower than attackers.

Glasswing also builds stronger relationships between labs and the organisations or state-adjacent institutions that maintain the most important digital infrastructure. In a coup scenario, the ability to operate with speed and trust will matter, so it is vital that these working relationships exist before a political emergency. If other labs adopt this as standard practice - letting partners bolster defences before releasing models with significantly improved offensive capabilities - it would be a meaningful governance precedent.

But there is a more cautionary reading of this approach. Glasswing makes Anthropic the effective arbiter of which infrastructure is strategically important enough to be protected, and which actors are trusted enough to access capabilities that could be deployed to cause serious harm. By all accounts they are behaving responsibly. The concern is the concentration of power in the hands of a small number of private actors: one private institution is distributing strategically important capability according to its own criteria, in the absence of any agreed framework for what those criteria should be.

These decisions will become increasingly consequential. Progress in frontier model capabilities is not going to slow, and each major capability leap will be followed by some version of the same question: who should have access to this, who should be able to defend themselves against this, and who should make that call? Right now, the answer is determined by whichever lab happens to develop the most capable model. Labs are making those decisions without clear rules governing their behaviour or democratic accountability for the outcomes, which could have serious consequences for democratic resilience.

This points to a geopolitical implication that will become harder to ignore. It is likely that states will increasingly frame access to frontier models as a question of sovereignty; if a state cannot defend its own digital infrastructure without privileged access to systems held by an American tech company, it is not fully sovereign in its domain. On that basis, frontier labs will increasingly become targets for governments, corporations and political factions. In a coup scenario, it is plausible to think that a plotting group would attempt to pressure, co-opt or infiltrate whichever institution controls access to the most capable AI.

How could an ‘asymmetric disruption pathway’ play out?

A plausible near-term coup pathway emerging from all of this could look less dramatic than the scenarios Forethought list, and more like this:

1. A small group gains privileged or early access to advanced AI cyber capabilities.

2. The group uses them to identify weaknesses in politically important infrastructure.

3. The group times disruption or confusion around a constitutional, electoral, military, or succession crisis.

4. The disruption weakens institutional coordination and public trust.

5. The group uses existing political, bureaucratic, or security relationships to claim authority or entrench control.

6. Defenders struggle because capability, visibility, and response coordination are unevenly distributed.

Mythos raises the plausibility of the first three steps. It widens the window for strategic asymmetries that a small group could exploit at a decisive moment, whether that group is operating within a lab or from outside it.

The intuitive picture of AI-enabled coup risk is of a model seizing power itself, or autonomously ‘performing a coup’. I think the nearer-term risk is an access-control problem: that a handful of private actors determine who can control - and who can defend themselves against - humanity’s most powerful technology. Glasswing is a genuine attempt to manage that problem responsibly. But a private actor behaving responsibly offers less security than actors operating within legitimate governance frameworks. As capabilities grow, that distinction will matter more.

This is my first post here, and my first post on AI governance, so I particularly welcome thoughts, feedback, criticism, coffee invites, whatever. I plan to write another post on what updated coup risk mitigations might look like in light of Mythos and Glasswing.