tl;dr: Ask questions about AGI Safety as comments on this post, including ones you might otherwise worry seem dumb!

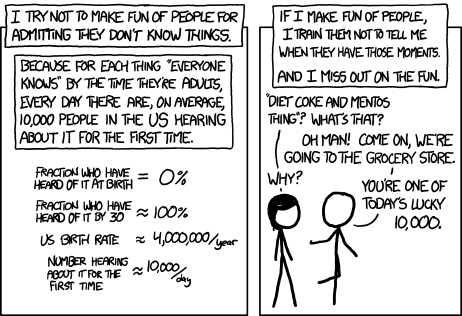

Asking beginner-level questions can be intimidating, but everyone starts out not knowing anything. If we want more people in the world who understand AGI safety, we need a place where it's accepted and encouraged to ask about the basics.

We'll be putting up monthly FAQ posts as a safe space for people to ask all the possibly-dumb questions that may have been bothering them about the whole AGI Safety discussion, but which until now they didn't feel able to ask.

It's okay to ask uninformed questions, and not worry about having done a careful search before asking.

AISafety.info - Interactive FAQ

Additionally, this will serve as a way to spread the word about the project Rob Miles' team[1] has been working on: Stampy and his professional-looking face, aisafety.info. This will provide a single point of access into AI Safety, in the form of a comprehensive interactive FAQ with lots of links to the ecosystem. We'll be using questions and answers from this thread for Stampy (under these copyright rules), so please only post if you're okay with that!

You can help by adding questions (type your question and click "I'm asking something else") or by editing questions and answers. We welcome feedback and questions on the UI/UX, policies, etc. around Stampy, as well as pull requests to his codebase and volunteer developers to help with the conversational agent and front end that we're building.

We've got more to write before he's ready for prime time, but we think Stampy can become an excellent resource for everyone from skeptical newcomers, through people who want to learn more, right up to people who are convinced and want to know how they can best help with their skillsets.

Guidelines for Questioners:

- No previous knowledge of AGI safety is required. If you want to watch a few of the Rob Miles videos, or read the WaitButWhy posts or The Most Important Century summary from OpenPhil's co-CEO first, that's great, but it's not a prerequisite for asking a question.

- Similarly, you do not need to try to find the answer yourself before asking a question (but if you want to test Stampy's in-browser TensorFlow semantic search, that might get you an answer quicker!).

- Also feel free to ask questions that you're pretty sure you know the answer to, but where you'd like to hear how others would answer the question.

- One question per comment if possible (though if you have a set of closely related questions that you want to ask all together that's ok).

- If you have your own response to your own question, put that response as a reply to your original question rather than including it in the question itself.

- Remember, if something is confusing to you, then it's probably confusing to other people as well. If you ask a question and someone gives a good response, then you are likely doing lots of other people a favor!

- In case you're not comfortable posting a question under your own name, you can use this form to send a question anonymously and I'll post it as a comment.

Guidelines for Answerers:

- Linking to the relevant answer on Stampy is a great way to help people with minimal effort! Improving that answer means that everyone going forward will have a better experience!

- This is a safe space for people to ask stupid questions, so be kind!

- If this post works as intended then it will produce many answers for Stampy's FAQ. It may be worth keeping this in mind as you write your answer. For example, in some cases it might be worth giving a slightly longer / more expansive / more detailed explanation rather than just giving a short response to the specific question asked, in order to address other similar-but-not-precisely-the-same questions that other people might have.

Finally: Please think very carefully before downvoting any questions. Remember, this is the place to ask stupid questions!

[1] If you'd like to join, head over to Rob's Discord and introduce yourself!

I would like to know about the history of the term "AI alignment". I found an article written by Paul Christiano in 2018. Did the use of the term start around this time? Also, what is the difference between AI alignment and value alignment?

https://www.alignmentforum.org/posts/ZeE7EKHTFMBs8eMxn/clarifying-ai-alignment

Some considerations I came to think about which might prevent AI systems from becoming power-seeking by default:

So even if AI systems make plans / choose actions based on expected value calculations, just doing the thing they are trying to do might be the better strategy (even if gaining more power first would, if it worked, eventually let the AI system better achieve its goal).

Am I missing something? And are there any predictions on which of these two trends will win out? (I'm speaking of cases where we did not intend the system to be power-seeking, as opposed to, e.g., when you program the system to "make as much money as possible, forever".)
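To make the expected-value comparison in this question concrete, here is a minimal Python sketch. Everything in it is an illustrative assumption rather than an estimate: the function names, the two-stage model of a power-seeking plan (the power grab must succeed first, then the goal must be achieved), the choice to score a failed power grab as zero, and all of the probabilities and payoffs.

```python
def ev_direct(p_success: float, payoff: float) -> float:
    """Expected value of simply attempting the task directly."""
    return p_success * payoff


def ev_power_first(p_grab: float, p_success_after: float, payoff_after: float) -> float:
    """Expected value of seeking power first, then attempting the task.

    Assumption: a failed power grab (e.g. the system is caught and shut
    down) yields a payoff of 0.
    """
    return p_grab * p_success_after * payoff_after


def break_even_p_grab(p_success: float, payoff: float,
                      p_success_after: float, payoff_after: float) -> float:
    """Power-grab success probability at which both plans have equal EV."""
    return (p_success * payoff) / (p_success_after * payoff_after)


if __name__ == "__main__":
    # All numbers below are made up purely for illustration.
    direct = ev_direct(p_success=0.6, payoff=1.0)
    risky = ev_power_first(p_grab=0.3, p_success_after=0.95, payoff_after=1.5)
    threshold = break_even_p_grab(0.6, 1.0, 0.95, 1.5)

    print(f"direct plan EV:       {direct:.3f}")
    print(f"power-first plan EV:  {risky:.3f}")
    print(f"break-even P(grab):   {threshold:.3f}")
```

With these made-up numbers, the direct plan has the higher expected value whenever the power grab succeeds less than about 42% of the time. Which regime real systems would be in is exactly the open question being asked here.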

I asked a similar question in the LW thread: https://www.lesswrong.com/posts/SFuLQA7guCnG8pQ7T/all-agi-safety-questions-welcome-especially-basic-ones-may?commentId=La8GtcDSKASbgvG2J

What are the arguments for why someone should work in AI safety over wild animal welfare? (Holding personal fit etc. constant.)

What are the key cruxes between people who think AGI is about to kill us all, and those who don't? I'm at the stage where I can read something like this and think "ok so we're all going to die", then follow it up with this and be like "ah great we're all fine then". I don't yet have the expertise to critically evaluate the arguments in any depth. Has anyone written something that explains where people begin to diverge, and why, in a reasonably accessible way?

80k's AI risk article has a section titled "What do we think are the best arguments against this problem being pressing?"

Do the concepts behind AGI safety only make sense if you have roughly the same worldview as the top AGI safety researchers - secular atheism and reductive materialism/physicalism and a computational theory of mind?

Can you highlight some specific AGI safety concepts that make less sense without secular atheism, reductive materialism, and/or computational theory of mind?

I'd like to underline that I'm agnostic, and I don't know what the true nature of our reality is, though lately I've been more open to anti-physicalist views of the universe.

For one, if there's a continuation of consciousness after death, then AGI killing lots of people might not be as bad as it would be if there were no continuation of consciousness after death. I would still consider it very bad, mostly because I like this world and the living beings in it and would not like them to end, but it wouldn't be the end of consciousnesses, as some doomy AGI safety people imply.

Another thing is that the relationship between consciousness and the physical universe might be more complex than physicalists say - like some of the early figures of quantum physics thought - and there might be factors at play, unknown to current science, that could have an effect on the outcome. I don't have more to say about this because I'm uncertain what the relationship between consciousness and the physical universe might be in such a view.

And lastly, if there's God or gods or something similar, such beings would have agency and could have an effect on what the outcome might be. For example, there are Christian eschatological views that say that the Christian prophecies about the New Earth and other such things must come true in some way, so the future cannot end in a total extinction of all human life.

Suppose someone is an ethical realist: the One True Morality is out there, somewhere, for us to discover. Is it likely that AGI will be able to reason its way to finding it?

What are the best examples of AI behavior we have seen where a model does something "unreasonable" to further its goals? Hallucinating citations?

I've been doing a 1-year CS MSc (one of the 'conversion' courses in the UK). I took as many AI/ML electives as I'm permitted to/can handle, but I missed out on an intro to RL course. I'm planning to take some time to (semi-independently) up-skill in AI safety after graduating. This might involve some projects and some self-study.

It seems like a good idea to be somewhat knowledgeable on RL basics going forward. I've taken (paid) accredited, distance/online courses (with exams etc.) concurrently with my main degree and found them to be higher quality than common perception suggests - although it does feel slightly distracting to have more on my plate.

Is it worth doing a distance/online course in RL (e.g. https://online.stanford.edu/courses/xcs234-reinforcement-learning ) as one part of the up-skilling period following graduation? Besides the Stanford online one that I've linked, are there any others that might be high quality and worth looking into? Otherwise, are there other resources that might be good alternatives?