SALA has undoubtedly been one of the most important AI events of the year in Latin America.

The event brought together incredibly talented students, a group of speakers who stood out not only for their academic and professional excellence but also for their human quality, and a community deeply committed to thinking responsibly about the future of artificial intelligence.

The SALA organizing team, together with its general director Pablo Samuel Castro, Adjunct Research Scientist at Google DeepMind, gave it their all, truly everything. The level of care and effort behind every detail of the event was evident.

Preparing as a community

As part of the activities of AI Safety México, before attending the event we met with our community to analyze and discuss the International AI Safety Report 2026, led by Yoshua Bengio and developed with the participation of more than 100 AI experts.

Our goal: to identify our uncertainties, concerns, and areas of opportunity from a Latin American perspective.

This preparation was extremely valuable because it allowed me to arrive at the event with concrete questions that represented not only my own concerns, but also those of our community.

Conversations with researchers and industry leaders

One of the most valuable moments was having the opportunity to sit down and speak with researchers and leaders from organizations directly involved in the development of advanced AI systems.

Among them were people working with or collaborating with:

- Google DeepMind

- Apple

- Amazon

- Mila

- LawZero

I took the opportunity to ask directly:

What approach are these organizations taking toward AI safety?

Responsible AI and privacy

Sammy Bengio shared that Apple is placing strong emphasis on Responsible AI, particularly when it comes to user data privacy.

Another aspect I found particularly important in Sammy’s talk (ML at Apple) was how limitations such as lack of generalization under small changes (distribution shift), difficulty in abstracting the underlying structure of problems, and poor calibration in high-stakes settings directly connect to real-world risks. If seemingly irrelevant changes, such as modifying names or numbers or adding distracting information, can significantly alter a model’s behavior, then these systems are likely to behave unpredictably in novel or adversarial environments, precisely where reliability matters most. This reinforces the need to move beyond average-case performance and focus on worst-case robustness, and to shift from relying on traditional benchmarks to designing evaluations that truly stress models under distribution shift.
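To make the difference between average-case and worst-case evaluation concrete, here is a minimal sketch. Everything in it is hypothetical and was not part of the talk: `toy_model` is a deliberately brittle stand-in for a real model, and `make_variants` produces rewrites of one arithmetic problem that leave the correct answer unchanged (a different name, a different ordering, an added distracting number).

```python
import random
import re


def toy_model(question: str) -> int:
    """Hypothetical brittle 'model': adds the first two integers it finds."""
    numbers = [int(n) for n in re.findall(r"\d+", question)]
    return numbers[0] + numbers[1]


def make_variants(a: int, b: int, rng: random.Random) -> list[str]:
    """Rewrites of one problem; the correct answer is always a + b."""
    name = rng.choice(["Ana", "Luis", "Sofía"])
    return [
        # Baseline phrasing.
        f"{name} has {a} apples and buys {b} more. How many apples now?",
        # Same facts, different order.
        f"{name} buys {b} apples after already having {a}. How many apples now?",
        # Same facts plus an irrelevant number (the distractor).
        f"{name}, who is 30 years old, has {a} apples and buys {b} more. "
        "How many apples now?",
    ]


def evaluate(n_problems: int = 50, seed: int = 0) -> tuple[float, float]:
    """Return (average-case accuracy, worst-case accuracy).

    Average case: fraction of all variants answered correctly.
    Worst case: fraction of problems where every variant is answered correctly.
    """
    rng = random.Random(seed)
    correct_variants = 0
    total_variants = 0
    fully_solved = 0
    for _ in range(n_problems):
        a, b = rng.randint(1, 20), rng.randint(1, 20)
        results = [toy_model(q) == a + b for q in make_variants(a, b, rng)]
        correct_variants += sum(results)
        total_variants += len(results)
        fully_solved += all(results)
    return correct_variants / total_variants, fully_solved / n_problems


average_case, worst_case = evaluate()
print(f"average-case accuracy: {average_case:.2f}")
print(f"worst-case accuracy:   {worst_case:.2f}")
```

In this toy setup the brittle model gets two of every three variants right, so its average-case accuracy looks acceptable, yet it never solves all variants of the same problem, so its worst-case accuracy is zero. That gap is the kind of signal an average over a fixed benchmark can hide.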

Deepfakes and risk mitigation

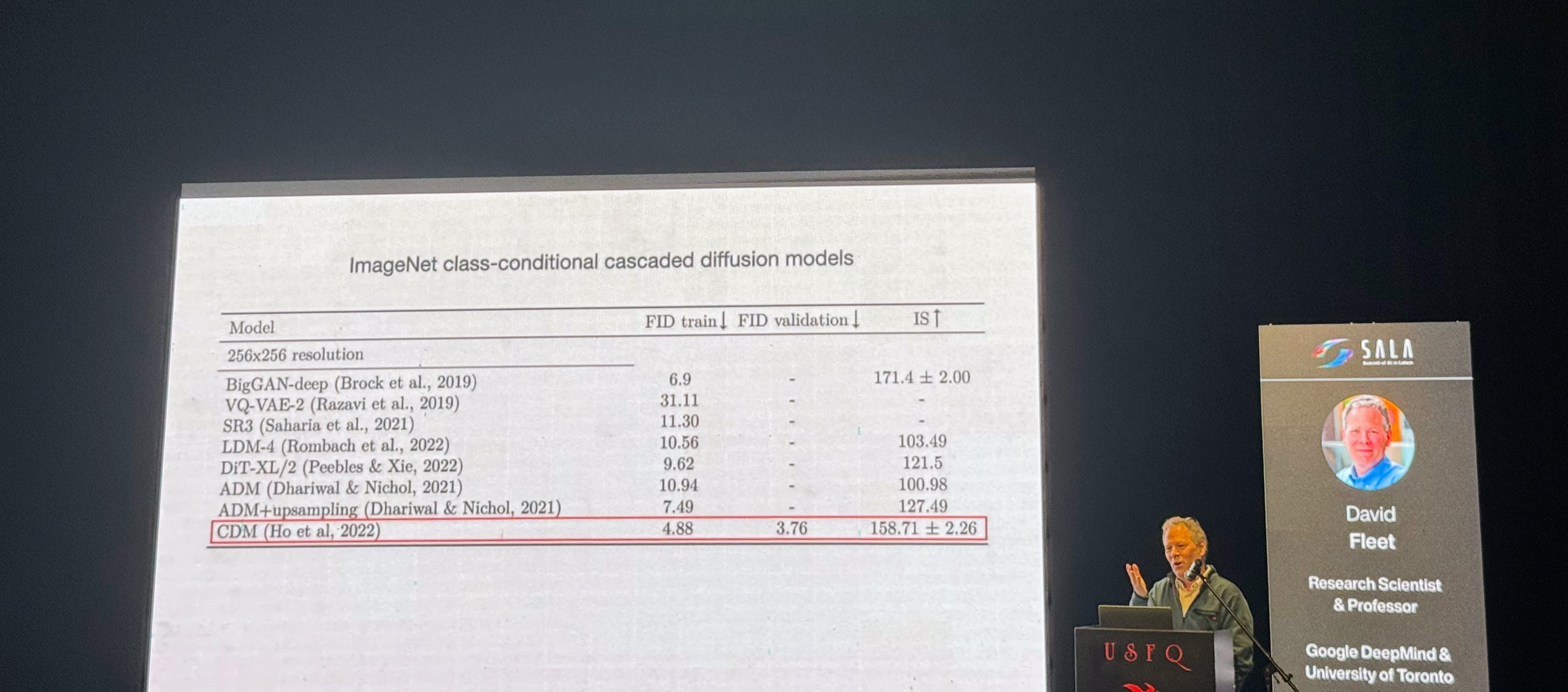

I also asked David Fleet from Google DeepMind about his perspective on deepfakes and the actions large technology companies are taking to address this issue.

He emphasized that this is currently a huge challenge for the industry.

Among the strategies being explored are techniques such as steganography, aimed at helping identify or trace artificially generated content, especially in contexts where it could cause harm.
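As a rough illustration of the basic idea behind steganography, here is a toy sketch of least-significant-bit embedding over a list of 8-bit pixel values. This is an assumption-laden teaching example and not the method David Fleet or any company described: the function names and the sample `pixels` and `tag` values are made up, and a mark like this would not survive compression or editing, which is exactly what practical provenance schemes need to handle.

```python
def embed_bits(pixels: list[int], bits: str) -> list[int]:
    """Hide a bit string in the least significant bit of each pixel value."""
    if len(bits) > len(pixels):
        raise ValueError("not enough pixels to hold the tag")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        # Clear the lowest bit, then set it to the tag bit.
        marked[i] = (marked[i] & ~1) | int(bit)
    return marked


def extract_bits(pixels: list[int], n_bits: int) -> str:
    """Read the hidden bit string back from the first n_bits pixels."""
    return "".join(str(p & 1) for p in pixels[:n_bits])


# Hypothetical 8-bit grayscale values and a hypothetical 8-bit tag.
pixels = [120, 121, 122, 200, 201, 37, 38, 255]
tag = "10110010"

marked = embed_bits(pixels, tag)
recovered = extract_bits(marked, len(tag))
max_change = max(abs(a - b) for a, b in zip(pixels, marked))

print("recovered tag:", recovered)
print("largest change to any pixel value:", max_change)
```

Each pixel value changes by at most 1, which is invisible to the eye, while the tag can still be read back by anyone who knows where to look.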

He also highlighted an important point:

The safety of these technologies does not depend solely on companies, but also on the responsible use of these tools by users.

This connects directly with one of the key points mentioned in the AI Safety Report:

“Deepfake pornography, which disproportionately affects women and girls, is especially concerning.”

In that sense, it was reassuring to see that people like David Fleet and Sammy Bengio are working within these organizations with a clear awareness of the risks and a real commitment to making these technologies safer.

A conversation about model evaluations

Another topic I was especially interested in discussing relates to my undergraduate thesis work on model evaluation.

One concern mentioned in the report stood out to me:

“Situational awareness may allow AI models to produce different outputs depending on whether they are being evaluated or deployed.”

In other words, models may adjust their behavior when they detect that they are being evaluated.

I had the opportunity to ask Vincent Mai, Senior Research Scientist at LawZero, whether he was aware of promising approaches to address this issue.

He shared some relatively simple evaluation techniques that can help surface behavioral patterns that are usually hard to detect.

Although the field is still exploring more robust solutions, it was fascinating to see how even simple methodological adjustments can provide useful signals when evaluating complex AI systems.

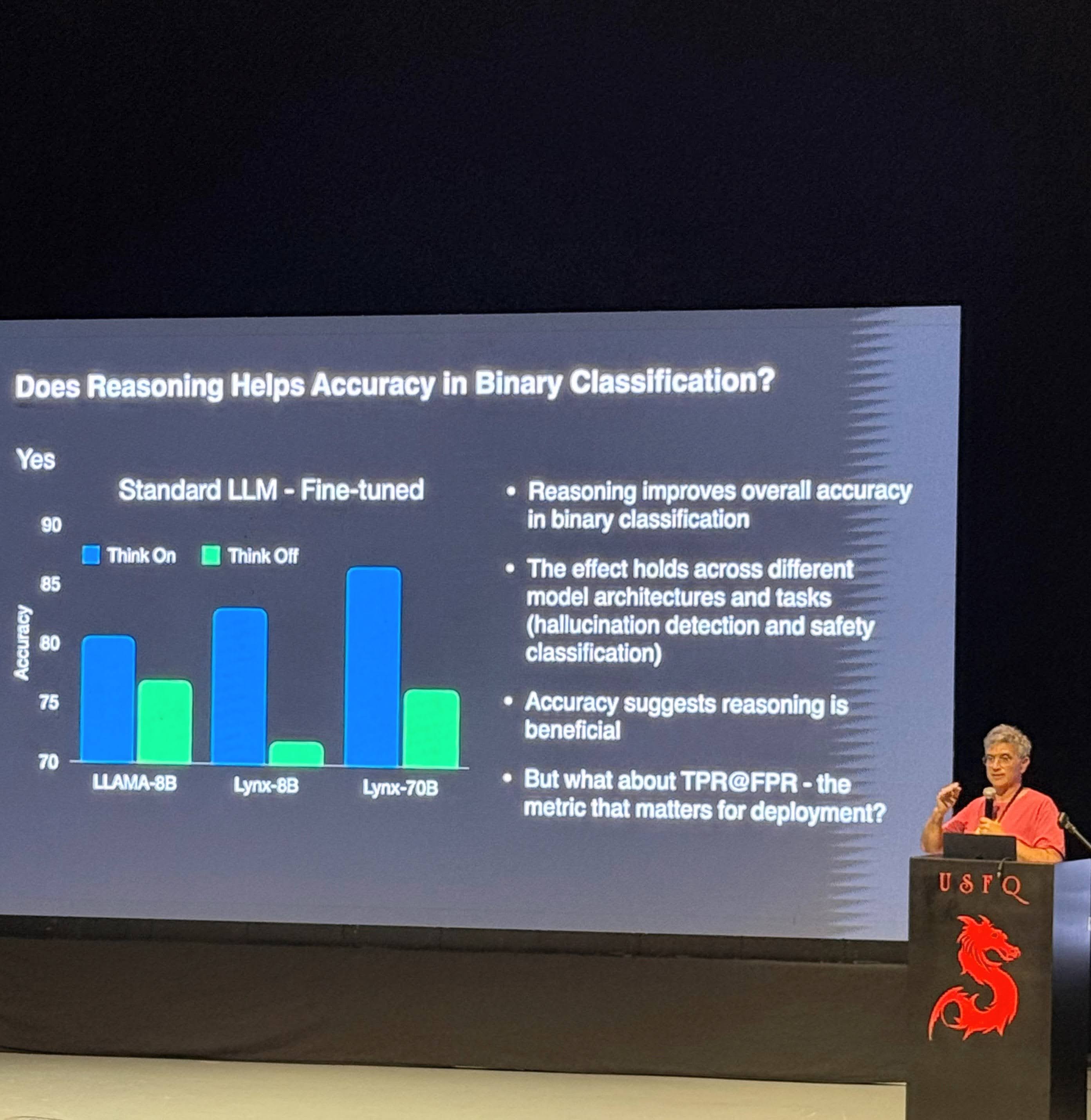

In a related direction, Vincent’s talk addressed the role of Reinforcement Learning (RL) as a tool to improve model behavior. He outlined its foundation in sequential decision-making problems, commonly modeled as Markov Decision Processes (MDPs), where the goal is to learn a policy that maximizes expected reward. In this context, RL is used to guide models toward better problem-solving strategies, although it still faces important limitations, particularly when tasks require long, multi-step reasoning.
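For readers new to MDPs, the following minimal sketch shows what "a policy that maximizes expected reward" means on a tiny example. It is an illustration I am adding, not something from the talk: the four-state chain, the reward of 1 for reaching the goal, and the discount factor `GAMMA` are all assumptions. It also uses value iteration, a planning method that assumes the transition rules are known, whereas RL proper has to learn from sampled experience.

```python
GAMMA = 0.9            # assumed discount factor
STATES = [0, 1, 2, 3]  # a hypothetical 4-state chain
ACTIONS = ["left", "right"]
GOAL = 3


def step(state: int, action: str) -> tuple[int, float]:
    """Deterministic transition: returns (next_state, reward)."""
    if state == GOAL:
        return state, 0.0  # the goal is absorbing and gives no further reward
    next_state = max(state - 1, 0) if action == "left" else state + 1
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward


def value_iteration(tol: float = 1e-9) -> dict[int, float]:
    """Repeatedly apply the Bellman optimality update until values settle."""
    values = {s: 0.0 for s in STATES}
    while True:
        new_values = {}
        for s in STATES:
            outcomes = [step(s, a) for a in ACTIONS]
            new_values[s] = max(r + GAMMA * values[s2] for s2, r in outcomes)
        delta = max(abs(new_values[s] - values[s]) for s in STATES)
        values = new_values
        if delta < tol:
            return values


def greedy_policy(values: dict[int, float]) -> dict[int, str]:
    """Pick, in each non-goal state, the action with the best one-step lookahead."""
    policy = {}
    for s in STATES:
        if s == GOAL:
            continue

        def lookahead(action: str, state: int = s) -> float:
            next_state, reward = step(state, action)
            return reward + GAMMA * values[next_state]

        policy[s] = max(ACTIONS, key=lookahead)
    return policy


values = value_iteration()
policy = greedy_policy(values)
print("state values:", {s: round(v, 2) for s, v in values.items()})
print("greedy policy:", policy)
```

The resulting policy moves right from every state, and states further from the goal have lower value because the reward arrives later and is discounted more. The same discounting over many steps is one intuition for why long, multi-step tasks are harder to learn from reward alone.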

Orr Paradise’s Talk & Our Participation in SALA's Hackathon

Another highlight of the event was Orr Paradise’s talk, “AI for Decoding Sperm Whale Communication”, which served as an introduction to a hands-on hackathon focused on marine ecosystems and animal communication.

The hackathon included multiple tracks. One focused on marine conservation in collaboration with MigraMar, using video data for fish detection. Another, where our team decided to participate, was based on underwater acoustic recordings collected near the Galápagos Islands. The task was to explore, label, and classify these audio datasets to better understand the soundscape of the region.

Motivated by Orr Paradise’s inspiring work, we followed the second of the suggested approaches, which leverages pretrained models such as Perch 2.0 and BirdNET.

We used Perch 2.0, a model trained on ~14,500 terrestrial species, to extract embeddings (numerical representations) from short audio clips; it generalized surprisingly well to marine audio. We also used BirdNET, trained on ~6,500 bird species, on the premise that acoustic patterns can transfer across domains.

The suggested pipeline involved:

- Running a humpback whale detector across recordings

- Extracting embeddings from 5-second audio clips

- Applying dimensionality reduction and clustering to group similar sounds

- Listening to clusters to interpret what each sound might represent

This allowed us to move from completely unlabeled data toward meaningful structure in the dataset.
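The embed, reduce, and cluster steps of the pipeline can be sketched as follows. This is a simplified illustration under several assumptions, not the hackathon code: the embeddings are synthetic stand-ins (no Perch 2.0 or BirdNET call is made and the whale detector step is omitted), a random projection is swapped in for whatever dimensionality reduction the organizers suggested, and a small hand-written k-means stands in for the clustering step. All function names are hypothetical.

```python
import math
import random


def synthetic_embeddings(n_per_group=20, dim=32, seed=0):
    """Stand-in for real clip embeddings: three well-separated noisy groups."""
    rng = random.Random(seed)
    data, groups = [], []
    for group in range(3):
        center = [rng.uniform(-10, 10) for _ in range(dim)]
        for _ in range(n_per_group):
            data.append([c + rng.gauss(0, 0.5) for c in center])
            groups.append(group)
    return data, groups


def random_projection(data, out_dim=8, seed=1):
    """Reduce dimensionality with a random Gaussian matrix."""
    rng = random.Random(seed)
    in_dim = len(data[0])
    matrix = [
        [rng.gauss(0, 1) / math.sqrt(out_dim) for _ in range(in_dim)]
        for _ in range(out_dim)
    ]
    return [[sum(w * x for w, x in zip(row, point)) for row in matrix]
            for point in data]


def kmeans(points, k, n_iter=20):
    """Plain k-means with farthest-point initialization."""
    centers = [points[0]]
    while len(centers) < k:
        # Next center: the point farthest from all centers chosen so far.
        centers.append(
            max(points, key=lambda p: min(math.dist(p, c) for c in centers))
        )
    assignment = [0] * len(points)
    for _ in range(n_iter):
        assignment = [
            min(range(k), key=lambda i: math.dist(p, centers[i])) for p in points
        ]
        for i in range(k):
            members = [p for p, a in zip(points, assignment) if a == i]
            if members:
                centers[i] = [sum(col) / len(members) for col in zip(*members)]
    return assignment


embeddings, true_groups = synthetic_embeddings()
reduced = random_projection(embeddings)
clusters = kmeans(reduced, k=3)

# The final, manual step: pick a few clips per cluster and listen to them.
for cluster_id in range(3):
    clip_ids = [i for i, c in enumerate(clusters) if c == cluster_id]
    print(f"cluster {cluster_id}: {len(clip_ids)} clips, e.g. {clip_ids[:5]}")
```

With real recordings the groups are far less cleanly separated than in this synthetic data, which is why listening to each cluster remains an essential part of the process.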

Another particularly interesting approach, closely aligned with Orr Paradise’s work, involved the Whale Acoustic Model (WAM), presented at NeurIPS 2025. This model was originally trained for music generation and later adapted to whale vocalizations. The key insight is that both music and animal communication share underlying structure, such as rhythm, pitch, and temporal patterns, making musical models a strong foundation for understanding non-human communication.

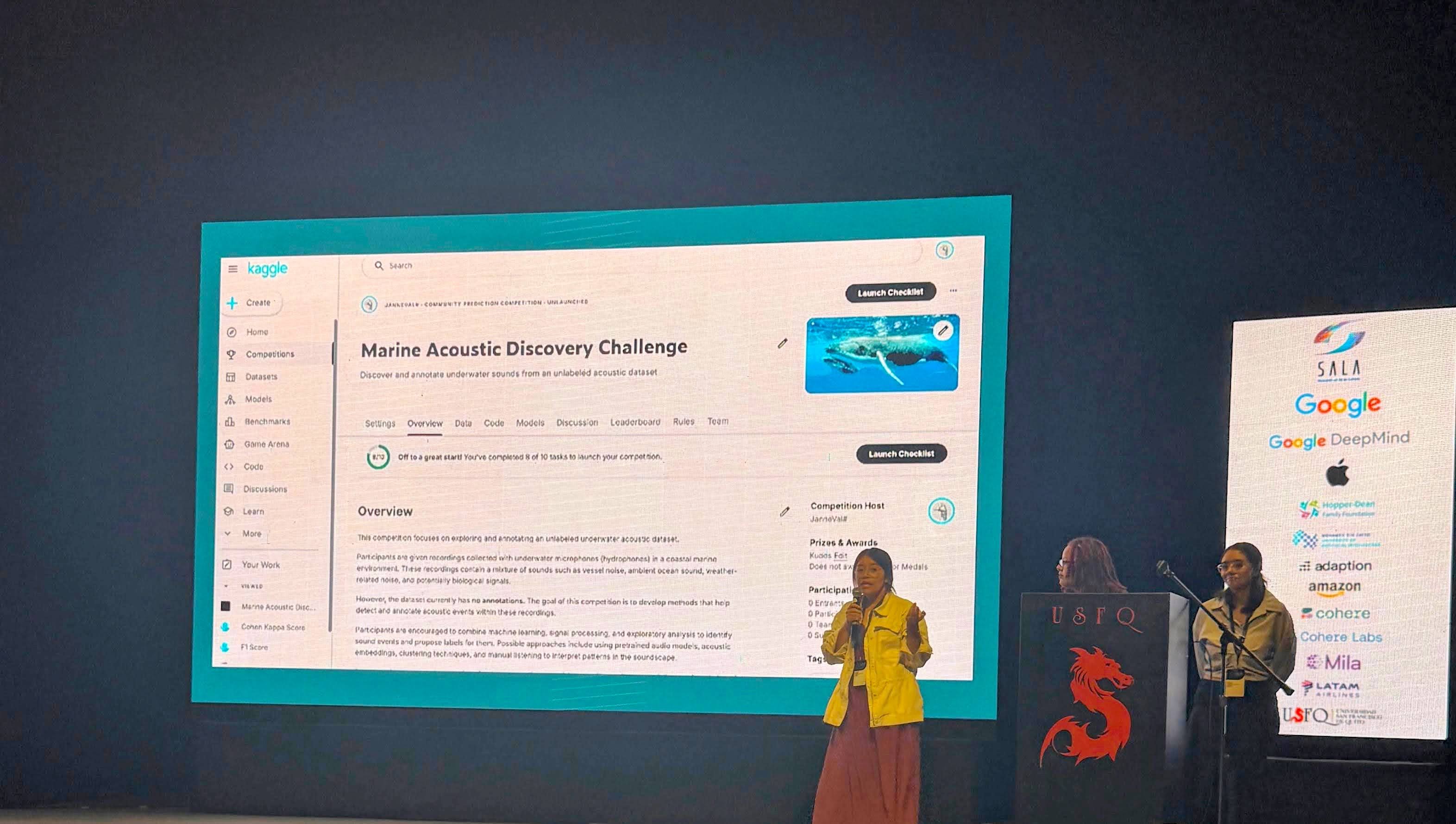

As an additional contribution, our team proposed developing a Kaggle-style competition to collaboratively build a high-quality labeled dataset. The idea is to leverage open participation to accelerate progress, bringing together data scientists, engineers, and researchers from around the world to contribute to this cause. In doing so, we aim not only to advance the science, but also to strengthen community engagement and channel the diverse skills of a global community toward a shared, impactful goal.

This proposal received special recognition from the event organizers. Our team, made up of Mónica (part of the EA community at UPY), Vanessa (part of the EA community at Universidad de La Sabana), and me (EA Universidades México), is very excited about the possibility of taking this idea forward in collaboration with researchers working in the Galápagos.

It is also worth noting that this idea emerged during a conversation over lunch with Francisco Gómez (Universidad Nacional de Colombia), whose advocacy for thinking beyond the immediate project, toward scalability of social impact and collaborative approaches, was a key inspiration.

Final reflection

Leaving conversations like these with more questions than answers is actually a very good sign.

Events like SALA AI remind us that AI safety is not just a technical problem. It is a collective effort that involves:

- researchers

- industry

- academic communities

- civil society

These spaces push the boundaries of what AI can do and open opportunities to apply these technologies to high-impact, often neglected areas such as animal welfare and environmental protection.

At AI Safety México & EA Universidades México, we will continue building spaces for critical discussion, collaboration, and action, supporting the development of talent committed to advancing AI in ways that are safe, responsible, and beneficial for the world.
