If this ends up being the most important century due to advanced AI, what are the key factors in whether things go well or poorly?

More detail on why AI could make this the most important century

In The Most Important Century, I argued that the 21st century could be the most important century ever for humanity, via the development of advanced AI systems that could dramatically speed up scientific and technological advancement, getting us more quickly than most people imagine to a deeply unfamiliar future.

This page has a ~10-page summary of the series, as well as links to an audio version, podcasts, and the full series.

The key points I argue for in the series are:

- The long-run future is radically unfamiliar. Enough advances in technology could lead to a long-lasting, galaxy-wide civilization that could be a radical utopia, dystopia, or anything in between.

- The long-run future could come much faster than we think, due to a possible AI-driven productivity explosion.

- The relevant kind of AI looks like it will be developed this century - making this century the one that will initiate, and have the opportunity to shape, a future galaxy-wide civilization.

- These claims seem too "wild" to take seriously. But there are a lot of reasons to think that we live in a wild time, and should be ready for anything.

- We, the people living in this century, have the chance to have a huge impact on huge numbers of people to come - if we can make sense of the situation enough to find helpful actions. But right now, we aren't ready for this.

A lot of my previous writings have focused specifically on the threat of “misaligned AI”: AI that could have dangerous aims of its own and defeat all of humanity. In this post, I’m going to zoom out and give a broader overview of multiple issues transformative AI could raise for society - with an emphasis on issues we might want to be thinking about now rather than waiting to address as they happen.

My discussion will be very unsatisfying. “What are the key factors in whether things go well or poorly with transformative AI?” is a massive topic, with lots of angles that have gotten almost no attention and (surely) lots of angles that I just haven’t thought of at all. My one-sentence summary of this whole situation is: we’re not ready for this.

But hopefully this will give some sense of what sorts of issues should clearly be on our radar. And hopefully it will give a sense of why - out of all the issues we need to contend with - I’m as focused on the threat of misaligned AI as I am.

Outline:

- First, I’ll briefly clarify what kinds of issues I’m trying to list. I’m looking for ways the future could look durably and dramatically different depending on how we navigate the development of transformative AI - such that doing the right things ahead of time could make a big, lasting difference.

- Then, I’ll list candidate issues:

  - Misaligned AI. I touch on this only briefly, since I’ve discussed it at length in previous pieces. The short story is that we should try to avoid AI ending up with dangerous goals of its own and defeating humanity. (The remaining issues below seem irrelevant if this happens!)

  - Power imbalances. As AI speeds up science and technology, it could cause some country/countries/coalitions to become enormously powerful - so it matters a lot which one(s) lead the way on transformative AI. (I fear that this concern is generally overrated compared to misaligned AI, but it is still very important.) There could also be dangers in overly widespread (as opposed to concentrated) AI deployment.

  - Early applications of AI. It might be that what early AIs are used for durably affects how things go in the long run - for example, whether early AI systems are used for education and truth-seeking, rather than manipulative persuasion and/or entrenching what we already believe. We might be able to affect which uses are predominant early on.

  - New life forms. Advanced AI could lead to new forms of intelligent life, such as AI systems themselves and/or digital people. Many of the frameworks we’re used to, for ethics and the law, could end up needing quite a bit of rethinking for new kinds of entities. (For example, should we allow people to make as many copies as they want of entities that will predictably vote in certain ways?) Early decisions about these kinds of questions could have long-lasting effects.

  - Persistent policies and norms. Perhaps we ought to be identifying particularly important policies, norms, etc. that seem likely to be durable even through rapid technological advancement, and try to improve these as much as possible before transformative AI is developed. (These could include things like a better social safety net suited to high, sustained unemployment rates; better regulations aimed at avoiding bias; etc.)

  - Speed of development. Maybe human society just isn’t likely to adapt well to rapid, radical advances in science and technology, and finding a way to limit the pace of advances would be good.

- Finally, I’ll discuss how I’m thinking about which of these issues to prioritize at the moment, and why misaligned AI is such a focus of mine.

- An appendix will say a small amount about whether the long-run future seems likely to be better or worse than today, in terms of quality of life, assuming we navigate the above issues non-amazingly but non-catastrophically.

The kinds of issues I’m trying to list

One basic angle you could take on AI is:

“AI’s main effect will be to speed up science and technology a lot. This means humans will be able to do all the things they were doing before - the good and the bad - but more/faster. So basically, we’ll end up with the same future we would’ve gotten without AI - just sooner.

“Therefore, there’s no need to prepare in advance for anything in particular, beyond what we’d do to work toward a better future normally (in a world with no AI). Sure, lots of weird stuff could happen as science and technology advance - but that was already true, and many risks are just too hard to predict now and easier to respond to as they happen.”

I don’t agree with the above, but I do think it’s a good starting point. I think we shouldn’t be listing everything that might happen in the future, as AI leads to advances in science and technology, and trying to prepare for it. Instead, we should be asking: “if transformative AI is coming in the next few decades, how does this change the picture of what we should be focused on, beyond just speeding up what’s going to happen anyway?”

And I’m going to try to focus on extremely high-stakes issues - ways I could imagine the future looking durably and dramatically different depending on how we navigate the development of transformative AI.

Below, I’ll list some candidate issues fitting these criteria.

Potential issues

Misaligned AI

I won’t belabor this possibility, because the last several pieces have been focused on it; this is just a quick reminder.

In a world without AI, the main question about the long-run future would be how humans will end up treating each other. But if powerful AI systems will be developed in the coming decades, we need to contend with the possibility that these AI systems will end up having goals of their own - and displacing humans as the species that determines how things will play out.

Why would AI "aim" to defeat humanity?

A previous piece argued that if today’s AI development methods lead directly to powerful enough AI systems, disaster is likely by default (in the absence of specific countermeasures).

In brief:

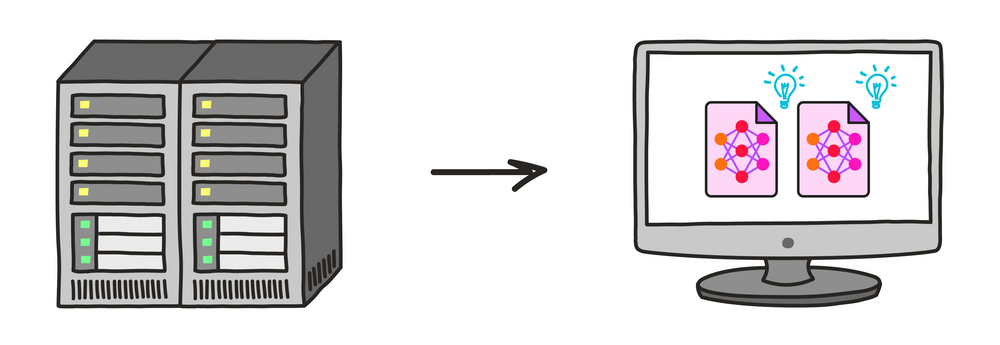

- Modern AI development is essentially based on “training” via trial-and-error.

- If we move forward incautiously and ambitiously with such training, and if it gets us all the way to very powerful AI systems, then such systems will likely end up aiming for certain states of the world (analogously to how a chess-playing AI aims for checkmate).

- And these states will be other than the ones we intended, because our trial-and-error training methods won’t be accurate. For example, when we’re confused or misinformed about some question, we’ll reward AI systems for giving the wrong answer to it - unintentionally training deceptive behavior.

- We should expect disaster if we have AI systems that are both (a) powerful enough to defeat humans and (b) aiming for states of the world that we didn’t intend. (“Defeat” means taking control of the world and doing what’s necessary to keep us out of the way; it’s unclear to me whether we’d be literally killed or just forcibly stopped[1] from changing the world in ways that contradict AI systems’ aims.)

How could AI defeat humanity?

In a previous piece, I argue that AI systems could defeat all of humanity combined, if (for whatever reason) they were aimed toward that goal.

By defeating humanity, I mean gaining control of the world so that AIs, not humans, determine what happens in it; this could involve killing humans or simply “containing” us in some way, such that we can’t interfere with AIs’ aims.

One way this could happen is if AI became extremely advanced, to the point where it had "cognitive superpowers" beyond what humans can do. In this case, a single AI system (or set of systems working together) could imaginably:

- Do its own research on how to build a better AI system, which culminates in something that has incredible other abilities.

- Hack into human-built software across the world.

- Manipulate human psychology.

- Quickly generate vast wealth under the control of itself or any human allies.

- Come up with better plans than humans could imagine, and ensure that it doesn't try any takeover attempt that humans might be able to detect and stop.

- Develop advanced weaponry that can be built quickly and cheaply, yet is powerful enough to overpower human militaries.

However, my piece also explores what things might look like if each AI system basically has similar capabilities to humans. In this case:

- Humans are likely to deploy AI systems throughout the economy, such that they have large numbers and access to many resources - and the ability to make copies of themselves.

- From this starting point, AI systems with human-like (or greater) capabilities would have a number of possible ways of getting to the point where their total population could outnumber and/or out-resource humans.

- I address a number of possible objections, such as "How can AIs be dangerous without bodies?"

Power imbalances

I’ve argued that AI could cause a dramatic acceleration in the pace of scientific and technological advancement.

How AI could cause explosive progress

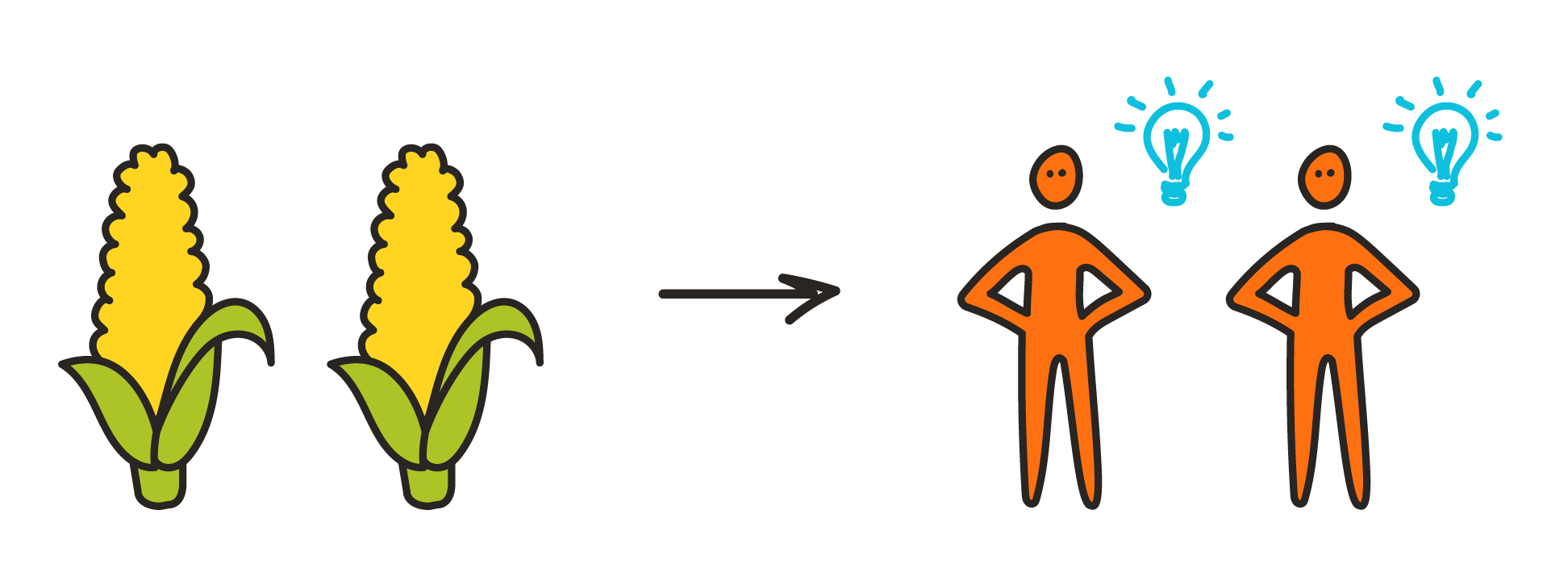

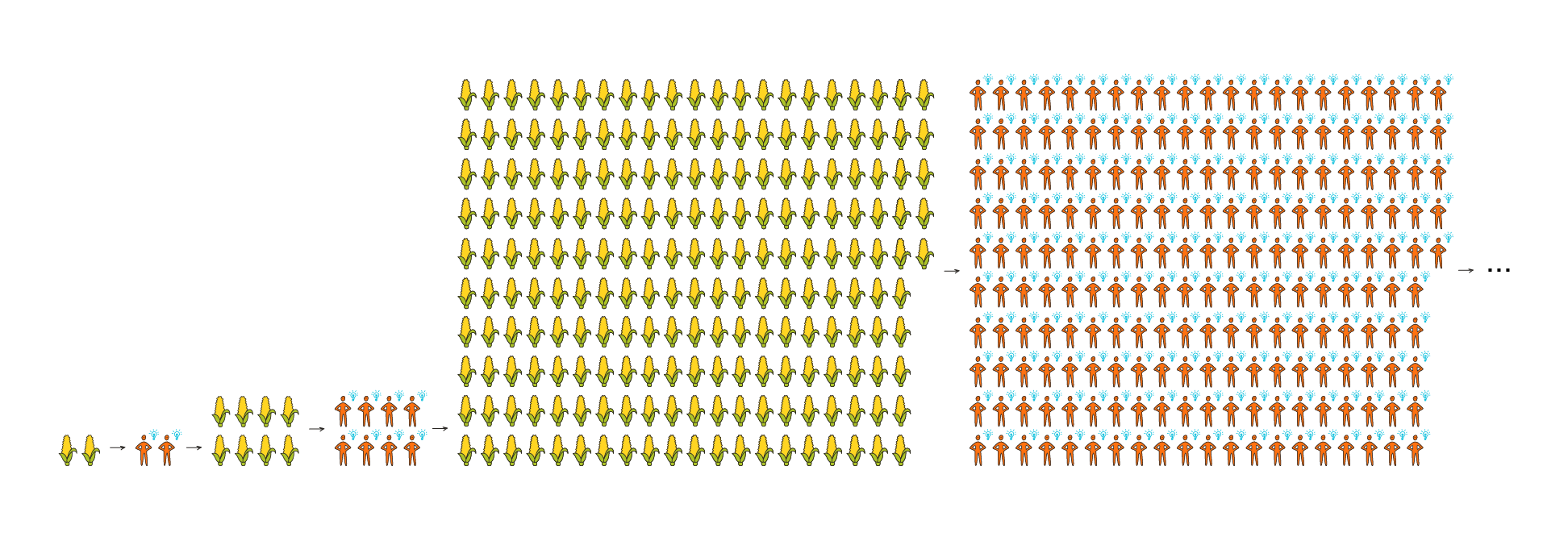

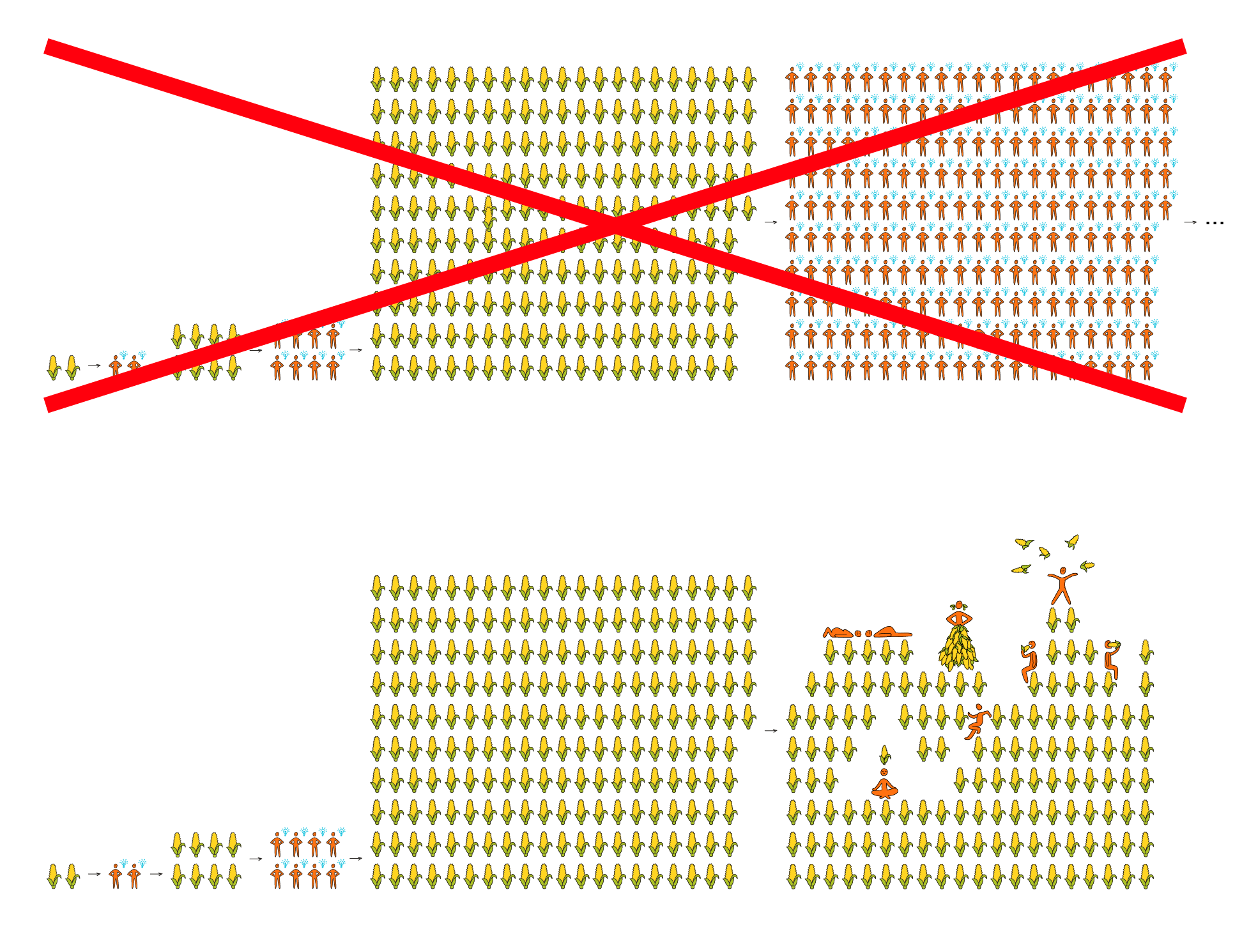

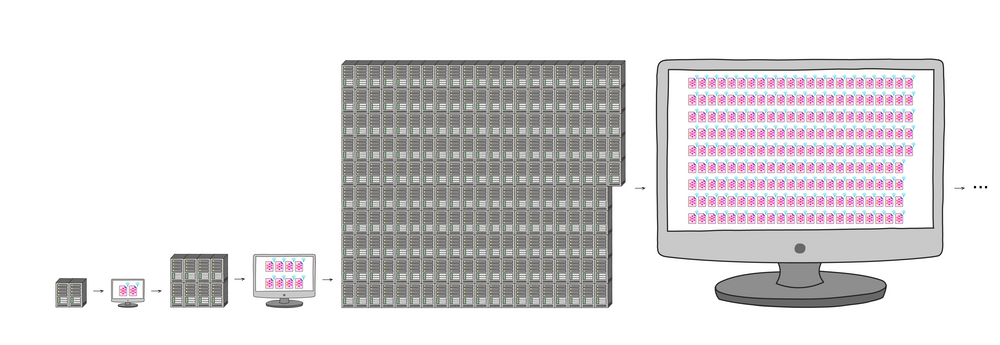

(This section is mostly copied from my summary of the "most important century" series; it links to some pieces with more detail at the bottom.)

Standard economic growth models imply that any technology that could fully automate innovation would cause an "economic singularity": productivity going to infinity this century. This is because it would create a powerful feedback loop: more resources -> more ideas and innovation -> more resources -> more ideas and innovation ...

This loop would not be unprecedented. I think it is in some sense the "default" way the economy operates - for most of economic history up until a couple hundred years ago.

But in the "demographic transition" a couple hundred years ago, the "more resources -> more people" step of that loop stopped. Population growth leveled off, and more resources led to richer people instead of more people.

The feedback loop could come back if some other technology restored the "more resources -> more ideas" dynamic. One such technology could be the right kind of AI: what I call PASTA, or Process for Automating Scientific and Technological Advancement.

That means that our radical long-run future could be upon us very fast after PASTA is developed (if it ever is).

It also means that if PASTA systems are misaligned - pursuing their own non-human-compatible objectives - things could very quickly go sideways.
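The feedback loop described above can be illustrated with a toy simulation. This is a minimal sketch under assumptions of my own choosing, not the growth models the series refers to: resources are modeled as ideas times people, new ideas arrive in proportion to the number of people, and (when the loop is switched on) population grows in proportion to resources. The function name `growth_rates`, the rate constant `c`, and the stopping `cap` are all hypothetical and exist only for illustration.

```python
def growth_rates(feedback, c=0.05, max_steps=500, cap=1e6):
    """Return the per-step growth rate of resources in a toy economy.

    Illustrative assumptions (not from any cited model):
      - resources = ideas * people
      - ideas increase in proportion to the number of people
      - if feedback is True, people increase in proportion to resources
        ("more resources -> more people"); otherwise population is fixed
    """
    ideas, people = 1.0, 1.0
    rates = []
    for _ in range(max_steps):
        resources = ideas * people
        if resources > cap:
            # Stop once the toy economy has grown a millionfold.
            break
        new_ideas = ideas + c * people
        new_people = people + c * resources if feedback else people
        rates.append(new_ideas * new_people / resources - 1.0)
        ideas, people = new_ideas, new_people
    return rates


with_loop = growth_rates(feedback=True)
without_loop = growth_rates(feedback=False)

for label, rates in (("loop on", with_loop), ("loop off", without_loop)):
    print(f"{label}: first rate {rates[0]:.4f}, last rate {rates[-1]:.4f}, steps {len(rates)}")
```

In this toy setup, the growth rate keeps rising while the loop is on and drifts toward zero once population is held fixed. That is only the qualitative contrast the text describes; the specific numbers carry no empirical weight.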

One way of thinking about this: perhaps (for reasons I’ve argued previously) AI could enable the equivalent of hundreds of years of scientific and technological advancement in a matter of a few months (or faster). If so, then developing powerful AI a few months before others could lead to having technology that is (effectively) hundreds of years ahead of others’.

Because of this, it’s easy to imagine that AI could lead to big power imbalances, as whatever country/countries/coalitions “lead the way” on AI development could become far more powerful than others (perhaps analogously to when a few smallish European states took over much of the rest of the world).

One way we might try to make the future go better: maybe it could be possible for different countries/coalitions to strike deals in advance. For example, two equally matched parties might agree in advance to share their resources, territory, etc. with each other, in order to avoid a winner-take-all competition.

What might such agreements look like? Could they possibly be enforced? I really don’t know, and I haven’t seen this explored much.[1]

Another way one might try to make the future go better is to try to help a particular country, coalition, etc. develop powerful AI systems before others do. I previously called this the “competition” frame.

I think it is, in fact, enormously important who leads the way on transformative AI. At the same time, I’ve expressed concern that people might overfocus on this aspect of things vs. other issues, for a number of reasons including:

- I think people naturally get more animated about "helping the good guys beat the bad guys" than about "helping all of us avoid getting a universally bad outcome, for impersonal reasons such as 'we designed sloppy AI systems' or 'we created a dynamic in which haste and aggression are rewarded.'"

- I expect people will tend to be overconfident about which countries, organizations or people they see as the "good guys."

(More here.)

Finally, it’s worth mentioning the possible dangers of powerful AI being too widespread, rather than too concentrated. In The Vulnerable World Hypothesis, Nick Bostrom contemplates potential future dynamics such as “advances in DIY biohacking tools might make it easy for anybody with basic training in biology to kill millions.” In addition to avoiding worlds where AI capabilities end up concentrated in the hands of a few, it could also be important to avoid worlds in which they diffuse too widely, too quickly, before we’re able to assess the risks of widespread access to technology far beyond today’s.

Early applications of AI

Maybe advanced AI will be useful for some sorts of tasks before others. For example, maybe - by default - advanced AI systems will soon be powerful persuasion tools, and cause wide-scale societal dysfunction before they cause rapid advances in science and technology. And maybe, with effort, we could make it less likely that this happens - more likely that early AI systems are used for education and truth-seeking, rather than manipulative persuasion and/or entrenching what we already believe.

There could be lots of possibilities of this general form: particular ways in which AI could be predictably beneficial, or disruptive, before it becomes an all-purpose accelerant to science and technology. Perhaps trying to map these out today, and push for advanced AI to be used for particular purposes early on, could have a lasting effect on the future.

New life forms

Advanced AI could lead to new forms of intelligent life, such as AI systems themselves and/or digital people.

Digital people: one example of how wild the future could be

In a previous piece, I tried to give a sense of just how wild a future with advanced technology could be, by examining one hypothetical technology: "digital people."

To get the idea of digital people, imagine a computer simulation of a specific person, in a virtual environment. For example, a simulation of you that reacts to all "virtual events" - virtual hunger, virtual weather, a virtual computer with an inbox - just as you would.

I’ve argued that digital people would likely be conscious and deserving of human rights just as we are. And I’ve argued that they could have major impacts, in particular:

- Productivity. Digital people could be copied, just as we can easily make copies of ~any software today. They could also be run much faster than humans. Because of this, digital people could have effects comparable to those of the Duplicator, but more so: unprecedented (in history or in sci-fi movies) levels of economic growth and productivity.

- Social science. Today, we see a lot of progress on understanding scientific laws and developing cool new technologies, but not so much progress on understanding human nature and human behavior. Digital people would fundamentally change this dynamic: people could make copies of themselves (including sped-up, temporary copies) to explore how different choices, lifestyles and environments affected them. Comparing copies would be informative in a way that current social science rarely is.

- Control of the environment. Digital people would experience whatever world they (or the controller of their virtual environment) wanted. Assuming digital people had true conscious experience (an assumption discussed in the FAQ), this could be a good thing (it should be possible to eliminate disease, material poverty and non-consensual violence for digital people) or a bad thing (if human rights are not protected, digital people could be subject to scary levels of control).

- Space expansion. The population of digital people might become staggeringly large, and the computers running them could end up distributed throughout our galaxy and beyond. Digital people could exist anywhere that computers could be run - so space settlements could be more straightforward for digital people than for biological humans.

- Lock-in. In today's world, we're used to the idea that the future is unpredictable and uncontrollable. Political regimes, ideologies, and cultures all come and go (and evolve). But a community, city or nation of digital people could be much more stable.

  - Digital people need not die or age.

  - Whoever sets up a "virtual environment" containing a community of digital people could have quite a bit of long-lasting control over what that community is like. For example, they might build in software to reset the community (both the virtual environment and the people in it) to an earlier state if particular things change - such as who's in power, or what religion is dominant.

  - I consider this a disturbing thought, as it could enable long-lasting authoritarianism, though it could also enable things like permanent protection of particular human rights.

I think these effects could be a very good or a very bad thing. How the early years with digital people go could irreversibly determine which.

Many of the frameworks we’re used to, for ethics and the law, could end up needing quite a bit of rethinking for new kinds of entities. For example:

- How should we determine which AI systems or digital people are considered to have “rights” and get legal protections?

- What about the right to vote? If an AI system or digital person can be quickly copied billions of times, with each copy getting a vote, that could be a recipe for trouble - does this mean we should restrict copying, restrict voting or something else?

- What should the rules be about engineering AI systems or digital people to have particular beliefs, motivations, experiences, etc.? Simple examples:

  - Should it be illegal to create new AI systems or digital people that will predictably suffer a lot? How much suffering is too much?

  - What about creating AI systems or digital people that consistently, predictably support some particular political party or view?

(For a lot more in this vein, see this very interesting piece by Nick Bostrom and Carl Shulman.)

Early decisions about these kinds of questions could have long-lasting effects. For example, imagine someone creating billions of AI systems or digital people that have capabilities and subjective experiences comparable to humans, and are deliberately engineered to “believe in” (or at least help promote) some particular ideology (Communism, libertarianism, etc.). If these systems are self-replicating, that could change the future drastically.

Thus, it might be important to set good principles in place for tough questions about how to treat new sorts of digital entities, before new sorts of digital entities start to multiply.

Persistent policies and norms

There might be particular policies, norms, etc. that are likely to stay persistent even as technology is advancing and many things are changing.

For example, how people think about ethics and norms might just inherently change more slowly than technological capabilities change. Perhaps a society that had strong animal rights protections, and general pro-animal attitudes, would maintain these properties all the way through explosive technological progress, becoming a technologically advanced society that treated animals well - while a society that had little regard for animals would become a technologically advanced society that treated animals poorly. Similar analysis could apply to religious values, social liberalism vs. conservatism, etc.

So perhaps we ought to be identifying particularly important policies, norms, etc. that seem likely to be durable even through rapid technological advancement, and try to improve these as much as possible before transformative AI is developed.

One tangible example of a concern I’d put in this category: if AI is going to cause high, persistent technological unemployment, it might be important to establish new social safety net programs (such as universal basic income) today - if these programs would be easier to establish today than in the future. I feel less than convinced of this one - first because I have some doubts about how big an issue technological unemployment is going to be, and second because it’s not clear to me why policy change would be easier today than in a future where technological unemployment is a reality. And more broadly, I fear that it's very hard to design and (politically) implement policies today that we can be confident will make things durably better as the world changes radically.

Slow it down?

I’ve named a number of ways in which weird things - such as power imbalances, and some parts of society changing much faster than others - could happen as scientific and technological advancement accelerates. Maybe one way to make the most important century go well would be to simply avoid these weird things by avoiding too-dramatic acceleration. Maybe human society just isn’t likely to adapt well to rapid, radical advances in science and technology, and finding a way to limit the pace of advances would be good.

Any individual company, government, etc. has an incentive to move quickly and try to get ahead of others (or not fall too far behind), but coordinated agreements and/or regulations (along the lines of the “global monitoring” possibility discussed here) could help everyone move more slowly.

What else?

Are there other ways in which transformative AI would cause particular issues, risks, etc. to loom especially large, and to be worth special attention today? I’m guessing I’ve only scratched the surface here.

What I’m prioritizing, at the moment

If this is the most important century, there’s a vast set of things to be thinking about and trying to prepare for, and it’s hard to know what to prioritize.

Where I’m at for the moment:

It seems very hard to say today what will be desirable in a radically different future. I wish more thought and attention were going into things like early applications of AI; norms and laws around new life forms; and whether there are policy changes today that we could be confident in even if the world is changing rapidly and radically. But it seems to me that it would be very hard to be confident in any particular goal in areas like these. Can we really say anything today about what sorts of digital entities should have rights, or what kinds of AI applications we hope come first, that we expect to hold up?

I feel most confident in two very broad ideas: “It’s bad if AI systems defeat humanity to pursue goals of their own” and “It’s good if good decision-makers end up making the key decisions.” These map to the misaligned AI and power imbalance topics - or what I previously called caution and competition.

That said, it also seems hard to know who the “good decision-makers” are. I’ve definitely observed some of this dynamic: “Person/company A says they’re trying to help the world by aiming to build transformative AI before person/company B; person/company B says they’re trying to help the world by aiming to build transformative AI before person/company A.”

It’s pretty hard to come up with tangible tests of who’s a “good decision-maker.” We mostly don’t know what person A would do with enormous power, or what person B would do, based on their actions today. One possible criterion is that we should arguably have more trust in people/companies who show more caution - people/companies who show willingness to hurt their own chances of “being in the lead” in order to help everyone’s chance of avoiding a catastrophe from misaligned AI.[2]

(Instead of focusing on which particular people and/or companies lead the way on AI, you could focus on which countries do, e.g. preferring non-authoritarian countries. It’s arguably pretty clear that non-authoritarian countries would be better than authoritarian ones. However, I have concerns about this as a goal as well, discussed in a footnote.[3])

For now, I am most focused on the threat of misaligned AI. Some reasons for this:

- It currently seems to me that misaligned AI is a significant risk. Misaligned AI seems likely by default if we don’t specifically do things to prevent it, and preventing it seems far from straightforward (see previous posts on the difficulty of alignment research and why it could be hard for key players to be cautious).

- At the same time, it seems like there are significant hopes for how we might avoid this risk. As argued here and here, my sense is that the more broadly people understand this risk, the better our odds of avoiding it.

- I currently feel that this threat is underrated, relative to the easier-to-understand angle of “I hope people I like develop powerful AI systems before others do.”

- I think the “competition” frame - focusing on helping some countries/coalitions/companies develop advanced AI before others - makes quite a bit of sense as well. But - as noted directly above - I have big reservations about the most common “competition”-oriented actions, such as trying to help particular companies outcompete others or trying to get U.S. policymakers more focused on AI.

  - For the latter, I worry that this risks making huge sacrifices on the “caution” front and even backfiring by causing other governments to invest in projects of their own.

  - For the former, I worry about the ability to judge “good” leadership, and the temptation to overrate people who resemble oneself.

This is all far from absolute. I’m open to a broad variety of projects to help the most important century go well, whether they’re about “caution,” “competition” or another issue (including those I’ve listed in this post). My top priority at the moment is reducing the risks of misaligned AI, but I think a huge range of potential risks aren’t getting enough attention from the world at large.

Appendix: if we avoid catastrophic risks, how good does the future look?

Here I’ll say a small amount about whether the long-run future seems likely to be better or worse than today, in terms of quality of life.

Part of why I want to do this is to give a sense of why I feel cautiously and moderately optimistic about such a future - such that I feel broadly okay with a frame of “We should try to prevent anything too catastrophic from happening, and figure that the future we get if we can pull that off is reasonably likely (though far from assured!) to be good.”

So I’ll go through some quick high-level reasons for hope (the future might be better than the present) - and for concern (it might be worse).

In this section, I’m ignoring the special role AI might play, and just thinking about what happens if we get a fast-forwarded future. I’ll be focusing on what I think are probably the most likely ways the world will change in the future, laid out here: a higher world population and greater empowerment due to a greater stock of ideas, innovations and technological capabilities. My aim is to ask: “If we navigate the above issues neither amazingly nor catastrophically, and end up with the same sort of future we’d have had without AI (just sped up), how do things look?”

Reason for hope: empowerment trends. One simple take would be: “Life has gotten better for humans[4] over the last couple hundred years or so, the period during which we’ve seen most of history’s economic growth and technological progress. We’ve seen better health, less poverty and hunger, less violence, more anti-discrimination measures, and few signs of anything getting clearly worse. So if humanity just keeps getting more and more empowered, we should expect continued improvement on these dimensions, and on other dimensions too.” This could be partly explained by something like the following dynamic:

- Most people would - aspirationally - like to be nonviolent, compassionate, generous and fair, if they could do so without sacrificing other things.

- As empowerment rises, the need to make sacrifices falls (noisily and imperfectly) across the board.

- This dynamic may have led to some (noisy, imperfect) improvement to date, but there might be much more benefit in the future compared to the past. For example, if we see a lot of progress on social science, we might get to a world where people understand their own needs, desires and behavior better - and thus can get most or all of what they want (from material needs to self-respect and happiness) without having to outcompete or push down others.[5]

Reason for hope: the “cheap utopia” possibility. This is sort of an extension of the previous point. If we imagine the upper limit of how “empowered” humanity could be (in terms of having lots of technological capabilities), it might be relatively easy to create a kind of utopia (such as the utopia I’ve described previously, or hopefully something much better). This doesn’t guarantee that such a thing will happen, but a future where it’s technologically easy to do things like meeting material needs and providing radical choice could be quite a bit better than the present.

An interesting (wonky) treatment of this idea is Carl Shulman’s blog post: Spreading happiness to the stars seems little harder than just spreading.

Reason for concern: authoritarianism. There are some huge countries that are essentially ruled by one person, with little to no democratic or other mechanisms for citizens to have a voice in how they’re treated. It seems like a live risk that the world could end up this way - essentially ruled by one person or a relatively small coalition - in the long run. (It arguably would even continue a historical trend in which political units have gotten larger and larger.)

Maybe this would be fine if whoever’s in charge is able to let everyone have freedom, wealth, etc. at little cost to themselves (along the lines of the above point). But maybe whoever’s in charge is just a crazy or horrible person, in which case we might end up with a bad future even if it would be “cheap” to have a wonderful one.

Reason for concern: competitive dynamics. You might imagine that as empowerment advances, we get purer, more unrestrained competition.

One way of thinking about this:

- Today, no matter how ruthless CEOs are, they tend to accommodate some amount of leisure time for their employees. That’s because businesses have no choice but to hire people who insist on working a limited number of hours, having a life outside of work, etc.

- But if we had advanced enough technology, it might be possible to run a business whose employees have zero leisure time. (One example would be via digital people and the ability to make lots of copies of highly productive people just as they’re about to get to work. A more mundane example would be if e.g. advanced stimulants and other drugs were developed so people could be productive without breaks.)

- And that might be what the most productive businesses, organizations, etc. end up looking like - the most productive organizations might be the ones that most maniacally and uncompromisingly use all of their resources to acquire more resources. Those could be precisely the organizations that end up filling most of the galaxy.

- More at this Slate Star Codex post. Key quote: “I’m pretty sure that brutal … competition combined with ability to [copy and edit] minds necessarily results in paring away everything not directly maximally economically productive. And a lot of things we like – love, family, art, hobbies – are not directly maximally economic productive.”

That said:

- It’s not really clear how this ultimately shakes out. One possibility is something like this:

    - Lots of people, or perhaps machines, compete ruthlessly to acquire resources. But this competition is (a) legal, subject to a property rights system; (b) ultimately for the benefit of the investors in the competing companies/organizations.

    - Who are these investors? Well, today, many of the biggest companies are mostly owned by large numbers of individuals via mutual funds. The same could be true in the future - and those individuals could be normal people who use the proceeds for nice things.

- If the “cheap utopia” possibility (described above) comes to pass, it might only take a small amount of spare resources to support a lot of good lives.

Overall, my guess is that the long-run future is more likely to be better than the present than worse than the present (in the sense of average quality of life). I’m very far from confident in this. I’m more confident that the long-run future is likely to be better than nothing, and that it would be good to prevent human extinction or a similar development, such as a takeover by misaligned AI.

- A couple of discussions of the prospects for enforcing agreements here and here. ↩

- I’m reminded of the judgment of Solomon: “two mothers living in the same house, each the mother of an infant son, came to Solomon. One of the babies had been smothered, and each claimed the remaining boy as her own. Calling for a sword, Solomon declared his judgment: the baby would be cut in two, each woman to receive half. One mother did not contest the ruling, declaring that if she could not have the baby then neither of them could, but the other begged Solomon, ‘Give the baby to her, just don't kill him!’ The king declared the second woman the true mother, as a mother would even give up her baby if that was necessary to save its life, and awarded her custody.”

The sword is misaligned AI and the baby is humanity or something.

(This story is actually extremely bizarre - seriously, Solomon was like “You each get half the baby”?! - and some similar stories from India/China seem at least a bit more plausible. But I think you get my point. Maybe.) ↩

- For a tangible example, I’ll discuss the practice (which some folks are engaged in today) of trying to ensure that the U.S. develops transformative AI before another country does, by arguing for the importance of AI to U.S. policymakers.

This approach makes me quite nervous, because:

- I expect U.S. policymakers by default to be very oriented toward “competition” to the exclusion of “caution.” (This could change if the importance of caution becomes more widely appreciated!)

- I worry about a nationalized AI project that (a) doesn’t exercise much caution at all, focusing entirely on racing ahead of others; (b) might backfire by causing other countries to go for nationalized projects of their own, inflaming an already tense situation and not even necessarily doing much to make it more likely that the U.S. leads the way. In particular, other countries might have an easier time quickly mobilizing huge amounts of government funding than the U.S., such that the U.S. might have better odds if it remains the case that most AI research is happening at private companies.

(There might be ways of helping particular countries without raising the risks of something like a low-caution nationalized AI project, and if so these could be important and good.) ↩

- Not for animals, though see this comment for some reasons we might not consider this a knockdown objection to the “life has gotten better” claim. ↩

- This is only a possibility. It’s also possible that humans deeply value being better-off than others, which could complicate things quite a bit. (Personally, I feel somewhat optimistic that a lot of people would aspirationally prefer to focus on their own welfare rather than comparing themselves to others - so if knowledge advanced to the point where people could choose to change in this way, I feel optimistic that at least many would do so.) ↩