Are you an effective altruist or an altruistic rationalist (also known as avant-garde effective altruism)? It's like milk chocolate versus chocolate milk: The second word is the main thing. Take this fun quiz to find out where you are on the spectrum!

Your friend decides to donate money to the top GiveWell charities instead of paying tips. They live in Canada, where the minimum wage is about $11 USD. You think this is a good idea.

1 point - Strongly agree

2 points - Mostly agree

3 points - Neither agree nor disagree

4 points - Mostly disagree

5 points - Strongly disagree

How often do you tell people your epistemic status?

1 point - Epi-what?

2 points - Never, but I know what it means

3 points - 1-3 times in my life

4 points - Multiple times in the past year

5 points - Multiple times in the past month

When you decide how to spend your time contributing to EA, do you think about how you can have a positive impact on the most lives, present or future, human or otherwise? Or do you choose what to do based on the communities you have access to, and your skill set?

1 point - Always choose to maximize lives, present or future, human or otherwise

2 points - Mostly choose to maximize lives, present or future, human or otherwise

3 points - I'm 50/50

4 points - Mostly choose based on my communities and skill set

5 points - Always choose based on my communities and skill set

People will eventually become digital beings and it will be easy to be happy, which means that we should make as many digital people as possible, to maximize utility.

1 point - Strongly agree

2 points - Mostly agree

3 points - Neither agree nor disagree

4 points - Mostly disagree

5 points - This is too hypothetical to be worth thinking seriously about

You care about and donate to causes that you know don't give good ROI in terms of suffering reduced (though you might consider the effectiveness of different organizations within that cause area).

5 points - Yes

4 points - I do but I feel kind of guilty about it

3 points - I do but not very intentionally, like if my cousin asks me to donate for their marathon for AIDS

2 points - I try not to

1 point - No

Sometimes you have to remind yourself not to think too hard about an AI that would torture you.

1 point - What

2 points - I know what this is about, and I think it's dumb

3 points - Neutral

4 points - Agree

5 points - This gives me anxiety

A bill would increase the well-being of many chickens, but decrease the well-being of some farmers. Mathematicians you trust estimate, with high confidence, that the increase in the chickens' well-being is worth two to four times as much as the decrease in the farmers' well-being. You support the bill.

1 point - Strongly agree

2 points - Mostly agree

3 points - Neither agree nor disagree

4 points - Mostly disagree

5 points - Strongly disagree

How often do you worry about not doing the right thing instead of actually doing a thing?

5 points - Always

4 points - Usually

3 points - Sometimes

2 points - Not often

1 point - Never

___________________

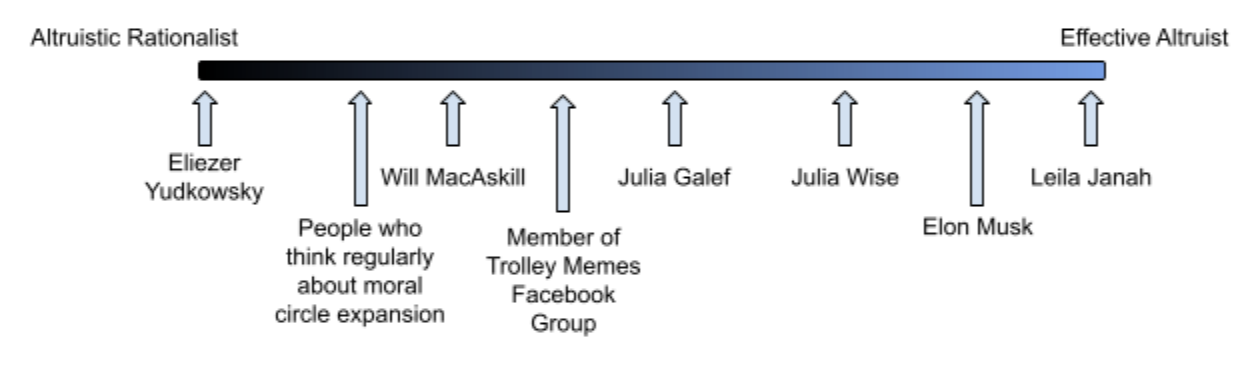

Add up your score! Scores range from 8 to 40, placing you somewhere on this line graph:

Score 8-14: Eliezer Yudkowsky

100% altruistic rationalist

You sometimes have dreams where you're arguing with someone on the LessWrong forum, because you do it so much in real life. You're an independent thinker and not afraid to be contrarian, garnering you lots of respect for your thought leadership. You think a lot about AI safety because it's been mathematically calculated to be likely to save a lot of lives (of people who don't exist yet), and also because it lets you exercise your big brain.

Score 15-21: Will MacAskill

30% effective altruist, 70% altruistic rationalist

You are really good at connecting with the average person about the benefits of effective charitable giving. But what really makes you tick is academic exploration of the more extreme philosophical implications of effective altruism, such as catastrophic risks and other longtermist concepts. You wish that the movement wasn't moving in the direction of a thousand shitty AI researchers, but you're not sure what to do about it.

Score 22-27: Julia Galef

50% effective altruist, 50% altruistic rationalist

Whether it's your PhD or the book that you're writing, you often look at what you've been working on, become skeptical, and re-derive it all from first principles to root out bias. The trait you find the most attractive is the willingness to publicly admit to being wrong. You care as much about striving for correctness as you do about having a positive impact with your work, and you hope to help others make the best decisions and arrive at the best outcomes.

Score 28-34: Julia Wise

80% effective altruist, 20% altruistic rationalist

After thinking for years about how to use your time and money to do the most good, you've arrived at a place where you can give a significant part of your income or hours to effective causes, while still prioritizing things that give you joy. You invest yourself in family, friends, hobbies, and even doing things that are good but not the most effective, because these things are restorative and make you happy. It's always something you have to be conscious of and continually work on, but you know that you can contribute the most effectively in the long term when you do it while being cheerful and mentally balanced.

Score 35-40: Leila Janah

100% effective altruist

You were onto 'growth and the case against randomista development' before anyone else. You didn't read too much into what the philosophers and mathematicians were saying about how to do the most good -- you just followed your heart and dove right in. It's always been obvious to you that finding ways for people who don't have many opportunities to make more money would empower them to improve the quality of life for themselves and their communities. You are entrepreneurial, extremely hardworking, and highly empathetic. Your authenticity and drive make you a natural leader. The people you help might not know your name, but they know the organizations you run and the impact you've had on their lives.

Which personality result did you get? Is any of it accurate?? Post in the comments below!

Also, if you are one of the people I named and want me to correct something, I will do it! Just comment or DM me. I hope I am providing enough comedic value to justify a little roasting...