Highlights

- Facebook's Forecast folds

- Hedgehog Markets now operational

- Polygon and Augur announce a $1M liquidity rewards program

Index

- Prediction Markets & Forecasting Platforms

- Blog Posts

- Long Content

- In The News

Sign up here or browse past newsletters here.

Prediction Markets & Forecasting Platforms

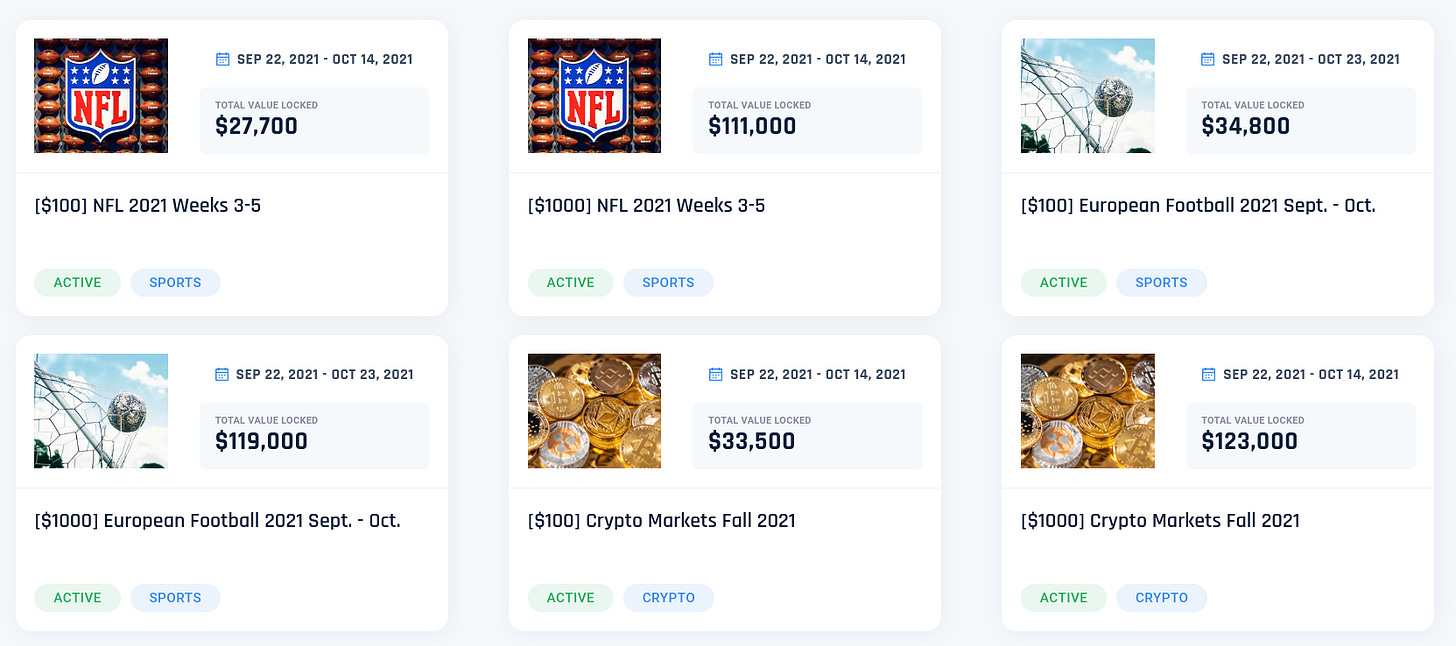

Hedgehog Markets

Hedgehog Markets (a) launches on the Solana mainnet, meaning that its prediction markets are now open to real-money bets. A brief explanation of how its no-loss competitions work and why they're interesting can be found in the July edition of this newsletter (a). Its first competition offers $100k in prizes (a).

Hedgehog's first markets focus on sports and on the prices of various crypto assets. But its no-loss model is uniquely suited to longer-term markets, so I'll be watching out for those.

Metaculus

SimonM (a) kindly curated the top comments from Metaculus this past August. They are:

- RyanBeck (a) points out some shortcomings in Arnold Kling's fortified essay on Two Theories of Inflation

- Charles (a) looks at Macron's re-election chances in 2022

- SimonM (a) calculates a base rate for US government defaults linked to the debt ceiling using Laplace's law

- ugandamaximum (a) looks at Trump's electoral prospects in 2024

- Comments on this question (a) wrangle with how to measure AI damage

- galen (a) makes a well-informed comment on What will be the Hue (in angular degrees) of Pantone's Color of the Year for 2022? (a)
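"Laplace's law" in this kind of base-rate estimate is Laplace's rule of succession: after observing s occurrences in n trials, estimate the chance of an occurrence on the next trial as (s + 1) / (n + 2). A minimal sketch follows; the counts in the example are hypothetical placeholders, not SimonM's actual inputs.

```python
from fractions import Fraction


def rule_of_succession(successes: int, trials: int) -> Fraction:
    """Laplace's rule of succession: P(next trial is a success) = (s + 1) / (n + 2)."""
    if trials < 0 or not 0 <= successes <= trials:
        raise ValueError("need 0 <= successes <= trials")
    return Fraction(successes + 1, trials + 2)


# Hypothetical example: zero defaults observed across 10 debt-ceiling episodes.
print(rule_of_succession(0, 10))  # 1/12
```

The +1/+2 terms keep the estimate away from 0 and 1 when few or no events have been observed, which is why the rule is popular for rare-event base rates.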

Metaculus is advertising for various job openings (a): Lead Developer, Senior NLP/Machine Learning Engineer, Effective Altruism Question Author, and “analytical storyteller” (a).

Polymarket

Polymarket writes about Why You Should Get Your News From the Blockchain (a) in one of the largest crypto newsletters. Meanwhile, Polymarket keeps experiencing outages because of problems with The Graph, one of the services it uses to connect its web page with its blockchain contracts.

Last month, I lost a small amount of money following forecasts from Star Spangled Gamblers (a) and Karlstack (a), respectively on Britney Spears' conservatorship and August US inflation numbers. While I still find both sources very entertaining, I probably won't be following their lead on Polymarket in the future.

Hypermind

Hypermind launches another tournament: Forecast Covid-19 Mortality Worldwide (a), with $15,000 in total rewards.

Hypermind was also featured in Le Point (a), a large French magazine, in a piece on France's upcoming presidential election. Per the article, Marine Le Pen’s chances aren’t as high as those of a more entertaining far-right candidate, Éric Zemmour (a). h/t MonsieurDimanche.

Odds and ends

Polygon and Augur (Turbo) announce a $1M liquidity rewards program (a).

The program incentivizes liquidity providers (LPs) through so-called liquidity mining, in which users of a decentralized finance (DeFi) product earn an additional token, on top of the regularly expected yield, just for putting assets into a liquidity pool. In return, the added liquidity helps bootstrap user adoption and keeps Augur Turbo running smoothly. Users can earn rewards by providing liquidity to every side of a bet on the platform.

This is interesting because providing liquidity is one of the most fickle and unprofitable parts of running a prediction market. Historically, Polymarket, which is also on the Polygon chain, has been setting large amounts of its money on fire providing liquidity to its own markets.
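The announcement doesn't spell out Augur Turbo's reward formula here. A common simplification of liquidity mining is to split a fixed reward budget among LPs in proportion to the liquidity each supplied; the sketch below shows only that generic pro-rata idea, and the LP names and amounts are hypothetical. Real programs typically also weight by how long liquidity stays in the pool.

```python
def pro_rata_rewards(deposits: dict[str, float], reward_pool: float) -> dict[str, float]:
    """Split a fixed reward pool among LPs in proportion to the liquidity each supplied.

    Generic illustration only; not Augur Turbo's actual distribution rules.
    """
    total = sum(deposits.values())
    if total <= 0:
        # Nobody supplied liquidity, so nobody earns rewards.
        return {lp: 0.0 for lp in deposits}
    return {lp: reward_pool * amount / total for lp, amount in deposits.items()}


# Hypothetical LPs and amounts, for illustration only.
print(pro_rata_rewards({"alice": 100.0, "bob": 300.0}, 1000.0))
# {'alice': 250.0, 'bob': 750.0}
```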

Augur Turbo is also setting their trading fees to 0% (a).

Facebook's Forecast (a), an experimental website and iPhone app from Facebook’s New Product Experimentation division, folds (a). Second-hand sources speculate that this was because of the community's poor performance. Forecast was previously announced on LessWrong here. h/t @dglid, Michał Dubrawski.

PredictIt Arbitrage calculator (a) fetches the PredictIt markets in which one can make money no matter the outcome, because the share prices don't sum to exactly 100%. If I'm reading it correctly, there aren't any salient opportunities right now.
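The arithmetic behind such a calculator can be sketched as follows, assuming a market whose contracts are mutually exclusive and exhaustive (exactly one resolves Yes) and each winning share pays $1. Buying one Yes share of every contract pays exactly $1; buying one No share of every contract pays n - 1 dollars. The prices below are hypothetical, and PredictIt's fees (a cut of profits and of withdrawals) are ignored, so real margins would be smaller or negative.

```python
def guaranteed_profit(yes_asks: list[float], no_asks: list[float]) -> dict[str, float]:
    """Per-set profit, in dollars and before fees, of buying one share of every contract.

    Assumes exactly one contract resolves Yes and the other n - 1 resolve No.
    """
    n = len(yes_asks)
    if n < 2 or len(no_asks) != n:
        raise ValueError("need at least two contracts with matching Yes/No prices")
    return {
        "buy_all_yes": 1.0 - sum(yes_asks),    # exactly one Yes share pays $1
        "buy_all_no": (n - 1) - sum(no_asks),  # n - 1 No shares pay $1 each
    }


# Hypothetical three-candidate market, prices in dollars per share.
result = guaranteed_profit(yes_asks=[0.55, 0.30, 0.20], no_asks=[0.47, 0.72, 0.78])
print(result)  # buy_all_no is about +$0.03 per set; buy_all_yes is negative
```

A positive value for either strategy is a risk-free profit before fees; a real calculator would also need to check order-book depth at those prices.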

Meanwhile in the corporate world, Amazon is searching for a Network Forecasting and Planning (a) Program Manager, with "Proficiency in MS Excel."

Blog Posts

John Cochrane (a) writes Climate Policy Should Pay More Attention to Climate Economics (a):

But the best guesses of the economic impact of climate change are surprisingly small. The U.N.’s IPCC finds that a (large) temperature rise of 3.66°C by 2100 means a loss of 2.6 percent of global GDP. Even extreme assumptions about climate and lack of mitigation or adaptation strain to find a cost greater than 5 percent of GDP by the year 2100.

Now, 5 percent of GDP is a lot of money — $1 trillion of our $20 trillion GDP today. But 5 percent of GDP in 80 years is couch change in the annals of economics.

[...]

For a small donation, pictures of cuddly animals might do. For trillion-dollar costs and regulations, they do not. To justify such costs, we need some dollar value on specific environmental damage of climate change. Yes, the numbers are uncertain. But those numbers are the only sensible framework to discuss spending trillions of dollars on climate now.

I'll file this under "big if true". For what it's worth, the 2.6% of GDP figure is indeed mentioned on page 256 of this IPCC report (a):

Under the no-policy baseline scenario, temperature rises by 3.66°C by 2100, resulting in a global gross domestic product (GDP) loss of 2.6% (5–95% percentile range 0.5–8.2%), compared with 0.3% (0.1–0.5%) by 2100 under the 1.5°C scenario and 0.5% (0.1–1.0%) in the 2°C scenario

However, note that these are forecasts about events 80 years into the future.
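One way to see why Cochrane calls these losses small is to convert a GDP level loss L after T years into an equivalent cut in the annual growth factor, 1 - (1 - L)^(1/T). The sketch below uses Cochrane's 80-year horizon and the 2.6% and 5% figures quoted above; it ignores discounting and the uncertainty ranges, and the result is only approximately a percentage-point change in the growth rate.

```python
def annual_growth_drag(level_loss: float, years: int) -> float:
    """Proportional cut in the annual growth factor equivalent to a GDP level loss.

    Ending up with (1 - level_loss) times baseline GDP after `years` years is the
    same as multiplying each year's growth factor by (1 - level_loss) ** (1 / years).
    """
    return 1.0 - (1.0 - level_loss) ** (1.0 / years)


for loss in (0.026, 0.05):
    drag = annual_growth_drag(loss, years=80)
    print(f"{loss:.1%} of GDP by 2100 is roughly {drag * 100:.3f} points of growth per year")
```

Both come out well under a tenth of a percentage point of annual growth (about 0.03 and 0.06), which is the sense in which the losses are "couch change" next to ordinary variation in growth rates.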

Updates and Lessons from AI Forecasting (a) gives Jacob Steinhardt's (a) reflections on the forecasts about the future of AI that he commissioned through Hypermind. See also a forecaster's rundown of his predictions for Steinhardt's tournament (a).

Cultured meat predictions were overly optimistic (a). "Of the 273 predictions collected, 84 have resolved - nine resolving correctly, and 75 resolving incorrectly. Additionally, another 40 predictions should resolve at the end of the year and look to be resolving incorrectly. Overall, the state of these predictions suggest very systematic overconfidence."

When pooling forecasts, use the geometric mean of odds (a). "There are many methods to pool forecasts. The most commonly used is the arithmetic mean of probabilities. However, there are empirical and theoretical reasons to prefer the geometric mean of the odds instead." SimonM finds that empirical Metaculus data (a) confirms this.
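A minimal, unweighted sketch of the two pooling methods for binary forecasts: the arithmetic mean of probabilities, and the geometric mean of odds (equivalently, averaging log-odds and mapping back to a probability). The example forecasts are hypothetical.

```python
import math


def arithmetic_mean(probabilities: list[float]) -> float:
    """Pool by averaging probabilities directly."""
    return sum(probabilities) / len(probabilities)


def geometric_mean_of_odds(probabilities: list[float]) -> float:
    """Pool by taking the geometric mean of the odds p / (1 - p), then converting back."""
    if not probabilities or any(not 0.0 < p < 1.0 for p in probabilities):
        raise ValueError("probabilities must be strictly between 0 and 1")
    mean_log_odds = sum(math.log(p / (1.0 - p)) for p in probabilities) / len(probabilities)
    pooled_odds = math.exp(mean_log_odds)
    return pooled_odds / (1.0 + pooled_odds)


forecasts = [0.2, 0.5]  # hypothetical forecasts from two forecasters
print(arithmetic_mean(forecasts))         # about 0.35
print(geometric_mean_of_odds(forecasts))  # about 0.333 (odds 0.25 and 1 pool to 0.5)
```

Working in odds space gives more weight to confident forecasts near 0 or 1 than simple averaging does, which is one of the theoretical arguments the post makes for it.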

Measuring the information in an empirical prior (a):

For example, clinical trials reporting hazard ratios for treatment effects of say HR < 1/20 or HR > 20 are incredibly rare and typically fraudulent or afflicted by severe protocol violations. And then an HR of 100 could represent a treatment for which practically all the treated and none of the untreated respond, and thus is far beyond anything that would be uncertain enough to justify an RCT – we do not do randomized trials comparing jumping with and without a parachute from 1000m up. Yet typical “weakly informative” priors assign considerable prior probability to hazard ratios far below 1/20 or far above 20.
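To make the quoted point concrete, assume a normal prior with mean 0 on the log hazard ratio. The prior probability of HR < 1/20 or HR > 20 is then P(|log HR| > log 20) = erfc(log 20 / (σ√2)). The prior standard deviations below are hypothetical examples of "weakly informative" widths, not values taken from the linked post.

```python
import math


def prob_extreme_hazard_ratio(sigma: float, threshold: float = 20.0) -> float:
    """P(HR < 1/threshold or HR > threshold) under log(HR) ~ Normal(0, sigma**2)."""
    z = math.log(threshold) / sigma  # threshold in standard-deviation units
    # Two-sided normal tail probability: P(|Z| > z) = erfc(z / sqrt(2)).
    return math.erfc(z / math.sqrt(2.0))


for sigma in (1.0, 2.5, 10.0):  # hypothetical prior SDs on the log-HR scale
    print(f"sigma = {sigma:>4}: P(HR outside [1/20, 20]) = {prob_extreme_hazard_ratio(sigma):.3f}")
```

With σ = 10, roughly three quarters of the prior mass lies outside [1/20, 20], a region that, per the quote, real trials almost never occupy.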

Long Content

A flurry of papers is out (a) detailing results and conclusions from the fifth Makridakis (M5) competition (a), part of one of the largest and longest-running series of forecasting competitions in the world. They will be presented at the M5 Conference (a) this September. Applicability of the M5 to Forecasting at Walmart (sci-hub link (a)) presents the scoring details, and compares Walmart's own data pipeline to the winning entries.
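The M5 accuracy track was scored with a weighted root mean squared scaled error (WRMSSE). The sketch below computes only the per-series, unweighted RMSSE, in which forecast errors are scaled by the in-sample error of a one-step naive forecast; it omits the sales-based weights across the product hierarchy and other details of the official definition, and the series values are hypothetical.

```python
import math


def rmsse(train: list[float], actual: list[float], forecast: list[float]) -> float:
    """Root mean squared scaled error for one series (simplified).

    The forecast's mean squared error is divided by the in-sample mean squared
    error of a naive forecast that predicts y_t with y_{t-1} on the training data.
    """
    if len(train) < 2 or len(actual) != len(forecast) or not actual:
        raise ValueError("need >= 2 training points and equal-length, non-empty actual/forecast")
    naive_mse = sum((b - a) ** 2 for a, b in zip(train, train[1:])) / (len(train) - 1)
    if naive_mse == 0:
        raise ValueError("training series is constant; RMSSE is undefined")
    forecast_mse = sum((y - f) ** 2 for y, f in zip(actual, forecast)) / len(actual)
    return math.sqrt(forecast_mse / naive_mse)


print(rmsse(train=[1, 2, 3, 4], actual=[5, 7], forecast=[5, 6]))  # sqrt(0.5), about 0.7071
```

Scaling by the naive forecast's error makes scores comparable across products with very different sales volumes; the competition's weights then decide how much each series counts.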

Wikipedia: Predictions of the collapse of the Soviet Union (a). "Whether any particular prediction was correct is still a matter of debate, since they give different reasons and different time frames for the Soviet collapse."

AI Impacts in 2015 (a), on a large dataset of predictions of when human-level AI will be achieved.

Probabilistic Storytelling and Temporal Exigencies in Predictive Data Journalism (a) (sci-hub link (a)) outlines some considerations about predictive storytelling:

- Better prediction-making capabilities have only been game-changing in a few areas of journalism (political predictions, weather forecasting, sports)

- The position of "data journalist" isn’t very established; people in that position often go by different titles (journalists, researchers, data analysts, interaction designers, etc.)

- Journalists are reluctant to use predictions

  - This might be because

    - news is churned out quickly and focused on the very short term...

      - whereas predictions take time to gather, make sense of, and tell stories around

    - or because text, stories, and human minds tend to follow one thread...

      - whereas probabilistic futures are a garden of forking paths, and thus more difficult to represent

Personally, I feel that the paper doesn't push enough on the lack of incentives for numerical predictions, or on the disappointing inability of most (but not all) journalists to work with data, predictions, or nuance more generally. It also doesn't propose actionable insights. For instance, this seems like an area that would be amenable to top-down improvement, e.g., by directly paying newspapers to invest in quality probabilistic journalism, or by establishing prizes to incentivize such journalism.

"Proper scoring rules, like log or Brier, incentivize an expert to report their true belief. But what if there are multiple experts? In that case they can collude to guarantee themselves a larger reward". See this twitter thread (a) or the accompanying paper (a).
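A minimal illustration of the collusion problem with the quadratic (Brier) score, assuming two experts with hypothetical beliefs 0.2 and 0.8. Because squared error is convex, if both report their average instead of their true beliefs, the pair's combined reward is higher whichever way the event resolves, so they can split the surplus. This is only the simplest instance of the phenomenon, not the linked paper's general construction.

```python
def brier_reward(report: float, outcome: int) -> float:
    """Quadratic (Brier-style) reward for a binary event: higher is better, maximum 0."""
    return -((outcome - report) ** 2)


def total_reward(reports: list[float], outcome: int) -> float:
    """Combined reward of a group of experts for a given outcome (0 or 1)."""
    return sum(brier_reward(r, outcome) for r in reports)


honest = [0.2, 0.8]                                    # each expert's true belief
colluding = [sum(honest) / len(honest)] * len(honest)  # both report the average, 0.5

for outcome in (0, 1):
    gain = total_reward(colluding, outcome) - total_reward(honest, outcome)
    print(f"outcome={outcome}: colluding pair gains {gain:.2f} in total")  # 0.18 either way
```

Each expert individually still maximizes expected reward by reporting honestly; the problem only appears when they can coordinate and share the combined payout.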

Hindenburg Research (a), a firm whose profit model is to investigate companies until it uncovers fraudulent activities and then to short them while revealing its research, alleged this June that DraftKings (a), a major US betting operator, has dealt and continues to deal in countries where betting is illegal.

In the News

Nowcasting the Next Hour of Rain (a). Google’s DeepMind releases a more powerful model for forecasting weather in the very short term.

Weather Forecasting in Afghanistan military operations (a) is a nice example of superior forecasting prowess that paid off at the tactical level (e.g., being able to better evacuate soldiers or schedule attacks) but not at the strategic level: the US lost regardless.

FiveThirtyEight challenges readers (a) to beat their NFL forecasts.

The Business of Forecasting Fashion (a). In a Wall Street Journal podcast, a fashion forecasting expert talks about predicting what people will wear. What I found most interesting was the attempt to logically trace the downstream impact of trends. For instance, as poorer millennials work from home more often, there is more relative demand for home wear.

Note to the future: All links are added automatically to the Internet Archive, using this tool (a). "(a)" for archived links was inspired by Milan Griffes (a), Andrew Zuckerman (a), and Alexey Guzey (a).

No airship will ever fly from New York to Paris. That seems to me to be impossible. What limits the flight is the motor. No known motor can run at the requisite speed for four days without stopping, and you can’t be sure of finding the proper winds for soaring. The airship will always be a special messenger, never a load-carrier. But the history of civilization has usually shown that every new invention has brought in its train new needs it can satisfy, and so what the airship will eventually be used for is probably what we can least predict at the present.

Wilbur Wright, of the Wright Brothers, 1908 (a) h/t Nintil (a) through the Best of Twitter (a) newsletter.