Clare_Diane

Bio

How others can help me

I really appreciate honest feedback! Here's a link for anyone who wants to give me anonymous feedback: https://www.admonymous.co/clare

Posts 4

Comments 12

Thank you again for your compassionate commitment to helping others, Marie! Thank you for applying your intelligence, genuine kindness, drive, and open-mindedness in the service of inspiring and leading Hi-Med, and thank you for giving your team so much support and autonomy. I'm extremely grateful to have been on your team and learning from and with you all this time. (I know I and others said similar things on Slack, but I think it really can't be emphasised enough!) Wherever you work, they'll be lucky you're on their team!

Thanks for your comments, Kestrel, and your response, David (and apologies for not replying at the time). Agree that psychodynamic theory may have some relevance to the concepts we're talking about, and agree that the SWAP looks interesting!

Describing the SWAP wouldn't quite have fit with the goals of this piece, since it wasn't used in the large studies we cited here, and since it focuses on making clinical diagnoses (whereas our focus is on specific traits of concern to the long-term future - we give more details about this distinction in our appendices). But it definitely seems like the SWAP would have relevance in some contexts, and like David mentioned, there's already a National Security Edition. Your idea to develop more scalable versions of such a tool sounds fascinating, Kestrel. Thank you so much again for your engagement with our piece and for the helpful ideas here!

Agree that this post is confusing in parts and that Altman isn’t EA-aligned. (There were also some other points in the original post that I disagreed with.)

But the issue of "not calling a spade a spade" does seem to apply, at least in SBF’s case. Even now, after his many unethical decisions were discussed at length in court, some people (e.g., both the host and guest in this conversation) are still very hesitant to label SBF’s personality traits.

This doesn't need to be about soul searching or self-flagellation - I think it can (at times) be very difficult to recognize when someone has low levels of empathy. But sometimes (both in one’s personal life and in organizations) it's helpful to notice when someone's personality places them at higher risk of harmful behavior.

(This comment is basically just voicing agreement with points raised in Ryan’s and David’s comments above.)

One of the things that stood out to me about the episode was the argument[1] that working on good governance and working on reducing the influence of dangerous actors are mutually exclusive strategies, and that the former is much more tractable and important than the latter.

Most “good governance” research to date also seems to focus on system-level interventions,[2] while interventions aimed at reducing the impacts of individuals are very neglected, at least according to this review of nonprofit scandals:

It is notable that all the preventive tactics that have been studied and championed—audits, governance practices, internal controls—are aimed at the organizational level. It makes sense to focus on this level, as it is the level that managers have most control over. Prevention can also be implemented at the individual and sectoral levels. Training of staff, job-level checks and balances, and staff evaluations could all help prevent violations with individual-level causes. Sector-level regulation and oversight is becoming common in many countries. We, therefore, encourage future research on preventive measures to take a multilevel perspective, or at least consider the neglected sectoral and individual levels.

Six years before the review quoted above, this article called for psychopathy screening for public leadership positions (which would have represented one potential approach to interventions at the “individual level,” to adopt the terminology of the review quoted above).[3]

This leads me to wonder: what are the most compelling reasons for the lack of research (so far) on interventions to reduce the impact of dangerous actors, and which (if any) of these reasons provide strong arguments against doing at least some research in this neglected area? I think there are lots of possible answers here,[4] but none of them seem strong enough to justify the relative lack of research on this area so far (relative to the scale of the problem).

[1]

Here’s a quote from the episode (courtesy of Wei Dai's transcript) demonstrating this claim:

[Will MacAskill:] There's really two ways of looking at things: you might ask…is this a bad person - are we focusing on the character? Or you might ask…what oversight, what feedback mechanisms, what incentives does this person face? And yeah, one thing I've really taken away from this is to place even more weight than I did before on just the importance of governance, where that means the, you know, importance of people acting with oversight, with the feedback mechanisms and you know, with incentives to incentivize kind of good rather than bad behavior…

I agree that all these aspects of governance are important, but disagree that working on these things would entirely protect an organization from the negative impacts of malevolent actors.

[2]

To be clear, I am glad people are working on system-level solutions to low integrity and otherwise harmful behaviors, but I think it would be helpful if this wasn’t the *only* class of interventions receiving substantial amounts of resources.

[3]

Interestingly, one of the real-life cases Boddy refers to in support of his argument is the Enron scandal, a case which was also covered in the book Will MacAskill was talking about, Why They Do It.

[4]

Here are some of the reasons I’ve already thought about (listed roughly in order from most to least convincing to me as a reason to be pessimistic about this approach to risk reduction): potential lack of tractability; lower levels of social and political acceptability/feasibility; lack of existing evidence as to what methods work, to what extent, and in which contexts; and perhaps a perception that the problem (of dangerous actors) is small in scale. I’d be interested to know which (if any) of these reasons are the most important, and if there are other considerations I’m overlooking. Overall, despite these reasons against working on it, I still think this area is worth investigating to a greater extent than it has been to date.

I agree that most measures (including the ones that I mentioned being pessimistic about) could be used to update one’s estimated probability that an actor is malevolent, but like you, I’d be most interested in which measures give the highest value of information (relative to the costs and invasiveness of the measure).

I could have done a better job of explaining why I think that pupillometry, and particularly the measurement of pupillary responses to specific stimuli, would be much more difficult to game (if it was possible at all) relative to body language analysis and eye tracking. It’s because the muscles that control pupil size are not skeletal muscle but smooth muscle, which is widely accepted as not being under conscious control. (The muscles involved are called the dilator pupillae, activated by the sympathetic nervous system, and the sphincter pupillae, activated by the parasympathetic nervous system.) Having said this, there are some arguments (and some case studies) suggesting that one could indirectly train oneself to change one’s pupil size (e.g., via mental arithmetic or other forms of mental effort) or that some people may be able to find other methods of (training themselves to) change their pupillary size at will (here’s a video of someone doing it). But to me the main question is whether the initial response to specific stimuli (e.g., negative emotional stimuli, for which pupil responses are observed [in non-psychopathic people] within 2,000 ms of the stimulus) would be under voluntary control, and this seems very unlikely to me.

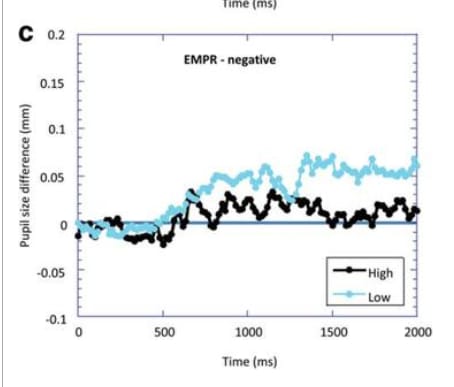

Early pupillary responses to certain stimuli are under the influence of subcortical structures, including the amygdala, and I think this point is particularly relevant to psychopathy. When we view faces, there’s a subcortical route to the amygdala which carries that information faster than it can be consciously processed and which allows the amygdala to be one of the first brain areas to trigger a fear response in reaction to someone seeing a fearful face. Why is this relevant? Well, there’s evidence that psychopaths demonstrate hypoactivity in their amygdala (relative to controls) in response to viewing human faces. And it seems that psychopaths’ pupillary responses to negative facial expressions differ[1] from those of controls (in that their pupils don’t dilate in response to negative stimuli [relative to neutral stimuli] like non-psychopaths’ pupils do) within the first 2000 ms of the stimulus being presented, not after.

If someone’s pupillary responses to negative facial expressions differ from those of non-psychopathic people, even if they somehow became aware of that and tried to alter it, I suspect it would be incredibly difficult (if it was possible at all) for them to voluntarily change their pupil size (in response to emotional stimuli) quickly enough to mimic normality. For these reasons, I think that assessing pupillary responses to viewing fearful faces is worth investigating as a cheap, noninvasive measure of psychopathy that would be much harder to manipulate relative to other cheap, noninvasive measures (if it was possible to manipulate at all, which I don’t think it would be).

[1]

Below, I briefly describe two little studies that are too small to be useful on their own but which make me think it’s worth at least exploring pupillometry a little more (as just one potential measure among a set of possible measures).

This little study included 82 males recruited from low and medium secure psychiatric hospitals in the United Kingdom, grouped according to whether they had low or high Factor 1 (interpersonal) scores based on the Psychopathy Checklist—Revised. The high Factor 1 group (n = 25) had a score of ≥ 10 on Factor 1 psychopathy and the low Factor 1 group (n = 27) had a score of ≤ 4. The chart below shows the difference in pupil diameter in response to negatively valenced emotional images (compared to neutral images) for participants with high Factor 1 psychopathy scores (who had less pupil dilation in the first 2000 ms after the stimulus) compared to those with low Factor 1 psychopathy scores.

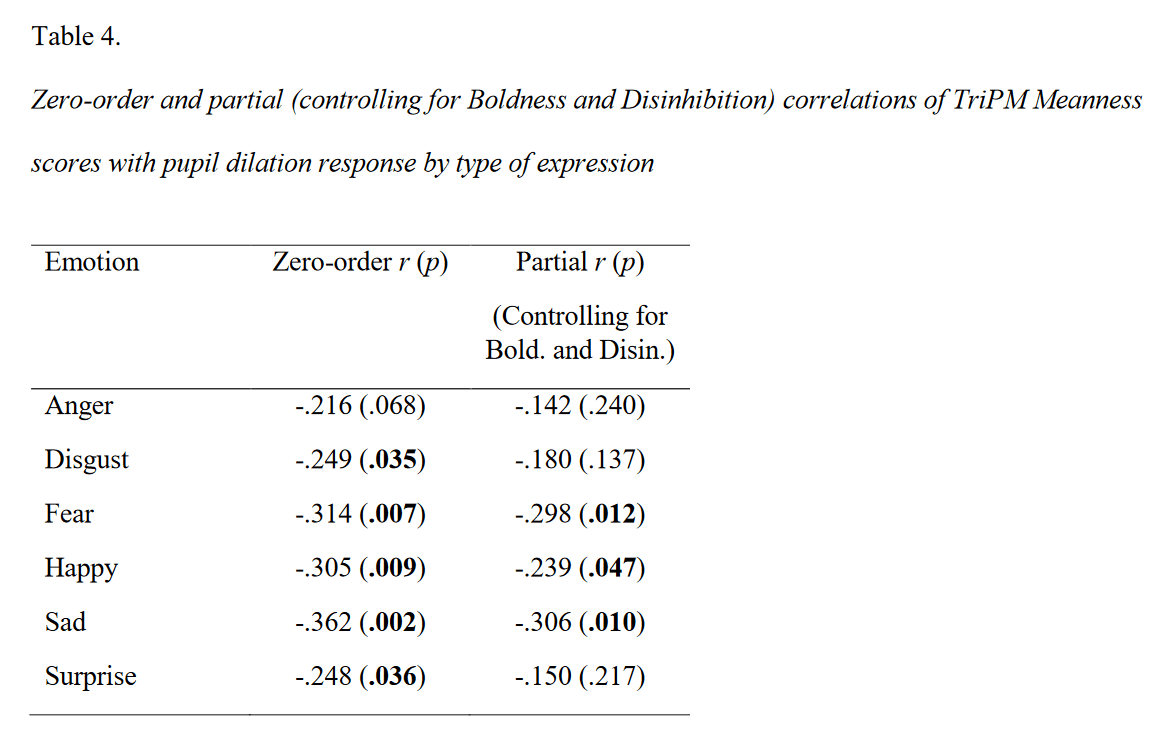

This little study included 73 adult male prisoners with histories of serious sexual or violent offenses, and it assessed psychopathy via The Triarchic Psychopathy Measure (TriPM; Drislane et al., 2014), which is a 58-item self-report measure with three subscales: Boldness, Meanness, and Disinhibition. They averaged participants’ pupil size measurements across each individual stimulus fixation for the duration of the stimulus display, then calculated an overall mean pupil size for each participant, across all trials, and calculated the percentage difference in pupil diameter for each stimulus category compared to the overall mean. They found weak but significant negative correlations between TriPM meanness scores and pupil dilation in response to a range of emotional stimuli (listed below).
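The averaging procedure described above can be sketched roughly as follows. This is only an illustration under assumptions: the function name, the data layout (a list of category and per-trial mean pupil size pairs for one participant), and all the numbers are hypothetical and are not taken from the study.

```python
from collections import defaultdict
from statistics import mean


def percent_change_by_category(trials):
    """Percentage difference in pupil size per stimulus category.

    `trials` is a hypothetical list of (stimulus_category, pupil_size)
    pairs for ONE participant, where pupil_size is already averaged over
    the duration of that trial's stimulus display.
    """
    # Overall mean pupil size for this participant, across all trials.
    overall = mean(size for _, size in trials)

    # Group the per-trial means by stimulus category.
    by_category = defaultdict(list)
    for category, size in trials:
        by_category[category].append(size)

    # Percentage difference of each category mean from the overall mean.
    return {
        category: 100.0 * (mean(sizes) - overall) / overall
        for category, sizes in by_category.items()
    }


# Made-up data for one participant (arbitrary units).
example_trials = [
    ("neutral", 4.0),
    ("neutral", 4.0),
    ("fearful", 4.4),
    ("fearful", 4.4),
    ("happy", 4.2),
    ("happy", 4.2),
]
result = percent_change_by_category(example_trials)
```

With these made-up numbers, the "fearful" category comes out roughly 4.8% above this participant's overall mean and "neutral" roughly 4.8% below it. The reported correlations would then be computed between per-participant values like these and TriPM scores.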

Thank you - I think these ideas would be fascinating to investigate. I hope my current pessimism wrt eye tracking and body language analysis is misplaced, and I think that your ideas, if it turned out they were feasible to implement, could make these methods more useful than they appear (to me) to be at the moment.

The reason I’m pessimistic about these methods at the moment is that I imagine that some people with high levels of malevolent traits might be able to game them (by faking “normality”), but my concern ~only applies if they were sufficiently motivated, were informed about what body language or gaze behaviors would be considered normal, and then successfully practiced until they could go undetected.

I think it would be great if it was possible to study these things without publicizing the results or if it turned out that some normal behaviors are too difficult to practice successfully (or if some abnormal behaviors are too difficult to mask successfully).

Thank you, Tao - I’m glad you found it informative!

And thank you for that - I think that’s a great point. I was probably a bit too harsh in dismissing 360 degree reviews: at least in some circumstances, I agree with you - it seems like they’d be hard to game.

Having said that, I think it would mostly depend on the level of power held by the person being subjected to a 360 review. The more power the person had, the more I’d be concerned about the process failing to detect malevolent traits. If respondents thought the person of interest was capable of (1) inferring who negative feedback came from and (2) exacting retribution (for example), then I imagine that this perception could have a chilling effect on the completeness and frankness of feedback.

For people who aren’t already in a position of power, I agree that 360 degree reviews would probably be less gameable. But in those cases, I’d still be somewhat concerned if they had high levels of narcissistic charm (since I’d expect those people to have especially positive feedback from their “fervent followers,” such that - even in the presence of negative feedback from some people - their high levels of malevolent traits may be more likely to be missed, especially if the people reviewing the feedback were not educated about the potential polarizing effects of people with high levels of narcissism).

If 360 reviews were done in ways that guaranteed (to the fullest extent possible) that the person of interest could not pinpoint who negative feedback came from, and if the results were evaluated by people who had been educated about the different ways in which malevolent traits can present, I would be more optimistic about their utility. And I could imagine that information from such carefully conducted and interpreted reviews could be usefully combined with other (ideally more objective) sources of information. In hindsight, my comment didn’t really address these ways in which 360 reviews might be useful in conjunction with other assessments, so thank you so much for catching this oversight!

I’d always be interested in discussing any of these points further.

Thank you again for your feedback and thoughts!

Thank you for sharing these insights. I am also pessimistic about using self-report methods to measure malevolent traits in the context of screening, and it’s very helpful to hear your insights on that front. However, I think that the vast majority of the value that would come from working on this problem would come from other approaches to it.

Instead of trying to use gameable measures as screening methods, I think that:

(1) Manipulation-proof/non-gameable measures of specific malevolent traits are worth investigating further.[1] There are reasons to investigate both the technical feasibility and the perceived acceptability and political feasibility of these measures.[2]

(2) Gameable measures could be useful in low-stakes, anonymous research settings, despite being (worse than) useless as screening tools.

I explain those points later in this comment, under headings (1) and (2).

On the neglectedness point

I think the argument that research in this area is not neglected is very important to consider, but I think that it applies much more strongly in relation to the use of gameable self-report measures for screening than it does to other approaches such as the use of manipulation-proof measures. As I see it, when it comes to using non-gameable measures of malevolent traits, this topic is still neglected relative to its potential importance.

The specific ways in which different levels of malevolence potentially interact with x-risks and s-risks (and risk factors) also seem to be relatively neglected by mainstream academia.

Manipulation-proof measures of malevolence also appear to be neglected in practice:

- Despite a number of high-stakes jobs requiring security clearances, ~none that I’m aware of use manipulation-proof measures of malevolence,[3] with the possible exception of well-designed manipulation-proof behavioral tests[4] and careful background checks.[5]

- There are also many jobs that (arguably) should, but currently do not, require at least the level of rigor and screening that goes into security clearances. For those roles, the overall goal of reducing the influence of malevolent actors seems to be relatively absent (or at least not actively pursued). Examples of such roles include leadership positions at key organizations working to reduce x-risks and s-risks, as well as leadership positions at organizations working to develop transformative artificial intelligence.

- Many individuals and institutions don’t seem to have a good understanding of how to identify elevated malevolent traits in others and seem to fail to recognize the impacts of malevolent traits when they’re present.[6] It’s plausible that there’s a bidirectional relationship here: low levels of recognition of situations where malevolence is contributing to organizational problems would reduce the degree to which people recognize the value of measuring malevolence in the first place (particularly in manipulation-proof ways), and vice versa.[7]

(1) Some reasons to investigate manipulation-proof/non-gameable measures of malevolent traits

I’ll assume anyone reading this is already familiar with the arguments made in this post. One approach to reducing risks from malevolent actors could be to develop objective, non-gameable/manipulation-proof measures of specific malevolent traits.

Below are some reasons why it might be valuable to investigate the possibility of developing such measures.

(A) Information value.

Unlike self-report measures, objective measures of malevolent traits are still relatively neglected (further info on this below). It seems valuable to at least invest in reducing the (currently high) levels of uncertainty here as to the probability that such measures would be (1) technically and (2) socially and politically feasible to use for the purposes discussed in the OP.

(B) Work in this area might actually contribute to the development of non-gameable measures of malevolent traits that could then be used for the purposes most relevant to this discussion.

I think it would be helpful to develop a set of several manipulation-proof measures to use in combination with each other. To increase the informativeness[8] of the set of measures in combination, it would be helpful if we could find multiple measures whose errors were uncorrelated with each other (though I do not know whether this would be possible in practice, I think it’s worth aiming for). To give an example outside of measuring malevolence, the combination of electroencephalography (EEG) and functional magnetic resonance imaging (fMRI) appears much more useful for diagnosing epilepsy than either modality alone.
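To illustrate why uncorrelated errors matter, here is a minimal sketch under strong simplifying assumptions that are mine rather than anything established about real measures of malevolence: the measures are combined by a simple equal-weight average, each has the same error variance, and every pair of errors has the same correlation. Under those assumptions, the error variance of the average of k measures is σ²(1 + (k − 1)ρ)/k. The function name and the numbers are hypothetical.

```python
def error_variance_of_average(k, sigma2=1.0, rho=0.0):
    """Error variance of an equal-weight average of k measures.

    Assumes (for illustration only) that each measure has error variance
    `sigma2` and that every pair of errors has correlation `rho`.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    if k > 1 and not (-1.0 / (k - 1) <= rho <= 1.0):
        raise ValueError("rho is not a valid common correlation for k measures")
    return sigma2 * (1.0 + (k - 1) * rho) / k


# Four hypothetical measures, each with unit error variance.
uncorrelated = error_variance_of_average(k=4, rho=0.0)  # about 0.25
correlated = error_variance_of_average(k=4, rho=0.8)    # about 0.85
```

In this toy setup, four measures with uncorrelated errors cut the error variance to a quarter of a single measure's, whereas four measures with highly correlated errors barely improve on one.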

In some non-research/real-life contexts, such as the police force, there are already indirect measures in place that are specifically designed to be manipulation-proof or non-gameable. These can include behavioral tests designed in such a way that those undertaking the test do not know (or at least cannot verify) whether they are undertaking a test and/or cannot easily deduce what the “correct” course of action is. They are designed to identify specific behaviors that are associated with underlying undesirable traits (such as behaviors that demonstrate a lack of integrity or that demonstrate dishonesty or selfishness). Conducting (covert) integrity testing on police officers is one example of this kind of test (see also: Klaas, 2021, ch. 12).

Behavioral tests such as these would likely require significant human resources and other costs in order to be truly unpredictable and manipulation-proof. Despite the costs, in the context of high-stakes decisions (e.g., regarding who should have or keep a key position in an organization or group that influences x-risks or s-risks), it seems worth considering the idea of using behavioral measures in combination with background checks (similar to those done as part of security clearances, as discussed earlier) plus a set of more objective measures (ideas for which are listed below).

Below is a tentative, non-exhaustive list of objective methods which seem worth investigating as future ways of measuring malevolent traits. Please note the following things about it, though:

- Listing a study or meta-analysis below doesn’t imply that I think it’s well done; this table was put together quickly.

- Many of the effect sizes in these studies are quite small, so using any one of these methods in isolation does not seem like it would be useful (based on the information I’ve seen so far).

Objective approaches to measuring malevolence that seem worth investigating

| Approach | Brief comments | Examples of research applications of this approach |
| --- | --- | --- |
| Electroencephalography (EEG) event-related potentials (ERPs) | This involves recording electrical activity (measured at the scalp) of the brain via an electrogram. Thanks to its high temporal resolution, EEG allows one to assess unconscious neural responses within milliseconds of a given stimulus being presented. Relatively portable and cheap. | Assessing deficits in neural responses to seeing fearful faces among people with high levels of the “meanness” factor of psychopathy (across multiple studies) (link to full text thesis version of the same study) |
| Functional magnetic resonance imaging (fMRI) | This involves inferring neural activity based on changes in blood flow to different parts of the brain (which is called blood-oxygen-level dependent [BOLD] imaging). In comparison to EEG, fMRI offers high spatial resolution but relatively poor temporal resolution. Not portable or convenient. (More expensive than all the other methods in this table; fMRI is at least 10 times the cost of fNIRS!) However, a lack of (current) scalability does not (in my opinion) completely rule out the use of such a test in high-stakes hiring decisions.[9] | Assessing mirror neuron activity during emotional face processing across multiple fMRI studies |
| Functional near-infrared spectroscopy (fNIRS) | Measures changes in the concentrations of both oxygenated and deoxygenated hemoglobin near the surface of the cortex. Relatively portable, but not as cheap as some of the other methods. | |
| Pupillometry, especially in the context of measuring pupil reactivity to positive, negative, and neutral images | This involves measuring variations in pupil size; such measurements are reflective of changes in sympathetic nervous system tone, but they have an added advantage of higher temporal resolution compared to other measures of sympathetic nervous system activation. Relatively portable and cheap. | Screening for children at risk of future psychopathology; assessing the reduced pupil dilation in response to negative images among 4-7-year-old children and using that to predict later conduct problems and reduced prosocial behavior |
| Startle reactivity, especially the assessment of aversive startle potentiation (ASP) | The startle response in humans can be measured by monitoring the movement of the muscle orbicularis oculi surrounding the eyes. Aversive startle potentiation (ASP) involves presenting a stimulus designed to exaggerate the startle response, such as negative images or negative film clips. Relatively portable and cheap. | Assessing startle reactivity in response to negative, positive, and neutral images among people with high levels of psychopathy and everyday sadism |

Other approaches (not listed above)

There are also ~manipulation-proof measures that, despite their relatively low susceptibility to manipulation, seem to me to be non-starters because I predict they would have a low positive predictive value. These include polygenic risk scores in adults and the use of structural neuroimaging in isolation (i.e., without any functional components). (That’s one of the reasons I did not list magnetoencephalography (MEG) above - relatively few functional [fMEG] studies appear to have been done on malevolent traits.)
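For readers less familiar with positive predictive value (PPV), here is a small sketch of the standard formula. The sensitivity, specificity, and prevalence figures are entirely hypothetical and are not estimates for any real measure of malevolent traits. The example only shows one general reason a test can have a low PPV, namely a low base rate of the trait in the screened group.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """P(trait present | positive test), via Bayes' rule."""
    true_positives = sensitivity * prevalence
    false_positives = (1.0 - specificity) * (1.0 - prevalence)
    return true_positives / (true_positives + false_positives)


# Hypothetical test: 80% sensitivity, 90% specificity.
ppv_rare = positive_predictive_value(0.8, 0.9, prevalence=0.01)
ppv_common = positive_predictive_value(0.8, 0.9, prevalence=0.20)
```

With these illustrative inputs, the PPV is roughly 7% when 1% of the screened group has the trait, and roughly 67% when 20% do. So even a measure that cannot be gamed may yield mostly false positives if the trait is rare among those tested.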

Finally, there are multiple measures that I do *not* currently[10] think are promising due to being too susceptible to direct or indirect manipulation, including self-report surveys, implicit association tests (unless they are used in combination with pupillometry, in which case it’s possible that they would become less gameable), eye tracking, assessments of interpersonal distance and body language analysis, behavior in experimental game situations or in other contexts where it’s clear what the socially desirable course of action would be, measures of sympathetic nervous system activation that lack temporal resolution,[11] anonymous informant interviews (as mentioned earlier[5]), reference reports, and 360 reviews. In the case of the latter two approaches, I predict that individuals with high enough levels of social skills, intelligence, and charisma would be able to garner support through directly or indirectly manipulating (and/or threatening) the people responsible for rating them.

Thinking about implementation in the real world: tentative examples of the social and political feasibility of using objective screening measures in high-stakes hiring and promotion decisions

Conditioned on accurate objective measures of malevolent traits being available and cost-effective (which would be a big achievement in itself), would such screening methods ever actually be taken up in the real world, and if they were taken up, could this be done ethically?

It seems like surveys could address the question of perceived acceptability of such measures. But until such surveys are available, it seems reasonable to be pessimistic about this point.

Having said this, there are some real-world examples of contexts in which it is already accepted that people should be selected based on specific traits. In positions requiring security clearance or other positions requiring very high degrees of trust in someone prior to hiring them, it is common to select candidates on the basis of their assessed character (despite the fact that there are not yet any objective measures for the character traits they are testing for). For example:

- The National Security Eligibility Determination considers the following “facts” about someone when vetting them: stability, trustworthiness, reliability, discretion, character & judgement, honesty, and “unquestionable loyalty to the U.S.”. Some of these (trustworthiness and honesty) would tend to be anticorrelated with malevolent traits.

- In New Zealand, Protective Security Requirements stipulate that a candidate “must possess and demonstrate” integrity, which they define as a collection of three character traits: honesty, trustworthiness and loyalty.

- The Australian Criminal Intelligence Commission (ACIC) says that they look for the following traits in employees: “honesty, trustworthiness, [being] impartial, [being] respectful, [and being] ethical.” All of these would tend to be anticorrelated with malevolent traits.

In addition, there are some professional contexts in which EEG is already being used in decisions relating to allowing people to start or keep a job (in much lower-stakes settings than the ones of interest to longtermists). In these contexts, EEG is used to try to rule out the possibility of epilepsy, which people tend to think about in a different way to personality traits. However, the examples still seem worth noting.

- Before becoming a pilot, one typically has to undergo EEG screening for epileptiform activity, regardless of one’s medical history - and this is reportedly despite there not even being sufficient evidence for the benefits of this.

- Driving regulations for epilepsy in Europe often stipulate that someone with epilepsy should show no evidence of epileptiform activity on EEG (and often the EEG has to be done while sleep deprived as well).

Of course, taking on a leadership position in an organization that has the capacity to influence x-risks or s-risks would (arguably) be a much higher-stakes decision than taking on a role as a pilot or as a professional driver, for example. The fact that there is a precedent for using a form of neuroimaging as part of an assessment of whether someone should take on a professional role, even in the case of these much lower-stakes roles, is noteworthy. It suggests that it’s not unrealistic to expect people applying to much higher-stakes roles to undergo similarly manipulation-proof tests to assess their safety in that role.

(2) Why gameable/manipulable measures might be useful in research contexts (despite not being useful as screening methods)

Self-report surveys make it easy to collect data, and it seems that people are less likely to lie in low-stakes, anonymous settings.

Administering self-report surveys could improve our understanding of how various traits of interest correlate with other traits and with specific beliefs (e.g., with fanatical beliefs) and behaviors of interest (including beliefs and behaviors that would be concerning from an x-risk or s-risk perspective, which do not appear to have been studied much to date in the context of malevolence research).[12]

Thank you to David Althaus for very helpful discussions and for quickly reading over this! Any mistakes or misunderstandings are mine.

[1]

Just to be clear, I don’t think that these investigations need to be done by people within the effective altruism community. I also agree with William MacAuliffe’s comment above that there would be value in mainstream academics working on this topic, and I hope that more of them do so in the future. However, this topic seems neglected enough to me that there could be value in trying to accelerate progress in this area. And like the OP mentioned, there could be a role for EA orgs in testing the feasibility of using measures of malevolent traits, if or once they are developed.

[2]

Of course, anyone pursuing this line of investigation would need to carefully consider the downsides and ethical implications at each stage. Hopefully this goes without saying.

[3]

I’d be very happy to be proven wrong about this.

[4]

You could say: of course manipulation-proof measures of malevolence are neglected in practice - they don’t exist yet! However, well-designed manipulation-proof behavioral tests do exist in some places, and they do tend to test things that are correlated with malevolence, such as dishonesty. So it seems at least possible to track malevolence in some manipulation-proof ways, but this appears to be done quite rarely.

[5]

One could argue that security clearance processes also tend to include other, indirect measures of malevolence (or of traits that [anti]correlate with malevolent traits), and some of these indirect measures are less susceptible to manipulation than others. When it comes to background checks and similar checks as part of security clearances, these seem difficult to game, but not impossible (for example, if someone was a very “skilled” fraud, or if someone hypothetically stole the identity of someone who already had security clearance). Other things to note about background checks are that they can be costly and can still miss “red flags” (for example, this paper claims that this happened in the case of Edward J. Snowden’s security clearance). However, if done well, such investigations could identify instances where the person of interest has been dishonest, has displayed indirect evidence of past conflicts, or has a record of more overtly malevolent behaviors. In addition to background checks, interviews with people who know the person of interest could also be useful. However, I would argue that these are somewhat gameable, because sufficiently malevolent individuals might cause some interviewees/informants to be fearful about giving honest feedback (even if they were giving feedback anonymously, and even if they were actively reassured of their anonymity). Having said this, there could be value in combining information from anonymous informant reports with a set of truly manipulation-proof measures of malevolence (if or when some measures are found to be informative enough to be used in this context).

- ^

It appears there is also a relative paucity of psychoeducation about malevolent traits. A lack of understanding of malevolent traits has arguably also affected the EA community. For example, many people didn’t recognise how concerning some of SBF’s traits are for someone in as powerful a position as he held. This lack of awareness, and the resulting lack of tracking of the probability that someone has high levels of malevolence, could plausibly contribute to institutional failures (such as a failure to take whistleblowers seriously and a general failure to identify and respond appropriately when someone shows a pattern of conflict, manipulation, deception, or other behaviors associated with malevolence). In the case of SBF, Spencer Greenberg found that some people close to SBF had not considered his likely deficient affective experience (DAE) as an explanation for his behavior within the set of hypotheses they’d been considering (disclosure: I work for Spencer, but I’m writing this comment in my personal capacity). Spencer spoke with four people who knew SBF well and presented them with the possibility that SBF had DAE while also believing in EA principles. He said that, after being presented with this hypothesis, all of their reactions “fell somewhere on the spectrum from ‘that seems plausible’ to ‘that seems likely,’ though it was hard to tell exactly where in that range they each landed.” Surprisingly, however, before their conversations with Spencer, the four people “seemed not to have considered [that possibility] before.”

- ^

In the absence of accurate methods of identifying people with high levels of malevolent traits, organizations may fail to identify situations where someone’s malevolent traits are contributing to poor outcomes. In turn, this lack of recognition of the presence and impact of malevolent traits would plausibly reduce the willingness of such organizations to develop or use measures of malevolence in the first place. On the other hand, if organizations either began to improve their ability to detect malevolent traits, or became more aware of the impact of malevolent traits on key outcomes, this could plausibly contribute to a positive feedback loop where improved ability to detect malevolence and an appreciation of the importance of detecting malevolence positively reinforce each other. Such improvements might be useful not only for hiring decisions but also for decisions as to whether to promote someone or keep them in a position of power. For example, it seems reasonable to expect that whistleblowers within organizations (or others who try to speak out against someone with high levels of malevolent traits) would be more likely to be listened to (and responded to appropriately) if there was greater awareness of the behavioral patterns of malevolent actors.

- ^

In this context, I’m talking about the degree to which measures could more sensitively detect (but not overestimate) when an individual’s levels of specific malevolent traits (such as callousness or sadism) would be high enough that we’d expect them to substantially increase x-risks or s-risks if given a specific influential position (that they’ve applied to, for example). Due to the dimensional, continuous nature of malevolent traits, the use of labels such as “malevolent” or “not malevolent” (which could be used if we wanted to calculate the sensitivity and specificity of measures of interest) would be artificial and would need to be decided carefully. Deciding whether and to what extent someone’s levels of malevolent traits would increase x-risks or s-risks would be a probabilistic assessment, but I think it would be an important part of assessing the risks involved in making high-stakes decisions about who to involve (or keep involved) in the key roles of high-impact organizations, groups, or projects.
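To make the labelling issue concrete, here is a minimal sketch of how sensitivity and specificity could be computed once a continuous trait score is dichotomized at a cutoff. Everything in it is hypothetical: the scores, the reference labels (`is_high_risk`), and the cutoffs are made up for illustration, and the function name is just a placeholder, not part of any existing tool.

```python
def sensitivity_specificity(scores, is_high_risk, cutoff):
    """Flag a person when score >= cutoff, then compare flags with reference labels."""
    tp = fn = tn = fp = 0
    for score, risky in zip(scores, is_high_risk):
        flagged = score >= cutoff
        if risky and flagged:
            tp += 1  # correctly flagged
        elif risky:
            fn += 1  # missed
        elif flagged:
            fp += 1  # wrongly flagged (overestimate)
        else:
            tn += 1  # correctly not flagged
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical trait scores on a 0-1 scale and hypothetical reference labels.
scores = [0.1, 0.2, 0.35, 0.4, 0.55, 0.6, 0.7, 0.8, 0.9, 0.95]
is_high_risk = [False, False, False, True, False, False, True, True, True, True]

for cutoff in (0.5, 0.75):
    sens, spec = sensitivity_specificity(scores, is_high_risk, cutoff)
    print(f"cutoff={cutoff}: sensitivity={sens:.2f}, specificity={spec:.2f}")
```

With these made-up numbers, raising the cutoff from 0.5 to 0.75 lowers sensitivity (0.80 to 0.60) while raising specificity (0.60 to 1.00), which is the trade-off that makes the choice of label boundary something to decide carefully.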

- ^

The comment above (by William McAuliffe) mentioned that it would be difficult to implement non-gameable measures at scale in a practical context. I think this concern should definitely be investigated in more depth, but I also think that the measures would not necessarily need to be implementable on a large scale to provide value. The measures would, of course, need to be studied well enough for us to have a good understanding of their specificity and sensitivity with respect to identifying actors most likely to increase x-risks or s-risks if given specific positions of power, but the relatively low number of these positions may mean that it could be justifiable to implement non-gameable tests of malevolent traits even if they are expensive and difficult to scale. The higher the stakes of the position of interest, the more justified it would seem to invest significant resources into preventing malevolent actors from taking up that position.

- ^

Some of the currently gameable measures might become less gameable in the future. For example, using statistical or machine learning methods to model and correct for impression management and socially desirable responding in self-report surveys would at least increase the utility of self-report methods (i.e., it seems possible that such developments could elevate them from being worse than useless to being one component in a set of tests as part of a screening method). However, in view of William McAuliffe’s well-evidenced pessimism on this topic, I’m also less optimistic about this than I would otherwise have been.

- ^

There are several measures of sympathetic nervous system activation that I did not list above. I left them out at this stage mainly because sympathetic activation is arguably under some degree of voluntary control (e.g., one could hyperventilate to increase one’s level of sympathetic activation, or train oneself to alter one’s heart rate variability), and because multiple factors other than malevolent traits can contribute to variations in sympathetic activation.

However, for completeness, I’ll list a couple of specific examples in this category here. Heart rate (HR) orienting responses to images designed to induce threat and distress have been investigated among people with high versus low levels of callous-unemotional (CU) traits. Similarly, HR variability (HRV) appears to be altered among people with high levels of the boldness factor of psychopathy. In addition to heart rate variability, another indirect measure of sympathetic nervous system activation is skin conductance (SC) or electrodermal activity (EDA), which capitalizes on the fact that sweat changes the electrical conductance of the skin. Notwithstanding the noisiness and downsides of such measures, changes in electrodermal activity have been observed in psychopathy, antisocial personality disorder, and conduct disorder (across multiple studies). Both HRV and EDA measurements lack the temporal resolution of pupillometry, though, so if a measure of sympathetic activation were going to be investigated in the context of screening for malevolent traits, I predict that it would be more useful to use pupillometry in combination with specific emotional stimuli.

- ^

This is extremely speculative and uncertain (sorry), but if it turns out that a better understanding of the correlations between different traits and outcomes of interest within humans could translate into a better ability to predict personality-like traits and behaviors of large language models (for example, if positive correlations between one trait and another among humans translated into positive correlations between those two traits in corpuses of training data and in LLM outputs), then research in this area could be relevant to evaluations of those models. However, this is just a very speculative additional benefit beyond the main sources of value discussed above.

Hi, happy to speak to the methodological points here.

Thanks for sharing the link and suggestion. We agree that understanding how much we can trust the data is crucial for interpreting our results, so thank you for engaging with this critically.

We didn’t measure individual reaction times (RTs) for questions, so RT modelling isn’t an option. Modelling carelessness in other ways (e.g., as a latent tendency) would be fascinating, but I don’t endorse the assumptions we’d need to make in order to model carelessness as a latent variable. (I like how Rohrer & Paulewicz 2025 point out that latent variable modelling requires making some strong assumptions.) So even though we could try to model carelessness, I currently don’t think we should do so.

The headline results were designed such that it would be very unlikely for participants selecting at random to meet our definition of “consistent and concerning” endorsers. In the main piece, the focus is on those who agreed with the hell question, AND selected “Forever” for the duration question (the last of 11 options), AND selected 1% or more for the proportion question. The supplementary materials additionally report those who endorsed BOTH the “endorses system” question and the “would create” question, AND selected “Forever” for the duration question, AND selected 1% or more for the proportion question. Partly due to the design of these “headline” results variables, the proportion of the sample meeting our definition of “consistent and concerning” turned out to be robust to the removal of all attention checks (i.e., the inclusion of everyone who didn’t drop out before the questions of interest, with no filtering).
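As a rough illustration of why random responding rarely lands in this category, here is a minimal sketch of the arithmetic. It assumes a participant who picks uniformly at random and independently on each question. Only the 11 duration options come from the description above; the other two probabilities (`p_agree` and `p_proportion`) are hypothetical placeholders, since the number of response options for those questions isn't stated here.

```python
from fractions import Fraction


def p_random_meets_definition(p_agree, n_duration_options, p_proportion):
    """Chance that a uniformly random, independent responder meets all three criteria."""
    p_forever = Fraction(1, n_duration_options)  # "Forever" is one of the options
    return p_agree * p_forever * p_proportion


# Upper bound: even if a random responder always "agreed" and always picked >= 1%,
# they would still need to pick "Forever" out of 11 options.
upper_bound = p_random_meets_definition(Fraction(1), 11, Fraction(1))

# Illustrative case with assumed (not actual) 50% chances on the other two questions.
illustrative = p_random_meets_definition(Fraction(1, 2), 11, Fraction(1, 2))

print(f"upper bound:  {upper_bound} = {float(upper_bound):.3f}")
print(f"illustrative: {illustrative} = {float(illustrative):.3f}")
```

Under these assumptions, the duration question alone caps the chance at 1/11 (about 9%), and the illustrative placeholders bring it down to 1/44 (about 2%). The true figure depends on the actual response scales for the other two questions.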

Regarding the other things in the list you linked to from that LLM chat, though, we did do most of those things. For example, we included unobtrusive checks and multiple different quality measures, not just attention checks - I’d be interested in your thoughts on the checks outlined in our supplementary folder. And importantly, for our headline results, we did sensitivity analyses and shared the results (including confidence intervals) in our supplementary materials folder.

(Also, just to address the point about the N varying between questions - that’s because different numbers of participants completed some questions; slightly fewer completed the duration and hell questions because we had tested different wordings for both questions early in the study, before they were replaced with new versions that were used for the rest of the study.)

Would be happy to answer follow-up questions too. Thanks!