Devon Fritz 🔸

Bio

EA Meta for 7 years!

COO of Ambitious Impact (previously Charity Entrepreneurship).

Co-Founder of High Impact Professionals. We enable working professionals to maximize their positive impact by supporting them in donating their time, skills and resources effectively.

Ex-CTO and MD of Germany for Founders Pledge. Big on promoting Effective Giving.

Originally from New York, living in Berlin.

Posts 5

Comments 107

Very interesting @Kirsten - thank you for the tips. I'll have my publicist follow up with those leads.

I am a dad myself, and it is on my list to write posts on my Substack about insights from having kids and impact. I give advice to EAs about many things, but I've noticed that my takes on parenting lead to really long conversations and follow-up in a way that makes me think it could be valuable to the community.

@Federico Speziali and I both originally looked into support for this cohort of folks, but I ultimately got more pessimistic on it for two reasons:

- There were fewer people doing E2G than I thought.

- Those who were doing E2G were giving less than I thought.

I came to the view that the number of E2Gers giving significant amounts (100K+, let's say) was an order of magnitude lower than I'd hoped (maybe 50 instead of 500), and also that they were, for the most part, already known to the community and already pretty much maximizing their impact.

There could be room for an org that tries to bring more folks like this into the fold, but I am a bit pessimistic that it would be successful. Many EAs purport to do E2G and give smallish sums, and if card-carrying EAs who say they do E2G aren't giving that much on average, I don't think those unfamiliar with EA would be likely to convert to high-giving E2Gers. They might learn about EA, then E2G, and then take it up, but we already have a pipeline for that.

I should say I wouldn't want to dissuade others from working on this: despite what I said, I think this is underexplored on the margin and could bear fruit with some cheap experiments. Still, I think it is important to relay my true take here, even if it is a bit negative.

Should also note that GWWC is looking into this a bit so they might have a take they want to add.

Thanks for the great work as usual. This is a very good snapshot of where people are at.

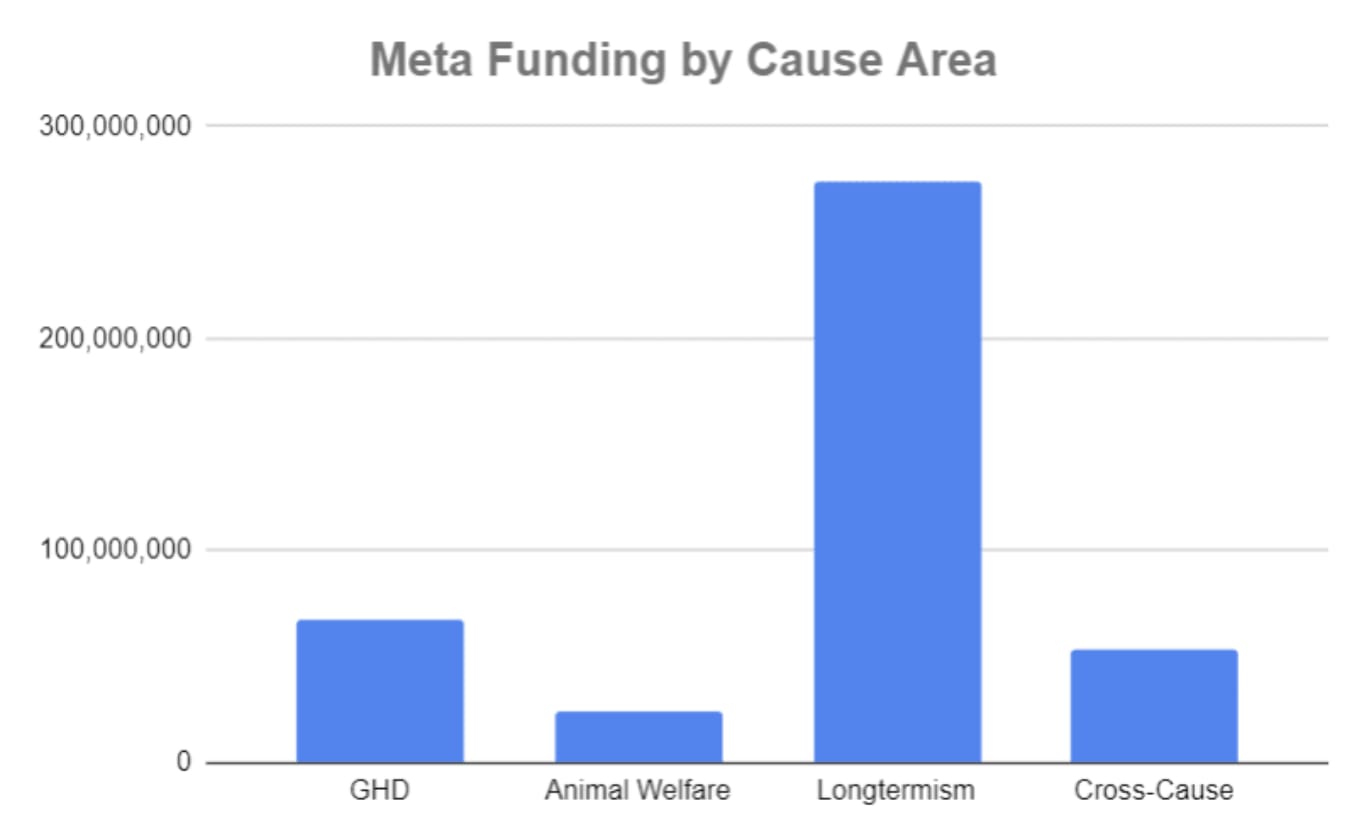

I would love to see an analysis that normalizes for meta funds flowing into cause areas. In @Joel Tan's recent Meta Funding Landscape post, he states that OP grants 72% of total meta funds and that the lion's share goes to longtermism.

From his post:

And although the EA Infrastructure Fund supports multiple cause areas, if you scroll through the recent grants you might be surprised at the percentage going to LT.

Funders should, of course, prioritize the cause areas they want, but I hope to make it clear to people that when the vast majority of funding goes to prop up one area, it should be no surprise that that area has lots of adherents who advocate for it.

Normalizing some of this data for meta-funding received would show that, among other things, GH&D is on top DESPITE a significant LT funding advantage.

Thank you for your support, Uhitha! I hope you enjoy it. Please do let me know what you think.