Vasco Grilo🔸

Bio


I am a generalist quantitative researcher. I am open to volunteering and paid work. I welcome suggestions for posts. You can give me feedback here (anonymously or not).

How others can help me

I am open to volunteering and paid work (I usually ask for 20 $/h). I welcome suggestions for posts. You can give me feedback here (anonymously or not).

How I can help others

I can help with career advice, prioritisation, and quantitative analyses.

Posts 248

Comments 3164

Topic contributions 42

Hi Jeff.

Let's try and make some similar predictions for 2028:

- My odds that the world has changed substantially are up significantly, maybe 55%, primarily due to AI.

Do you see any bet we could make about transformative AI (TAI) timelines, or what they supposedly imply, that is beneficial for both of us?

Hi titotal. Thanks for the post.

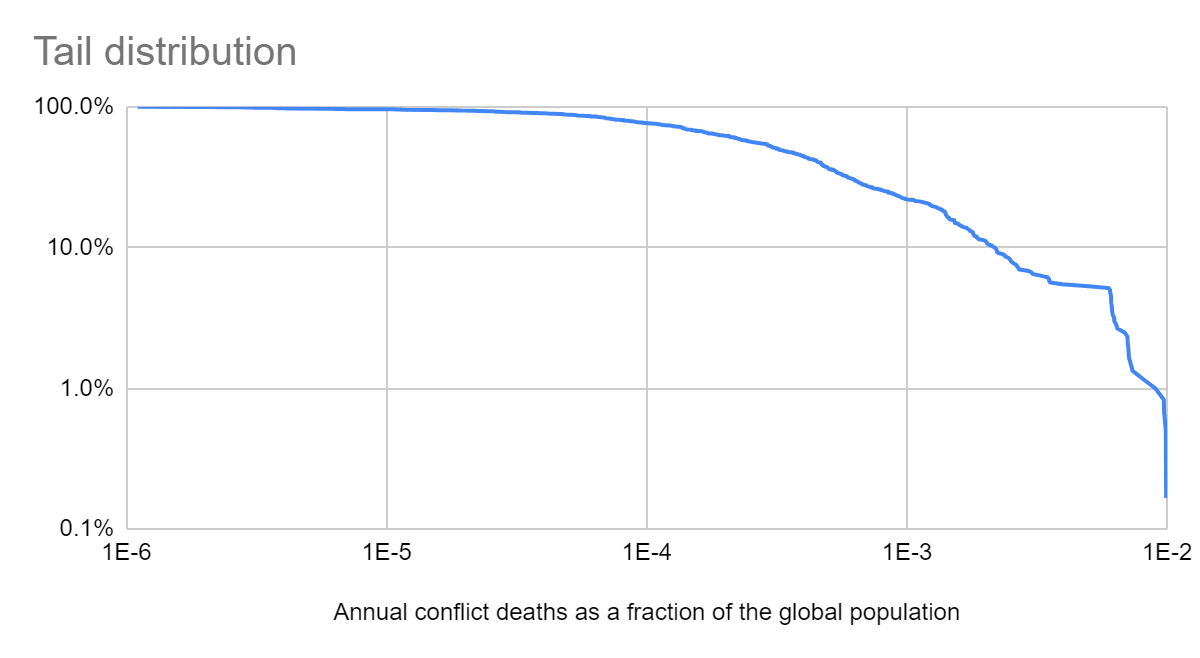

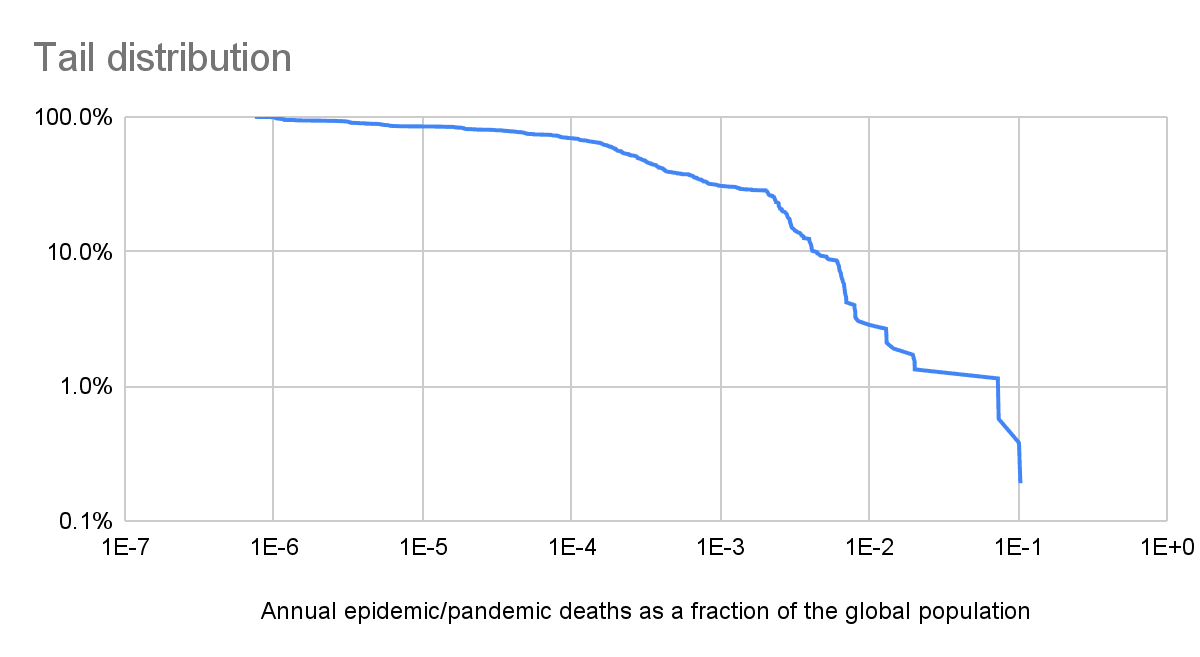

Below are the tail distributions I got for the annual conflict and pandemic/epidemic deaths as a fraction of the global population. The data for conflicts covers 1400 to 2000, and that for pandemics/epidemics covers 1500 to 2023.

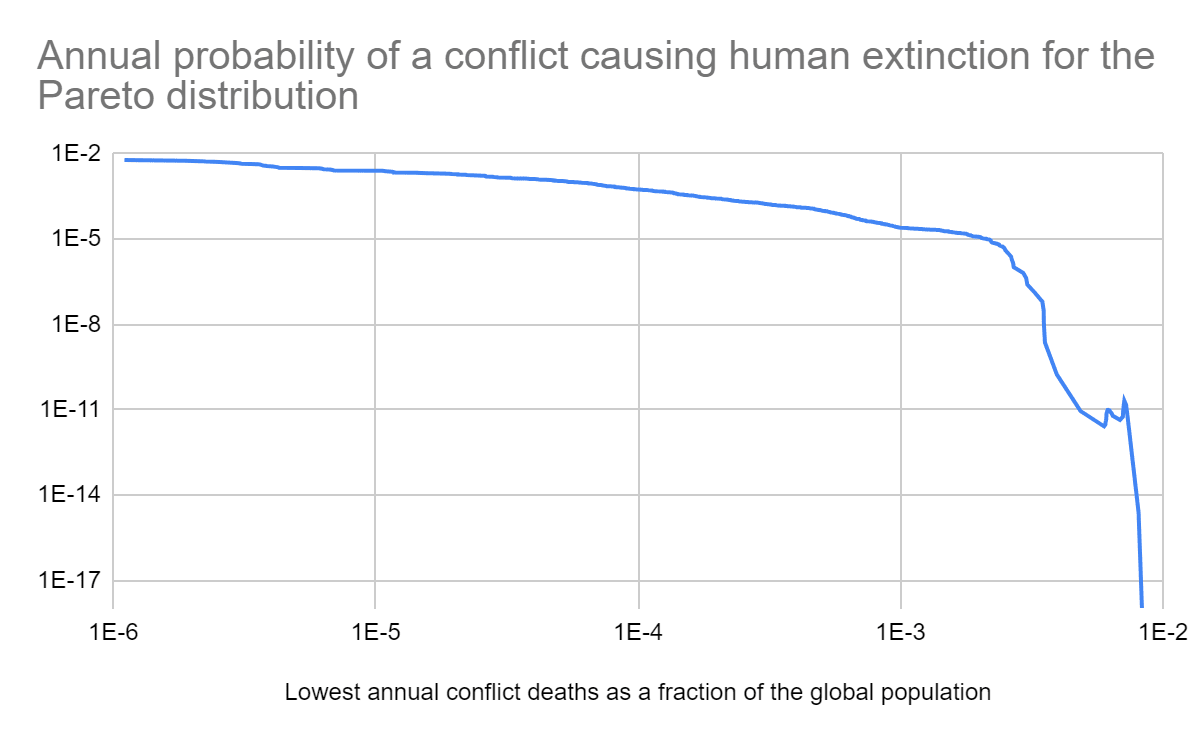

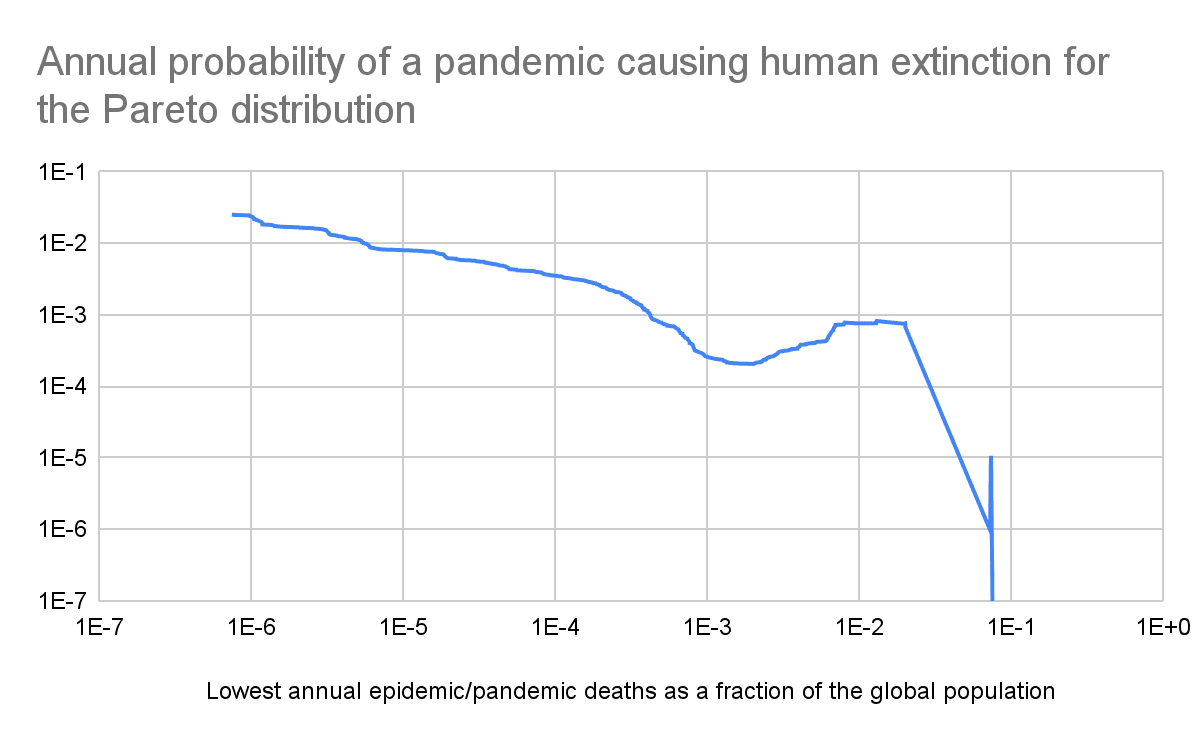

The tail distribution decays faster for greater annual deaths as a fraction of the global population. So tail risk would be overestimated if calculated based on a power law fit to less severe catastrophes. The graphs below illustrate this. They have the probability of human extinction for power laws fit to the data covering the N years with the most annual deaths as a fraction of the global population, where N goes from 2 to the number of data points available. The probability of human extinction is much lower when the power laws are fit to the years with the most annual deaths (rightmost part of the tail distributions above).

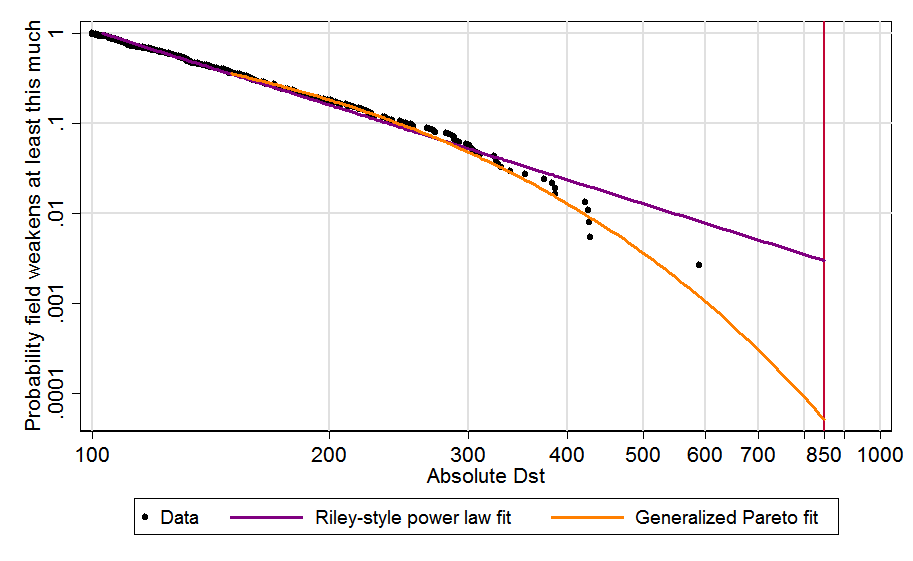

The above is in agreement with David Roodman's analysis of the tail distribution of the intensity of solar storms.
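As a rough illustration of fitting power laws to only the most severe years, here is a minimal Python sketch. It uses the Hill estimator, which is an assumption: the comment does not say which fitting method was used. The function names are hypothetical, and the data is a synthetic lognormal sample, not the conflict or pandemic/epidemic data. The fit uses the k largest values, with the (k+1)-th largest as the threshold.

```python
import math
import random


def hill_tail_index(values, k):
    """Hill estimate of the tail index from the k largest values.

    Returns (alpha, threshold), where threshold is the (k+1)-th largest value.
    Assumes positive, distinct values and 1 <= k < len(values).
    """
    top = sorted(values, reverse=True)[: k + 1]
    threshold = top[k]
    alpha = k / sum(math.log(v / threshold) for v in top[:k])
    return alpha, threshold


def extrapolated_tail_probability(values, k, x):
    """P(X >= x) implied by a power law fit to the k largest values."""
    alpha, threshold = hill_tail_index(values, k)
    # Empirical probability of exceeding the threshold, extended as a power law.
    return (k / len(values)) * (x / threshold) ** (-alpha)


# Synthetic data only (NOT the real data): a lognormal sample, whose tail
# decays faster than any power law.
rng = random.Random(0)
deaths_fraction = [rng.lognormvariate(math.log(1e-4), 1.5) for _ in range(600)]

# Extrapolated probability of annual deaths reaching 100 % of the population,
# for fits to the k most severe years.
for k in (300, 100, 30, 10):
    p = extrapolated_tail_probability(deaths_fraction, k, 1.0)
    print(f"k = {k:3d}: P(fraction >= 1) ~ {p:.2e}")
```

For data whose tail decays faster than a power law, fits restricted to fewer and more extreme points tend to give a higher tail index, and therefore a lower extrapolated probability, although sampling noise can break this pattern for small k.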

Why do you believe this?

Here is how I am thinking. Imagine the world has welfare 0.

With inaction, the final welfare will be 0 with probability 100 %.

With campaigns, there is lots of uncertainty, but here is a simplified set of outcomes:

- Agricultural land will increase with probability 75 %, and decrease with probability 25 %.

- If agricultural land increases, the final welfare will be -1 with probability 25 %, and 1 with probability 75 %. So the final welfare will be -1 with probability of 18.75 % (= 0.75*0.25), and 1 with probability 56.25 % (= 0.75*0.75) considering outcomes where agricultural land increases.

- If agricultural land decreases, the final welfare will be -1 with probability 75 %, and 1 with probability 25 %. So the final welfare will be -1 with probability 18.75 % (= 0.25*0.75), and 1 with probability 6.25 % (= 0.25*0.25) considering outcomes where agricultural land decreases.

- As a result, final welfare will be -1 with probability 37.5 % (= 0.1875*2), 1 with probability 62.5 % (= 0.5625 + 0.0625), and 0.25 (= 0.375*(-1) + 0.625*1) in expectation.

The worst possible outcome across the 2 interventions is a final welfare of -1. With inaction, it has a probability of 0. With campaigns, it has a probability of 37.5 %. So the campaigns make the worst possible outcome more likely.
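The arithmetic above can be checked with a short Python sketch. The variable and function names are hypothetical, and the probabilities are just the simplified ones from the example, not estimates.

```python
from collections import defaultdict

# Each branch: (probability of the land use change,
#               {final welfare: probability conditional on that change}).
campaign_branches = [
    (0.75, {-1: 0.25, 1: 0.75}),  # agricultural land increases
    (0.25, {-1: 0.75, 1: 0.25}),  # agricultural land decreases
]


def outcome_distribution(branches):
    """Combine the branches into unconditional probabilities of final welfare."""
    dist = defaultdict(float)
    for p_branch, conditional in branches:
        for welfare, p in conditional.items():
            dist[welfare] += p_branch * p
    return dict(dist)


def expected_welfare(dist):
    """Probability-weighted mean of final welfare."""
    return sum(welfare * p for welfare, p in dist.items())


campaigns = outcome_distribution(campaign_branches)
inaction = {0: 1.0}

# Worst final welfare that is possible under either option.
worst = min(min(campaigns), min(inaction))

print("Campaigns:", campaigns, "expected welfare:", expected_welfare(campaigns))
print("P(worst) with inaction:", inaction.get(worst, 0.0))
print("P(worst) with campaigns:", campaigns.get(worst, 0.0))
```

In this setup, the campaigns have the higher expected welfare (0.25 versus 0), but also the higher probability of the worst outcome (37.5 % versus 0).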

Unless you believe the expected amount of wild animal suffering is higher all-things-considered than with inaction, you shouldn't really expect it to do worse according to "Avoiding the worst" risk aversion (as a heuristic; there could be exceptions).

The intervention which can decrease welfare the most is the one leading to the lowest possible final welfare.

You shouldn't be thinking in terms of "relative to inaction", which itself has a highly uncertain distribution of outcomes. Just evaluate the distribution of outcomes for each option, without fixing any as a comparison option.

I had understood this. As I said, "I understand I should look into the distributions of global welfare with and without the campaigns, and then assess their negative tails". My phrasing "cause lots of suffering (relative to inaction)" was confusing. However, I meant "increase the probability of outcomes with lots of suffering (the worst) relative to the probability under inaction".

Because we have limited capacity, this is going to be my last comment about soil animals in particular.

I am replying in case anyone is interested, or you want to come back to it later. Feel free not to reply, and thanks for the thoughts you have shared.

Generally, I think that modeling is most useful in situations where we know enough about an issue to construct a solid framework for what effects to include, how to analyze it, and how to provide some evidence-based justification for key parameter values.

Results are more certain in the situations you describe, but very uncertain results could still be informative. They may help identify the most important uncertainties. The results would not be useful even for this if they were sufficiently arbitrary. However, I do understand why you would believe this.

Based on the "More options" drop-down in question 1 of the Donor Compass, you are comparing the welfare of humans with that of chickens, shrimps, and "non-shrimp invertebrates", which I assume includes BSFs (as these were covered in Bob's book). So I would have expected you to be open to comparing the welfare of chickens with that of soil ants and termites, which are macroarthropods like shrimps and BSFs.

In addition, based on the "More options" drop-down in question 2 of the Donor Compass, you are modelling effects after 500 years, which I think are very uncertain. So I would have expected you to be open to modelling how changes in feed consumption resulting from improving the conditions of farmed animals impact the population of soil invertebrates.

Given the enormous, many-layered uncertainties that surround second-order effects (like those on soil animals), and given that we don’t have yet a framework for analyzing such second-order effects comprehensively and equally across all interventions, I think it wouldn’t be responsible for me to speculate on either how various kinds of risk aversion can or should apply to them, or what the impact on our recommendations would be.

Makes sense. I just meant to illustrate accounting for effects on soil invertebrates could change recommendations even if there is large uncertainty about their magnitude and direction.

It might cause lots of suffering, but it could also prevent lots of suffering, too.

I agree. So the worst case is that the campaigns cause lots of suffering (relative to inaction)?

[...] Rather than thinking about what you cause, you should just look at both (distributions of) outcomes and ask which has more suffering in it, without privileging the results of inaction.

Unless you believe the expected amount of wild animal suffering is higher all-things-considered than with inaction, you shouldn't really expect it to do worse according to "Avoiding the worst" risk aversion (as a heuristic; there could be exceptions).

I understand I should look into the distributions of global welfare with and without the campaigns, and then assess their negative tails. I have little idea about which distribution has the highest expected value. However, I believe the distribution with the campaigns has longer positive and negative tails, and therefore the risk of the worst outcomes is higher with the campaigns (although the probability of the best outcomes is also higher).

Stepping back, regardless of how accounting for risk aversion changes recommendations, I would like greater reasoning transparency about why effects on soil invertebrates have been neglected, roughly for the reasons @Marcus_A_Davis mentioned when replying to Nick's concerns about conflicts of interest.

I [Marcus] think there is not a single EA organization I would consider unbiased on this question [cross-cause recommendations], including ourselves (despite our ongoing efforts not to be). That is exactly why we publish so much of our methodology and our assumptions openly. One of the main motivations for this work is concern about the effect of bias when assumptions and models are implicit or hidden. We would welcome more experts with broader backgrounds being involved in drafting and improving these estimates, which is part of what we hope this kind of public methodology enables.

In particular, I would like greater transparency about why effects on soil macroarthropods were neglected. They are covered in Bob's book, unlike soil nematodes and microarthropods. Moreover, I estimate cage-free campaigns for laying hens change the welfare of soil ants and termites much more than they increase the welfare of chickens for the sentience-adjusted welfare ranges presented in Bob's book.

Hi Michael. Thanks for sharing your thoughts.

I don't think welfare interventions targeting vertebrates will necessarily look worse than doing nothing on "Avoiding the worst" views, because they don't specifically, AFAIK, increase the risks of worse cases than inaction.

Interventions which cost-effectively increase the welfare of vertebrates will change land use much more than inaction, and a greater change in land use increases the probability of causing lots of suffering? Are you assuming that i) such interventions would increase agricultural land, and that ii) this decreases suffering?

On i), such interventions may decrease agricultural land due to increasing the price of animal products, and therefore decreasing their consumption? I estimate that replacing Ross 308 (fast growth broiler breed) with Rebro (slower growth) decreases cropland by 0.102 m²-year/Ross-308-chicken-kg, and that replacing eggs from battery cages with those from barns or aviaries decreases cropland by 0.529 m²-year/battery-cages-egg-kg. These estimates neglect increases in the consumption of other foods, but I believe accounting for this would increase uncertainty, and therefore further increase the risk of the worst outcomes.

On ii), increasing agricultural land may increase suffering by increasing the number of soil macroarthropods/nematodes? I think effects on these may dominate (given the large uncertainty about welfare comparisons across species), and they may have negative lives (although I can easily see them having positive lives too).

Hi Laura. Thanks for the reply. I very much agree effects on soil invertebrates resulting from land use changes have very uncertain magnitude and direction. However, they should not be neglected under the types of risk aversion you studied?

- “Avoiding the worst” risk aversion: All else equal, we are averse to the worst states of the world arising and want to take actions that prevent them or lessen their badness.

- Difference-making risk aversion: All else equal, we are averse to our actions doing no tangible good in the world or, worse, causing harm.

- Ambiguity aversion: All else equal, we should be particularly cautious when taking actions for which the probabilities of the possible outcomes are unknown and quite uncertain.

"world" in 1 and 2, and "possible outcomes" in 3 should include effects on soil invertebrates?

Do you agree that cage-free campaigns for laying hens may decrease the welfare of soil invertebrates much more than they increase the welfare of chickens, thus decreasing animal welfare a lot? I think this is very much on the table (although I can also see the effects on soil invertebrates being negligible). So I believe inaction is better than such campaigns for a sufficient level of “avoiding the worst”, or difference-making risk aversion. In addition, I infer inaction is better for a sufficient level of ambiguity aversion because the effects on soil invertebrates are very uncertain.

Here is a related comment from @Michael St Jules 🔸.

If we give extra weight to net harm over net benefits compared to inaction, as in typical difference-making [and "Avoiding the worst"] views, I think most animal interventions targeting vertebrates will look worse than doing nothing, considering only the effects on Earth or in the next 20 years, say. This is because:

- there are possibly far larger effects on wild invertebrates (even just wild insects and shrimp, but of course also mites, springtails, nematodes and copepods) through land use change and effects on fishing, and huge net harm is possible through harming them, and

- there's usually at least around as much reason to expect large net harm to wild animals as there is to expect large net benefit to them, and difference-making gives more weight to the former, so it will dominate.

Hi Hans. I wonder whether the bulls may have positive lives despite the suffering they experience in the bullfights. In addition, the effects of producing their feed on soil invertebrates may be much larger or smaller than the effects on the bulls. I estimate that producing 1 kg of beef changes the living time of soil invertebrates by 1.39 billion animal-years, and of soil arthropods by 27.8 M animal-years.

Hi George. Thanks for sharing. I am open to bets against short transformative AI (TAI) timelines, or what they supposedly imply, up to 10 k$. Do you see any that we could make that is good for both of us under our own views?