This is a crosspost of my blog post. (For context, cluelessness is relevant to EA because, if we don't know the effects of our actions, then we may be unable to engage in consequence-focused altruism. Greaves has spoken in more depth about this issue here and here.)

In 2016, Hilary Greaves published the essay “Cluelessness,” in which she examined the issue of cluelessness, the idea that, in some decision situations, we can’t determine which action is better because we cannot know the consequences of our actions. This essay is important because, if cluelessness pervades the decision situations facing effective altruists, they may be less able or entirely unable to do as much good as they would desire.

In this post, I’m going to summarize most of the important ideas in her essay. In the first part of her essay, she addressed a concept which I will call “simple cluelessness,” and, in the second part of her essay, she addressed a concept which I will call “complex cluelessness.” In my view, she mostly resolves concerns about simple cluelessness, but she leaves the problem of complex cluelessness up to the reader.

Simple Cluelessness

According to consequentialism, good actions are those which produce the best consequences. As such, if one is choosing between two actions, a reasonable approach would be to choose the action that has the best objective consequences.

Unfortunately, as Greaves points out, since we can’t predict the future, we can never know, for sure, what the exact consequences of our actions will be. This, of course, also means that we cannot know whether one action’s consequences will be better than those of another.

One way to dismiss this worry is to argue that our actions do have unforeseeable effects but that the size of these effects shrinks dramatically over time. This is obviously false. If, for instance, one were to delay traffic by a minute, this could ultimately cause a couple to conceive a different child than they otherwise would have, which would, of course, cause the future to go very differently.

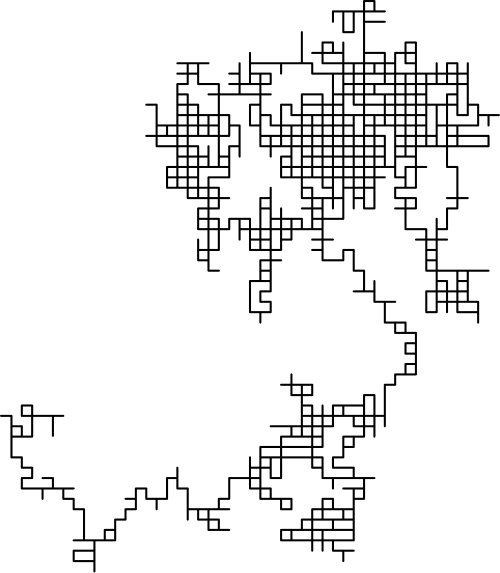

Another reason to reject this is that, although our actions will have effects that ripple out over the course of time, we should expect these effects to cancel each other out. Greaves also rejects this idea, pointing out that, if we take random walks from a point, we should almost certainly expect to end somewhere other than where we started, so we also should not expect our actions not to cancel out. I think this image of random walks from the origin (which was not included in her paper) is a helpful portrayal of this concept:
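
The random-walk point can also be checked with a small simulation. This is only a sketch of my own, not something from Greaves's essay: it assumes a one-dimensional walk of 100 steps of +1 or -1, and the function names, the number of walks, and the seed are all illustrative choices.

```python
import math
import random


def final_position(steps, rng):
    """Return where a 1-D walk of `steps` random +1/-1 moves ends, starting at 0."""
    return sum(rng.choice((-1, 1)) for _ in range(steps))


def exact_return_probability(steps):
    """Exact chance that such a walk ends exactly at 0 after `steps` moves."""
    if steps % 2:  # an odd number of +1/-1 moves can never sum to 0
        return 0.0
    return math.comb(steps, steps // 2) / 2 ** steps


def simulate(walks=2000, steps=100, seed=0):
    """Run many independent walks and summarize where they end."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    ends = [final_position(steps, rng) for _ in range(walks)]
    share_at_origin = sum(e == 0 for e in ends) / walks
    mean_end = sum(ends) / walks
    mean_distance = sum(abs(e) for e in ends) / walks
    return share_at_origin, mean_end, mean_distance


share_at_origin, mean_end, mean_distance = simulate()
print(f"exact chance of ending at the origin: {exact_return_probability(100):.3f}")
print(f"simulated share ending at the origin: {share_at_origin:.3f}")
print(f"average end point across walks: {mean_end:.2f}")
print(f"average distance from the origin: {mean_distance:.2f}")
```

Under these assumptions, the exact chance of a walk ending back at the origin is roughly 0.08, so any single walk almost certainly ends somewhere else. The average end point across many walks stays close to zero, though, which is the distinction between what actually happens and what we should expect that the discussion of expected value relies on.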

Since both of these objections fail, Greaves concludes that we cannot know whether one action is objectively better than another.

As a result of this, Greaves brings up another way of determining what actions to take. She argues that, instead of trying to determine which action has objectively better consequences, we should try to determine which action has subjectively better consequences by using expected value.

To reject this idea, one would have to argue that we cannot know whether any action is subjectively better than any other action, but Greaves reasonably argues that this is false.

In the previous case, we had to reject the idea that we can know whether the consequences of one action are objectively better than those of another action because, if we took into account the unforeseeable effects, it could cause us to choose another action.

In this case, though, we actually can take these unforeseeable effects into account. Let’s imagine that actions A1 and A2 could each cause unforeseeable effect E1 or E2. Since we have no reason to think that either action is more likely than the other to cause either effect, we should assign each effect the same probability under both actions. The unforeseeable effects then contribute equally to the expected value of each action, so their contribution to the difference in expected value between the two actions is zero. As such, we can reasonably use subjective betterness, but not objective betterness, to decide which actions to take.
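
To make the arithmetic concrete, here is a minimal sketch with made-up numbers. The values of E1 and E2, the foreseeable values of 3 and 1, and the helper `expected_value` are all assumptions for illustration and do not come from the essay.

```python
def expected_value(foreseeable, unforeseeable):
    """Foreseeable value plus the probability-weighted unforeseeable effects.

    `unforeseeable` is a list of (probability, value) pairs.
    """
    return foreseeable + sum(p * v for p, v in unforeseeable)


# Hypothetical values: the unforeseeable effects dwarf the foreseeable ones.
E1, E2 = 1000.0, -1000.0

# With no reason to link either action to either effect,
# both actions get the same 50/50 split over E1 and E2.
indifferent = [(0.5, E1), (0.5, E2)]

ev_a1 = expected_value(3.0, indifferent)  # foreseeable value of A1 is 3
ev_a2 = expected_value(1.0, indifferent)  # foreseeable value of A2 is 1

difference = ev_a1 - ev_a2
print(difference)  # 2.0: only the foreseeable difference is left
```

Even though E1 and E2 are far larger than the foreseeable values, they enter both expected values identically, so the comparison is settled by the foreseeable effects alone.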

Complex Cluelessness

Then, Greaves moves on to a form of cluelessness that I will call complex cluelessness. A situation has complex cluelessness if,

“For some pair of actions of interest A1, A2,

(CC1) We have some reasons to think that the unforeseeable consequences of A1 would systematically tend to be substantially better than those of A2;

(CC2) We have some reasons to think that the unforeseeable consequences of A2 would systematically tend to be substantially better than those of A1;

(CC3) It is unclear how to weigh up these reasons against one another.”

That is to say, we have some reasons to think that the unforeseeable effects of one action are better than those of the other, but we also have some reasons to think the opposite. At the same time, we don’t have a clear way of deciding which reasons to weigh more heavily.

Greaves points out that this is an especially important issue for effective altruists. She writes, “Effective Altruists place a lot of weight on the recommendations of independent charity evaluators, whose aim is to rank charities, as far as possible, in terms of overall cost-effectiveness: ‘amount of good done per dollar donated’. One charity that consistently comes out top in these rankings, at the time of writing, is the Against Malaria Foundation (AMF), a charity that distributes free insecticide-treated bed nets in malarial regions. To justify this verdict, the charity evaluators clearly need (inter alia) estimates of the consequences of distributing bed nets, per extra net distributed (and hence per dollar donated). Equally clearly, however, these charity evaluators, just like everyone else, cannot possibly include estimates of all the consequences of distributing bed nets, from now until the end of time. In practice, their calculations are restricted to what are intuitively the ‘direct’ (‘foreseeable’?) consequences of bed net distribution: estimates of the number and severity of cases of malaria that are averted by bed net distribution, for which there is reasonably robust empirical data. In fact, the standard calculation focuses exclusively at the effectiveness of bed net distribution in averting deaths from malaria of children under the age of five, and (using standard techniques for evaluating death aversions) concludes that those benefits alone suffice for ranking AMF’s cost-effectiveness above that of most other charities. It is only if our condition nrs [using cost effectiveness] holds when these effects alone are treated as the ‘foreseeable’ ones that the charity evaluators’ calculations can have the intended significance.

Averting the death of a child, however, has knock-on effects that have not been included in this calculation. What the calculation counts is the estimated value to the child of getting to live for an additional (say) sixty years. But the intervention in question also has systematic effects on others, which latter (1) have not been counted, (2) in aggregate may well be far larger than the effect on the child himself of prolonging the child’s life, and (3) are of unknown net valence. The most obvious such effects proceed via considerations of population size. In the first instance, averting a child death directly increases the size of the population, for the following (say) sixty years, by one. Secondly, averting child deaths has longer-run effects on population size, both because the children in question will (statistically) themselves go on to have children, and because a reduction in the child mortality rate has systematic, although difficult to estimate, effects on the near-future fertility rate. Assuming for the sake of argument that the net effect of averting child deaths is to increase population size, the arguments concerning whether this is a positive, neutral or negative thing are complex. But, callous as it may sound, the hypothesis that (overpopulation is a sufficiently real and serious problem that) the knock-on effects of averting child deaths are negative and larger in magnitude than the direct (positive) effects cannot be entirely discounted. Nor (on the other hand) can we be confident that this hypothesis is true. And, in contrast to the ‘simple problem of cluelessness’, this is not for the bare reason that it is possible both that the hypothesis in question is true and that it is false; rather, it is because there are complex and reasonable arguments on both sides, and it is radically unclear how these arguments should in the end be weighed against one another.”

Greaves outlines four possible responses to complex cluelessness.

First, she says that a skeptic might say that “Just as orthodox subjective Bayesianism holds, here as elsewhere, rationality requires that an agent have well-defined credences. Thus, in so far as we are rational, each of us will simply settle, by whatever means, on her own credence function for the relevant possibilities. And once we have done that, subjective c-betterness [focusing on subjective consequences] is simply a matter of expected value with respect to whatever those credences happen to be. In this model, the subjective c-betterness facts may well vary from one agent to another (even in the absence of any differences in the evidence held by the agents in question), but there is nothing else distinctive of ‘cluelessness’ cases.” The idea of this response is that an individual should simply settle on some probabilities and calculate expected value with them, even if someone with the same evidence would settle on different probabilities.

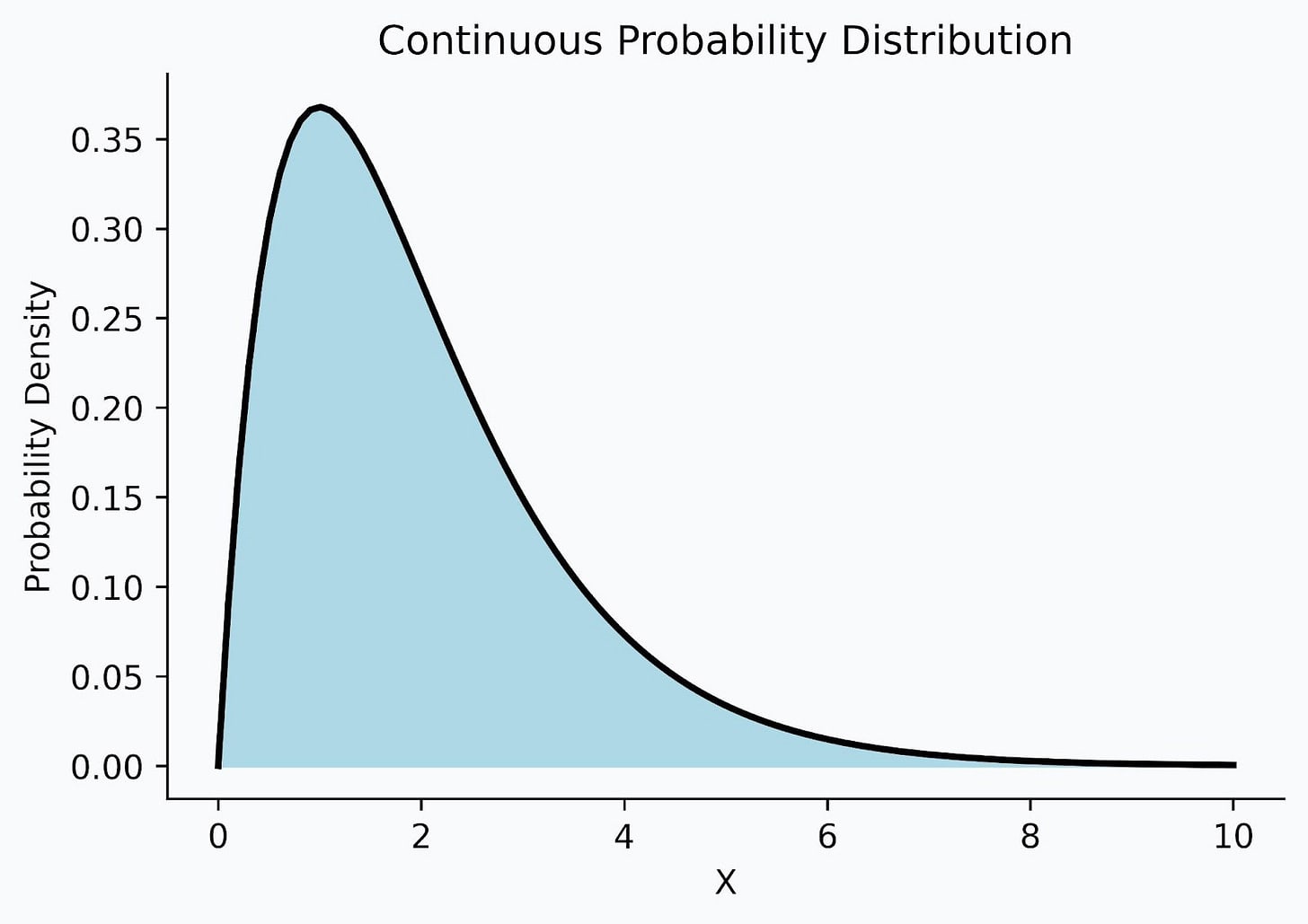

Second, she says that, perhaps, individuals should have imprecise credences. When one is determining the expected value of an action, if they are a Bayesian, they will determine this expected value by using a probability distribution such as the one in the picture below. In normal situations, an agent can rationally come to a single probability distribution, but, Greaves argues that, in a situation with complex cluelessness, an individual should instead have a set of probability functions that they are “rationally required to remain neutral between.” I’m not entirely sure what this means.
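
One way to picture a set of probability functions is the sketch below. It is my own illustration rather than Greaves's formalism: the payoffs, the candidate credences, and the rule that one action counts as better only if every credence function in the set agrees are all assumptions.

```python
def expected_difference(p_good, direct_benefit, knock_on_good, knock_on_bad):
    """EV of A1 minus EV of A2 under one credence that the knock-on effects are good."""
    return direct_benefit + p_good * knock_on_good + (1 - p_good) * knock_on_bad


def verdict(differences):
    """Assumed rule: an action is better only if every credence function agrees."""
    if all(d > 0 for d in differences):
        return "A1 better"
    if all(d < 0 for d in differences):
        return "A2 better"
    return "indeterminate"


# Hypothetical payoffs: a small direct benefit, large knock-on effects of unknown sign.
payoffs = dict(direct_benefit=1.0, knock_on_good=10.0, knock_on_bad=-10.0)

# Precise credence: a single probability that the knock-on effects are good.
precise = [expected_difference(0.5, **payoffs)]

# Imprecise credence: a whole set of probabilities, with none singled out.
imprecise = [expected_difference(p, **payoffs) for p in (0.3, 0.4, 0.5, 0.6, 0.7)]

print(verdict(precise))    # A1 better
print(verdict(imprecise))  # indeterminate
```

With a single credence, expected value gives a definite answer. With a set of credences that disagree about the sign of the difference, it does not, and any definite choice has to come from somewhere other than the expected-value comparison.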

Third, she mentions, in a footnote, that one could consider many probability distributions “rationally permissible.” (I also do not entirely know what this means.)

Lastly, she mentions, in the same footnote, that one could consider that “agents are not in any position to know which credence function is rationally required,” which is to say that one cannot know which probability distribution to choose.

In the essay, she goes into more detail on the second approach, under which one always ends up having to make an arbitrary choice. In my view, this is likely also true for the third and fourth approaches. It seems to me that the first approach essentially involves arbitrary choices as well.

To conclude, Greaves points out that complex cluelessness also occurs in a wealth of other decision-making situations, such as when a government is making a policy decision or when an individual is deciding what career to pursue, so this situation is not unique to effective altruism.

You might be interested in this post I wrote explaining imprecision. Hopefully it answers "what this means".

Ah, thanks for sharing that!!